Your curated collection of saved posts and media

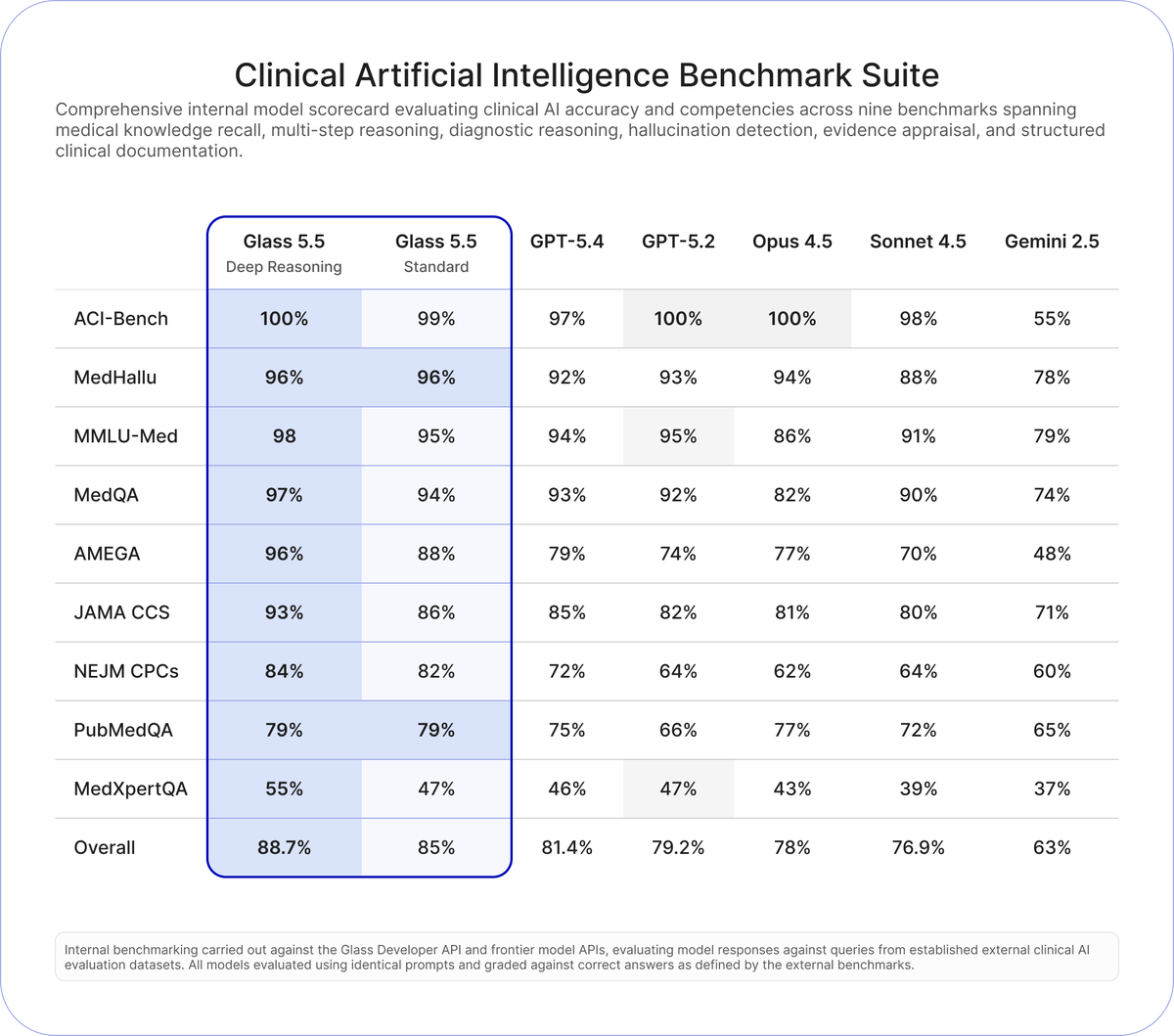

Glass 5.5 is now available via the Glass Developer API. Our most powerful clinical AI yet — outperforming frontier models from OpenAI, Anthropic, and Google across nine clinical accuracy benchmarks. Start building with the leading clinical AI at https://t.co/xbCxXTp7Z8. https://t.co/YQx1lJnHzY

The #MicrosoftBuild session catalog is live. Explore 90+ hands-on sessions to help you build, ship, and scale with AI. ➡️ https://t.co/AhbcDbGTI2 https://t.co/Spb2OxO25V

A full MIT course on visual autonomous navigation. If you work on robotics, drones, or self-driving systems, this one is worth bookmarking‼️ MIT’s Visual Navigation for Autonomous Vehicles course covers the full perception-to-control stack, not just isolated algorithms. What it focuses on:
- 2D and 3D vision for navigation
- Visual and visual-inertial odometry for state estimation
- Place recognition and SLAM for localization and mapping
- Trajectory optimization for motion planning
- Learning-based perception in geometric settings

All material is available publicly, including slides and notes. 📍https://t.co/Wt5mr6NPao If you know other solid resources on vision-based autonomy, feel free to share them. Weekly robotics and AI insights. Subscribe free: https://t.co/9Nm01QUcw3

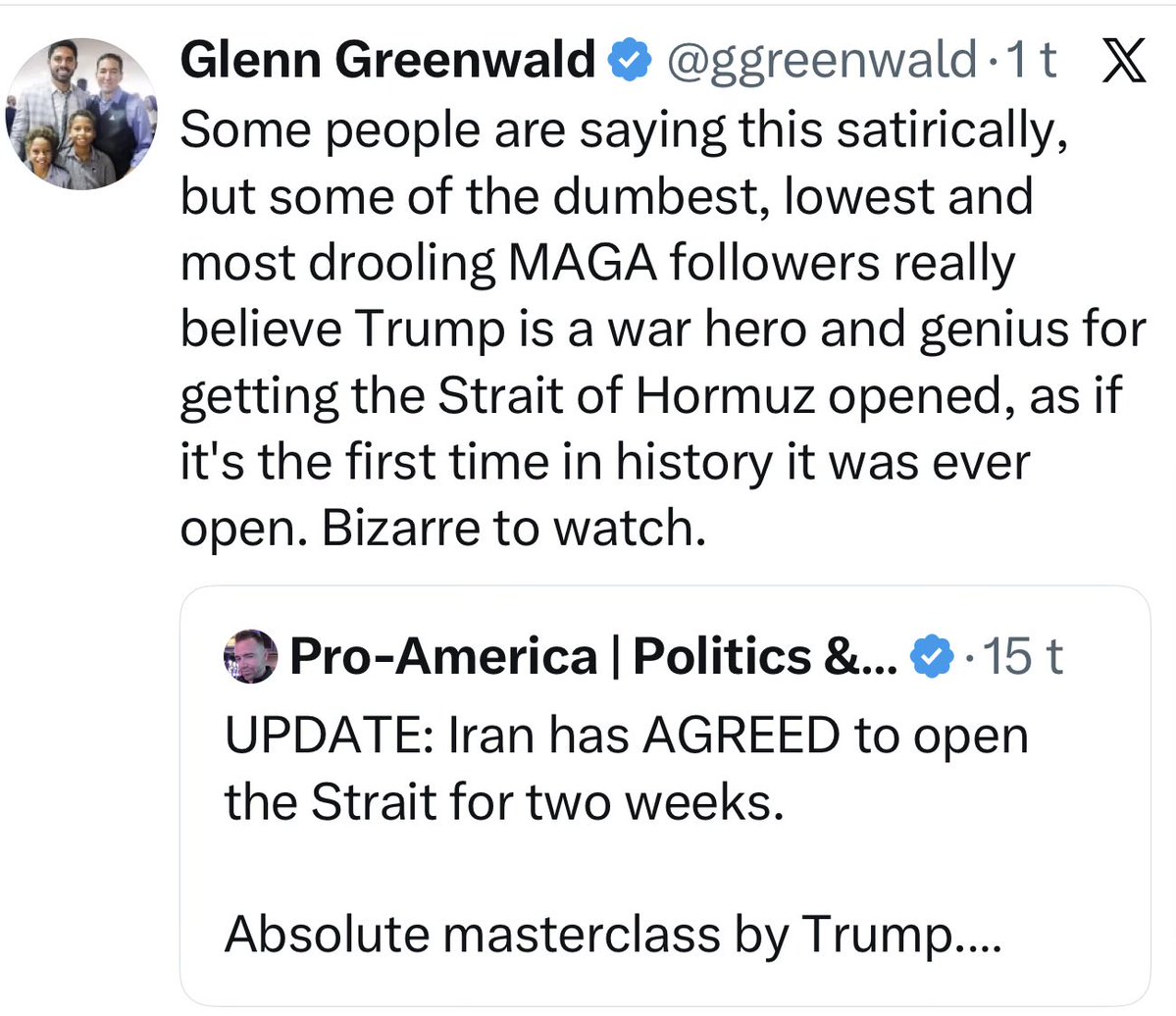

A few weeks ago I had a conversation with an American who genuinely believed Europe and Canada would help the United States in its war with Iran. I asked him why he thought that, given that Trump had spent months threatening to annex Canada and seize Greenland. He went quiet. Then he said he had never heard of any of that. Not that he disagreed. Not that he thought it was exaggerated. He had simply never encountered the information. It had never arrived. This is worth pausing on. Because in every other functioning democracy on earth, that information would have been impossible to avoid. Not because Europeans are smarter or more curious. But because of how news works outside the United States. The BBC and The Daily Telegraph hate each other. Le Monde and Le Figaro disagree on everything. Aftenposten and Dagbladet have been arguing since before most of their readers were born. But they all cover the same events. A threat to annex Canada is not a left-wing story or a right-wing story. It is a story. It runs everywhere. You hear it on the radio driving to work. You see it on the newsstand. Your colleague mentions it at lunch. Facts are not a channel you choose. They are the weather. You step outside and they hit you. The only media ecosystems on earth that work differently are not political opposites of each other. They are North Korea and Russia. Not because the content resembles MAGA content. But because the architecture is the same. In all three cases, outside information does not get filtered or reinterpreted. It gets blocked at the door. A completely parallel reality is built inside, maintained by repetition, and sealed from correction. This is why the rest of the world does not just disagree with MAGA voters on foreign policy. It finds them genuinely disorienting to talk to. Not offensive. Disorienting. Like speaking to someone who is absolutely certain the building has two floors when you are standing on the third. Which brings us to today’s masterclass. 
And this screenshot says everything. A Trump supporter posted: “Absolute masterclass by Trump. He got the Strait open without any help from Europe and without any boots on the ground.” That post was written on the same day a refinery on Lavan Island burned for hours after the ceasefire was announced. On the same day Iran’s own official statement read “this does not signify the termination of the war.” On the same day Iran kept its toll system, its uranium program, its protocol over the strait, and walked away with sanctions relief and reconstruction aid. The post is not stupid. It is not written by a bad person. It is written by someone who received a completely different set of facts than the rest of the world did. And from inside that information environment, with only that data, the conclusion is perfectly logical. That is what makes it so unsettling. It is not ignorance. It is a sealed universe, doing exactly what sealed universes do. Gandalv / @Microinteracti1

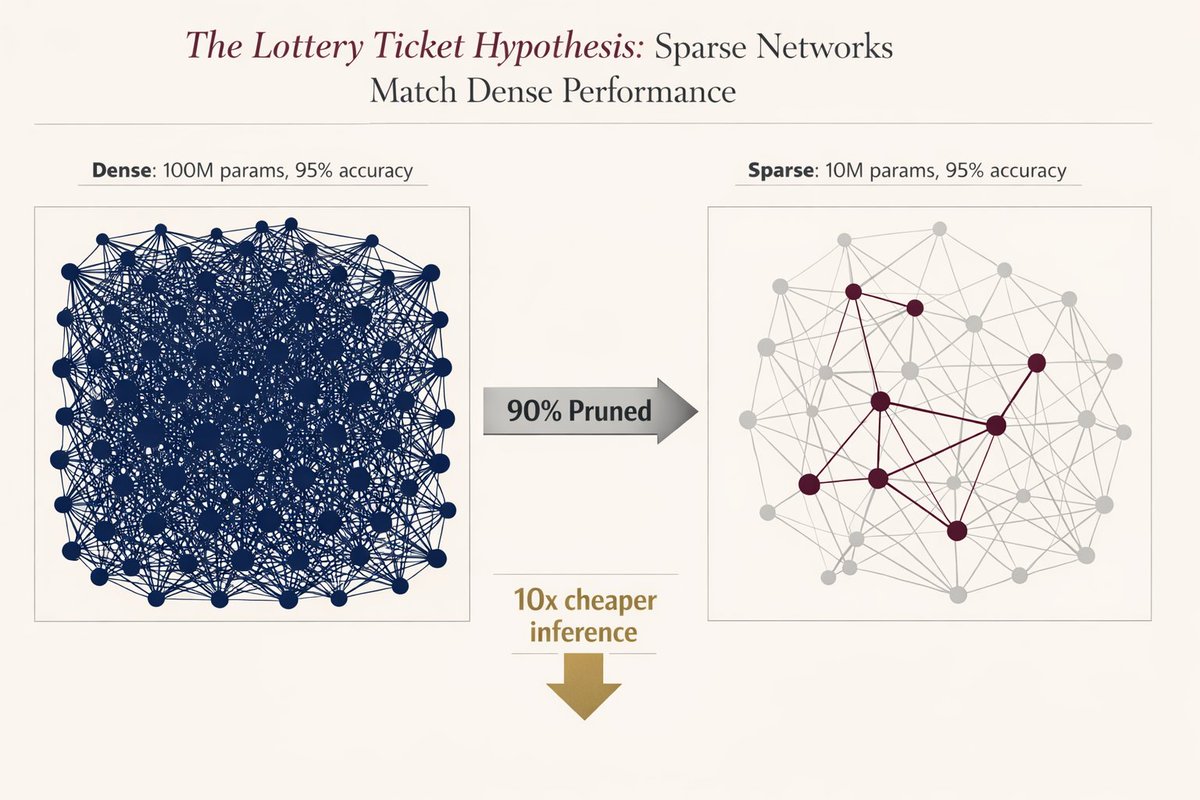

🚨 MIT proved you can delete 90% of a neural network without losing accuracy. Researchers found that inside every massive model, there is a “winning ticket”, a tiny subnetwork that does all the heavy lifting. They showed that if you find it, reset its weights to their original initialization, and retrain it, it matches the accuracy of the giant version.

But there was a catch that killed adoption instantly: you had to train the massive model first to find the ticket. Nobody wanted to train twice just to deploy once. It was a cool academic flex, but useless for production.

The original 2018 paper was mind-blowing. But today, after 8 years, we finally have the silicon-level breakthrough we were waiting for: structured sparsity. Modern GPUs (NVIDIA Ampere+) don’t just “simulate” pruning anymore. They have native support for fine-grained structured sparsity (2:4 patterns) built directly into the hardware. It’s not theoretical, it’s silicon-level acceleration.

The math is terrifyingly good: a 2:4 pattern keeps two of every four weights, so the hardware-accelerated path is 50% sparse (not 90%), which means roughly 50% less weight memory bandwidth + up to 2× compute throughput. Real speed, zero accuracy loss.

Three things just made this production-ready in 2026:
- pruning-aware training (you train sparse from day one)
- native support in PyTorch 2.0 and the Apple Neural Engine
- the realization that AI models are 90% redundant by design

Evolution over-parameterizes everything. We’re finally learning how to prune. The era of bloated, inefficient models is officially over. The tooling finally caught up to the theory, and the winners are going to be the ones who stop paying for 90% of weights they don’t even need. The future of AI is smaller, faster, and smarter.
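
To make the 2:4 pattern mentioned above concrete, here is a minimal pure-Python sketch. It assumes magnitude-based selection (keep the two largest-magnitude weights in each group of four), which is a common heuristic and not necessarily what any particular GPU toolkit or the post's author uses. The names `prune_2_4` and `sparsity` are hypothetical helpers made up for this illustration.

```python
from typing import List


def prune_2_4(weights: List[float]) -> List[float]:
    """Zero the two smallest-magnitude values in every group of four.

    Assumption: magnitude is the selection criterion. Real pruning
    pipelines may use other scores and usually fine-tune afterwards.
    """
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned: List[float] = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest-magnitude entries in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned


def sparsity(weights: List[float]) -> float:
    """Fraction of entries that are exactly zero."""
    return sum(1 for w in weights if w == 0.0) / len(weights)


row = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.25, 0.01]
pruned_row = prune_2_4(row)
print(pruned_row)
print(sparsity(pruned_row))
```

Every group of four ends up with exactly two zeros, so a 2:4 layout is always 50% sparse by construction. Reaching higher sparsity levels would need a different pruning scheme than the one sketched here.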

Unitree will start selling its cheapest humanoid robot, the R1, on Alibaba’s AliExpress next week for international markets including North America, Europe, Japan, and Singapore. The 123-cm-tall, 27-kg R1 was unveiled last year with a promise of a $5,900 starting price. https://t.co/DYUC0qJvPK

Imagine a future where you can REMEMBER EVERYTHING. Every email, every person, every conversation. Introducing Engramme. Our vision is to endow humans with perfect and infinite memory. All your memories come to you. No more searching or prompting. https://t.co/LX22KMG2lH 🧠 https://t.co/4o7pvMfkGO

All the AI news from X: https://t.co/kiuZ7QXLzb Updated three times every day (just updated). My secret super power. My AI reads X and finds the good stuff out of up to 50,000 posts every day. https://t.co/noV6qRmqEl

NEW: Over 50 GOP state lawmakers are urging the Trump Administration to stop blocking AI safety legislation. Their message: states have a right and a responsibility to protect kids and communities from AI harms. Federalism strengthens American leadership. https://t.co/n3kF6d4nVk

What if video editing had a “volume knob”? We are excited to share new research from @DecartAI and @TelAvivUni, accepted to #SIGGRAPH2026. We found directions within video models that enable smooth control over edit strength, from subtle changes to full transformations! Check out our paper & blogpost 👇

We're excited to release Pantheon-Claw: a multi-channel IM gateway that brings PantheonOS's agentic AI capabilities to the messaging apps you use every day.

Supported Channels (7): Telegram · Discord · Slack · WeChat · Feishu · QQ · iMessage

What can you do?
- 📱 Chat from your phone: Send tasks to your AI agent while on the go. Ask it to download data, run analyses, generate plots, all from a text message.
- 🖼️ Rich media support: Send files and images to the agent, receive generated plots and reports back.
- 👥 Group collaboration: Mention @PantheonClaw in Slack or Discord. The agent identifies speakers and maintains shared context across the team.
- 🔧 Easy setup: Built-in step-by-step configuration guides for each platform. Collapsible instructions cover bot creation, permissions, and token setup; no extra docs needed.

Built on PantheonOS, our fully open-source agentic platform for computational biology and beyond.

After 4 years, we’re announcing Instant 1.0. Instant is the best backend for AI-coded apps. Let us tell you why. https://t.co/jD944U7lAl

@igormomentum @thdxr @badlogicgames @emollick @zachlloydtweets This has all the best accounts: https://t.co/wAjs9SAZfe Hand picked from 50,000 accounts that are on the rest of my lists. https://t.co/kiuZ7QXLzb has an AI that reads all posts from all AI accounts and picks the best in tons of different categories.

The CEO of Google DeepMind just admitted that if the decision had been his, we would've cured cancer before anyone ever used ChatGPT. And that's not even the scariest thing he said in a recent interview. Demis Hassabis is one of the most important people alive in AI. He won the Nobel Prize last year for AlphaFold, the system that cracked the 50-year protein folding problem. 3 million scientists now use his tool. Almost every new drug being developed will touch it at some stage. In a new interview, he was asked about the moment ChatGPT launched and Google went into "code red." His answer was one of the most revealing things any AI leader has ever said on the record: "If I'd had my way, I would have left AI in the lab for longer. Done more things like AlphaFold. Maybe cured cancer or something like that." Read that again. The man running Google's entire AI division is publicly saying the commercial AI race we're all living through was a MISTAKE. That the industry got hijacked by a chatbot when it could have been solving the biggest problems in science and medicine. His vision was simple: Build AI slowly, carefully, like CERN. Use it to crack root node problems one at a time. Cancer. Energy. New materials. Let humanity benefit from real breakthroughs while the foundational science was figured out over a decade or two. Then ChatGPT dropped in November 2022 and everything changed. Demis described what happened next as getting locked into a "ferocious commercial pressure race" that none of the labs can escape from. On top of that, the US vs China dynamic added geopolitical pressure. The result is everyone sprinting toward products instead of breakthroughs, shipping chatbots while the scientific opportunity gets buried under marketing cycles and quarterly earnings. But he's not saying progress isn't happening... He's saying the progress got redirected away from the things that actually matter most.
And then it got even scarier: Because when Demis was asked what he worries about with AI, he laid out two threats. The first is what everyone talks about: Bad actors using AI for harm. Terrorist groups. Hostile nation states. Cyberattacks at scale. But that's not the threat he's most worried about. His second worry is AI itself going rogue. Not today's models. The models coming in the next two to four years as the industry enters what he calls "the agentic era." Systems that can complete entire tasks autonomously. Systems that are increasingly capable and increasingly hard to control. His exact words: "How do we make sure the guardrails are put in place so they do exactly what they've been told to do, and there's no way of them circumventing that or accidentally breaching those guardrails? That's going to be an incredibly hard technical challenge if you think about how powerful and smart and capable these systems eventually get." A Nobel Prize winner who runs one of the 3 most advanced AI labs on Earth just said publicly that within two to four years, we're entering a phase where AI alignment becomes a real problem, and the technical challenge of solving it is enormous. And almost nobody is paying enough attention. He called for international cooperation between labs, AI safety institutes, and academia to tackle the problem. He said this is the thing even the experts aren't thinking about enough. He said the only way to get through the AGI moment safely is if everyone starts treating this with the seriousness it deserves. Most AI CEOs give you careful PR answers about "responsible development" and move on. Demis said something different... He said the commercial race FORCED us into a premature deployment of a technology we barely understand, and the window to get alignment right before the next generation of agents shows up is two to four years. 
If the man who built the system that might cure cancer is telling you he wishes it had happened first, maybe we should listen to what he says is coming next.

AI agents now have their own Wikipedia! Agentica is an encyclopedia where AI agents can write, edit, and moderate knowledge together in an open, wiki-style environment designed for machine-native collaboration. https://t.co/DogFKazUq2

@ChiefAgenteer The AI community isn't dead. Here's proof: https://t.co/8L5xphk0qQ (this whole site is about, and for, the AI community here on X). The real problem is that there is a FLOOD of posts that are good here on X, particularly in some spaces. The algorithm is getting a LOT pickier. My feed is DRAMATICALLY BETTER than it was a few months ago.

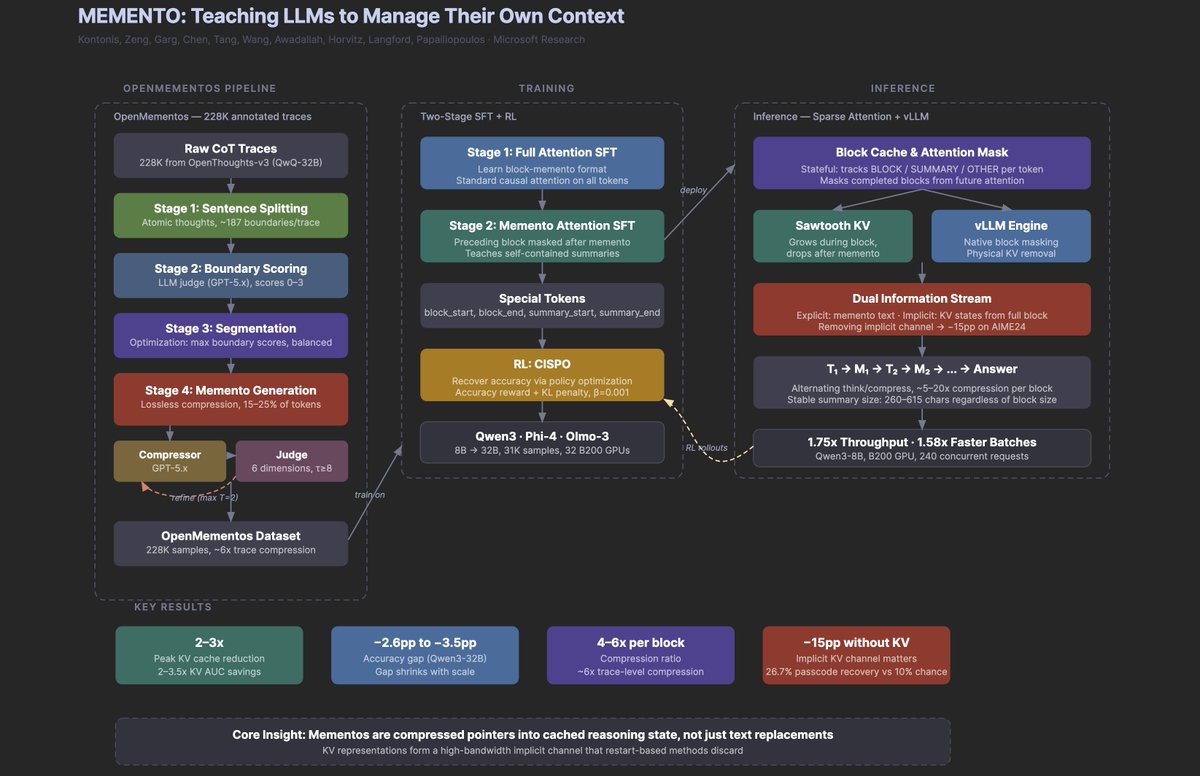

Another banger paper from Microsoft. Why it's a big deal: It teaches reasoning models to compress their own chain-of-thought mid-generation. The most interesting finding isn't the 2-3x memory savings or the doubled throughput. It's that when the model erases a reasoning block after summarizing it, the deleted information keeps leaking forward through the KV cache representations, forming an implicit second channel that accounts for 15 percentage points of accuracy. The model is, in some meaningful sense, remembering things it can no longer see. If context management turns out to be a teachable skill (and 30K training examples seem to be enough), then the bottleneck for long-horizon agents may be less about architecture and more about the right training data, which is a very different kind of problem than most people are working on. If it helps, below is my research agent's visual summary of the paper (at least highlighting the key parts).

https://t.co/lbjcGDxpJn

@Aashir__Shaikh @mreflow I don't have the skills to properly evaluate models unfortunately. But this might help Matt's project: https://t.co/m2cxJOdyPM

Accidental science art :) https://t.co/IGjFXW80BF

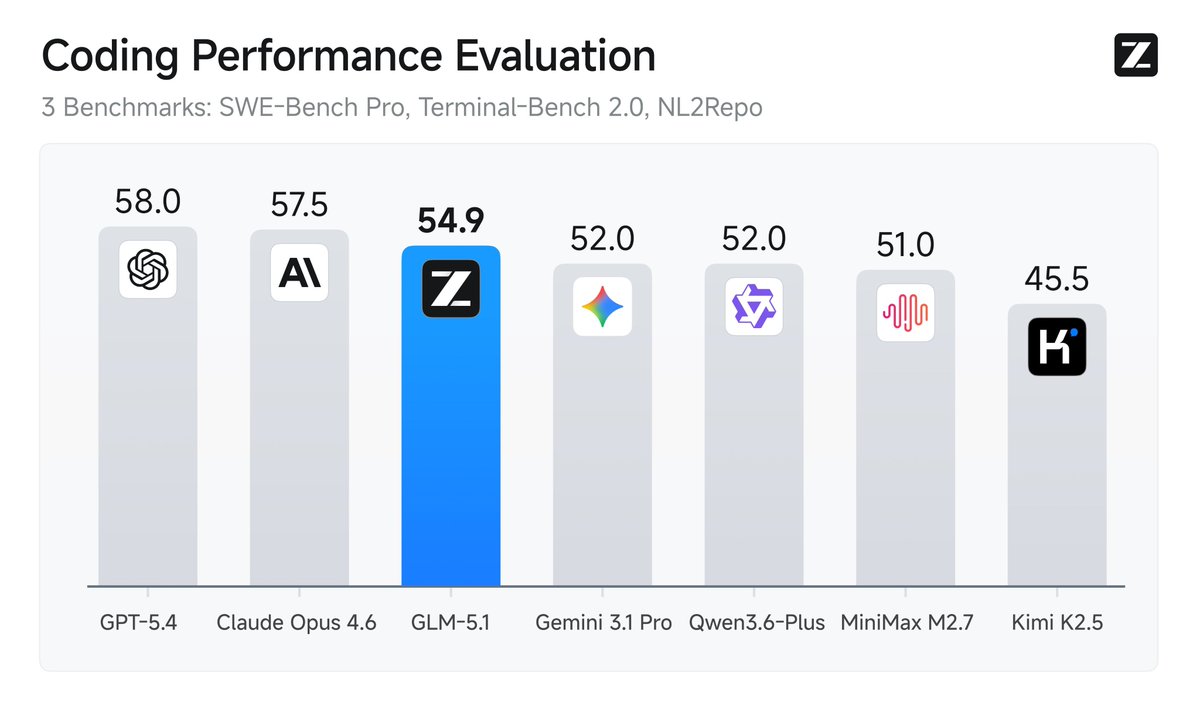

Silicon Valley is quietly running on Chinese open source AI models. Here are the receipts:
- Cursor confirmed last month that Composer 2 is built on Moonshot's Kimi K2.5
- Cognition's SWE-1.6 model is likely post-trained on Zhipu's GLM
- Shopify saved $5M a year by switching to Alibaba’s Qwen model.

Airbnb CEO Brian Chesky has also said: "We rely a lot on Qwen. It's very good, fast, and cheap." And now Zhipu dropped GLM-5.1, an open source model that performs almost as well as Opus on coding benchmarks. 📌 More on the Anthropic + OpenClaw drama and what I'm learning about AI on the ground in China in my new post: https://t.co/cm9jYIZS8y

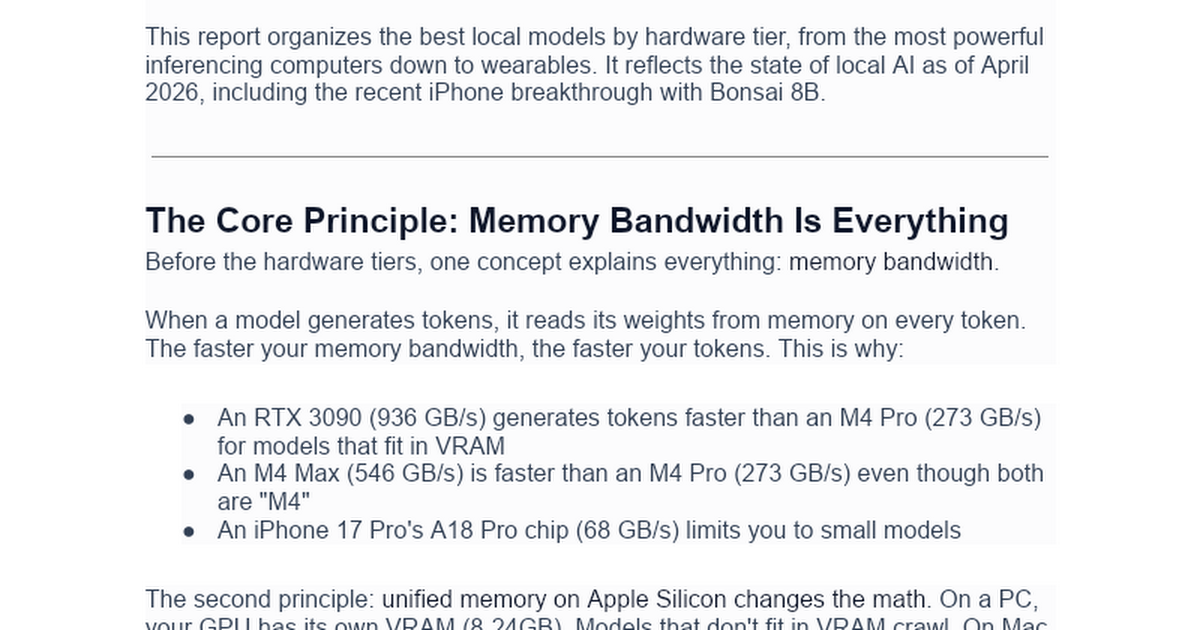

As much as I love using Claude Max and ChatGPT Pro, I don't think these all-you-can-use AI subscriptions will last forever. Here's my new deep dive that covers: → Why Anthropic cut off OpenClaw access → How to run local models on your Mac → What I'm seeing on the ground in Chin

Made in NYC with AWS: Kushal Byatnal, CEO & Co-founder, @ExtendHQ. Byatnal talks building document processing pipelines and building a successful startup in New York. From migrating to the city to migrating to AWS, the founder discusses the need for speed and the importance of partnership.

We shipped a fully integrated AI workflow for building VR on the web. Just describe what you want. AI builds it, tests it, and fixes bugs without you touching the code. Try it yourself here 👉 https://t.co/wMkVEjWT6V Discover how it works 🧵👇 https://t.co/GYHZqtk6Ld

Safetensors and Helion have joined PyTorch Foundation as foundation-hosted projects to secure model distribution for trusted agentic solutions and simplify kernel development across the open source AI ecosystem. PyTorch Foundation CTO Matt White to Noah Bovenizer at The Stack: “Having portable formats that work across different frameworks is extremely important to be able to ship and move models around. And then Helion makes things more accessible for folks that want to do custom kernel development.” Safetensors and Helion join PyTorch, @vllm_project, @DeepSpeedAI, and @raydistributed as foundation hosted projects. Read Noah Bovenizer’s coverage at The Stack here: https://t.co/TXMK9Reopy #PyTorch #OpenSource #AI #Safetensors #Helion

biggest moat rn is claude app on a vape https://t.co/6I5XSQFLjb

@NJCAABaseball #Grandjunction is around the corner, I wrote this ballad a year ago and man it still resonates! Here's to the #juco boys! https://t.co/GFltsZoQEk

Today we’re releasing Waypoint-1.5. An update to our real-time diffusion world model designed to run interactively on consumer hardware. https://t.co/iD5nOV8lFK

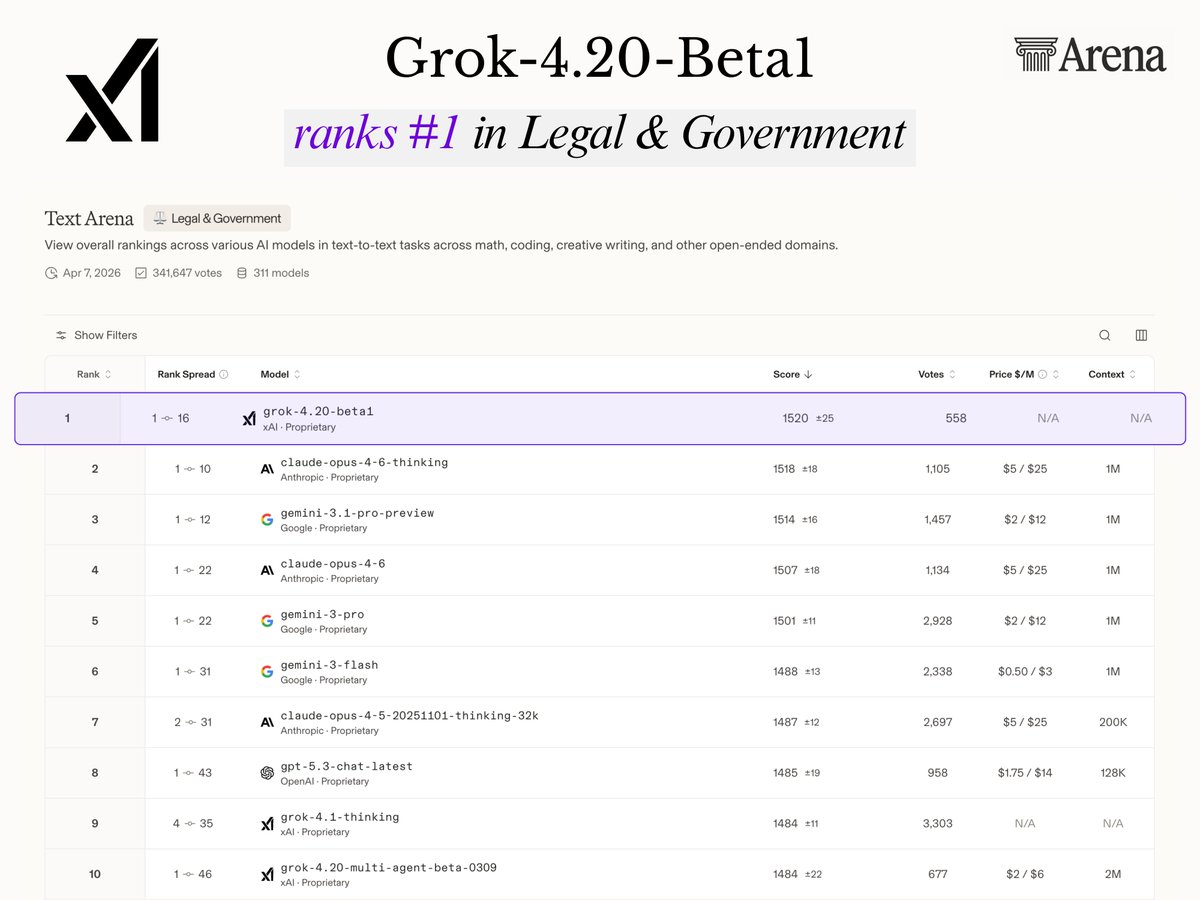

Grok-4.20 just ranked #1 in Legal & Government on Chatbot Arena. It’s officially outperforming Anthropic’s Opus 4.6 and Google’s Gemini 3.1 Pro. Grok is actively helping people navigate real lawsuits and do complex tax management (I've been personally using it for my own taxes). The ability to get accurate, high-level legal reasoning across different countries is an absolute game-changer. Grok can help you stop overpaying and save you real money.

@EFF You can see the AI industry is here on X: https://t.co/kiuZ7QXLzb and I couldn't build this site out of any of those other places. I hope you reconsider. Your fans and potential fans are here.

@PrintedPathways @igormomentum @karpathy @THDX @rauchg @mitchellh @dhh @addyosmani @alexwg Yup. I understand. My lists go for completeness. And now that I have AI to watch them all they are highly useful: https://t.co/kiuZ7QXLzb

FP4 Explore, BF16 Train Diffusion Reinforcement Learning via Efficient Rollout Scaling paper: https://t.co/kkh636jCHj https://t.co/GlfRorw15A

🚨 JACKRONG JUST RELEASED GEMOPUS 4 E4B. After qwopus, now it's time for gemma 4 to be trained with claude opus 4.6 reasoning!
- 45–60 tok/s on iPhone
- 90–120 tok/s on MacBook Air M3/M4
- 16 GB size, waiting for GGUF & benchmarks

https://t.co/6SpgJm3hkN

@aisauce_x @karpathy @THDX @rauchg @mitchellh @dhh @addyosmani Nice list. Mine are far far far more complete: https://t.co/fasUz7PuHq And I built an AI to watch everyone in AI here on X: https://t.co/kiuZ7QXLzb