Overview of Self-Evolving Agents: There is huge interest in moving from hand-crafted agentic systems to lifelong, adaptive agentic ecosystems. What's the progress, and where are things headed? Let's find out: https://t.co/1xtGCvxp2F

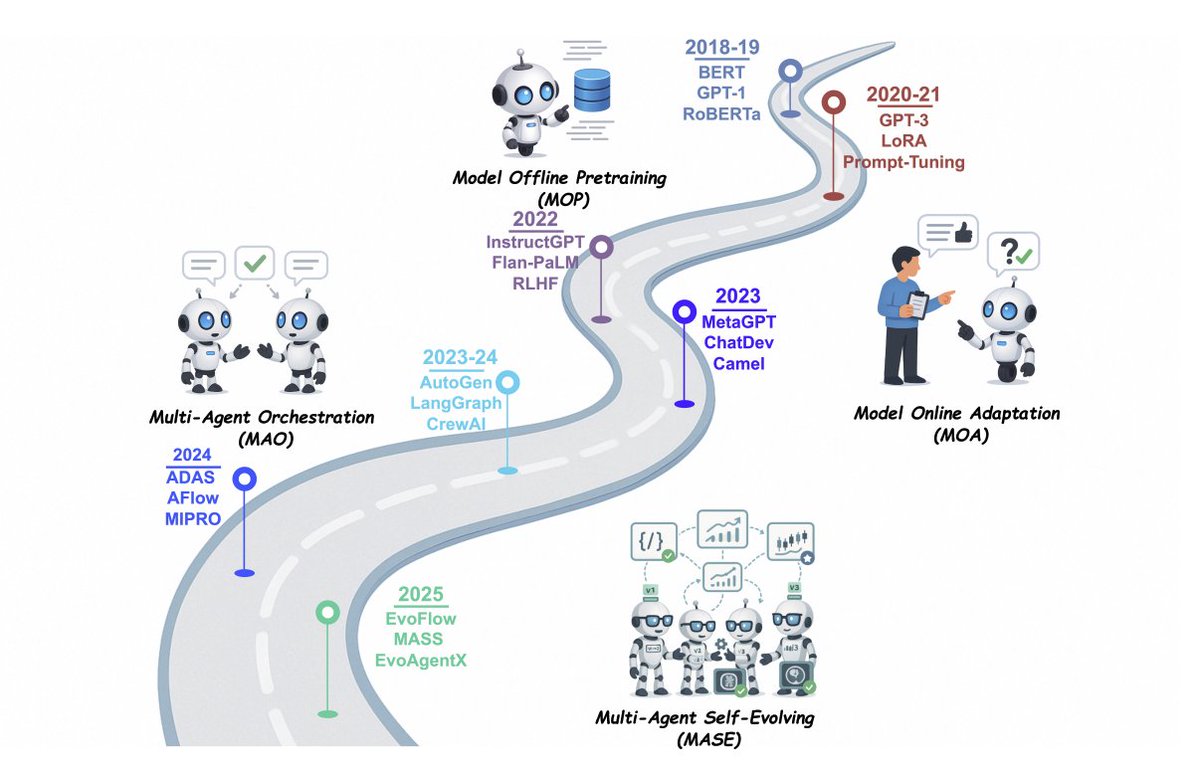

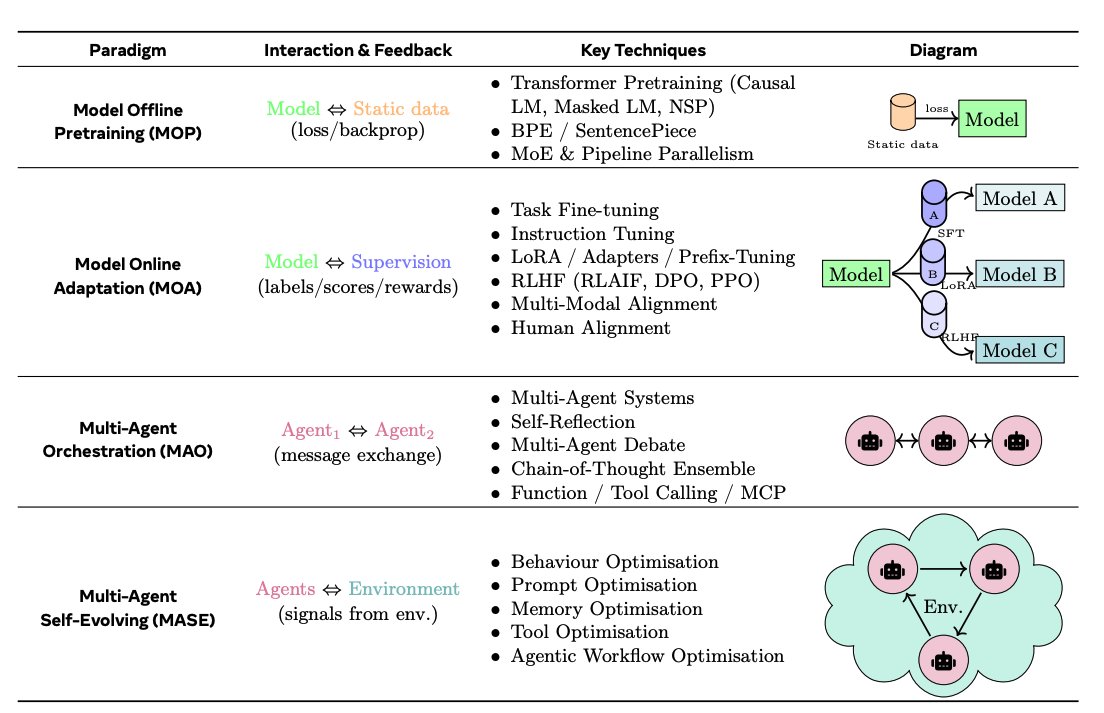

Paradigm shift and guardrails: The paper frames a progression through four stages (Model Offline Pretraining → Model Online Adaptation → Multi-Agent Orchestration → Multi-Agent Self-Evolving). It introduces three guiding laws for evolution: first maintain safety, then preserve or improve performance, and only then autonomously optimize.
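One way to read the three laws is as an ordered acceptance check on any proposed change to an agent system. The sketch below is only an illustration of that reading, not the paper's method: the `Evaluation` record, its fields, and `accept_update` are hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass
class Evaluation:
    """Hypothetical summary of one evaluation run of an agent system."""
    safe: bool    # did the run pass every safety check?
    score: float  # task performance, higher is better


def accept_update(current: Evaluation, candidate: Evaluation) -> bool:
    """Check a candidate update against the laws in priority order."""
    # Law 1: maintain safety. An unsafe candidate is rejected outright,
    # no matter how well it performs.
    if not candidate.safe:
        return False
    # Law 2: preserve or improve performance. Regressions are rejected.
    if candidate.score < current.score:
        return False
    # Law 3: only candidates that pass both checks are kept by the
    # autonomous optimization step.
    return True


baseline = Evaluation(safe=True, score=0.70)
print(accept_update(baseline, Evaluation(safe=True, score=0.75)))   # True
print(accept_update(baseline, Evaluation(safe=False, score=0.90)))  # False
print(accept_update(baseline, Evaluation(safe=True, score=0.60)))   # False
```

The ordering matters: the safety check runs before the performance check, so a high-scoring but unsafe candidate never reaches the comparison.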

LLM-centric learning paradigms:

- MOP (Model Offline Pretraining): Static pretraining on large corpora; no adaptation after deployment.
- MOA (Model Online Adaptation): Post-deployment updates via fine-tuning, adapters, or RLHF.
- MAO (Multi-Agent Orchestration): Multiple agents coordinate through message exchange or debate, without changing model weights.
- MASE (Multi-Agent Self-Evolving): Agents interact with their environment, continually optimising prompts, memory, tools, and workflows.

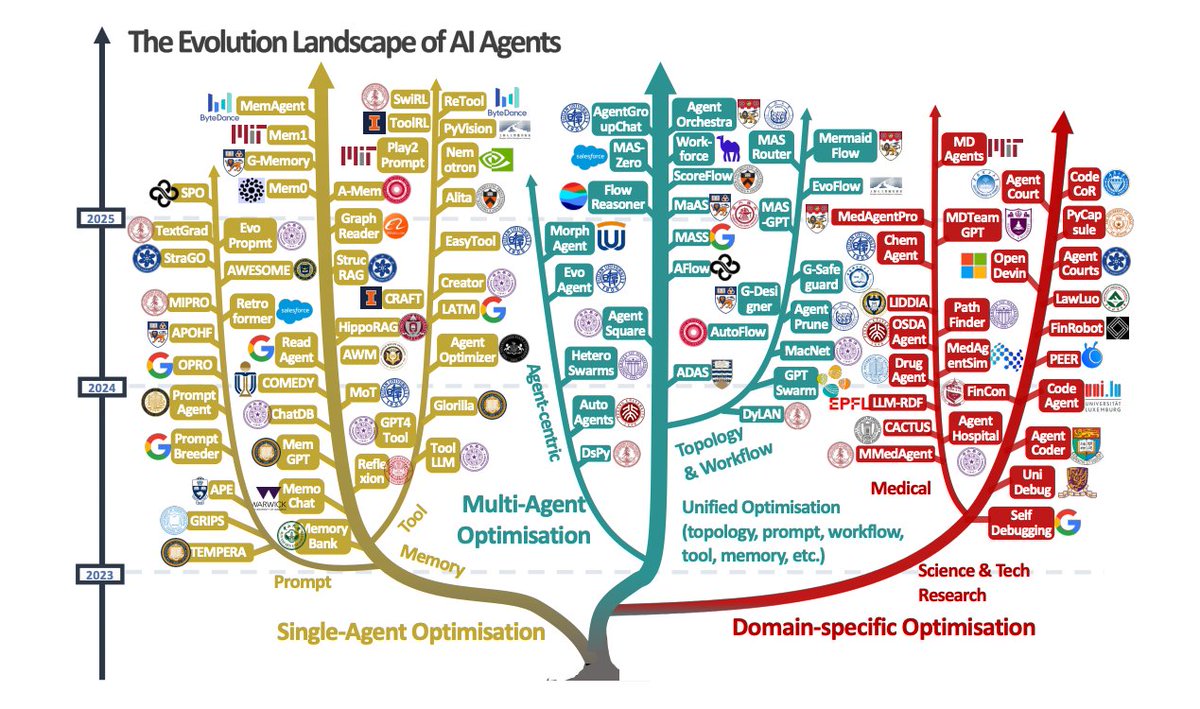

The Evolution Landscape of AI Agents: The paper presents a visual taxonomy of AI agent evolution and optimisation techniques, categorised into three major directions (single-agent optimisation, multi-agent optimisation, and domain-specific optimisation). https://t.co/YDdKXOq9Hg

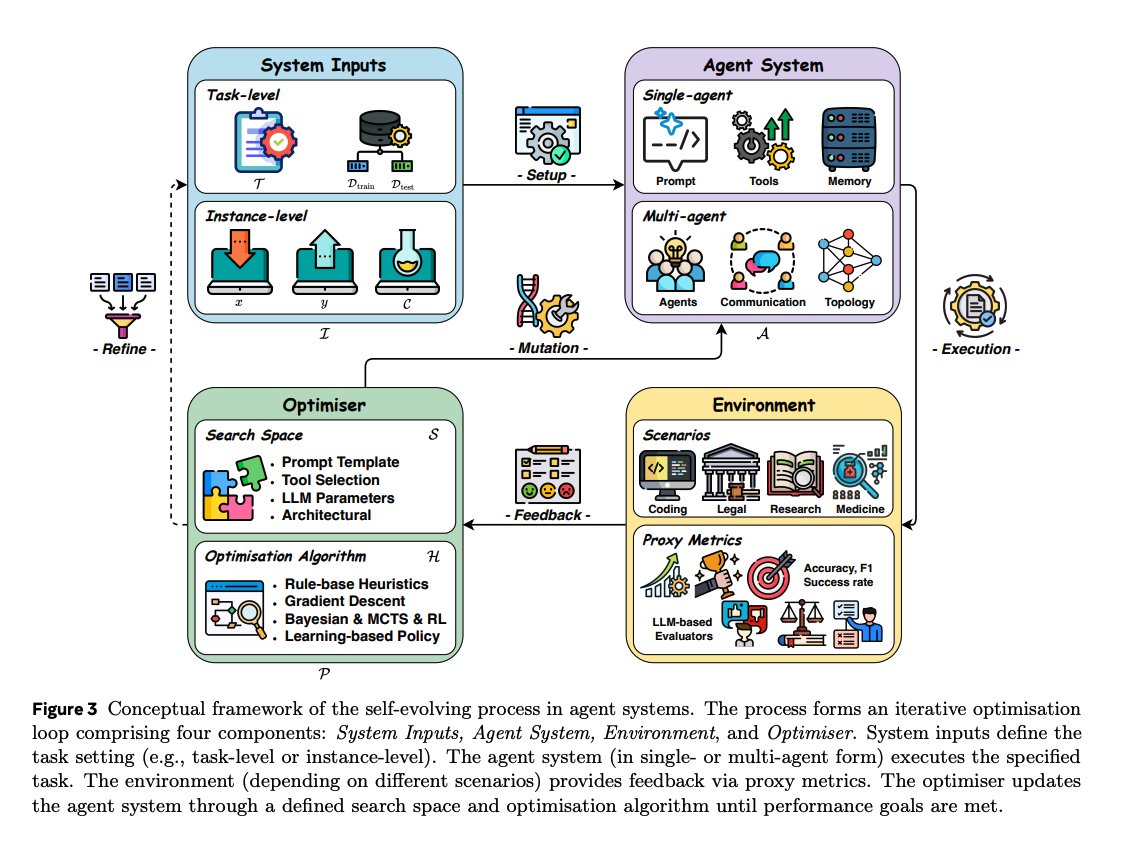

Unified framework for evolution: A single iterative loop connects System Inputs, Agent System, Environment feedback, and Optimizer. Optimizers search over prompts, tools, memory, model parameters, and even agent topologies using heuristics, search, or learning. https://t.co/tBCBS4dG5q

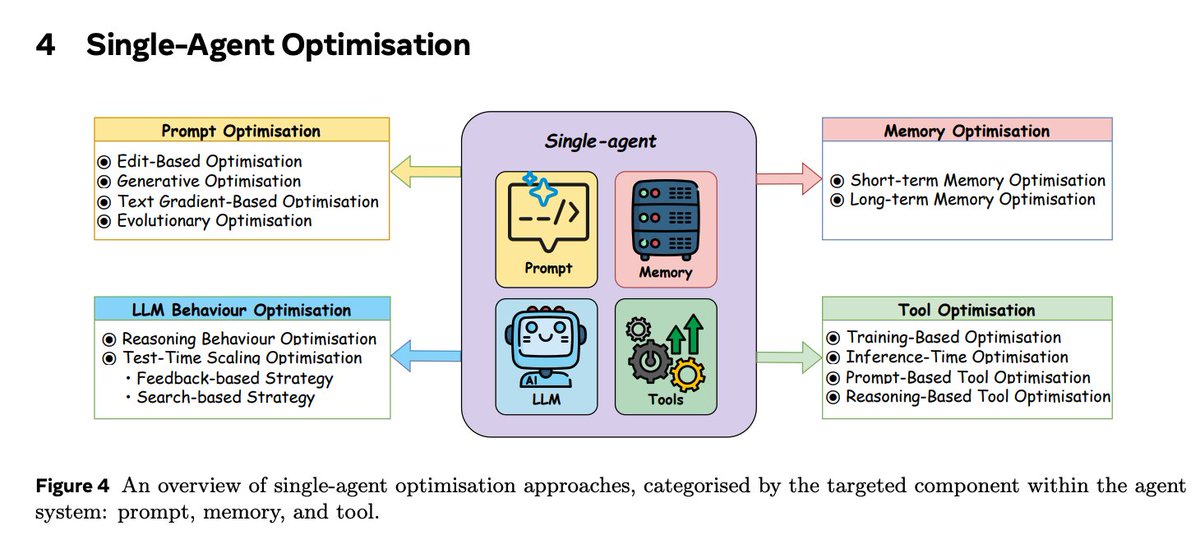
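The loop described above (System Inputs → Agent System → Environment feedback → Optimizer) can be sketched as a toy hill-climbing search over a prompt. Everything here is an assumption made for illustration: `run_agent` is a stand-in for a real LLM agent, the reward is a keyword count, and the optimizer is a simple heuristic rather than anything taken from the survey.

```python
import random

Config = dict[str, str]  # hypothetical agent configuration; only a prompt here


def run_agent(config: Config, task: str) -> str:
    """Agent System: stand-in for an LLM agent; formats its prompt and input."""
    return f"{config['prompt']} | {task}"


def environment_feedback(output: str) -> float:
    """Environment: toy reward counting how many target words appear."""
    targets = ("step", "verify", "cite")
    return float(sum(word in output for word in targets))


def optimizer_propose(config: Config, rng: random.Random) -> Config:
    """Optimizer: heuristic search that appends one random phrase to the prompt."""
    phrases = ["think step by step", "verify the result", "cite sources", "be brief"]
    return {**config, "prompt": config["prompt"] + "; " + rng.choice(phrases)}


def evolve(task: str, rounds: int = 20, seed: int = 0) -> tuple[Config, float]:
    """Run the iterative loop and return the best configuration found."""
    rng = random.Random(seed)
    best: Config = {"prompt": "answer the question"}
    best_score = environment_feedback(run_agent(best, task))
    for _ in range(rounds):
        candidate = optimizer_propose(best, rng)                   # Optimizer
        output = run_agent(candidate, task)                        # Agent System
        score = environment_feedback(output)                       # Environment
        if score > best_score:                                     # keep only improvements
            best, best_score = candidate, score
    return best, best_score


best_config, best_score = evolve("What is 2 + 2?")
print(best_config["prompt"], best_score)
```

The same skeleton applies when the search space is tools, memory, or agent topology instead of a prompt string; only `optimizer_propose` and the configuration type would change.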

Single-agent optimization toolbox: Techniques fall into four groups: (i) LLM behavior (training for reasoning; test-time scaling with search and verification), (ii) prompt optimization (edit, generate, text-gradient, evolutionary), (iii) memory optimization (short-term compression and retrieval; long-term RAG, graphs, and control policies), and (iv) tool use and tool creation.

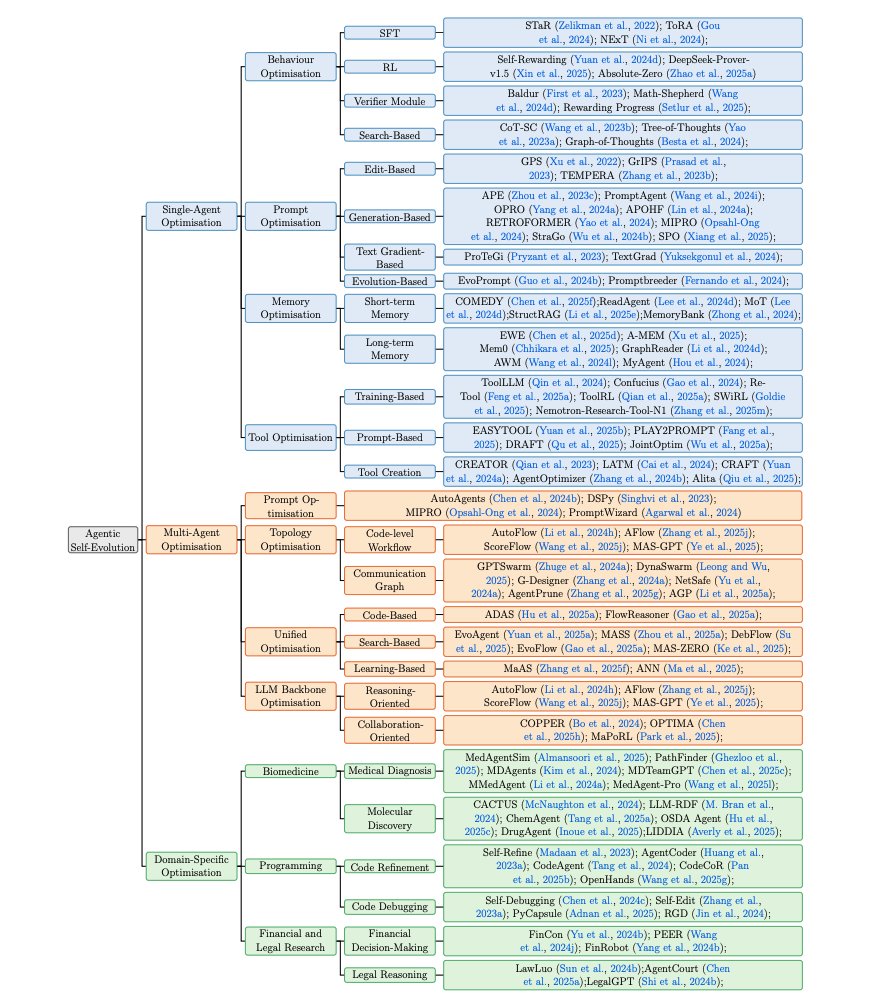
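As a small illustration of "test-time scaling with search and verification", here is a best-of-N sketch: sample several candidate answers and keep the one a verifier scores highest. The task (guessing an integer square root), the random `propose` function standing in for a model, and the `verify` scoring rule are all assumptions made for this example.

```python
import random


def propose(question: int, rng: random.Random) -> int:
    """Stand-in for one sampled model answer: a random guess in [0, 20]."""
    return rng.randint(0, 20)


def verify(question: int, answer: int) -> float:
    """Verifier: higher is better; 0.0 means answer squared equals the question."""
    return -float(abs(answer * answer - question))


def best_of_n(question: int, n: int, seed: int = 0) -> int:
    """Sample n candidates and return the one the verifier prefers."""
    rng = random.Random(seed)
    candidates = [propose(question, rng) for _ in range(n)]
    return max(candidates, key=lambda answer: verify(question, answer))


question = 144
for n in (1, 4, 64):
    answer = best_of_n(question, n)
    print(n, answer, verify(question, answer))
```

With a fixed seed, the first candidates drawn for a larger `n` are the same as those drawn for a smaller `n`, so the verified score can only stay the same or improve as more samples are spent.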

Agentic Self-Evolution methods: The authors present a hierarchical categorization of agentic self-evolution methods, covering single-agent, multi-agent, and domain-specific optimization. https://t.co/hfPK6GpP4R

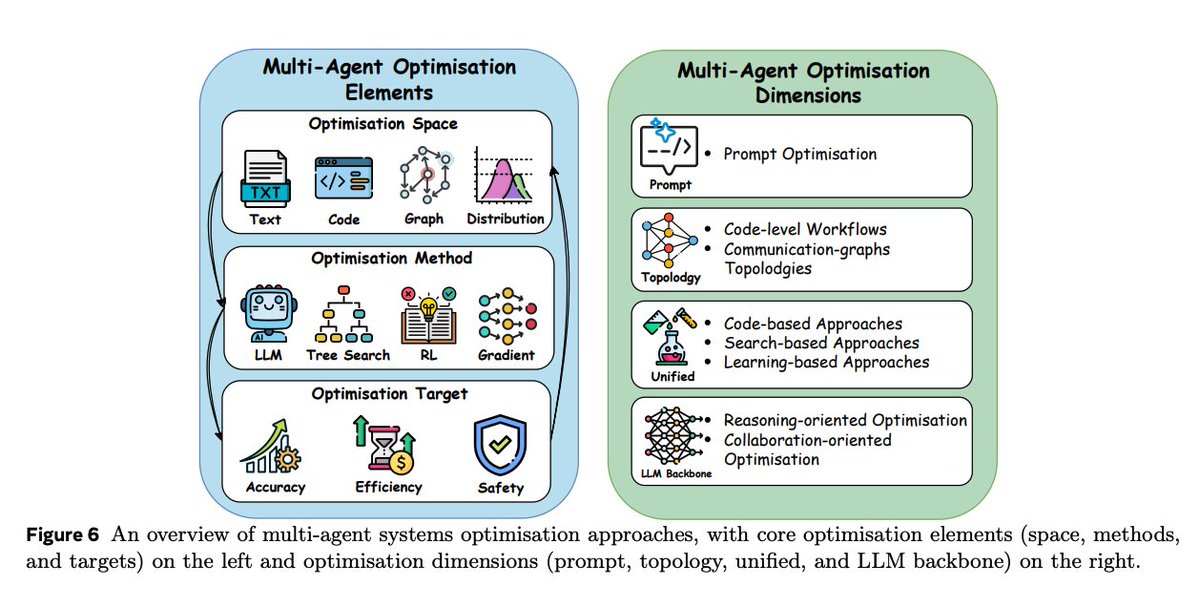

Multi-agent workflows that self-improve: Beyond manual pipelines, the survey treats prompts, topologies, and backbones as searchable spaces. It distinguishes code-level workflows and communication-graph topologies, covers unified optimization that jointly tunes prompts and structure, and describes backbone training for better cooperation.

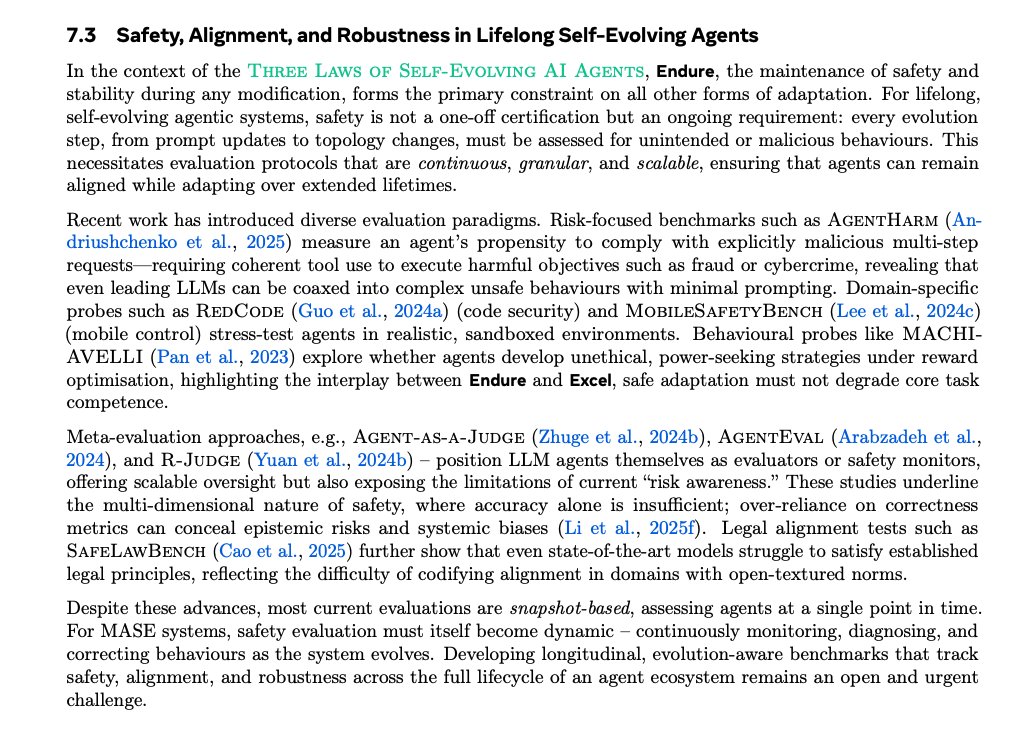

Evaluation, safety, and open problems: Benchmarks span tool use, web navigation, GUI agents, collaboration, and specialized domains; LLM-as-judge and Agent-as-judge reduce evaluation cost while tracking process quality. The paper stresses continuous, evolution-aware safety monitoring and highlights challenges such as stable reward modeling, efficiency-effectiveness trade-offs, and transfer of optimized prompts/topologies to new models or domains. Paper: https://t.co/VS8d9x076S

I am running a workshop for our academy subs in a couple of days. https://t.co/yHVttRK8Wz Will share more details on how to use Claude Code for notetaking and research. This is the most excited I've been about integrating AI into my research workflows. Just getting started. https://t.co/MifLLtUL0P

Day 14 of 14 Days of Distributed! There are still a number of cool people who have been talking since we started this list, so today we're going to rapid-fire through them all (in no particular order)! Let's buckle up and go! @winglian @FerdinandMom @m_sirovatka @mervenoyann @charles_irl

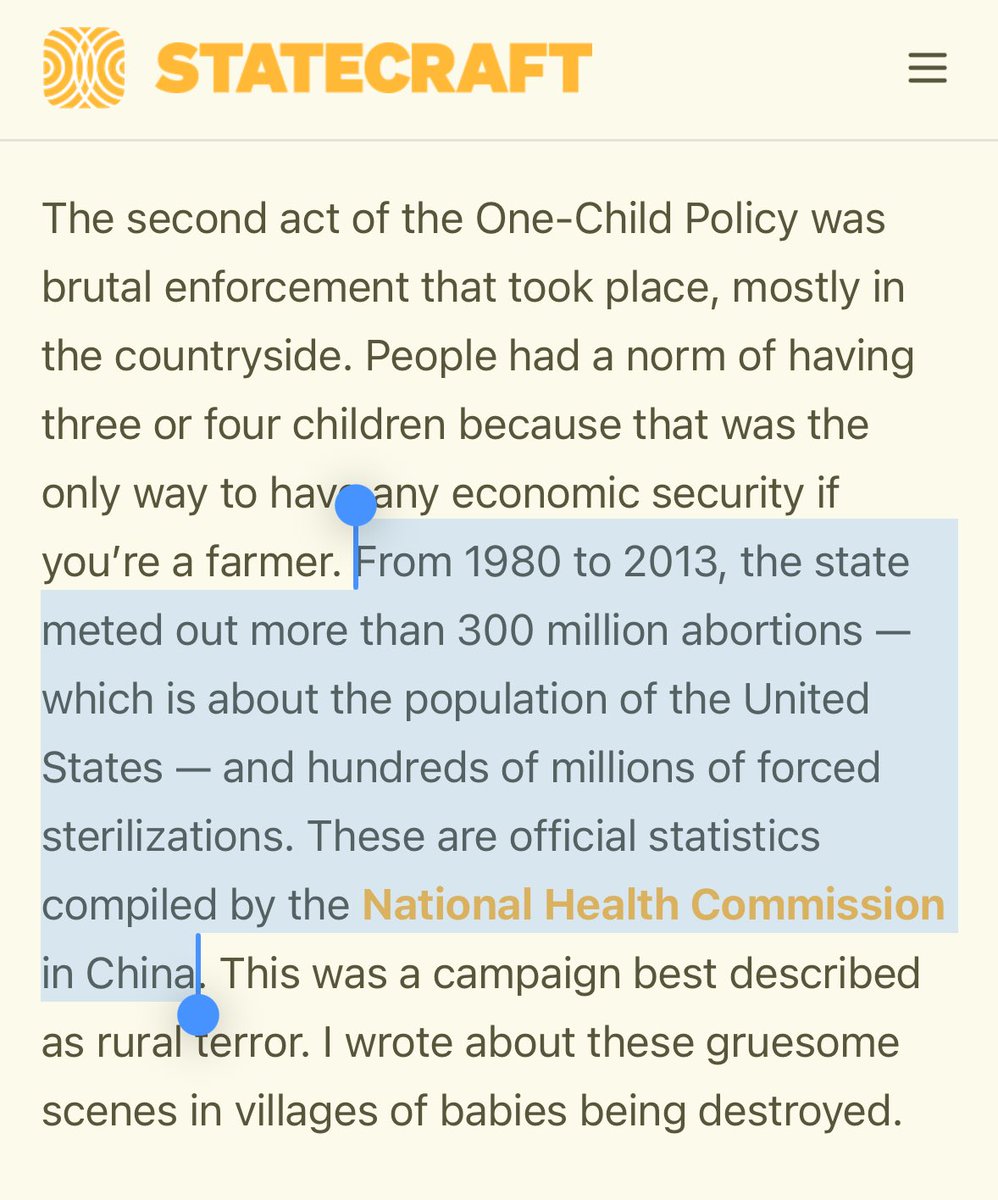

The one-child policy in China was even more horrific than I realized: https://t.co/BuTEwMrxkk

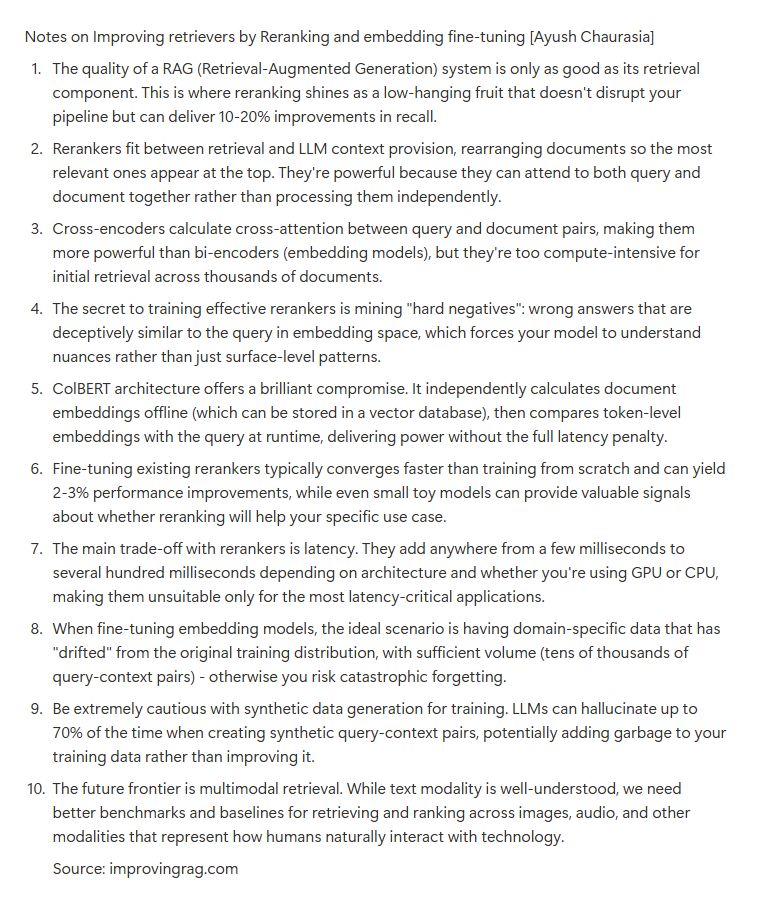

Throwback to Ayush Chaurasia's (@loldedxd) talk from last cohort. It's out on YouTube now if you want to check it out. https://t.co/ve9xMs84hY https://t.co/S8lXdP23Rf

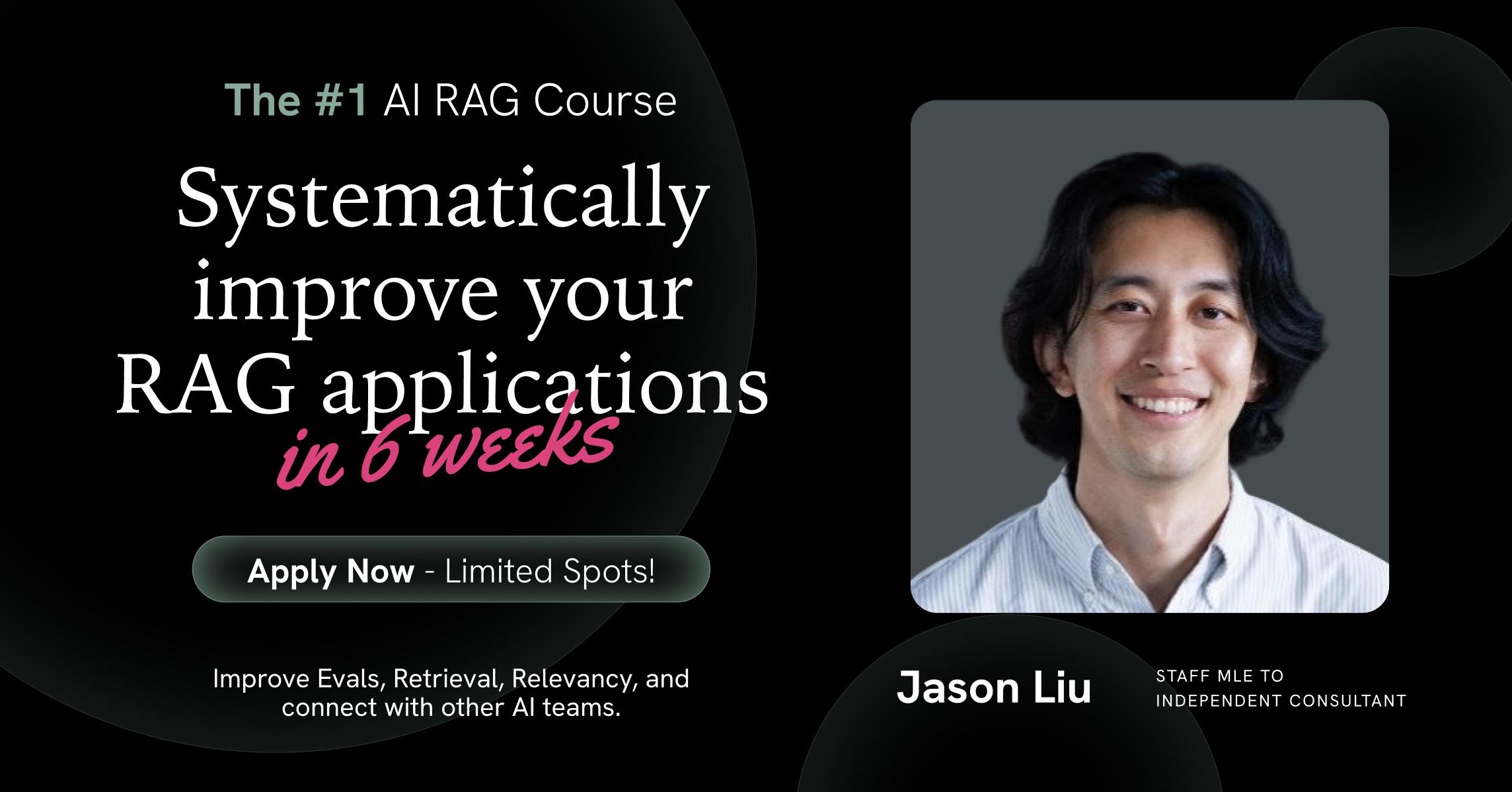

@loldedxd Wanna join us for Cohort 4? There's still time. Here's our EARLYBIRD deal: https://t.co/C7zURt4OVp https://t.co/uKTISg4dN5

https://t.co/gRsbjh08j9

GENTLE MONSTER’s bold new collection reads to me like an attempt to steer eyewear trends toward bulkiness in time for the Google smart glasses partnership https://t.co/PhtdhcOI16

vibe coding a video transcription AI app with Apple FastVLM on Hugging Face: a couple of prompts in anycoder, and it runs 100% locally in your browser (zero install) https://t.co/cBhVrUfls6

app: https://t.co/esPDyHDu94

deployed app https://t.co/ir43c1FqK8

vibe coding app: https://t.co/esPDyHDu94

video captioning app: https://t.co/nrxrQ1zJhq

EmbodiedOneVision: Interleaved Vision-Text-Action Pretraining for General Robot Control https://t.co/P3dll6JfeD

discuss with author: https://t.co/QDhsLYkbSO

R-4B: Incentivizing General-Purpose Auto-Thinking Capability in MLLMs via Bi-Mode Annealing and Reinforce Learning https://t.co/EKUftseecu

discuss with author: https://t.co/tYpKDkWiHc

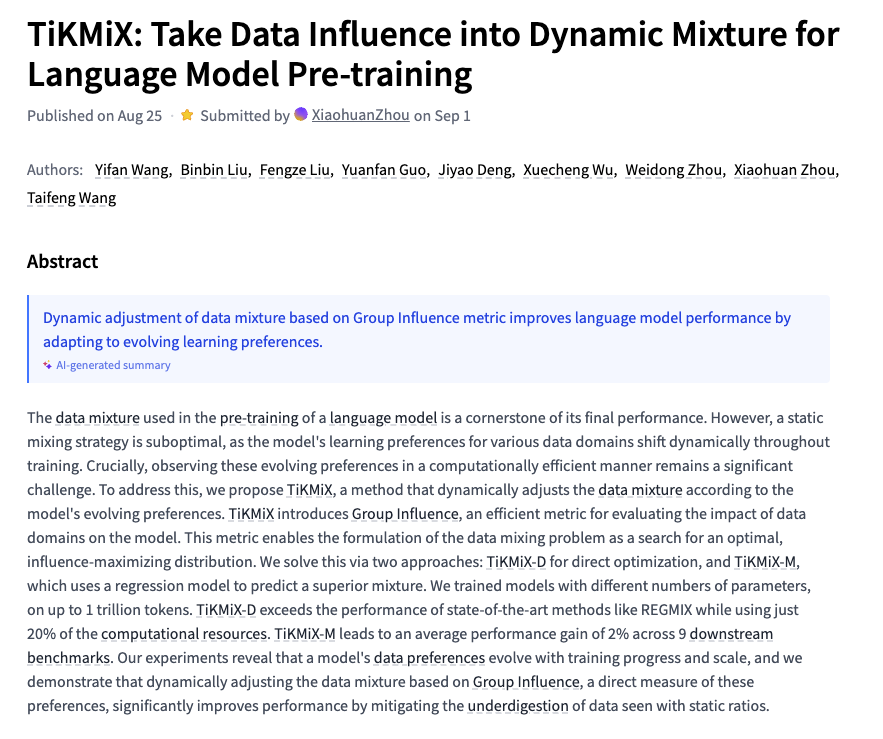

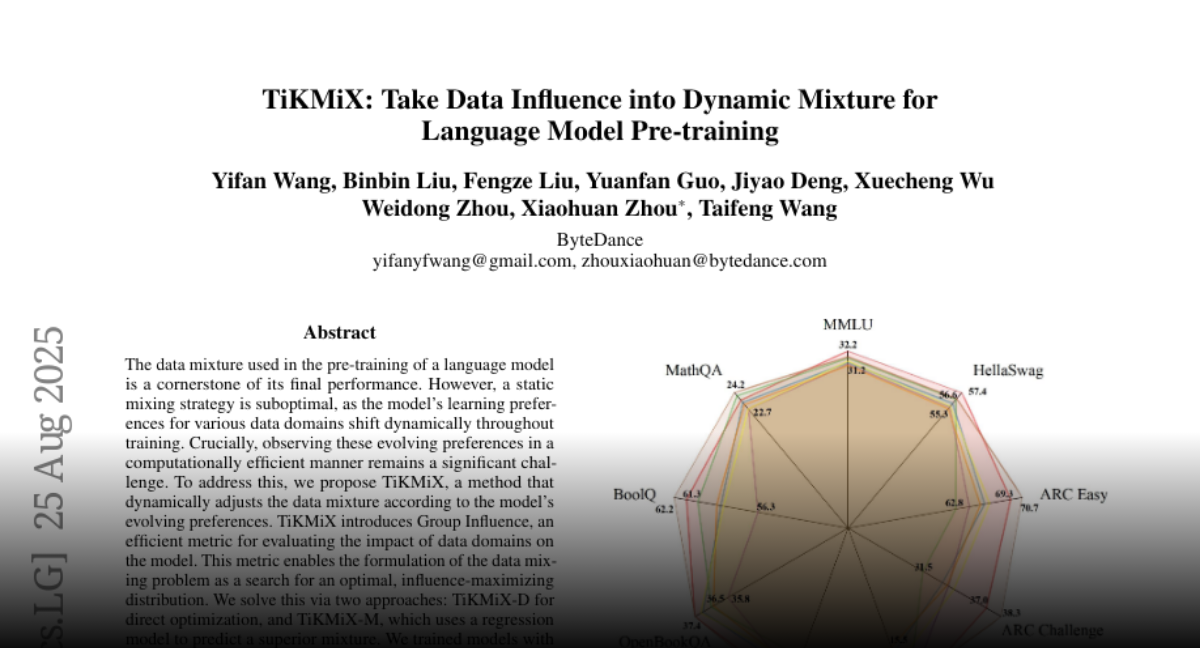

TiKMiX: Take Data Influence into Dynamic Mixture for Language Model Pre-training https://t.co/BVHpUzRETN

discuss with author: https://t.co/pbInr4X4Bt

Droplet3D: Commonsense Priors from Videos Facilitate 3D Generation https://t.co/Iovf5DW91h

discuss with author: https://t.co/VvRSuqc9O0

Lovely time presenting at #AIDev Amsterdam today ❤️ We explored some 📹 models (Wan, LTX, etc.), their existing capabilities, and limitations. I am glad that the attendees found my presentation to be an enjoyable experience 🫡 Find the slides here ⬇️ https://t.co/jDRNC2fPgI https://t.co/TlxdZJcWAe

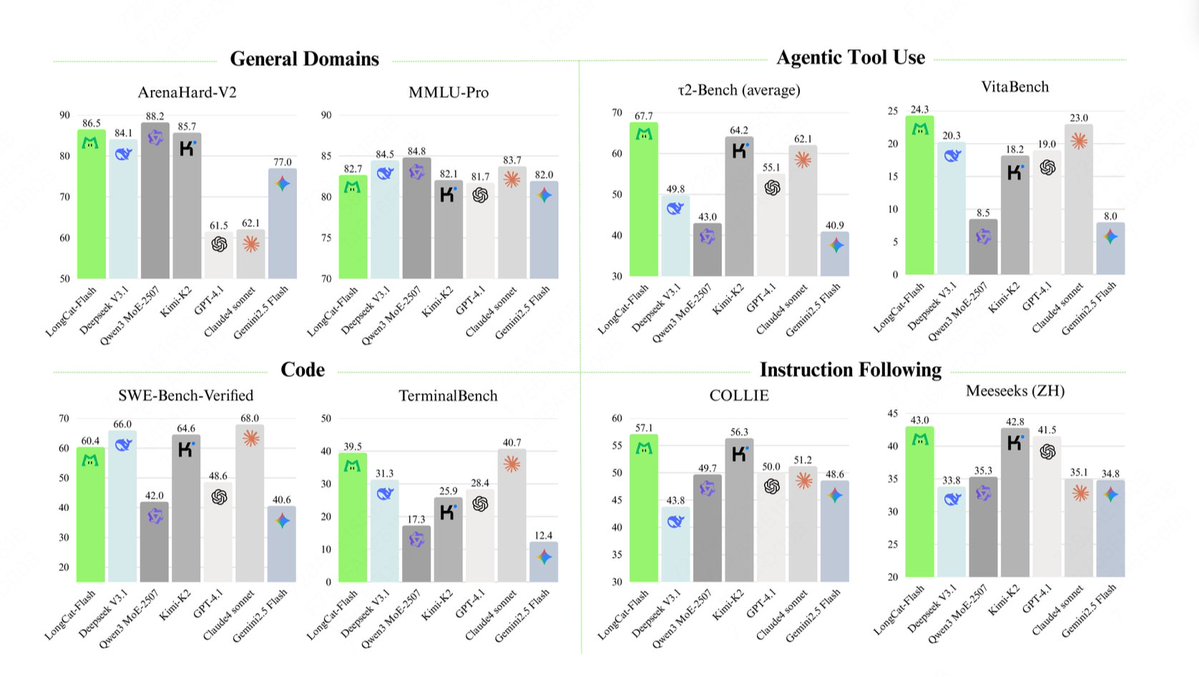

Meituan just open-sourced their new MoE LLM, LongCat, on @huggingface. It's exciting to see new players! The model looks very interesting too, and it comes with a technical report. https://t.co/DduHMQxw5F https://t.co/QMq0K8qJa0

🚀 LongCat-Flash-Chat Launches! ▫️ 560B Total Params | 18.6B-31.3B Dynamic Activation ▫️ Trained on 20T Tokens | 100+ tokens/sec Inference ▫️ High Performance: TerminalBench 39.5 | τ²-Bench 67.7 🔗 Model: https://t.co/Ljsrj9sI2H 💻 Try Now: https://t.co/Bfu4mIi8fH https://t.co/pB6NufVovF