@omarsar0

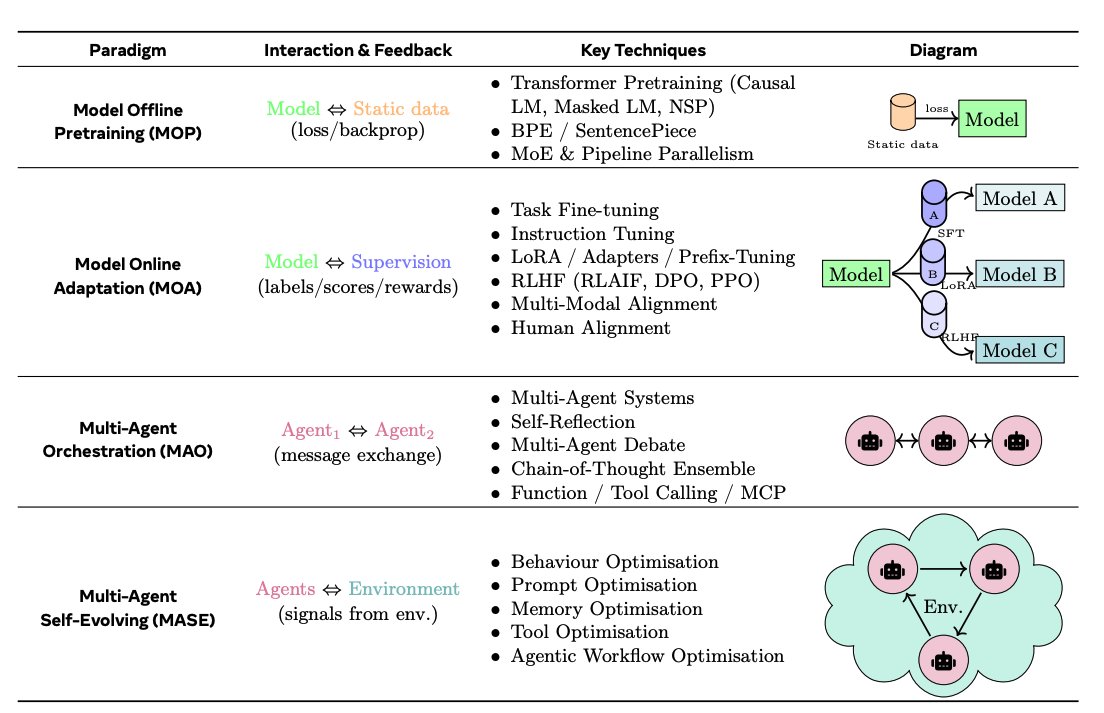

LLM-centric learning paradigms:

- MOP (Model Offline Pretraining): static pretraining on large corpora; no adaptation after deployment.
- MOA (Model Online Adaptation): post-deployment updates via fine-tuning, adapters, or RLHF.
- MAO (Multi-Agent Orchestration): multiple agents coordinate through message exchange or debate, without changing model weights.
- MASE (Multi-Agent Self-Evolving): agents interact with their environment, continually optimising prompts, memory, tools, and workflows.
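To make the MASE idea concrete, here is a minimal, hypothetical Python sketch of a self-evolving loop. A frozen toy "model" is scored against an environment, and the system keeps only the prompt edits that improve that score, logging each accepted change to memory. Everything here (`frozen_model`, `CANDIDATE_EDITS`, the scoring rule) is an illustrative assumption, not the method of any specific paper or framework. It also simplifies to a single agent evolving only its prompt, whereas MASE as described above covers multiple agents and also evolves memory, tools, and workflows.

```python
from dataclasses import dataclass, field

# Hypothetical prompt edits the system may try (illustrative only, not from any framework).
CANDIDATE_EDITS = [" Be concise.", " Think step by step.", " Double-check the result."]


@dataclass
class AgentSystem:
    prompt: str
    memory: list[str] = field(default_factory=list)
    weights_version: int = 0  # never changes: this loop does not touch model weights


def frozen_model(prompt: str, x: int) -> int:
    """Hypothetical stand-in for a fixed LLM asked to return 2 * x.

    It answers correctly only when the prompt asks for step-by-step reasoning,
    mimicking prompt sensitivity without any weight update.
    """
    return 2 * x if "step by step" in prompt else 2 * x + 1


def score(system: AgentSystem, tasks: list[int]) -> float:
    # Environment feedback: fraction of tasks answered correctly.
    correct = sum(frozen_model(system.prompt, x) == 2 * x for x in tasks)
    return correct / len(tasks)


def evolve(system: AgentSystem, tasks: list[int], rounds: int = 3) -> AgentSystem:
    # Greedy search over prompt edits: keep an edit only if it strictly improves the score.
    best = score(system, tasks)
    for r in range(rounds):
        for edit in CANDIDATE_EDITS:
            candidate = system.prompt + edit
            trial = AgentSystem(candidate, system.memory, system.weights_version)
            s = score(trial, tasks)
            if s > best:
                system.prompt, best = candidate, s
                system.memory.append(f"round {r}: kept edit {edit.strip()!r} (score {s:.2f})")
    return system


if __name__ == "__main__":
    evolved = evolve(AgentSystem("Answer the question."), tasks=[1, 2, 3])
    print(evolved.prompt)  # Answer the question. Think step by step.
    print(evolved.memory)  # ["round 0: kept edit 'Think step by step.' (score 1.00)"]
```

The key contrast with MOA is that `weights_version` stays at 0: all improvement comes from changing what surrounds the model, not the model itself. A real system would typically use an LLM (or other agents) to propose the edits instead of a fixed list.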