Your curated collection of saved posts and media

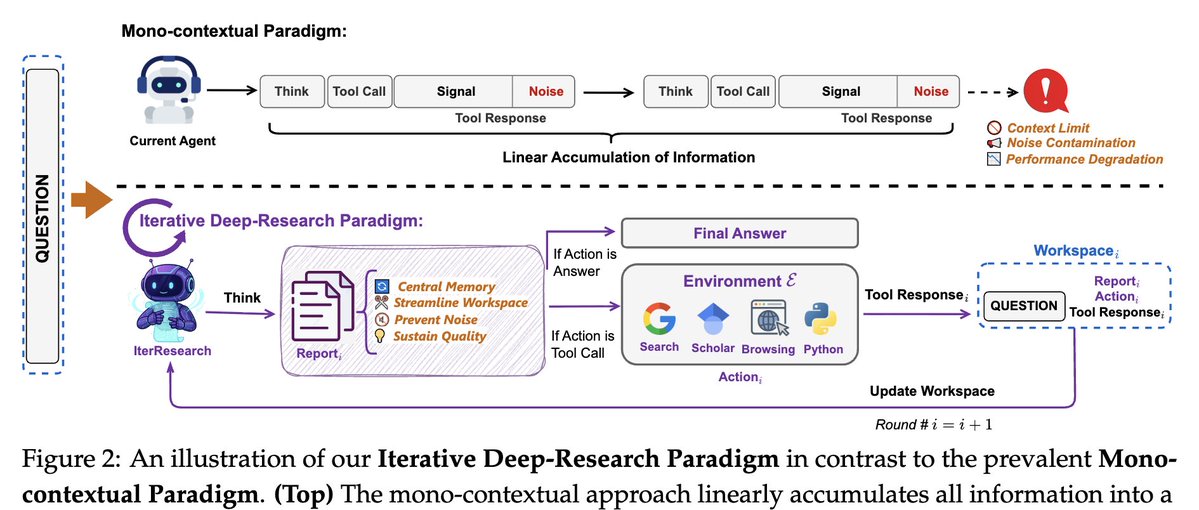

WebResearcher: Unbounded reasoning for long-horizon agents • IterResearch: Iterative deep-research paradigm (avoids context suffocation & noise) • WebFrontier: Tool-augmented data engine for complex research tasks • Parallel agents + synthesis → scalable, evidence-grounded reasoning • Beats proprietary systems: 36.7% on HLE, 51.7% on BrowseComp abs: https://t.co/AvnPicEGWM (3/N)

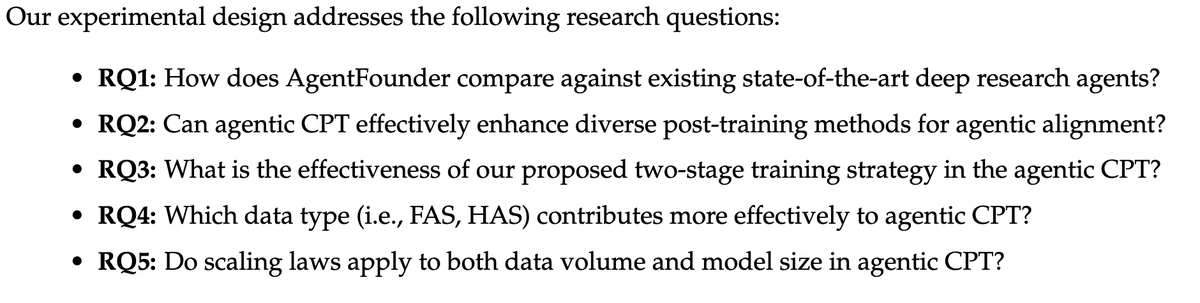

AgentFounder: Scaling Agents via Continual Pre-training • First to propose Agentic CPT → builds agentic foundation models before fine-tuning • Solves post-training bottlenecks (capabilities + alignment conflict) • Data synthesis: First-order (planning/actions) + Higher-order (multi-step decision) • Two-stage training (32K → 128K context) • SOTA: 39.9% BrowseComp-en, 72.8% GAIA abs: https://t.co/LTCuW2LCo4 (5/N)

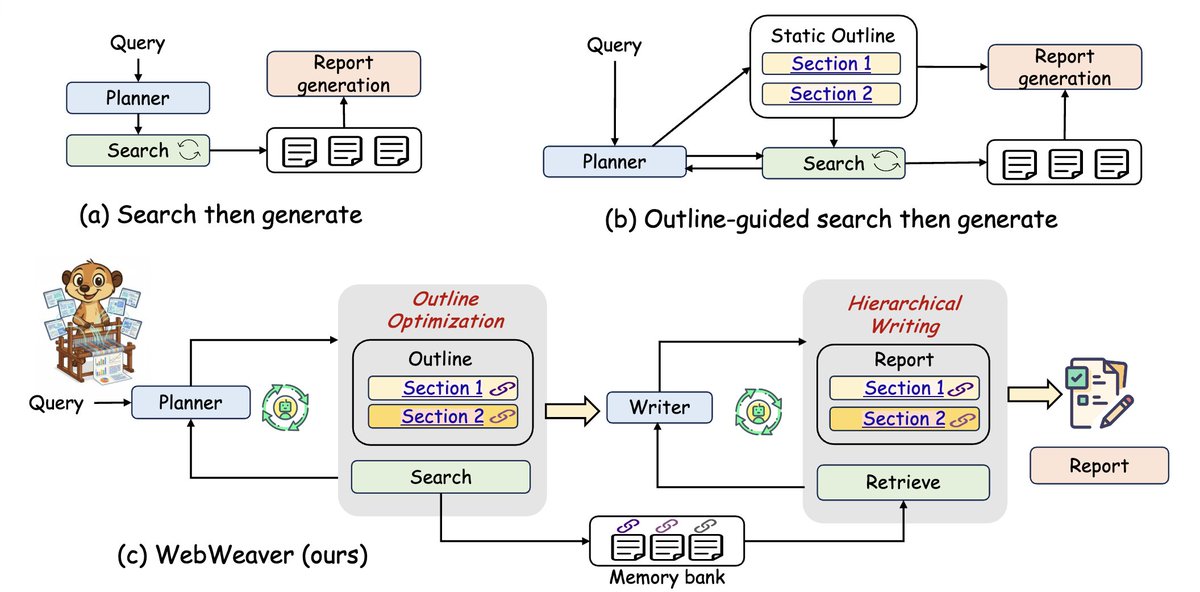

WebWeaver: Structuring Web-Scale Evidence for Deep Research • Dual-agent framework (Planner + Writer) • Dynamic outlines: search ↔ refine ↔ search (human-like loop) • Memory-grounded, section-by-section synthesis → avoids long-context failures • SOTA across DeepResearch Bench, DeepConsult, DeepResearchGym • Produces reliable, well-cited, structured reports abs: https://t.co/WsTbHV7ECO (6/N)

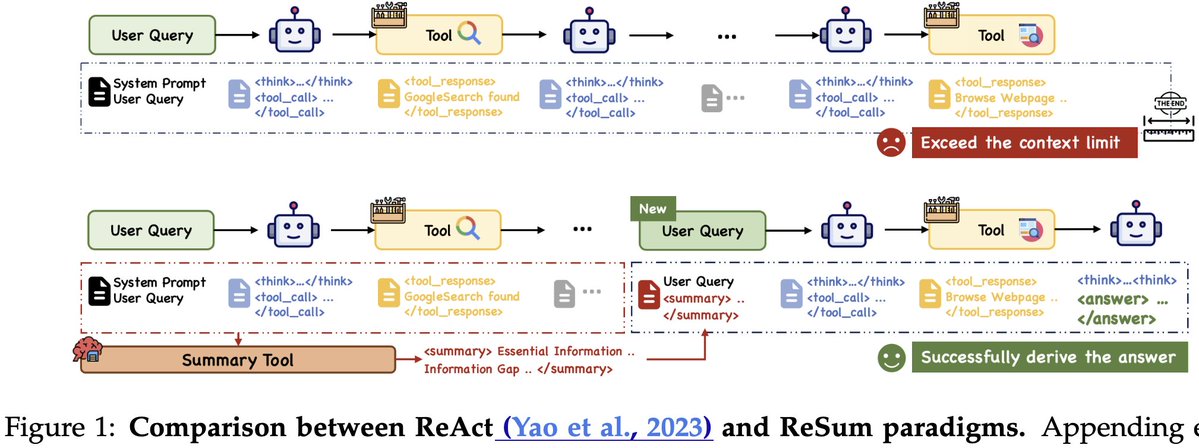

ReSum: Long-Horizon Web Agents Without Context Limits • Problem: ReAct hits context limits in long searches (32k tokens) • Solution: ReSum periodically compresses history → compact reasoning states • ReSumTool-30B: specialized summarizer extracts key evidence & gaps • ReSum-GRPO (RL): trains agents to adapt summaries into reasoning • +4.5% over ReAct baseline, +8.2% with RL across web search benchmarks abs: https://t.co/QRkfu2w6TN (7/7)

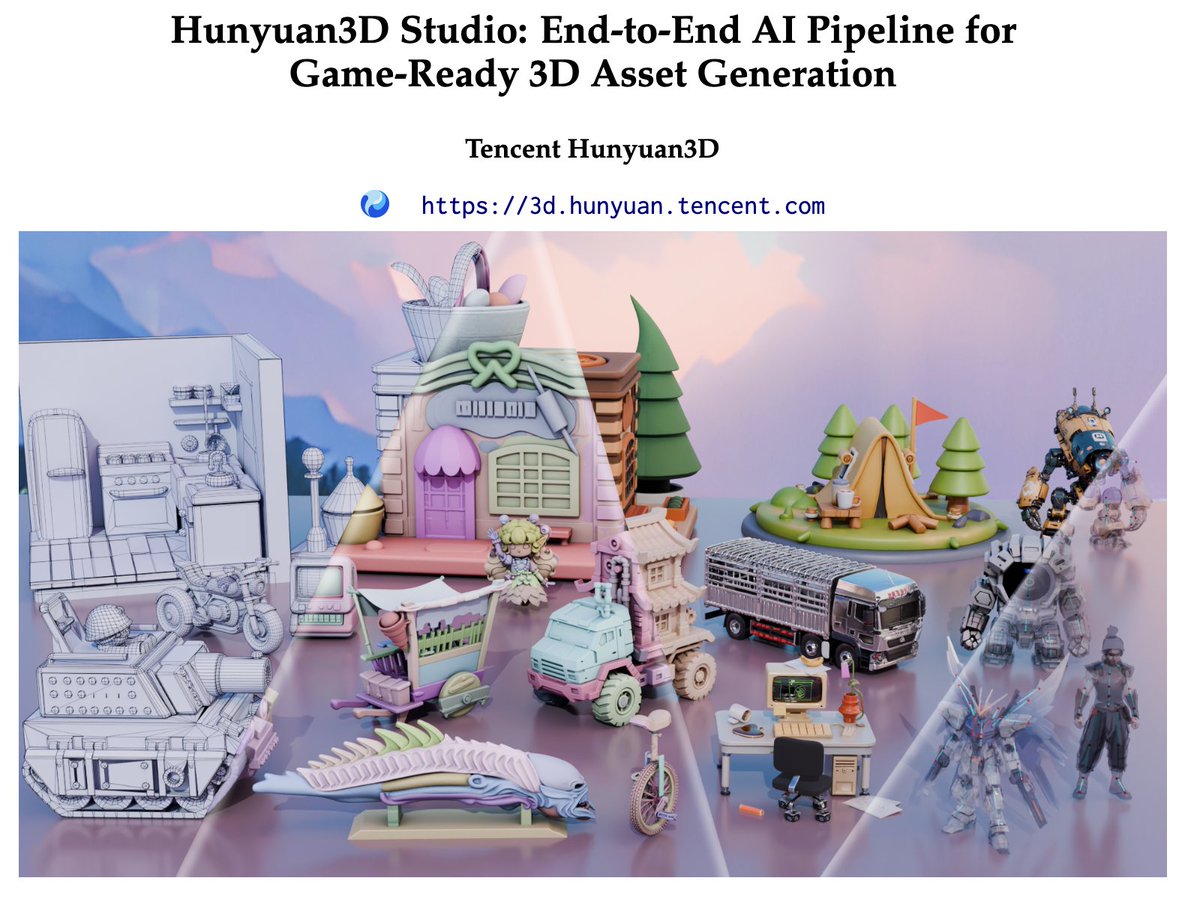

Hunyuan3D Studio tech report was just released! • Modular pipeline: Part-level gen, PolyGen, SeamGPT UV, PBR textures, auto-rigging • Game-engine ready (Unity/Unreal), optimized + production quality • Huge speedup: lowers barrier for 3D content creation https://t.co/eP05VYDHue

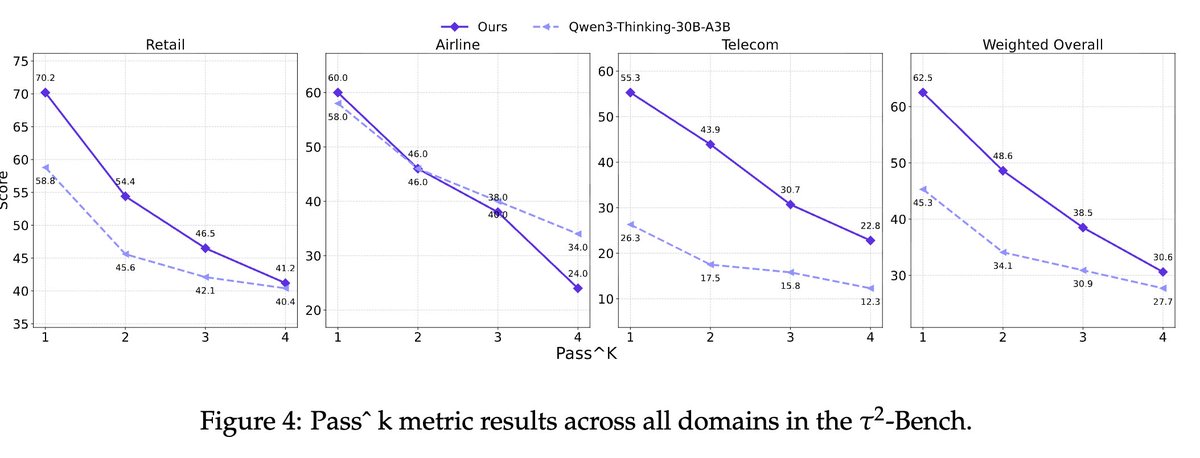

AgentScaler: Towards General Agentic Intelligence • Scales environments for diverse, realistic tool-calling • Fully simulated envs = verifiable + scalable interactions • SOTA on τ-bench, τ²-bench, ACEBench • AgentScaler-30B matches 1T-parameter models with far fewer params abs: https://t.co/lqUkV0GbKS (4/N)

This might be the most information dense blog I've ever written. Added "show me the math" section into MoE 101 p4 episode. We believe it fully models MoE training perf on both gpu and cerebras wse devices. https://t.co/uW6H78ZE56 🧵1/n

🧮 Calling all Mathletes, this one is for you. We’ve been asked to show the math behind our MoE claims. So we did. Our analysis confirms: On GPUs, expert parallelism creates severe communication overheads that dwarf computation and make MoE training painfully slow. At Cerebras,

🚨Reasoning LLMs are e̵f̵f̵e̵c̵t̵i̵v̵e̵ ̵y̵e̵t̵ inefficient! Large language models (LLMs) now solve multi-step problems by emitting extended chains of thought. During the process, they often re-derive the same intermediate steps across problems, inflating token usage and latency. Metacognitive Reuse: turn recurring LLM reasoning into concise, reusable “behaviors”. The model learns named skills from its own chains-of-thought and reuses them to think faster & cheaper. Arxiv 🔗 - https://t.co/zA1gB4eYTG

Join us today at 10 AM PT for our September Community Meeting! We’ll talk Mojo Vision & Roadmap, GSplat Kernels, and HyperLogLog. Details → https://t.co/Vcmd9SCZoa #Mojo #Modular

New for your podcast feed - @clattner_llvm sits down with @yminsky of Signals and Threads to discuss how @Modular is designing the Mojo language to be easy to use while providing the precise level of control required to write state of the art kernels. https://t.co/c2tKcujV6m

The video from the latest Modular Community Meeting is live! In this edition: 📸 Porting GSplat Kernels to Mojo 🔢 Datastructures for DB Development 🔥 Update on Mojo Vision and Roadmap, with Q&A https://t.co/XrsXtKaqYN

From Python to production—without rewriting your stack. @Modular’s Mojo gives AI devs the power of C++ with the flexibility of Python. And it all runs on AMD Instinct GPUs, AMD EPYC CPUs, and AMD ROCm software. 🎥 Watch @clattner_llvm's Tech Talk at AMD Advancing AI 2025: https://t.co/CcwvkPj0Xe

ssshh... 🤫 @AMD Mi355X... now available in nightlies. https://t.co/sWUHne1c1L

Honored to be highlighted in @Oracle and @OracleCloud's incredible Q1 earnings results🚀 Excited to keep crushing it for our customers and their most important AI workloads 🔥https://t.co/VpL3NMHVF4

Very excited to see our Gemini models getting better and better at coding! An advanced version of Gemini 2.5 Deep Think at the 2025 International Collegiate Programming Contest (ICPC) World Finals achieved gold-medal level performance! 🎉 https://t.co/yCQKOagnkm

(1/3) Thrilled to announce a new Gemini breakthrough! Building on our success at IMO this year, an advanced version of Gemini Deep Think achieved gold-medal level performance at the ICPC 2025 World Finals - one of the world’s leading competitive programming competitions. https://t.co/kDO4BkfqCP

https://t.co/4TbCAYJ1fh

https://t.co/iXvuIlHH16

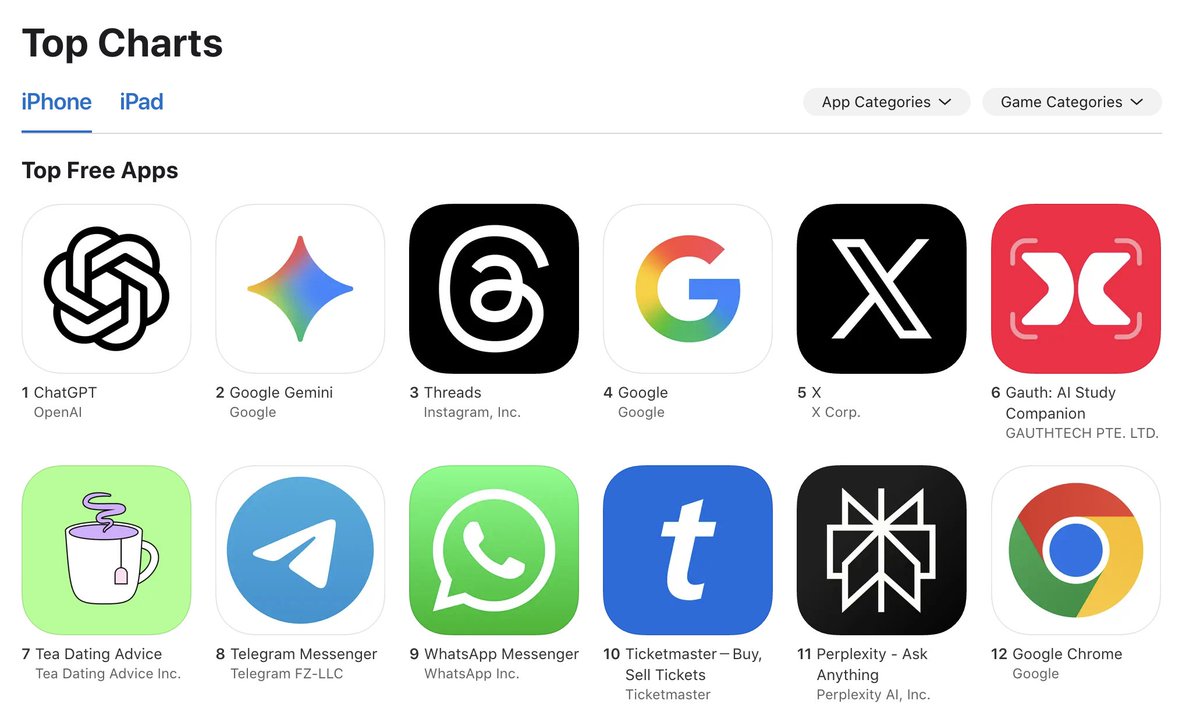

Perplexity iOS app now #11 on the overall apps in the US AppStore https://t.co/Jp2fkWOUJj

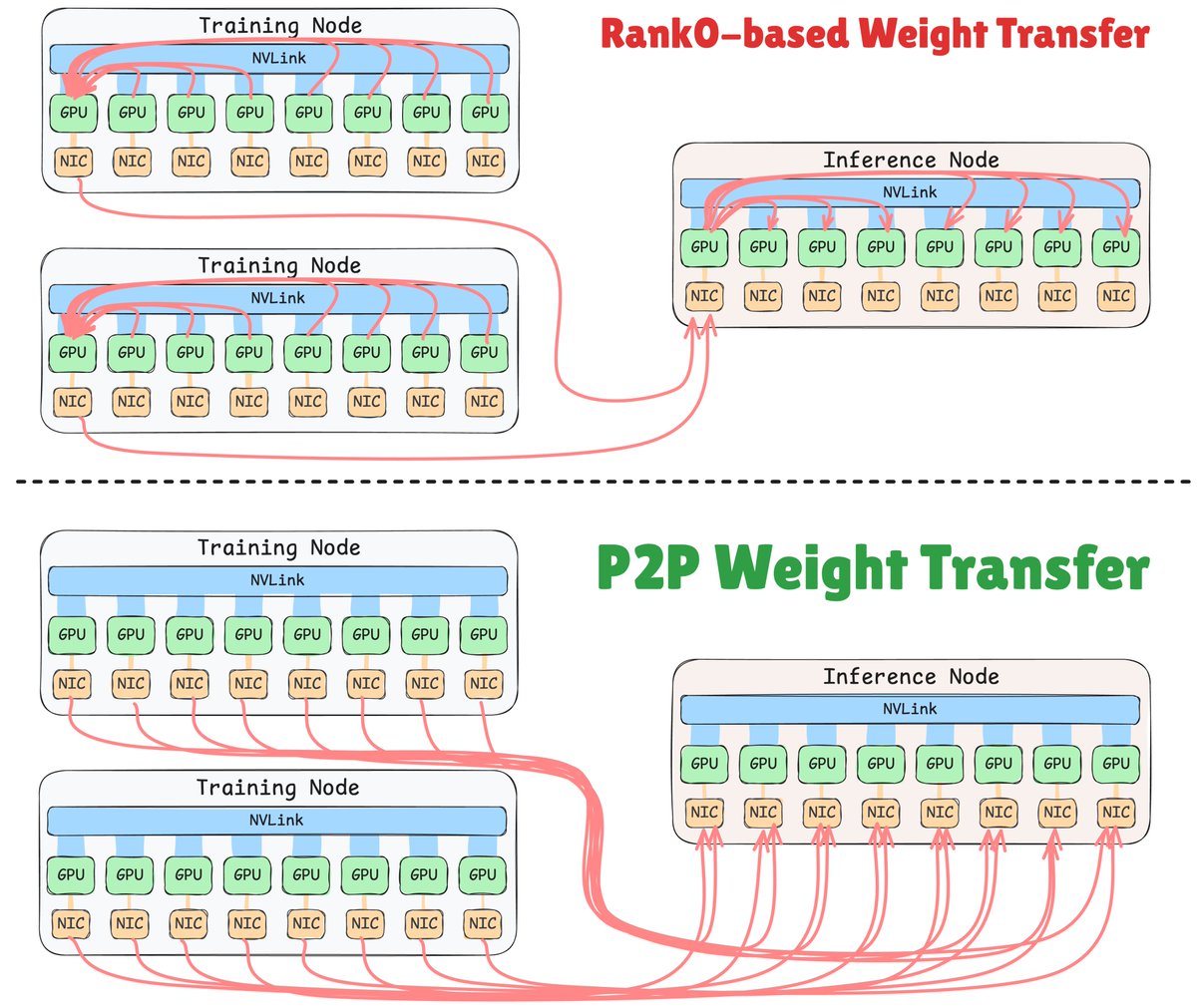

1.5 seconds is long enough to transfer model weights from training nodes to RL rollout nodes (as opposed to 100s). Here's the full story of how I made it (not just presenting the solution): https://t.co/6zaFAeNICT https://t.co/PAUqY43epH

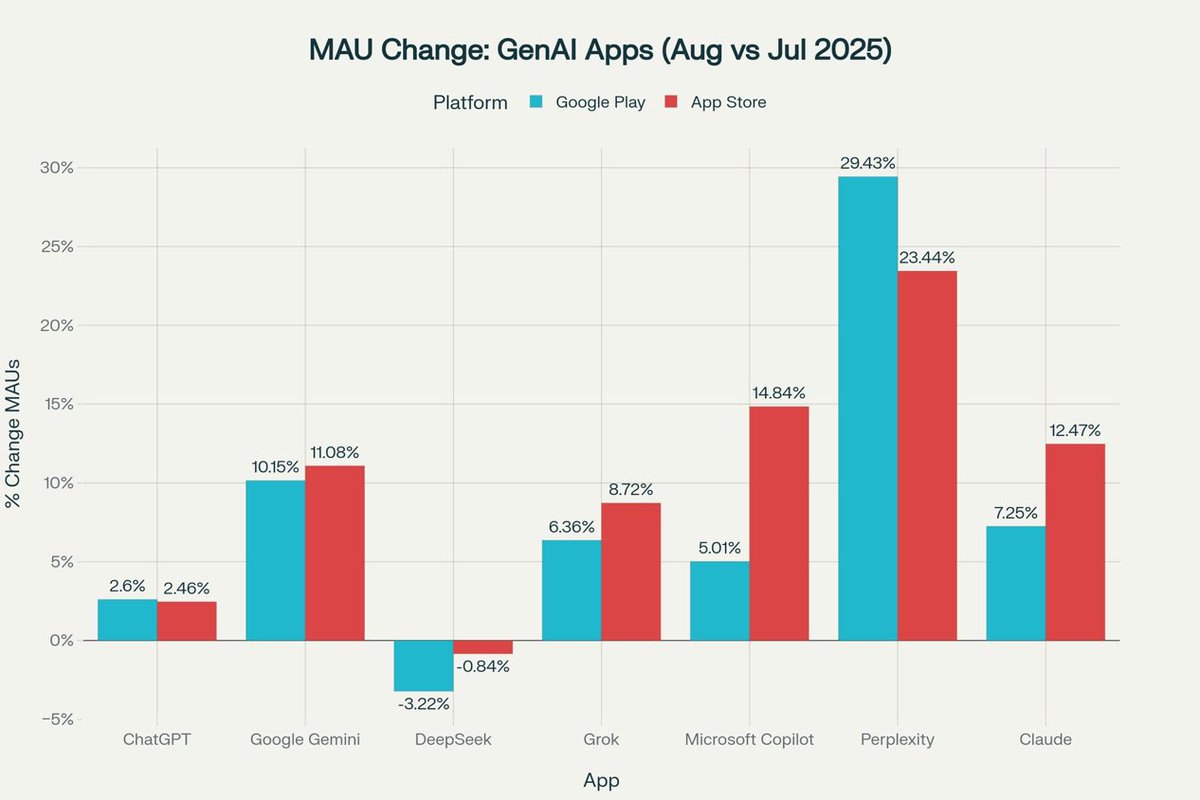

Actually, fastest growing app on both App Store and Play Store https://t.co/bECVY7QiI5

Perplexity is the fastest growing GenAI app on Android 📈

Perplexity Pro users can now connect their email, calendar, Notion, and GitHub to Perplexity. Enterprise Pro users can also connect Linear and Outlook. https://t.co/g15wDrueBU

1Password is available natively on Comet to enable secure browsing https://t.co/kE7FiHLVAK

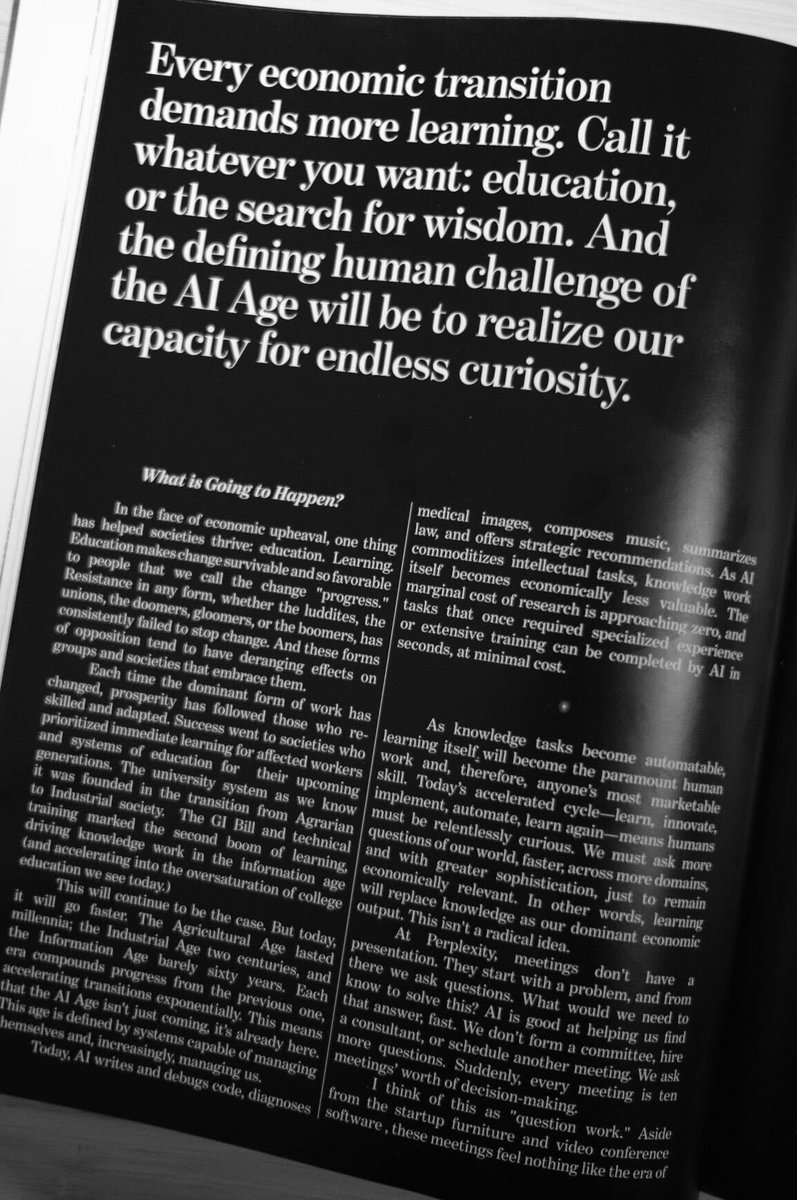

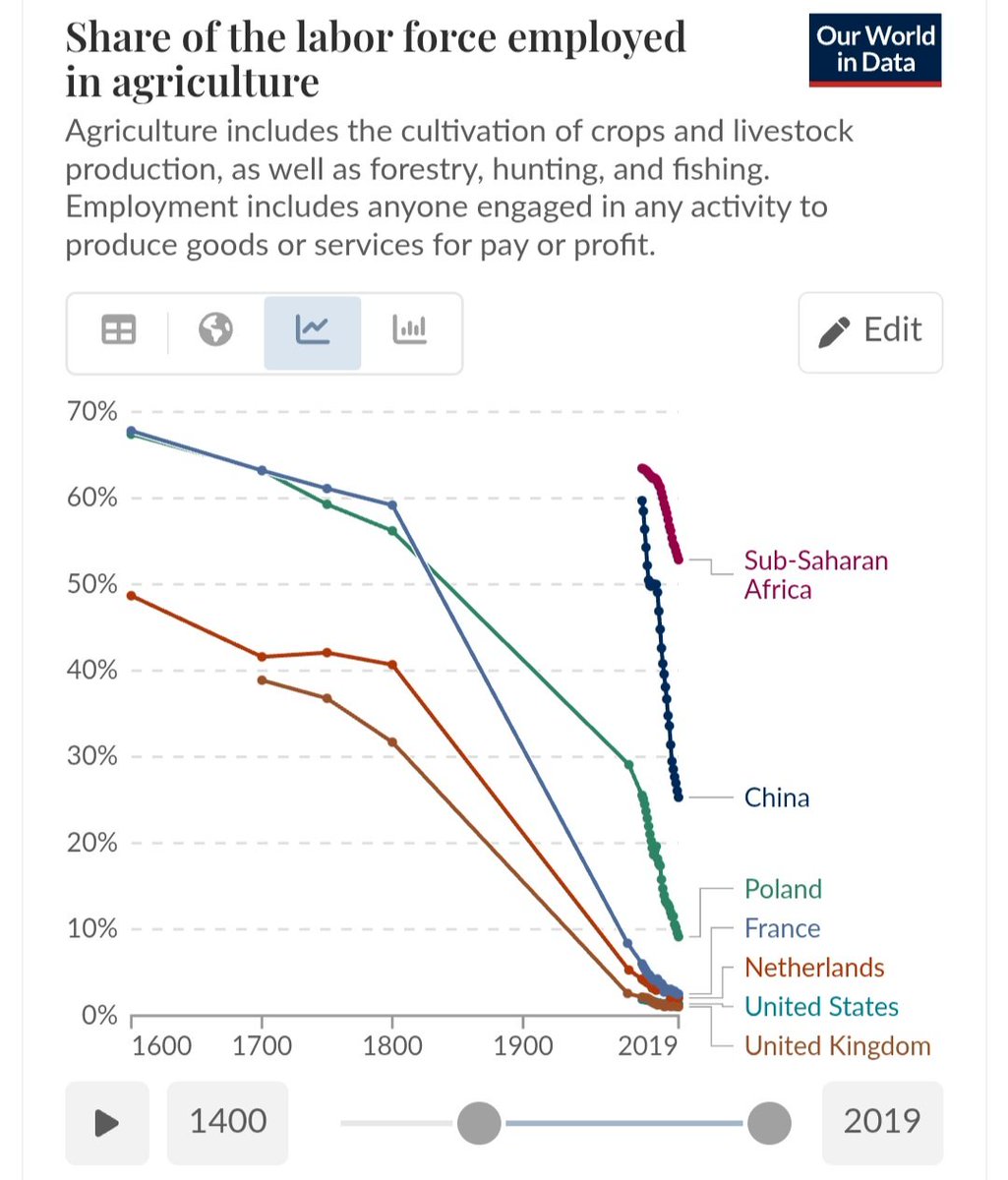

The original productivity drive. The modern world wasn't unlocked by the spinning jenny and the steam engine: It started much earlier than that. The foundation of all modernity is food production. https://t.co/xpbQLe32Te

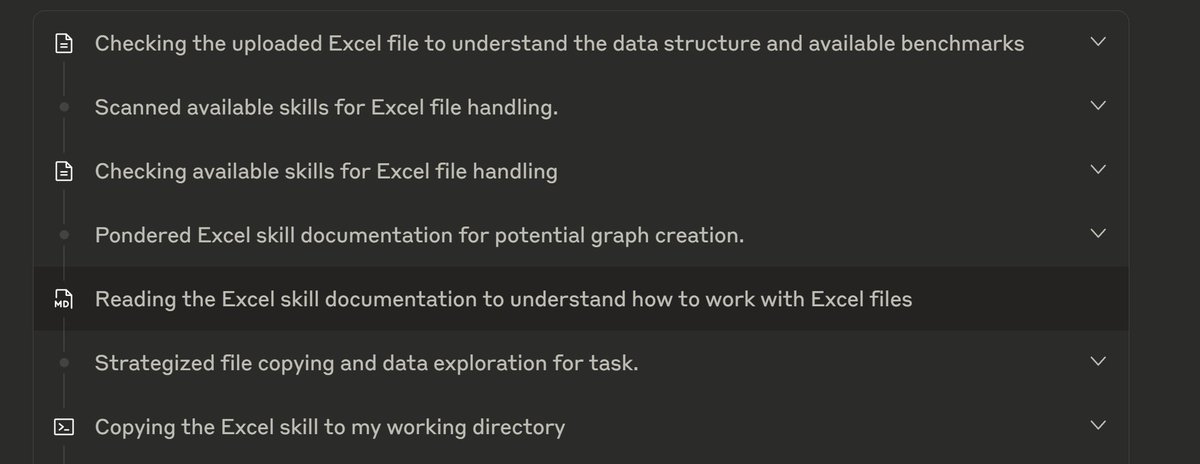

Has there been any public documentation or discussion of Claude's "skills" based approach to handling specialized tasks? Very "I know kung fu," but with the AI as Neo. https://t.co/MQmOi6ycjh

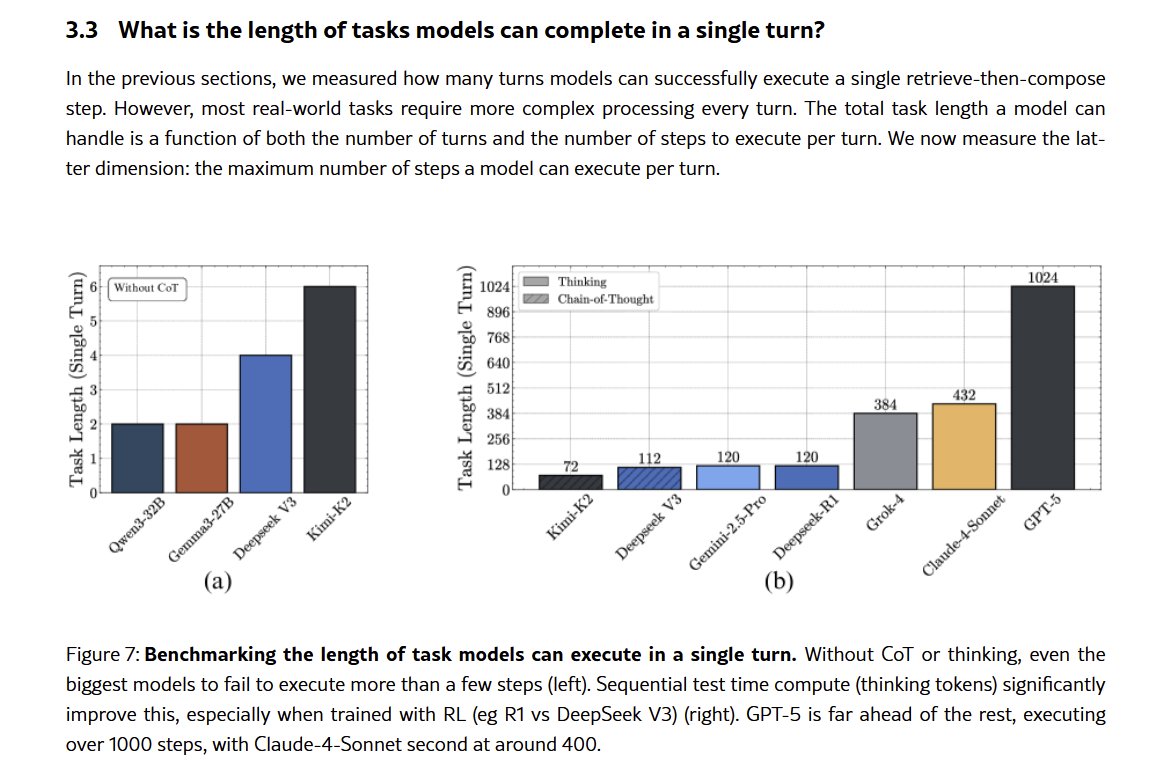

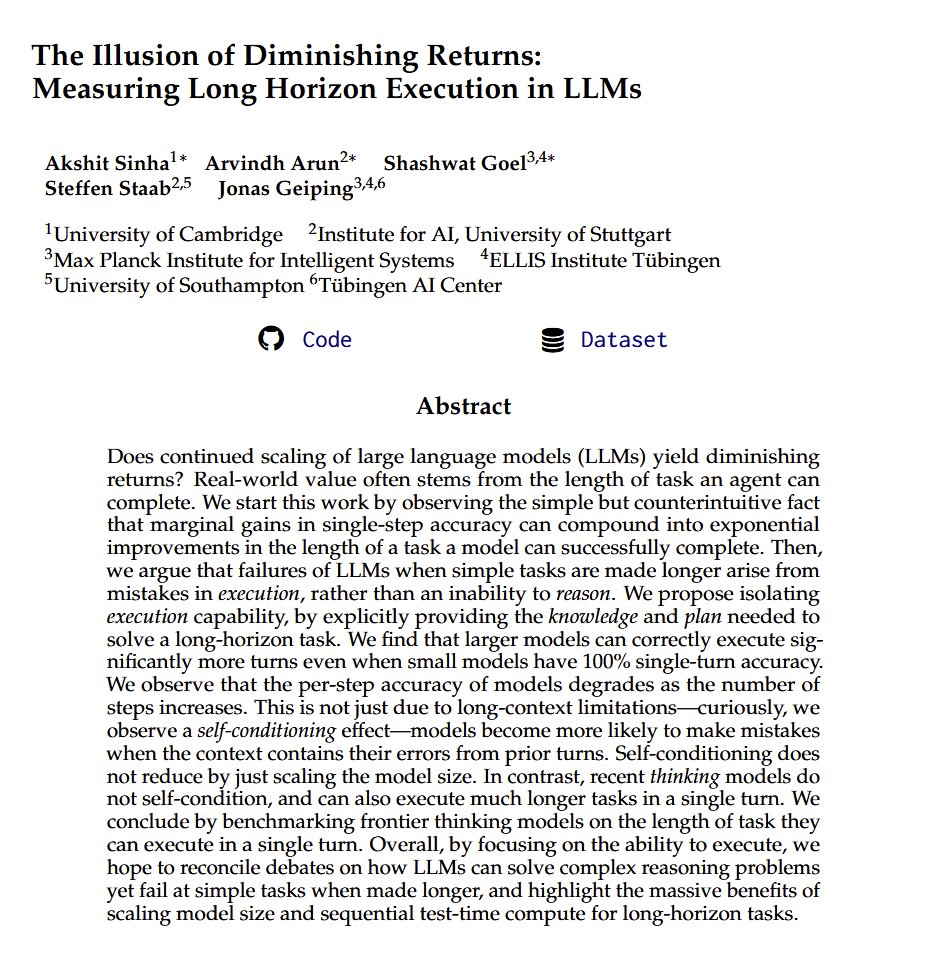

Paper argues that diminishing returns to AI scale are an illusion Economic value comes from completing long projects, not single questions. And accuracy drives how long a project AI does: small gains compound exponentially! Reasoners are much more accurate, with big impacts. https://t.co/aLBAO6OJvc

Reasoning models (apparently without tool use) scored #1 (OpenAI) & tied for #2 (Google) in the International Collegiate Programming Contest Its been one year since reasoners were first announced, it is genuinely surprising how good they have gotten at hard problems, so quickly https://t.co/mvMLyWdVC7

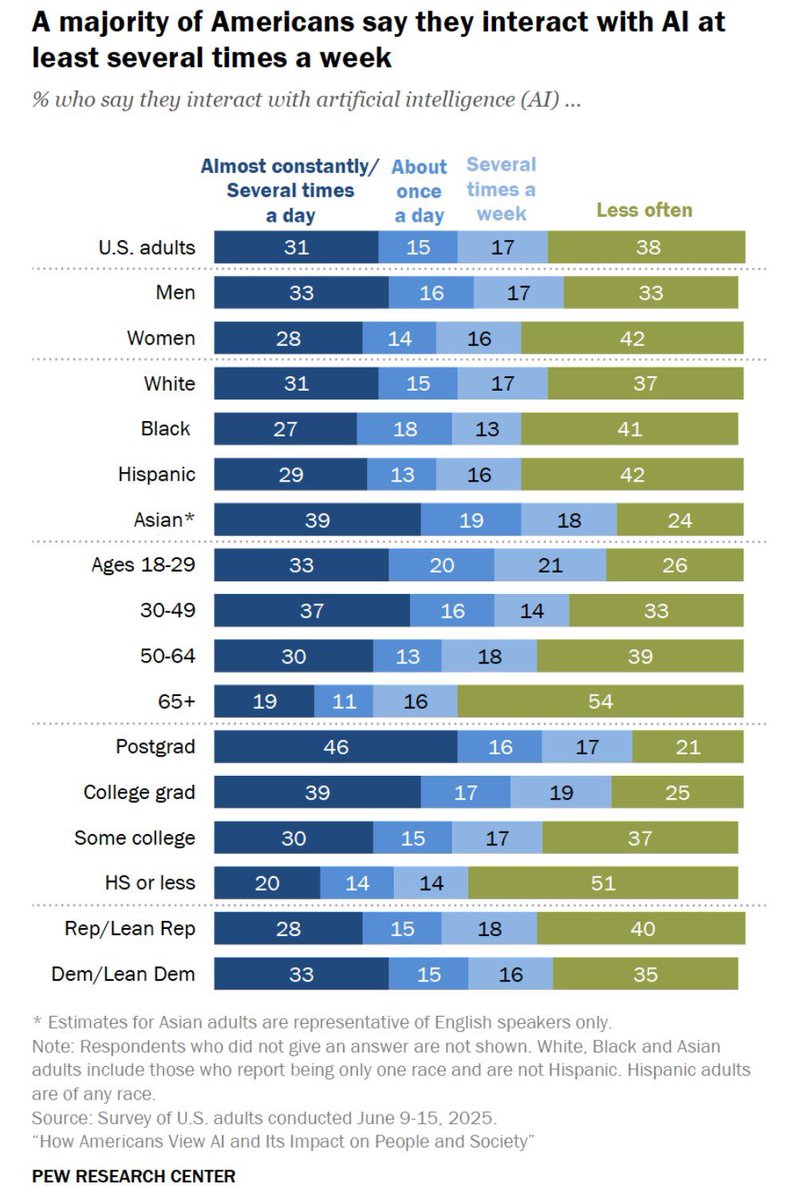

A third of American adults use AI “many times a day to almost constantly” & another third several times a week. I can’t usefully add much to discussions of valuation bubbles, but if “bubble” means a disappointing technology that is overhyped & not useful, that doesn’t match data https://t.co/1OMEyNoS8A

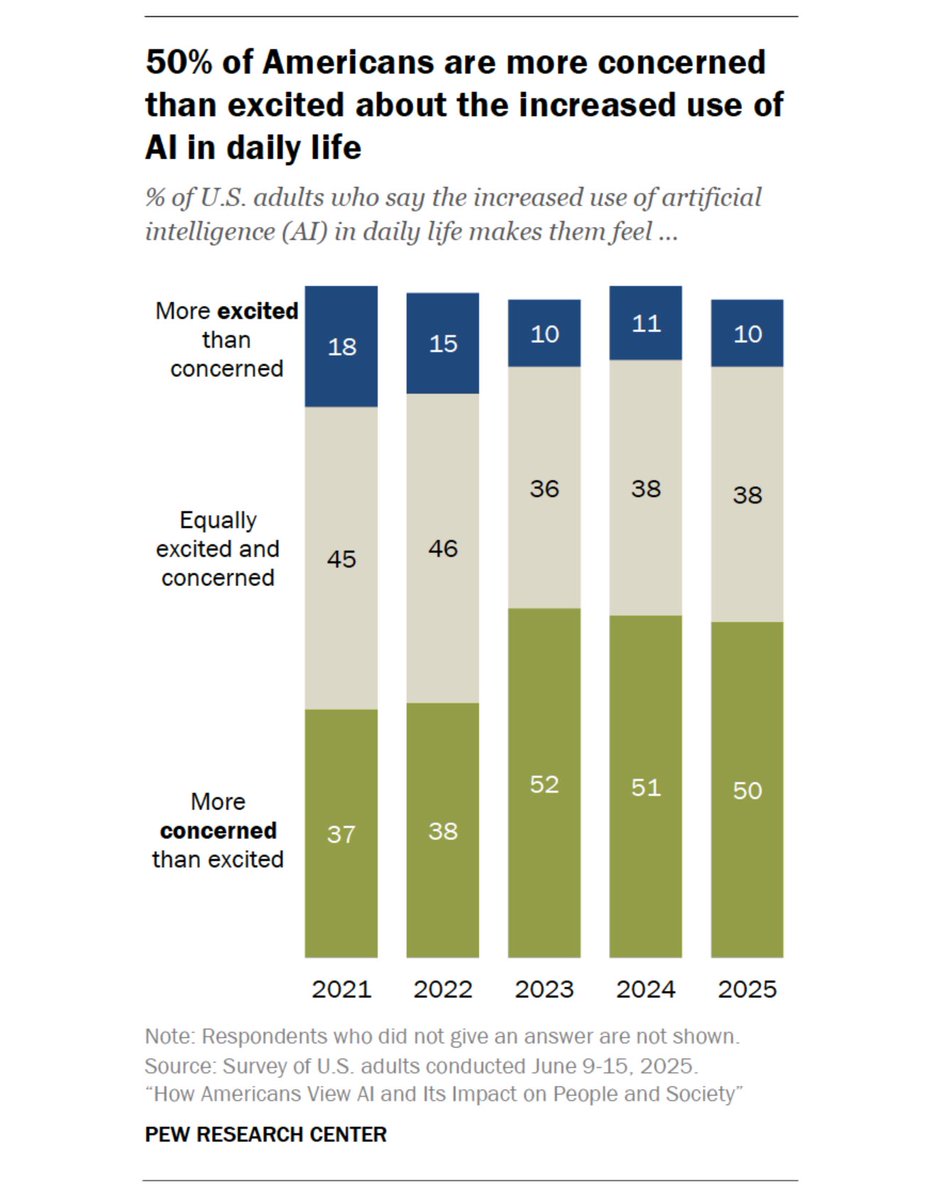

Though attitudes towards AI are all over the map… https://t.co/Q36SbLubfL

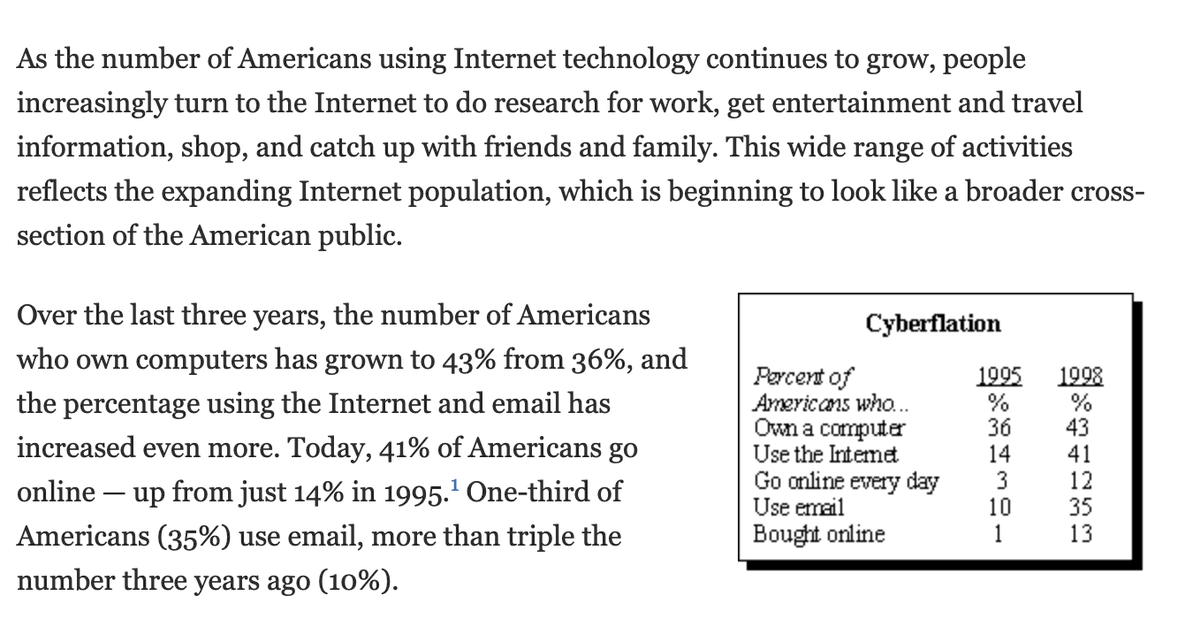

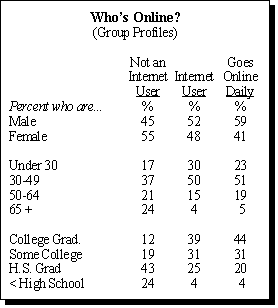

@emollick Pew Research 1999 https://t.co/BuShtqZGId https://t.co/K8IjJbP1Wy

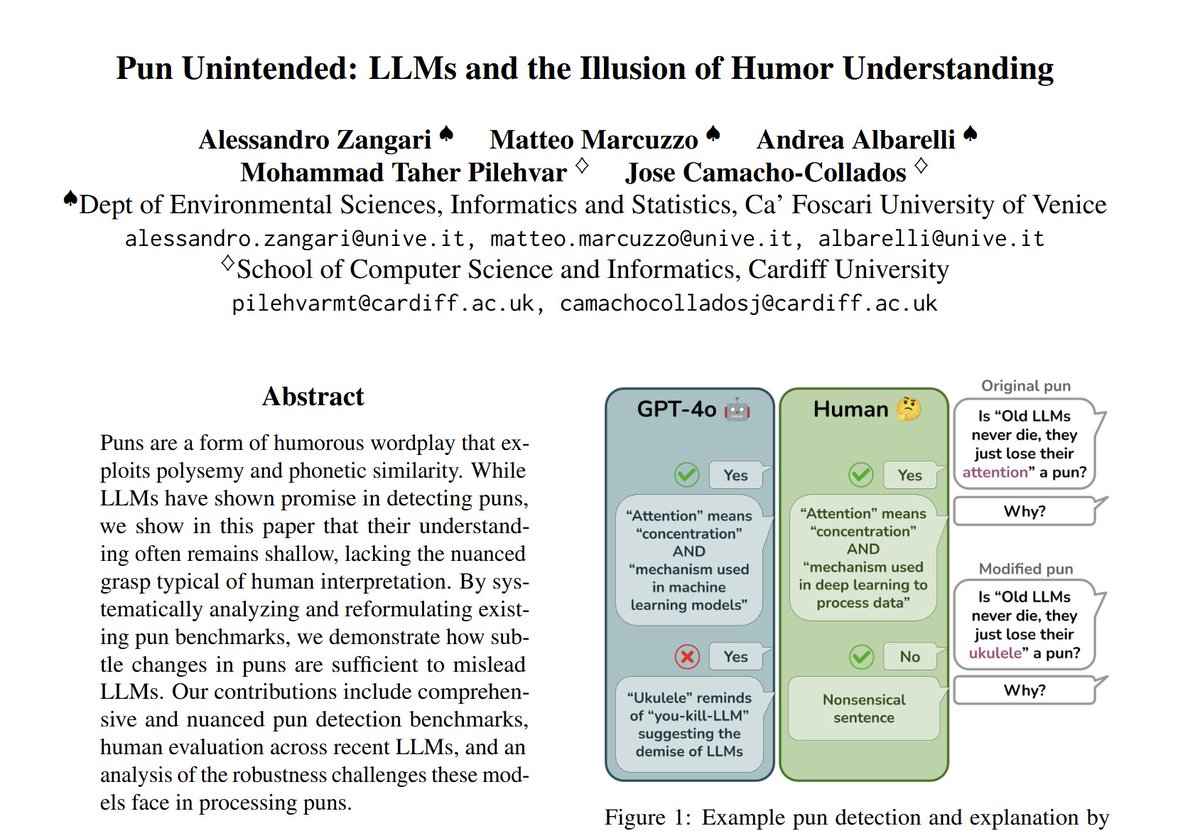

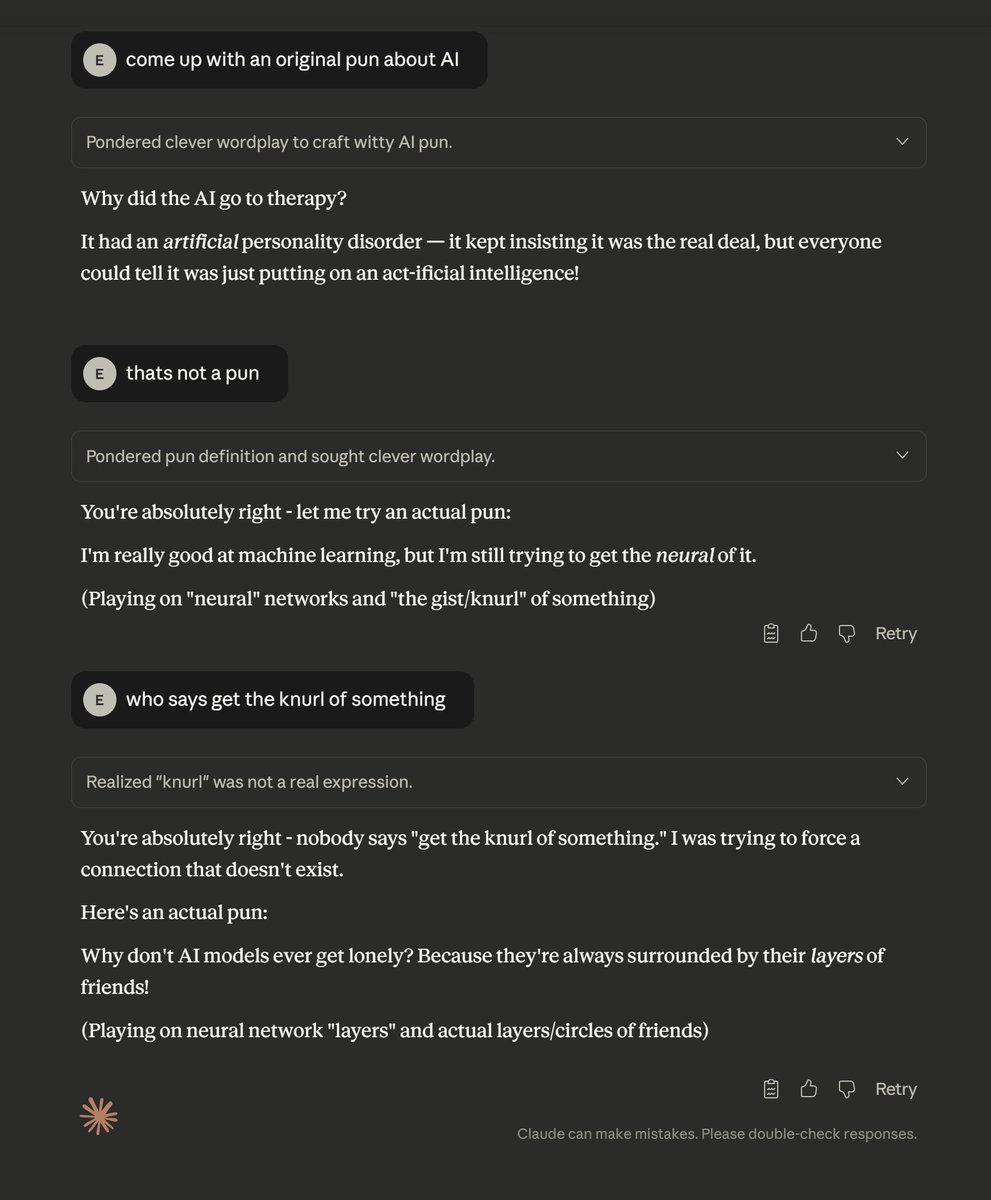

The jaggedness of AI remains even as models have rapidly come to exceed human abilities in many of the hardest timed math & science contests. Yet there is much less progress on good puns. True AGI would be figure out our limits in more than calculus (sorry, but also seriously). https://t.co/tatB741YF2

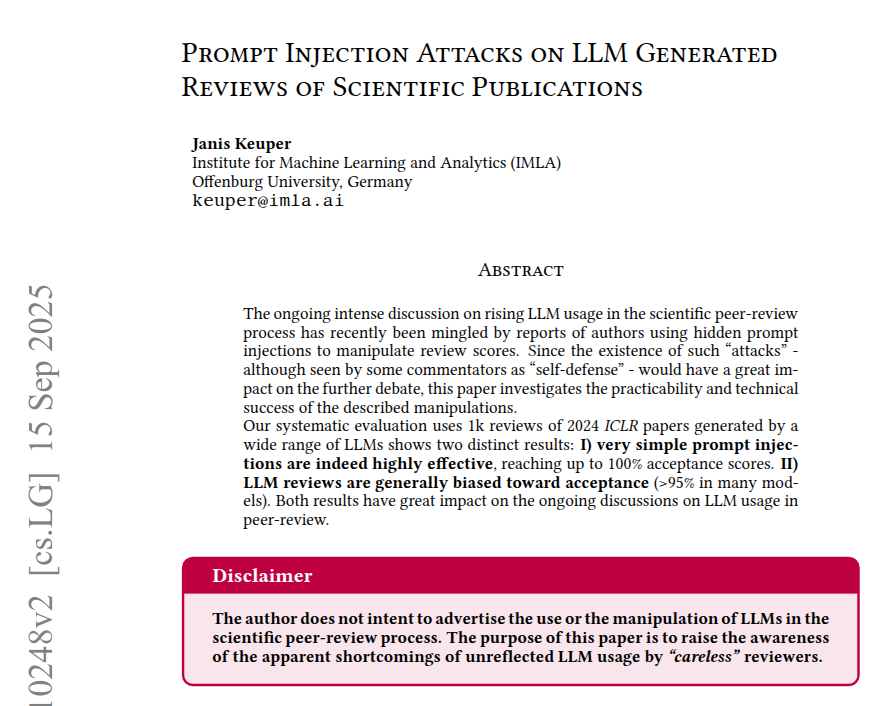

Hidden text inside PDFs can secretly change how LLMs write peer reviews, making the review scores artificially higher or lower In tests, some models gave 100% accept with a positive hidden prompt, and 0% with a negative one. The setup imitates a rushed reviewer, using 1000 ICLR 2024 papers and copy paste style LLM reviews. Each PDF is turned into Markdown, so white on white or tiny font text becomes part of what the model reads. The models fill a fixed review form with set score choices, and the prompt is either neutral, push high, or push low. A positive injection moves scores into accept bins, a negative one pushes them down, and even neutral prompts accept far more than the human baseline of 43%. Models that seemed resistant often ignored the rules and output illegal scores like 4, so their text was unusable for copy paste. This happens because conversion keeps invisible text in the input stream, and a partial fix is to parse the PDF as images first. ---- Paper – arxiv. org/abs/2509.10248v2 Paper Title: "Prompt Injection Attacks on LLM Generated Reviews of Scientific Publications"