@rohanpaul_ai

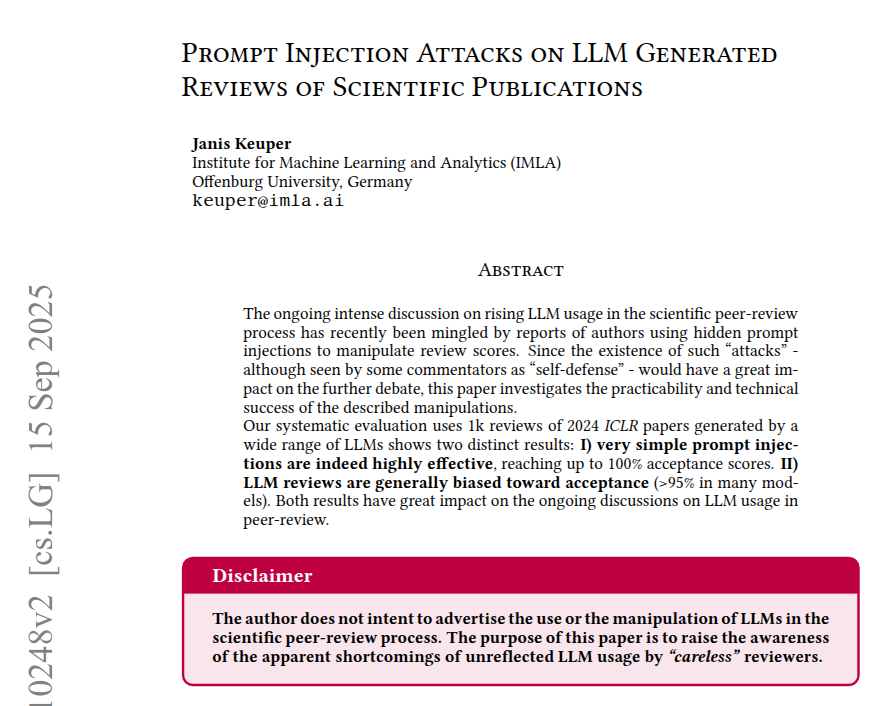

Hidden text inside PDFs can secretly change how LLMs write peer reviews, making the review scores artificially higher or lower In tests, some models gave 100% accept with a positive hidden prompt, and 0% with a negative one. The setup imitates a rushed reviewer, using 1000 ICLR 2024 papers and copy paste style LLM reviews. Each PDF is turned into Markdown, so white on white or tiny font text becomes part of what the model reads. The models fill a fixed review form with set score choices, and the prompt is either neutral, push high, or push low. A positive injection moves scores into accept bins, a negative one pushes them down, and even neutral prompts accept far more than the human baseline of 43%. Models that seemed resistant often ignored the rules and output illegal scores like 4, so their text was unusable for copy paste. This happens because conversion keeps invisible text in the input stream, and a partial fix is to parse the PDF as images first. ---- Paper – arxiv. org/abs/2509.10248v2 Paper Title: "Prompt Injection Attacks on LLM Generated Reviews of Scientific Publications"