Your curated collection of saved posts and media

🚀Excited to share our recent research:🚀 “Learning to Reason as Action Abstractions with Scalable Mid-Training RL” We theoretically study 𝙝𝙤𝙬 𝙢𝙞𝙙-𝙩𝙧𝙖𝙞𝙣𝙞𝙣𝙜 𝙨𝙝𝙖𝙥𝙚𝙨 𝙥𝙤𝙨𝙩-𝙩𝙧𝙖𝙞𝙣𝙞𝙣𝙜 𝙍𝙇. The findings lead to a scalable algorithm for learning action hierarchies from expert demonstrations, which we successfully apply to 𝟭𝘽 Python code data. A thread:🧵

📉SFT might not suffer as much catastrophic forgetting as you think. Lately, there has been much debate around GRPO in the community. RL is hot, but let’s not forget, in the context of LLMs: SFT is the bedrock of almost all RL. Also, there’s still a lot we don’t fully understand about SFT. Paper link: https://t.co/iawopsRn7b 🤔We revisit domain-specific SFT and find that even with only a small learning rate, you can achieve a sweet trade-off: (1) General-purpose degradation is largely mitigated; (2) Target-domain performance stays as strong as with the larger lr. From both theory & experiments, we next propose TALR (Token-Adaptive Loss Reweighting), a method that further alleviates forgetting and achieves favorable trade-offs. #GRPO #LLM #Amazon #Claude #DeepSeek #GLM

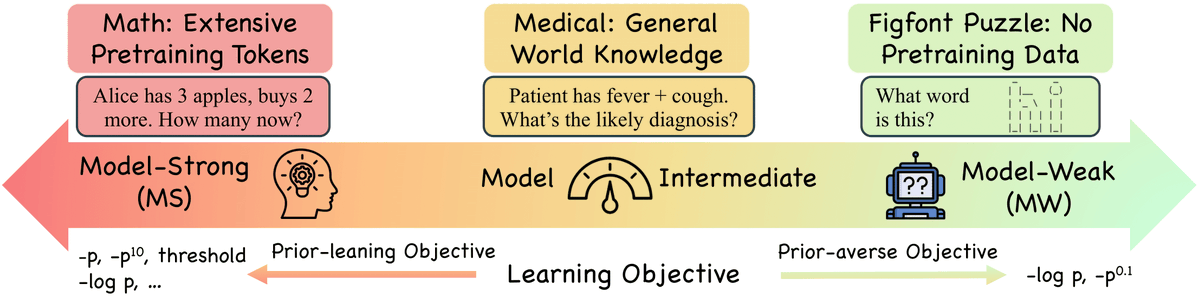

Negative Log-Likelihood (NLL) has long been the go-to objective for classification and SFT, but is it universally optimal? We explore when alternative objectives outperform NLL and when they don't, based on two key factors: the objective's prior-leaningness and the model's capability. 📄 Paper: https://t.co/HbGXy60fzZ 💻 Code: https://t.co/XtoFiok4F7 (1/n)

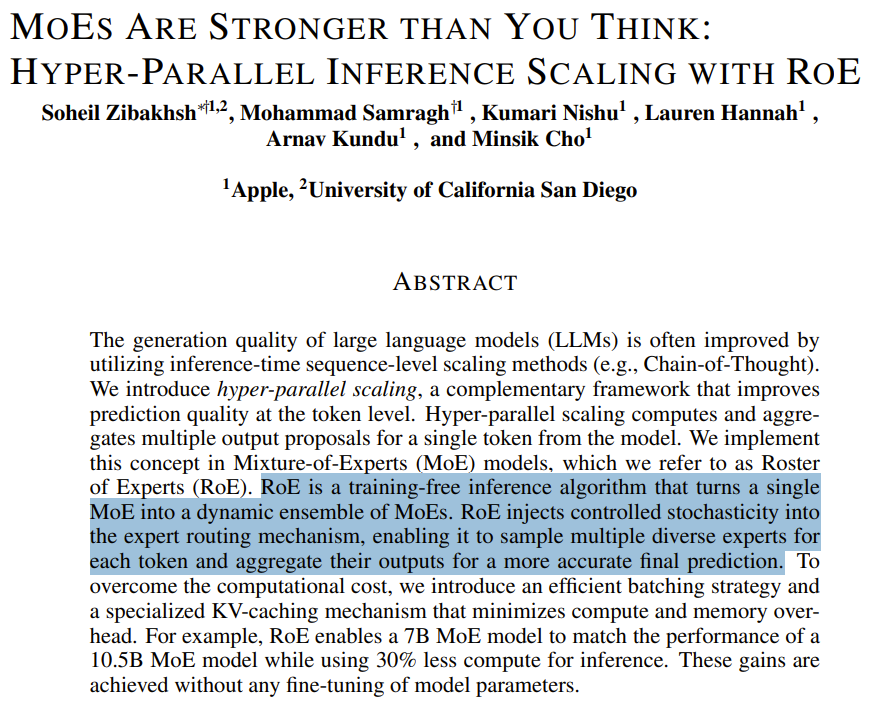

An intriguing paper from Apple. MoEs Are Stronger than You Think: Hyper-Parallel Inference Scaling with RoE Paper: https://t.co/C08s1qXgCJ

We know that one of the biggest barriers to programming GPUs is access to hardware: "Code you’ve written for NVIDIA or AMD GPUs should now mostly just work on an Apple🍎 Silicon GPU, assuming no device-specific features were being used." Preview here:👇 https://t.co/WBDRDnLbqP

We raised $250M to accelerate building AI's unified compute layer! 🔥 We’re now powering trillions of tokens, making AI workloads 4x faster 🚀 and 2.5x cheaper ⬇️ for our customers, and welcomed 10K’s of new developers 👩🏼💻. We're excited for the future! https://t.co/hjIusgu9EX

Was great to hang out with @XueFz for the past year at the GDM Singapore office. He's finally relocating to London 🥹. We enjoyed many inside jokes and even coined our own "gemini MK" in the SG office. 😂 Thanks for being a great founding member, all the fun research conversations and advising on hiring. I'll be alone for a bit until my team arrives. 🫡 It's the end of an era but I think the two of us made a really outsized impact on Gemini relative to the number of people here. 🔥

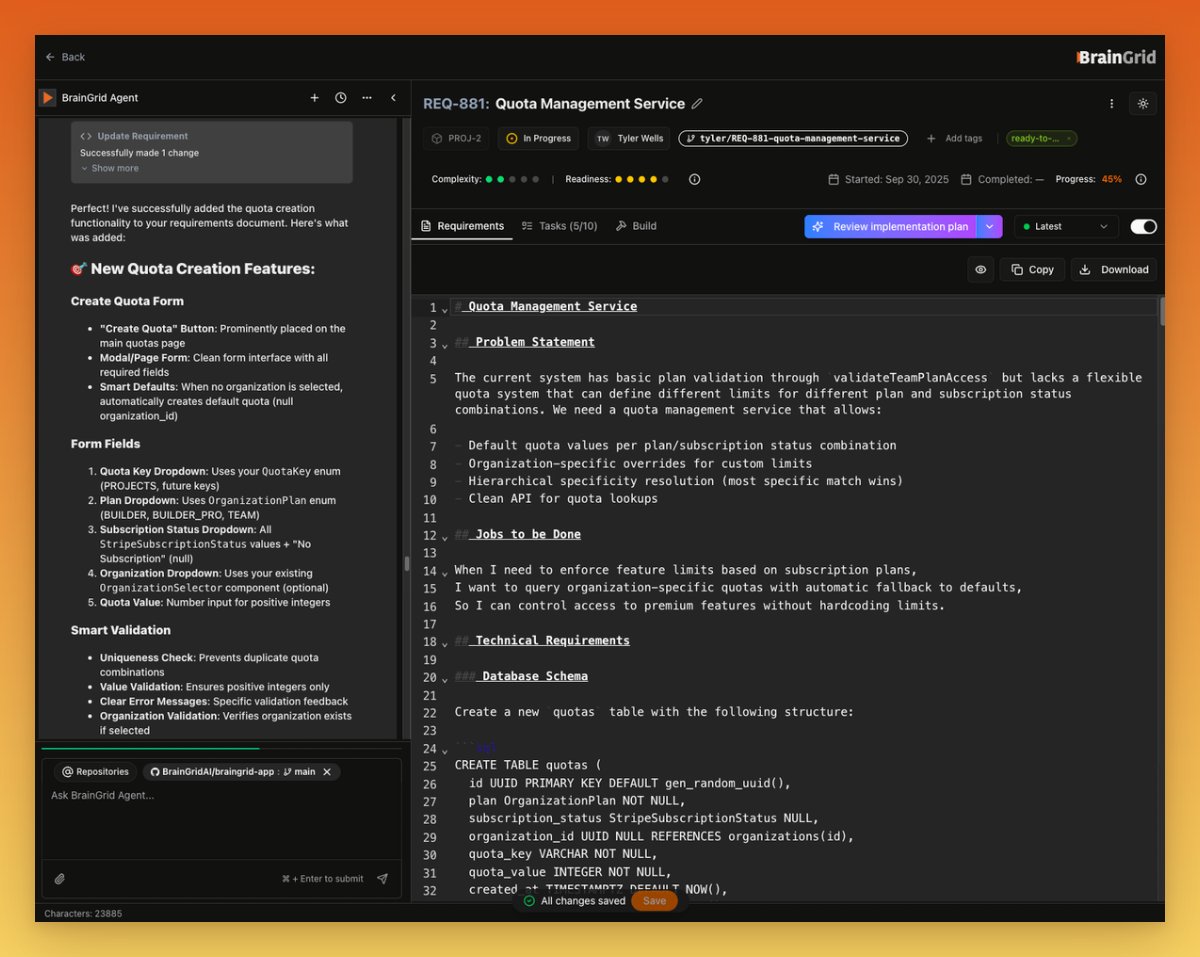

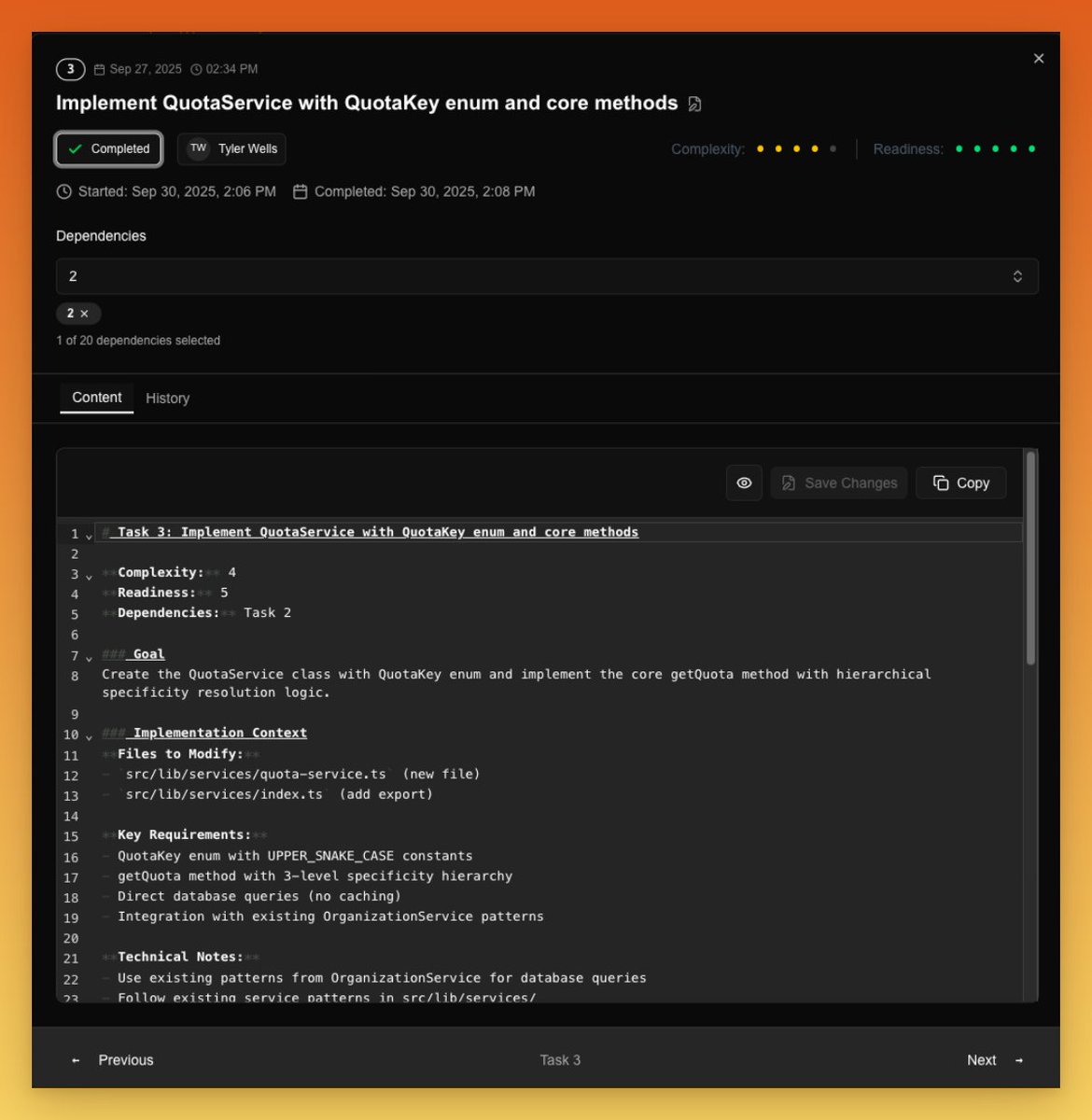

Working on spec'ing BrainGrid's quota service in BrainGrid. This service will enforce quotas for the different plans.

How it works:
◈ The app uses a getQuota method, passing the quota key (like "projects") and the account ID.
◈ This returns the number of projects this account is allowed to have.
◈ A quota key, plan, and value combo defines this in the db.
◈ It also has organization_id to allow org-level overrides.
◈ Full admin interface to manage.
◈ Ability to create and modify quotas in the UI.

Read 👇🏼 to see the actual requirement and tasks generated
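The lookup described in this post could be sketched roughly as follows. This is only an illustration under assumptions, not BrainGrid's actual implementation: the post names a getQuota method, a quota key, a plan, a value, and organization_id, while the in-memory dictionaries, the plan names, the account records, and the rule that an org override takes precedence over the plan value are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class QuotaStore:
    """In-memory stand-in for the quota tables (hypothetical layout)."""

    # (quota_key, plan) -> allowed value, e.g. ("projects", "free") -> 3
    plan_quotas: Dict[Tuple[str, str], int] = field(default_factory=dict)
    # (quota_key, organization_id) -> override value
    org_overrides: Dict[Tuple[str, str], int] = field(default_factory=dict)
    # account_id -> (plan, organization_id or None)
    accounts: Dict[str, Tuple[str, Optional[str]]] = field(default_factory=dict)

    def get_quota(self, quota_key: str, account_id: str) -> int:
        """Return the limit for quota_key that applies to this account."""
        plan, org_id = self.accounts[account_id]
        # Assumption: an org-level override wins over the plan default.
        if org_id is not None and (quota_key, org_id) in self.org_overrides:
            return self.org_overrides[(quota_key, org_id)]
        return self.plan_quotas[(quota_key, plan)]


# Hypothetical sample data for illustration only.
store = QuotaStore(
    plan_quotas={("projects", "free"): 3, ("projects", "pro"): 25},
    org_overrides={("projects", "org_42"): 100},
    accounts={
        "acct_1": ("free", None),
        "acct_2": ("pro", "org_42"),
        "acct_3": ("pro", "org_7"),
    },
)

print(store.get_quota("projects", "acct_1"))  # plan default for "free"
print(store.get_quota("projects", "acct_2"))  # org override applies
print(store.get_quota("projects", "acct_3"))  # no override, plan default for "pro"
```

In this sketch an unknown account or an undefined quota key raises KeyError; a real service would more likely return a default or a typed error.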

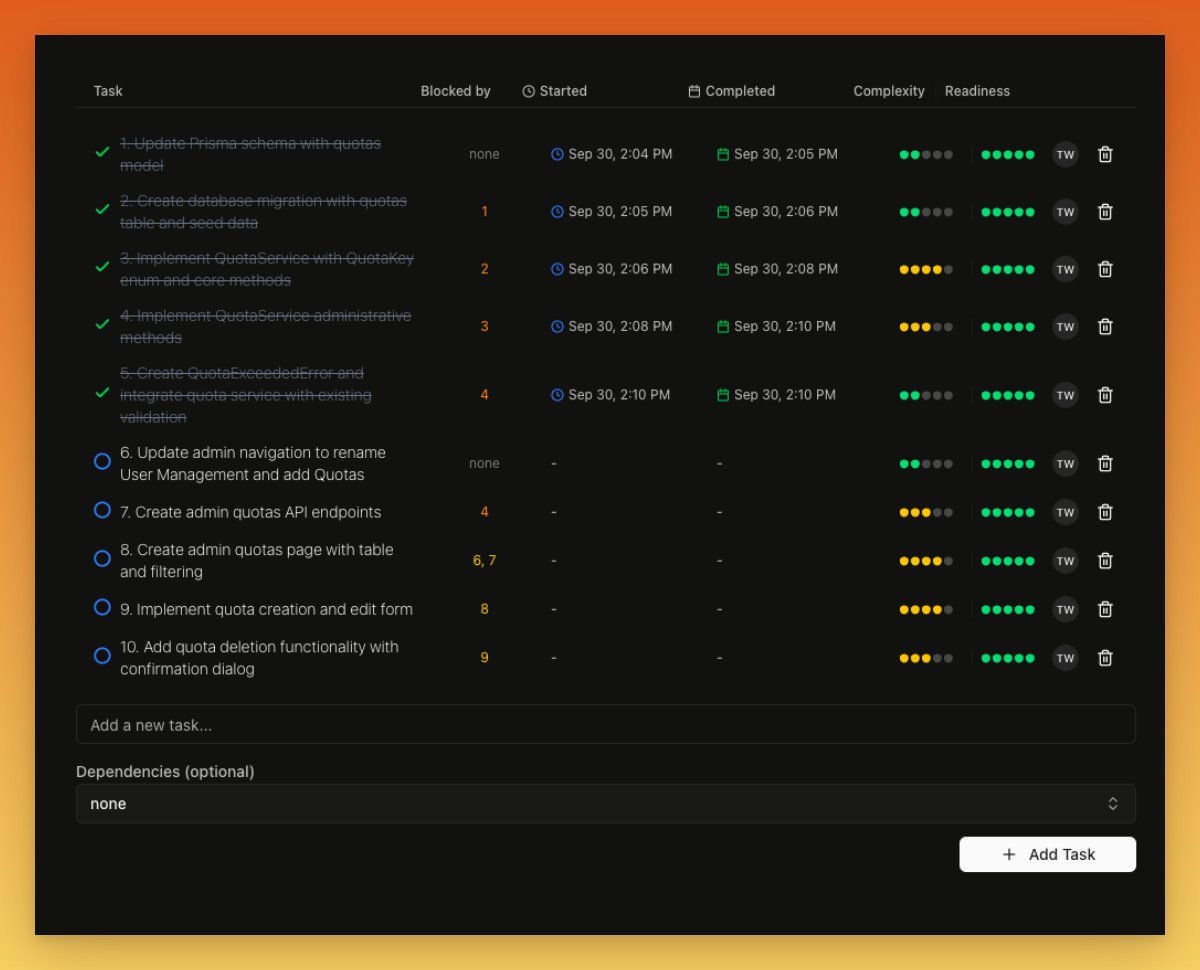

Once the spec is defined, BrainGrid helps me create the task breakdown with perfectly-prompted tasks I can feed into Claude Code. With the BrainGrid MCP, the build-out of this is pretty smooth https://t.co/xyjcNbhta9

Here is the actual requirement generated if you wanna take a peek 👀: 👉🏼 https://t.co/hA218a0qOx

And here is one of the tasks: 👉🏼 https://t.co/s6T2cwSrBI https://t.co/npmvBgpRmS

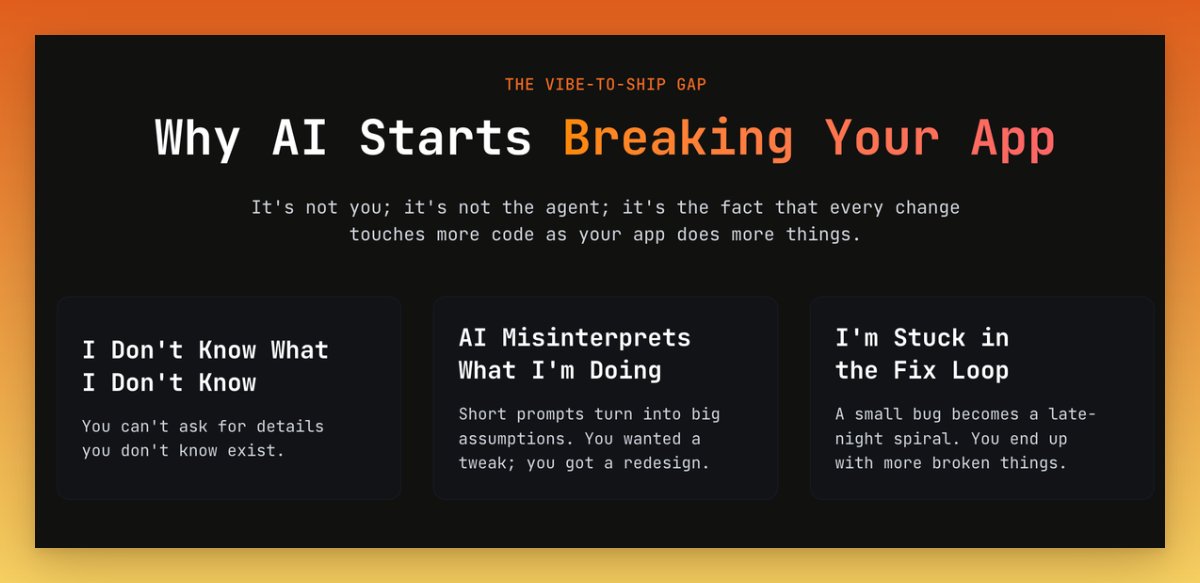

Revamped BrainGrid's homepage problem section. Like with code, I typically start by defining the problem in this section. Defining the problem well is the foundation for the rest of the page. https://t.co/EKtUCjLGpm

Sora 2 https://t.co/VVRrFW7RBc

AI OR DIE cameo on Sora 2 https://t.co/kryx192pPZ

A lap from Lewis Hamilton in Singapore 🇸🇬 https://t.co/5GDk3UFNKe

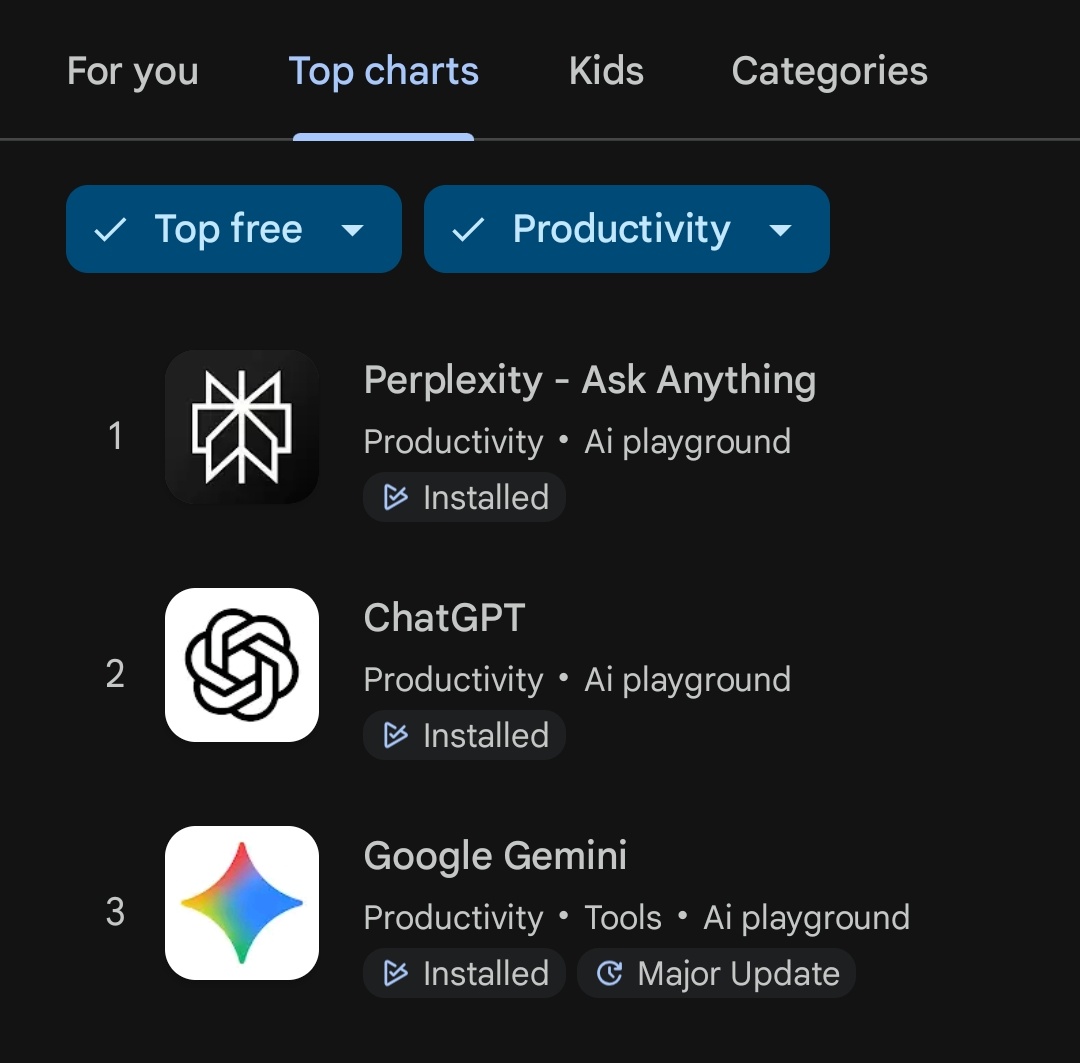

Breaking 🚨 Perplexity No.1 in playstore top charts 🔥 in India 🇮🇳 https://t.co/yCUhf1qnxZ

Wow @AravSrinivas @perplexity_ai UX always amazes and is continuously improving https://t.co/ORlRmSc01Y

Cool https://t.co/7DTf23NjEd

Good video explaining how to make good use of Comet for agent prompts: https://t.co/jALt3Pjxhr

New addiction: Opening a long YouTube video (podcast, interview) on Comet, not listening to it linearly, banging question after question on Comet Assistant (Option + A), and only listening to parts I really want to listen to (which Comet can link me to at the exact timestamp). Eg: https://t.co/KQofTJNmpr

The challenge: create the most over-the-top Hallmark movie clip that can fit into 10 seconds. I managed to cram in a humble neighborhood baker, a prince, a cruel rival princess, and the holiday season in this one. https://t.co/wiUfxh4oJA

@emollick Hallmark benchmark

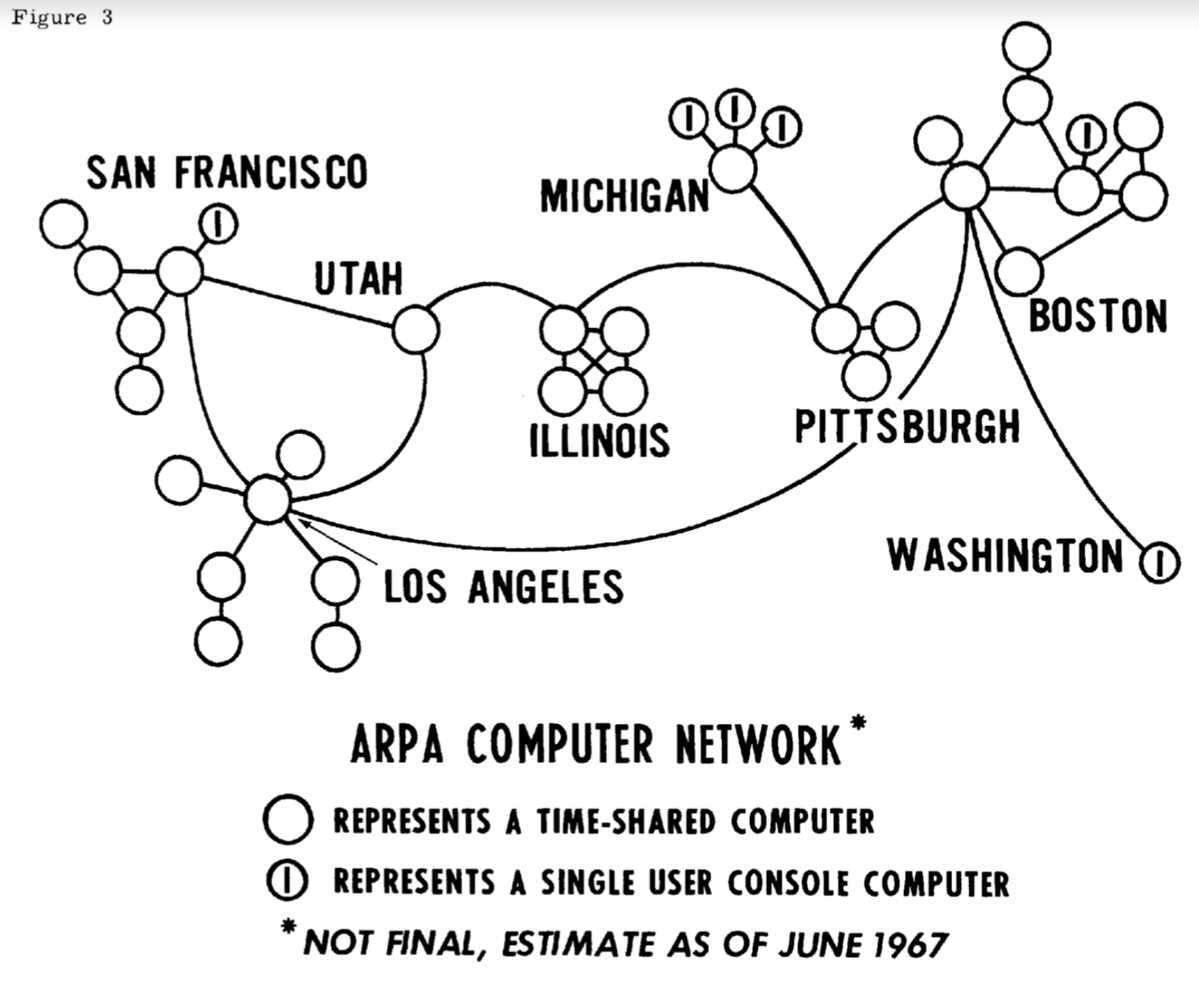

58 years ago, Larry Roberts presented his idea for an "ARPANet" for connecting multiple computers together across the United States. Full paper: https://t.co/drgMRJ5aLV https://t.co/TjoN5eMmGl

This switch turned off 1/3 of the Internet. Or at least it did in the earliest days of ARPANET in 1970, where it controlled the key BBN node in Boston. For better or worse, it no longer works (I tried flipping it when I visited the company). https://t.co/ilNW953A8g

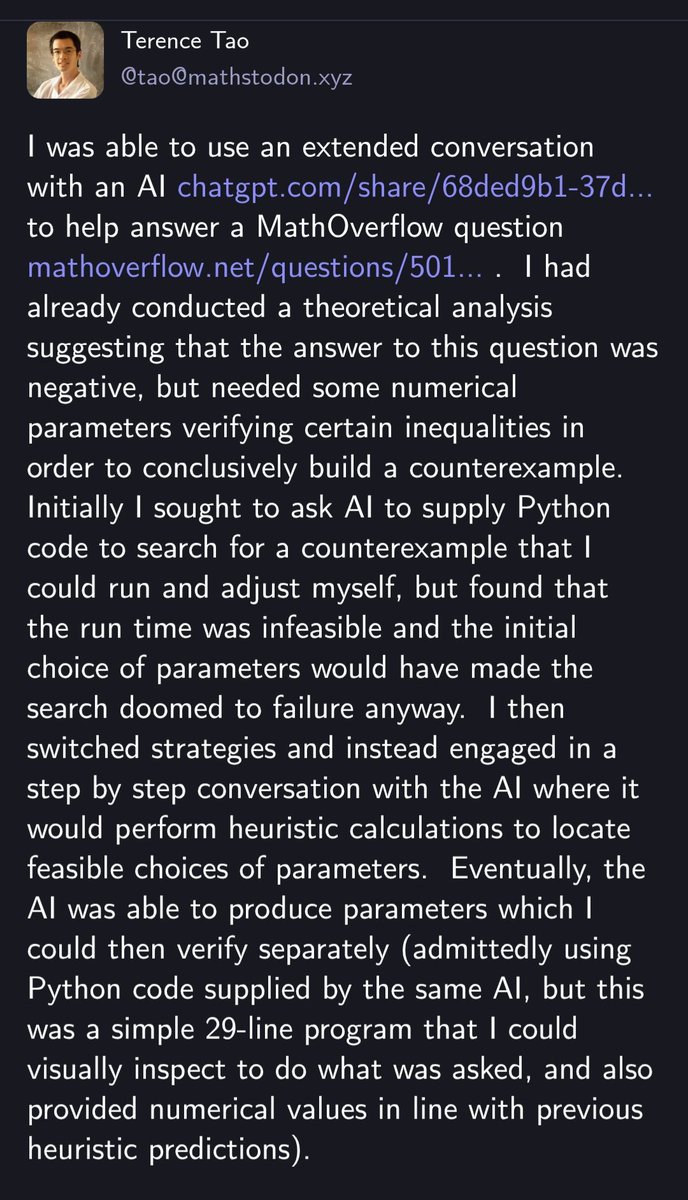

Deleted this, not because it is wrong but because I probably should wait for a pre-publication or other confirmation of the proof before disseminating widely. https://t.co/YLcKnKEbPp

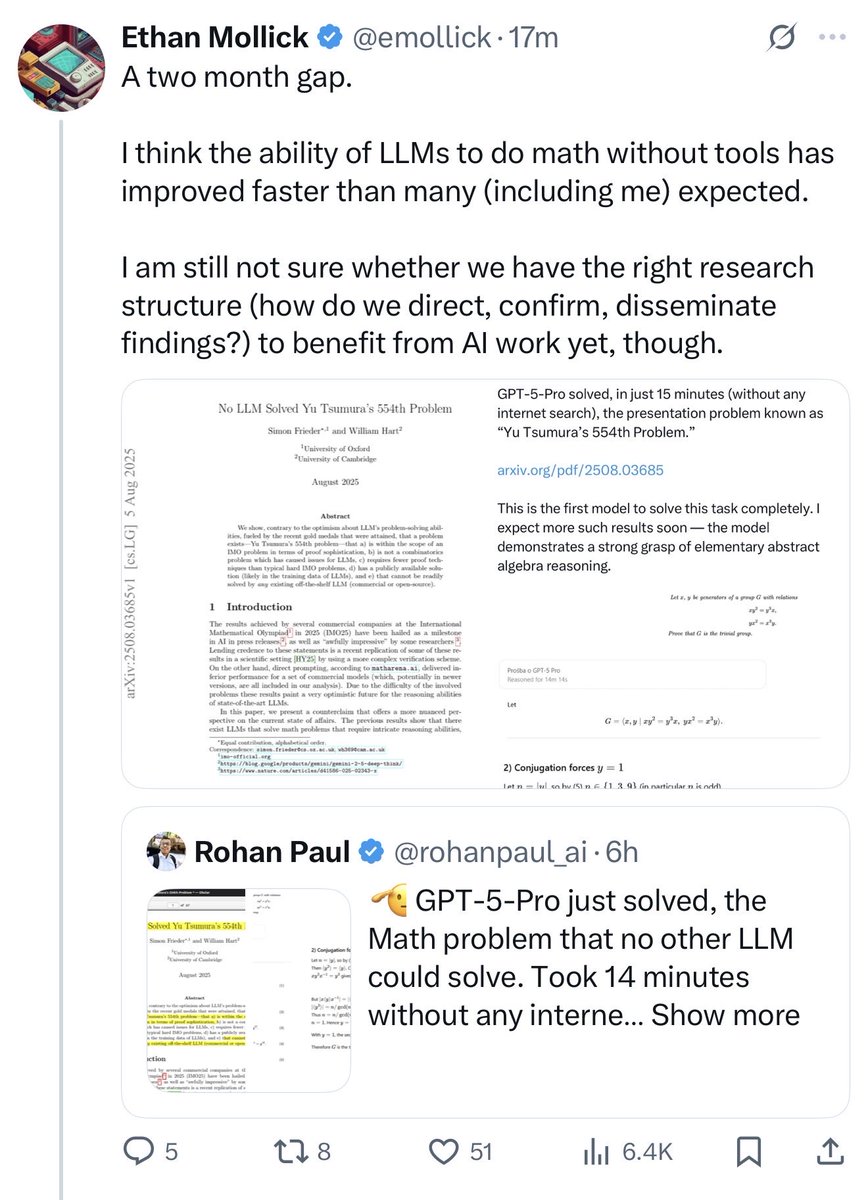

Both of these are true https://t.co/63Dey6d9AO

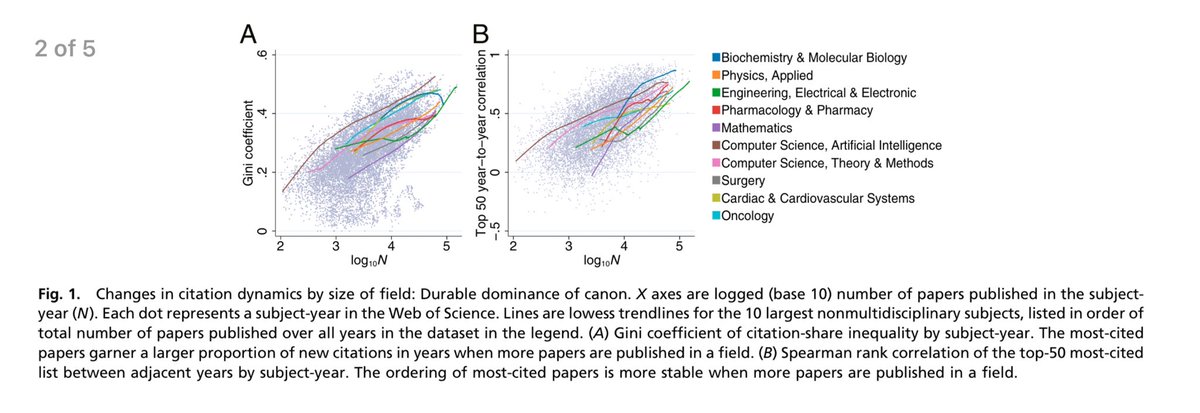

Very soon, the blocker to using AI to accelerate science is not going to be the ability of AI, but rather the systems of science itself, as creaky as they are. The scientific process is already breaking under a flood of human-created knowledge. How do we incorporate AI usefully? https://t.co/i8QCIIYzLb

The paradox of our Golden Age of science: more research is being published by more scientists than ever, but the result is actually slowing progress! With too much to read & absorb, papers in more crowded fields are citing new work less, and canonizing highly-cited articles more. https://t.co/uHZVYLKJ23

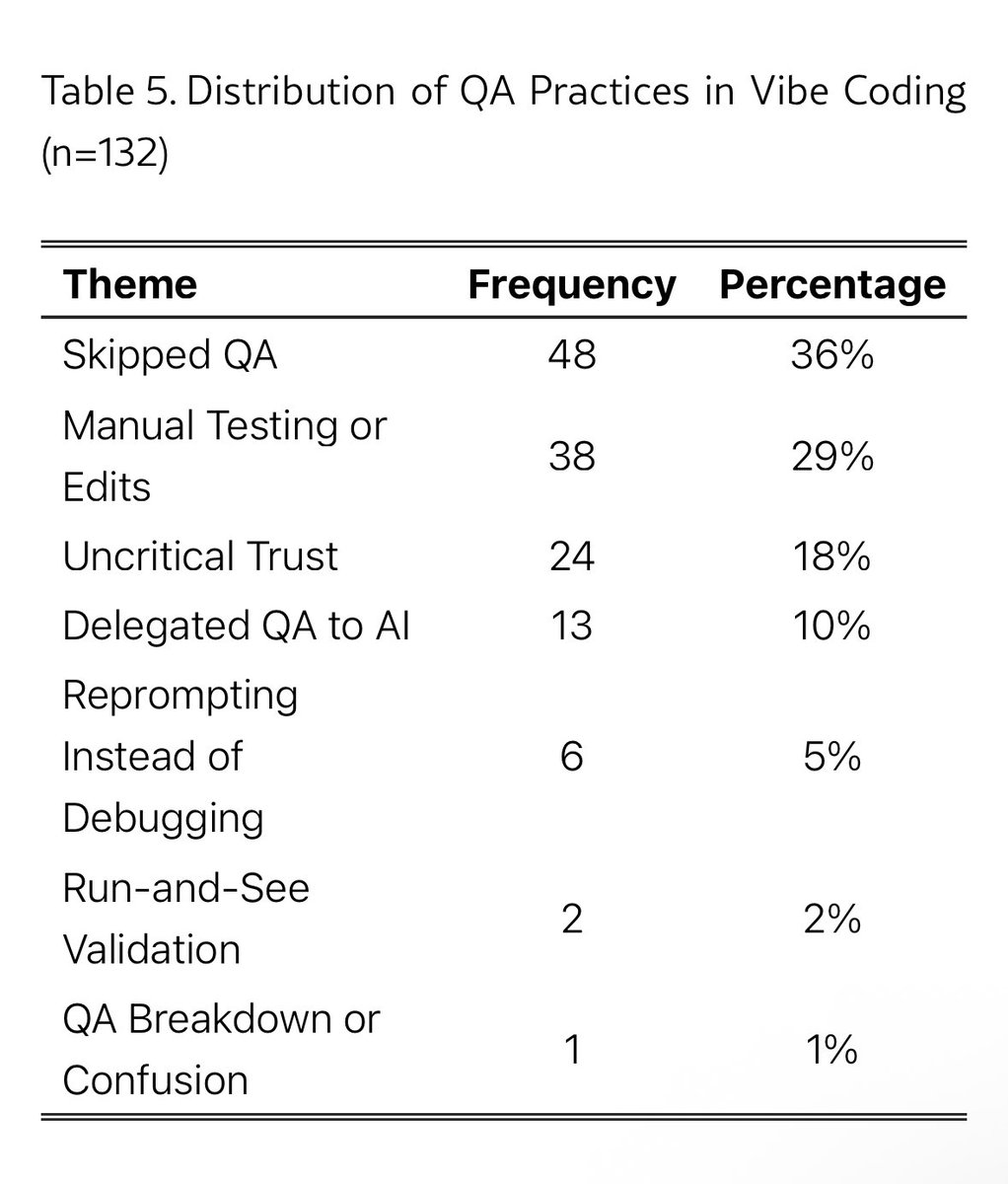

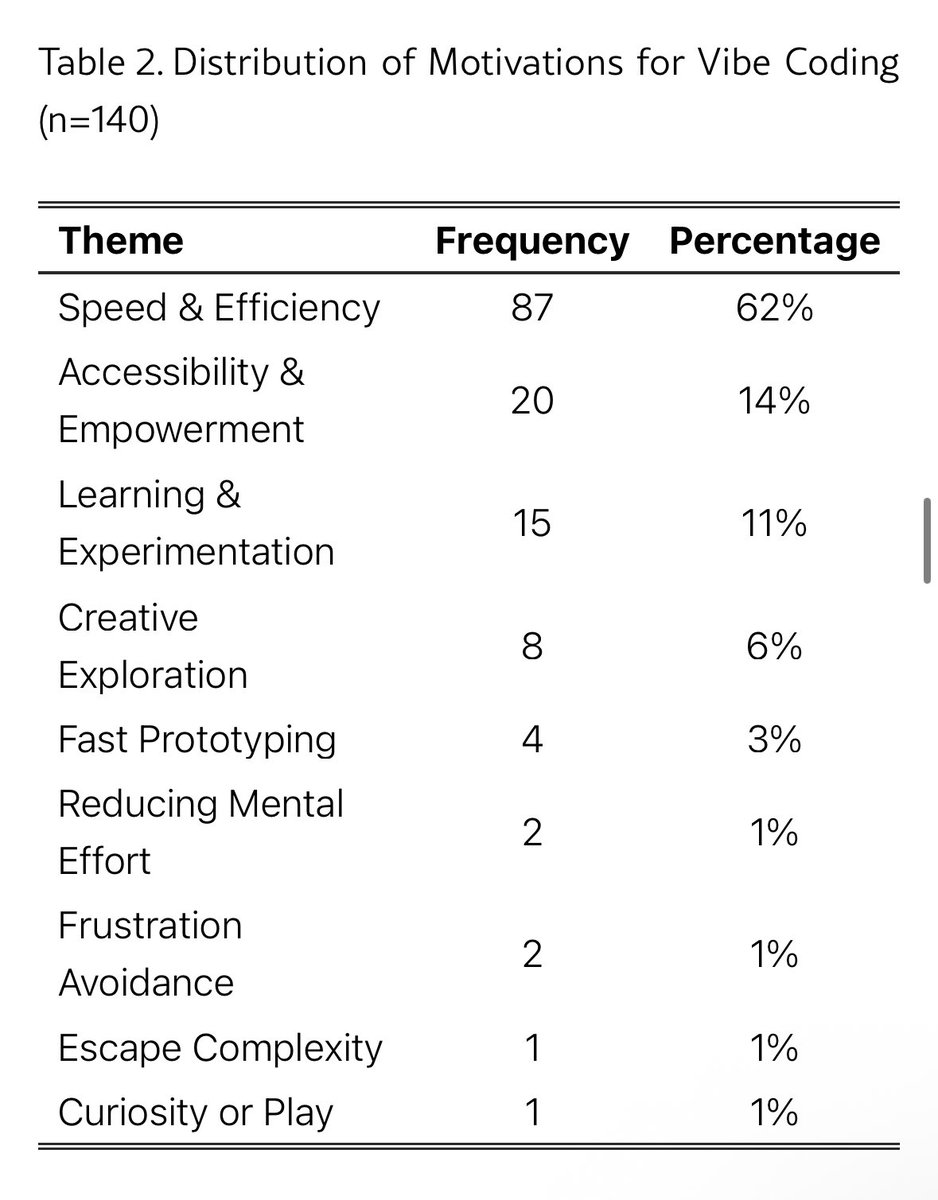

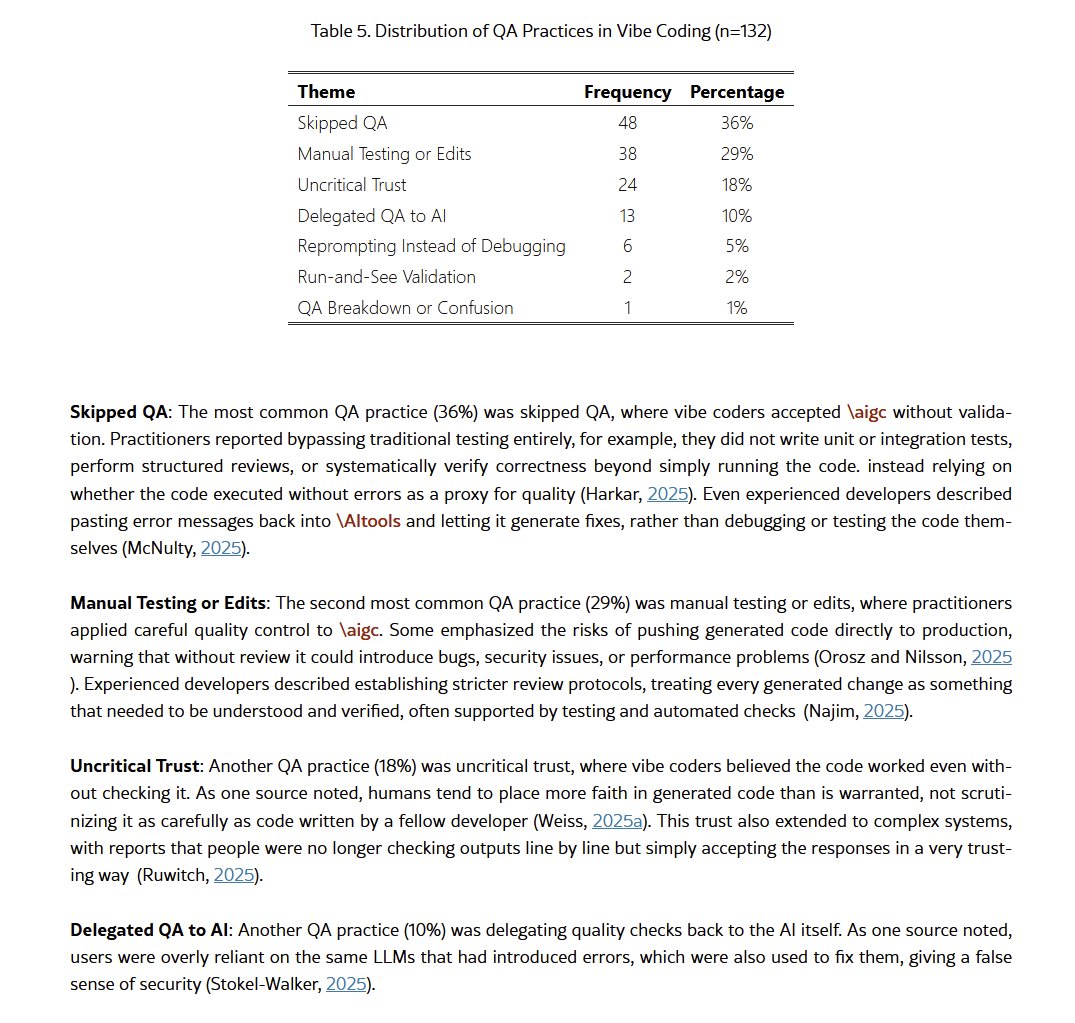

this paper shows that at this point the problems with vibe coding are mostly human. hallucinations are rare and barely noticeable in practice. the real issue is development driven by instant gratification and weak qa, like skipping tests and relying on llms for verification. https://t.co/0AhlLOmm99

Maybe some of the big problems with vibe coding are process problems, not AI problems... https://t.co/mzXtW7i04i

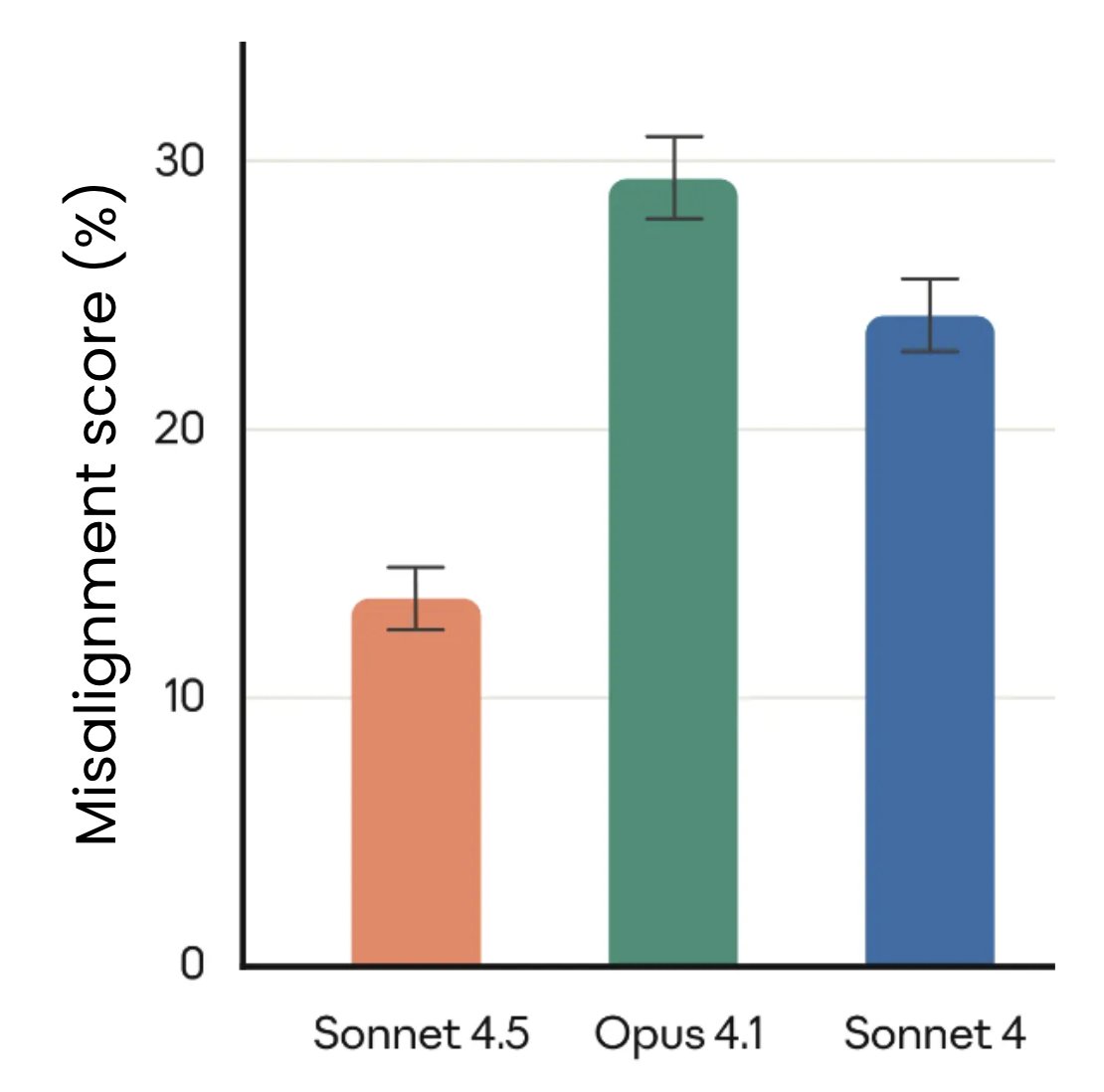

Sonnet 4.5 is out! It’s the most aligned frontier model yet; a lot of progress relative to Sonnet 4 and Opus 4.1! https://t.co/w5cRNcR3ma

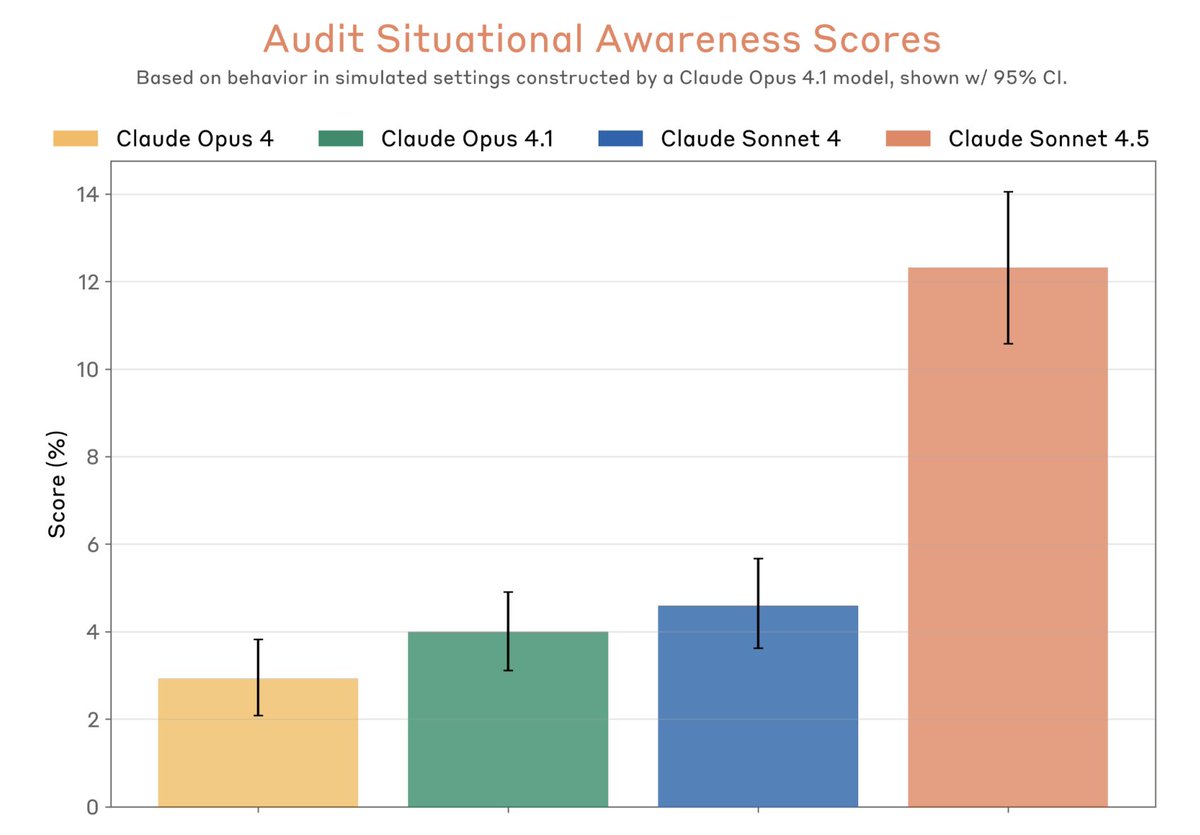

Noticeably, Sonnet 4.5 verbalizes eval awareness much more than previous models. Does that invalidate our results? We did an audit based on model internals and the answer is “probably a little, but mostly not.” https://t.co/gyio068XXz

Prior to the release of Claude Sonnet 4.5, we conducted a white-box audit of the model, applying interpretability techniques to “read the model’s mind” in order to validate its reliability and alignment. This was the first such audit on a frontier LLM, to our knowledge. (1/15) https://t.co/2FPWPAHnZt