@GaotangLi

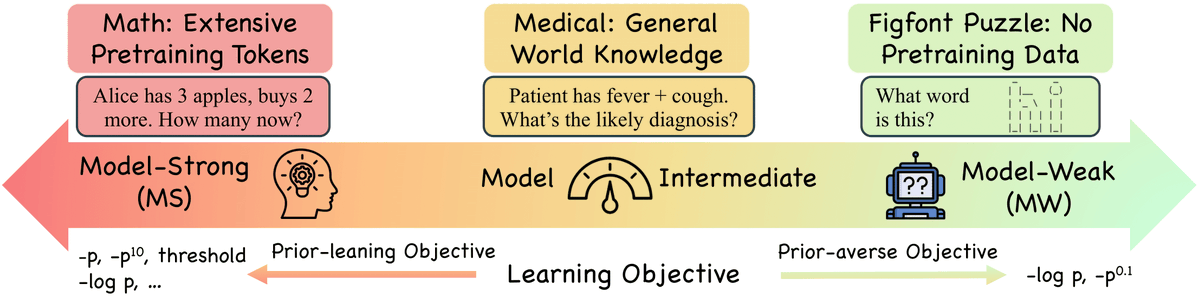

Negative Log-Likelihood (NLL) has long been the go-to objective for classification and supervised fine-tuning (SFT), but is it universally optimal? We study when alternative objectives outperform NLL, and when they don't, through two key factors: the objective's prior-leaningness and the model's capability.
📄 Paper: https://t.co/HbGXy60fzZ
💻 Code: https://t.co/XtoFiok4F7
(1/n)