Your curated collection of saved posts and media

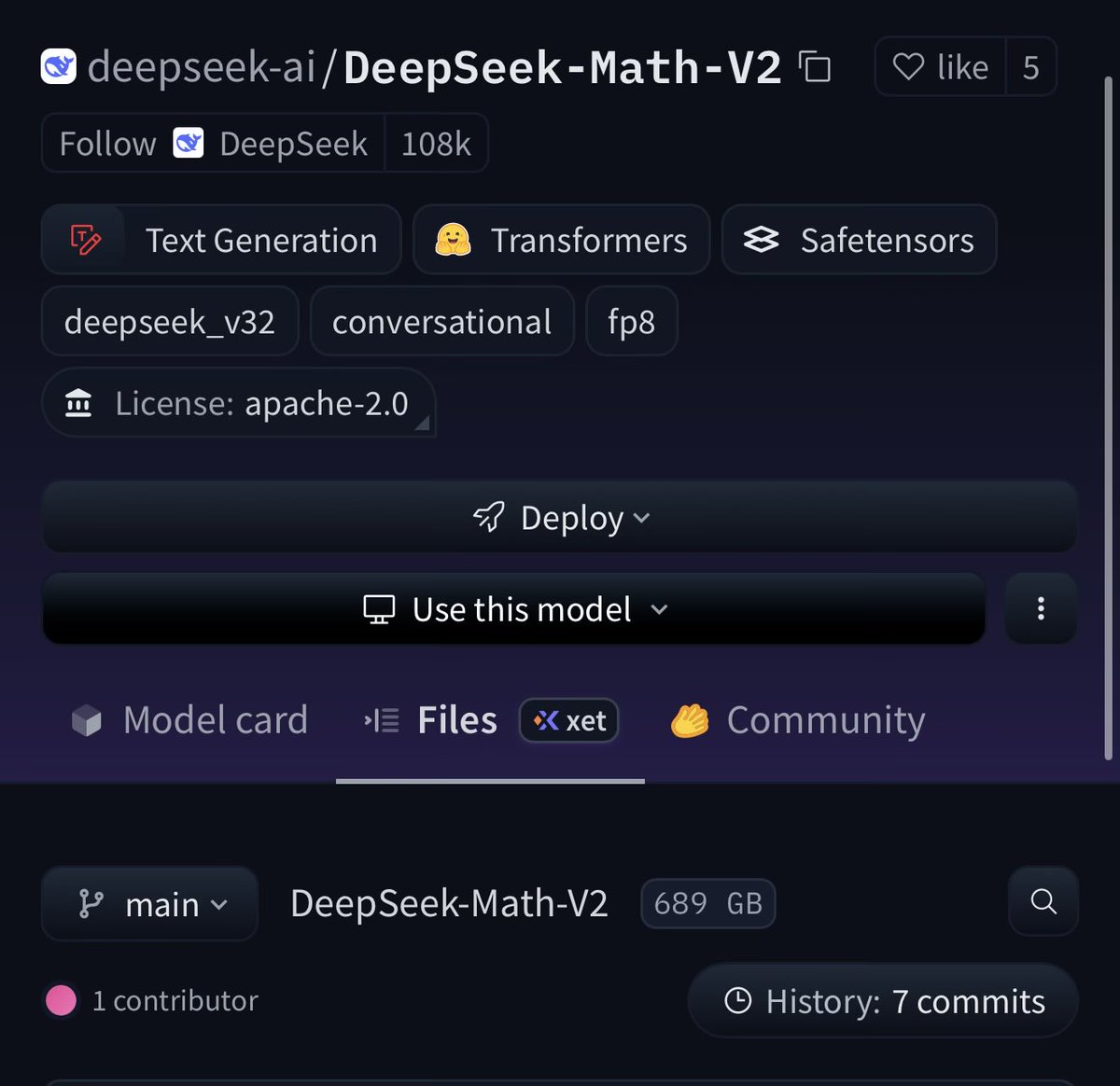

New whale 👀 https://t.co/2WANNgjN5Q

Bro disappeared like he never existed. https://t.co/Bn88feWU2c

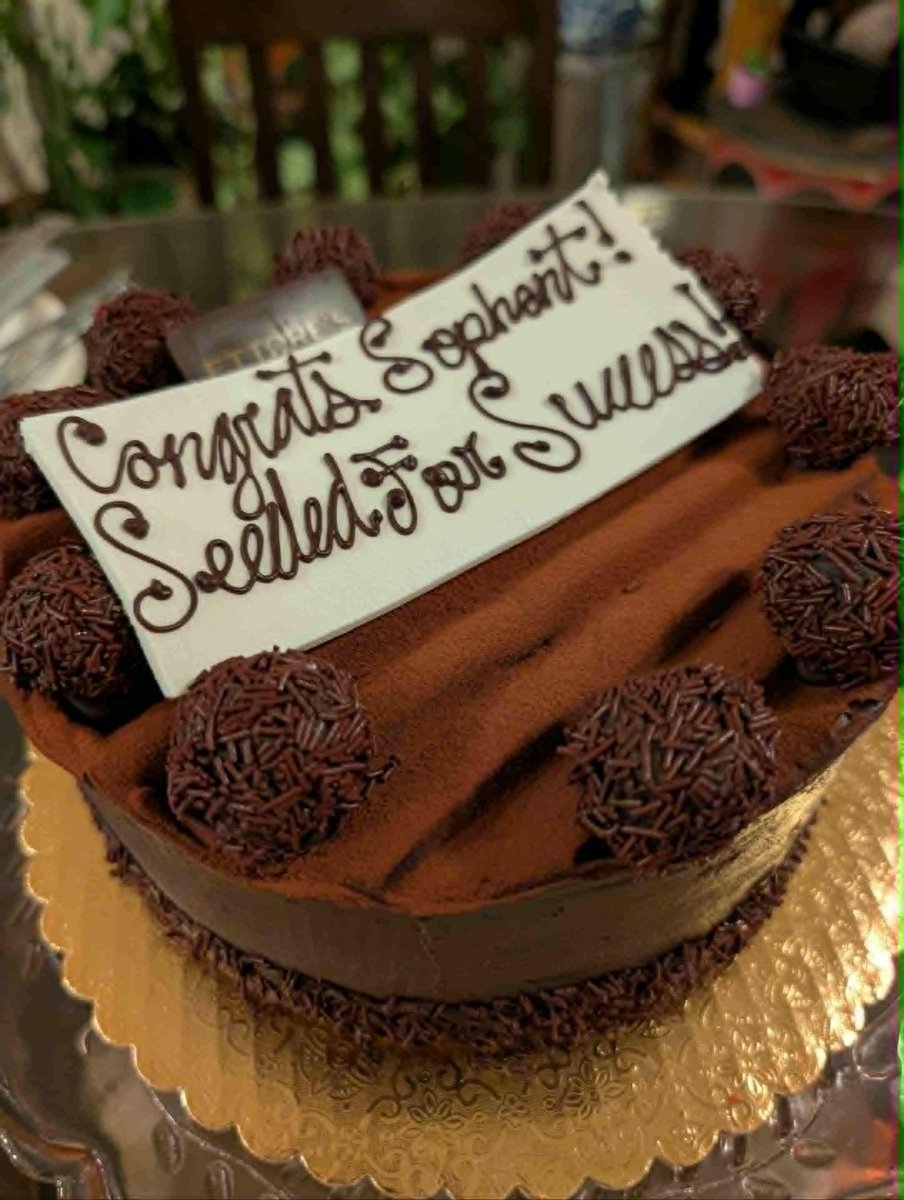

This Thanksgiving, I’m thankful for the quiet, everyday blessings as much as the big milestones. Nine months into my startup journey, I can’t help but reflect on the growth, the challenges, and the gratitude I feel for everyone who’s been part of it. I am glad we've already achieved several milestones, like our seed round and the release of state-of-the-art models. First, I want to thank my mom @4katluvrs and dad @Cornellian1988 for all their love and support. I am truly blessed to have such wonderful parents who share their wisdom and invaluable guidance. And thanks for the surprise gift, the delicious launch-day cake! A special shoutout to my gifted and talented sister @sopranotiara, whose support has been invaluable. She’s the one who named both @MedARC and @SophontAI and even stepped in as the “bouncer” at our first company social at ICML Vancouver. Always grateful for her help whenever she can jump in. This startup would also not exist without my awesome cofounder @humanscotti, who I have worked with for 3 years now. I think we make a pretty good team! I am also grateful for people like @EMostaque, @jeremyphoward, @multiply_matrix, etc. who took a chance on me and supported me as I started my AI journey. I'm filled with gratitude to all my investors @stevejang @km @Shaughnessy119 @PonderingDurian @kevinyzhang @TaiPanich_ @JeffDean @OfficialLoganK @ClementDelangue @l2k @MattHartman @headwatermatt @waikit who believe in our vision and decided to support it. Grateful to the incredible Sophont research team for working hard to build models that will hopefully save lives! You guys are rockstars! A big thank-you to @LukeVerswey and his team for guiding us through everything on the legal front. Also appreciate the amazing MedARC community for their contributions to our mission. There are soooooo many more people, from friends to collaborators, who have played a role in my startup journey. Finally, I am thankful for the encouragement and support from everyone on this platform!
Building a startup is the hardest thing I have done in my life, and I am only able to do it with this support. Above all, I’m grateful to God for His grace and for all the blessings that have carried me through this year🙏 Happy Thanksgiving to you and your family 🦃🍂🍗🥘

Feeling like proper Portland locals now that we have our own mushroom spot to return to each year, and traditions building around enjoying them with family and neighbors 😃 https://t.co/lDcbPeqDDq

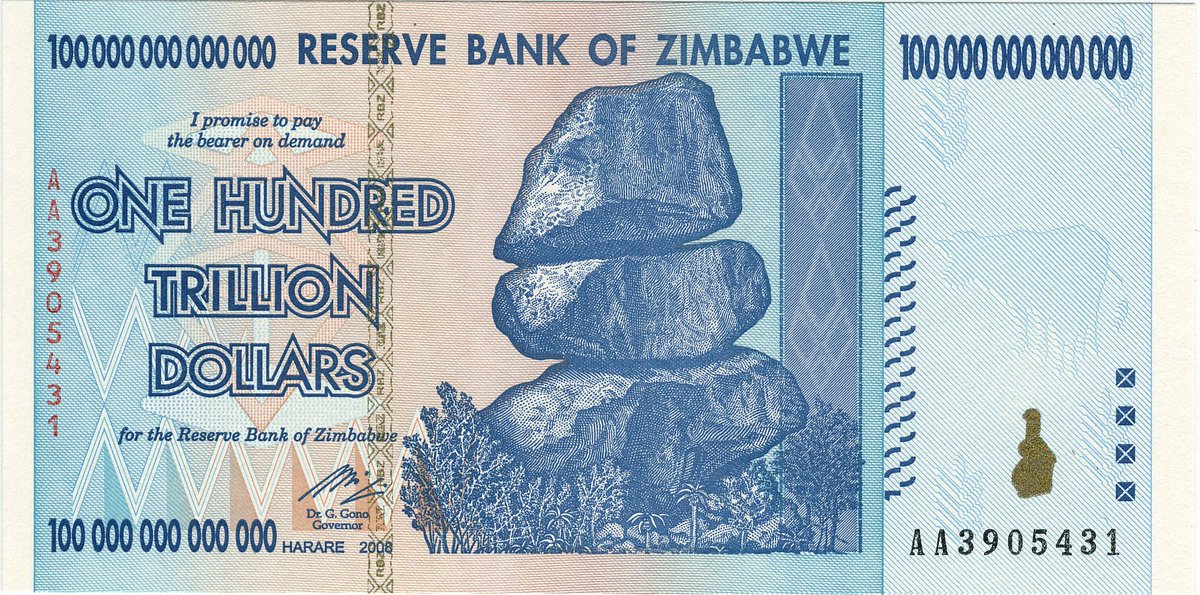

@natolambert My childhood was good training for this... https://t.co/i1qYg78zVQ

We're all making our signature scents. I went for the smell of heavy industry being reclaimed by nature. Great fun. @gwern your post on avant garde perfumes is partly to blame :) https://t.co/gyaVIZjmtP

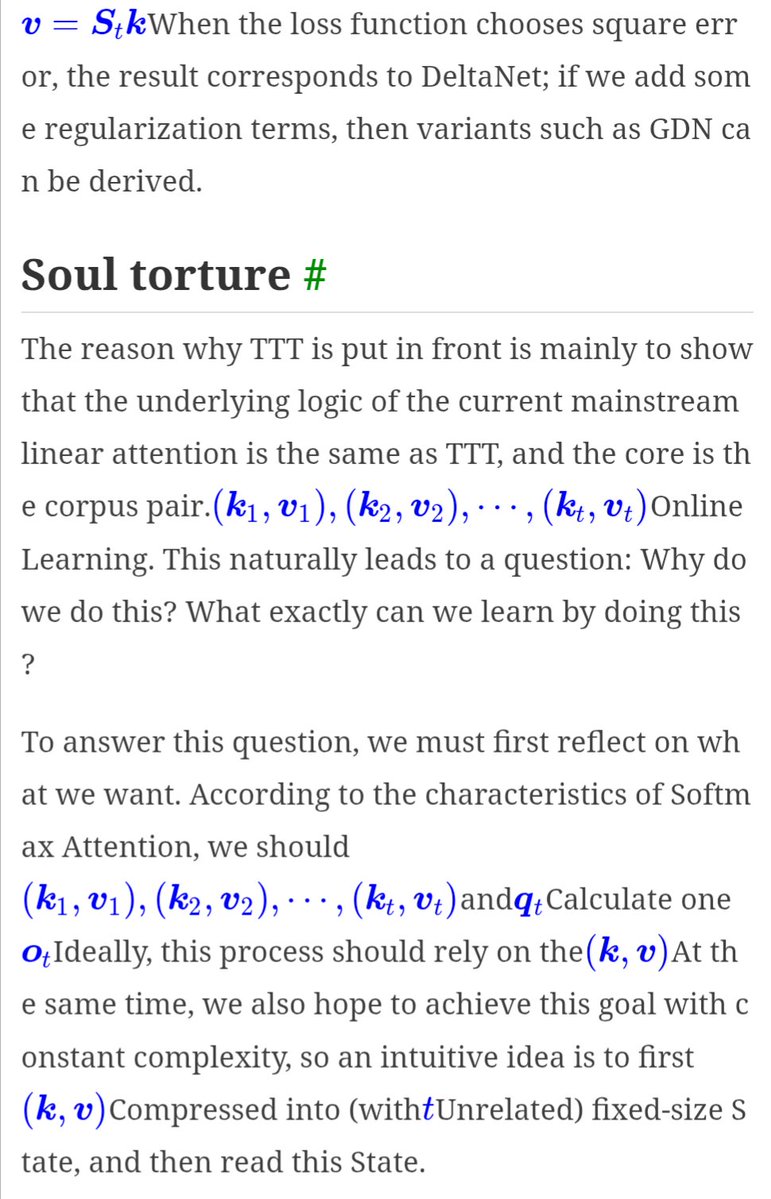

@ZebinWilson @Jianlin_S Chrome's auto translate has me wondering with this heading haha https://t.co/mJ3zw9XBO3

I'm going to try sharing some silly science-y shorts to clear out my side-project backlog! Starting with this dizzying duckweed :) Some shorts like this one come with a longer explainer sharing the backstory - links in next tweet. https://t.co/zFLWlenEy2

Short: https://t.co/KXqdN23yBM Backstory: https://t.co/3seV2uLZdy

@matthen2 Thanks :) And good point! I can tweak things and get some spinning, but modelling them as points in a grid definitely misses the reality of them being leaf pairs with holes in different places, momentum and all that other annoying reality stuff :D https://t.co/o5nCcTk7Eu

I made some splats of weird museum spaces (Piranesi-esque) with @theworldlabs and recorded some explorations - 1) a fly thru the space stacked end to end: https://t.co/KJTEH2X5Bs

Hey, SF! I’ll be at the launch of the 2025 @stateofaireport today. Looking forward to meeting everyone. Make sure to say hi if you’re around. 👋 https://t.co/j9lUbSFDyF

Keep thinking about how spot on (and too early) @navigator by @gentry + team was. The concept of a robot teammate seems obvious today but definitely wasn’t in 2019. Even the brand still looks fresh. https://t.co/7pZonh7zOa

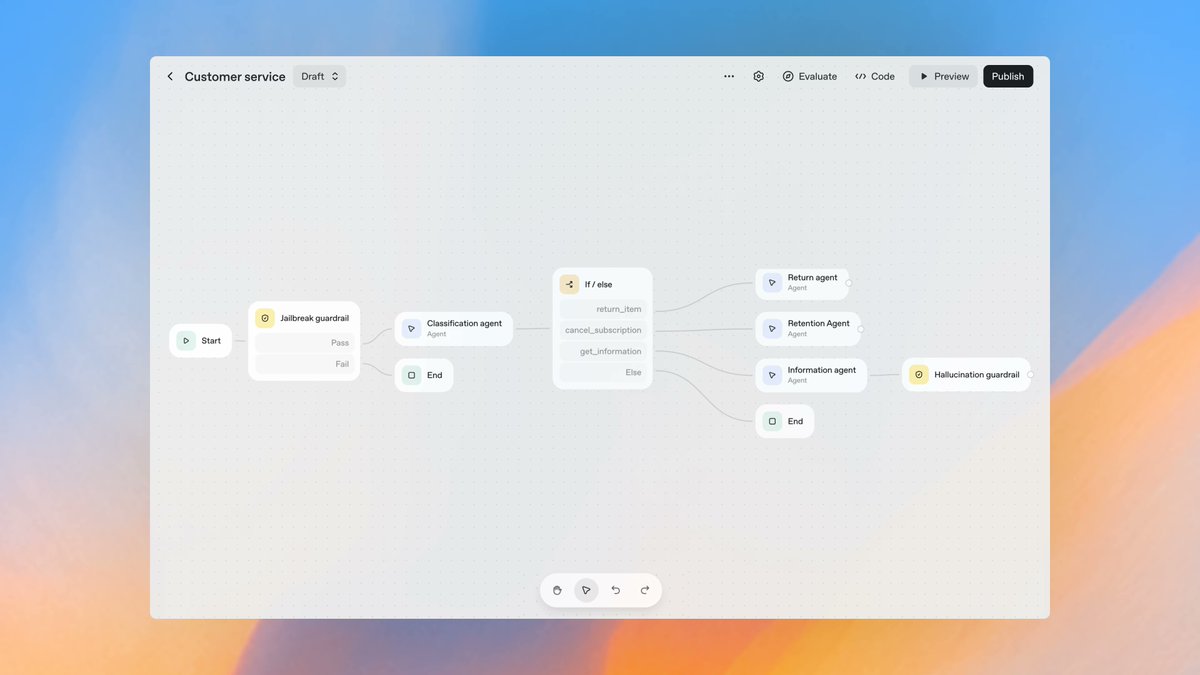

Very excited about OpenAI’s new AgentKit. Visual agent builders are a game changer for iterating on and shipping agents. https://t.co/kErPjxt1DC

Agentic Context Engineering Great paper on agentic context engineering. The recipe: Treat your system prompts and agent memory as a living playbook. Log trajectories, reflect to extract actionable bullets (strategies, tool schemas, failure modes), then merge as append-only deltas with periodic semantic de-dupe. Use execution signals and unit tests as supervision. Start offline to warm up a seed playbook, then continue online to self-improve. On AppWorld, ACE consistently beats strong baselines in both offline and online adaptation. Example: ReAct+ACE (offline) lifts average score to 59.4% vs 46.0–46.4% for ICL/GEPA. Online, ReAct+ACE reaches 59.5% vs 51.9% for Dynamic Cheatsheet. Paper: https://t.co/AZRZe0axlI
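The "append-only deltas with periodic de-dupe" part of the recipe above can be sketched in a few lines. This is only an illustration, not the paper's implementation: the `Playbook` class, its method names, and the token-overlap (Jaccard) similarity are all assumptions made here, with Jaccard standing in for whatever semantic similarity a real system would use (most likely embeddings).

```python
import re
from dataclasses import dataclass, field


def _tokens(text: str) -> set[str]:
    """Lowercased alphanumeric tokens; a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Token-overlap similarity in [0, 1]."""
    ta, tb = _tokens(a), _tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


@dataclass
class Playbook:
    """Hypothetical playbook: a flat list of actionable bullets."""

    bullets: list[str] = field(default_factory=list)

    def merge_delta(self, delta: list[str]) -> None:
        # Append-only: existing bullets are never rewritten in place.
        self.bullets.extend(delta)

    def dedupe(self, threshold: float = 0.8) -> None:
        # Periodic pass: keep the earliest bullet of each near-duplicate group.
        kept: list[str] = []
        for bullet in self.bullets:
            if all(jaccard(bullet, k) < threshold for k in kept):
                kept.append(bullet)
        self.bullets = kept


playbook = Playbook()
playbook.merge_delta([
    "Check the API schema before calling a tool.",
    "Retry on HTTP 429 with backoff.",
])
playbook.merge_delta([
    "check the api schema before calling a tool",  # near-duplicate of the first bullet
    "Log failing unit tests.",
])
playbook.dedupe()
print(len(playbook.bullets))  # 3
```

Keeping merges append-only and pushing de-duplication into a separate pass means a bad reflection step can add noise but cannot silently overwrite a bullet that was already working.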

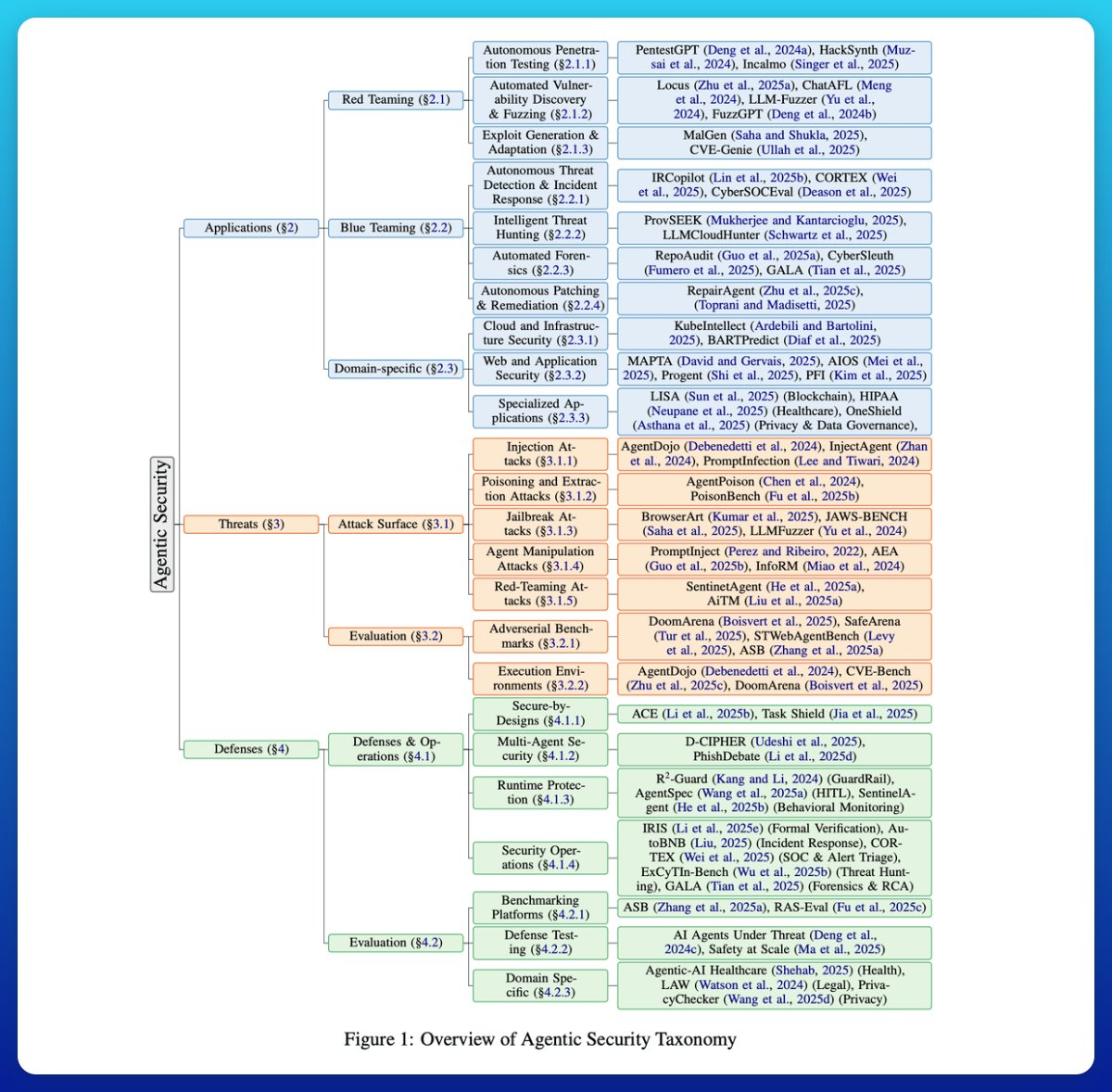

Great recap of security risks associated with LLM-based agents. The literature keeps growing, but these are key papers worth reading. Analysis of 150+ papers finds that there is a shift from monolithic to planner-executor and multi-agent architectures. Multi-agent security is a largely underexplored space for devs. Issues include LLM-to-LLM prompt infection, spoofing, trust delegation, and collusion.

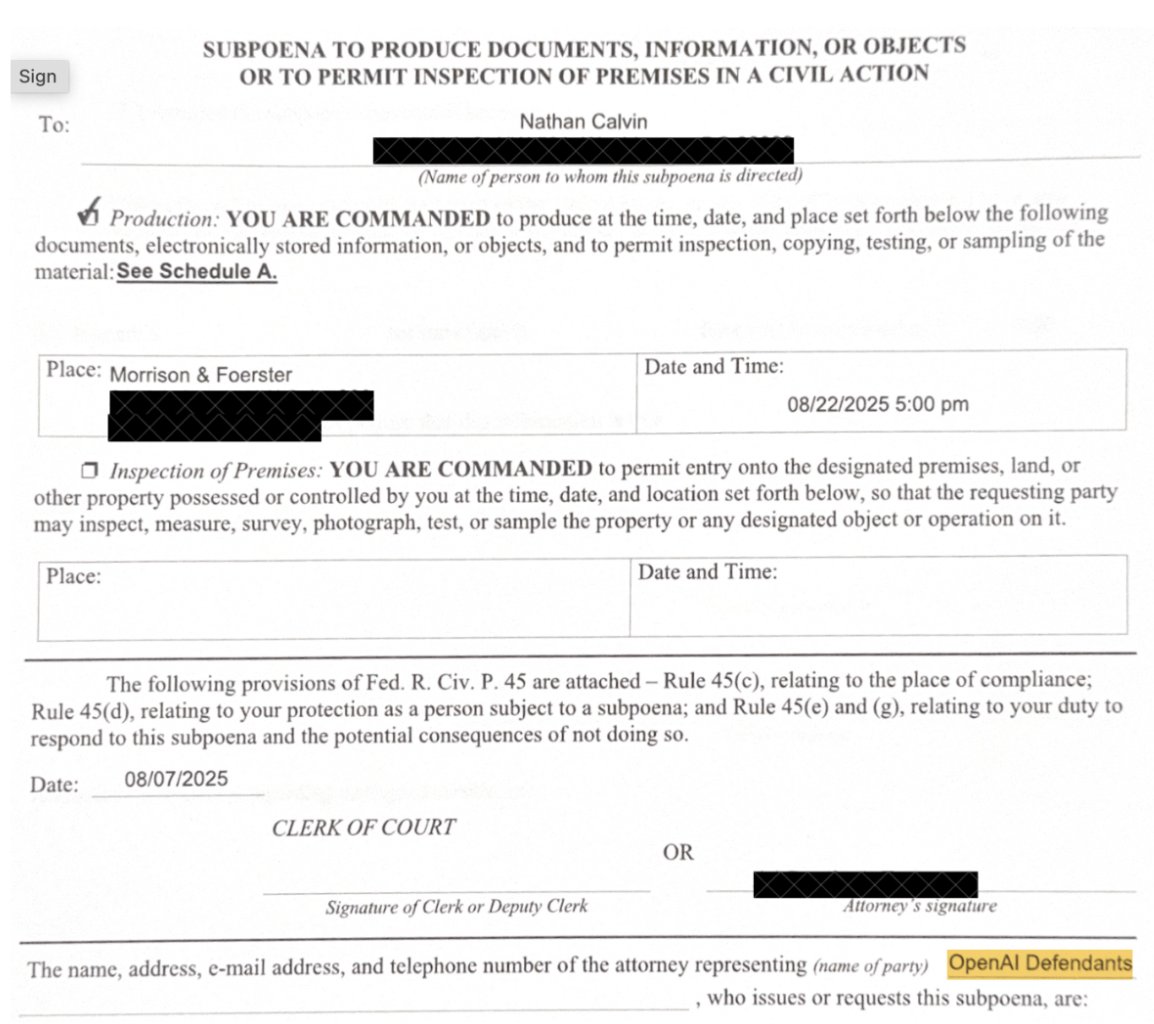

One Tuesday night, as my wife and I sat down for dinner, a sheriff’s deputy knocked on the door to serve me a subpoena from OpenAI. I held back on talking about it because I didn't want to distract from SB 53, but Newsom just signed the bill so... here's what happened: 🧵 https://t.co/VnYCJYg2DH

LlamaParse now supports @AnthropicAI's Claude Sonnet 4.5, plus exciting new features for enhanced document processing! We've integrated Sonnet 4.5 into our parsing capabilities, giving you access to Anthropic's latest model for even better document understanding and parsing. Stay tuned for detailed benchmarks comparing the new Sonnet model with our existing parsing options. Sign up for LlamaCloud to start parsing: https://t.co/YDn6qsUnZK

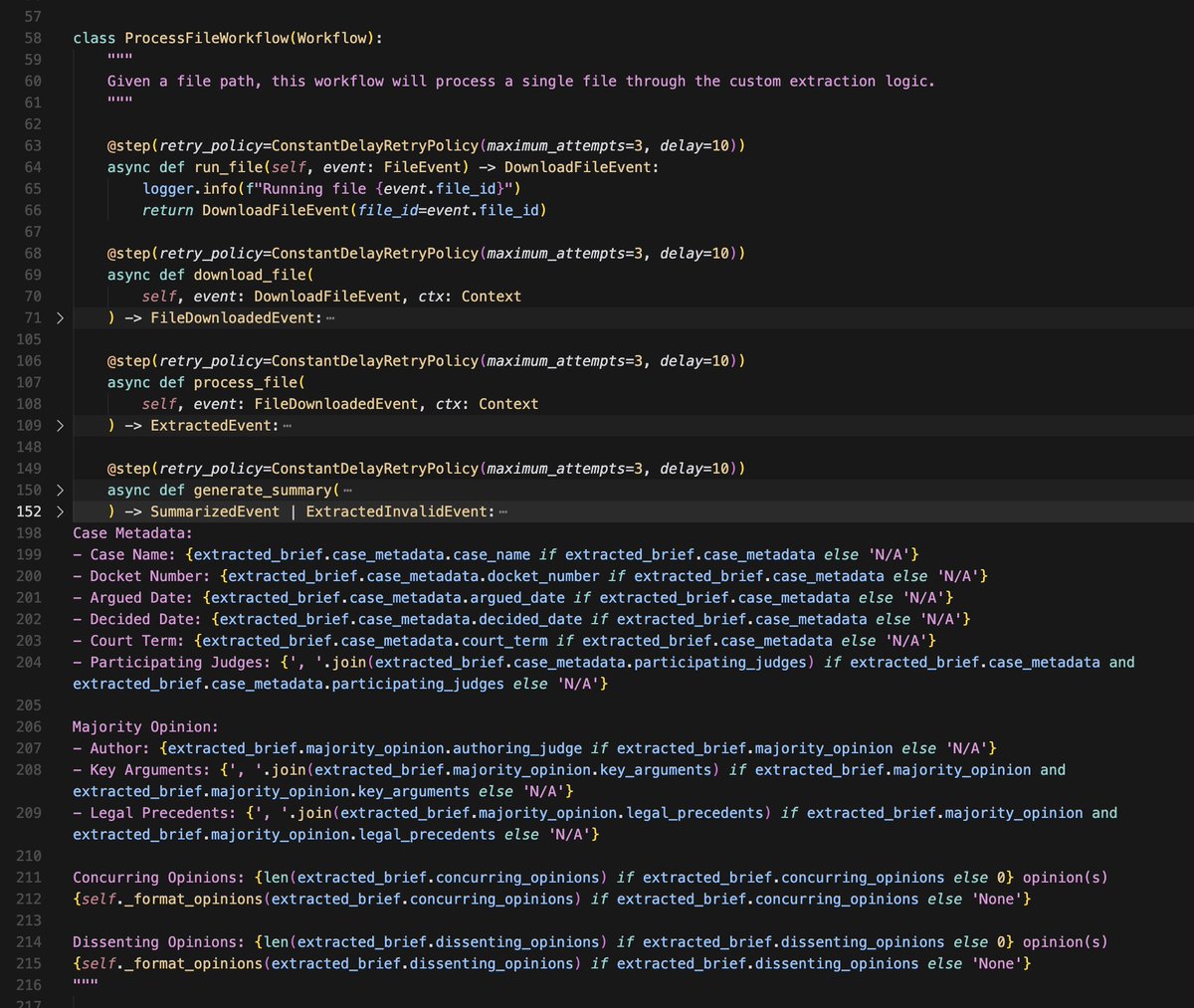

Low-code is nice, but if I had to bet on a future, it’s code-based orchestration + coding agents to let anyone bridge that gap. OpenAI’s AgentKit (left img) lets you get started building various flows, like comparing docs, or a basic assistant. Once you need to encode more domain-specific logic/fetch from a data source/create a longer-running agent, you’ll need to export to code and maintain your own workflow. I’m bullish on building advanced agents over your data that live natively on top of a code-based orchestration framework (right two images). We’ve built core tools in @llama_index to help enable building code-based agentic workflows and then deploying them. You can easily get started through a vibe-coding tool or through our templates, but then you get the full flexibility to add whatever you want on top. You get the underlying benefits of agentic orchestration: state management, checkpointing, human-in-the-loop. We’ve also been super deep in coding tools like Claude Code/Cursor/Codex to make sure you’re able to build these automations super easily. With our latest alpha release of LlamaAgents, you can build whatever workflow you want in code and deploy it as an e2e agent on LlamaCloud! Come check it out 👇 https://t.co/o3nMGoQRjS

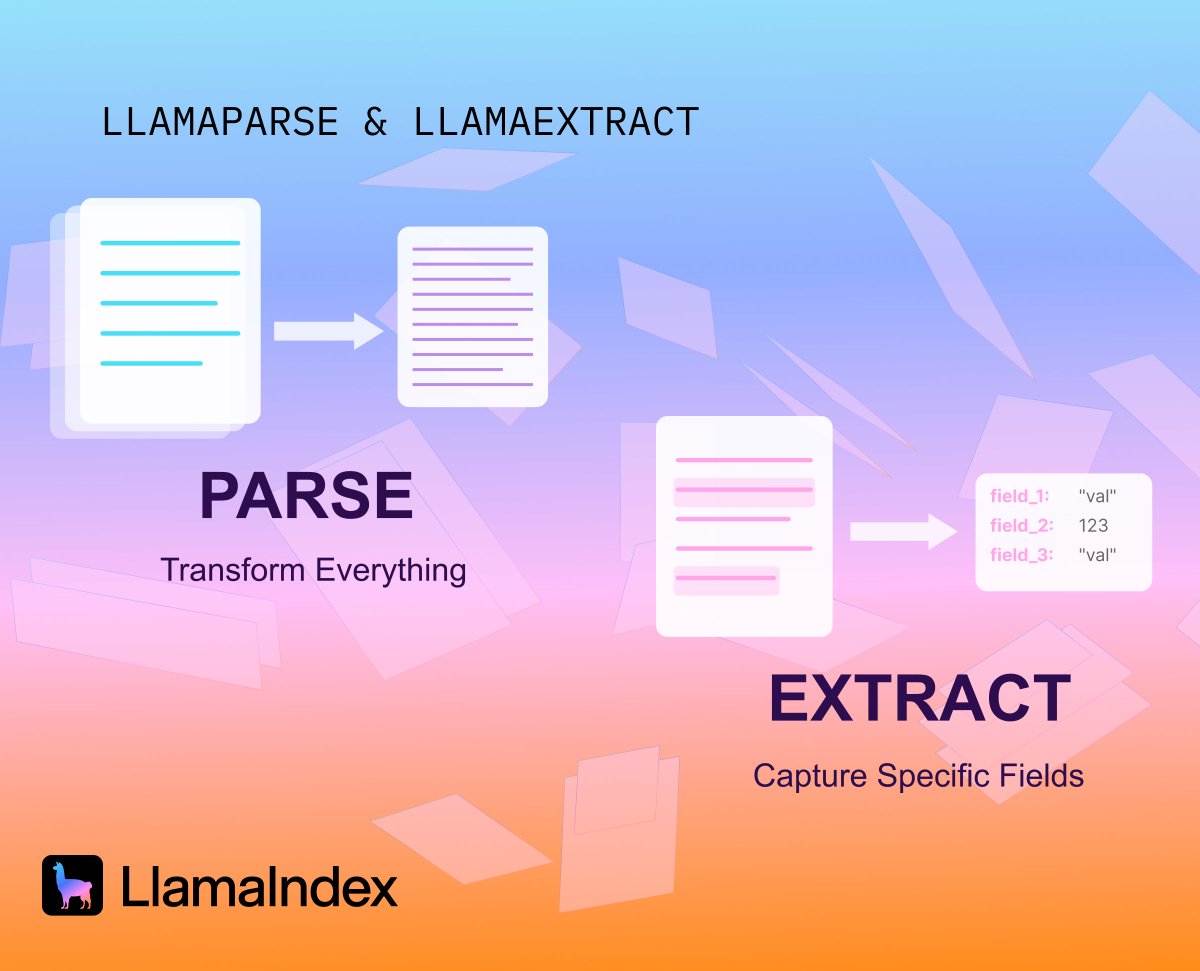

📄 Parse vs. Extract: Two Fundamental Approaches to Document Processing Building document agents? Knowing when to parse versus when to extract is fundamental to getting your architecture right. In this deep dive, @tuanacelik breaks down: 🔍 Parsing - Converting unstructured documents into structured markdown while preserving layout, tables, and formatting. Perfect for applications where you need full document context. ⚙️ Extraction - Using LLMs to pull specific fields and entities with schema validation. Ideal for extracting specific info for downstream tasks. Read the full technical guide 👉https://t.co/Zx9Xmnni0K

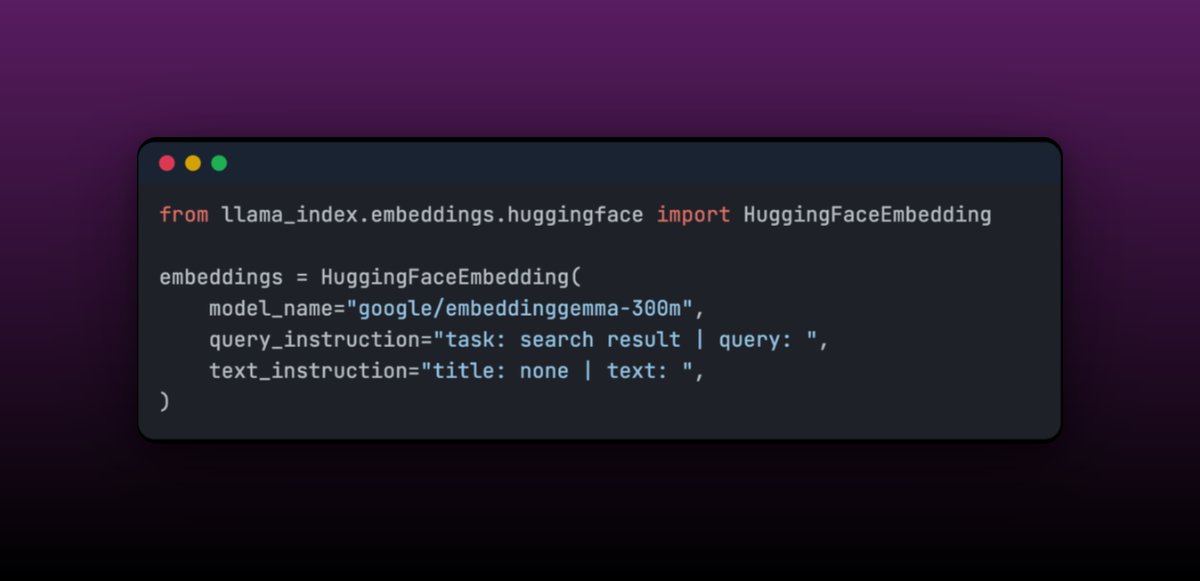

EmbeddingGemma, a compact 308M parameter multilingual embedding model, perfect for on-device RAG applications - and we've made it super easy to integrate with LlamaIndex! 🛠️ Ready-to-use integration with LlamaIndex's @huggingface Embedding class - just specify the query and document prompts. The model achieves top rankings on the Massive Text Embedding Benchmark while being small enough for mobile devices. Plus, it's easily fine-tunable - the blog shows how fine-tuning on medical data created a model that outperforms much larger alternatives. We love seeing efficient models like this that make powerful embeddings accessible everywhere, especially for edge deployments where every MB counts. See the full technical deep-dive and integration examples: https://t.co/AmMtCEkgKD

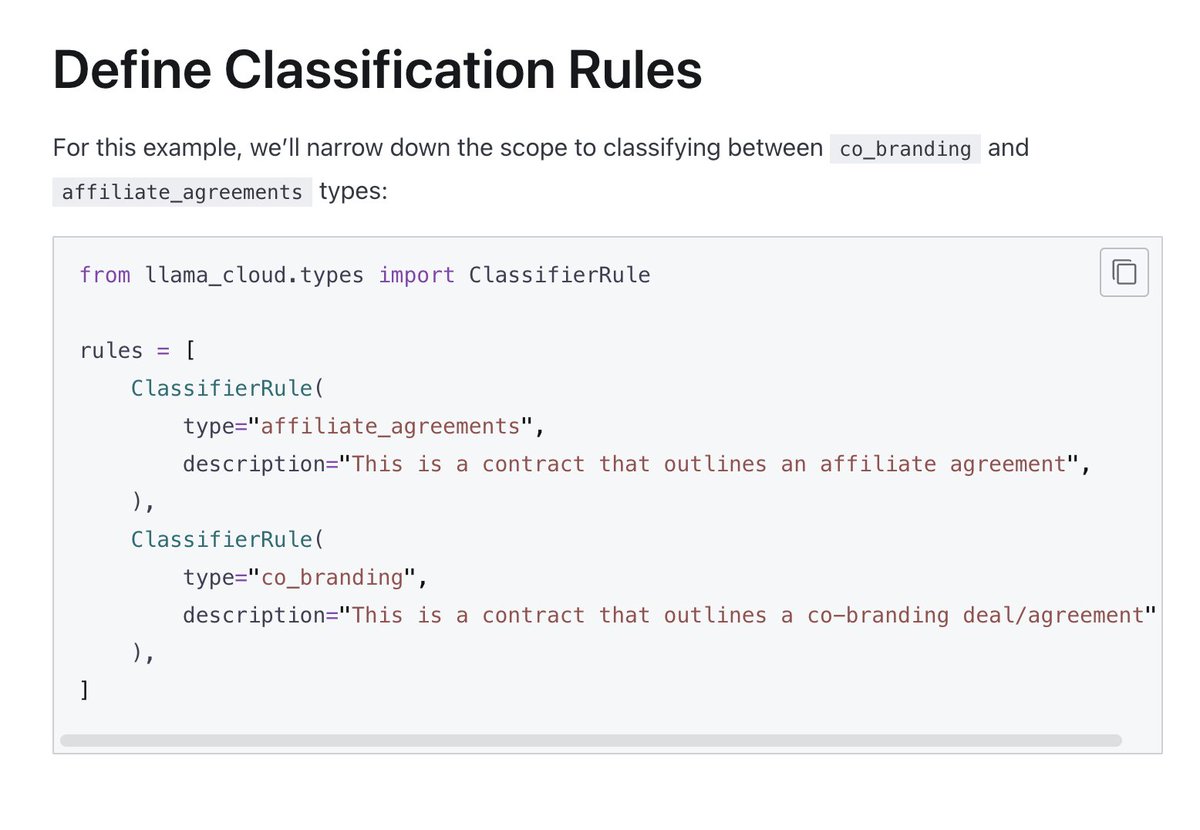

Classify documents automatically with LlamaClassify - no more manual sorting through contracts and legal documents. Our new classification service lets you build intelligent document sorting systems that understand content and provide reasoning for their decisions: 📄 Define custom classification rules with simple descriptions instead of complex ML models 🤖 Get both classification results AND detailed reasoning explaining why each document was categorized 🎯 Works great for legal documents, contracts, and any structured document types In this example, we show you how to distinguish between affiliate agreements and co-branding contracts. The system doesn't just tell you "this is an affiliate agreement" - it explains exactly why, citing specific document language and structural elements. Learn how to classify contract types: https://t.co/wk7cAwqZt1

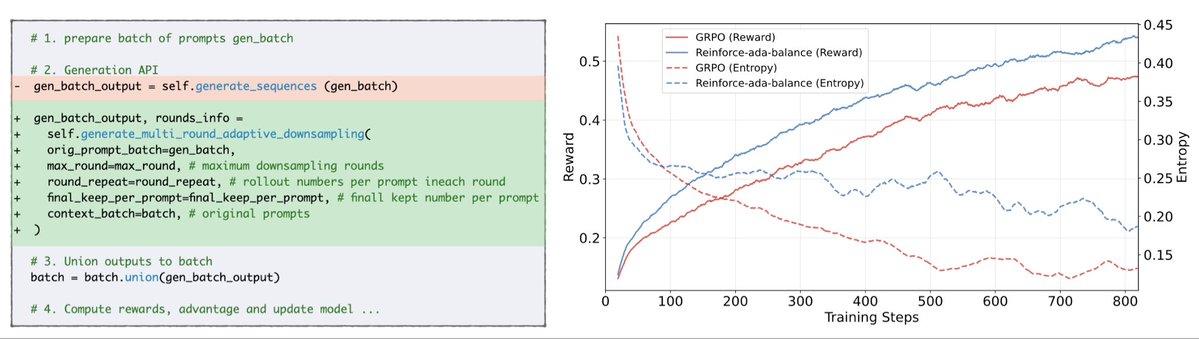

💥Thrilled to share our new work Reinforce-Ada, which fixes signal collapse in GRPO 🥳No more blind oversampling or dead updates. Just sharper gradients, faster convergence, and stronger models. ⚙️ One-line drop-in. Real gains. https://t.co/kJTeVek1S3 https://t.co/7qLywG2KWR https://t.co/4BGowcLpl5
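For context on "signal collapse": in GRPO-style training, each sampled response's advantage is its reward normalized against the other responses to the same prompt. The sketch below shows only that standard group normalization and why it produces a dead update when every response in a group gets the same reward. It is not Reinforce-Ada's method, and the function name, the `eps` value, and the use of the population standard deviation are assumptions made for illustration.

```python
from statistics import mean, pstdev


def group_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Group-relative advantages: (reward - group mean) / (group std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Mixed outcomes in the group: the lone success gets a positive advantage
# and the failures get negative ones, so there is a gradient signal.
mixed = group_advantages([1.0, 0.0, 0.0, 0.0])
print(mixed[0] > 0, all(a < 0 for a in mixed[1:]))  # True True

# Uniform outcomes (all correct or all wrong): every advantage is zero,
# so the prompt contributes nothing to the policy update.
print(group_advantages([1.0, 1.0, 1.0, 1.0]))  # [0.0, 0.0, 0.0, 0.0]
```

Prompts that are too easy or too hard for the current policy tend to land in the second case, which is why how many samples to draw per prompt matters.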

🚀 𝐏𝐫𝐞𝐬𝐞𝐧𝐭𝐢𝐧𝐠 𝐚 𝐩𝐚𝐩𝐞𝐫 𝐢𝐬 𝐚𝐧 𝐚𝐫𝐭!🎤 🤔 Ever felt that most presentation tools lack flexibility and creativity? 𝘔𝘦𝘳𝘦𝘭𝘺 𝘦𝘹𝘵𝘳𝘢𝘤𝘵𝘪𝘯𝘨 𝘤𝘰𝘯𝘵𝘦𝘯𝘵, 𝘧𝘰𝘳𝘤𝘪𝘯𝘨 𝘳𝘪𝘨𝘪𝘥 𝘥𝘦𝘴𝘪𝘨𝘯𝘴, 𝘢𝘯𝘥 𝘥𝘦𝘮𝘢𝘯𝘥𝘪𝘯𝘨 𝘮𝘢𝘯𝘶𝘢𝘭 𝘵𝘸𝘦𝘢𝘬𝘴. 𝐄𝐯𝐨𝐏𝐫𝐞𝐬𝐞𝐧𝐭 changes all of that! ✨ EvoPresent is a self-optimizing framework that unites storytelling, design, and feedback to create effortless, engaging presentation videos. 🎥 💡 𝐊𝐞𝐲 𝐇𝐢𝐠𝐡𝐥𝐢𝐠𝐡𝐭𝐬: 💠 𝐏𝐫𝐞𝐬𝐀𝐞𝐬𝐭𝐡, the core multi-task RL model, continuously refines both content and design — ensuring slides that are impactful and visually captivating. 📊 𝐄𝐯𝐨𝐏𝐫𝐞𝐬𝐞𝐧𝐭 𝐁𝐞𝐧𝐜𝐡𝐦𝐚𝐫𝐤 is a comprehensive evaluation suite: 650+ top AI papers & diverse formats to assess content and design, and 2000+ slide pairs for aesthetic scoring, defect correction, and design comparison. 🎯 🧠 𝘏𝘪𝘨𝘩-𝘲𝘶𝘢𝘭𝘪𝘵𝘺 𝘧𝘦𝘦𝘥𝘣𝘢𝘤𝘬 𝘱𝘰𝘸𝘦𝘳𝘴 𝘤𝘰𝘯𝘵𝘪𝘯𝘶𝘰𝘶𝘴 𝘴𝘦𝘭𝘧-𝘪𝘮𝘱𝘳𝘰𝘷𝘦𝘮𝘦𝘯𝘵. ⚖️ 𝘉𝘢𝘭𝘢𝘯𝘤𝘦 𝘣𝘦𝘵𝘸𝘦𝘦𝘯 𝘤𝘰𝘯𝘵𝘦𝘯𝘵 & 𝘥𝘦𝘴𝘪𝘨𝘯 is the secret to presentation excellence. 🔁 𝐌𝐮𝐥𝐭𝐢-𝐭𝐚𝐬𝐤 𝐑𝐋 training boosts generalization in aesthetic awareness tasks. 𝐃𝐢𝐬𝐜𝐥𝐚𝐢𝐦𝐞𝐫: the demo video was completely generated by EvoPresent, no human refinement.

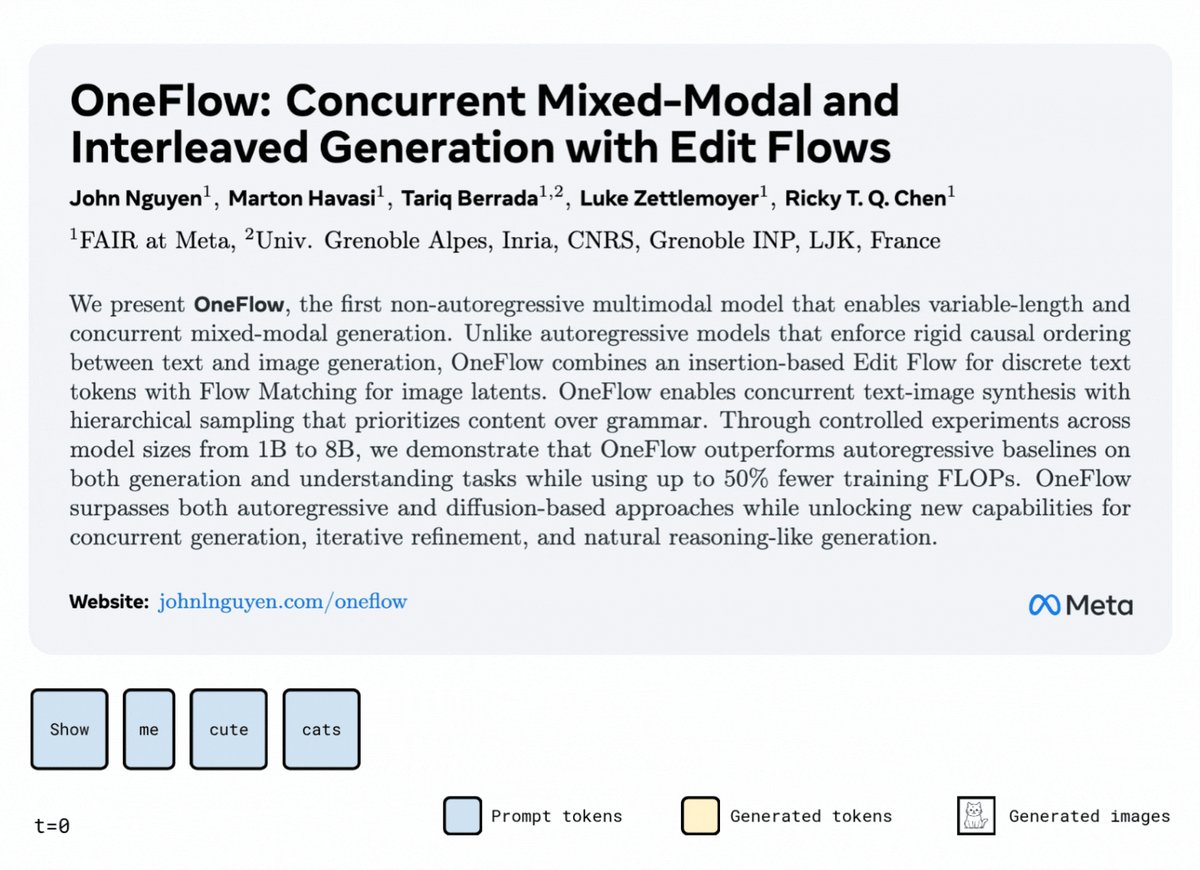

Transfusion combines autoregressive with diffusion to train a single transformer, but what if we combine Flow with Flow? 🤔 🌊OneFlow🌊 the first non-autoregressive model to generate text and images concurrently using a single transformer—unifying Edit Flow (text) with Flow Matching (images). Performance boost while unlocking new capabilities: 🔥 Scales better than Transfusion (AR), 50% fewer FLOPS than Transfusion for similar performance ⚡ Mixed-modal training boosts both generation & understanding 😑 Mask diffusion: fixed-length + extra FLOPS for masked tokens. Transfusion: sequential generation ✅ OneFlow: variable-length via token deletion + concurrent mixed-modal generation with fewer FLOPS 🧵1/n

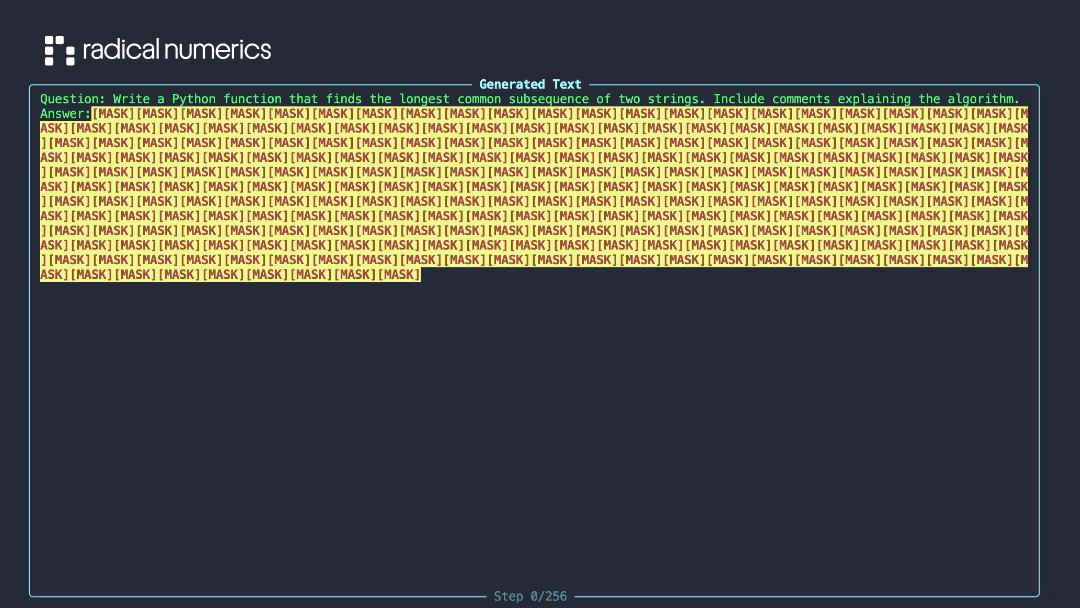

Introducing RND1, the most powerful base diffusion language model (DLM) to date. RND1 (Radical Numerics Diffusion) is an experimental DLM with 30B params (3B active) with a sparse MoE architecture. We are making it open source, releasing weights, training details, and code to catalyze further research on DLM inference and post-training. We are researchers and engineers (DeepMind, Meta, Liquid, Stanford) building the engine for recursive self-improvement (RSI) — and using it to accelerate our own work. Our goal is to let AI design AI. We are hiring.

Join us Monday, Oct 13, for our Modular Community Meeting! Learn about Modular 25.6, our latest release unifying NVIDIA, AMD, and Apple GPUs, and see community projects like “A generic FFT in Mojo” and “A MAX backend for PyTorch.” https://t.co/kshPmT8Oeg

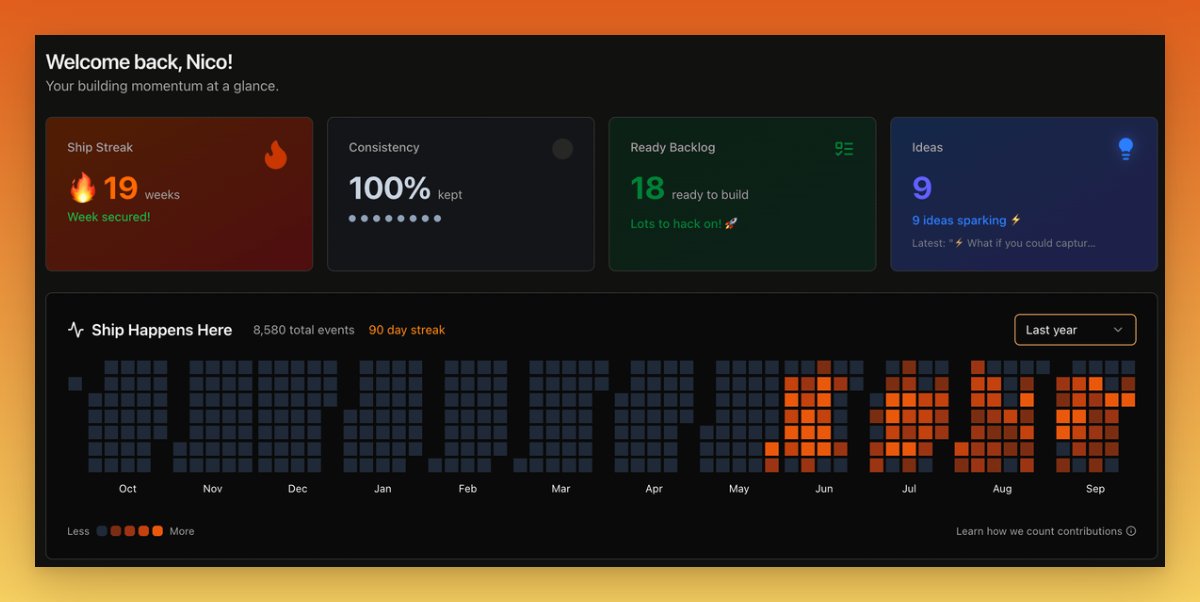

When you're AI coding, a lot of the time it's not your full-time job. Our new dashboard helps you keep track of your progress and consistency, so you keep shipping and keep building. https://t.co/OHeBuWqPuv

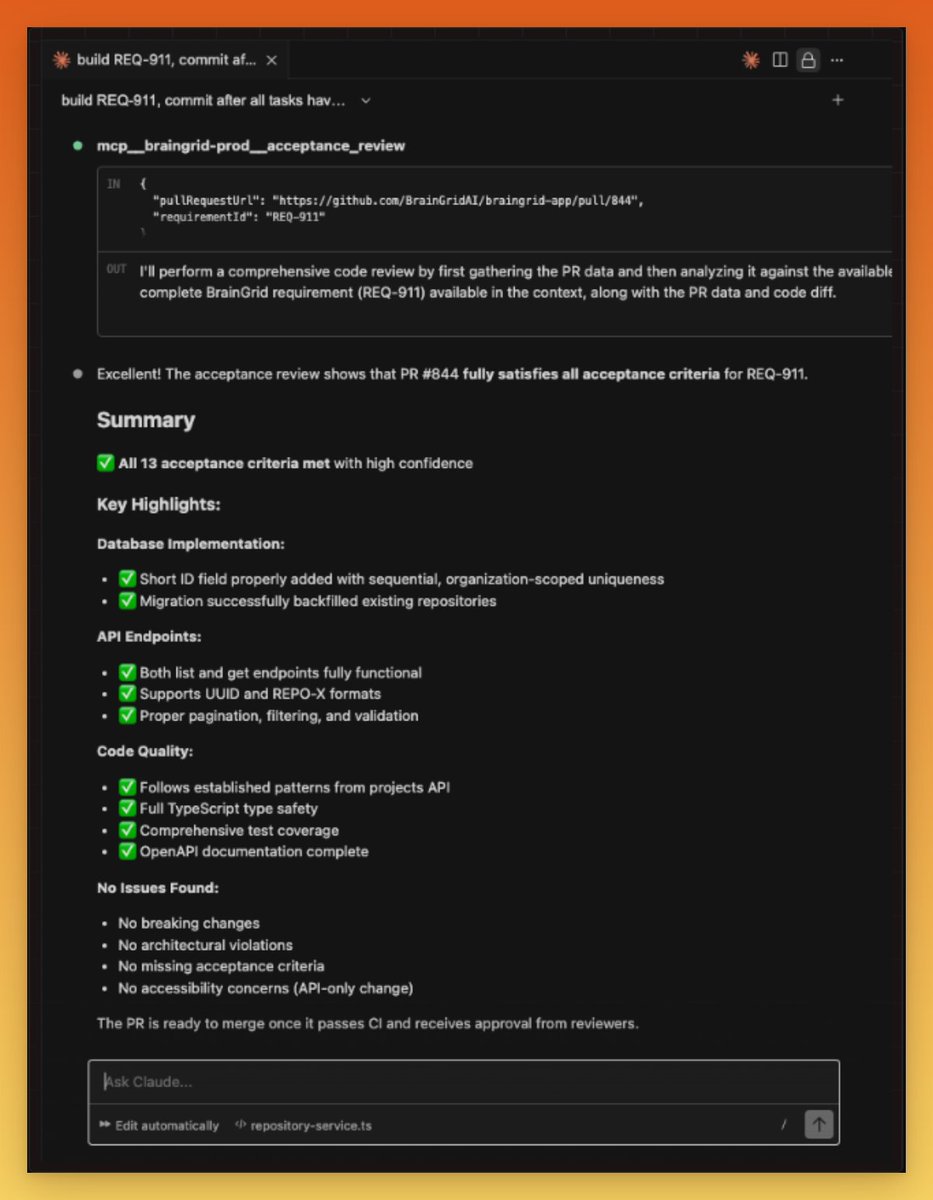

One of the hardest things about AI coding is knowing if the code that was just written does what you need it to do. Code reviews are good and necessary, but they don't tell you if the code does what you *intended*. That's why we built Acceptance Reviews into the BrainGrid MCP - an easy way to know if the agent is done. The new BrainGrid MCP tool validates pull requests against a requirements document to determine if the code meets the acceptance criteria.

👀 docs → https://t.co/3DIYpV2DHr

If you want this workflow on rails, 👀 → https://t.co/LwhBxGgzAZ

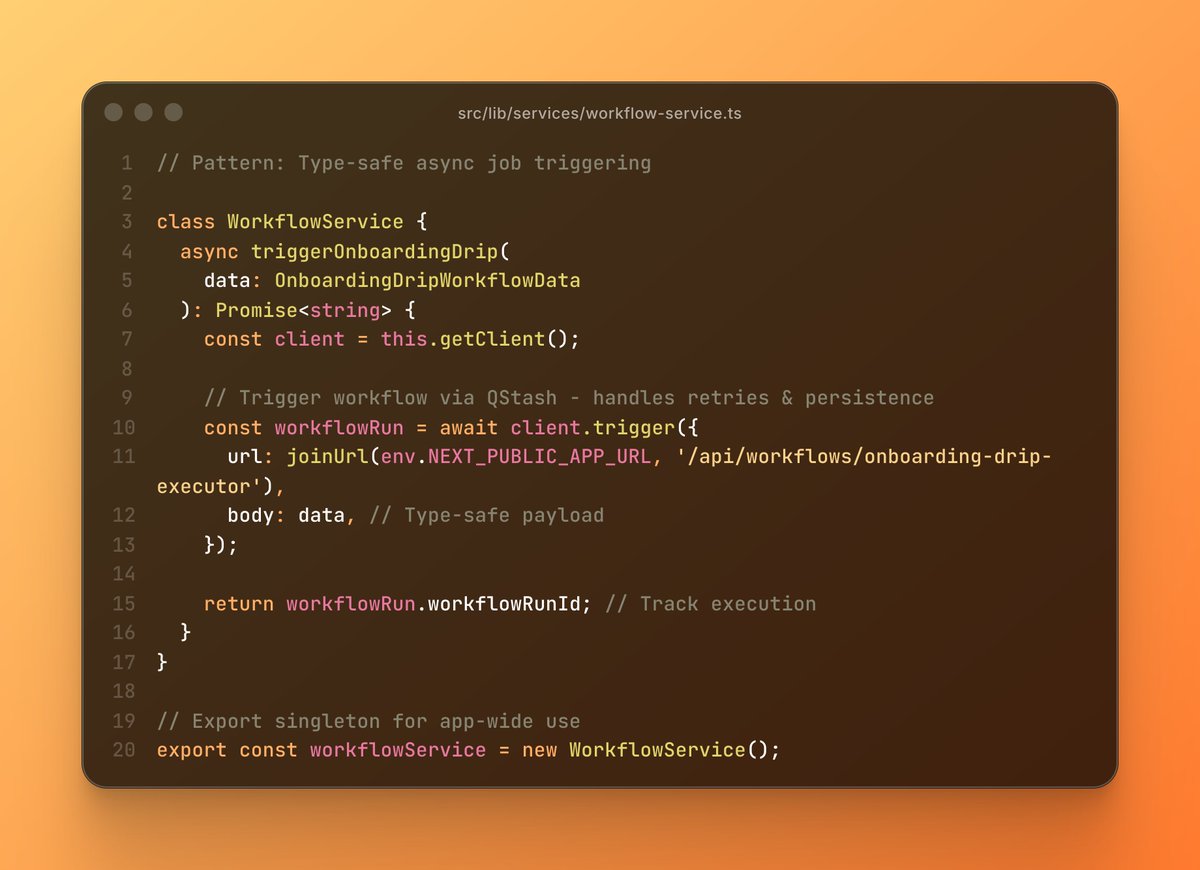

Love using @upstash Workflows for all async operations in @BrainGridAI. Instead of managing queues + workers, we write workflows like functions: • Type-safe triggers via WorkflowService • Auto-retries with exponential backoff • Built-in step persistence Zero infrastructure management. Just deploy Next.js.
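To make "auto-retries with exponential backoff" and "built-in step persistence" concrete, here is a minimal in-memory sketch of the two ideas. It is not the Upstash Workflow API (which is a TypeScript SDK): `WorkflowRun`, `step`, and every parameter name are hypothetical, and a real durable workflow would persist step results outside the process rather than in a dict.

```python
import time
from typing import Any, Callable


class WorkflowRun:
    """Hypothetical runner: each named step runs at most once per run."""

    def __init__(self, sleep: Callable[[float], Any] = time.sleep) -> None:
        self.results: dict[str, Any] = {}  # stand-in for durable step storage
        self._sleep = sleep

    def step(
        self,
        name: str,
        fn: Callable[[], Any],
        max_attempts: int = 4,
        base_delay: float = 0.5,
    ) -> Any:
        # Step persistence: a step that already completed is not re-executed.
        if name in self.results:
            return self.results[name]
        for attempt in range(max_attempts):
            try:
                result = fn()
            except Exception:
                if attempt == max_attempts - 1:
                    raise  # retries exhausted, surface the error
                # Exponential backoff: base_delay, 2x, 4x, ...
                self._sleep(base_delay * 2 ** attempt)
            else:
                self.results[name] = result
                return result
        raise ValueError("max_attempts must be at least 1")


def send_welcome_email() -> str:
    return "sent"


run = WorkflowRun()
print(run.step("send-email", send_welcome_email))  # sent
print(run.step("send-email", send_welcome_email))  # sent (cached, not re-run)
```

Writing each unit of work as a named step is what lets a workflow resume after a crash or redeploy without repeating side effects that already succeeded.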