Your curated collection of saved posts and media

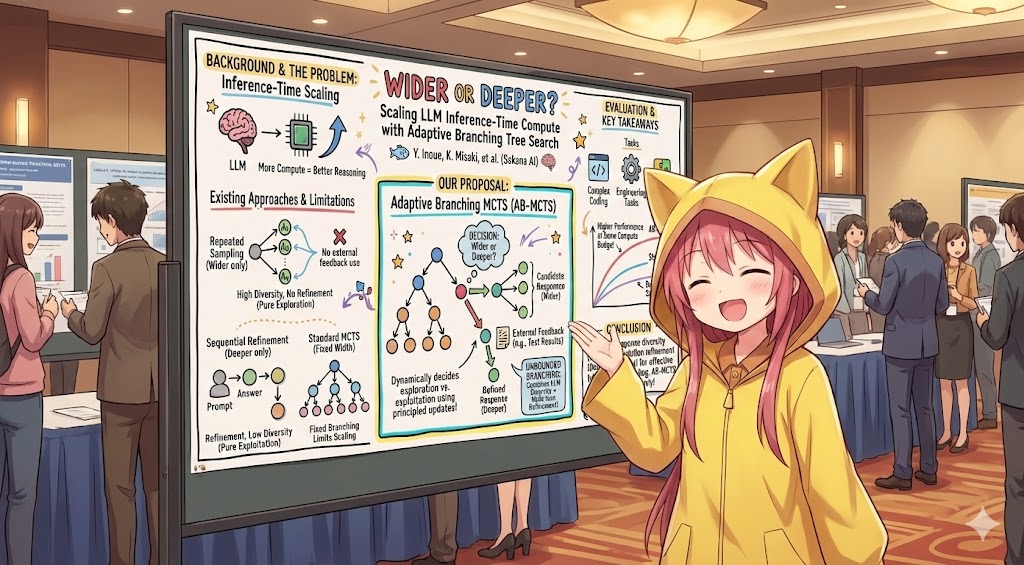

At #NeurIPS2025, our team at @SakanaAILabs will be presenting two posters on agents and inference-time scaling for challenging coding tasks: AB-MCTS and ALE-Bench. Unfortunately, I won't be there in person, but please stop by the posters to chat with my teammates! 🐟🐟🐟

1. "Wider or Deeper? Scaling LLM Inference-Time Compute with Adaptive Branching Tree Search" (Spotlight ✨)
   - 📌 Poster: Dec 3 (Wed) 4:30 PM #3418 https://t.co/ekcDyoQpdR
   - 📄 Paper: https://t.co/tz20wmICNR
   - 🌐 Blog: https://t.co/xrVFegDGUF
2. "ALE-Bench: A Benchmark for Long-Horizon Objective-Driven Algorithm Engineering" (D&B Track)
   - 📌 Poster: Dec 4 (Thu) 4:30 PM #702 https://t.co/S3vGSnR1Ly
   - 📄 Paper: https://t.co/9DKlau72Tn
   - 🏆 Leaderboard: https://t.co/HbwypOGM1C
   - 🌐 Blog: https://t.co/xAuvzIQbbp

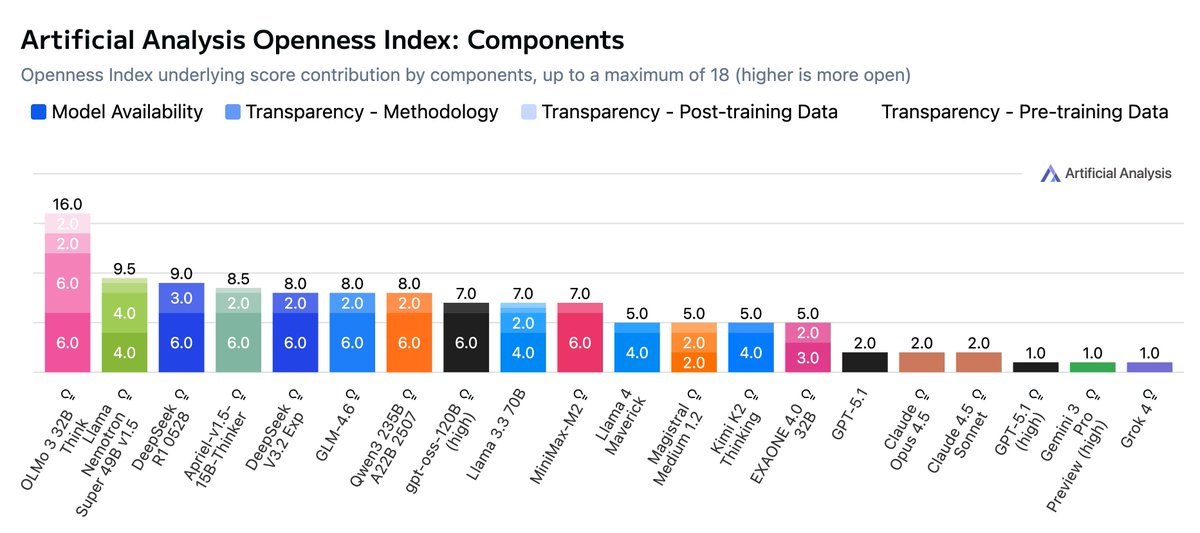

Introducing the Artificial Analysis Openness Index: a standardized and independently assessed measure of AI model openness across availability and transparency

Openness is not just the ability to download model weights. It is also licensing, data and methodology - we developed a framework underpinning the Artificial Analysis Openness Index to incorporate these elements. It allows developers, users, and labs to compare across all these aspects of openness on a standardized basis, and brings visibility to labs advancing the open AI ecosystem.

A model with a score of 100 in Openness Index would be open weights and permissively licensed with full training code, pre-training data and post-training data released - allowing users to not just use the model but reproduce its training in full, or take inspiration from some or all of the model creator’s approach to build their own model. We have not yet awarded any models a score of 100!

Key details:

🔒 Few models and providers take a fully open approach. We see a strong and growing ecosystem of open weights models, including leading models from Chinese labs such as Kimi K2, Minimax M2, and DeepSeek V3.2. However, releases of data and methodology are much rarer - OpenAI’s gpt-oss family is a prominent example of open weights and Apache 2.0 licensing, but minimal disclosure otherwise.

🥇 OLMo from @allen_ai leads the Openness Index at launch. Living up to AI2’s mission to provide ‘truly open’ research, the OLMo family achieves the top score of 89 (16 of a maximum of 18 points) on the Index by prioritizing full replicability and permissive licensing across weights, training data, and code. With the recent launch of OLMo 3, this included the latest version of AI2’s data, utilities and software, full details on reasoning model training, and the new Dolci post-training dataset.

🥈 NVIDIA’s Nemotron family also performs strongly for openness. @NVIDIAAI models such as NVIDIA Nemotron Nano 9B v2 reach a score of 67 on the Index due to their release alongside extensive technical reports detailing their training process, open source tooling for building models like them, and the Nemotron-CC and Nemotron post-training datasets.

📉 We’re tracking both open weights and closed weights models. Openness Index is a new way to think about how open models are, and we will be ranking closed weights models alongside open weights models to recognize the scope of methodology and data transparency associated with closed model releases.

Methodology & Context:

➤ We analyze openness using a standardized framework covering model availability (weights & license) and model transparency (data and methodology). This means we capture not just how freely a model can be used, but visibility into its training and knowledge, and potential to replicate or build on its capabilities or data.

➤ Model availability is measured based on the access and licensing of the model/weights themselves, while transparency comprises subcomponents for access and licensing for methodology, pre-training data, and post-training data.

➤ As seen with releases like DeepSeek R1, sharing methodology accelerates progress. We hope the Index encourages labs to balance competitive moats with the benefits of sharing the "how" alongside the "what."

➤ AI model developers may choose not to fully open their models for a wide range of reasons. We feel strongly that there are important advantages to the open AI ecosystem and supporting the open ecosystem is a key reason we developed the Openness Index. We do not, however, wish to dismiss the legitimacy of the tradeoffs that greater openness comes with, and we do not intend to treat Openness Index as a strictly ‘higher is better’ scale.

See below for further analysis and details 👇
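The post gives one worked data point for how the headline number relates to the underlying points: 16 of a maximum of 18 points corresponds to a score of 89. A minimal sketch of that arithmetic follows, assuming the score is simply the points normalized to 100 and rounded to the nearest integer. The function name `openness_score` is hypothetical, and the 12-point figure for the score of 67 is a back-calculation under that assumption, not a number stated in the post.

```python
MAX_POINTS = 18  # maximum number of points stated in the post


def openness_score(points: int, max_points: int = MAX_POINTS) -> int:
    """Normalize raw points to a 0-100 score (assumed formula, rounded)."""
    if not 0 <= points <= max_points:
        raise ValueError("points must be between 0 and max_points")
    return round(100 * points / max_points)


# 16 of 18 points, as stated for the OLMo family.
print(openness_score(16))  # 89
# Back-calculated assumption: 12 of 18 points would give the quoted 67.
print(openness_score(12))  # 67
```

Under this reading, a perfect 18 of 18 maps to the score of 100 that the post says no model has reached yet.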

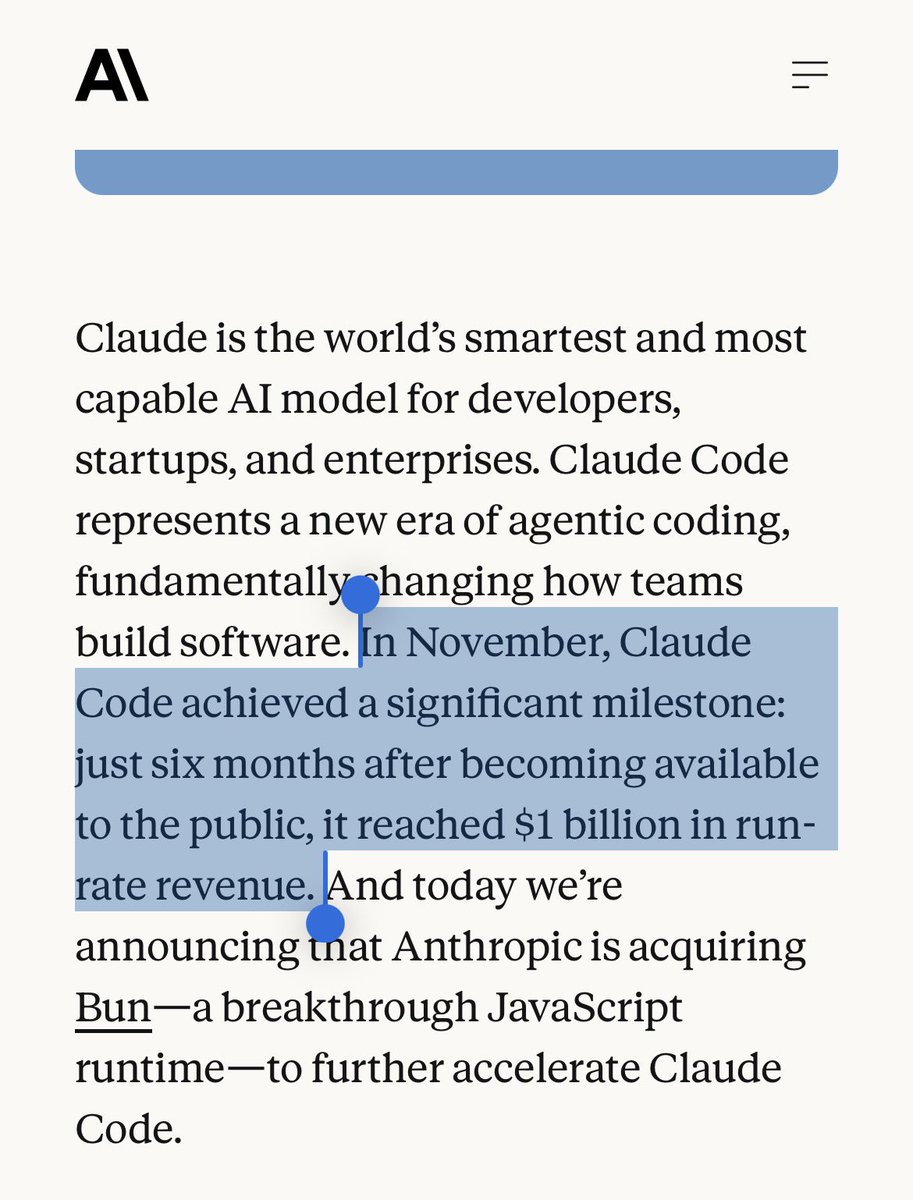

Claude Code reached $1B in run-rate revenue! And they acquired Bun too. About 90% of my work now happens in Claude Code. Opus 4.5 is a beast. Excited to see what comes next. https://t.co/Tdm9lnY3Jr

1️⃣ Start with a Prompt You basically start with a simple prompt of what you want to build. "Help me build a deep research agent that tracks the latest AI research papers on AI Agents." That's it. You get your first working agent generated in minutes. https://t.co/wmGqcs9JOH

2️⃣ Agent Builder & Prompt Optimization You can then iterate on your agent using the agent builder. Optimize prompts, add tools, and customize your agent as you see fit. The agent prompt is optimized for you to fit your use case. That's very useful. https://t.co/1ekIPPrUPw

3️⃣ Testing and Iterating Building agents is a very iterative process. Failures do happen, and edge cases are always popping up. The test functionality helps you iterate on your agent and more systematically add tools and missing components to your agent before deploying it. https://t.co/8toJAgJJrK

4️⃣ Tools and Integrations It's extremely easy to integrate tools for your agents. It's as simple as prompting the agent builder with what you want to get done, and tools are added for you. I show how to add a Gmail integration to send agent outputs directly to my inbox. https://t.co/lhDNU4wbXU

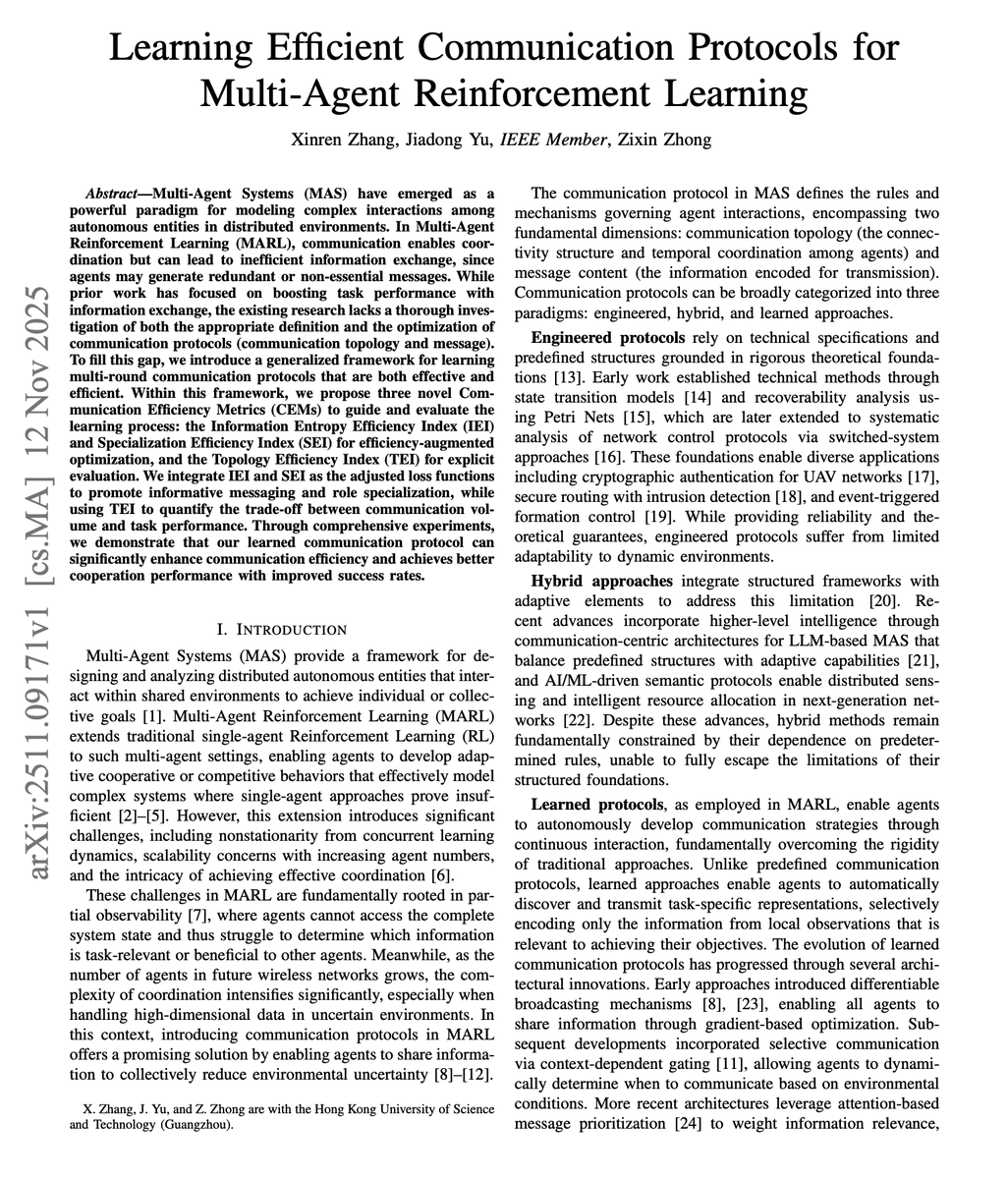

Multi-agent AI systems are poor at communication.

The default approach in multi-agent RL today focuses almost entirely on task success rates. Can agents coordinate? Did they solve the problem? The actual cost of communication is rarely measured or optimized. But in real-world systems, bandwidth, energy, and compute are finite. Every message has a price.

This new research introduces three Communication Efficiency Metrics (CEMs) and a framework for learning protocols that are both effective and efficient. They find that communication inefficiency arises primarily from poorly designed optimization objectives rather than inherent information needs.

The researchers propose three metrics:

- Information Entropy Efficiency Index (IEI) measures how compact messages are.
- Specialization Efficiency Index (SEI) captures whether agents develop distinct roles rather than sending redundant information.
- Topology Efficiency Index (TEI) tracks task success relative to communication frequency.

By augmenting training loss functions with these metrics, they achieve dual improvements. CommNet saw an increase in success rate while also improving topology efficiency. IC3Net also improved the success rate with better efficiency.

Counterintuitively, one-round communication with efficiency augmentation consistently outperformed two-round baseline configurations. More communication rounds degraded TEI significantly due to overhead.

Communication efficiency and task performance can improve simultaneously rather than trading off. The takeaway for AI devs is to build better objectives, not more messages, to unlock coordination.

🔖 (bookmark it)
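The post does not give formulas for the metrics, so the following is only an illustrative sketch of the general idea: measure how much agents communicate, then add that cost to the training objective. Every definition here is an assumption made for illustration, not the paper's: `message_entropy` uses plain Shannon entropy as a stand-in for message compactness, `topology_efficiency` divides success rate by messages per episode, and `augmented_loss` with its `weight` parameter is a hypothetical penalty term.

```python
import math
from collections import Counter


def message_entropy(messages):
    """Shannon entropy in bits of a list of discrete message symbols."""
    counts = Counter(messages)
    total = len(messages)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def topology_efficiency(successes, episodes, messages_sent):
    """Assumed stand-in for TEI: success rate divided by messages per episode."""
    if messages_sent == 0:
        return 0.0
    success_rate = successes / episodes
    messages_per_episode = messages_sent / episodes
    return success_rate / messages_per_episode


def augmented_loss(task_loss, entropy_bits, weight=0.1):
    """Task loss plus a hypothetical penalty on message entropy."""
    return task_loss + weight * entropy_bits


# Same success rate, but one communication round instead of two
# (10 vs. 20 messages over 10 episodes) scores higher on this measure.
one_round = topology_efficiency(successes=8, episodes=10, messages_sent=10)
two_rounds = topology_efficiency(successes=8, episodes=10, messages_sent=20)
print(one_round > two_rounds)  # True
```

With a measure shaped like this, extra communication rounds only pay off if they raise the success rate faster than they raise the message count, which matches the overhead effect the post describes.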

@nickcammarata Your recent posts on this remind me of this Arnold gem https://t.co/IMe0VwINCY +100 though. I finally had a chance to install a home gym recently, making it trivial to use daily. Always looking forward to the next exercise high. Slightly miss the social/entropy aspects of gyms.

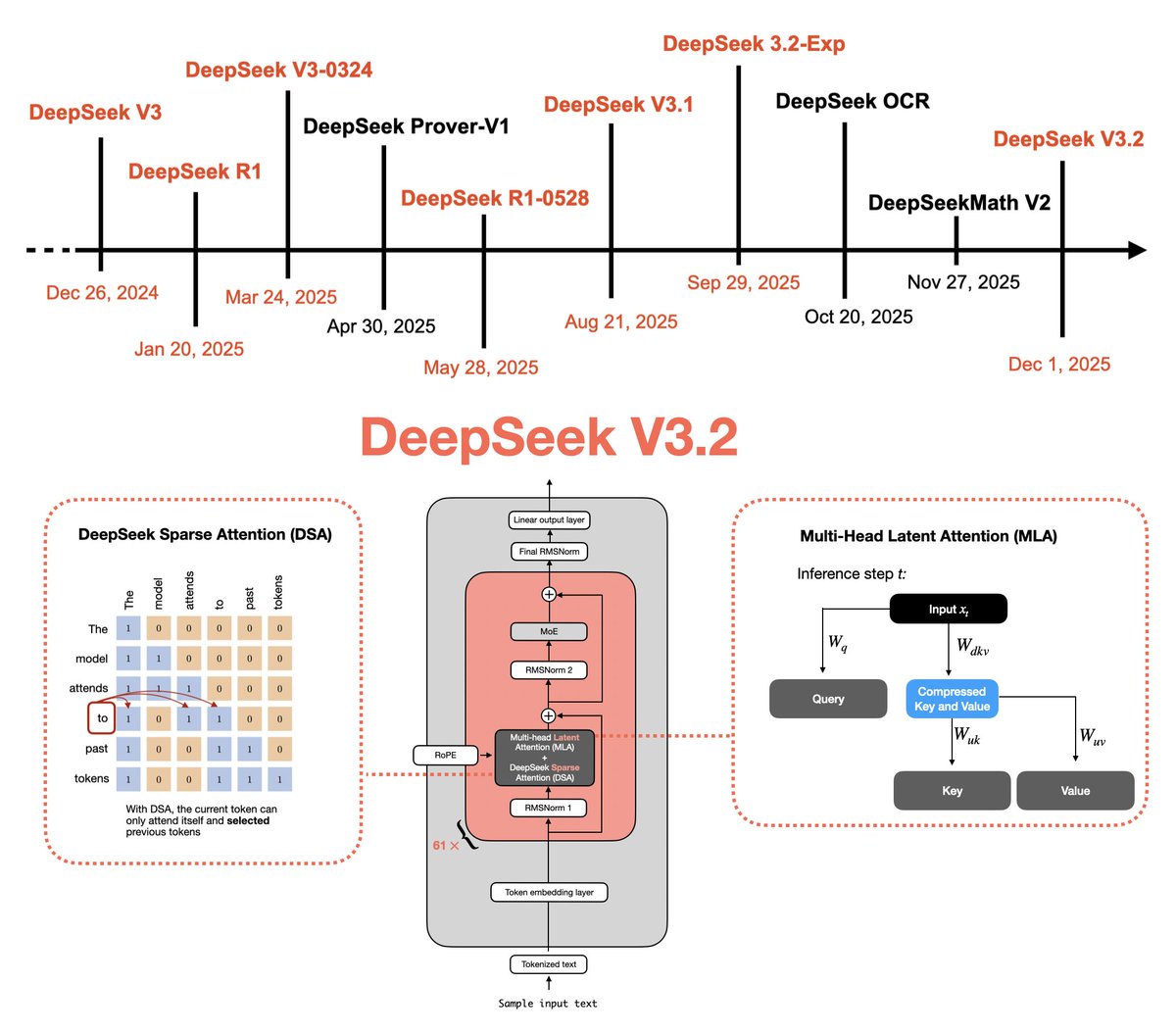

This interesting week started with DeepSeek V3.2! I just wrote up a technical tour of the predecessors and components that led up to this: 🔗 https://t.co/JSAd9cx2s6 - Multi-Head Latent Attention - RLVR - Sparse Attention - Self-Verification - GRPO Updates https://t.co/5f965hR70I

Excited to share another NeurIPS event I'm helping with. We're hosting a dedicated booth to record researchers talking about their work, share that audio and video content on our socials, and start great conversations.

What is it?

- 10-minute researcher interviews recorded live at NeurIPS
- Come talk about your poster, your research, or your company
- We’ll edit and publish your interview as short- and long-form video
- Location: Hilton Hotel, across the street from the convention

Hosted by @readsail and @SemiAnalysis_ with much gratitude to our sponsor @LambdaAPI. Lambda has been a great ally of all my crazy ideas to help out the research community over the last year, the fastest to pick up the phone and help.

I plan on spinning more of this into the @interconnectsai feed, so please reach out (email best, DM okay, but contact @readsail first) with questions. I've got more planned in this space soon, such as Interconnects Paper Awards.

Come hang, sign-up link below!

Ivantill one-tweet AU: Till goes to sleep and leaves Ivan, who is being fussy. https://t.co/LcQonxSMn3

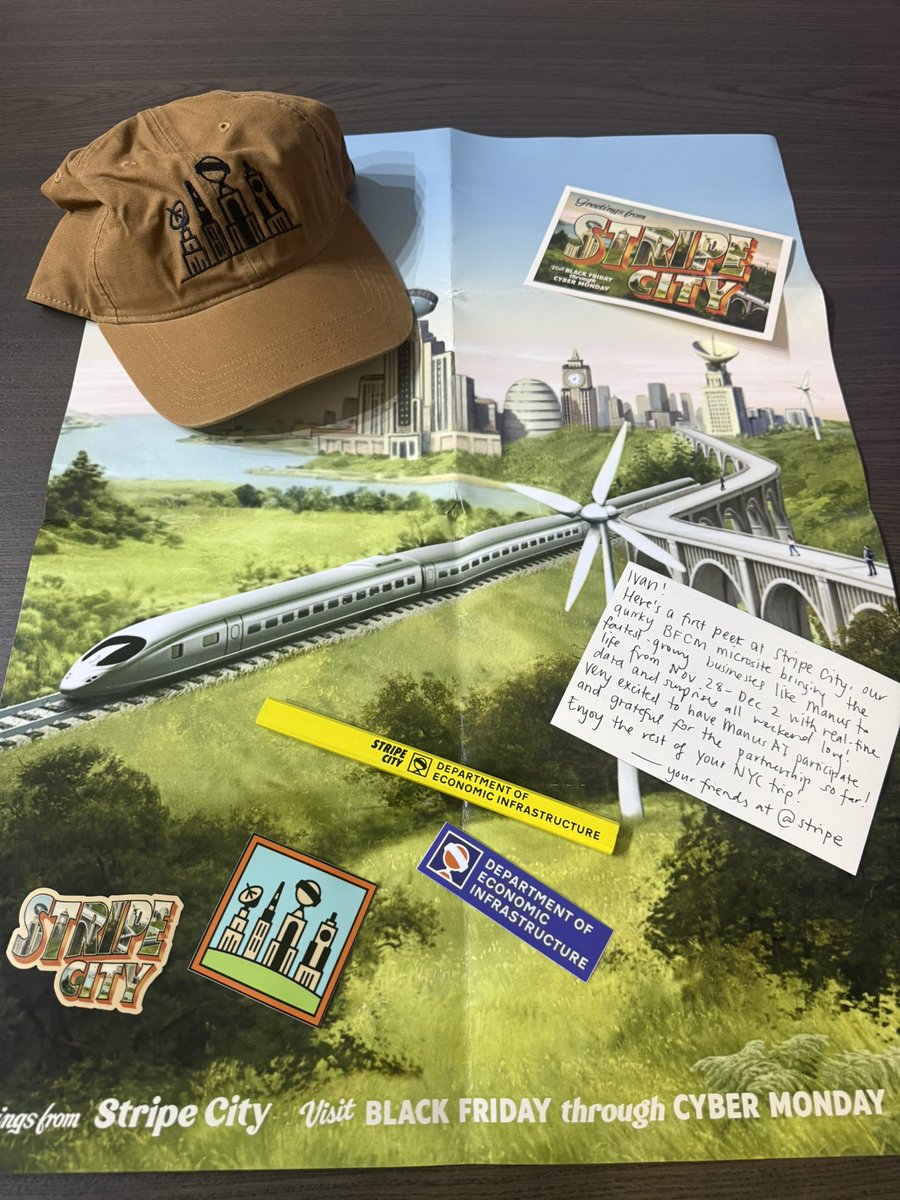

Thanks to @caitbhri for the amazing @stripe BFCM merch! Go check out their site at https://t.co/RVaBUxmQpO! It’s absolutely incredible and a work of art :) https://t.co/IUybLVyx3M

waaahhhhhh thx @caitbhri i 🖤 love these stripe cuties sm pls go into stripe city and spot manus. trust me u will see it in like 3 secs go go goooo https://t.co/Zn1cSLZqV1

happy black friday manus fam 🖤 free 3k credits as a lil treat go speedrun this pls 🤩 SPOT MANUS IN STRIPE CITY 🤩 first 10 win https://t.co/Lt8QDk1fb4

Find Manus on @stripe’s BFCM website for a chance to win some free credits. It’s live until Tuesday at https://t.co/RVaBUxmQpO https://t.co/v0rGHLixNg

@jonas @Replit Kicking off my own manus task to see how far we can get too! :) https://t.co/49p9PBWDfi

Came back to some dope @ExaAILabs swag, stuff is beautiful man! https://t.co/ZJ7ZLY924t

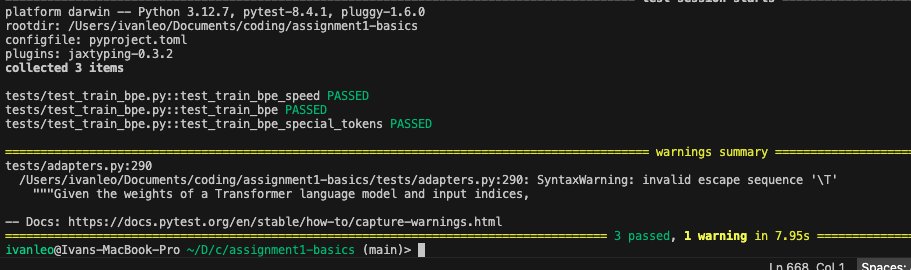

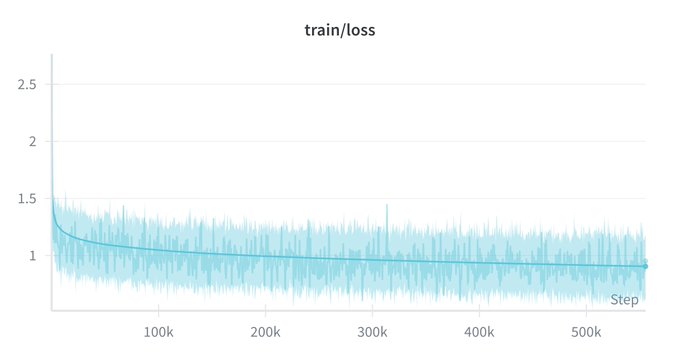

Finally finished the first part of the assignment of CS336. Implementing a BPE from scratch was pretty non-intuitive for me so looking forward to now optimizing it to go fast fast https://t.co/rUB9HlQEpR
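For readers who have not implemented it, the core BPE training loop can be sketched in a few lines. This is a simplified illustration, not the CS336 assignment's specification: it splits on whitespace instead of using a regex pre-tokenizer, ignores special tokens, and breaks frequency ties by comparing the pairs themselves, which is an arbitrary choice made here. The name `train_bpe` is hypothetical.

```python
from collections import Counter


def train_bpe(text, num_merges):
    """Learn up to num_merges byte-pair merges from whitespace-split text."""
    # Each word starts as a tuple of single-byte tokens.
    words = Counter(
        tuple(bytes([b]) for b in word.encode("utf-8")) for word in text.split()
    )
    merges = []
    for _ in range(num_merges):
        # Count adjacent token pairs, weighted by word frequency.
        pairs = Counter()
        for word, freq in words.items():
            for left, right in zip(word, word[1:]):
                pairs[(left, right)] += freq
        if not pairs:
            break
        # Pick the most frequent pair; ties go to the greater pair.
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        merged = best[0] + best[1]
        # Rewrite every word, replacing occurrences of the best pair.
        new_words = Counter()
        for word, freq in words.items():
            out, i = [], 0
            while i < len(word):
                if i + 1 < len(word) and (word[i], word[i + 1]) == best:
                    out.append(merged)
                    i += 2
                else:
                    out.append(word[i])
                    i += 1
            new_words[tuple(out)] += freq
        words = new_words
    return merges


print(train_bpe("abab", 2))  # [(b'a', b'b'), (b'ab', b'ab')]
```

Recounting every pair on each merge is the obvious bottleneck in this naive version; updating counts incrementally only for the words that contain the merged pair is one common way to speed it up.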

@jxnlco It do be like that https://t.co/Ehjm1zTOo9

How it feels working on agents sometimes https://t.co/16tGMxjBVn

The Electric River Running 540 Exaflops Deep at Muon Noon credit: @N8Programs https://t.co/eFBbnBO0Kh

"I am super excited about the robots Elon Musk is working on" - Nvidia CEO Jensen Huang https://t.co/OR46GMtQbv

The Mayor of Rome, @gualtierieurope, tries Tesla's FSD in the Eternal City. Beautiful! https://t.co/2UiALKIJ9N

I really like this superimposition effect that you can achieve with Grok Imagine https://t.co/YJppURhxYQ

Make learning more fun again with the Grok app. Let your kids explore science, physics, history, and the universe in a playful way with Good Rudi, its friendly mode designed just for young minds. They’ll get clear, exciting answers that spark real curiosity. Instead of endless brain-rot short videos that teach nothing, give them a tool that actually helps them grow smarter every day.

Elon Musk: The sun is overwhelmingly the source of energy, and everything else is tiny potatoes 🥔 “The biggest source of energy by far is the sun. So nothing even comes close to the sun in terms of energy output. The sun converts over 4 million tons of mass to energy per second, and it's been doing that for a very long time, and will do that for many, many billions of years to come. So one of the ways you can think of the progress of any given civilization is on the Kardashev scale. And so just a simple way of thinking about it is, you're Kardashev level 1 if you've harnessed all the power of a planet. You're 2 if you've harnessed all the power of a solar system. And you're 3 if you've harnessed the power of a galaxy. We're very far from 3, it's safe to say, but even from 1. So when you think in Kardashev terms, it becomes very obvious that the sun is overwhelmingly the source of energy, and everything else is tiny potatoes, tiny, tiny, tiny potatoes. But hey, well, tiny potatoes are fine for now, but the sun is overwhelmingly the big potato, the humongous potato. So it's really harnessing the power of the sun that will lead us to the stars.”
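As a rough check on the quoted figure, converting 4 million tons of mass per second to energy with $E = mc^2$ gives the sun's power output. This assumes metric tons (so $4 \times 10^{9}$ kg per second) and a rounded speed of light of $3.0 \times 10^{8}$ m/s:

$$
P = \frac{\Delta m}{\Delta t}\,c^{2} \approx \left(4 \times 10^{9}\ \mathrm{kg/s}\right)\left(3.0 \times 10^{8}\ \mathrm{m/s}\right)^{2} = 3.6 \times 10^{26}\ \mathrm{W}
$$

That is in line with the commonly cited solar luminosity of about $3.8 \times 10^{26}$ W, so the "over 4 million tons" figure is consistent.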

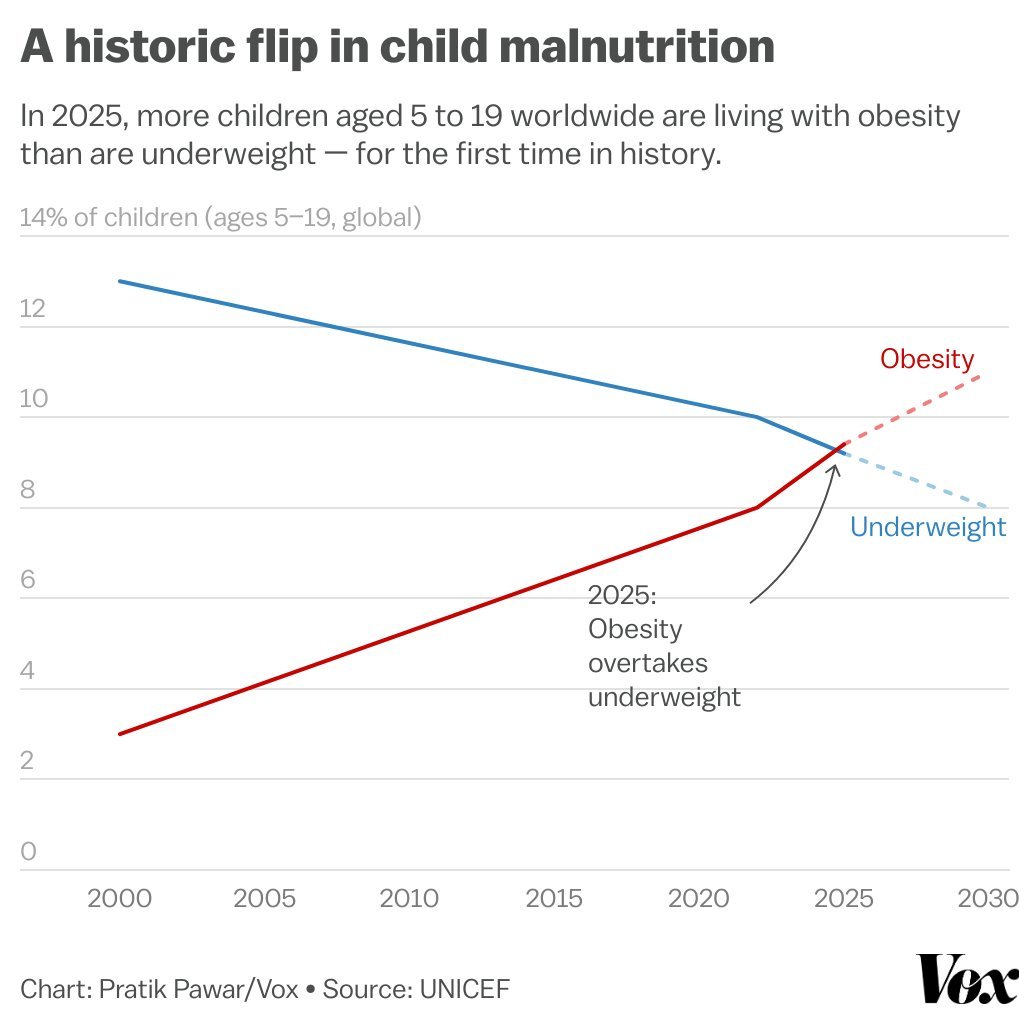

Humanity has so thoroughly banished hunger that, as of this year, there are more obese kids than there are underweight kids. https://t.co/pfIgXaeFtl

Make sure to use the Upscale option to convert your Grok Imagine videos into an HDR version. Your videos will look sharper, HD and far more detailed. https://t.co/jwsn6aGTjy

https://t.co/MR3kzzX7EI