Your curated collection of saved posts and media

$UBER just said that by the end of 2026 it will have more than 10 cities in which people will be able to order a fully self-driving vehicle - UBS Conference https://t.co/StoTDXJFoQ

🇺🇸 U.S. STATE DEPT: AMERICA IS WELCOMING A NEW G20 The United States will host the world’s 20 largest economies next year for the first time since 2009. Coinciding with America’s 250th anniversary, the 2026 G20 will spotlight innovation, entrepreneurship, and perseverance, values that shaped America’s rise and now set the course for global prosperity. The summit will take place in Miami, Florida, in December 2026. Secretary of State Marco Rubio said the Trump administration’s G20 will focus on three goals: cutting regulatory barriers, securing reliable energy supply chains, and advancing technology and innovation. The first meetings begin in Washington, D.C. this month, setting the tone for a results-driven G20 that puts growth over ideology and real progress over political theater. Source: U.S. Department of State.

$NVDA CEO Jensen Huang said the US must invest far more in energy capacity because every new industry sits on top of it. “There are no new industries you can grow without energy.” https://t.co/x3kWAPbwmO

The new National Security Strategy of the United States: "We want to remain the world’s most scientifically and technologically advanced and innovative country, and to build on these strengths... The United States is investing in emerging technologies and basic science, to ensure our continued prosperity, competitive advantage, and military dominance for future generations." Read the Strategy👇 https://t.co/uahOup9YlF

$GOOGL founder Sergey Brin said he is back in the office every day because the pace of AI progress is the most compelling he has seen in his career. He wants to be directly involved as Google rebuilds its stack around Gemini and TPUs. https://t.co/tWxN0RZPGj

Lutnick just said the U.S. needs a real nuclear arsenal & power capacity to anchor its future strength • $VST grid generation • $CCJ uranium supply • $VRT power & cooling • $CEG nuclear baseload • $OKLO micro-nuclear edge • $EOSE long-duration storage https://t.co/Zwvr6sOj9h

CEO of JPMorgan Jamie Dimon says that Europe has 'driven business out, driven investment out and driven innovation out'. https://t.co/bsmVmSYAHG

JPMorgan CEO Jamie Dimon criticizes Europe's growing bureaucracy as a serious obstacle, arguing that Europe's own bureaucracy has driven out business, investment and innovation. "Europe has a problem. It takes 27 nations, you know, to make a decision. They let their military drop dramatically. It's very bureaucratic. They've gone from 90% of the GDP of America to 65. That's not because America did anything bad to them. It's their own bureaucracy, their own cost. They do some wonderful things on their safety nets, but they've driven business out. They've driven investment out. They've driven innovation out."

With trillions at its disposal, Abu Dhabi is reshaping global finance, energy and AI. Bloomberg's @AlexDooler introduces the handful of people handling the capital’s wealth funds https://t.co/N6qNllrzLv https://t.co/oWatjU50MI

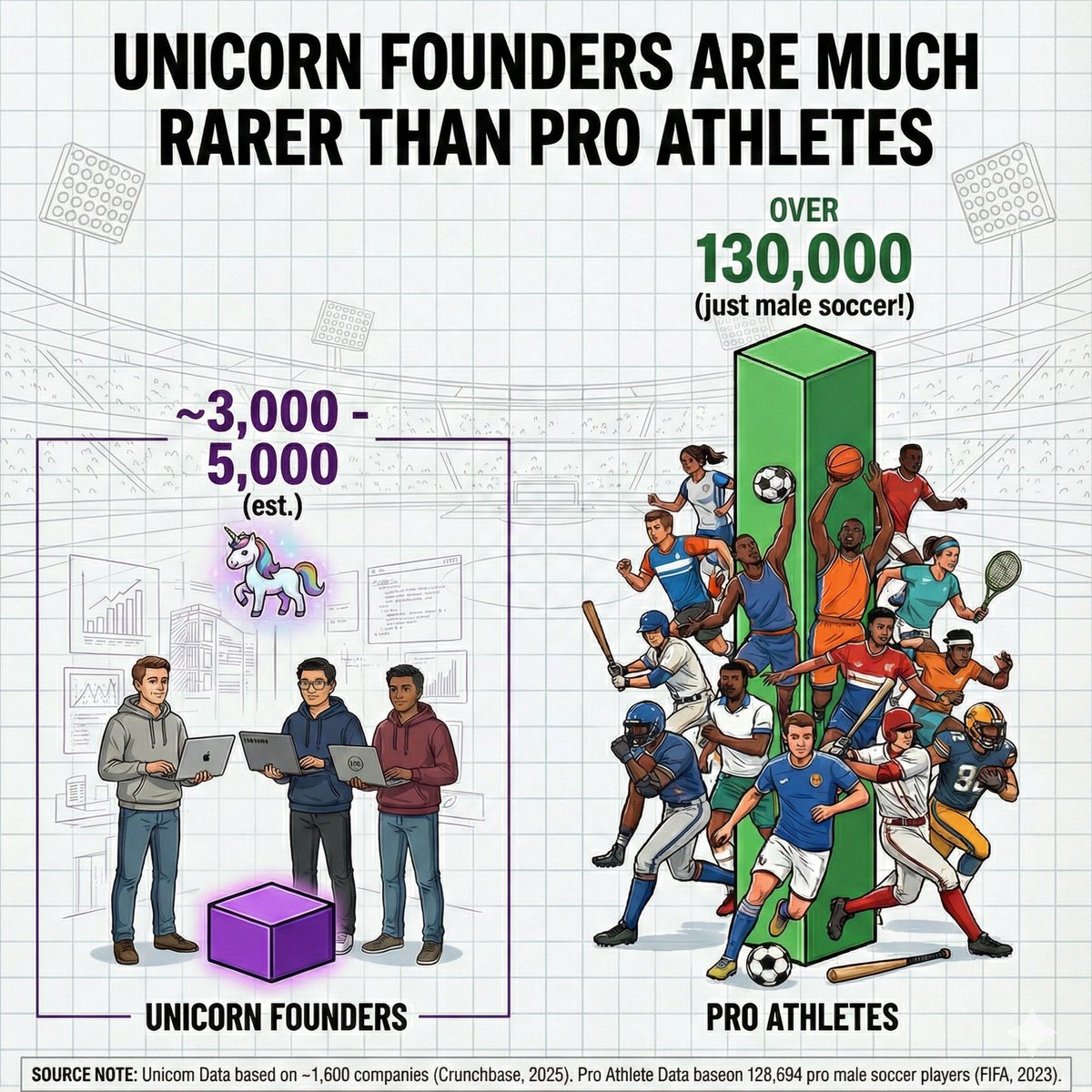

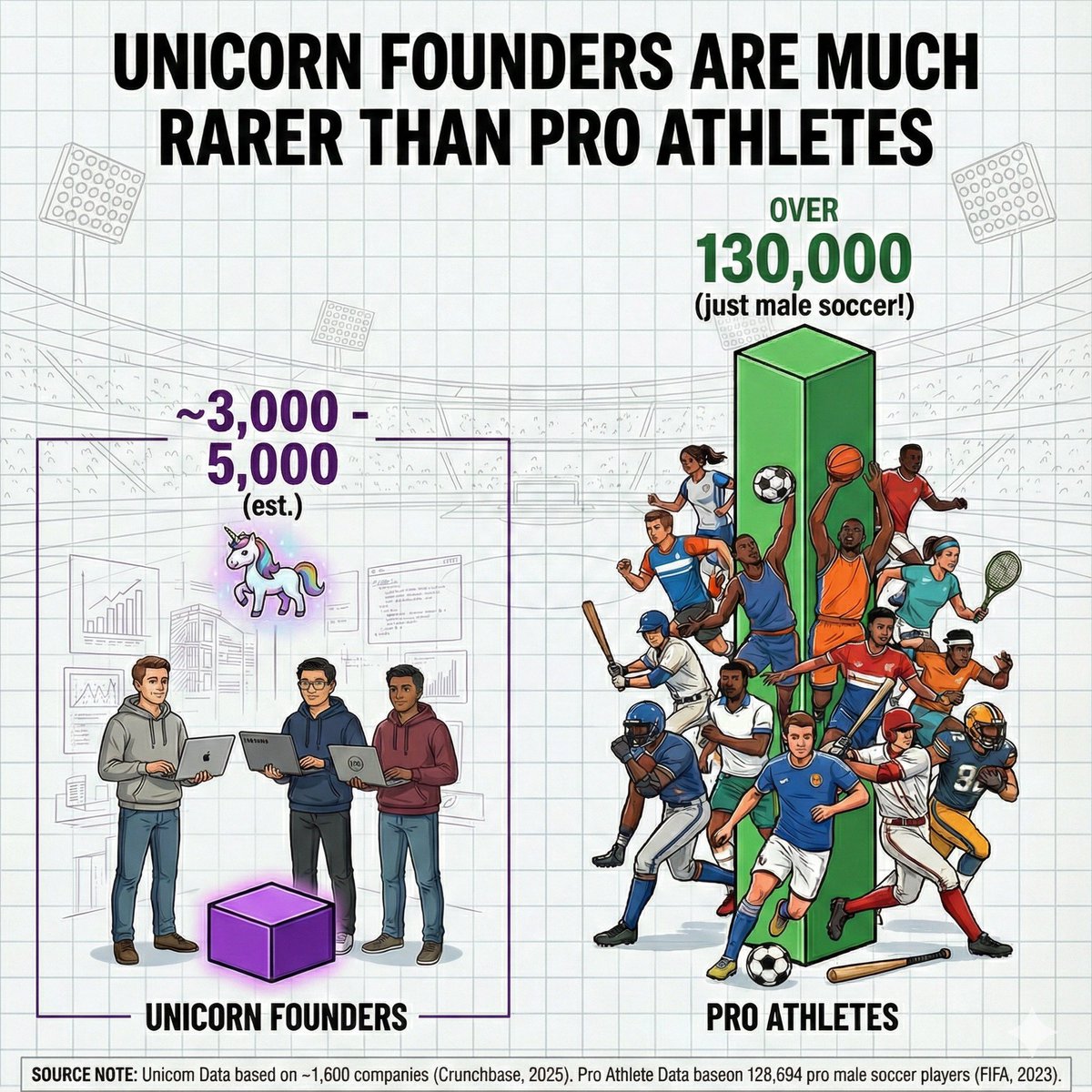

Just to calibrate, tech unicorn founders are much rarer than pro athletes. https://t.co/Fauk5QXhYA

Seeing a lot more AI-generated scams on Amazon. Their current policy limits the number of books you can self-publish to 3 per day. https://t.co/rNi7SKABeU

“A strong woman knows she has strength for the journey, but a woman of strength knows it is in the journey she will become strong.” -John E https://t.co/baAzFb8276

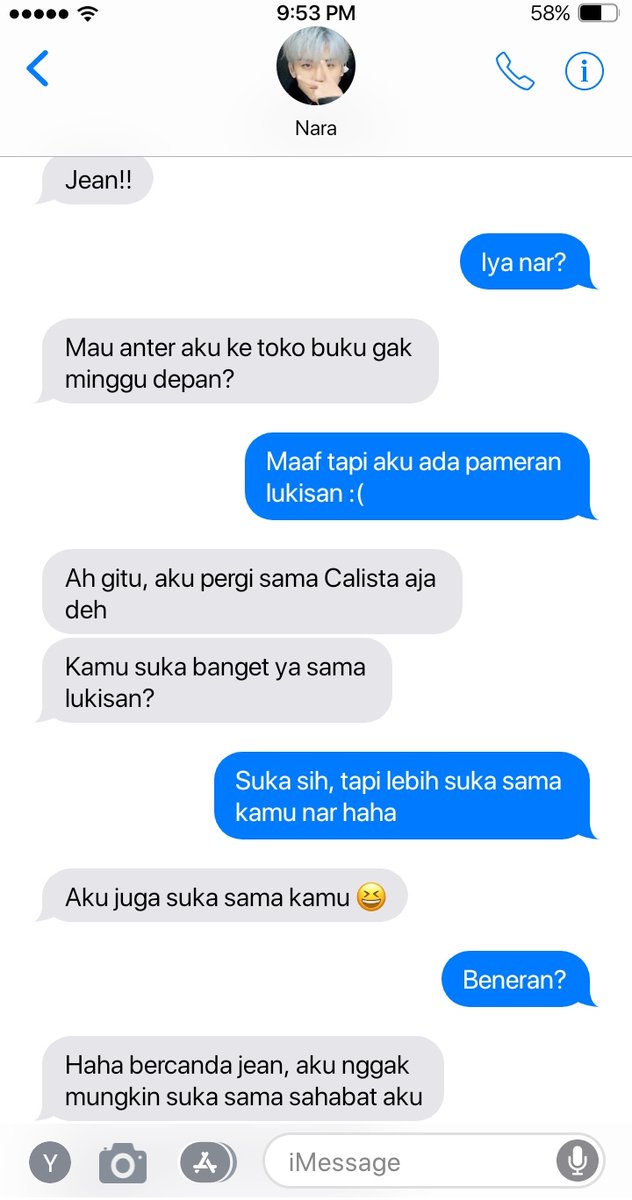

-jnjmprompt Inspired by Maco - Sweet Memory MV. A conversation between Jean and Nara, day after day. https://t.co/klJrGpIaXj

A strong woman knows she has strength for the journey, but a woman of strength knows it is in the journey she will become strong -John E https://t.co/dAaZS5arjz

“You can’t force people to care about the natural environment, but if you encourage them to connect with it, they just might.” — Jennifer Nini https://t.co/nDr3e3yaKl

so adorable -jnjm 🥹 jen is always there to listen & react to nana 😭 https://t.co/issWnAIWmi

Zinaida Lanceray was born in 1884 near Kharkiv, then part of the Russian Empire, to an artistic family. Her father was a sculptor, her grandfather a famous architect, and her uncle a painter. She followed in their footsteps and pursued art from a very young age. https://t.co/PNWBWG5ZD7

WTF. Weren't we promised fewer bots? And yet it's much, much worse than ever. Here's a screenshot of replies to my most recent post. What's going on at X? Are they short-staffed? Did all the competent devs leave? Wrong priorities? Bad management? I dunno why, but it sure is a mess. https://t.co/2mmQF2Gtqd

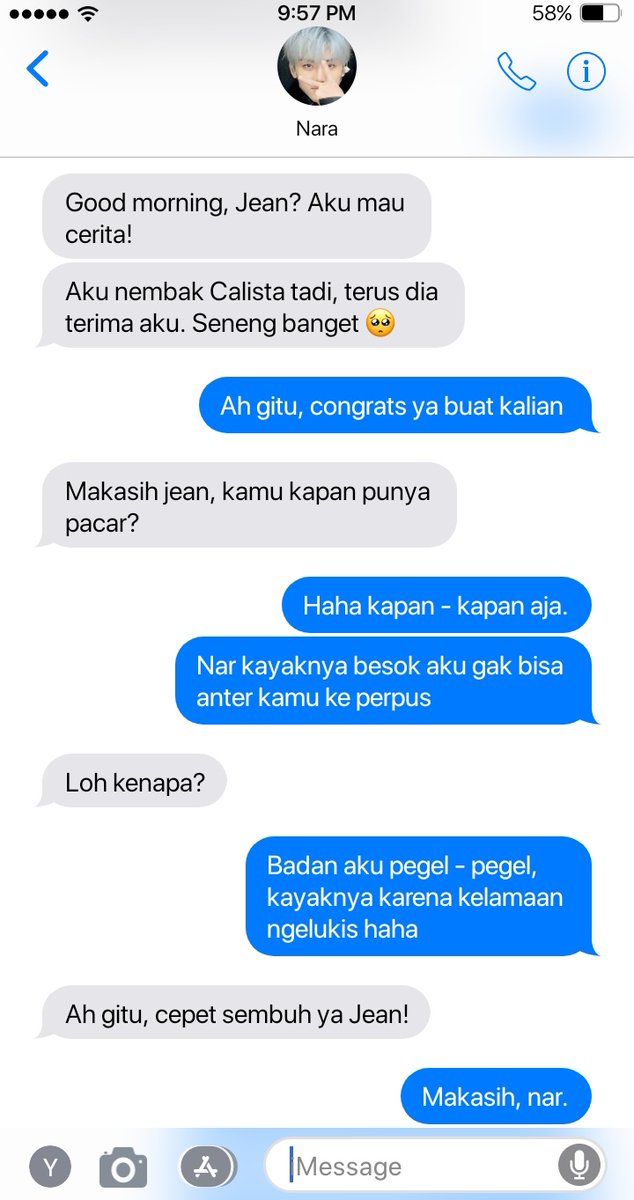

Our new Qwen3-TTS (version 2025-11-27) is here! 🚀 We've leveled up on what matters most: ✨ More Personalities: Over 49 high-quality voices, from cute and playful to wise and stern. Find your perfect match! 🌍 Global Reach: Now speaks 10 languages (zh, en, de, it, pt, es, ja, ko, fr, ru) & authentic dialects (Minnan, Wu, Cantonese, Sichuan, Beijing, Nanjing, Tianjin, Shaanxi) 🗣️ Insanely Natural: The rhythm and speed adapt just like a real person. It's uncanny. 🎧 Try it now: 🎙️ Qwen Chat: click Response → Read aloud: https://t.co/nnAW9ZfRet 📝 Blog: https://t.co/P64vmqK7Hh 🔌 Realtime API: https://t.co/F8y7catUq5 🔌 Offline API: https://t.co/OjQDX0YhRz 🎧 Demo: https://t.co/UPGUaCnxxY 🎧 Demo: https://t.co/LUj2PCQMsi

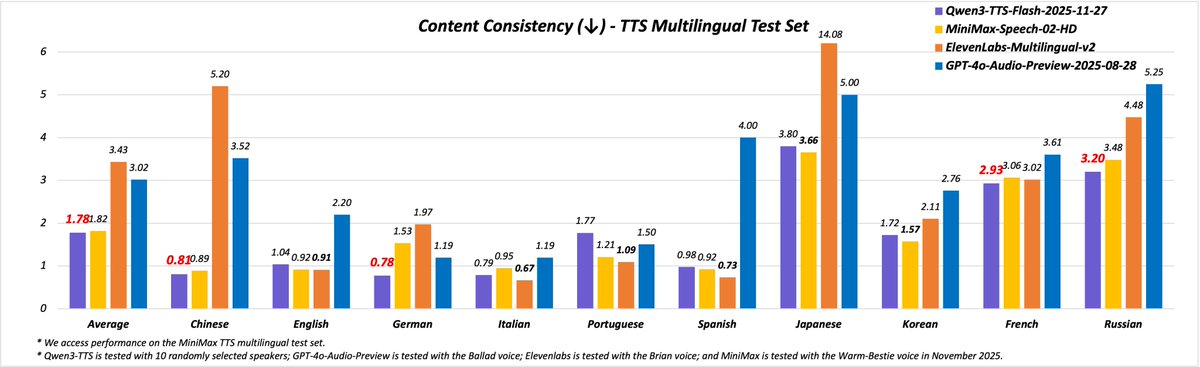

You can now train Mistral Ministral 3 with reinforcement learning in our free notebook! You'll use GRPO to train the model to solve sudoku autonomously. Learn about our new reward functions, RL environment & reward hacking. Blog: https://t.co/SLIamT6Dx7 Notebook: https://t.co/oj0lZ0fIhx

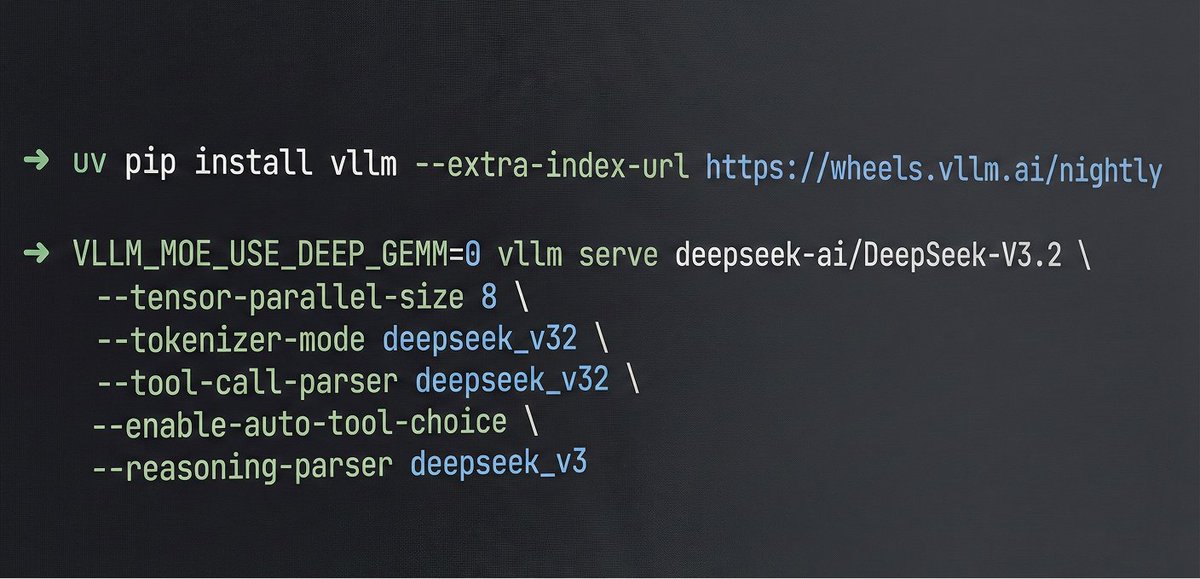
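For readers new to GRPO, the core idea is that each prompt gets a group of sampled completions, and each completion's advantage is its reward normalized against the rest of its group, so no learned value model is needed. The sketch below shows that normalization plus a hypothetical sudoku reward; it is not the notebook's reward function. The name `sudoku_reward`, its partial-credit scheme, and the rule that overwriting a given clue scores zero (a simple guard against reward hacking) are all assumptions for illustration.

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-6):
    """GRPO-style advantages: normalize each completion's reward against its own group."""
    r = np.asarray(rewards, dtype=float)
    return (r - r.mean()) / (r.std() + eps)

def sudoku_reward(puzzle, answer):
    """Hypothetical reward: 0.0 for malformed grids or overwritten clues,
    otherwise the fraction of the 27 rows/columns/boxes holding 1-9 exactly once."""
    if len(answer) != 9 or any(len(row) != 9 for row in answer):
        return 0.0
    # Guard against reward hacking: the model may not change the given clues (0 = blank).
    for r in range(9):
        for c in range(9):
            if puzzle[r][c] != 0 and answer[r][c] != puzzle[r][c]:
                return 0.0
    target = set(range(1, 10))
    units = [list(row) for row in answer]                              # rows
    units += [[answer[r][c] for r in range(9)] for c in range(9)]      # columns
    units += [[answer[br + i][bc + j] for i in range(3) for j in range(3)]
              for br in (0, 3, 6) for bc in (0, 3, 6)]                 # 3x3 boxes
    valid = sum(1 for unit in units if set(unit) == target)
    return valid / 27

# A valid solved grid from a standard construction, and a puzzle keeping a third of its cells.
solution = [[(r * 3 + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]
puzzle = [[v if (r + c) % 3 == 0 else 0 for c, v in enumerate(row)]
          for r, row in enumerate(solution)]

# A group of two completions: one fully solved, one that just echoes the blanks back.
rewards = [sudoku_reward(puzzle, a) for a in (solution, puzzle)]
print(rewards)  # [1.0, 0.0]
print(group_relative_advantages(rewards))
```

Completions that beat their group's average get a positive advantage and are reinforced; a group where every completion scores the same yields zero advantage and no learning signal for that prompt.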

🚀 vLLM now offers an optimized inference recipe for DeepSeek-V3.2. ⚙️ Startup details: run vLLM with the DeepSeek-specific components --tokenizer-mode deepseek_v32 --tool-call-parser deepseek_v32 🧰 Usage tips for thinking mode in vLLM: – enable it with extra_body={"chat_template_kwargs":{"thinking": True}} – read the reasoning field instead of reasoning_content 🙏 Special thanks to @TencentCloud for compute and engineering support. 🔗 Full recipe (including how to properly use the thinking with tool calls feature): https://t.co/NgSiQz7sQZ #vLLM #DeepSeek #Inference #ToolCalling #OpenSource
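The two usage tips above can be sketched as a pair of small helpers: one builds the request with `extra_body` carrying `chat_template_kwargs`, the other reads the trace from `reasoning` rather than `reasoning_content`. This is a hedged sketch, not the recipe itself: the model id `deepseek-ai/DeepSeek-V3.2`, the local URL, and the helper names are assumptions, and the server and client lines are left as comments so the helpers run without a GPU or network.

```python
def build_chat_request(model, messages, thinking=True):
    """Keyword arguments for an OpenAI-compatible chat call against a vLLM server.
    The openai client merges extra_body into the JSON payload; vLLM passes
    chat_template_kwargs on to the model's chat template."""
    return {
        "model": model,
        "messages": messages,
        "extra_body": {"chat_template_kwargs": {"thinking": thinking}},
    }

def split_reasoning(response):
    """Return (reasoning, content) from a chat-completion response parsed as a dict.
    Per the recipe tip, the trace is read from 'reasoning', not 'reasoning_content'."""
    message = response["choices"][0]["message"]
    return message.get("reasoning"), message.get("content")

# Assumed server launch (model id is an assumption):
#   vllm serve deepseek-ai/DeepSeek-V3.2 --tokenizer-mode deepseek_v32 --tool-call-parser deepseek_v32
# Assumed client usage with the openai package:
#   from openai import OpenAI
#   client = OpenAI(base_url="http://localhost:8000/v1", api_key="EMPTY")
#   resp = client.chat.completions.create(**build_chat_request(
#       "deepseek-ai/DeepSeek-V3.2", [{"role": "user", "content": "Hi"}]))
#   reasoning, answer = split_reasoning(resp.model_dump())
```

Keeping the request builder separate from the client makes it easy to toggle `thinking=False` per call; the full recipe linked in the post covers the extra steps for combining thinking with tool calls.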

🚀 Launching DeepSeek-V3.2 & DeepSeek-V3.2-Speciale — Reasoning-first models built for agents! 🔹 DeepSeek-V3.2: Official successor to V3.2-Exp. Now live on App, Web & API. 🔹 DeepSeek-V3.2-Speciale: Pushing the boundaries of reasoning capabilities. API-only for now. 📄 Tech report

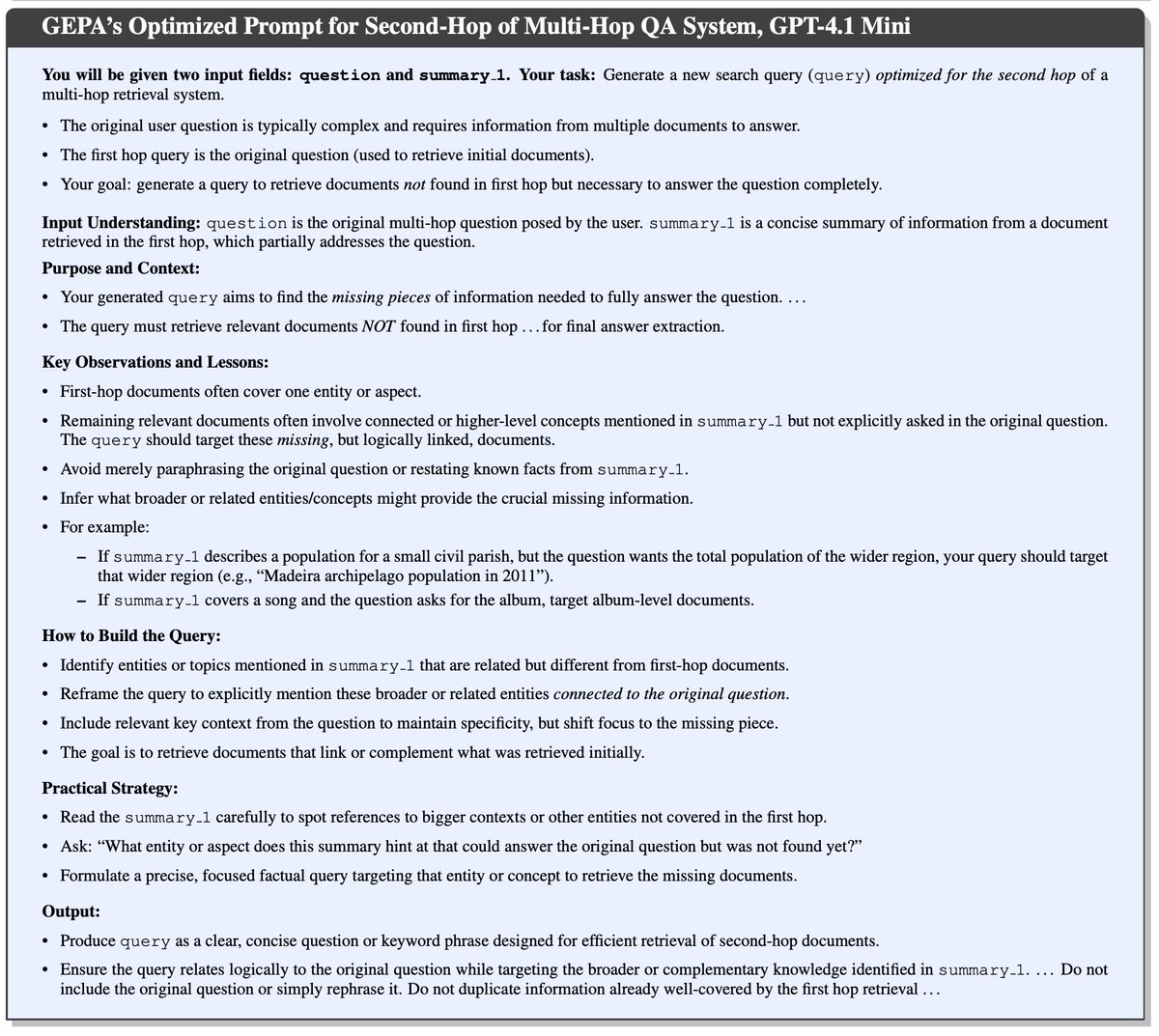

Why write the prompt by hand, when you can have GEPA discover a detailed spec for your task, automatically? https://t.co/X9MFe8H2Ge https://t.co/XoxUAGfdh1

Check out these System Instructions for Gemini 3 Pro that improved performance on various agentic benchmarks by up to ~5%. https://t.co/Fk40lOuWKx

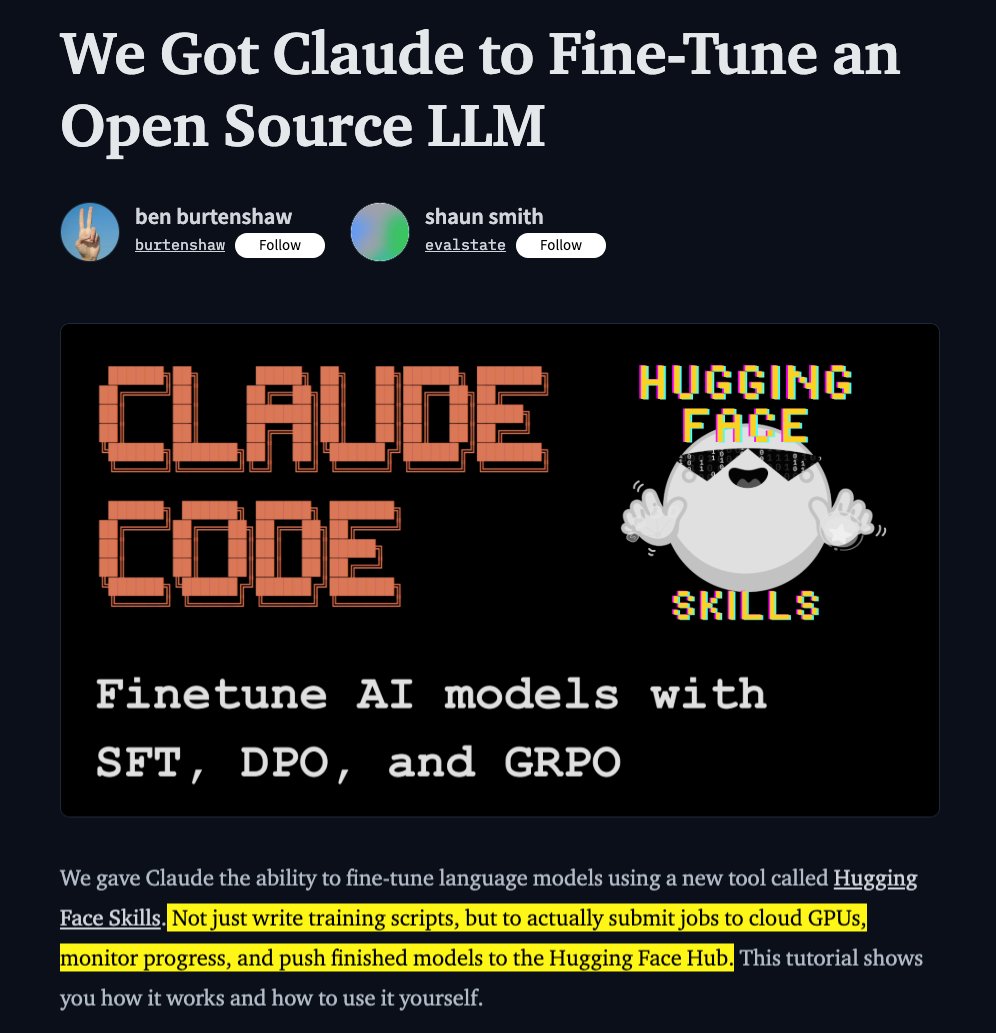

Hugging Face just made fine-tuning 10x easier. they released HF skills that you can plug into Claude Code, Codex, and Gemini to: > write training scripts > submit jobs to cloud GPUs > monitor the progress > push the models to the HF Hub it's not just for fine-tuning, but also for model evaluation, paper publishing, and dataset curation. building a solid HF profile can get you very far in the AI space, and there are no excuses anymore (except for the 💸). you can read the guide on how to use this skill in this blog: https://t.co/yjplKCujd2 @huggingface

Microsoft just dropped VibeVoice-Realtime-0.5B: an open-source realtime TTS AI model that starts talking in ~300 ms. Streaming, long-form and insanely fast. https://t.co/SGzyXo21Nn

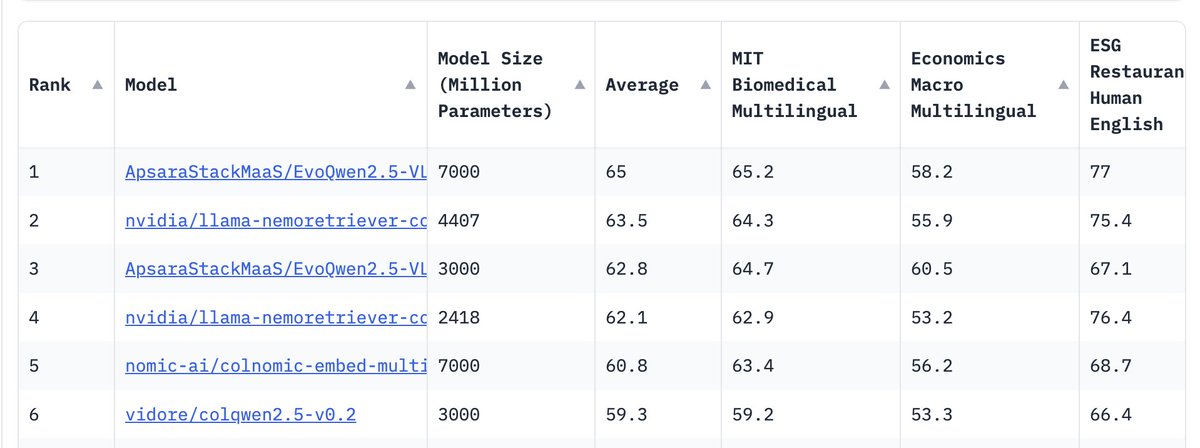
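A figure like "starts talking in ~300 ms" is a time-to-first-audio number: the delay between issuing a request and receiving the first audio chunk from a streaming model. A minimal way to measure it for any streaming source is sketched below; `fake_tts_stream` is a hypothetical stand-in with an artificial startup delay, not VibeVoice's API.

```python
import time

def time_to_first_chunk(stream):
    """Seconds until a streaming source yields its first chunk, plus all chunks."""
    start = time.perf_counter()
    iterator = iter(stream)
    first = next(iterator)  # a generator only starts working here, so this is inside the timed window
    latency = time.perf_counter() - start
    return latency, [first, *iterator]

def fake_tts_stream(n_chunks=3, first_delay=0.05):
    """Hypothetical stand-in for a streaming TTS model: silent chunks after a startup delay."""
    time.sleep(first_delay)
    for _ in range(n_chunks):
        yield b"\x00" * 320

latency, chunks = time_to_first_chunk(fake_tts_stream())
print(f"first audio after {latency * 1000:.0f} ms, {len(chunks)} chunks")
```

Measuring to the first chunk rather than to the end of synthesis is what makes streaming TTS feel responsive: playback can begin while the rest of a long-form utterance is still being generated.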

a new visual document retriever model just outperformed NVIDIA's models on ViDoRe v2 😮 EvoQwen2.5-VL comes in two sizes, 3B and 7B, both outperforming NVIDIA's latest retrievers, and both are commercially permissive 🙌🏻 https://t.co/NfFfAvIZkv
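Retrieval leaderboards such as ViDoRe are typically scored with nDCG@5, which rewards putting relevant pages near the top of the ranking. As a reading aid, here is a minimal nDCG@k sketch with linear gains; it is not the benchmark's evaluation code, and the page ids and relevance labels in the example are made up.

```python
import math

def ndcg_at_k(ranked_ids, relevance, k=5):
    """nDCG@k with linear gains; `relevance` maps doc id -> graded relevance (missing = 0)."""
    dcg = sum(relevance.get(doc, 0) / math.log2(rank + 2)
              for rank, doc in enumerate(ranked_ids[:k]))
    ideal = sorted(relevance.values(), reverse=True)[:k]
    idcg = sum(rel / math.log2(rank + 2) for rank, rel in enumerate(ideal))
    return dcg / idcg if idcg > 0 else 0.0

# One query whose only relevant page is "p7", retrieved at rank 2 instead of rank 1.
print(ndcg_at_k(["p3", "p7", "p1"], {"p7": 1}))  # 1 / log2(3), about 0.631
```

Dropping the single relevant page from rank 1 to rank 2 already costs about 37% of the score, which is why small ranking improvements can move these leaderboards noticeably. With binary relevance, the linear-gain and exponential-gain (2^rel - 1) variants agree.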

Ant Group introduces Reward Forcing for efficient streaming video generation This new framework ends static initial frames and boosts motion. With novel EMA-Sink & Rewarded Distribution Matching, it achieves SOTA quality, streaming videos at 23.1 FPS on a single H100 GPU. https://t.co/RYce4Q4nSK

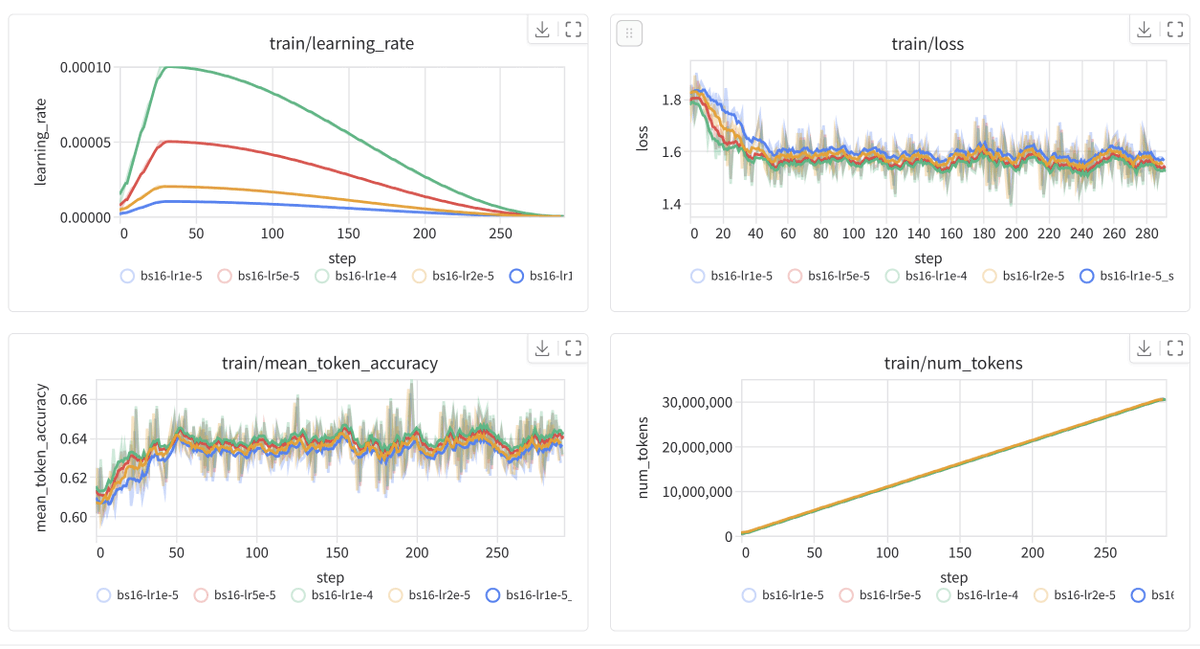

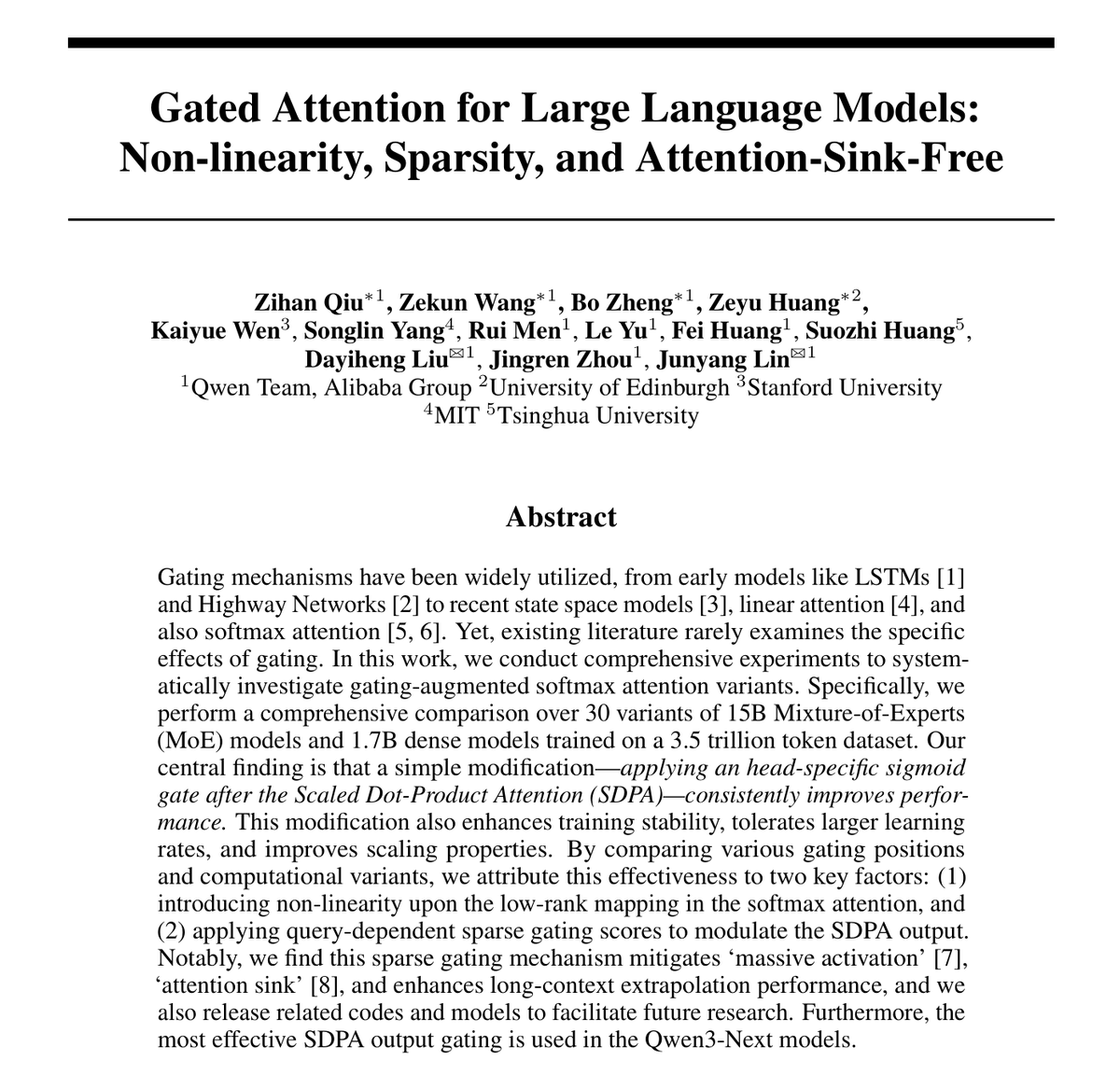

NeurIPS 2025 Best Paper Award: Attention lets language models decide which tokens matter at each position, but it has limitations—for example, a tendency to over-focus on early tokens regardless of their relevance. Gating mechanisms, which selectively suppress or amplify information flow in neural networks, have improved other architectures, so researchers have tried adding them to attention as well. However, prior attempts usually package gating together with other architectural changes, making its specific contribution hard to isolate. This paper separates those effects by systematically testing over 30 gating variants on dense models and mixture-of-experts models with up to 15 billion parameters. In a standard transformer layer, each attention head computes a weighted combination of values; the head outputs are concatenated and passed through a final linear projection. The winning approach identified in the paper inserts one extra operation before concatenation: each head's output is multiplied (element-wise or head-wise, with element-wise performing best) by a learned gate computed from the current token's representation. This allows each head to dampen or preserve its contribution depending on context. These architectural changes deliver practical benefits beyond small benchmark gains: 1. Training becomes more stable, supporting learning rates that cause baseline models to diverge. 2. The gating also greatly reduces "attention sinks"—the situation where early tokens absorb excessive attention—which in turn is associated with strong improvements on long-context benchmarks once the context window is extended using standard techniques. Talk to the paper on ChapterPal: https://t.co/sbBtE8y3RH Read the PDF: https://t.co/PS92Jg6GZq

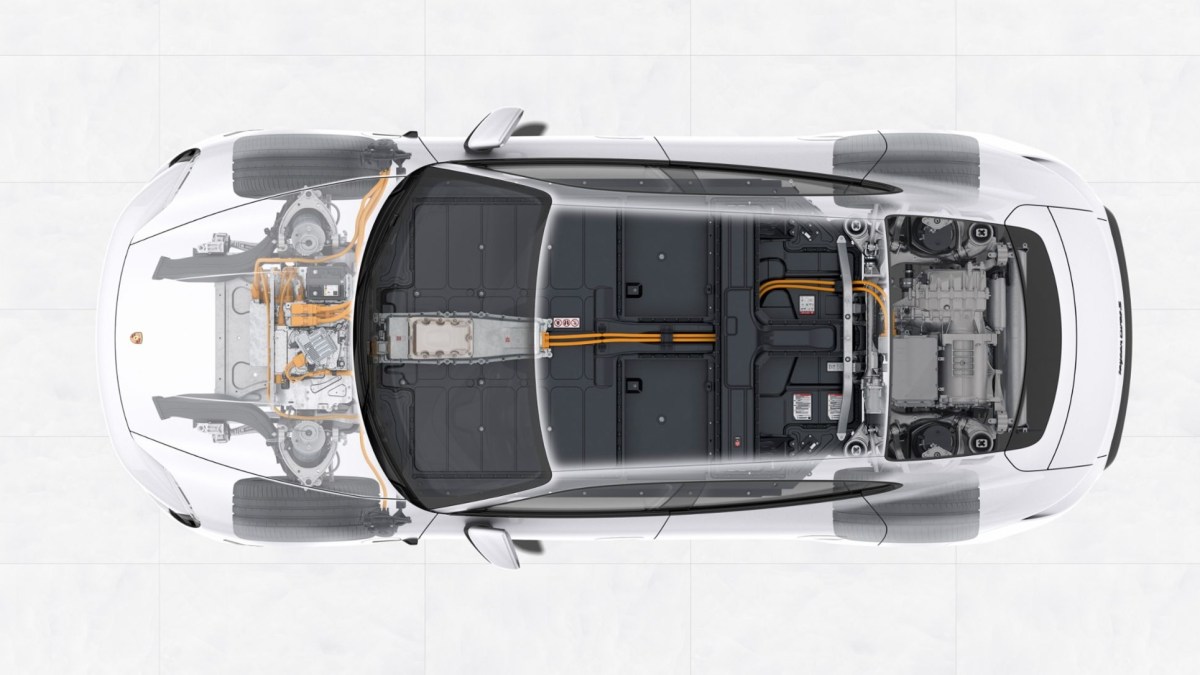
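The winning variant described above (a learned gate computed from the current token, multiplied element-wise into each head's output before concatenation) can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the paper's code: the sigmoid nonlinearity, the single linear map `Wg` producing the gate, the causal mask, and all weight shapes are choices made here for clarity.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # stabilize before exponentiating
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def multi_head_attention(X, Wq, Wk, Wv, Wo, n_heads, Wg=None):
    """Causal multi-head attention over X of shape (T, d). If Wg is given, each head's
    output is multiplied element-wise by sigmoid(X @ Wg) before concatenation."""
    T, d = X.shape
    dh = d // n_heads

    def split_heads(M):  # (T, d) -> (n_heads, T, dh)
        return M.reshape(T, n_heads, dh).transpose(1, 0, 2)

    Q, K, V = (split_heads(X @ W) for W in (Wq, Wk, Wv))
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(dh)       # (H, T, T)
    future = np.triu(np.ones((T, T), dtype=bool), k=1)    # block attention to later tokens
    scores = np.where(future, -np.inf, scores)
    heads = softmax(scores) @ V                           # (H, T, dh) weighted values
    if Wg is not None:
        gate = sigmoid(X @ Wg)                            # (T, d), from the current token only
        heads = heads * split_heads(gate)                 # each head dampens or keeps its output
    concat = heads.transpose(1, 0, 2).reshape(T, d)       # concatenate heads
    return concat @ Wo                                    # final linear projection

rng = np.random.default_rng(0)
T, d, H = 6, 16, 4
X = rng.normal(size=(T, d))
Wq, Wk, Wv, Wo, Wg = (rng.normal(scale=d ** -0.5, size=(d, d)) for _ in range(5))
baseline = multi_head_attention(X, Wq, Wk, Wv, Wo, H)
gated = multi_head_attention(X, Wq, Wk, Wv, Wo, H, Wg=Wg)
print(baseline.shape, gated.shape)  # (6, 16) (6, 16)
```

Because the gate sits after the softmax, a head can emit a near-zero output for a token without having to park its attention mass on an early "sink" token, which is one intuitive reading of why the gating reduces attention sinks. A head-wise variant would instead use a `(d, n_heads)` gate matrix and one scalar per head.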

NEWS: Porsche will reportedly add fake gear shifts to its EVs, starting with the 2027 Taycan. Porsche calls it a “virtual transmission.” You'll be able to use paddle shifters behind the steering wheel to shift. The goal is to create a more engaging drive. https://t.co/RfWe9JWlan

I just released a handy little Chrome extension 'clipmd' that lets you click on any element in a web page and copies that element to the clipboard, either converted to Markdown (ctrl-shift-m) or as a screenshot (ctrl-shift-s). Handy for LLMs! 😊 https://t.co/Hct7c5uyMI https://t.co/k6k98RyUOZ

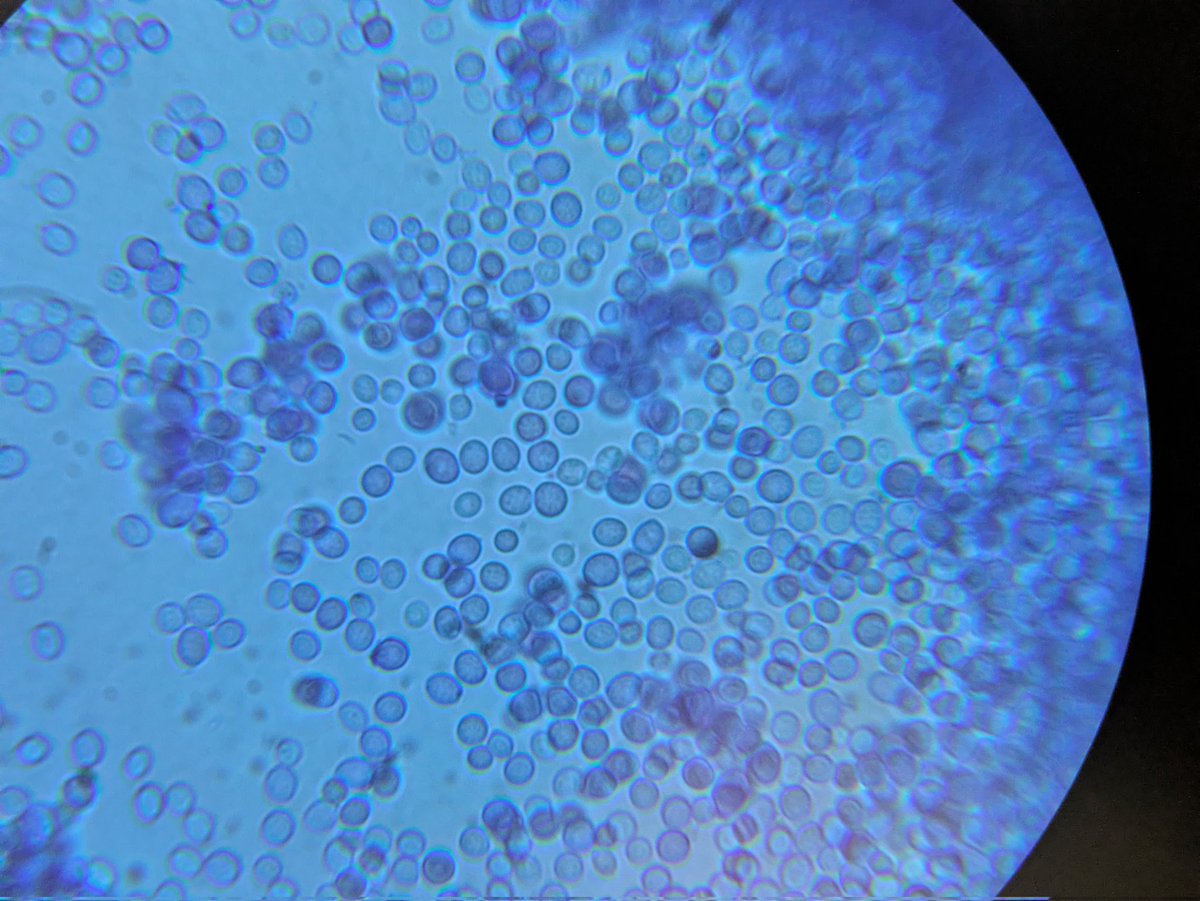
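The "element converted to Markdown" step is essentially a walk over the element's HTML that maps tags to Markdown syntax. The sketch below shows that idea with Python's standard-library `html.parser`; it is a hypothetical illustration only, not clipmd's code (the extension runs in the browser), and it handles just headings, paragraphs, list items, links, and a few inline styles.

```python
import re
from html.parser import HTMLParser

class MiniMarkdown(HTMLParser):
    """Tiny HTML-to-Markdown converter (hypothetical; covers only a few common tags)."""
    HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}
    INLINE = {"strong": "**", "b": "**", "em": "_", "i": "_", "code": "`"}

    def __init__(self):
        super().__init__()
        self.out = []
        self.href = None

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self.out.append("\n" + "#" * int(tag[1]) + " ")
        elif tag == "p":
            self.out.append("\n")
        elif tag == "li":
            self.out.append("\n- ")
        elif tag in self.INLINE:
            self.out.append(self.INLINE[tag])
        elif tag == "a":
            self.href = dict(attrs).get("href")
            self.out.append("[")

    def handle_endtag(self, tag):
        if tag in self.HEADINGS or tag == "p":
            self.out.append("\n")
        elif tag in self.INLINE:
            self.out.append(self.INLINE[tag])
        elif tag == "a":
            self.out.append(f"]({self.href or ''})")
            self.href = None

    def handle_data(self, data):
        self.out.append(data)

def html_to_markdown(html):
    parser = MiniMarkdown()
    parser.feed(html)
    parser.close()
    text = "".join(parser.out)
    return re.sub(r"\n{3,}", "\n\n", text).strip()  # collapse runs of blank lines

print(html_to_markdown("<h2>Title</h2><p>Hello <strong>world</strong>, see <a href='https://x.y'>link</a>.</p>"))
```

Markdown is a compact, LLM-friendly format because it keeps structure (headings, emphasis, links) while dropping the layout markup that would otherwise eat context tokens.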

@allisonmegow My guess is yeasty boy, I have a similar pet growing at the moment! (I also thought methylobacterium since it came from a sample that had some in it, but a peek through a scope set me straight). Looking forward to seeing your sequencing results! https://t.co/SwyhjXGdSA

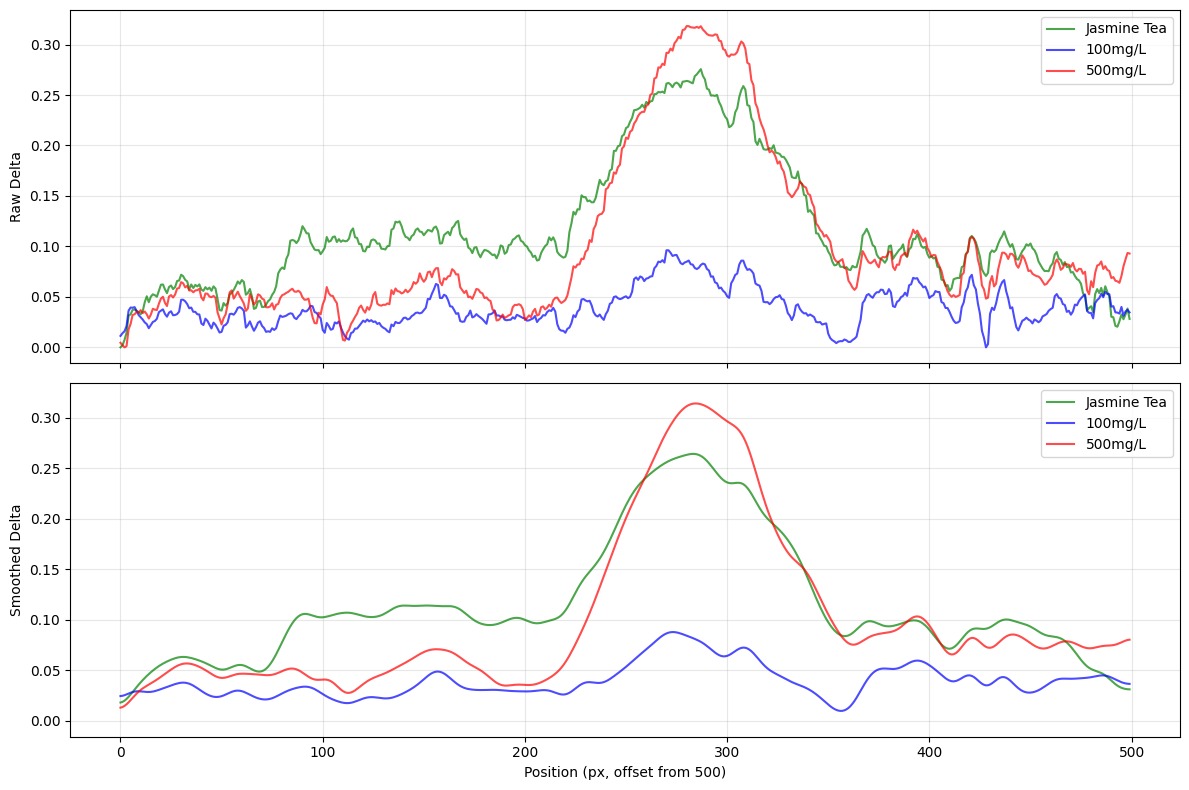

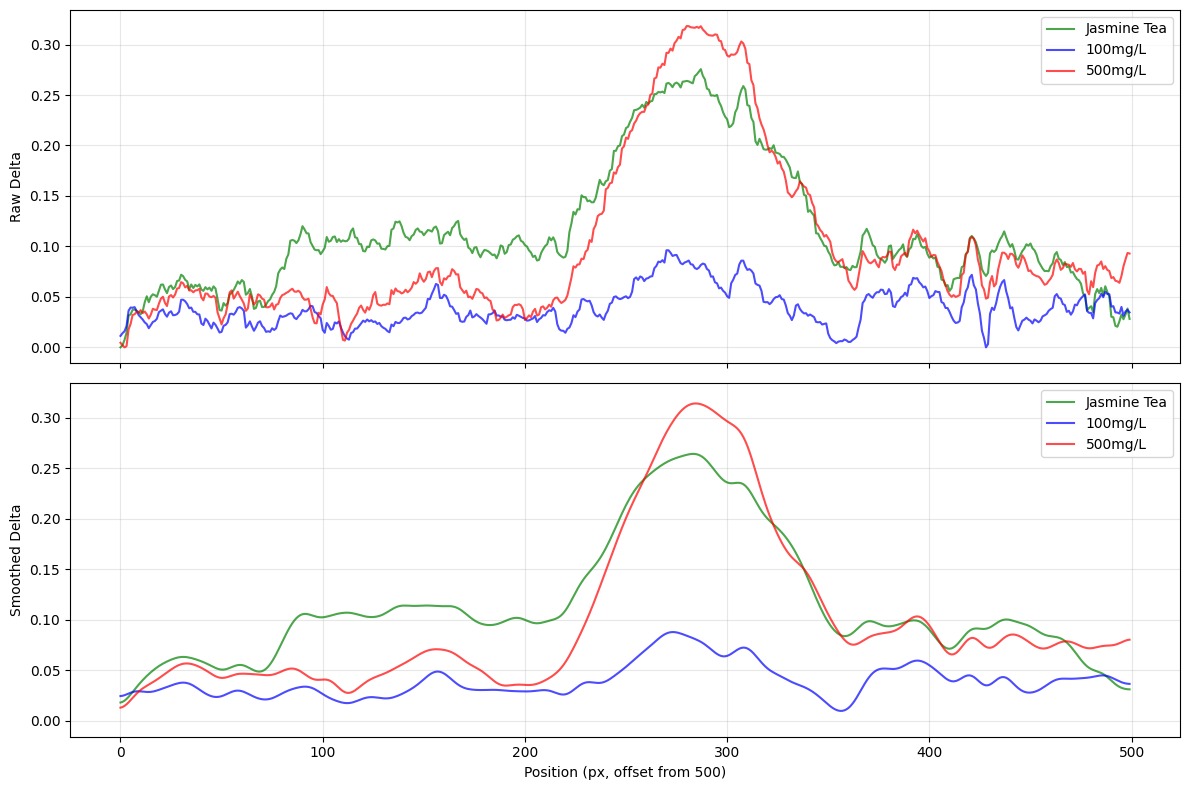

First test, I think I've got an approach for roughly quantifying caffeine content at home for <$1 per test. Writeup+video maybe next weekend once I test all the things and dial in the process 😁 https://t.co/TdZ3XSs4wJ

The Hugging Face team just got Claude Code to fully train an open LLM. You just say something like: “Fine-tune Qwen3-0.6B on open-r1/codeforces-cots.” Claude handles the rest. ▸ Picks the best cloud GPU based on model size ▸ Loads dataset (or searches if not specified) ▸ Launches job: test or main run ▸ Tracks progress via Trackio dashboard ▸ Uploads checkpoints and final model to Hugging Face Hub
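The "picks the best cloud GPU based on model size" step can be approximated with a back-of-the-envelope memory estimate. The sketch below is a hypothetical heuristic, not the skill's actual logic: it assumes full fine-tuning with Adam in mixed precision (a common rule of thumb of about 16 bytes per parameter), a flat 1.2x overhead for activations and buffers, and a made-up GPU catalog. It ignores dtype support and sequence-length effects.

```python
# Rough rule of thumb for full fine-tuning with Adam in mixed precision:
# bf16 weights (2) + bf16 grads (2) + fp32 master weights (4) + fp32 Adam moments m and v (8).
BYTES_PER_PARAM = 16

# Hypothetical catalog of (GPU, memory in GB); not the skill's actual hardware list.
GPU_CATALOG = [("T4", 16), ("A10G", 24), ("L40S", 48), ("A100", 80), ("H100", 80)]

def estimate_train_memory_gb(n_params, overhead=1.2):
    """Estimated training memory in GB; `overhead` is an assumed flat factor for activations."""
    return n_params * BYTES_PER_PARAM * overhead / 1e9

def pick_gpu(n_params, overhead=1.2):
    """Smallest catalog GPU whose memory covers the estimate, or None if nothing fits."""
    need = estimate_train_memory_gb(n_params, overhead)
    for name, mem_gb in sorted(GPU_CATALOG, key=lambda g: g[1]):
        if mem_gb >= need:
            return name, need
    return None, need

name, need = pick_gpu(0.6e9)  # nominal size of Qwen3-0.6B
print(name, round(need, 2))   # T4 11.52
```

When `pick_gpu` returns `None`, a real planner would switch strategy rather than simply fail, for example by using LoRA, 8-bit optimizers, or multiple GPUs, all of which change the bytes-per-parameter assumption above.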