@vllm_project

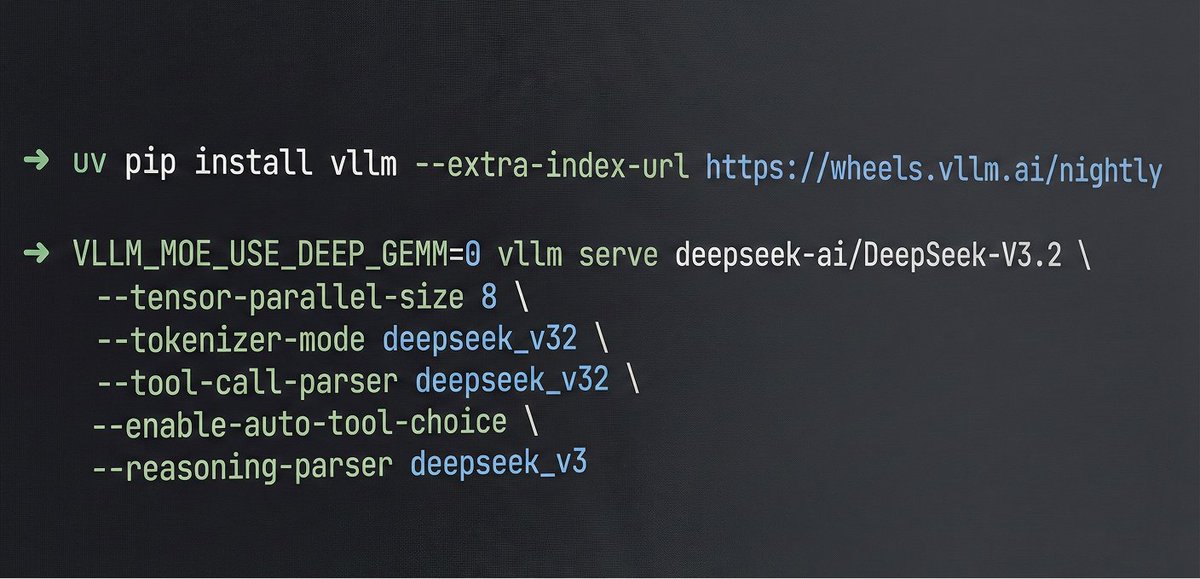

🚀 vLLM now offers an optimized inference recipe for DeepSeek-V3.2.

⚙️ Startup details
Run vLLM with DeepSeek-specific components:
--tokenizer-mode deepseek_v32 \
--tool-call-parser deepseek_v32

🧰 Usage tips
Enable thinking mode in vLLM:
– extra_body={"chat_template_kwargs":{"thinking": True}}
– Use reasoning instead of reasoning_content

🙏 Special thanks to @TencentCloud for compute and engineering support.

🔗 Full recipe (including how to properly use the thinking with tool calls feature): https://t.co/NgSiQz7sQZ

#vLLM #DeepSeek #Inference #ToolCalling #OpenSource
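
For anyone wiring up the usage tips from a client, here is a minimal sketch, not the official recipe. It assumes a vLLM server started with the flags above and reachable through its OpenAI-compatible endpoint. The base URL, the model id `deepseek-ai/DeepSeek-V3.2`, the `"EMPTY"` API key, and the helper names `build_chat_request`, `get_reasoning`, and `ask` are illustrative assumptions; only the `extra_body` payload and the `reasoning` field name come from the post.

```python
# Assumptions (not from the post): server URL, model id, and all helper names.
BASE_URL = "http://localhost:8000/v1"
MODEL = "deepseek-ai/DeepSeek-V3.2"


def build_chat_request(prompt, thinking=True):
    """Build keyword arguments for client.chat.completions.create().

    The extra_body payload is the one given in the post; it is forwarded
    to the server's chat template to switch thinking mode on or off.
    """
    return {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "extra_body": {"chat_template_kwargs": {"thinking": thinking}},
    }


def get_reasoning(message):
    """Read the reasoning text from `reasoning` (not `reasoning_content`).

    Returns None when the field is absent, e.g. with thinking disabled.
    """
    return getattr(message, "reasoning", None)


def ask(prompt):
    """Send one prompt to a running server; returns (reasoning, answer)."""
    # Imported lazily so the helpers above work without the openai package.
    from openai import OpenAI

    client = OpenAI(base_url=BASE_URL, api_key="EMPTY")
    response = client.chat.completions.create(**build_chat_request(prompt))
    message = response.choices[0].message
    return get_reasoning(message), message.content
```

With a server running, `reasoning, answer = ask("What is 17 * 23?")` would return the reasoning and the final answer separately. Thinking combined with tool calls needs extra handling that this sketch does not cover; the full recipe linked above describes it.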