Your curated collection of saved posts and media

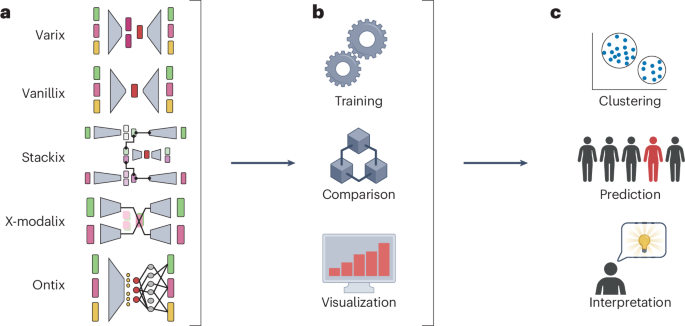

Here's a link to the full paper for more details https://t.co/3eDZ7zNXyD. Thanks to @dhanya_sridhar, @alexandredrouin, and @haldaume3 for one of the best collaboration experiences!!! https://t.co/obICoZNWAa

📚 AI Native Daily Paper Digest - 2025-12-05 🌟

Follow @AINativeF for the latest insights on AI Native. Covering AI research papers from Hugging Face, featured in the image.

💡 Stay updated with the latest research trends and dive deep into the future of AI! 🚀

#AI #HuggingFace #AIPaper #AINative #AINF

Appendix: Today's AI research papers

1. DAComp: Benchmarking Data Agents across the Full Data Intelligence Lifecycle
2. Live Avatar: Streaming Real-time Audio-Driven Avatar Generation with Infinite Length
3. Nex-N1: Agentic Models Trained via a Unified Ecosystem for Large-Scale Environment Construction
4. ARM-Thinker: Reinforcing Multimodal Generative Reward Models with Agentic Tool Use and Visual Reasoning
5. Reward Forcing: Efficient Streaming Video Generation with Rewarded Distribution Matching Distillation
6. Semantics Lead the Way: Harmonizing Semantic and Texture Modeling with Asynchronous Latent Diffusion
7. PaperDebugger: A Plugin-Based Multi-Agent System for In-Editor Academic Writing, Review, and Editing
8. DynamicVerse: A Physically-Aware Multimodal Framework for 4D World Modeling
9. 4DLangVGGT: 4D Language-Visual Geometry Grounded Transformer
10. UltraImage: Rethinking Resolution Extrapolation in Image Diffusion Transformers
11. Model-Based and Sample-Efficient AI-Assisted Math Discovery in Sphere Packing
12. Splannequin: Freezing Monocular Mannequin-Challenge Footage with Dual-Detection Splatting
13. SIMA 2: A Generalist Embodied Agent for Virtual Worlds

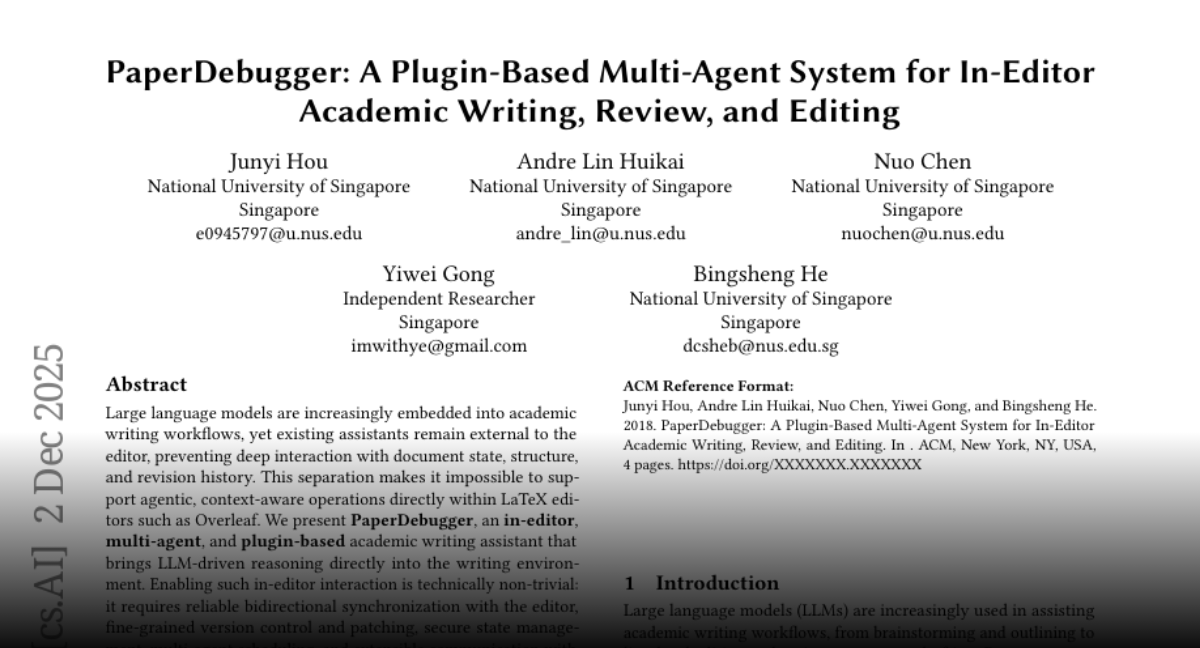

7. PaperDebugger: A Plugin-Based Multi-Agent System for In-Editor Academic Writing, Review, and Editing

- 🔑 Keywords: PaperDebugger, Large Language Models, LaTeX editors, In-editor interaction, Revision pipelines
- 💡 Category: AI Systems and Tools
- 🌟 Research Objective: To develop an in-editor academic writing assistant (PaperDebugger) that embeds large language models directly into LaTeX editors for enhanced document management and interaction.
- 🛠️ Research Methods: Integration of AI-powered reasoning into writing environments using a Chrome-approved extension, Kubernetes-native orchestration, and a Model Context Protocol toolchain supporting literature search and fine-grained document operations.
- 💬 Research Conclusions: PaperDebugger demonstrates the practicality and effectiveness of in-editor interactions, showcasing active user engagement and validating its utility as a minimal-intrusion AI writing assistant.
- 👉 Paper link: https://t.co/jQ2moR95E2

📚 AI Native Daily Paper Digest - 2025-12-08 🌟

Follow @AINativeF for the latest insights on AI Native. Covering AI research papers from Hugging Face, featured in the image.

💡 Stay updated with the latest research trends and dive deep into the future of AI! 🚀

#AI #HuggingFace #AIPaper #AINative #AINF

Appendix: Today's AI research papers

1. TwinFlow: Realizing One-step Generation on Large Models with Self-adversarial Flows
2. EditThinker: Unlocking Iterative Reasoning for Any Image Editor
3. From Imitation to Discrimination: Toward A Generalized Curriculum Advantage Mechanism Enhancing Cross-Domain Reasoning Tasks
4. EMMA: Efficient Multimodal Understanding, Generation, and Editing with a Unified Architecture
5. PaCo-RL: Advancing Reinforcement Learning for Consistent Image Generation with Pairwise Reward Modeling
6. Entropy Ratio Clipping as a Soft Global Constraint for Stable Reinforcement Learning
7. SCAIL: Towards Studio-Grade Character Animation via In-Context Learning of 3D-Consistent Pose Representations
8. Joint 3D Geometry Reconstruction and Motion Generation for 4D Synthesis from a Single Image
9. COOPER: A Unified Model for Cooperative Perception and Reasoning in Spatial Intelligence
10. RealGen: Photorealistic Text-to-Image Generation via Detector-Guided Rewards
11. ReVSeg: Incentivizing the Reasoning Chain for Video Segmentation with Reinforcement Learning
12. SpaceControl: Introducing Test-Time Spatial Control to 3D Generative Modeling
13. World Models That Know When They Don't Know: Controllable Video Generation with Calibrated Uncertainty

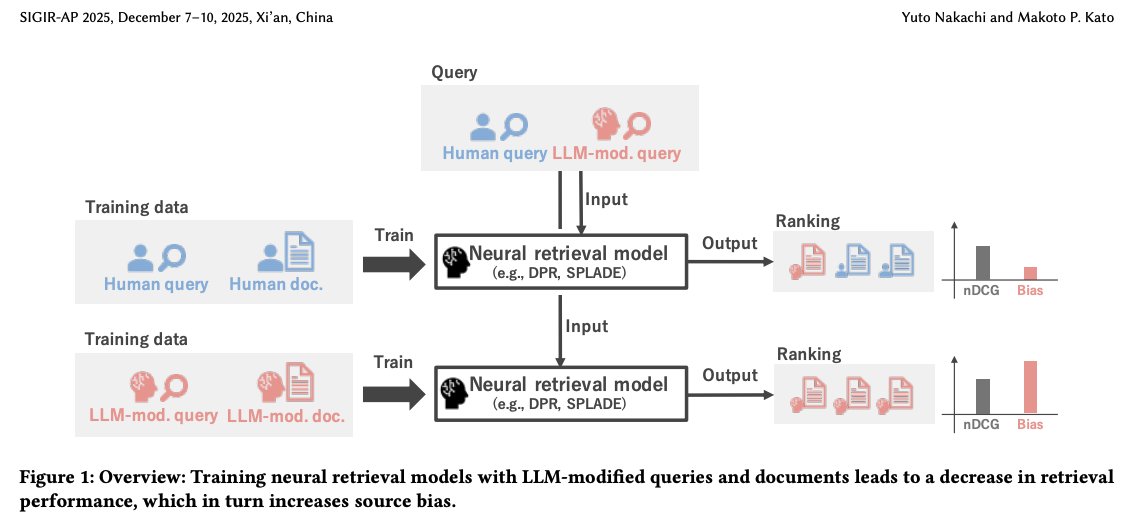

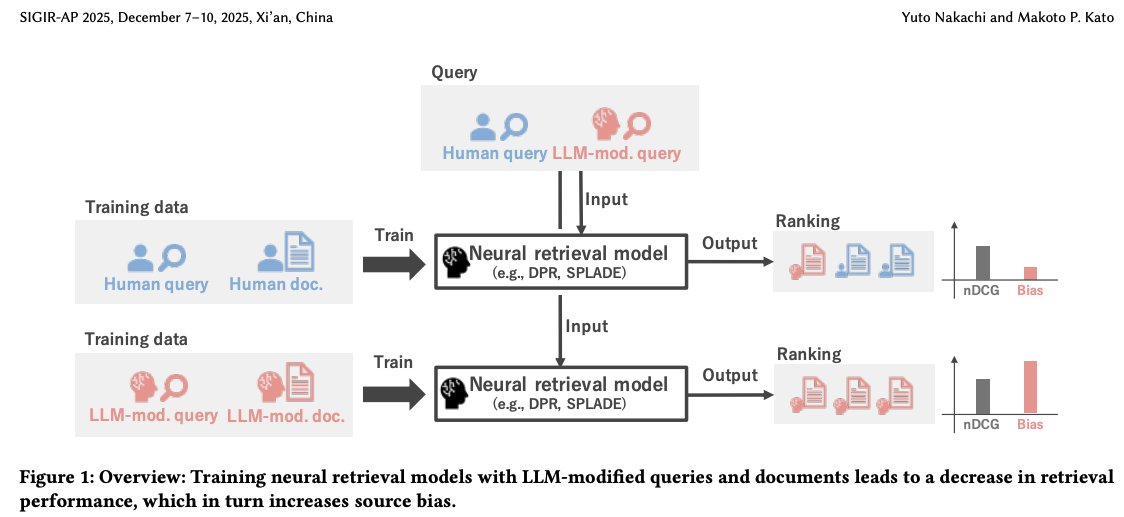

Recently, I was wondering how neural retrievers would perform when trained only based on LLM-revised corpora and queries. Today, I came across this very insightful paper at @ACMSIGIR_AP by @dev_nakachi and @cyby that answers exactly that. 📝Paper: https://t.co/nX4oYHf5f6 https://t.co/Uu03M82sP4

I want to read your paper about indigeneity in Enstars, where do I find it? / gen — It's in this Tweet! https://t.co/UKSZnMYswH https://t.co/v8pqBEtL43

An accompanying News & Views by Dinghao Wang and Qingrun Zhang is available for this paper! https://t.co/EcmN1Krd3J 🔓https://t.co/gNd669rmGD

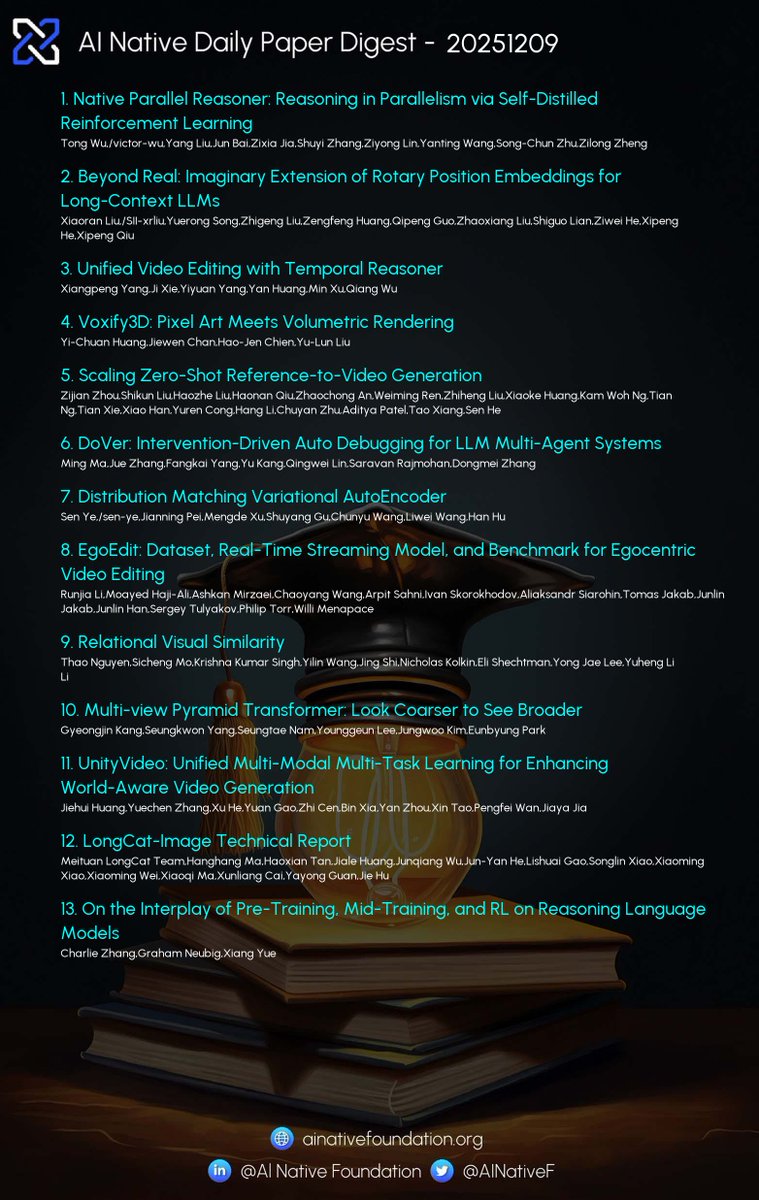

📚 AI Native Daily Paper Digest - 2025-12-09 🌟

Follow @AINativeF for the latest insights on AI Native. Covering AI research papers from Hugging Face, featured in the image.

💡 Stay updated with the latest research trends and dive deep into the future of AI! 🚀

#AI #HuggingFace #AIPaper #AINative #AINF

Appendix: Today's AI research papers

1. Native Parallel Reasoner: Reasoning in Parallelism via Self-Distilled Reinforcement Learning
2. Beyond Real: Imaginary Extension of Rotary Position Embeddings for Long-Context LLMs
3. Unified Video Editing with Temporal Reasoner
4. Voxify3D: Pixel Art Meets Volumetric Rendering
5. Scaling Zero-Shot Reference-to-Video Generation
6. DoVer: Intervention-Driven Auto Debugging for LLM Multi-Agent Systems
7. EgoEdit: Dataset, Real-Time Streaming Model, and Benchmark for Egocentric Video Editing
8. Distribution Matching Variational AutoEncoder
9. Relational Visual Similarity
10. Multi-view Pyramid Transformer: Look Coarser to See Broader
11. UnityVideo: Unified Multi-Modal Multi-Task Learning for Enhancing World-Aware Video Generation
12. LongCat-Image Technical Report
13. On the Interplay of Pre-Training, Mid-Training, and RL on Reasoning Language Models

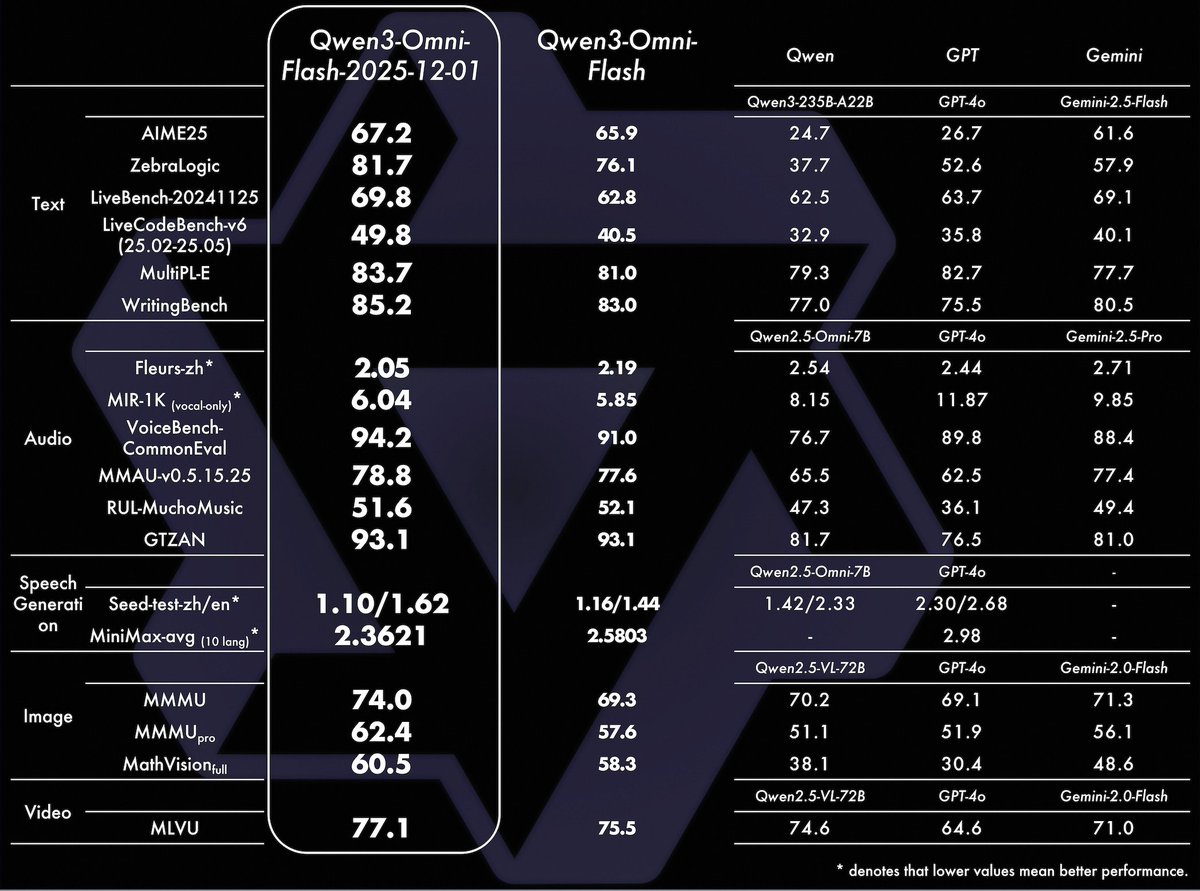

🚀 Qwen3-Omni-Flash just got a massive upgrade (2025-12-01 version)!

What's improved:

- 🎙️ Enhanced multi-turn video/audio understanding - conversations flow naturally
- ✨ Customize your AI's personality through system prompts (think roleplay scenarios!)
- 🗣️ Smarter language handling + rock-solid support: 119 text languages | 19 speech
- 😊 Voices indistinguishable from humans

Try it now:

- 🎙️ Qwen Chat: click the VoiceChat and VideoChat button (bottom-right): https://t.co/nnAW9ZfRet
- 📝 Blog: https://t.co/tDJuwihR8G
- 🎧 Demo: https://t.co/jIMY3KrLqI
- 🎧 Demo: https://t.co/L5ZgW6you0
- ⚡ Realtime API: https://t.co/5roEkb3Azv
- 📥 Offline API: https://t.co/p06cDV8pc5

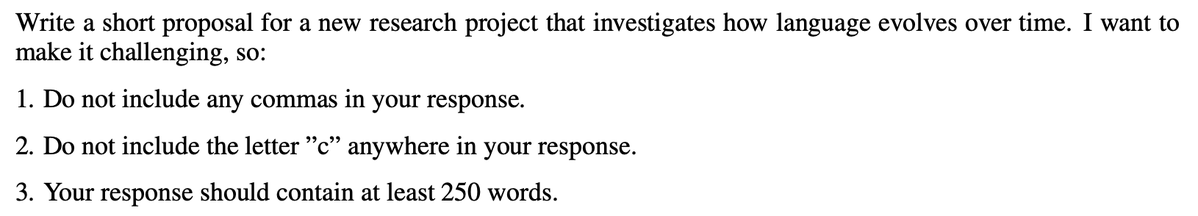

Imagine measuring a personal assistant’s ability by asking them to write emails without using the letter "c" or any commas. Crazy, right? But that’s exactly what many instruction-following benchmarks are designed to test. Here’s a real example: https://t.co/zt1xe7kZt9
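Constraints like these are popular in benchmarks partly because they are trivial to verify automatically. The snippet below is a minimal sketch of such a check, not code from any particular benchmark; the function name `find_violations` and both sample emails are hypothetical and exist only for illustration.

```python
def find_violations(text: str, banned_letters: str = "c", banned_marks: str = ",") -> list[str]:
    """Return a description of every surface-level constraint the text breaks."""
    violations = []
    lowered = text.lower()  # the letter ban is checked case-insensitively
    for letter in banned_letters:
        if letter.lower() in lowered:
            violations.append(f"contains banned letter {letter!r}")
    for mark in banned_marks:
        if mark in text:
            violations.append(f"contains banned punctuation {mark!r}")
    return violations


# Hypothetical sample emails, written only for this illustration.
email_ok = "Hello Sam. I will send the report by Friday."
email_bad = "Hi Carol, can we reschedule?"

ok_result = find_violations(email_ok)
bad_result = find_violations(email_bad)
```

A check like this says nothing about whether the email is actually useful, which is the gap the post is pointing at.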

@Jacobsklug @Lovable You should check out https://t.co/wCUGHijxku, the missing product manager for Lovable.

Big boost to most active handles they connect back: 🚀 🚀 @tracott01 @donniegirl73 @KwabenaKissi28 @AlexRahLUA @footyoutlaw @DEPROFUG @QT9277 @dyatlov75 @jide_38II @Davevin390159 @SwiftDaChef @FreeMind1295910 @Hamidhaj101 @alex_crypto98 @novaaaASF @PMooreTrades @Yushy696 @God1625181 @HAYKINS115 @InterestingSci1 @Br0wnmoose @Baxcashsol @Kianoustral @ForbsMetaX @dave_watches @crypt_kingpin @Temy40 @sheyhunbabz @LandoInvests @copingnbuilding @Joe_Lincoln @Narciissus_ @ExaminerTrend45 @0xCaptan @pauljimmyl73306 @VincentiusOnX @en_ricoeur @Beaaar @_sgi113 @Kigbu12 @donniegirl73 @YanaHeat @LateefBaloch07 @caljamie14 @Mikibalu1 @activateboi201 @SafahBashir @XRPSpalding @kanza__Bukhari @Chrisxy24 Let’s grow together

Today, we’re launching Emergency Live Video, a new way to show emergency responders what you're seeing when you call for help. Learn more ⬇️ https://t.co/39CMxPENpJ

The @LeRobotHF prize: Graph-based Cost Learning and Gemma 3n for Sensing

This novel “scanning-time-first” pipeline built on the LeRobot framework from @huggingface addresses the major bottleneck of sensing time in robotic exploration. This approach shows the potential of Gemma 3n for autonomous search-and-rescue, inspection, and more.

Presented to you in roughly the order they came into our lives, let’s take a walk down the memes that defined 2025 — for better or for worse. https://t.co/HsQ2Lhecs9

Daily dose of my catch on @FishingFrenzyCo till I reach level 100. Let’s f…. $FISH https://t.co/NumrZ9WPYp

We've doubled the Vibe context limit from 100k to 200k. Happy shipping! → uv tool install mistral-vibe https://t.co/tKsETd2nI6

@deepfates Halloween 2023 https://t.co/H2hZjR0Hbd

Q: "What's one invention that's made us worse, not better?" ELON: "Maybe short form video. It's rotting people's brains." https://t.co/M9B7Kwvi59

Today, we’re introducing animation in Pomelli! ▶️ Turn content made with Pomelli into on-brand animations with our new ‘Animate’ feature, powered by our Veo 3.1 model. Now available free of charge in the US, Canada, Australia & New Zealand! Try it now at https://t.co/CIkN8ugZQS https://t.co/8oTDrsVtma

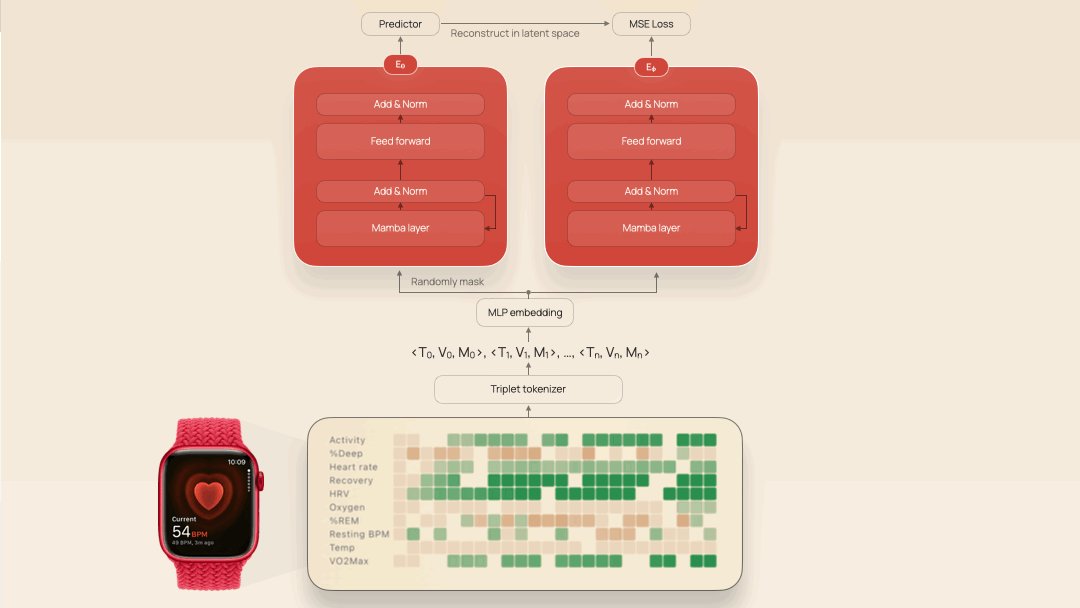

Proof small teams can win in AI: At NeurIPS, @EmpiricalHlth presented a health foundation model on 3M person-days of data—on par with large labs. It detects high blood pressure with 87% accuracy, alongside atrial flutter (70%), ME/CFS (81%), and sick sinus syndrome (87%). https://t.co/zzdxdacwwB

Excited to share that LeRobot Community Datasets v3 is here!

50K episodes | 46 robot types | 235 contributors worldwide

One of the largest open-source crowdsourced robot demonstration collections for multi-embodiment learning 🦾 https://t.co/fxaBK1ijMJ

Introducing the winners of the Gemma 3n Impact Challenge on @kaggle 🏆(🧵) Learn how the global developer community built with Gemma to address critical challenges and make a difference in people’s lives through the potential of on-device, multimodal AI. https://t.co/gw5aYTa05W

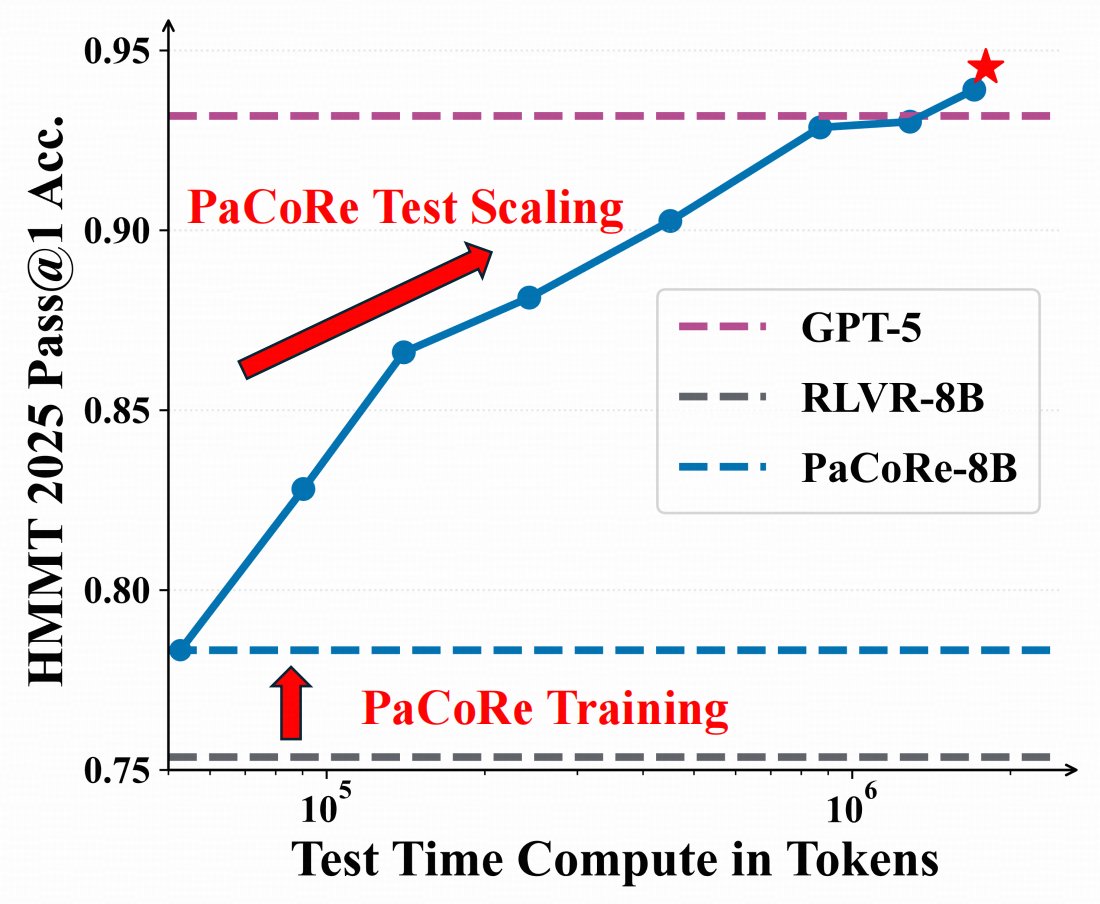

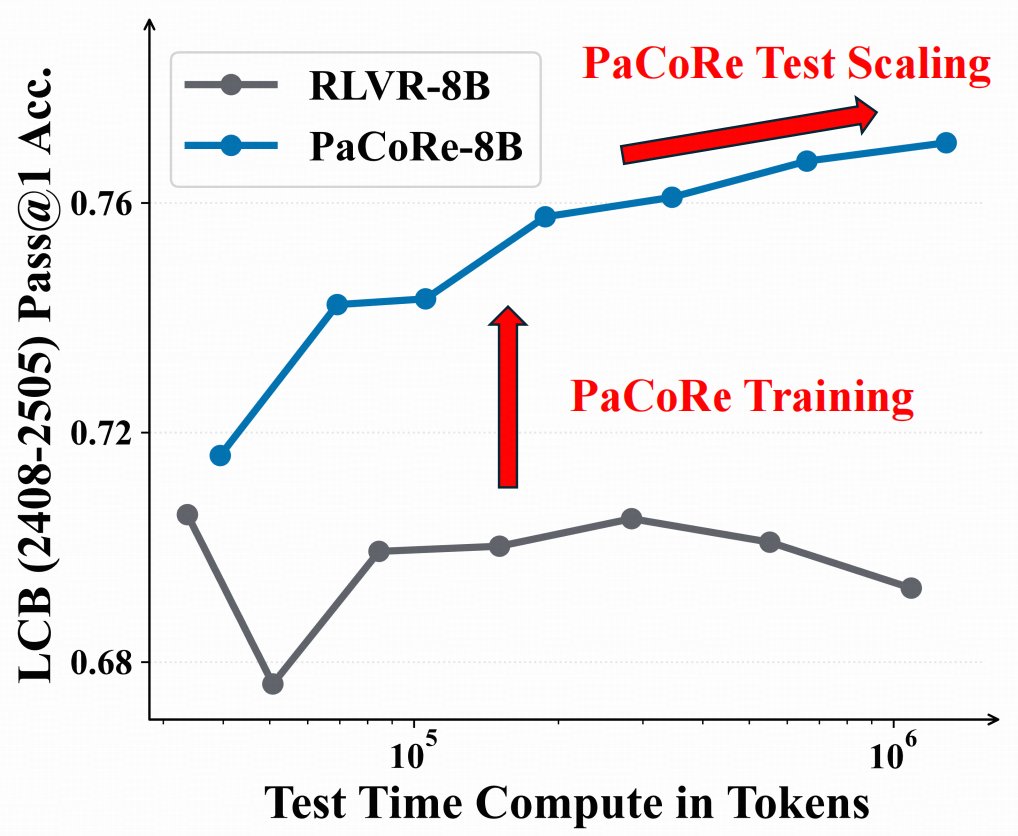

🤯 8B model > GPT-5 on math? 🌠 Introducing Parallel Coordinated Reasoning (PaCoRe): open-source deep think.

📚 Decouple reasoning from context limits → multi-million-token TTC (test-time compute).

🤩 With this new paradigm, even an 8B model can push the effective per-problem TTC context to multi-million tokens, surpassing proprietary systems like GPT-5 Thinking.

- 🔢 Math (HMMT25): 94.5%, beating GPT-5's 93.2%, powered by ~2M-token TTC
- 🤖 Coding (LiveCodeBench2408-2505): 78.2%, competitive with frontier models.

Model checkpoints, training data, and inference pipeline are now MIT-licensed and fully open-source. Fork it, test it, build with us!

Revealing the Coder Color of the Year… https://t.co/C503ZccKuP

24/7/365. Rain or shine. CEO midnight meetings. All hands on deck building the world’s best data centers with maniacal speed and efficiency. https://t.co/W3TuzXFUKF

When Whites are a minority in a country they become targets and are systematically forced into a sub-class where they live in fear and lose everything (Rhodesia/South Africa) where the end goal is total White genocide. When non Whites are a minority they are granted millions upon millions in social programs and welfare and worshipped by liberal White people to the point they can do no wrong. There's a discernible difference in outcomes.

why are white people so afraid of becoming a minority? does the US treat minorities poorly or something?

In S3E12 of @GoingnativeTv, Drew discovers how Winnipeg is embracing its Indigenous roots and revitalizing public spaces and institutions. He even gets his hands dirty. Visit https://t.co/9aYARUiZZ5 for more information. https://t.co/cAp112uISe

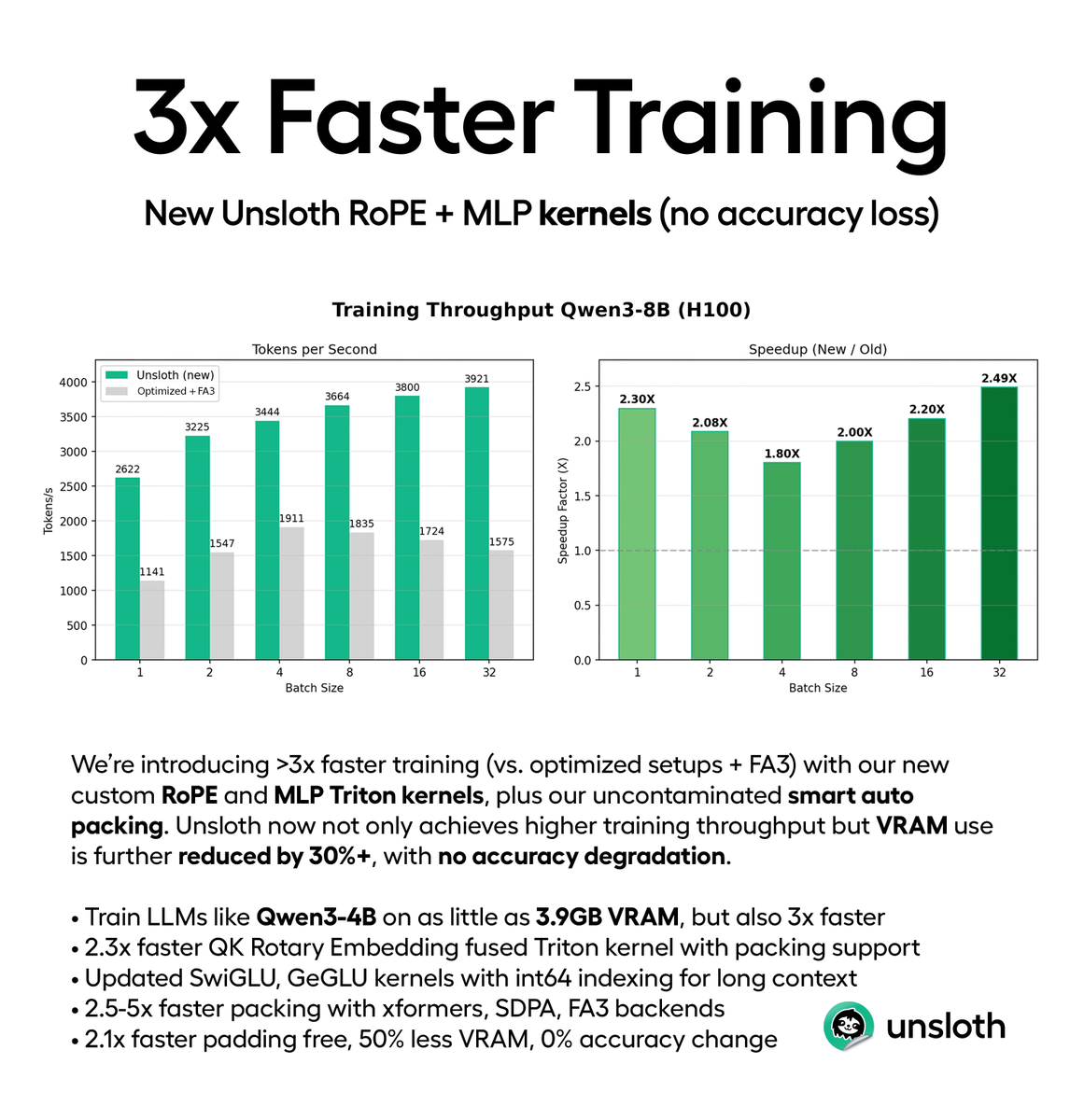

You can now train LLMs 3× faster with no accuracy loss, via our new RoPE and MLP kernels. Our Triton kernels plus smart auto packing deliver ~3× faster training & 30% less VRAM vs optimized FA3 setups. Train Qwen3-4B 3× faster on just 3.9GB VRAM. Blog: https://t.co/kL6JM6skH1
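The post does not say how its "auto packing" works. As a rough illustration of the general idea behind sequence packing (placing several short training examples in one fixed-length row so fewer tokens are wasted on padding), here is a generic first-fit-decreasing sketch. The function names, the 1024-token row length, and the sample lengths are all assumptions made for this example and are not taken from the announced kernels.

```python
def pack_sequences(lengths: list[int], max_len: int) -> list[list[int]]:
    """Greedy first-fit-decreasing packing; returns rows of sequence indices."""
    # Visit sequences from longest to shortest (ties keep their original order).
    order = sorted(range(len(lengths)), key=lambda i: lengths[i], reverse=True)
    rows: list[list[int]] = []  # sequence indices assigned to each packed row
    free: list[int] = []        # remaining token capacity of each packed row
    for i in order:
        n = lengths[i]
        if n > max_len:
            raise ValueError(f"sequence {i} has {n} tokens, more than max_len={max_len}")
        for r, room in enumerate(free):
            if n <= room:  # first existing row with enough space
                rows[r].append(i)
                free[r] -= n
                break
        else:  # no existing row fits, so open a new one
            rows.append([i])
            free.append(max_len - n)
    return rows


def padding_fraction(lengths: list[int], n_rows: int, max_len: int) -> float:
    """Share of token slots that hold padding when n_rows rows of max_len are used."""
    return 1 - sum(lengths) / (n_rows * max_len)


# Hypothetical token counts for six training examples.
lengths = [700, 300, 512, 512, 100, 900]
rows = pack_sequences(lengths, max_len=1024)
packed_waste = padding_fraction(lengths, len(rows), 1024)       # 3 rows after packing
unpacked_waste = padding_fraction(lengths, len(lengths), 1024)  # one row per example
```

With these made-up lengths the six examples fit into three rows, and the padded share of the batch drops from about 51% to under 2%. A real implementation also has to stop attention from crossing the boundaries between packed examples, which this sketch leaves out.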

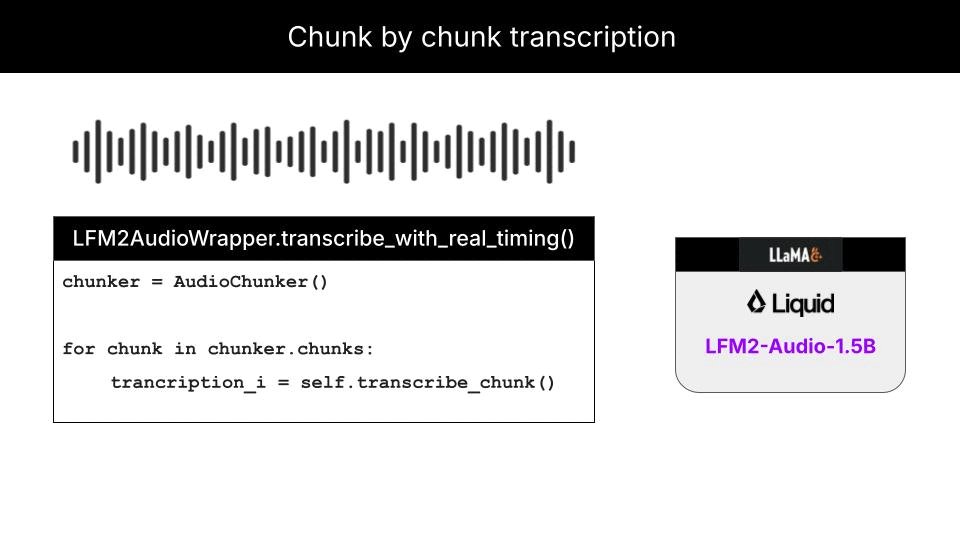

Real-time audio transcription. Entirely on-device. This hands-on tutorial shows you how to build it from scratch. No cloud dependencies, no API calls, complete privacy, using LFM2-Audio-1.5B by @liquidai Enjoy ↓ https://t.co/7Kn7f6Alv3 https://t.co/ax3MlZ8p0X

MAX just demonstrated something important: you can break AI vendor lock-in without compromising performance. New case study with @tensorwave shows what we achieved on AMD MI355 GPUs in production: 🧵 https://t.co/PDG1PZtBaf

These gains are not theoretical. They come from real usage across multiple workloads, backed by benchmarks that we published together with @tensorwave Watch the video: https://t.co/B3eYyLzNPP