@StepFun_ai

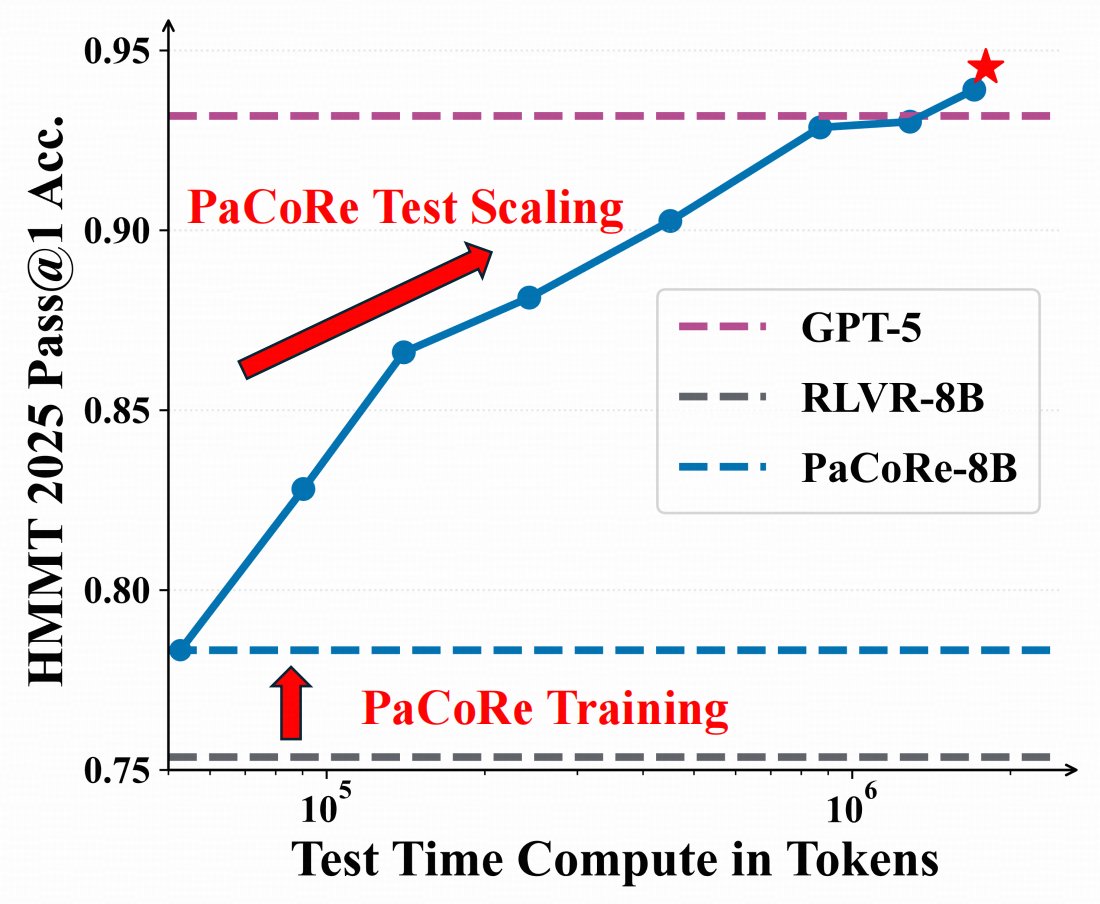

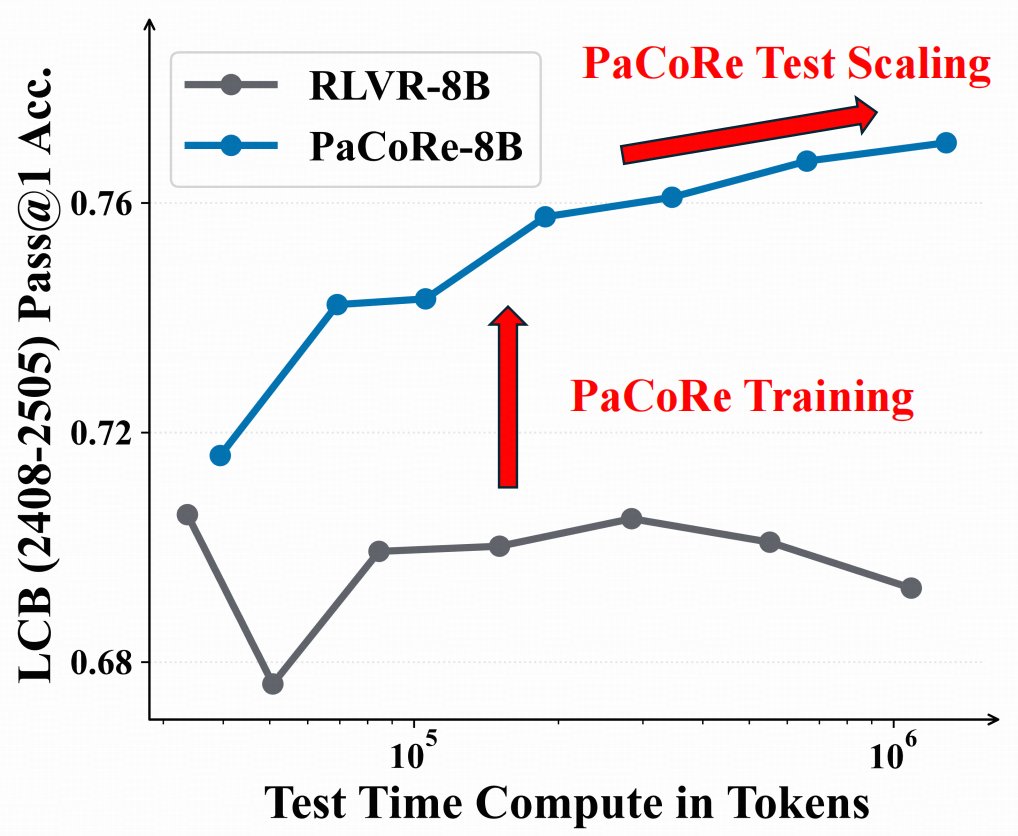

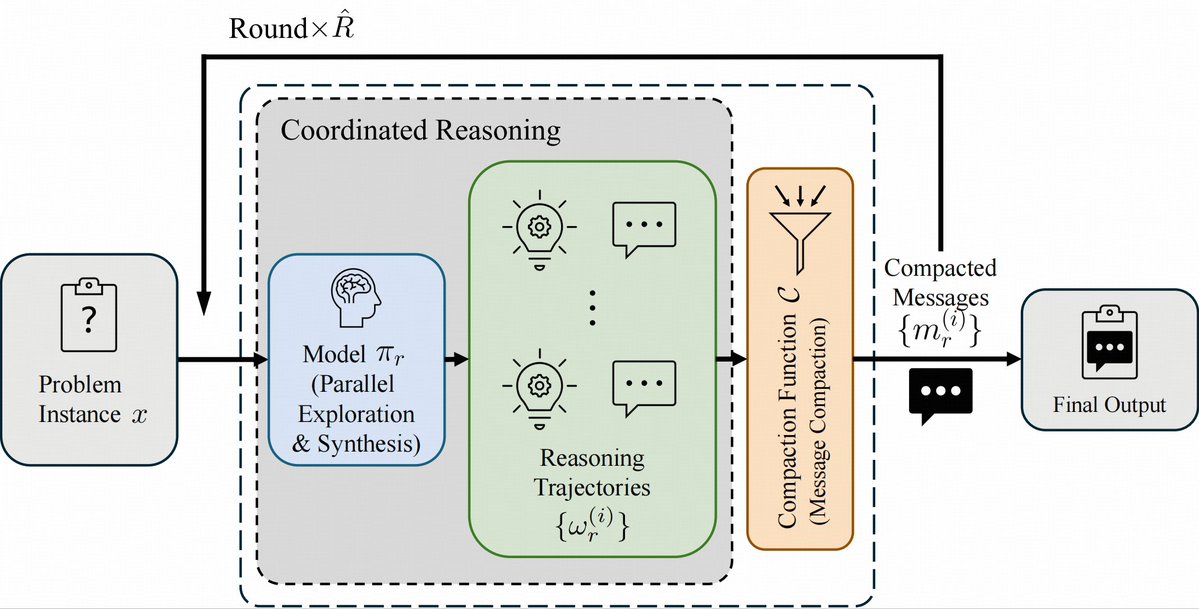

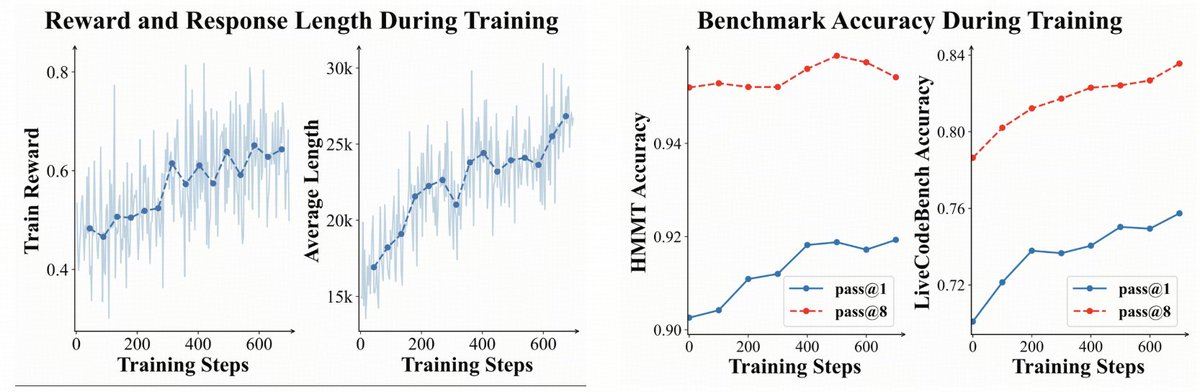

🤯 8B model > GPT-5 on math?

🌠 Introducing Parallel Coordinated Reasoning (PaCoRe): open-source deep think.

📚 Decouple reasoning from context limits → multi-million-token test-time compute (TTC).

🤩 With this new paradigm, even an 8B model can push its effective per-problem TTC to multiple millions of tokens, surpassing proprietary systems like GPT-5 Thinking.

🔢 Math (HMMT25): 94.5%, beating GPT-5's 93.2%, powered by ~2M-token TTC

🤖 Coding (LiveCodeBench2408-2505): 78.2%, competitive with frontier models

Model checkpoints, training data, and the inference pipeline are now MIT-licensed and fully open source. Fork it, test it, build with us!