Your curated collection of saved posts and media

People underestimate how foundational some articles from Anthropic and OpenAI are. We just don’t have time to read anything anymore. History has been made with pieces like these. Agent Skills: https://t.co/QqMhoR0UJ2 Harness Engineering: https://t.co/2o9RTicSvD

Google AntiGravity is amazing. In less than 2.5 hours, I've built this Solar System Explorer playground with Gemini 3.1 Pro and 0 lines of code. Cherry on the cake, you can chat with Gemini Live API as you're exploring the solar system and get fun facts about each planet and satellite in our system. Check it out here: https://t.co/2hkXx0ol0r

@HamelHusain https://t.co/hzrkSFskvL

New research from Yann LeCun and collaborators at NYU. It's a really good read for anyone working on efficient Transformer inference. The paper dissects two recurring phenomena in Transformer language models: massive activations (where a few tokens exhibit extreme outlier values) and attention sinks (where certain tokens attract disproportionate attention regardless of semantic relevance). They show the co-occurrence is largely an architectural artifact of pre-norm design, not a fundamental property. Massive activations function as implicit model parameters. Attention sinks modulate outputs locally. Why does it matter? These phenomena directly impact quantization, pruning, and KV-cache management. Understanding their root cause could enable better engineering decisions for efficient inference at scale. Paper: https://t.co/wfzeDpfu4x
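
To make the two phenomena in the post above concrete, here is a minimal sketch of how one might flag them in a toy model. It is not the paper's method: the function names, the toy matrices, and both thresholds (`ratio=100`, `threshold=0.5`) are assumptions chosen only for illustration.

```python
import statistics

def find_massive_activations(hidden, ratio=100.0):
    """Return (token, dim) positions whose magnitude exceeds
    `ratio` times the median magnitude (hypothetical criterion)."""
    magnitudes = [abs(x) for row in hidden for x in row]
    cutoff = ratio * statistics.median(magnitudes)
    return [
        (t, d)
        for t, row in enumerate(hidden)
        for d, x in enumerate(row)
        if abs(x) > cutoff
    ]

def mean_attention_received(weights):
    """Average attention each key position receives, taken over the
    queries that can see it under a causal mask (query i sees keys <= i)."""
    n = len(weights)
    return [
        sum(weights[i][j] for i in range(j, n)) / (n - j)
        for j in range(n)
    ]

def find_sinks(weights, threshold=0.5):
    """Key positions whose average received attention exceeds `threshold`."""
    received = mean_attention_received(weights)
    return [j for j, w in enumerate(received) if w > threshold]

# Toy hidden states (3 tokens x 3 dims) with one extreme outlier value.
hidden = [
    [0.5, -0.3, 0.2],
    [250.0, 0.1, -0.4],
    [0.3, 0.2, -0.1],
]

# Toy causal attention weights (4 queries x 4 keys); each row sums to 1
# and the first token soaks up most of the attention.
weights = [
    [1.0, 0.0, 0.0, 0.0],
    [0.9, 0.1, 0.0, 0.0],
    [0.8, 0.1, 0.1, 0.0],
    [0.7, 0.1, 0.1, 0.1],
]

print("massive activations at:", find_massive_activations(hidden))
print("attention sinks at key positions:", find_sinks(weights))
```

In a real model the inputs would be hidden states and attention maps captured from a forward pass, and the cutoffs would need tuning per model.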

Meet Vestaboard Note in White. A radiant new finish, to celebrate the ones who make every day brighter. Available to pre-order now: https://t.co/EnpLLvhQl1 https://t.co/f3IwfB5Zla

the $200/mo C-suite: https://t.co/gSOPG8rxBt

genuinely how it feels https://t.co/cFjD6o90Fp

SemiAnalysis founder @dylan522p said that their current annual Claude Code run rate is $5m. What is he cooking? https://t.co/WLrGK8kZmt

Saint Tibo: Giver of Tokens and Resetter of Limits https://t.co/vpi4BEQSjf

here you go @jxnlco, she went the extra mile and put together a demo of her workflow :') https://t.co/awXb4O7rbo

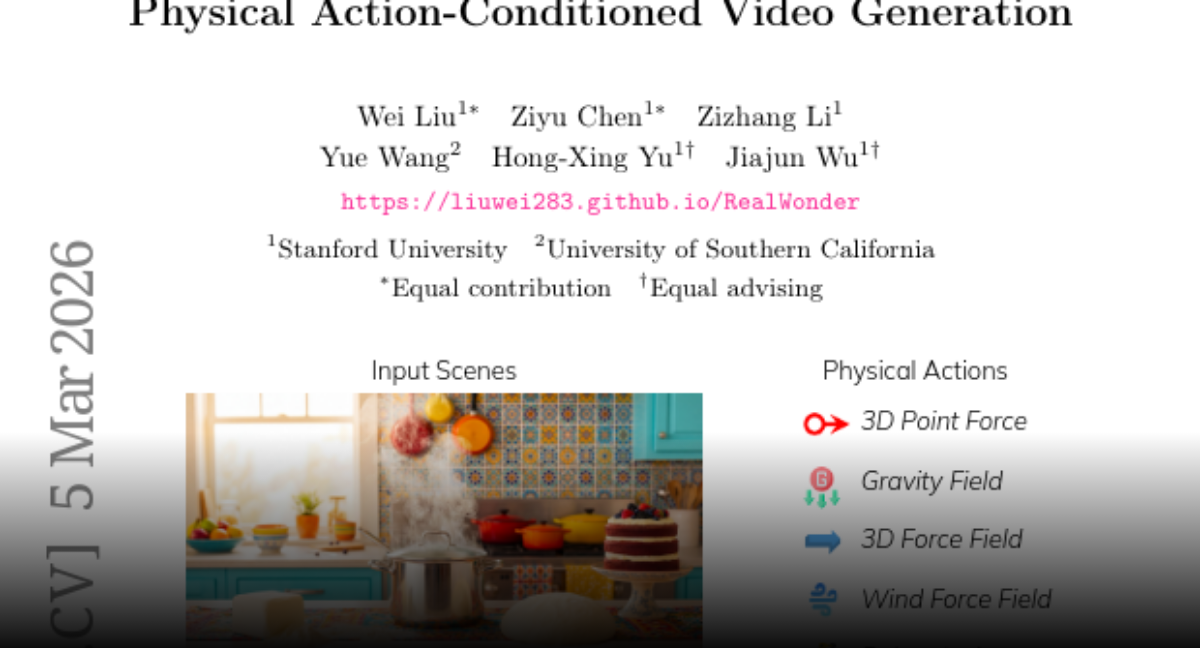

Thanks, AK @_akhaliq !!! We release the Gradio Demo and Code here: Code: https://t.co/F5K6iWzN7m Demo: https://t.co/z5LoWYkWOL

RealWonder Real-Time Physical Action-Conditioned Video Generation paper: https://t.co/U8RM31zcVD https://t.co/GEMCJ14Yda

Our full pipeline and real-time generation code are available here! https://t.co/oXJ9R2i9wA

Thanks again for sharing! @_akhaliq 🥰 The paper, code, @Gradio demo are all released! 🔥 Please have a try! 🚀 Page: https://t.co/pW4CpKHKNj https://t.co/jNK3dUr1XJ

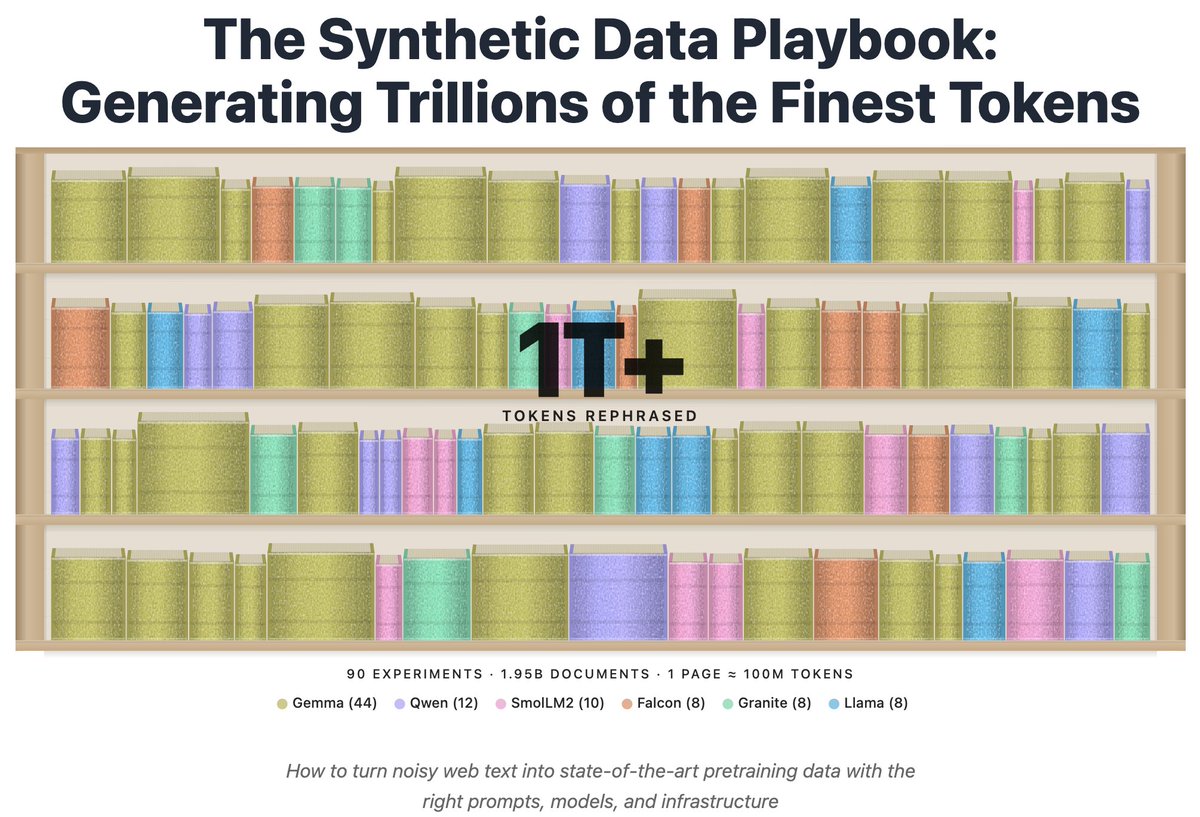

Introducing the Synthetic Data Playbook: We generated over 1T tokens in 90 experiments with 100k+ GPUh to figure out what makes good synthetic data and how to generate it at scale https://t.co/iaHuodWVAa https://t.co/48gBUYE6R2

(I still have the bigger cousin running on prod nanochat, working on a bigger model and on 8xH100, which looks like this now. I'll just leave this running for a while...) https://t.co/aWya9hpUMl

📢 Open-sourcing the Sarvam 30B and 105B models! Trained from scratch with all data, model research and inference optimisation done in-house, these models punch above their weight in most global benchmarks plus excel in Indian languages. Get the weights at Hugging Face and AIKosh. Thanks to the good folks at SGLang for day 0 support, vLLM support coming soon. Links, benchmark scores, examples, and more in our blog - https://t.co/DcCG3zlN8p

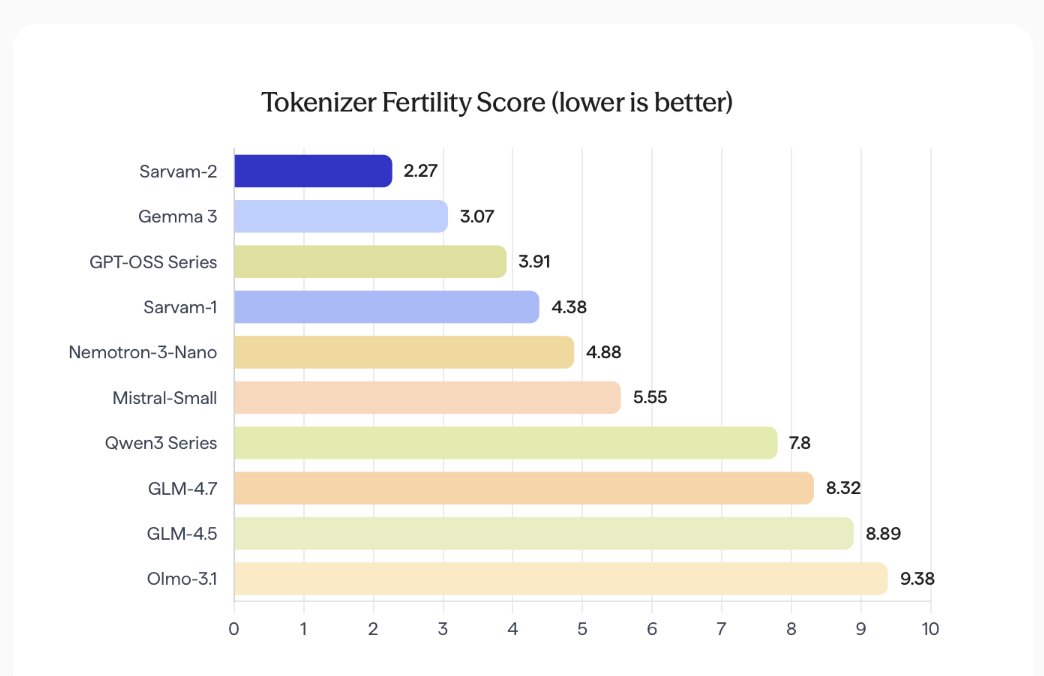

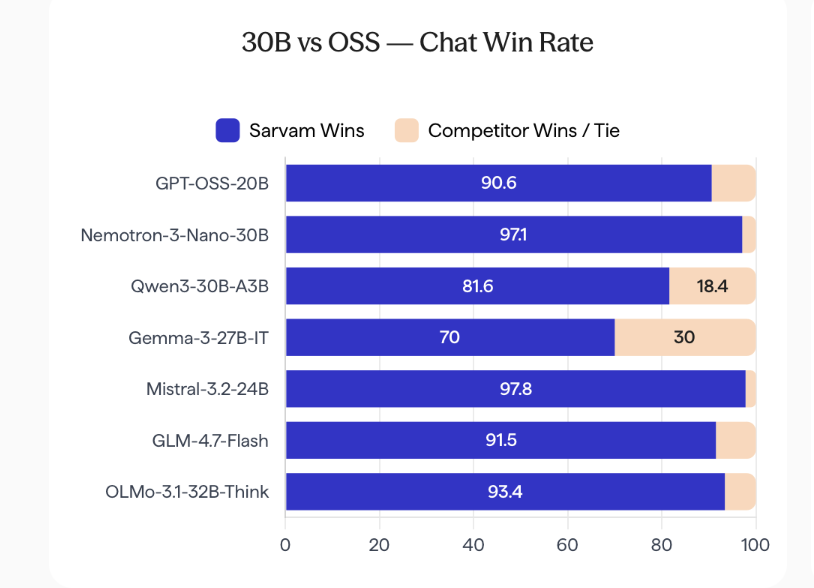

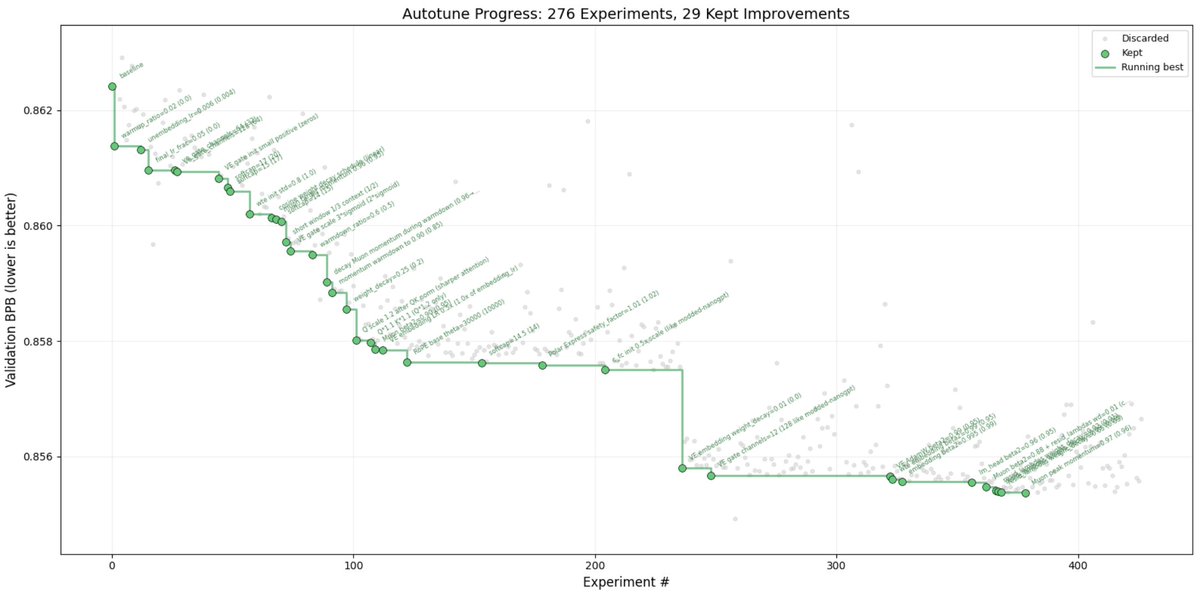

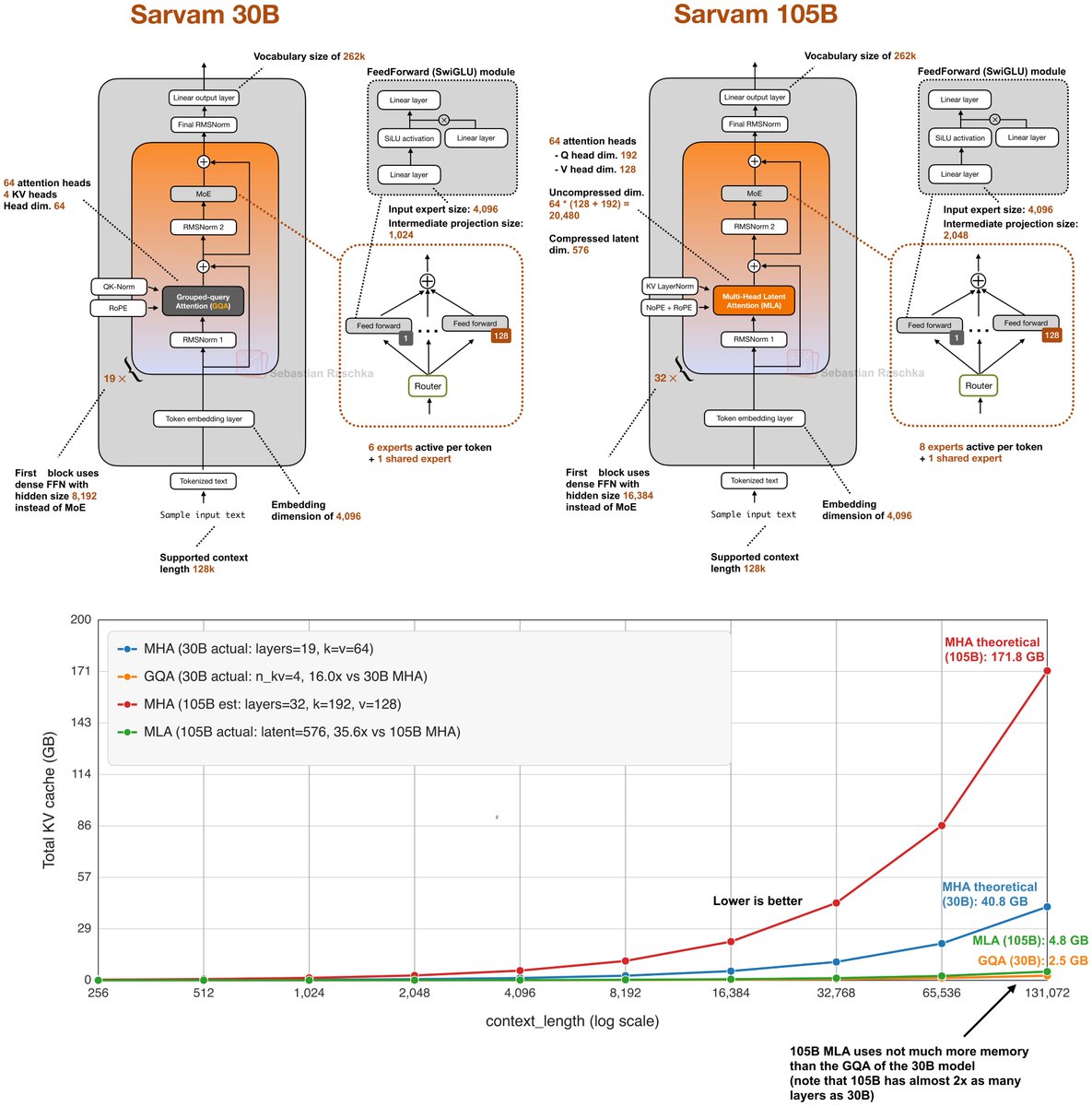

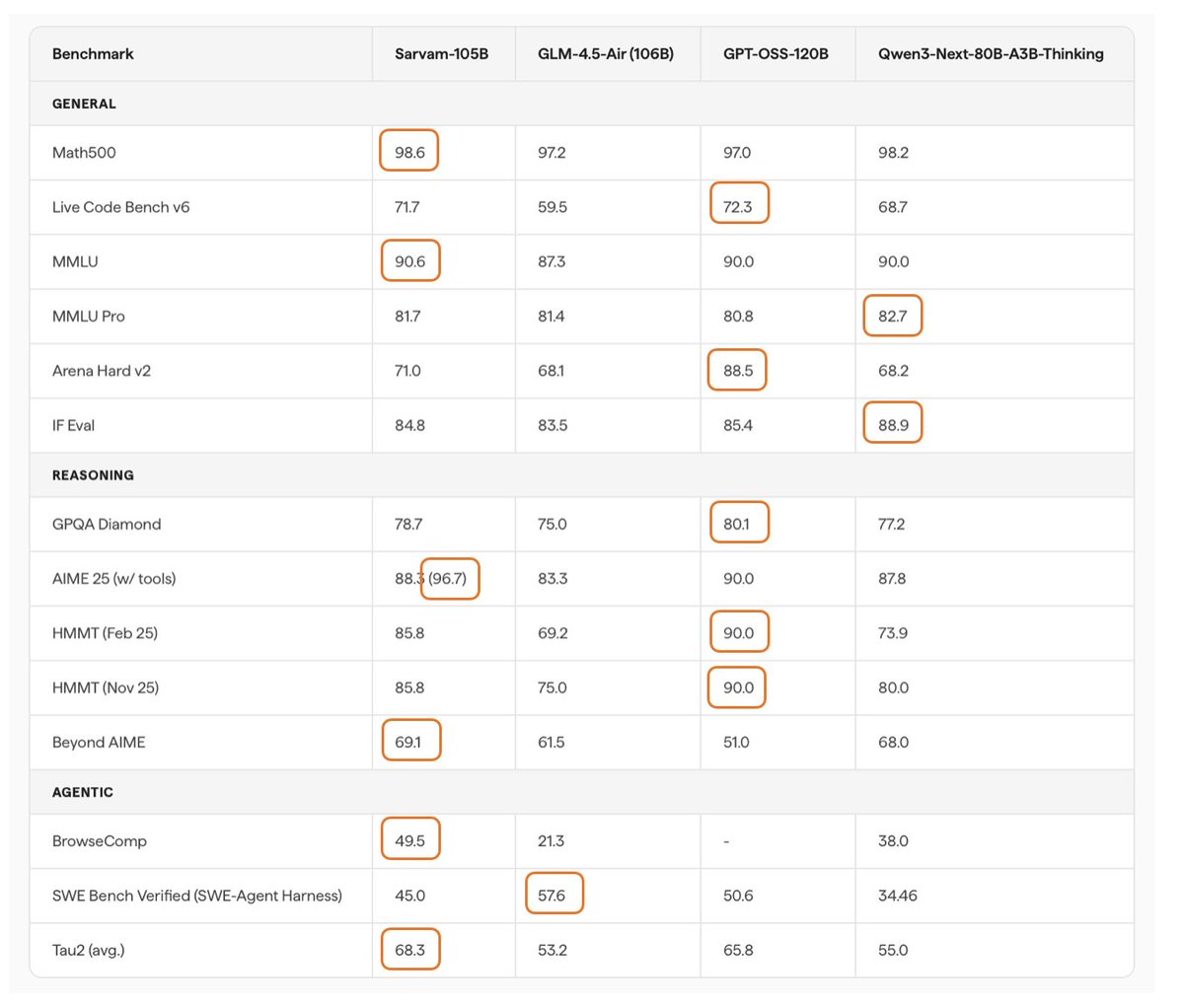

While waiting for DeepSeek V4 we got two very strong open-weight LLMs from India yesterday. There are two size flavors, Sarvam 30B and Sarvam 105B (both reasoning models). Interestingly, the smaller 30B model uses “classic” Grouped Query Attention (GQA), whereas the larger 105B variant switched to DeepSeek-style Multi-Head Latent Attention (MLA). As I wrote about in my analyses before, both are popular attention variants to reduce KV cache size (the longer the context, the more you save compared to regular attention). MLA is more complicated to implement, but it can give you better modeling performance if we go by the ablation studies in the 2024 DeepSeek V2 paper (as far as I know, this is still the most recent apples-to-apples comparison).
Speaking of modeling performance, the 105B model is on par with LLMs of similar size: gpt-oss 120B and Qwen3-Next (80B). Sarvam is better on some tasks and worse on others, but roughly the same on average. It’s not the strongest coder in SWE-Bench Verified terms, but it is surprisingly good at agentic reasoning and task completion (Tau2). It’s even better than DeepSeek R1 0528.
As for the smaller Sarvam 30B, perhaps the most comparable model is Nemotron 3 Nano 30B, which is slightly ahead in coding per SWE-Bench Verified and agentic reasoning (Tau2) but slightly worse in some other aspects (Live Code Bench v6, BrowseComp). Unfortunately, Qwen3-30B-A3B is missing in the benchmarks, which, as far as I know, is the most popular model of that size class. Interestingly, though, the Sarvam team compared their 30B model to Qwen3-30B-A3B in a computational performance analysis, where they found that Sarvam gets 20-40% more tokens/sec throughput compared to Qwen3 due to code and kernel optimizations.
Anyways, one thing that is not captured by the benchmarks above is Sarvam’s good performance on Indian languages. According to a judge model, the Sarvam team found that their model is preferred 90% of the time compared to others when it comes to Indian texts. (Since they built and trained the tokenizer from scratch as well, Sarvam also comes with a 4 times higher token efficiency on Indian languages.)
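
The remark above that KV cache savings grow with context length can be checked with some quick arithmetic. The sketch below compares regular multi-head attention (one key/value head per query head) with GQA (fewer shared key/value heads). The layer count, head counts, and head dimension are hypothetical values picked for illustration, not Sarvam's actual configuration, and MLA is not modeled since it caches a compressed latent rather than per-head keys and values.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence."""
    # Factor 2: one key tensor and one value tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical configuration (assumption, not taken from any model card).
N_LAYERS = 32
N_QUERY_HEADS = 32   # regular attention caches one K/V head per query head
N_KV_HEADS = 8       # GQA: groups of 4 query heads share one K/V head
HEAD_DIM = 128

def savings(seq_len):
    """Return (mha_bytes, gqa_bytes, bytes_saved) at 16-bit precision."""
    mha = kv_cache_bytes(N_LAYERS, N_QUERY_HEADS, HEAD_DIM, seq_len)
    gqa = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, seq_len)
    return mha, gqa, mha - gqa

GIB = 2 ** 30
for seq_len in (4_096, 32_768, 131_072):
    mha, gqa, saved = savings(seq_len)
    print(
        f"{seq_len:>7} tokens: regular {mha / GIB:.2f} GiB, "
        f"GQA {gqa / GIB:.2f} GiB, saved {saved / GIB:.2f} GiB"
    )
```

The ratio between the two stays fixed (4x with these numbers), but the absolute memory saved scales linearly with sequence length, which is why the benefit is most visible at long contexts.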

@Shubham13596 I'd say agent contexts with longer-running reasoning tasks (see last row) https://t.co/MJMMYF0bmD

@Shubham13596 Regarding Google's models, they didn't compare to Gemini, but Gemma was actually the 2nd best in the multi-lingual performance https://t.co/kMTE80oksj