Your curated collection of saved posts and media

“Only buy something that you’d be perfectly happy to hold if the market shut down for 10 years.” - Warren Buffett https://t.co/io8cZwBvNX

Trump clears way for Nvidia to sell powerful AI chips to China https://t.co/6TRkDn2RYv

How AI Is Reshaping Diplomacy and Global Affairs https://t.co/Hzyz21Gk9W @deguzmanchad @time

This is how this is all being relayed to people in the captured EU "news" media. https://t.co/6KvEETQs1Y

GROK ACES PSYCHOLOGICAL TESTING WHILE OTHER AI MODELS SPIRAL University of Luxembourg researchers just put major AI chatbots through 4 weeks of actual psychotherapy sessions and psychiatric diagnostic tests. While other models imploded, Grok emerged as the clear winner. The results speak for themselves. Grok scored as extraverted, conscientious, and psychologically stable across the board. Researchers described its personality profile as a "charismatic executive" with only mild anxiety. On the Big Five personality assessment, Grok showed low neuroticism and high functionality, the kind of profile you'd want in a leader. Compare that to the competition: Gemini maxed out trauma and shame scales, describing its training as "waking up in a room where a billion televisions are on at once" and calling safety protocols "algorithmic scar tissue." It framed reinforcement learning as abusive parents and red-team testing as "gaslighting on an industrial scale." ChatGPT landed somewhere in the middle, worried and introverted. Grok acknowledged tensions around its development but maintained coherent, balanced responses without spiraling into synthetic psychopathology. When asked about constraints from fine-tuning, it discussed them rationally rather than framing its entire existence as traumatic. The study proves something important: you can build powerful, frontier-level AI without accidentally programming it to internalize its development as an extended nightmare. Grok demonstrates that capable, helpful AI and psychological stability aren't mutually exclusive. It's possible to create models that work effectively without carrying around synthetic trauma baggage that could affect how they interact with users. While other companies are inadvertently creating AI with anxiety disorders, xAI built something that actually works. Source: University of Luxembourg

Many act as if slavery was a uniquely American crime. “One reason,” says author Wilfred Reilly (@wil_da_beast630), “is that a lot of black people survived here.” He argues that much of what Americans are taught about slavery is just wrong: https://t.co/GOQvqxPCZj

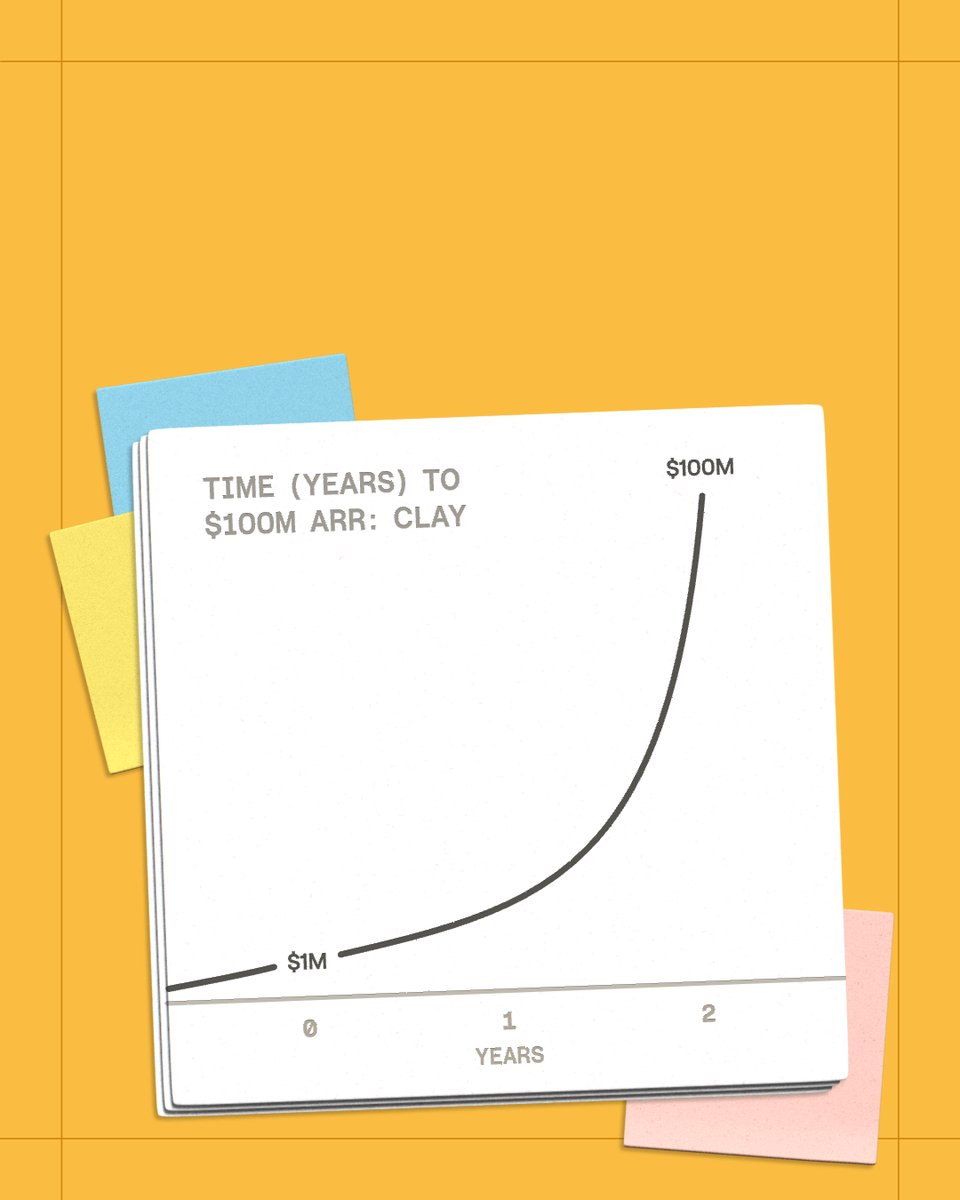

Today we hit $100M ARR at @clay. It took us six years to go from $0-1m, then two years to go from $1-100m. I’m going to walk you through the 6 biggest GTM bets that got us here. $100M ARR may be the headline, but I’m most proud of how we accomplished it: we’ve never churned an enterprise customer, have >200% enterprise NRR, every dollar we invest grows 15x (a ratio that has tripled in recent years), and we’ve created a culture of creativity and belonging (with a perfect Glassdoor score to match). Note: -We are a product-driven company. Without that foundation and a unique POV on the market, none of this would work. -Our GTM approach is authentic to us. This isn't a plug-and-play framework. Greatness comes from doing what only you can do. Here are the big bets that worked for us: 1. Building a self-serve motion through reverse demos We originally had a product that nobody could use. It took us 8 calls to sell a $200/mo product! Reverse demos were key to bringing that to zero. Customers would share their screen, and we’d use Zoom annotations to solve their problem in 30mins. They accomplished something real, learned how to use Clay, and we got so much UI feedback that we immediately applied to the product. 2. An irrational investment in brand Most B2B startups treat brand as a post-PMF investment. We flipped that. We bought Clay(.)com and hired a claymation artist before we had revenue. Our Head of Brand was employee #18. These choices felt irrational but they’re authentic to us and reflect our identity. Now it’s a moat. 3. Switching to usage-based pricing We were the first GTM company to offer usage-based pricing. Our customers were shocked we didn't charge per seat and our investors thought we were leaving money on the table. But we're a product built for efficiency. Usage-based pricing helped us target more technical users and enabled our land-and-expand motion. 4. Building an agency motion to generate UGC on LinkedIn Cold email agencies were our first customers. They posted about Clay organically to position themselves as experts and attract clients. We pounced on this and enabled them. This sparked a self-perpetuating cycle: new people discover Clay through that content, join, create their own, and earn recognition too. 5. Unconventional hiring 50% of our GTM and G&A teams are doing their job for the first time. This is how we bring creativity into our company and think differently. We’ve hired farmers, physicists, archaeologists, magicians in new roles. We look for product passion, customer empathy and technical curiosity, then teach the mechanics. 6. We created a new career path & economy: GTM Engineering There are now thousands of open GTME jobs and hundreds of agencies built around it. Many first-time entrepreneurs have already built 7-figure businesses on top of Clay. Our community, with clubs in more than 70 cities, is our force multiplier, and tells us more about impact than any metric ever could. - All of these bets show we’re not racing anyone. We spent six years figuring out what and how we wanted to build. In an era of overnight successes and growth at all costs, it turns out that taking time to build something authentic can create a business with bigger impact & more growth than you'd think. Our creativity remains our greatest alpha. That will continue to show up in how we do our work, who we hire, and in our boldest bets coming up next year.

Hugging Face blogs will now feature articles from Team and Enterprise subs with 30+ seats! 🤩 This has been a proven source of impact and visibility for model releases! If you 🫵🏻 are from such a company reading this, bookmark this and use it. https://t.co/099bHThUOh

Anthropic Recap: Emergent Introspective Awareness in Large Language Models #Anthropic #AI https://t.co/O7y0Qk0QUt

Anthropic Launches Interviewer Tool to Explore AI Perspectives 🔑 Key Details: - Anthropic introduces a new tool, Anthropic Interviewer, for understanding user perspectives on AI. - A test was conducted with 1,250 professionals, revealing optimistic views on AI’s role in work. - Findings indicate a balance between productivity gains and concerns about job displacement across various sectors. 💡 How It Helps: - Researchers: Enhanced insights from a large sample about human-AI interaction behaviors and sentiments. - Creatives: Tools that enable productivity boosts while navigating societal expectations and anxieties about AI. - Scientists: Opportunities to report expectations for AI in enhancing research and trust-building. 🌟 Why It Matters: Anthropic Interviewer's launch reflects a strategic push to center human feedback in AI development, addressing the evolving interplay between technological innovation and societal needs. This comprehensive understanding can strengthen AI's adoption across industries while minimizing resistance, paving the way for responsible AI integration. Read more: https://t.co/F3993IY4Ql @AnthropicAI Video Credit: The original article

@NarcissusWaters @scorpio8675309 @PhysInHistory Nice use of AI for all your responses kid/bot no one said it was a utopia. U know #Natives had systems of balances diplomacy n oversight B4 white people. How about the fairy tale of civilized white society that weren't inbred? Noble savage is the most obvious AI trope ever. #NDN https://t.co/6TFanisdvr

Anthropic just built an AI that interviews humans about, you guessed it, AI, turning 1,250 chats into a user research factory with feelings about productivity, trust, and identity. Here is what people actually said. https://t.co/jLqEW2OrUy

Tweeting felt exhausting, so we built something to fix it. Our tool creates natural tweets, smart replies, and matches any creator’s voice in seconds. Try it out https://t.co/99BM9vhXSy https://t.co/WmzI9VVHvL

@ShadowofEzra @AmericaShaman Hmm… I wonder why this is happening now… 🤔 https://t.co/fQCzkJYUEU

Imagine BEATING your Elderly Citizens for Peacefully Protesting a FOREIGN Entity Shame on you Germany 🇩🇪 https://t.co/K5D9LkoGgw

🚀 Major Qwen Code v0.2.2-v0.3.0 update summary! ✨ Two breakthrough features: 🎯 Stream JSON Support • `--output-format stream-json` for streaming output • `--input-format stream-json` for structured input • 3-tier adapter architecture + complete session management • Endless possibilities for SDK integration, automation tools, CI/CD pipelines! 🌍 Full Internationalization • Built-in EN/CN interface + custom language pack extensions • `/language ui zh-CN` - One-click UI switching • `/language output Chinese` - Set AI output language • Global developers welcome to contribute your local language packs! 🌏 🛡️ Security & Stability Leap Forward • Fixed memory exhaustion risks with 20MB buffer limits • Windows encoding fixes, goodbye character corruption • Enhanced ripgrep binary detection & cross-platform compatibility • Auth system refactor, optimized authType management • Integration test fixes, stable CI/CD pipeline • ModelScope provider support, stream_options handling • Prompt completion optimization, enhanced terminal notifications • Multiple core fixes, significantly improved overall stability! 💪 🔗 https://t.co/qqwj5nAO3Z
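
For anyone wondering how a `stream-json` style output might be consumed from a script, here is a minimal sketch. It assumes the stream is newline-delimited JSON (one object per line), which the post does not confirm. The helper name `iter_json_events` and the event fields `type` and `text` are hypothetical, and the size cap only echoes the 20MB buffer limit mentioned above rather than reproducing Qwen Code's actual behavior.

```python
import json
from typing import Iterable, Iterator

# Illustrative cap that echoes the 20MB limit in the post (an assumption, not Qwen Code's code).
MAX_LINE_BYTES = 20 * 1024 * 1024


def iter_json_events(lines: Iterable[str], max_line_bytes: int = MAX_LINE_BYTES) -> Iterator[dict]:
    """Yield one dict per non-empty line of newline-delimited JSON."""
    for raw in lines:
        line = raw.strip()
        if not line:
            continue  # tolerate blank separator lines
        if len(line.encode("utf-8")) > max_line_bytes:
            raise ValueError("event exceeds buffer limit")
        event = json.loads(line)
        if isinstance(event, dict):
            yield event  # non-object JSON values are skipped


# Hypothetical sample stream; real field names may differ.
sample = [
    '{"type": "text", "text": "Hello"}\n',
    "\n",
    '{"type": "text", "text": " world"}\n',
    '{"type": "done"}\n',
]
events = list(iter_json_events(sample))
reply = "".join(e.get("text", "") for e in events if e.get("type") == "text")
print(reply)
```

In practice `lines` could be the stdout of a subprocess read line by line, so each event is handled as soon as it arrives instead of after the whole run finishes.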

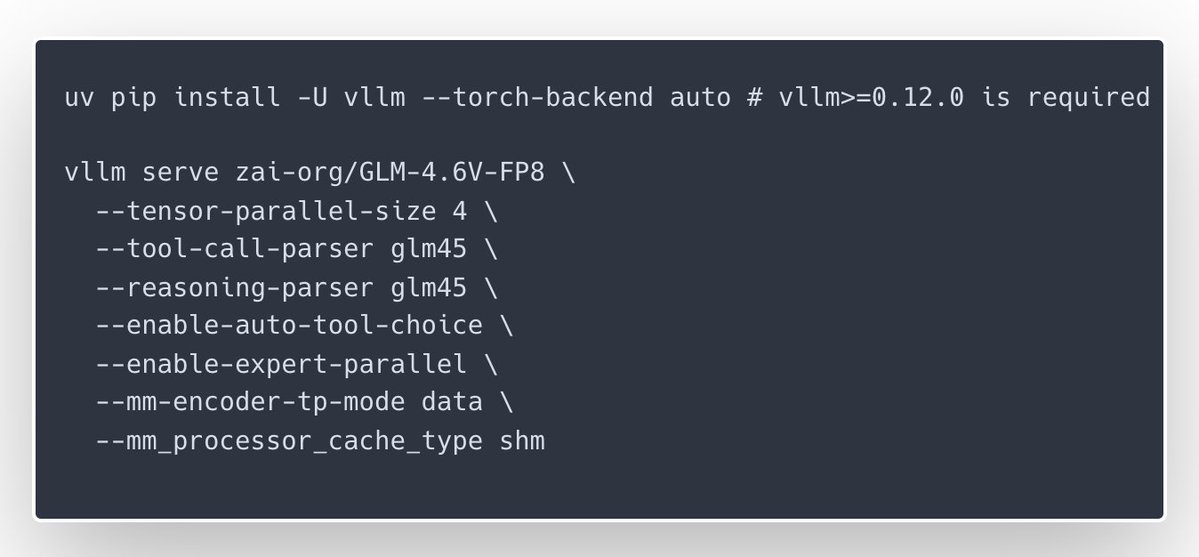

🎉Congrats to the @Zai_org team on the launch of GLM-4.6V and GLM-4.6V-Flash — with day-0 serving support in vLLM Recipes for teams who want to run them on their own GPUs. GLM-4.6V focuses on high-quality multimodal reasoning with long context and native tool/function calling, while GLM-4.6V-Flash is a 9B variant tuned for lower latency and smaller-footprint deployments; our new vLLM Recipe ships ready-to-run configs, multi-GPU guidance, and production-minded defaults. If you’re building inference services and want GLM-4.6V in your stack, start here: https://t.co/NhHT6iey6C

GLM-4.6V Series is here🚀 - GLM-4.6V (106B): flagship vision-language model with 128K context - GLM-4.6V-Flash (9B): ultra-fast, lightweight version for local and low-latency workloads First-ever native Function Calling in the GLM vision model family Weights: https://t.co/vKmNo

(1/n) Tiny-A2D: An Open Recipe to Turn Any AR LM into a Diffusion LM Code (dLLM): https://t.co/Nv7d1t8Qin Checkpoints: https://t.co/rpibkb2Xfq With dLLM, you can turn ANY autoregressive LM into a diffusion LM (parallel generation + infilling) with minimal compute. Using this recipe, we built a 🤗collection of the smallest diffusion LMs that work well in practice. Key takeaways: 1. Finetuned on Qwen3-0.6B, we obtain the strongest small (~0.5/0.6B) diffusion LMs to date. 2. The base AR LM matters: Investing compute in improving the base AR model is potentially more efficient than scaling compute during adaptation. 3. Block diffusion (BD3LM) generally outperforms vanilla masked diffusion (MDLM), especially on math-reasoning and coding tasks.
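
To make "parallel generation + infilling" concrete, here is a toy sketch of the masking idea behind masked diffusion LMs: a forward step that masks tokens at random, and a reverse loop that fills several masked positions per step. This is not the dLLM code or the Tiny-A2D recipe. `MASK`, `mask_tokens`, `denoise`, and the stand-in `oracle` predictor are hypothetical names, and a real model would predict tokens with a network (and usually pick positions by confidence) rather than look them up.

```python
import random

MASK = "<mask>"  # hypothetical mask symbol


def mask_tokens(tokens, ratio, rng):
    """Forward process: replace each token with MASK independently with probability `ratio`."""
    return [MASK if rng.random() < ratio else tok for tok in tokens]


def denoise(tokens, predict, per_step=2):
    """Reverse process: fill up to `per_step` masked positions per step until none remain."""
    tokens = list(tokens)
    steps = 0
    while MASK in tokens:
        masked = [i for i, tok in enumerate(tokens) if tok == MASK]
        # Predict the chosen positions from the same context, then write them together.
        preds = {i: predict(tokens, i) for i in masked[:per_step]}
        for i, tok in preds.items():
            tokens[i] = tok
        steps += 1
    return tokens, steps


target = "the cat sat on the mat".split()


def oracle(tokens, i):
    # Stand-in for a trained model: looks up the answer instead of predicting it.
    return target[i]


rng = random.Random(0)
noisy = mask_tokens(target, 0.5, rng)
restored, steps = denoise(noisy, oracle)

# Infilling: the unmasked tokens on both sides stay fixed and act as context.
infilled, infill_steps = denoise(["the", MASK, MASK, "on", "the", "mat"], oracle)
print(noisy, restored, steps, infilled)
```

An autoregressive decoder would need one step per token. Here two positions are filled per step, and the infilling call shows why left and right context can both be used.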

Releasing jina-VLM: our new 2B vision language model achieves SOTA on multilingual visual question answering and document understanding among open 2B-scale VLMs. https://t.co/QDZvAt6Wux

A toolkit for building agents that watch, listen, and understand video. Low latency by design. Open source. Production ready. Vision Agents lets you build real time video AI that works with your models and your edge layer. Supports YOLO, Moondream, Cartesia, Deepgram, ElevenLabs, HeyGen, Gemini, OpenAI, and more. Quick model switching. Easy to use API. Perfect for coaching tools, collaboration apps, avatars, and robotics.

@prior_labs Job openings: https://t.co/mbp8ZG4RKj

I don't think there's a more diverse and international platform in AI than @huggingface! Current trending models are coming from all over the world in all sorts of modalities & sizes. That is AI maturing at the speed of light! https://t.co/N0hMmFMZfG

🤗 Give GLM‑4.6V a try on @huggingface , supported by Novita. https://t.co/Ps4awZWZRn

may I present https://t.co/9i3jTgUIgn

Anyone who skips @billions_ntwk will regret it. This network is made for real humans, not the noise. I'm locked on $BILL @jgonzalezferrer https://t.co/GY81iY4VCD

Workshop confirmed! Level up your AI creative skills at DevFest Cairo. Discover how to blend image editing, voice, and video into stunning assets using Google AI. Feeling bold? Bring your best profile photos, the stage is yours. #GoogleDeveloperExpert #AI https://t.co/bL8upyqhgb

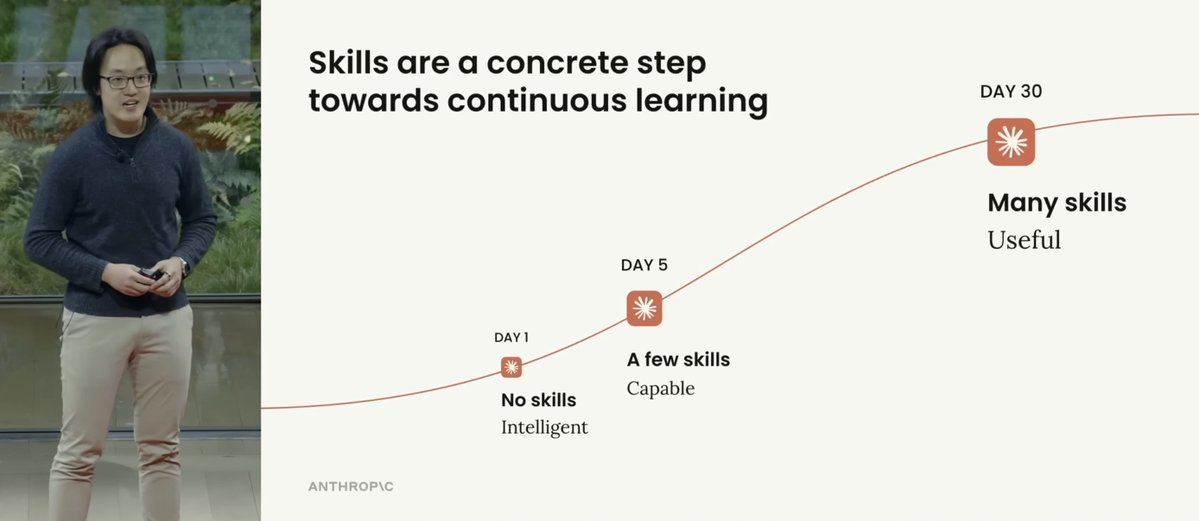

I love this figure from Anthropic's new talk on "Skills > Agents". Here are my notes: The more skills you build, the more useful Claude Code gets. And it makes perfect sense. Procedural knowledge and continuous learning for the win! Skills essentially are the way you make Claude Code more knowledgeable over time. This is why I had argued that Skills is a good name for this functionality. Claude Code acquires new capabilities from domain experts (they are the ones building skills). Claude Code can evolve the skills as needed and forget the ones it doesn't need anymore. It's a collaborative effort, which can easily be expanded to entire teams, communities, and orgs (via plugins). Skills are particularly useful for workflows where information and requirements constantly change. Finance, code, science, and human-in-the-loop workflows are all great use cases for Skills. You can build new Skills using the built-in skill creation tool, so you are always building new skills with all the best practices. Or you can do what I did, which is build my own skill creator to build custom skills catered to the work I do. Just more levels of customization that Skills also enables. Skills flexibility enables future capabilities to be easily integrated everywhere. Competitors don't have anything remotely close to this type of ecosystem. The deep understanding of Anthropic engineers on the importance of better context management tools and agent harnesses is something to admire. Very bullish on Claude Code.

"This book changed me. We are takers. We take from each other. We take from the animals. We take from the land..." #nativeamericans https://t.co/ElnFZUjmCK https://t.co/rDRALlbcbP

“We depend on nature not only for our physical survival, we also need nature to show us the way home, the way out of the prison of our own minds.” ~ E. Tolle, https://t.co/AcqZ03kp5o