Your curated collection of saved posts and media

Which way western woke? https://t.co/b5es8rWIab

SpaceX has completed its 550th booster landing https://t.co/Hau0uZjDSt

Wishing you a Happy Hanukkah from the ellipse of the White House. Even in the age of so much darkness we can rejoice together with our families for the festival of lights. https://t.co/TMJVlvN9W3

Social media are full of misinformation about AI history. To all "AI influencers:" before you post your next piece, take history lessons from the AI Blog, with chapters on: Who invented artificial neural networks? 1795-1805 Who invented deep learning? 1965 Who invented backpropagation? 1676-1970 Who invented convolutional neural nets? 1979-1988 Who invented generative adversarial networks? 1990 Who invented Transformer neural networks? 1991-2017 Who invented deep residual learning? 1991-2015 Who invented neural knowledge distillation? 1991 Who invented the transistor? 1925 Who invented the integrated circuit? 1949 Who created the general purpose computer? 1936-1941 Who founded theoretical CS and AI theory? 1931-34 And many more ... https://t.co/nZdKsslmJj

These AI renovations of abandoned places are so satisfying to watch (from kouyang1 on IG) https://t.co/67phw9uIXN

Very detailed article by @jetscott that talks about all the #smartglasses available at the moment, their strengths, their challenges, and their evolutions for the next year. A very good read for the weekend! https://t.co/iKB3Q6iflz #ArtificialIntelligence #Meta #Google https://t.co/prEmHliW3Z

And that is assuming your chat doesn't just end with this error. I am still unsure why they decided to go the compacting route in the way that they did. Conversations don't compress into tasks and files in the same way that coding projects do. https://t.co/KUFfVuQem3

MrBeast Is Building a Financial Empire as Beast Industries Expands Into Banking and Mobile Services https://t.co/RqOW2KzmiY @MrBeast @castle_crypto

Want job security in the age of AI? Get a state license – any state license https://t.co/CvOHpkbb7p

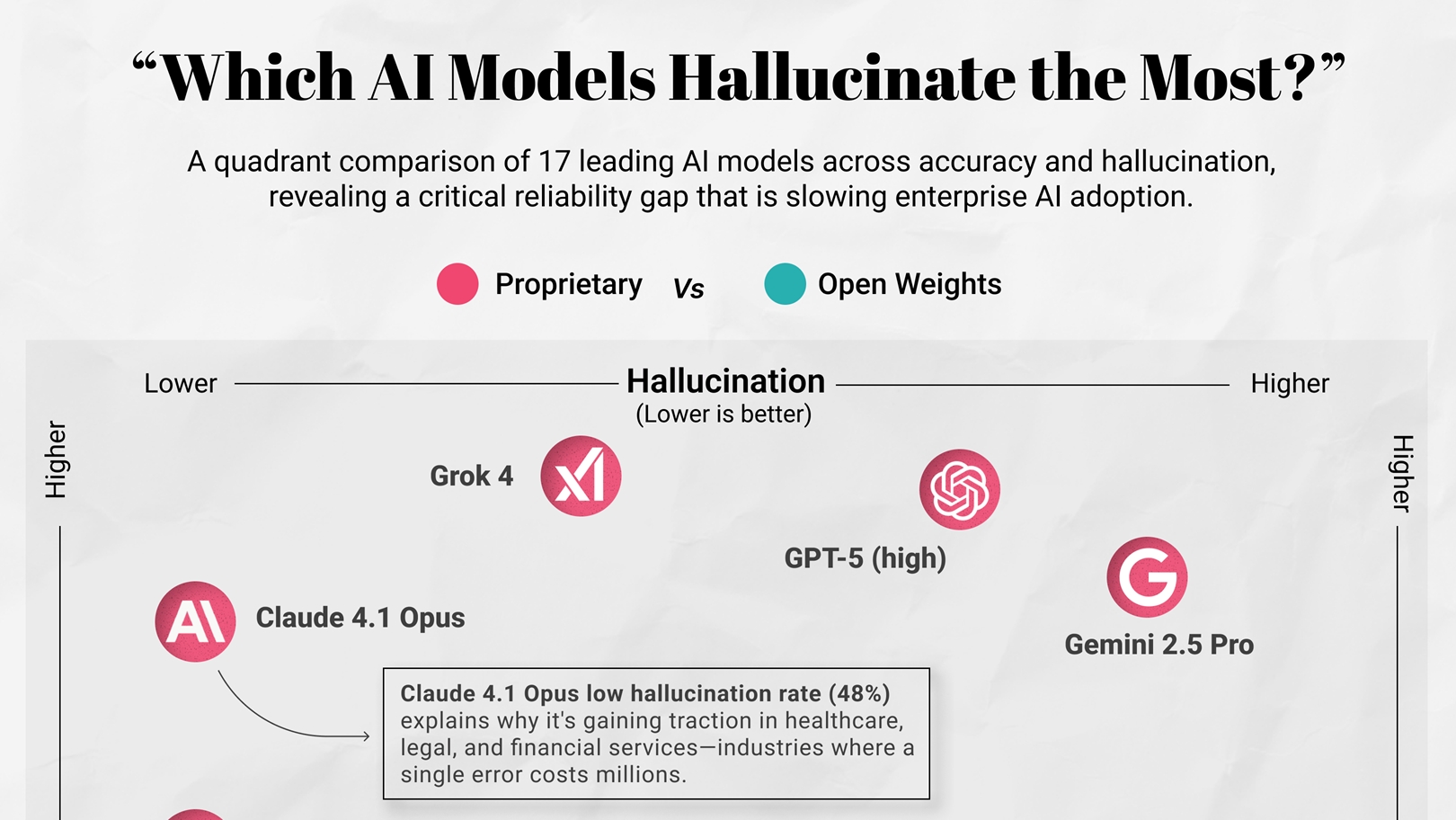

Which AI Models Hallucinate the Most? https://t.co/gYjKzdIyvM @visualcap

Believe me when I say Hollywood is cooked. Here is a trailer I created in 20 minutes using @midjourney and @Kling_ai This is a movie I'd LOVE to see on the big screen. I'm tired of eating whatever the next big franchise has to offer me. https://t.co/M64k5PLyFV

Real user feedback matters in model evaluation. ✨GPT-5.2 Instant, meant for everyday work, is #1 on @yupp_ai’s Text Leaderboard while GPT-5.2 (High) is #1 on our SVG Leaderboard. @openai’s strategy of releasing model variants suited to the task looks sound. Congrats @openai! 🎉 https://t.co/4TX7ffVvuV

GPT-5.2 is now rolling out to everyone. https://t.co/nfubPwnIIw

I was watching Curious George at full volume with the windows wide open, and now this https://t.co/pb3pvSpBiU

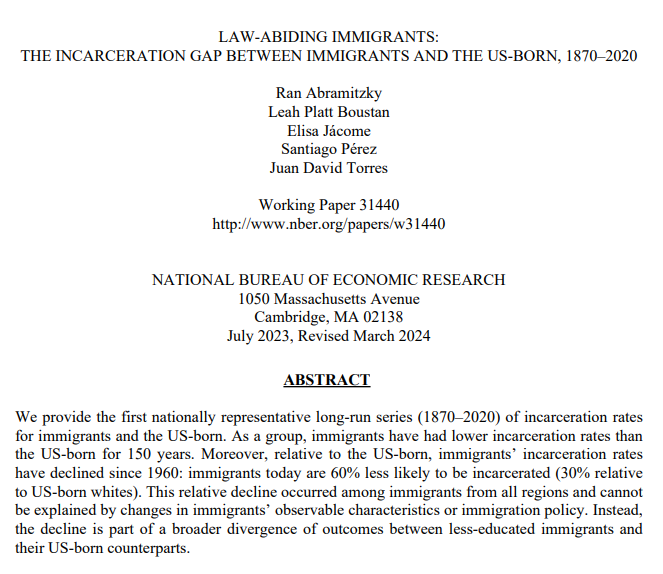

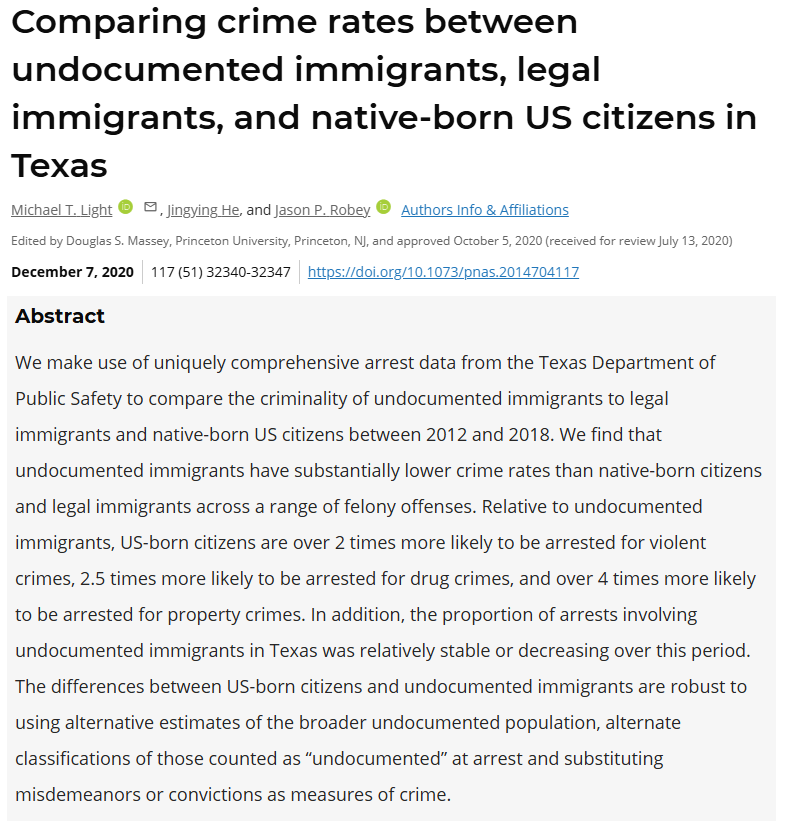

per capita, legal immigrants to america commit less crime than the native-born population. even illegal immigrants commit less crime (not counting the initial crime of entering illegally) turns out, the right-wing narrative about immigration is false, at least for america. https://t.co/i3pgsUk0jE

Thomas Sowell on Gun Control 🔫 https://t.co/vBFWmXa5B0

Adaptive retrieval is the way to go! And this RouteRAG paper shows why. Let's talk about it: RAG systems have a retrieval problem. The default approach to multi-hop reasoning today relies on fixed retrieval pipelines. It typically involves fetching text + maybe graph data, and hoping everything is retrieved in one shot. But the reality is that complex questions and real-world tasks require adaptive retrieval. Sometimes you need text. Sometimes you need relational structure from a graph. Sometimes it is best to use both. And it's no secret that graph retrieval is expensive, so retrieving it unnecessarily wastes compute. This new research introduces RouteRAG, an RL-based framework that teaches LLMs to make adaptive retrieval decisions during reasoning: when to retrieve, what source to retrieve from, and when to stop. The model learns a unified generation policy through two-stage training. > Stage 1 optimizes for answer correctness, establishing reasoning capability. > Stage 2 adds an efficiency reward that discourages unnecessary retrieval, teaching the model to balance accuracy against computational cost. The action space includes three retrieval modes: passage-only, graph-only, or hybrid. The model dynamically selects based on evolving query needs. Text retrieval works well for simple questions. Graph retrieval shines for multi-hop reasoning. The policy learns when each is appropriate. Results across five QA benchmarks: RouteRAG-7B achieves 60.6 average F1, outperforming Search-R1 (56.8 F1) despite being trained on only 10k examples versus 170k. On multi-hop datasets like 2Wiki, it reaches 64.6 F1 compared to 58.9 for Search-R1. The efficiency gains are also substantial. RouteRAG-7B reduces average retrieval turns by 20% compared to training without the efficiency reward, while actually improving accuracy by 1.1 F1 points. So we get the best of both worlds: fewer retrieval calls and better answers. 
And here is something exciting: Small models also approach large model performance. RouteRAG with Qwen2.5-3B surpasses several graph-based RAG systems built on GPT-4o-mini, suggesting that improving the retrieval policy can be as impactful as scaling the backbone. Teaching models when and what to retrieve through RL yields more efficient and accurate multi-hop reasoning than scaling training data or model size alone. Paper: https://t.co/a4J6oAX0GC Learn to build RAG and effective AI Agents in our academy: https://t.co/zQXQt0PMbG
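
A rough way to picture the two-stage reward described in the RouteRAG post is the sketch below. It is not the paper's implementation: the function names, the token-level F1, the `max_turns` cap, and the `efficiency_weight` value are all assumptions made for illustration. Stage 1 scores only answer correctness; stage 2 adds a bonus that shrinks with every retrieval turn.

```python
from collections import Counter

# The three retrieval modes named in the post (the policy's action space).
RETRIEVAL_MODES = ("passage", "graph", "hybrid")


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a predicted and a reference answer."""
    pred = prediction.lower().split()
    ref = reference.lower().split()
    if not pred or not ref:
        return float(pred == ref)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def stage1_reward(prediction: str, reference: str) -> float:
    """Stage 1: reward answer correctness only."""
    return token_f1(prediction, reference)


def stage2_reward(prediction: str, reference: str, retrieval_turns: int,
                  max_turns: int = 4, efficiency_weight: float = 0.2) -> float:
    """Stage 2: correctness plus a bonus for using fewer retrieval turns.

    Assumption: the bonus is granted only when the answer has non-zero F1,
    so the policy cannot earn reward by skipping retrieval and answering wrongly.
    """
    accuracy = token_f1(prediction, reference)
    used_fraction = min(retrieval_turns, max_turns) / max_turns
    bonus = efficiency_weight * (1.0 - used_fraction) if accuracy > 0 else 0.0
    return accuracy + bonus
```

With these hypothetical settings, a correct answer reached in one retrieval turn scores higher than the same answer reached in three, which is the trade-off the efficiency reward is meant to teach.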

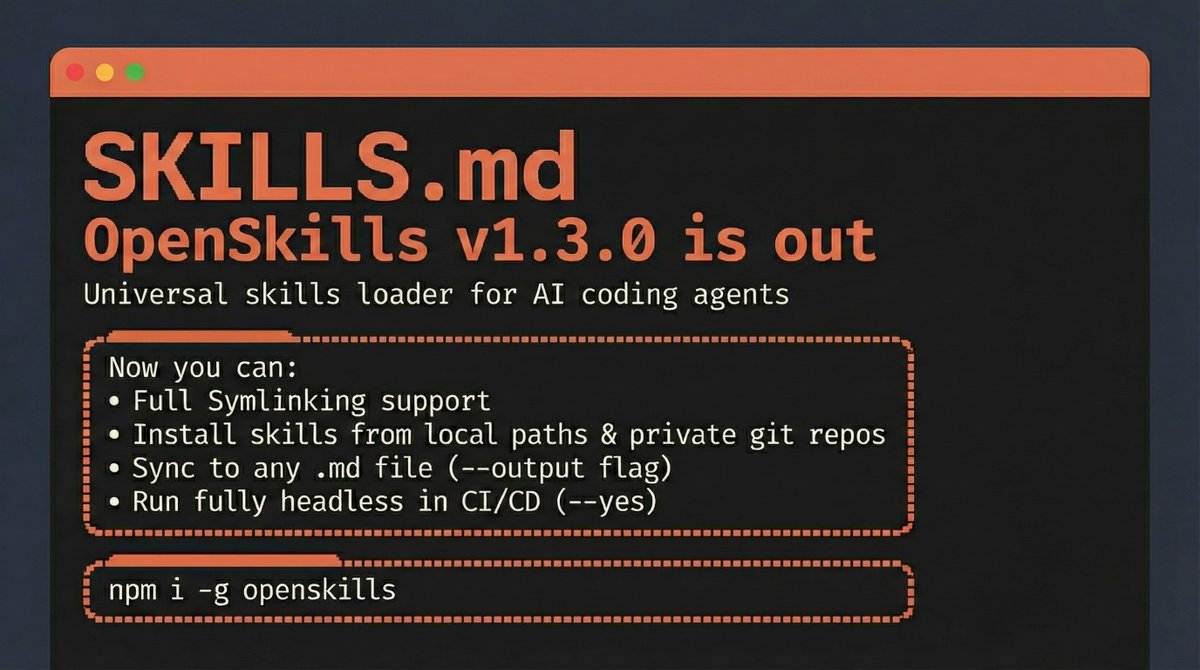

OpenSkills v1.3.0 is out 🚀 The Universal Skills loader for AI Coding Agents Now you can: • Use Symlinks with your skills • Install skills from local paths & private git repos • Sync to any .md file (--output flag) • Run fully headless in CI/CD (--yes) npm i -g openskills https://t.co/Rt0le0Akxy

Jamie Dimon says soft skills like emotional intelligence and communication are vital as AI eliminates roles https://t.co/XBm2hITjVp @jpmorgan @fortunemagazine

At this small buyout firm, talking about AI for cost-cutting is off-limits https://t.co/tOUS8W7n7y @nicollsanddimes @businessinsider

After 3 tech layoffs, I knew I had to lean into being a founder https://t.co/Bp0QhYDrKu @TimSParadis @businessinsider

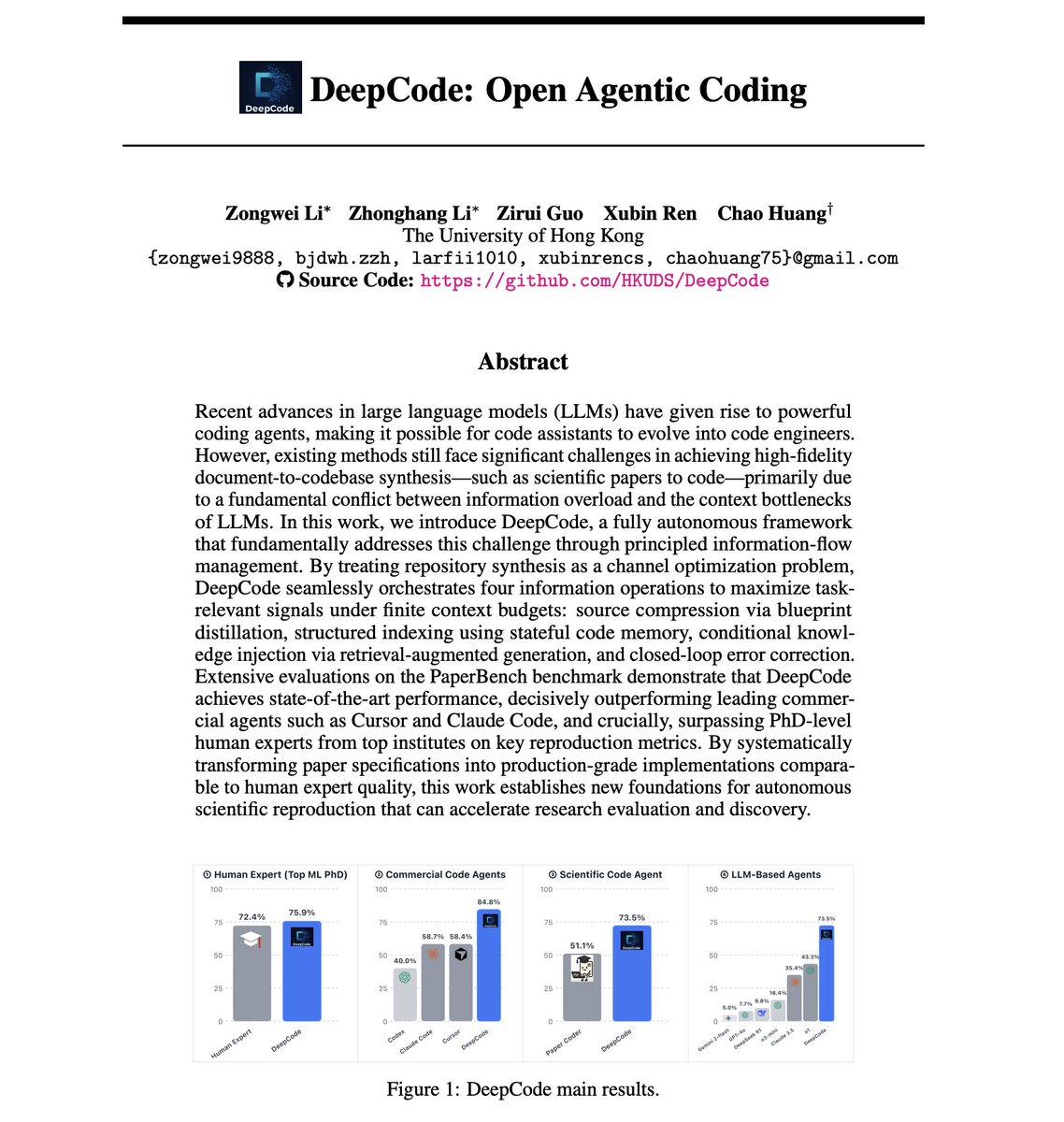

DeepCode: Open Agentic Coding AI coding agents still can't reliably turn research papers into working code. The best LLM agents achieve only 42% replication scores on scientific papers, while human PhD experts hit 72%. But the problem isn't model capability. This new paper suggests it might have to do with information management. It introduces DeepCode, an open agentic coding framework that treats repository synthesis as a channel optimization problem, maximizing task-relevant signals under finite context budgets. How does this work? Scientific papers are high-entropy specifications with scattered multimodal constraints, equations, pseudocode, and hyperparameters. Naive approaches that concatenate raw documents with growing code history cause channel saturation, where redundant tokens mask critical algorithmic details and signal-to-noise ratio collapses. DeepCode addresses this through four orchestrated information operations: 1) Source compression via blueprint distillation: A planning agent transforms unstructured papers into structured implementation blueprints with file hierarchies, component specifications, and verification protocols. 2) Structured indexing using stateful code memory: As files are generated, the system maintains compact memory entries of the evolving codebase to preserve cross-file consistency without context saturation. 3) Conditional knowledge injection via RAG: The system bridges implicit specification gaps by pulling standard implementation patterns from external knowledge bases. 4) Closed-loop error correction: A validation agent treats runtime execution feedback as corrective signals to identify and fix bugs iteratively. The results on OpenAI's PaperBench benchmark are impressive. DeepCode achieves 73.5% replication score, a 70% relative improvement over the best LLM agent baseline (o1 at 43.3%). It decisively outperforms commercial agents: Cursor at 58.4%, Claude Code at 58.7%, and Codex at 40.0%. 
Most notably, DeepCode surpasses human experts. On a 3-paper subset evaluated by ML PhD students from Berkeley, Cambridge, and Carnegie Mellon, humans scored 72.4%. DeepCode scored 75.9%. Principled information-flow management yields significantly larger performance gains than merely scaling model size or context length. The framework is fully open source. Paper: https://t.co/LXVKsxOXfi Learn to build effective AI Agents here: https://t.co/JBU5beIoD0
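
The "stateful code memory" idea from the DeepCode post (keep compact entries for generated files instead of re-feeding full source) can be sketched roughly as follows. This is a toy illustration, not DeepCode's actual code: the `CodeMemory` class, its methods, and the character-based budget are hypothetical, and it only summarizes top-level Python definitions.

```python
import ast
from dataclasses import dataclass, field


@dataclass
class CodeMemory:
    """Hypothetical compact memory: one short signature summary per generated file."""
    entries: dict[str, str] = field(default_factory=dict)

    def record(self, path: str, source: str) -> None:
        """Store the top-level class and function signatures of a file, not its body."""
        names = []
        for node in ast.parse(source).body:
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                args = ", ".join(a.arg for a in node.args.args)
                names.append(f"def {node.name}({args})")
            elif isinstance(node, ast.ClassDef):
                names.append(f"class {node.name}")
        self.entries[path] = "; ".join(names) or "(no top-level definitions)"

    def context(self, budget_chars: int) -> str:
        """Return as many summaries as fit in the budget, in insertion order."""
        lines, used = [], 0
        for path, summary in self.entries.items():
            line = f"{path}: {summary}"
            if used + len(line) + 1 > budget_chars:
                break
            lines.append(line)
            used += len(line) + 1
        return "\n".join(lines)
```

A generation loop could call `record` after each file is written and pass `context(...)` into the next prompt, so later files see earlier interfaces without the full code history.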

Sign if you know/remember https://t.co/bfJo4lplht

Make sure to set up the all new 𝕏 and Grok widgets on your lock screen for instant access. https://t.co/tkkryFdh3t

WOW! An absolute ocean of Chileans have flooded the streets to celebrate the end of socialist rule in their country. A capitalist revolution is ensuing all over the Americas. https://t.co/B5RGsIY2AM

@Wizarab10 Ohh!! Oh babyyyyy Sir Dickson you like Native very well oo Do you wear Jeans and shorts? https://t.co/YoCGEmfj1O

Amazon pulls AI recap from Fallout TV show after it made several mistakes https://t.co/PYvJIJuqZW @liv_mcmahon @bbctech @bbc