@omarsar0

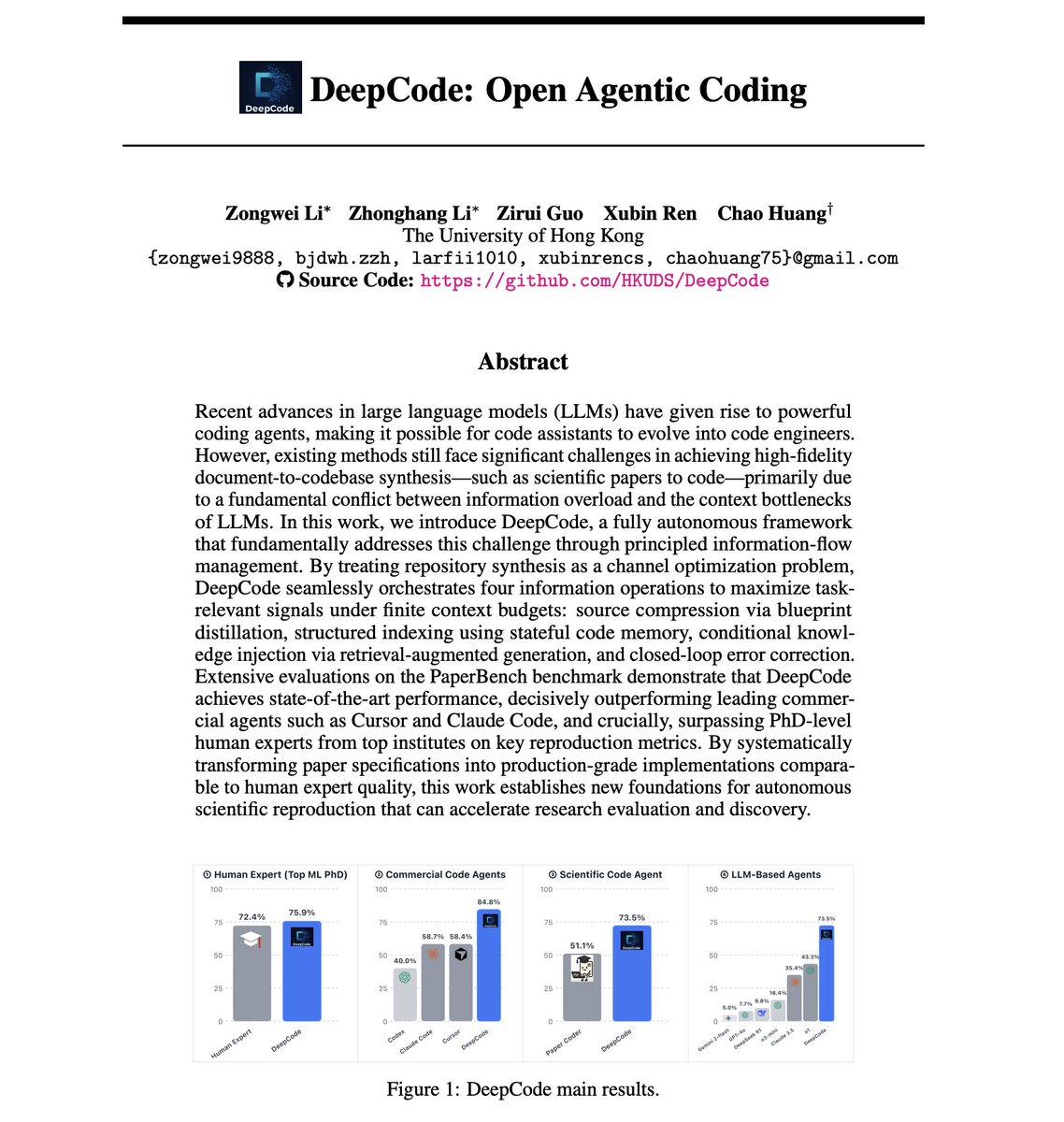

DeepCode: Open Agentic Coding

AI coding agents still can't reliably turn research papers into working code. The best LLM agents achieve only 42% replication scores on scientific papers, while human PhD experts hit 72%.

But the bottleneck may not be model capability. This new paper argues that it is largely a matter of information management. It introduces DeepCode, an open agentic coding framework that treats repository synthesis as a channel optimization problem: maximizing task-relevant signal under a finite context budget.

How does this work? Scientific papers are high-entropy specifications, with multimodal constraints scattered throughout: equations, pseudocode, and hyperparameters. Naive approaches that concatenate raw documents with a growing code history cause channel saturation: redundant tokens mask critical algorithmic details, and the signal-to-noise ratio collapses.

DeepCode addresses this through four orchestrated information operations:
1) Source compression via blueprint distillation: a planning agent transforms unstructured papers into structured implementation blueprints with file hierarchies, component specifications, and verification protocols.
2) Structured indexing via stateful code memory: as files are generated, the system maintains compact memory entries describing the evolving codebase, preserving cross-file consistency without saturating the context.
3) Conditional knowledge injection via RAG: the system fills implicit gaps in the specification by retrieving standard implementation patterns from external knowledge bases.
4) Closed-loop error correction: a validation agent treats runtime execution feedback as a corrective signal, identifying and fixing bugs iteratively.

The results on OpenAI's PaperBench benchmark are impressive. DeepCode achieves a 73.5% replication score, a roughly 70% relative improvement over the best LLM agent baseline (o1 at 43.3%; 73.5 / 43.3 ≈ 1.70). It decisively outperforms commercial agents: Cursor at 58.4%, Claude Code at 58.7%, and Codex at 40.0%.
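The four information operations can be illustrated with a toy Python pipeline. This is a minimal sketch under loudly stated assumptions, not DeepCode's implementation: where the paper uses LLM agents, the sketch substitutes simple string rules. The token budget, the `file:`/`hyperparameter:`/`verify:` line prefixes, the keyword-matched knowledge base standing in for RAG, and every name (`distill_blueprint`, `CodeMemory`, `inject_knowledge`, `build_context`, `validate_and_fix`, `toy_fixer`) are hypothetical.

```python
import re
from dataclasses import dataclass, field

# Hypothetical context budget, counted in whitespace-separated tokens for simplicity.
CONTEXT_BUDGET = 60

def count_tokens(text: str) -> int:
    return len(text.split())

# 1) Source compression (toy): keep structured spec lines, drop narrative prose.
SPEC_PREFIXES = ("file:", "hyperparameter:", "verify:")  # hypothetical markers

def distill_blueprint(paper: str) -> list[str]:
    return [line.strip() for line in paper.splitlines()
            if line.strip().lower().startswith(SPEC_PREFIXES)]

# 2) Stateful code memory (toy): one compact entry per generated file,
#    listing its top-level function names instead of the full source.
@dataclass
class CodeMemory:
    entries: dict[str, str] = field(default_factory=dict)

    def record(self, path: str, source: str) -> None:
        names = re.findall(r"^def (\w+)\(", source, flags=re.MULTILINE)
        self.entries[path] = f"{path} defines {', '.join(names) or 'nothing'}"

    def summary(self) -> list[str]:
        return list(self.entries.values())

# 3) Conditional knowledge injection (toy keyword lookup standing in for RAG):
#    a snippet is added only if the blueprint mentions its trigger term.
KNOWLEDGE_BASE = {
    "adam": "pattern: Adam update with bias-corrected first and second moments",
    "layernorm": "pattern: normalize over the last dim, then scale and shift",
}

def inject_knowledge(blueprint: list[str]) -> list[str]:
    text = " ".join(blueprint).lower()
    return [snippet for term, snippet in KNOWLEDGE_BASE.items() if term in text]

def build_context(blueprint, memory, knowledge, budget=CONTEXT_BUDGET):
    """Pack items in priority order and stop before the budget would overflow."""
    context, used = [], 0
    for item in [*blueprint, *memory.summary(), *knowledge]:
        cost = count_tokens(item)
        if used + cost > budget:
            break
        context.append(item)
        used += cost
    return context

# 4) Closed-loop error correction: run the code and feed any runtime error to a fixer.
def validate_and_fix(source: str, fixer, max_rounds: int = 3):
    for round_no in range(1, max_rounds + 1):
        try:
            exec(compile(source, "<generated>", "exec"), {})
            return source, round_no
        except Exception as err:  # the runtime error is the corrective signal
            source = fixer(source, err)
    raise RuntimeError(f"code still failing after {max_rounds} rounds")

def toy_fixer(source: str, err: Exception) -> str:
    """Hypothetical fixer that only knows how to add a missing math import."""
    match = re.search(r"name '(\w+)' is not defined", str(err))
    if isinstance(err, NameError) and match:
        return f"from math import {match.group(1)}\n" + source
    return source

# Usage on a made-up "paper": prose lines are dropped, spec lines are kept.
paper = """We propose a method that improves everything.
file: model.py
hyperparameter: Adam lr=3e-4
The related work section is long.
verify: loss decreases on toy data
"""
blueprint = distill_blueprint(paper)
memory = CodeMemory()
memory.record("model.py", "def forward(x):\n    return x\n\ndef loss(y):\n    return y\n")
knowledge = inject_knowledge(blueprint)
context = build_context(blueprint, memory, knowledge, budget=10)
fixed, rounds = validate_and_fix("result = sqrt(16)\n", toy_fixer)
print(context)  # ['file: model.py', 'hyperparameter: Adam lr=3e-4']
print(rounds)   # 2
```

Packing the blueprint first, then memory summaries, then retrieved snippets means that under a tight budget the highest-priority items survive and lower-priority ones are dropped instead of crowding them out, which is the intuition behind the channel-saturation argument. A real system would count tokens with a model tokenizer and use LLM agents for each step.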
Most notably, DeepCode surpasses human experts. On a 3-paper subset, ML PhD students from Berkeley, Cambridge, and Carnegie Mellon scored 72.4%, while DeepCode scored 75.9%.

The authors conclude that principled information-flow management yields significantly larger performance gains than merely scaling model size or context length. The framework is fully open source.

Paper: https://t.co/LXVKsxOXfi

Learn to build effective AI Agents here: https://t.co/JBU5beIoD0