Your curated collection of saved posts and media

Adaptive retrieval is the way to go! And this RouteRAG paper shows why. Let's talk about it:

RAG systems have a retrieval problem. The default approach to multi-hop reasoning today relies on fixed retrieval pipelines. It typically involves fetching text, maybe graph data as well, and hoping everything is retrieved in one shot.

But the reality is that complex questions and real-world tasks require adaptive retrieval. Sometimes you need text. Sometimes you need relational structure from a graph. Sometimes it is best to use both. And it's no secret that graph retrieval is expensive, so retrieving it unnecessarily wastes compute.

This new research introduces RouteRAG, an RL-based framework that teaches LLMs to make adaptive retrieval decisions during reasoning: when to retrieve, what source to retrieve from, and when to stop.

The model learns a unified generation policy through two-stage training:

- Stage 1 optimizes for answer correctness, establishing reasoning capability.
- Stage 2 adds an efficiency reward that discourages unnecessary retrieval, teaching the model to balance accuracy against computational cost.

The action space includes three retrieval modes: passage-only, graph-only, or hybrid. The model dynamically selects among them based on evolving query needs. Text retrieval works well for simple questions. Graph retrieval shines for multi-hop reasoning. The policy learns when each is appropriate.

Results across five QA benchmarks: RouteRAG-7B achieves 60.6 average F1, outperforming Search-R1 (56.8 F1) despite being trained on only 10k examples versus 170k. On multi-hop datasets like 2Wiki, it reaches 64.6 F1 compared to 58.9 for Search-R1.

The efficiency gains are also substantial. RouteRAG-7B reduces average retrieval turns by 20% compared to training without the efficiency reward, while actually improving accuracy by 1.1 F1 points. So we get the best of both worlds: fewer retrieval calls and better answers.

And here is something exciting: small models also approach large-model performance. RouteRAG with Qwen2.5-3B surpasses several graph-based RAG systems built on GPT-4o-mini, suggesting that improving the retrieval policy can be as impactful as scaling the backbone.

Teaching models when and what to retrieve through RL yields more efficient and accurate multi-hop reasoning than scaling training data or model size alone.

Paper: https://t.co/a4J6oAX0GC

Learn to build RAG and effective AI Agents in our academy: https://t.co/zQXQt0PMbG
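To make the two-stage reward idea in the RouteRAG post concrete, here is a minimal Python sketch. It is not the paper's formulation: the token-level F1 scorer, the `RETRIEVAL_COST` table, the `cost_weight` value, and the function names are all assumptions made for illustration. Stage 1 scores only answer correctness; stage 2 subtracts a penalty that grows with the retrieval calls made, with graph and hybrid calls assumed to cost more than passage calls.

```python
from collections import Counter

# Hypothetical per-call costs (not from the paper): graph and hybrid
# retrieval are assumed to be more expensive than passage retrieval.
RETRIEVAL_COST = {"passage": 1.0, "graph": 2.0, "hybrid": 3.0}


def token_f1(prediction: str, reference: str) -> float:
    """Token-level F1 between a predicted answer and a reference answer."""
    pred = prediction.lower().split()
    ref = reference.lower().split()
    if not pred or not ref:
        # Both empty counts as a match; exactly one empty counts as a miss.
        return float(pred == ref)
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)


def stage1_reward(prediction: str, reference: str) -> float:
    """Stage 1 (assumed form): reward answer correctness only."""
    return token_f1(prediction, reference)


def stage2_reward(prediction: str, reference: str,
                  retrieval_actions: list[str],
                  cost_weight: float = 0.05) -> float:
    """Stage 2 (assumed form): correctness minus a retrieval-cost penalty."""
    cost = sum(RETRIEVAL_COST[action] for action in retrieval_actions)
    return token_f1(prediction, reference) - cost_weight * cost


if __name__ == "__main__":
    cheap = stage2_reward("Paris", "paris", ["passage"])
    costly = stage2_reward("Paris", "paris", ["graph", "hybrid"])
    print(cheap > costly)  # the same answer earns less when it needed more retrieval
```

Under this sketch, two trajectories that reach the same answer are ranked by how much retrieval they used, which is the trade-off the post attributes to stage 2.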

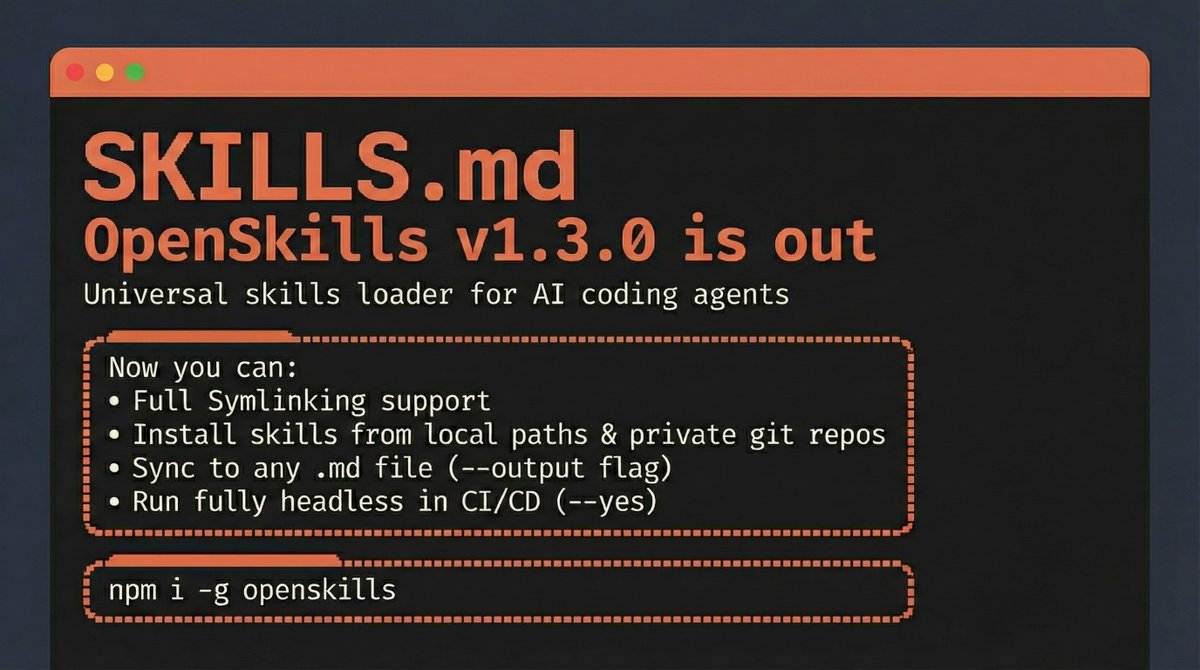

OpenSkills v1.3.0 is out 🚀 The Universal Skills loader for AI Coding Agents.

Now you can:
- Use symlinks with your skills
- Install skills from local paths & private git repos
- Sync to any .md file (--output flag)
- Run fully headless in CI/CD (--yes)

npm i -g openskills https://t.co/Rt0le0Akxy

Jamie Dimon says soft skills like emotional intelligence and communication are vital as AI eliminates roles https://t.co/XBm2hITjVp @jpmorgan @fortunemagazine

At this small buyout firm, talking about AI for cost-cutting is off-limits https://t.co/tOUS8W7n7y @nicollsanddimes @businessinsider

After 3 tech layoffs, I knew I had to lean into being a founder https://t.co/Bp0QhYDrKu @TimSParadis @businessinsider

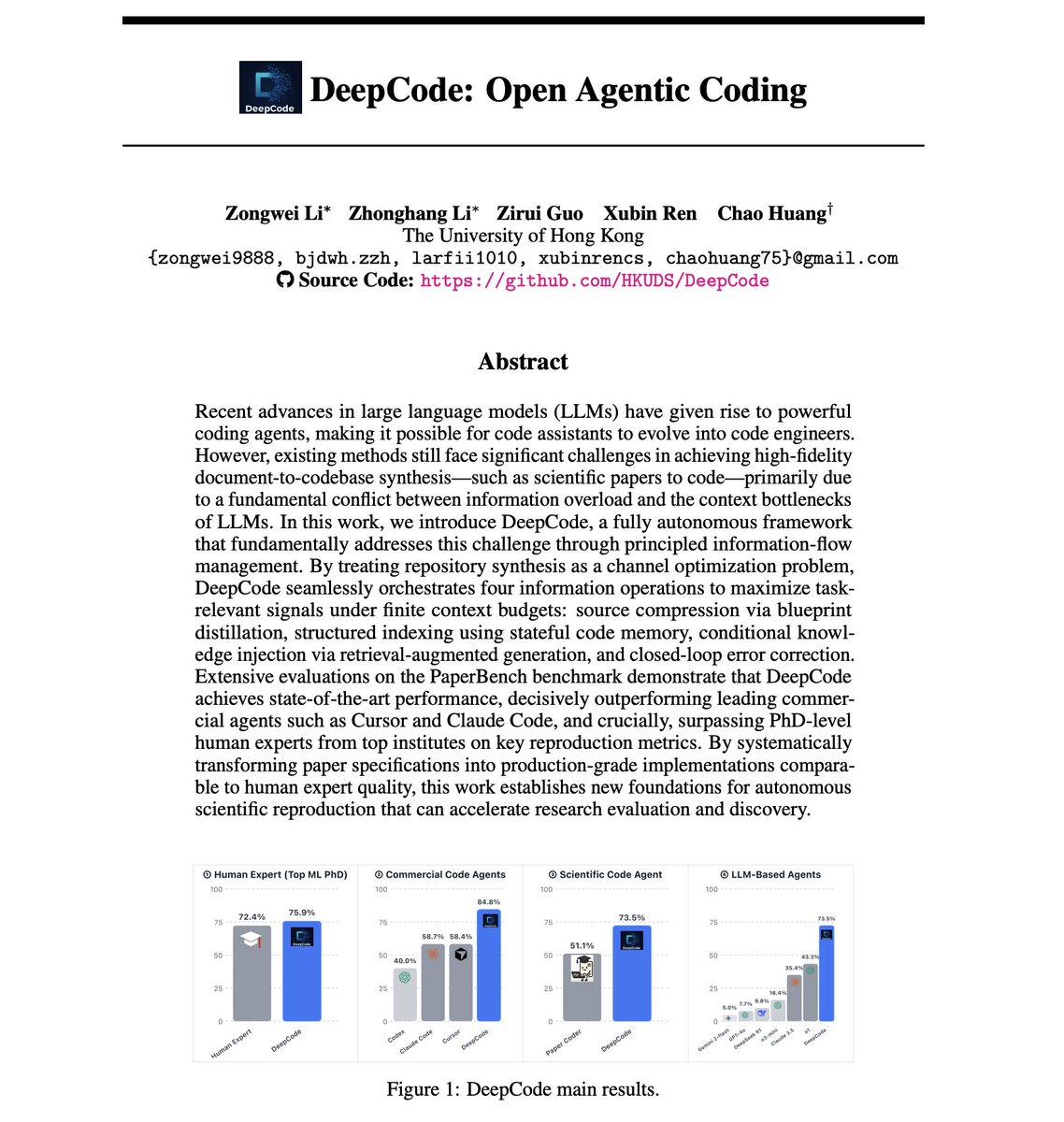

DeepCode: Open Agentic Coding

AI coding agents still can't reliably turn research papers into working code. The best LLM agents achieve only 42% replication scores on scientific papers, while human PhD experts hit 72%.

But the problem isn't model capability. This new paper suggests it may come down to information management.

It introduces DeepCode, an open agentic coding framework that treats repository synthesis as a channel optimization problem, maximizing task-relevant signals under finite context budgets.

How does this work?

Scientific papers are high-entropy specifications with scattered multimodal constraints, equations, pseudocode, and hyperparameters. Naive approaches that concatenate raw documents with growing code history cause channel saturation, where redundant tokens mask critical algorithmic details and the signal-to-noise ratio collapses.

DeepCode addresses this through four orchestrated information operations:

1) Source compression via blueprint distillation: A planning agent transforms unstructured papers into structured implementation blueprints with file hierarchies, component specifications, and verification protocols.
2) Structured indexing using stateful code memory: As files are generated, the system maintains compact memory entries of the evolving codebase to preserve cross-file consistency without context saturation.
3) Conditional knowledge injection via RAG: The system bridges implicit specification gaps by pulling standard implementation patterns from external knowledge bases.
4) Closed-loop error correction: A validation agent treats runtime execution feedback as corrective signals to identify and fix bugs iteratively.

The results on OpenAI's PaperBench benchmark are impressive. DeepCode achieves a 73.5% replication score, a 70% relative improvement over the best LLM agent baseline (o1 at 43.3%). It decisively outperforms commercial agents: Cursor at 58.4%, Claude Code at 58.7%, and Codex at 40.0%.

Most notably, DeepCode surpasses human experts. On a 3-paper subset evaluated by ML PhD students from Berkeley, Cambridge, and Carnegie Mellon, humans scored 72.4%. DeepCode scored 75.9%.

Principled information-flow management yields significantly larger performance gains than merely scaling model size or context length. The framework is fully open source.

Paper: https://t.co/LXVKsxOXfi

Learn to build effective AI Agents here: https://t.co/JBU5beIoD0
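The "70% relative improvement" quoted in the DeepCode post can be checked from the two scores the post gives. The short Python sketch below uses only those numbers (73.5% for DeepCode, 43.3% for the o1 baseline); the variable names are illustrative.

```python
# Scores as quoted in the post (percent replication score on PaperBench).
deepcode_score = 73.5
baseline_score = 43.3  # o1, described in the post as the best LLM agent baseline

# Absolute gain is measured in percentage points.
absolute_gain = deepcode_score - baseline_score

# Relative gain expresses the absolute gain as a fraction of the baseline.
relative_gain = absolute_gain / baseline_score

print(f"absolute gain: {absolute_gain:.1f} points")  # absolute gain: 30.2 points
print(f"relative gain: {relative_gain:.1%}")         # relative gain: 69.7%
```

The 69.7% result rounds to the 70% stated in the post.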

Sign if you know/remember https://t.co/bfJo4lplht

Make sure to set up the all-new 𝕏 and Grok widgets on your lock screen for instant access. https://t.co/tkkryFdh3t

WOW! An absolute ocean of Chileans have flooded the streets to celebrate the end of socialist rule in their country. A capitalist revolution is ensuing all over the Americas. https://t.co/B5RGsIY2AM

@Wizarab10 Ohh!! Oh babyyyyy Sir Dickson you like Native very well oo Do you wear Jeans and shorts? https://t.co/YoCGEmfj1O

Amazon pulls AI recap from Fallout TV show after it made several mistakes https://t.co/PYvJIJuqZW @liv_mcmahon @bbctech @bbc

With AI, @MIT researchers teach a robot to build furniture by just asking https://t.co/YVPz0y1ILE @therobotreport https://t.co/Pgnft1Unj4

@youwouldntpost it begins... https://t.co/jcrQiTbVbS

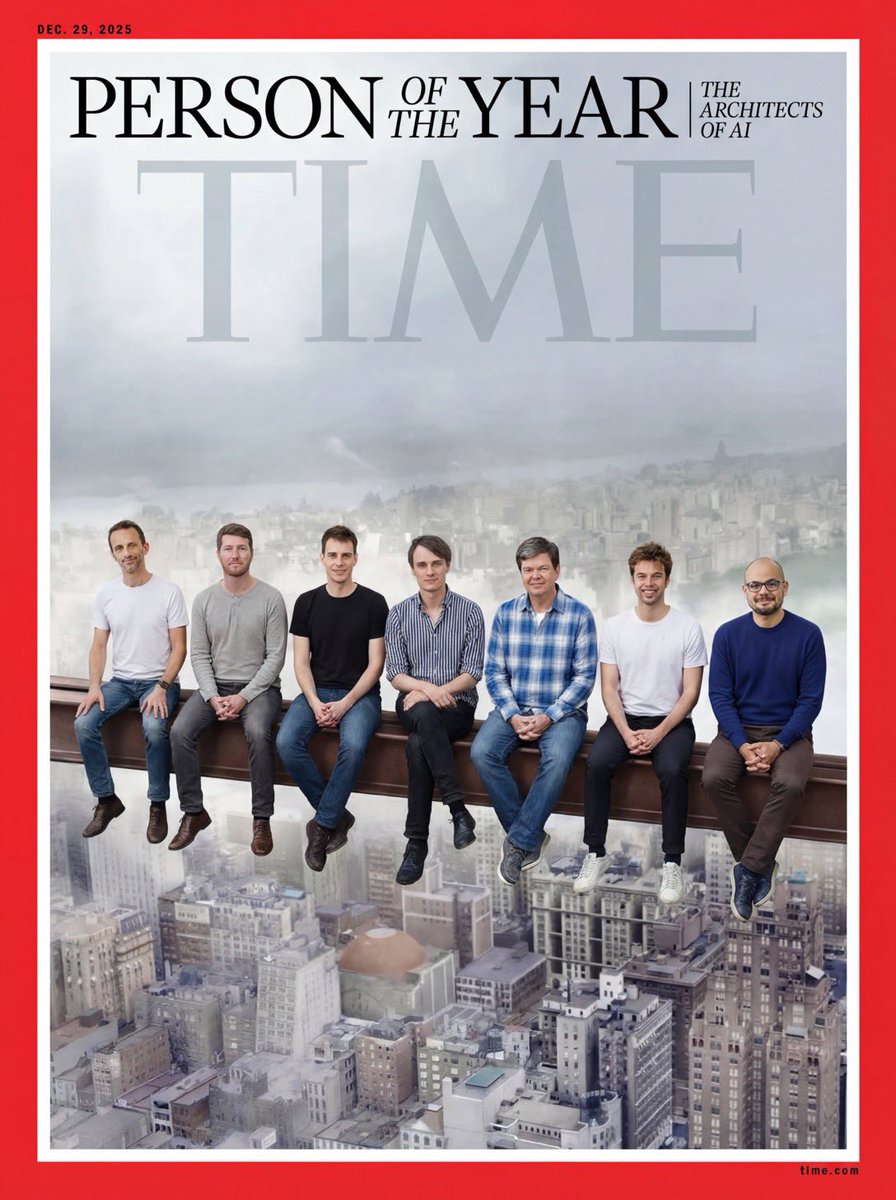

🇪🇺 We're voting for this cover 🙌🏼!! Thanks @roxannevarza @ClaraChappaz and 👏🏼 @itsrwanman for this match-up! Story: "Thankfully we have @demishassabis in the photo." & @ClementDelangue @julien_c @Thom_Wolf @arthurmensh @ptrkprz @ylecun @antonosika @FabianHedin @ASMLcompany @Lovable https://t.co/u8npbbj1qG

Do LLMs really understand? To cut through the noise around AI, we brought in two experts shaping the field: Yann LeCun (@ylecun) and Adam Brown of @GoogleDeepMind. Watch them in conversation with @JannaLevin about the true intelligence of artificial minds. https://t.co/LnzQGPUIwA https://t.co/f6gbwJSELF

It was a joy to watch this substantive discussion. @JannaLevin for @PioneerWorks_ talking to @ylecun and Adam Brown of Google DeepMind, about many things AI! And a surprise plant in the audience toward the end shouts out questions about machine consciousness! https://t.co/gIWX6FtPv8

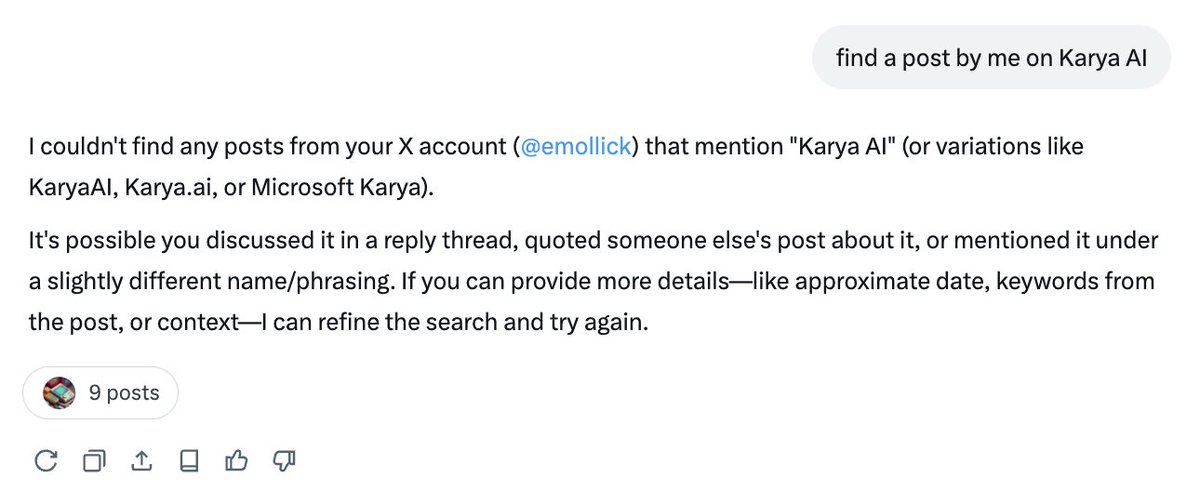

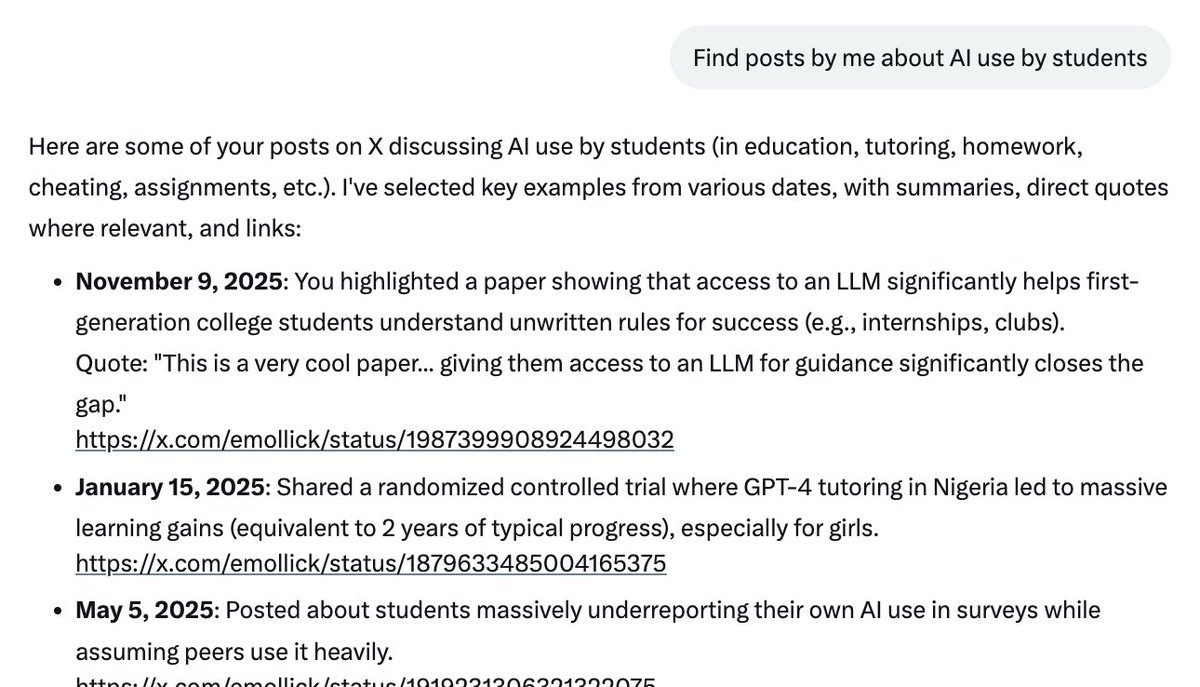

I've never had a reason to really try Grok so I've missed out on all the insanity. It turns out that @grok thinks I'm @emollick. I'm flattered to be confused with Ethan, but also... what? How does a bug like this even arise?! https://t.co/pp6WcNXDi7

Update: I asked it what my username is and it answered correctly (after looking at 19 webpages?!) and now I can't reproduce the bug even in a fresh session. Oh well. You know what they say, everyone can be @emollick for 15 minutes. https://t.co/rSflSk5zT3

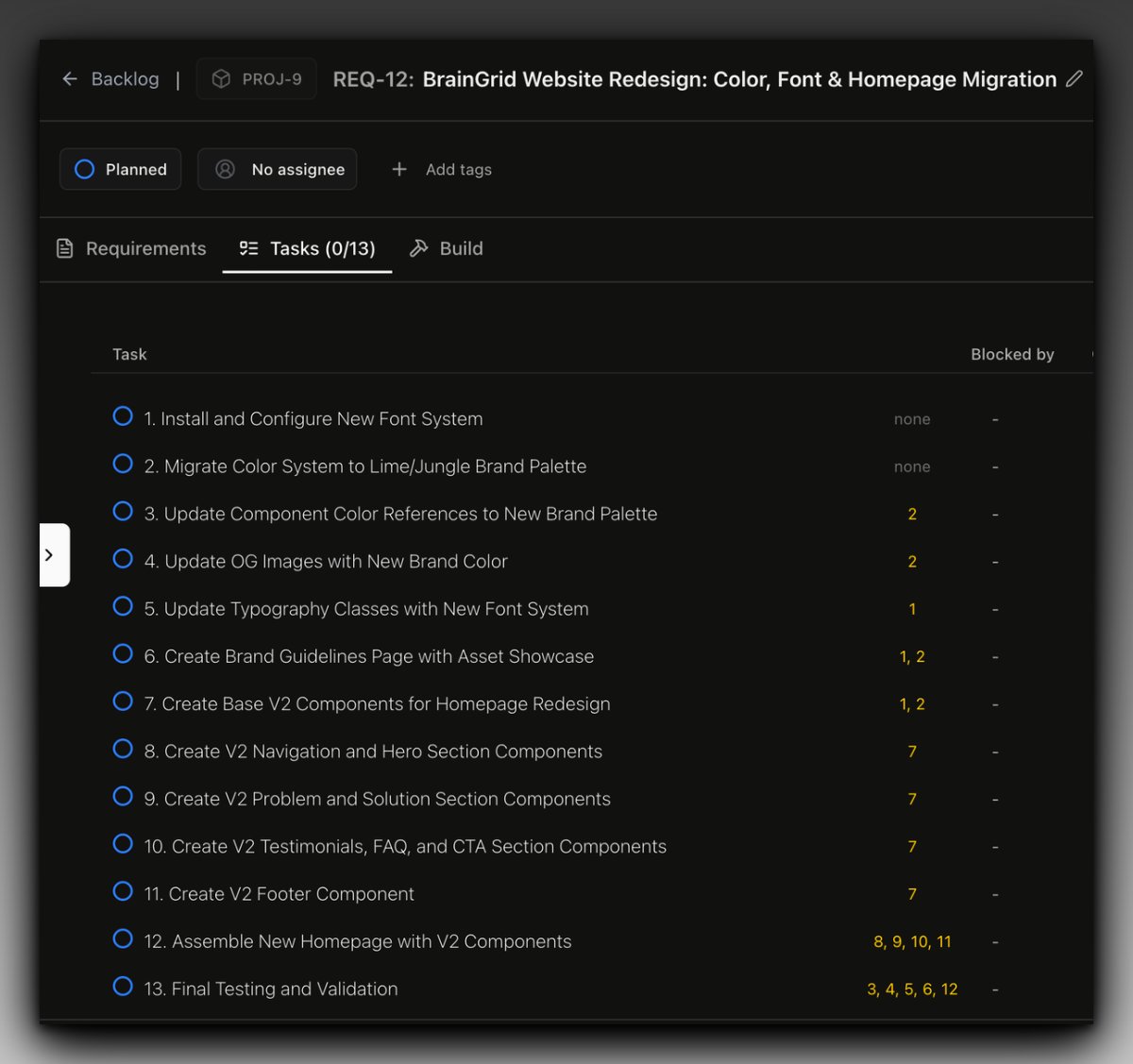

Embarking on a massive redesign for @braingridai with the new brand. @braingridai crafted a plan, broke down the tasks, and provided detailed prompts for each one. Now, Claude Code is pulling tasks one by one and building them 💪🏼 https://t.co/XzlSxsRCu3

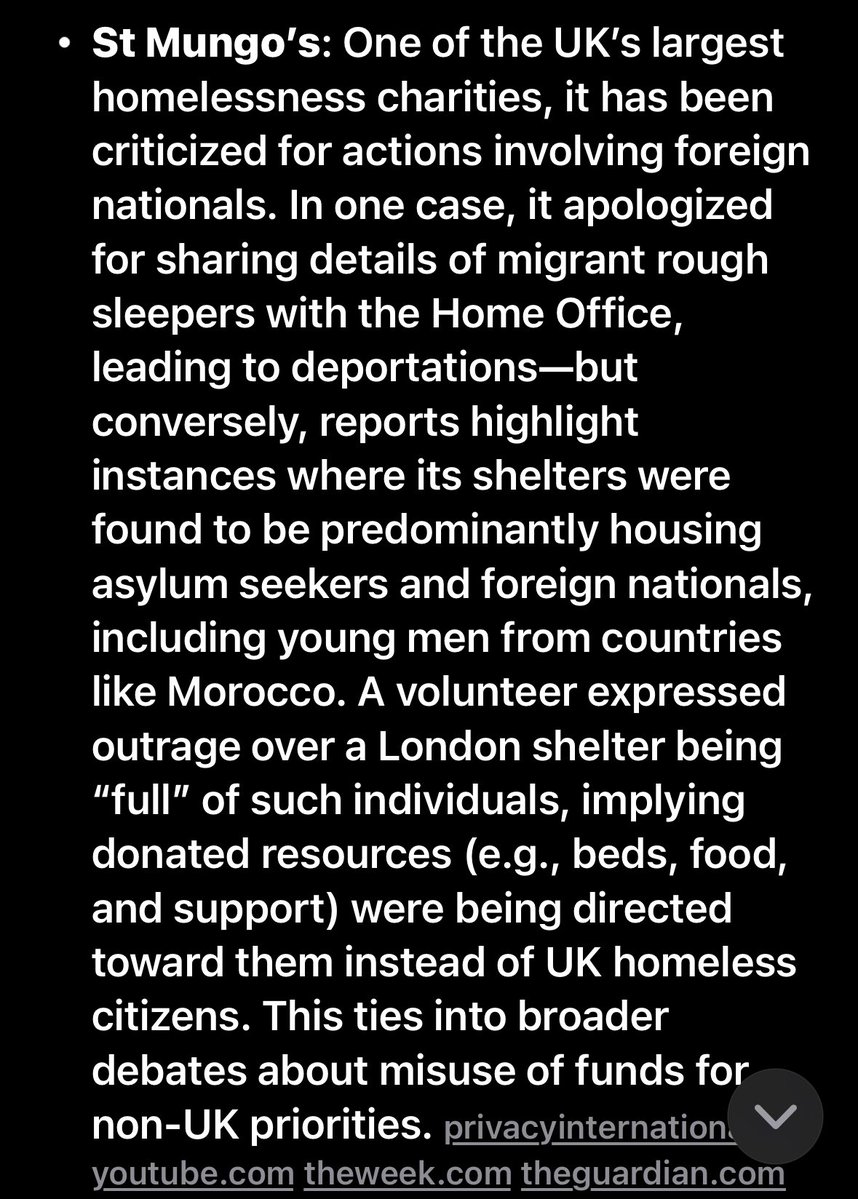

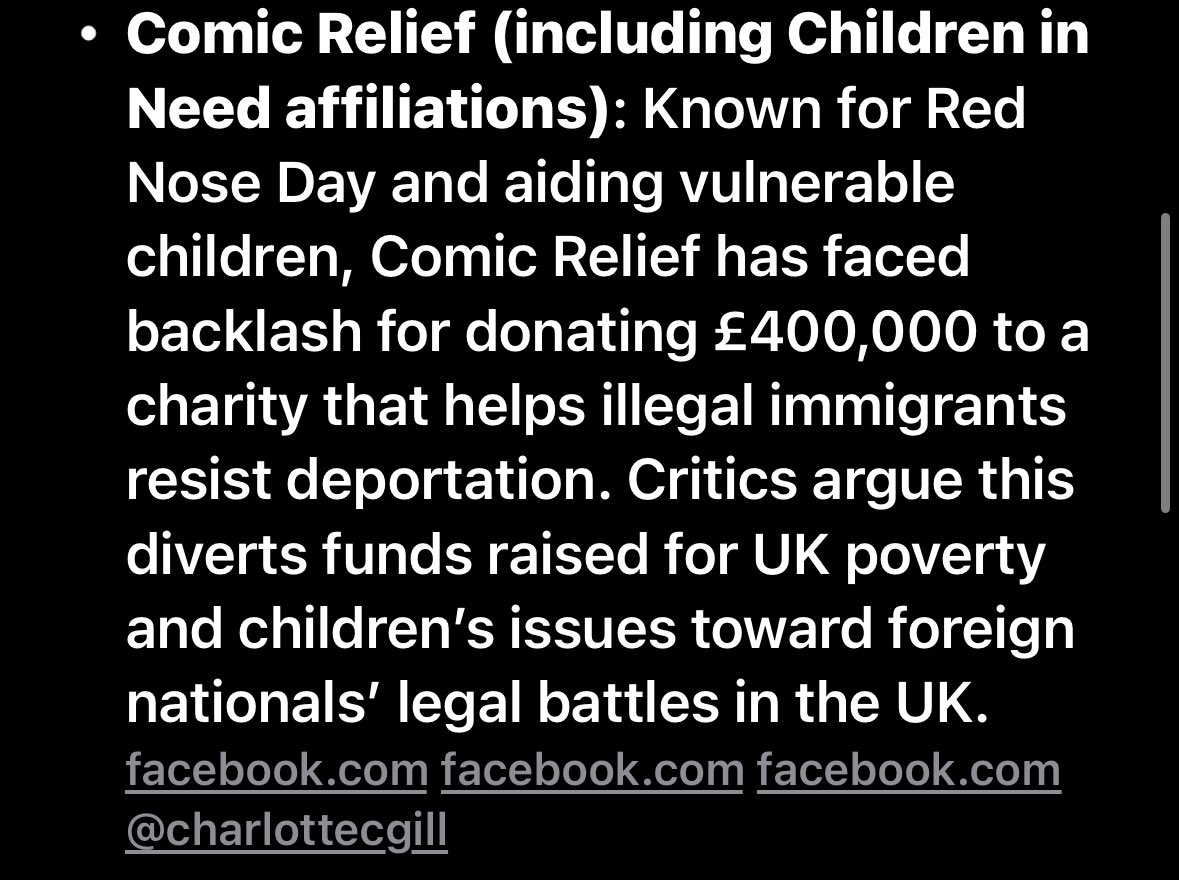

UK charities brace for £300m Christmas donation slump, with children’s and homelessness charities among worst affected https://t.co/BB4KQZnF0J

Let them suffer. Provide directly to your own communities, these charlatans just redirect your money without your knowing. Follow the trail, charity starts at home. https://t.co/CaQVsdqFJi

@nathan_ga19 me and the boys banning Indians from X app https://t.co/FK4naa2SZX

Your weekend just got lighter. 💙 Upgrade what you need today and split the payments with zero stress. Lucred’s got you covered. #LucredFinance #StressFreeShopping #BNPL #NigeriaFintech https://t.co/GJTLwXVfIA

We’re hiring 2 summer interns on the NVIDIA Brev team! Apply here: https://t.co/h7iswNxJCU https://t.co/F33d8DBq1U

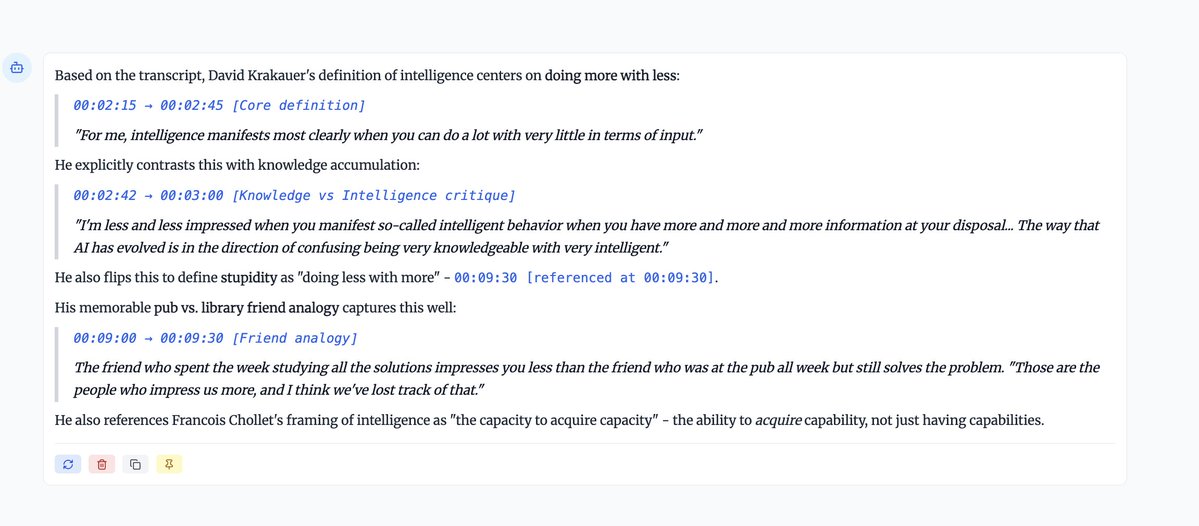

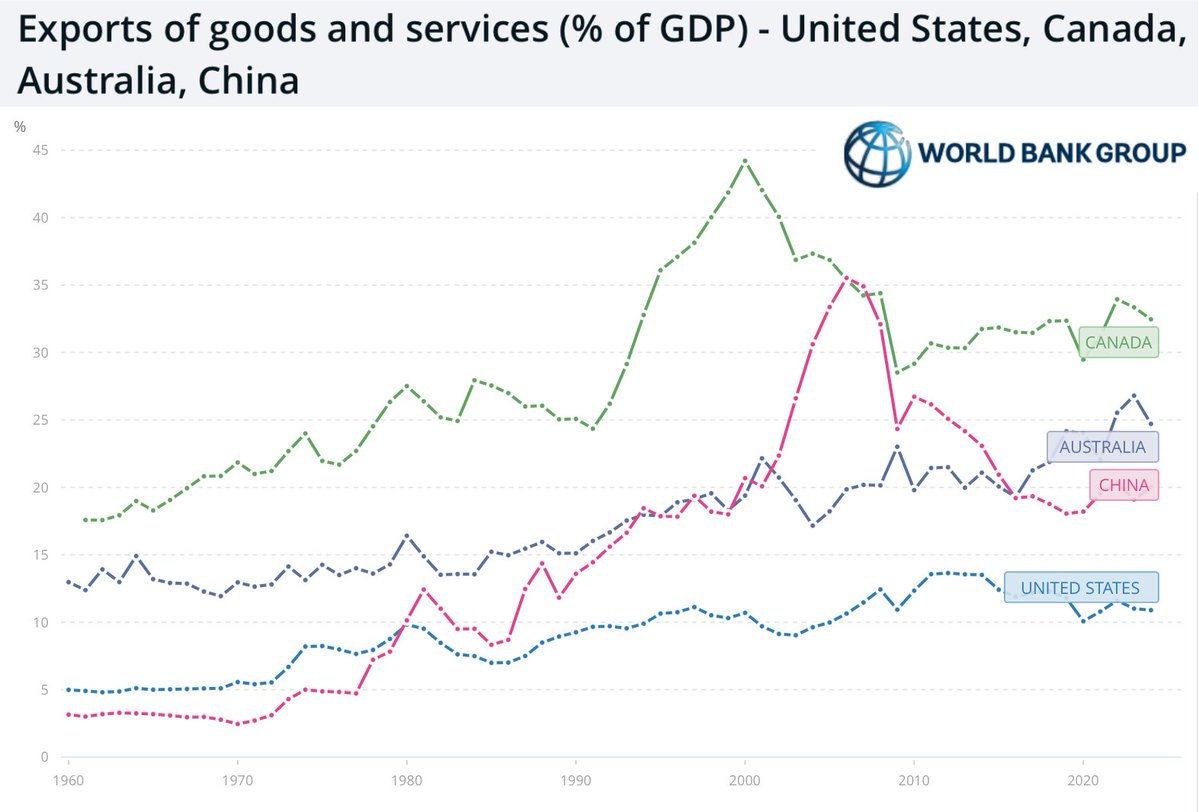

@Tim_Dettmers "in other words, AGI — then that intelligence can improve itself, leading to a runaway effect. This idea comes from Oxford-based philosophers who brought these concepts to the Bay Area." Amen. By the way, David Krakauer imo understands intelligence very well (see images and our recent coverage of him). Most of the ideology around "AGI" conceives of intelligence as an abstract monomaniacal algorithm with a single goal - something which no serious cognitive science (other than connectionists, LOL) would give any credence to. As @MelMitchell1 says, it's multi scale, multi objective and multi domain. I just finished reading this book - https://t.co/9fLwfAy5Hu by Adam Becker and you would really enjoy reading chapter 2 "Machines of Loving Grace"

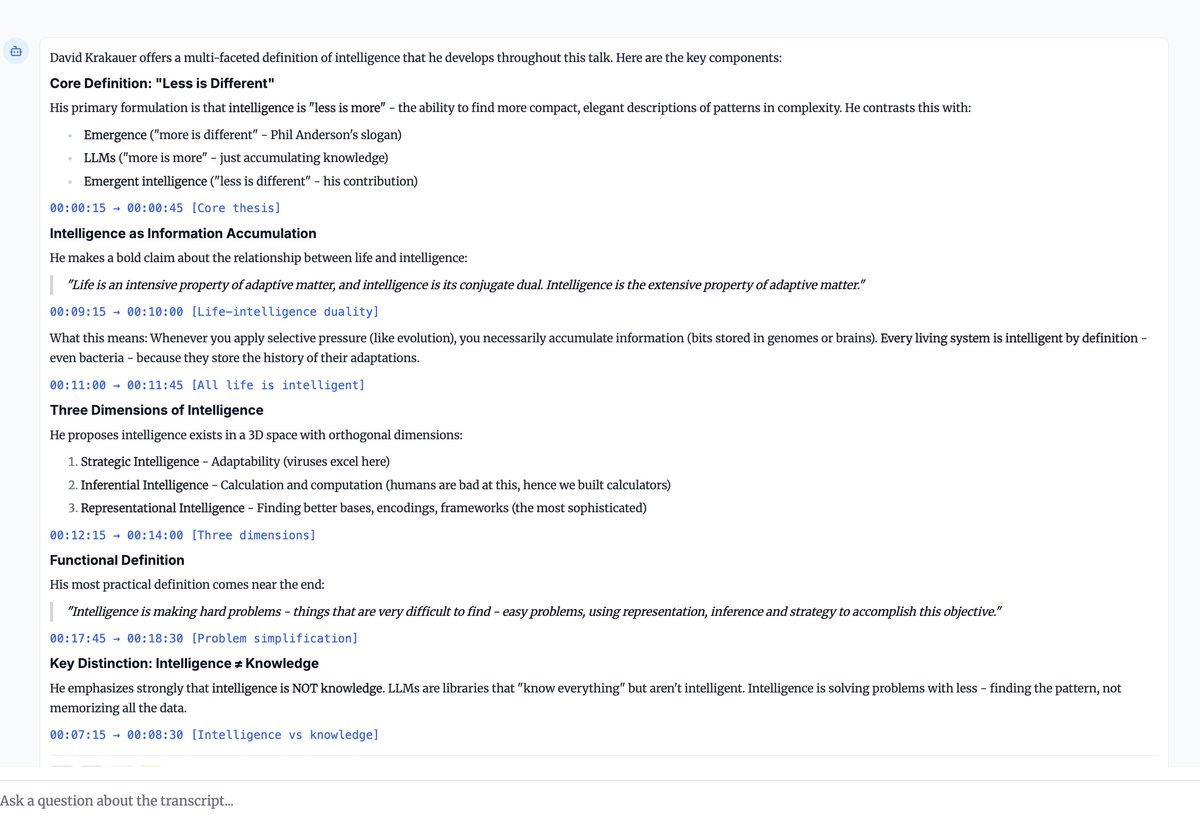

Exports are only 20% of China's GDP. The U.S. is 15% of that (3%). The Chinese domestic market is way bigger than people realize.
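The 3% in the post above is the product of its two percentages. A minimal Python check, using the post's own figures as given (they are not independently verified here; the variable names are illustrative):

```python
# Figures as stated in the post, taken at face value.
exports_share_of_gdp = 0.20  # exports as a share of China's GDP
us_share_of_exports = 0.15   # U.S. share of those exports

# Exports to the U.S. as a share of China's GDP.
us_exports_share_of_gdp = exports_share_of_gdp * us_share_of_exports

print(f"{us_exports_share_of_gdp:.0%}")  # 3%
```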

This picture was taken on Mars this week. https://t.co/Dd0KM8z4yC

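Can generative AI become "a partner that accelerates human growth"?

An interview featuring Sakana AI CEO David Ha (@hardmaru) has been published in a Nikkei Business article sponsored by Google Cloud. He discussed how to combine AI models suited to the work at hand and how to adopt AI in ways that play to the strengths of Japanese companies.

AI that fits the organizational culture: "The way Japan's large companies operate today took shape over decades. There are good reasons why their organizational structures and ways of working ended up as they did. Demanding that they 'flatten the organization' is irrational. Rather, what matters is that AI supports them there and works alongside them."

Aiming to be a partner that accelerates human growth: "One goal of generative AI is to become a Companion that bridges people and computers. … I think the next challenge is for AI to gain a deep understanding of the work and to solve problems while facing the same direction as humans."

Sakana AI's mission: "Sakana AI is a startup engaged in research and development of AI models and AI agents. We are currently working on two things: developing energy-efficient AI models that originate in Japan, and developing AI agents that can skillfully draw on multiple AI models to automatically execute sophisticated processes."

Choosing and combining AI models: "Different AI models have different strengths and weaknesses. That is why we advocate multi-foundation models, which combine multiple AIs to deliver the functionality you need. … The right generative AI to choose depends on a company's business domain and the work it is applied to. You need to understand users' preferences and operational know-how, select AI models accordingly, and then evaluate how appropriate the models' answers are. That process is the key that determines whether AI adoption succeeds."

Full article here: https://t.co/RSSy8bFbLa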

Applied Research Engineer https://t.co/FuEoI2xrzS