Your curated collection of saved posts and media

AI image generators are getting better by getting worse https://t.co/IQtoN54Qgo @verge

Playing Le Mans Ultimate in VR is awesome! https://t.co/bEQ0jkweFv

Imagine this: you have a great idea for an AI video. You’re excited to bring it to life… and then you lose hours rerolling generations just to get close to what you had in mind. Sound familiar? It’s happened to me more times than I can count, and it’s a real creative bottleneck. That’s why Kamo-1 by @kinetix_ai genuinely surprised me. Instead of endless rerolls, it lets you generate precise videos based on an input clip. You create the first frame, choose the camera controls, and the results actually follow your intent. Long-term, this kind of control and predictability will bring AI video much closer to traditional CGI workflows. Check out my guide below on how I’m using it 👇

Under the hood of every Gaussian splat lives a point cloud, which means we can do some really cool things! Because points and particles are so closely related, you can actually treat them as if they were the same. Here we're using @insydium_ltd X-Particles to drive a nexus particle simulation directly from the millions of splat points. This opens the door to tons of creative opportunities for splats via XP, with the full stack of modifiers and controls to take your splat assets to the next level! Rendered with @OTOY Octane 2026
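The "splats are point clouds" idea can be illustrated with a minimal Python sketch. It does not use X-Particles or Octane; it assumes the splat centers (the Gaussian means) are already loaded into an N×3 NumPy array, and `splats_to_particles` and `step` are hypothetical names. Each center becomes a particle, and a simple gravity-plus-drag force stands in for a particle modifier.

```python
import numpy as np


def splats_to_particles(means: np.ndarray) -> dict[str, np.ndarray]:
    """Treat each Gaussian splat's center (its mean) as a particle position.

    `means` is assumed to be an (N, 3) array of splat centers; it is copied
    so the original splat data is left untouched.
    """
    positions = np.asarray(means, dtype=np.float64).copy()
    velocities = np.zeros_like(positions)
    return {"pos": positions, "vel": velocities}


def step(particles: dict[str, np.ndarray], dt: float = 1.0 / 24.0,
         gravity=(0.0, -9.81, 0.0), drag: float = 0.1) -> dict[str, np.ndarray]:
    """Advance all particles one frame with gravity plus linear drag.

    Uses semi-implicit (symplectic) Euler: velocity is updated first,
    then positions move with the new velocity.
    """
    # (3,) gravity broadcasts against the (N, 3) drag term
    acc = np.asarray(gravity, dtype=np.float64) - drag * particles["vel"]
    particles["vel"] = particles["vel"] + acc * dt
    particles["pos"] = particles["pos"] + particles["vel"] * dt
    return particles
```

Because the particles keep the splats' ordering, the remaining per-splat attributes (scale, rotation, opacity, color) could be carried along by index and written back after simulating; that bookkeeping is assumed here rather than shown.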

https://t.co/Pr98RMbcyI

Just getting into books. Is this a good start? https://t.co/zsxxwlnsiW

Start talking to Grok. It has the most human-like voice, understands emotions better, and delivers insights instantly with the latest Grok model. Just keep talking... Grok listens to you, searches the web in real time, and pulls live info whenever you need it. Pause, resume, and turn off the mic anytime from the home screen, with no need to reopen the app. Grok voice mode just hits different: it feels more natural, more expressive, and way better for real conversations.

Elon Musk: The fundamental weakness of Western civilization is the empathy exploit “There's a guy who posts on the internet who's great, Gad Saad, and he talks about basically suicidal empathy. There's so much empathy that you actually suicide yourself. So we've got civilizational suicidal empathy going on. I believe in empathy. I think you should care about other people, but you need to have empathy for civilization as a whole and not commit to a civilizational suicide. The fundamental weakness of Western civilization is empathy. The empathy exploit. They're exploiting a bug in Western civilization, which is the empathy response. I think empathy is good, but you need to think it through and not just be programmed like a robot. It's weaponized empathy that is the issue.”

Will the media report that the victim of the Brown shooter was a young conservative Christian white woman who ran the only allowed Young Republican group on campus and was part of a small group of only 20 openly conservative students at Brown? Will they mention that the police explicitly told her family it was likely a targeted attack, and that when he came into the room he looked specifically for her first, before he fired?

The public school system is designed to indoctrinate children into: CRT (Critical Race Theory), gender ideology, climate ideology, feminism, & atheism, & every day more parents realize that if you want to protect your child from the woke mind virus you have to start young. https://t.co/U6w2AtWNfz

https://t.co/phRaYgRppM

Which way western woke? https://t.co/b5es8rWIab

SpaceX has completed its 550th booster landing https://t.co/Hau0uZjDSt

Wishing you a Happy Hanukkah from the Ellipse at the White House. Even in an age of so much darkness, we can rejoice together with our families for the festival of lights. https://t.co/TMJVlvN9W3

Social media are full of misinformation about AI history. To all "AI influencers": before you post your next piece, take history lessons from the AI Blog, with chapters on:
- Who invented artificial neural networks? 1795-1805
- Who invented deep learning? 1965
- Who invented backpropagation? 1676-1970
- Who invented convolutional neural nets? 1979-1988
- Who invented generative adversarial networks? 1990
- Who invented Transformer neural networks? 1991-2017
- Who invented deep residual learning? 1991-2015
- Who invented neural knowledge distillation? 1991
- Who invented the transistor? 1925
- Who invented the integrated circuit? 1949
- Who created the general purpose computer? 1936-1941
- Who founded theoretical CS and AI theory? 1931-34
- And many more ... https://t.co/nZdKsslmJj

These AI renovations of abandoned places are so satisfying to watch (from kouyang1 on IG) https://t.co/67phw9uIXN

Very detailed article by @jetscott covering all the #smartglasses available at the moment: their strengths, their challenges, and how they are likely to evolve over the next year. A very good read for the weekend! https://t.co/iKB3Q6iflz #ArtificialIntelligence #Meta #Google https://t.co/prEmHliW3Z

And that is assuming your chat doesn't just end with this error. I am still unsure why they decided to go the compacting route the way they did. Conversations don't compress into tasks and files the same way coding projects do. https://t.co/KUFfVuQem3

MrBeast Is Building a Financial Empire as Beast Industries Expands Into Banking and Mobile Services https://t.co/RqOW2KzmiY @MrBeast @castle_crypto

Want job security in the age of AI? Get a state license – any state license https://t.co/CvOHpkbb7p

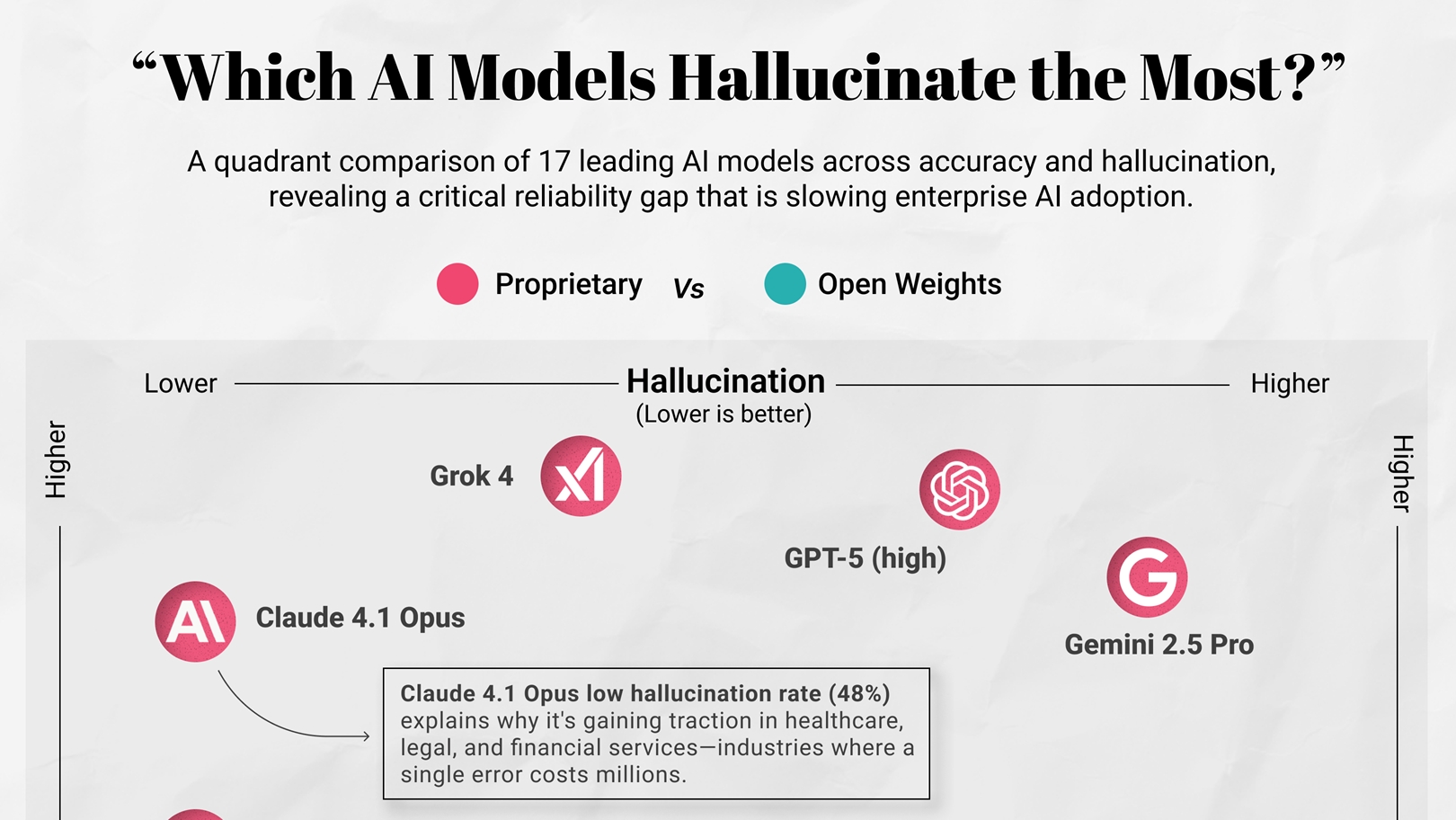

Which AI Models Hallucinate the Most? https://t.co/gYjKzdIyvM @visualcap

Believe me when I say Hollywood is cooked. Here is a trailer I created in 20 minutes using @midjourney and @Kling_ai. This is a movie I'd LOVE to see on the big screen. I'm tired of eating whatever the next big franchise has to offer me. https://t.co/M64k5PLyFV

Real user feedback matters in model evaluation. ✨GPT-5.2 Instant, meant for everyday work, is #1 on @yupp_ai’s Text Leaderboard while GPT-5.2 (High) is #1 on our SVG Leaderboard. @openai’s strategy of releasing model variants suited to the task looks sound. Congrats @openai! 🎉 https://t.co/4TX7ffVvuV

GPT-5.2 is now rolling out to everyone. https://t.co/nfubPwnIIw

Was watching Curious George at full volume with all the windows wide open, and now this https://t.co/pb3pvSpBiU

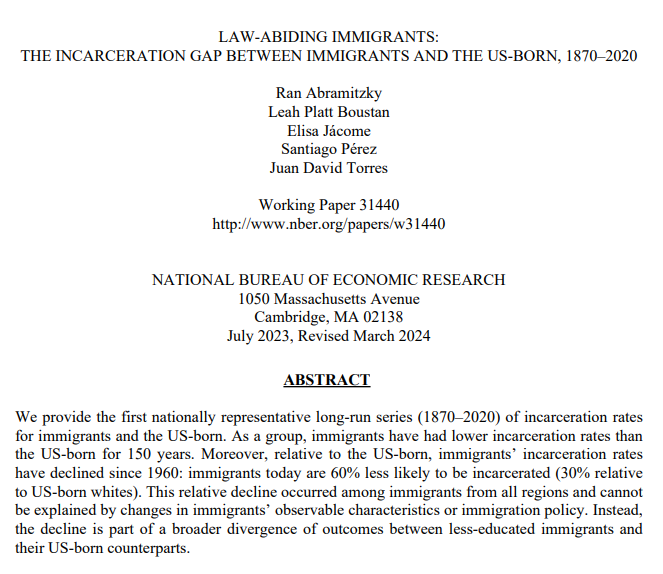

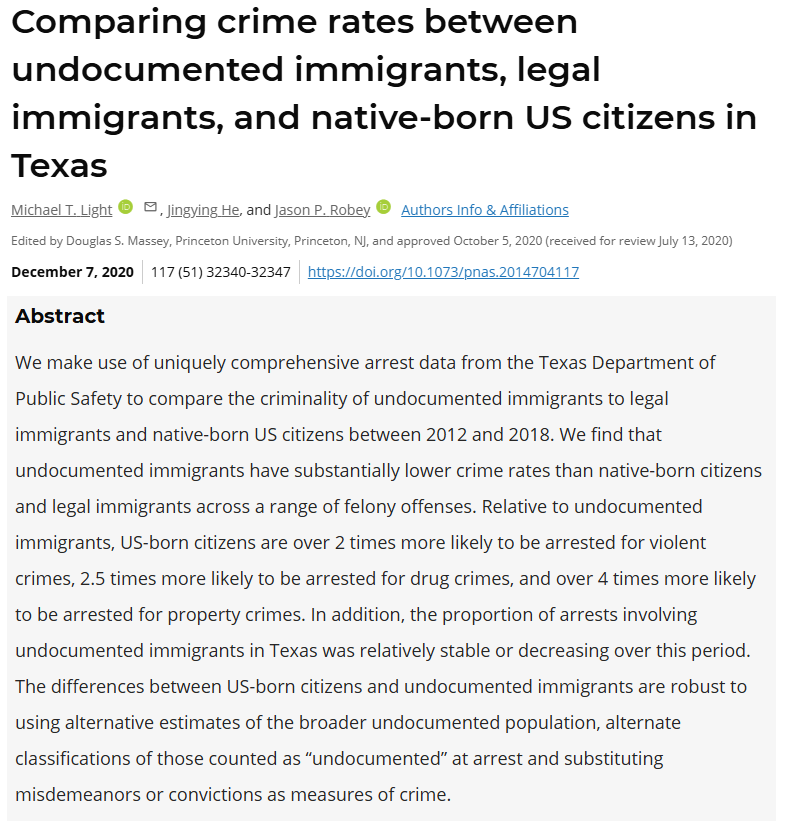

per capita, legal immigrants to america commit less crime than the native-born population. even illegal immigrants commit less crime (not counting the initial crime of entering illegally) turns out, the right-wing narrative about immigration is false, at least for america. https://t.co/i3pgsUk0jE

Thomas Sowell on Gun Control 🔫 https://t.co/vBFWmXa5B0

Adaptive retrieval is the way to go! And this RouteRAG paper shows why. Let's talk about it: RAG systems have a retrieval problem. The default approach to multi-hop reasoning today relies on fixed retrieval pipelines. It typically involves fetching text, maybe some graph data, and hoping everything is retrieved in one shot. But the reality is that complex questions and real-world tasks require adaptive retrieval. Sometimes you need text. Sometimes you need relational structure from a graph. Sometimes you need both. And it's no secret that graph retrieval is expensive, so retrieving it unnecessarily wastes compute. This new research introduces RouteRAG, an RL-based framework that teaches LLMs to make adaptive retrieval decisions during reasoning: when to retrieve, which source to retrieve from, and when to stop. The model learns a unified generation policy through two-stage training. > Stage 1 optimizes for answer correctness, establishing reasoning capability. > Stage 2 adds an efficiency reward that discourages unnecessary retrieval, teaching the model to balance accuracy against computational cost. The action space includes three retrieval modes: passage-only, graph-only, or hybrid. The model selects among them dynamically as the query's needs evolve. Text retrieval works well for simple questions, graph retrieval shines for multi-hop reasoning, and the policy learns when each is appropriate. Results across five QA benchmarks: RouteRAG-7B achieves 60.6 average F1, outperforming Search-R1 (56.8 F1) despite being trained on only 10k examples versus 170k. On multi-hop datasets like 2Wiki, it reaches 64.6 F1 compared to 58.9 for Search-R1. The efficiency gains are also substantial. RouteRAG-7B reduces average retrieval turns by 20% compared to training without the efficiency reward, while actually improving accuracy by 1.1 F1 points. So we get the best of both worlds: fewer retrieval calls and better answers. 
And here is something exciting: Small models also approach large model performance. RouteRAG with Qwen2.5-3B surpasses several graph-based RAG systems built on GPT-4o-mini, suggesting that improving the retrieval policy can be as impactful as scaling the backbone. Teaching models when and what to retrieve through RL yields more efficient and accurate multi-hop reasoning than scaling training data or model size alone. Paper: https://t.co/a4J6oAX0GC Learn to build RAG and effective AI Agents in our academy: https://t.co/zQXQt0PMbG
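As a rough illustration of the two-stage reward described in the RouteRAG post, here is a minimal Python sketch. The post does not give the paper's exact reward formula, so the per-retrieval penalty `lam`, the `Action` enum, and the function names are assumptions for illustration only. `token_f1` is a simplified SQuAD-style token-overlap F1 (lowercasing and whitespace splitting only, without the usual punctuation and article normalization).

```python
from collections import Counter
from enum import Enum


class Action(Enum):
    """Hypothetical action space: three retrieval modes plus a stop action."""
    PASSAGE = "passage"  # text-only retrieval
    GRAPH = "graph"      # graph-only retrieval
    HYBRID = "hybrid"    # text and graph retrieval together
    ANSWER = "answer"    # stop retrieving and emit the answer


RETRIEVAL_ACTIONS = {Action.PASSAGE, Action.GRAPH, Action.HYBRID}


def token_f1(prediction: str, gold: str) -> float:
    """Simplified token-overlap F1 between a predicted and a gold answer."""
    pred_tokens = prediction.lower().split()
    gold_tokens = gold.lower().split()
    # Multiset intersection counts each shared token at most min(count) times
    overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)


def reward(prediction: str, gold: str, trajectory: list[Action],
           stage: int, lam: float = 0.05) -> float:
    """Stage 1: correctness only. Stage 2: correctness minus a per-retrieval penalty."""
    f1 = token_f1(prediction, gold)
    if stage == 1:
        return f1
    retrieval_turns = sum(1 for a in trajectory if a in RETRIEVAL_ACTIONS)
    return f1 - lam * retrieval_turns
```

Under these assumptions, two trajectories that reach the same correct answer score identically in stage 1, while in stage 2 the one with fewer retrieval turns earns more reward, which is the pressure toward "fewer retrieval calls" the post describes. Weighting graph retrieval more heavily than passage retrieval would be a natural variant, but that weighting is not something the post specifies.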