Your curated collection of saved posts and media

Bringing you today's AI news in a laid-back way <Episode 18: Reachy Mini> #ゆるふわAI研究所 https://t.co/7F4l3Gtza8

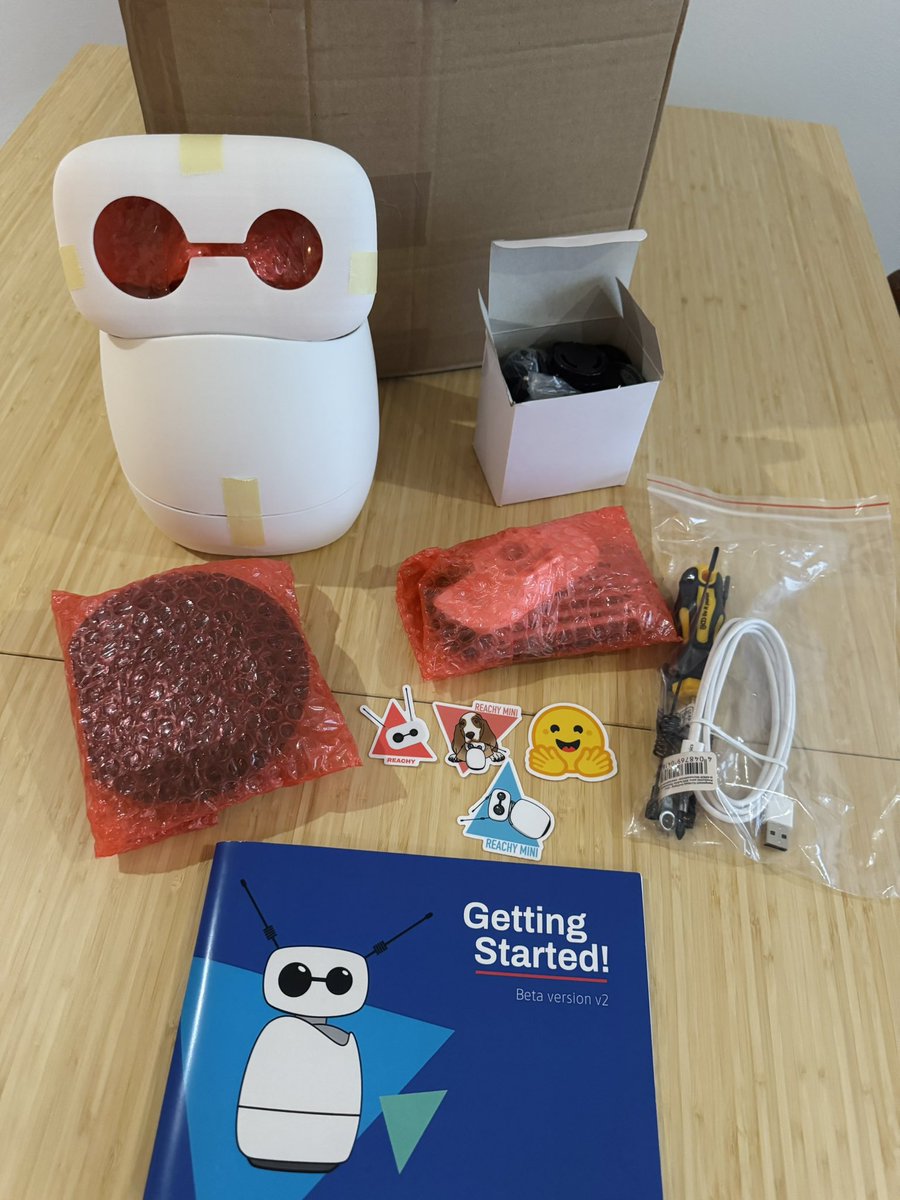

my reachy mini is here, can’t wait to play around with it! https://t.co/wyEp2lXk4X

2/ In GitHub Copilot, GPT-5.2 is a fantastic multi-purpose model, especially when it comes to long-context and reasoning when coding or investigating a complex code base. Also available today. https://t.co/VMnJP1TXdp

Imagine: Two animals run the exact same distance. One chooses to. One is forced. The voluntary runner gets healthier—better heart rate, blood pressure, glucose. The forced runner? Gets sicker. Andrew Huberman says the same rule destroys or upgrades your stress, your workouts, even your life. And the craziest proof: People who watched hours of Boston bombing news coverage suffered MORE acute stress than those who were actually there. Your mind doesn’t know the difference between experiencing and relentlessly consuming. So… what “have-to” in your life are you ready to reframe as a choice? This 1:58 clip just rewired my brain.

NEW Research from Apple. When you think about it, RAG systems are fundamentally broken. Retrieval and generation are optimized separately, retrieval selects documents based on surface-level similarity while generators produce answers without feedback about what information is actually needed.
There is an architectural mismatch. Dense retrievers rank documents in embedding space while generators consume raw text. This creates inconsistent representation spaces that prevent end-to-end optimization, redundant text processing that causes context overflow, and duplicated encoding for both retrieval and generation.
This new research introduces CLaRa, a unified framework that performs retrieval and generation over shared continuous document representations. They encode documents once into compact memory-token representations that serve both purposes. Instead of maintaining separate embeddings and raw text, documents are compressed into dense vectors that both the retriever and generator operate on directly.
This enables something previously impossible: gradients flowing from the generator back to the retriever through a differentiable top-k selector using Straight-Through estimation. The retriever learns which documents truly enhance answer generation rather than relying on surface similarity.
To make compression work, they introduce SCP, a pretraining framework that synthesizes QA pairs and paraphrases to teach the compressor which information is essential. Simple QA captures atomic facts, complex QA promotes relational reasoning, and paraphrases preserve semantics while altering surface form.
Results: At 16x compression, CLaRa-Mistral-7B surpasses the text-based DRO-Mistral-7B on NQ (51.41 vs 51.01 F1) and 2Wiki (47.18 vs 43.65 F1) while processing far less context. At 4x compression, it exceeds uncompressed text baselines by 2.36% average on Mistral-7B.
Most notably, CLaRa trained with only weak supervision from next-token prediction outperforms fully supervised retrievers with ground-truth relevance labels. On HotpotQA, it achieves 96.21% Recall@5, exceeding BGE-Reranker (85.93%) by over 10 points despite using no annotated relevance data.
Well-trained soft compression can retain essential reasoning information while substantially reducing input length. The compressed representations filter out irrelevant content and focus the generator on reasoning-relevant context, leading to better generalization than raw text inputs.
Great read for AI devs. (bookmark it) Paper: https://t.co/JtMukGVNwV Learn to build with RAG and AI Agents in my academy: https://t.co/JBU5beIoD0
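The "differentiable top-k selector using Straight-Through estimation" mentioned in the post above can be sketched roughly as follows. This is a minimal illustration and not CLaRa's actual implementation: the function names, the `temperature` parameter, and the use of a plain softmax as the soft surrogate are all assumptions made for this example. The forward pass picks documents with a hard top-k mask; the backward pass pretends the mask was a softmax over the retrieval scores, so a gradient from the generator can still reach the retriever.

```python
import math

def softmax(scores, temperature=1.0):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp((s - m) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def hard_top_k_mask(scores, k):
    """Forward pass: 1.0 for the k highest-scoring documents, 0.0 otherwise."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    chosen = set(top)
    return [1.0 if i in chosen else 0.0 for i in range(len(scores))]

def straight_through_backward(scores, upstream_grad, temperature=1.0):
    """Backward pass (hypothetical surrogate): treat the hard mask as softmax(scores / T).

    upstream_grad[i] is dLoss/dmask[i] coming back from the generator.
    Uses the softmax Jacobian dp_i/ds_j = p_i * (delta_ij - p_j) / T,
    which simplifies to dLoss/ds_j = p_j * (g_j - sum_i g_i * p_i) / T.
    """
    p = softmax(scores, temperature)
    dot = sum(g * pi for g, pi in zip(upstream_grad, p))
    return [pj * (gj - dot) / temperature for pj, gj in zip(p, upstream_grad)]

# Illustrative retrieval scores for four documents (made-up numbers).
scores = [2.0, 0.5, 1.0, -1.0]
mask = hard_top_k_mask(scores, k=2)

# Made-up generator feedback: a negative entry means selecting that
# document more strongly would lower the loss.
upstream = [-1.0, 0.0, 0.5, 0.0]
score_grads = straight_through_backward(scores, upstream)
```

A gradient-descent step on `scores` using `score_grads` would raise the score of document 0 and lower that of document 2, even though the forward selection itself is a non-differentiable hard choice. A real system would use an autograd framework and a relaxation designed for k > 1; the single softmax here is only the simplest stand-in.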

NVIDIA introduces the Nemotron 3 family of models! Super, Ultra will be released later, Nano released today
* Mixture-of-Experts hybrid Mamba–Transformer architecture
Super and Ultra models:
* are trained with NVFP4
* LatentMoE (project token embedding to smaller latent dimension for experts to process)
* multi-token prediction
full pretraining+post-training data and code will be made open-source

🧠⚙️Building the world’s first autonomous science operating system requires extraordinary scale, speed & security. Find out how @LilaSciences are partnering with #AWS to support their mission of accelerating scientific discovery. 👉 https://t.co/HBCCvY0wLu https://t.co/waU86oEeVi

“iRobot Corp., the company that revolutionized robot vacuum cleaners in the early 2000s with its Roomba model, filed for bankruptcy and proposed handing over control to its main Chinese supplier.” 😥 https://t.co/sSifua7Tjn

INDIGENOUS PEOPLES FOR BLACK LIVES #JusticeForAhmadArbery #JusticeForBigFloyd #JusticeforBreonnaTaylor #JusticeForTonyMcDade https://t.co/dFNZ7JfLH3

Art https://t.co/4sVDaplWGe

Went to bed at 4am. Anxious. Tired. Heavy heart. I keep thinking about my relatives, the communities I’m grateful to be part of & advocate for, hoping all our heart work isn’t for nothing. Praying for a future where Indigenous & Black relatives aren’t murdered, missing & ignored. https://t.co/8ssQRNw7e1

Being a voice, trying to make an impact & educate has been feeling pretty tokenizing & draining. Feeling really down lately. After all the heart work we do as Indigenous Ppls, the demands for our emotional labor not being implemented is frustrating. Staying focused & positive. https://t.co/ad0M0zqDQj

JORDAN: a collection of AU tweets about the second child https://t.co/StZ0fORv6W

New data reveals over 75,000 people with no criminal record were arrested by ICE during the first 9 months of the Trump admin. The administration claimed they were targeting criminals, but the truth is clear: undocumented people with no record were detained & made to disappear. https://t.co/DDJxScMQno

Trump claims he's taken in over $18 trillion in tariffs in the last 10 months. To give you an idea of just how nonsensical and detached from reality this claim is, federal taxes in 2024 brought in just under $5 trillion. United States GDP in 2024 was $29 trillion. https://t.co/Z1RwR7JIOR

BREAKING: TRUMP LIES TO THE ENTIRE COUNTRY
Trump’s tariff claim is completely false. Trump said: "Because of the tariffs, we've taken in $18 TRILLION. There's never been anything like it! The Biden admin took in less than $1 trillion in 4 years. We took in more than $18T in 10 months. That's good!"
Reality:
- The U.S. has NEVER collected $18T in tariffs, not in 10 months, not EVER.
- Total U.S. federal revenue from all taxes combined is only $4–5T per year.
- Tariffs historically bring in $70–100B per year...billions, not trillions!
How wrong is $18T?
- It’s 180× larger than actual annual tariff revenue
- It’s 4× the entire federal budget
- It’s economically impossible!!!
Tariffs are paid by U.S. importers & consumers, not “free money” from abroad, and they’ve never generated trillions. Has TRUMP completely lost it?
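The ratios quoted in the two posts above can be checked with a few lines of arithmetic. This is only a sketch: every figure below is copied from the posts themselves (the $18T claim, $70–100B in annual tariffs, $4–5T in annual federal revenue, $29T GDP for 2024) and has not been independently verified here; the variable and function names are made up for the example.

```python
TRILLION = 10**12
BILLION = 10**9

# Figures as stated in the posts (assumptions, not verified data).
claimed_tariffs = 18 * TRILLION
tariff_range = (70 * BILLION, 100 * BILLION)          # historical tariffs per year
federal_revenue_range = (4 * TRILLION, 5 * TRILLION)  # all federal taxes per year
gdp_2024 = 29 * TRILLION

def ratio_range(value, low, high):
    """Return (smallest, largest) ratio of value against a low..high range."""
    return value / high, value / low

tariff_ratio = ratio_range(claimed_tariffs, *tariff_range)
revenue_ratio = ratio_range(claimed_tariffs, *federal_revenue_range)
gdp_share = claimed_tariffs / gdp_2024

print(f"vs. annual tariffs: {tariff_ratio[0]:.0f}x to {tariff_ratio[1]:.0f}x")
print(f"vs. annual federal revenue: {revenue_ratio[0]:.1f}x to {revenue_ratio[1]:.1f}x")
print(f"share of 2024 GDP: {gdp_share:.0%}")
```

On these inputs the "180×" figure corresponds to the upper end of the tariff range ($100B), and the roughly 4× figure matches the claim measured against annual federal revenue.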

📢 Introducing: NATIVE Recap Beeliever Edition 🐝🔥
Starting this week, I’ll be dropping a weekly @goNativeCC Recap every Sunday: a simple, clear update covering
🔸 Key BTCFi highlights
🔸 Native’s latest progress
🔸 My personal take on the narrative
Short, sharp, and easy to follow. BTCFi is growing fast, and Native is building quietly but confidently. This recap will help you stay ahead without the noise. If you’re a Beeliever 🐝, you’ll love this. If not… you might become one soon. See you Sunday. NATIVE Recap begins. 🐝🔥

Everyone keeps asking what Bitcoin can become. Native quietly asked something weirder — “What if Bitcoin already knows the answer?” 🐝🧠 Native doesn’t force behavior onto BTC. It listens, verifies, and lets the rules speak for themselves. Turns out… Bitcoin is way smarter when you stop interrupting it. ⚡🧡 Some protocols add opinions. Native adds ears. That’s a very different kind of progress. @goNativeCC @Tommy_The_Gods #Native #goNative #BTCFi #nBTC #BeeLiever #CTEnergy #beeartist

@SebastianRoehl On native integration: this is an area that Flutter is investing in heavily! Some things we have in the current pipeline: 1. We're revamping Flutter's threading model to make interop easier: https://t.co/BjcDGEGPgz

A wrapped package has just arrived! We can't wait for you to see what's inside! 👀 https://t.co/TZx8OTvw61 https://t.co/2SQt0ITR4f https://t.co/H7tdsmFFK9

I know I'm not pretty😔so everyone dislikes me💔 https://t.co/C0uWv13iuV

“We meet people we haven’t ever seen before, but deep inside, there is a feeling that we have known them for thousands of years.” ~ L. Agni, https://t.co/tHxsXbuIBD

ALARM bells people. 🚨🚨🚨 Neoconservative David Brooks wrote this in an OpEd in the NY Times, "Trumpism… is primarily about the acquisition of power — power for its own sake. It is a multifront assault to make the earth a playground for ruthless men, so of course any institutions that might restrain power must be weakened or destroyed. Trumpism is about ego, appetite and acquisitiveness and is driven by a primal aversion to the higher elements of the human spirit — learning, compassion, scientific wonder, the pursuit of justice. … What is happening now is not normal politics. We’re seeing an assault on the fundamental institutions of our civic life, things we should all swear loyalty to — Democrat, independent or Republican. It’s time for a comprehensive national civic uprising. It’s time for Americans in universities, law, business, nonprofits and the scientific community, and civil servants and beyond to form one coordinated mass movement. Trump is about power. The only way he’s going to be stopped is if he’s confronted by some movement that possesses rival power. … I’m really not a movement guy. I don’t naturally march in demonstrations or attend rallies that I’m not covering as a journalist. But this is what America needs right now."

🔴 Society: "What is happening today astounds me: objectively established scientific truths can be contested with political arguments. I thought that was impossible," observes Étienne Klein, physicist and philosopher of science. #ToutEstPolitique #canal16 https://t.co/bW4GuQSbhj

@Hupathia @mathcatho This is complete madness. A reminder that this all started from this tweet, with this kind of statement: https://t.co/1CIQtfd1cL

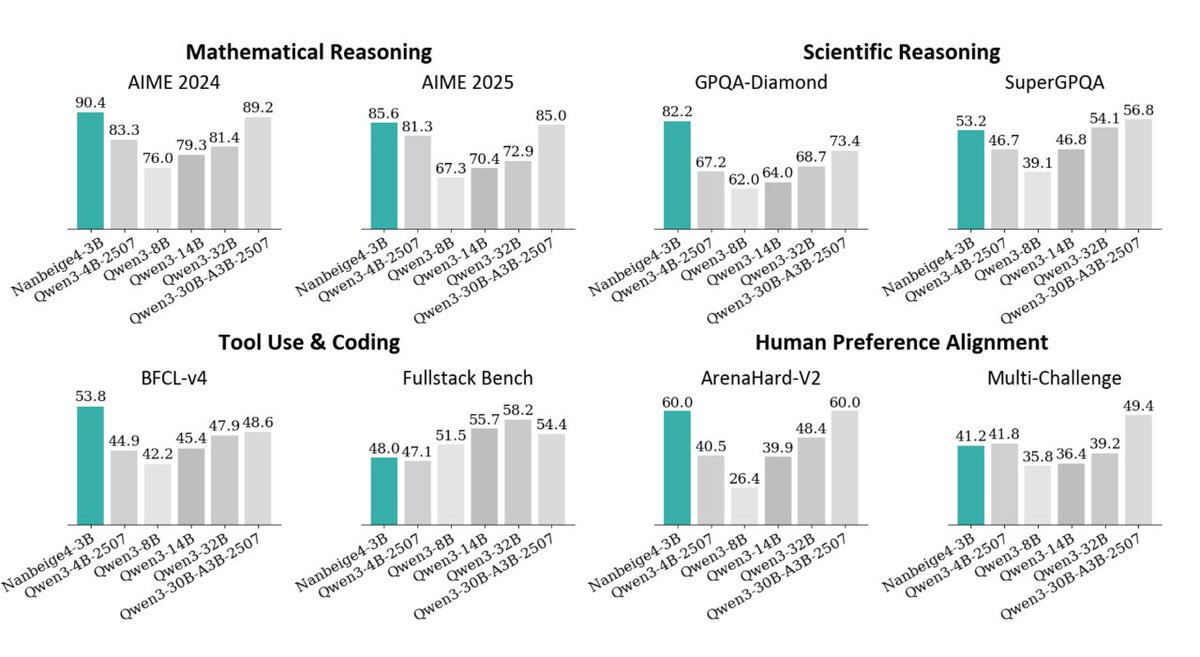

China’s depth of STEM talent is the ultimate refutation of the "concentration of power." After Xiaomi, RedNote, Meituan (Chinese DoorDash) and many others, now BOSS Zhipin (a ~$10B mkt cap recruiting app) has also joined the game and open-sourced a small yet powerful model. Both the Base and Thinking variants are on @huggingface. When one company shares its tech, it is a tactic. When everyone does it, there must be a deeper economic theory behind it. My take? With this much talent, you cannot hoard intelligence. It is becoming a utility for everyone on the globe.

@Kaylah41 @reckybecky0 Maybe the initial tweet has been deleted, so you didn't read it. I'll just paste it here; if you have time, take a look there too: https://t.co/M4NuvS3nEM

Just came back from China, some early thoughts:
Life observations:
- the great firewall is not that great, so easy to get a vpn or an e-sim that has one
- hongkong is surprisingly really run-down, locals have said it’s way past its prime
- everyone of all ages in china doomscrolls (douyin/xiaohongshu for gen z, wechat for boomers)
- chinese immigration is hella efficient and automated with face recognition (i look chinese and have a 10-year visa tho)
- shenzhen has 18M+ people but the city does not feel dense at all
- china loves heavy packaging (delivery food, consumer goods, etc.)
- life is generally more convenient than in the US (safety, delivery food, transport, etc.)
Tech observations:
- speed is everything in china
- for software, consumer > b2b because chinese companies don’t trust each other for enterprise deals + labor is cheap so b2b software can just be built in-house
- chinese VCs are super risk averse, that’s why the US is still the king of 0-1, while China thrives in the 1-100 (master of scale and second mover advantage)
- fierce national competition, copying each other is a given, most industries seem winner-takes-all
- chinese app UIs maximize optionality, that’s why they feel so dense and chaotic
- big culture of after-lunch naps in the workplace
- big companies have special trade schools right next to their factories to create a direct pipeline of loyal specialized workers

Huge improvements made to Grok Imagine....Try it now https://t.co/NJBS5v9piw

I NEED 1000 RETWEETS FROM NATIVE LOVERS 🏹❤️ https://t.co/DrDlXciZLw

I NEED 1000 RETWEETS FROM NATIVE LOVERS 🏹❤️ https://t.co/W5vn2oqnN2