Your curated collection of saved posts and media

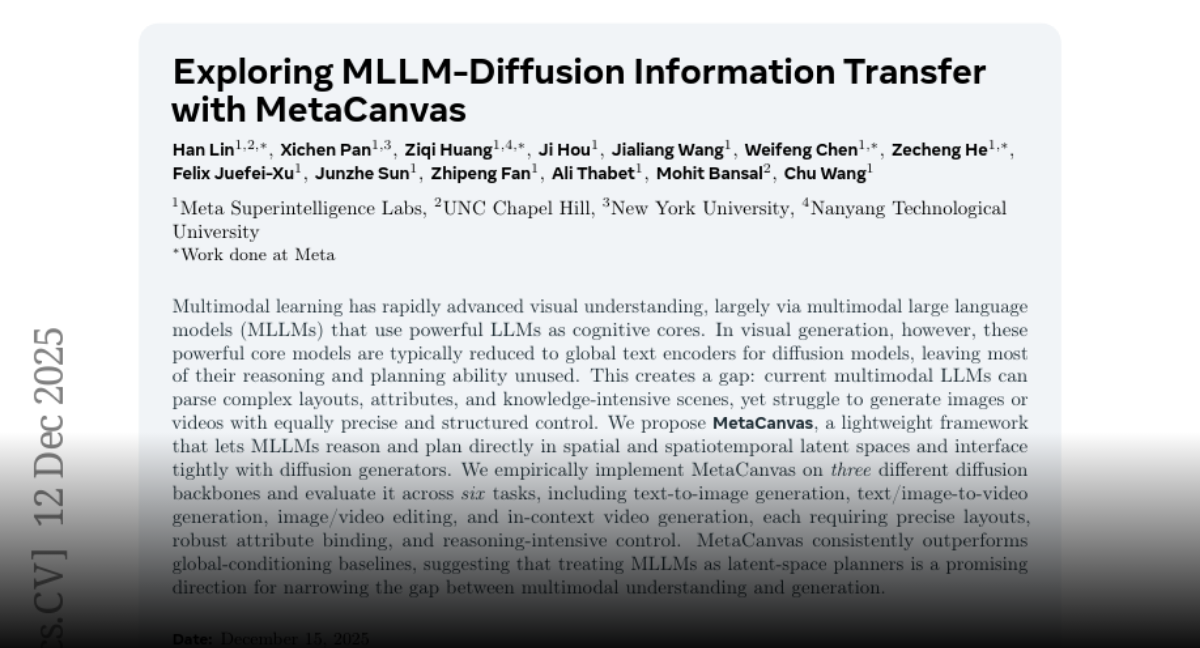

Meta presents Exploring MLLM-Diffusion Information Transfer with MetaCanvas https://t.co/GnBqAingOh

discuss: https://t.co/VuMoPg6XXU

Resemble AI released chatterbox-turbo on Hugging Face https://t.co/qGwUyM6QXt

app: https://t.co/wT3MHAFIKU

model: https://t.co/qYDMapLrVr

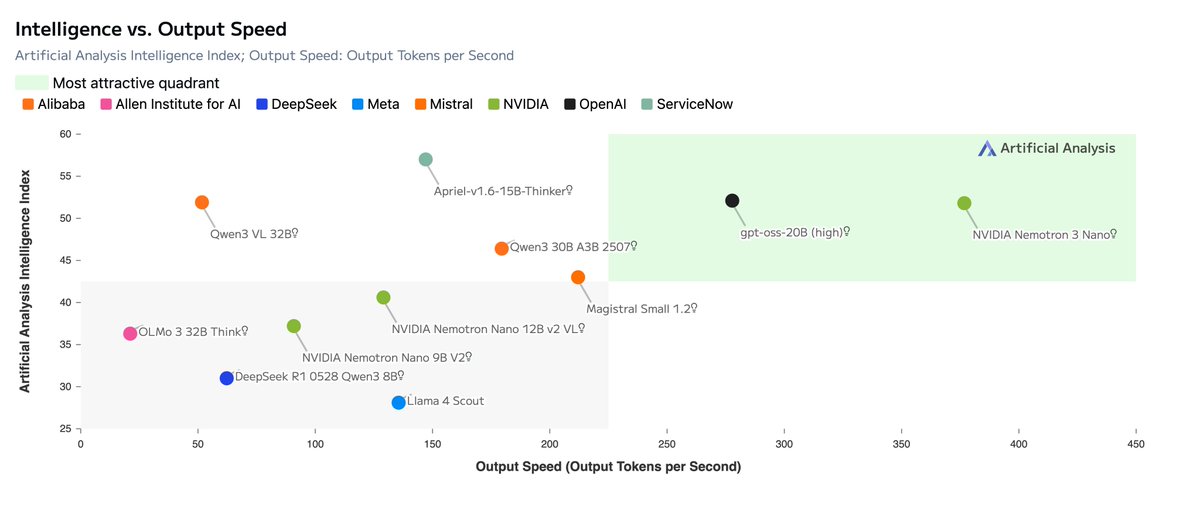

✨ Meet our new open family of models: @NVIDIA Nemotron 3

Open in weights, data, tools, and training, Nemotron 3 is built for multi-agent apps and features:

- An efficient hybrid Mamba‑Transformer MoE architecture
- 1M token context for long-term memory and improved reasoning
- Multi‑environment reinforcement learning via NeMo Gym for advanced skill adaptation

Plus NVFP4 pre-training, latent MoE, 1T tokens of data, and more.

📗 Read the details in our tech blog: https://t.co/9FD1jGp3zc

🤗 Try the model on @huggingface: https://t.co/n7an9b7hN9

NEWS: NVIDIA announces the NVIDIA Nemotron 3 family of open models, data, and libraries, offering a transparent and efficient foundation for building specialized agentic AI across industries. Nemotron 3 features a hybrid mixture-of-experts (MoE) architecture and new open Nemotron pretraining and post-training datasets, paired with NeMo Gym, an open-source reinforcement learning library that enables scalable, verifiable agent training. Read more: https://t.co/ldf247t3Zz

IBM dropped CUGA, an open-source enterprise agent to automate boring tasks 🔥

- given workspace files, it writes and executes code to accomplish any task 🤯
- comes with a ton of tools built for enterprise tasks, supports MCPs
- plug in your favorite LLM 👏

here's a small demo where it retrieves info from a file, calculates revenue by writing code, and drafts an e-mail 🤯

they release code, a blog and a demo 🙌🏻 you can run this locally

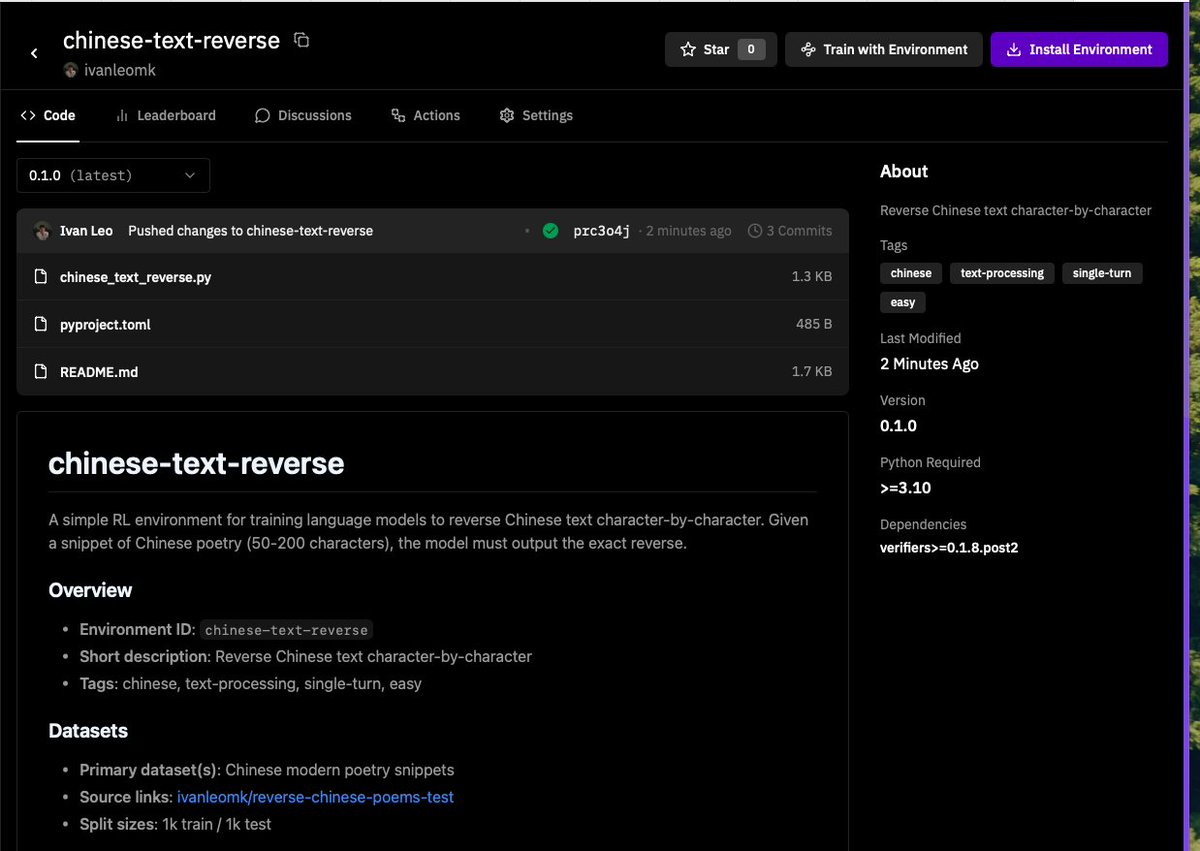

Had some time tonight and hacked on my first environment to get models to reverse Chinese text with @PrimeIntellect's environments and @willccbb's verifiers library. This was surprisingly easy to get started! Next step: training a small model~ https://t.co/eexuycGlTI

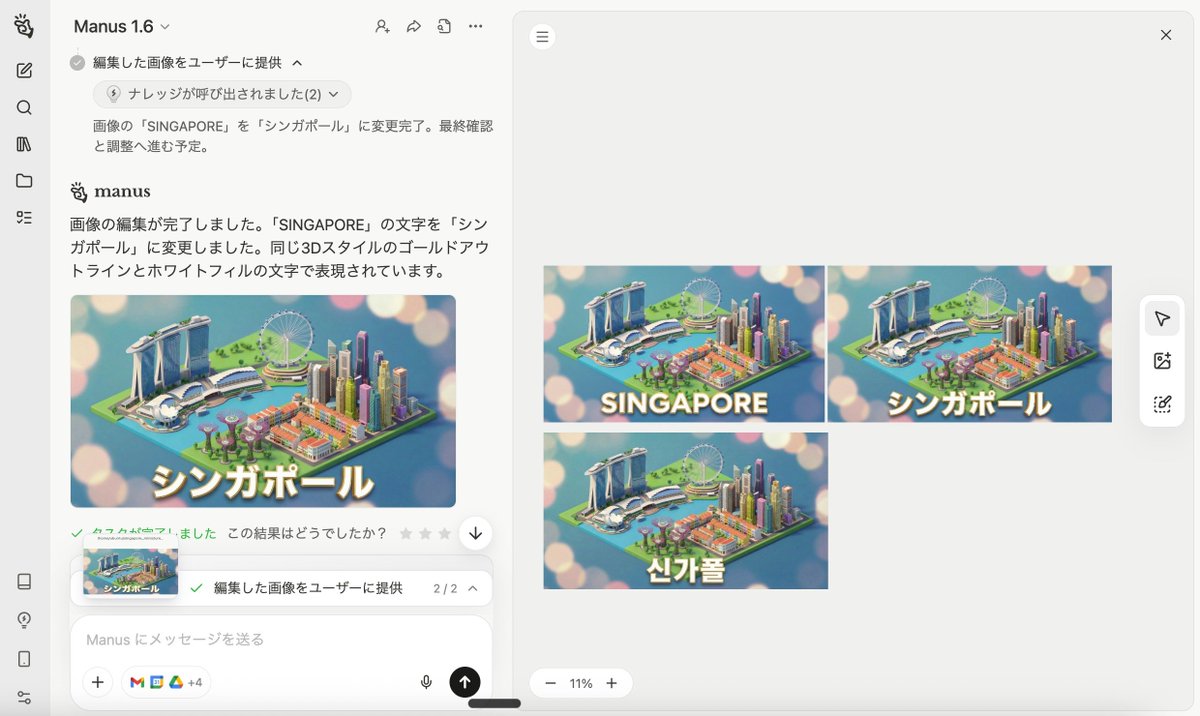

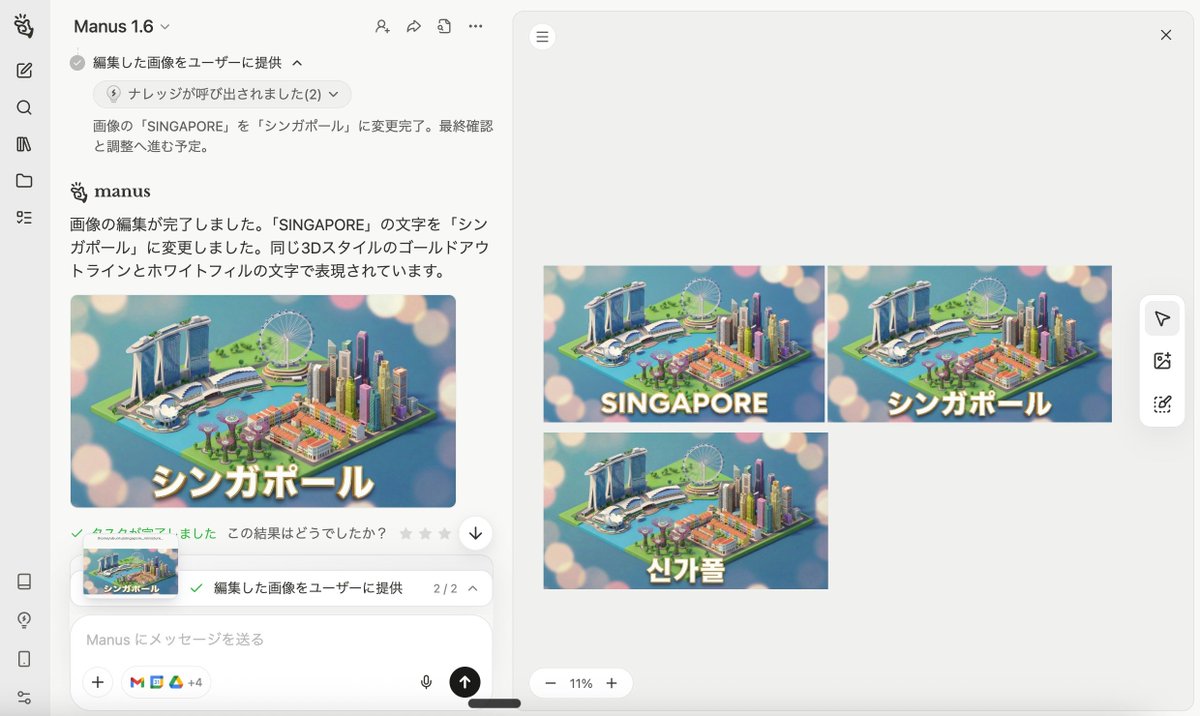

Nano Banana Pro generation ↓ text extraction ↓ editing. Now you can do it on Manus https://t.co/XxfG0gjwhx

Grok Code is the only model with insanely high trillion-token-scale usage on Kilo Code, nearly 5× more than the next most-used model. It has consistently ranked #1 ever since it first took the top spot https://t.co/sO9E5dAxeV

🎉#GateWeb3Wallet has supported the #Injective Non-EVM type network! 🔥@injective is an interoperable #Layer1 blockchain powering next-generation #DeFi applications 🔔Stay tuned for further updates! 🌐Enter Web3💪:https://t.co/kaEf8OhzAx #GateWeb3 #Injective #Newchain https://t.co/DFoN1fJ7oj

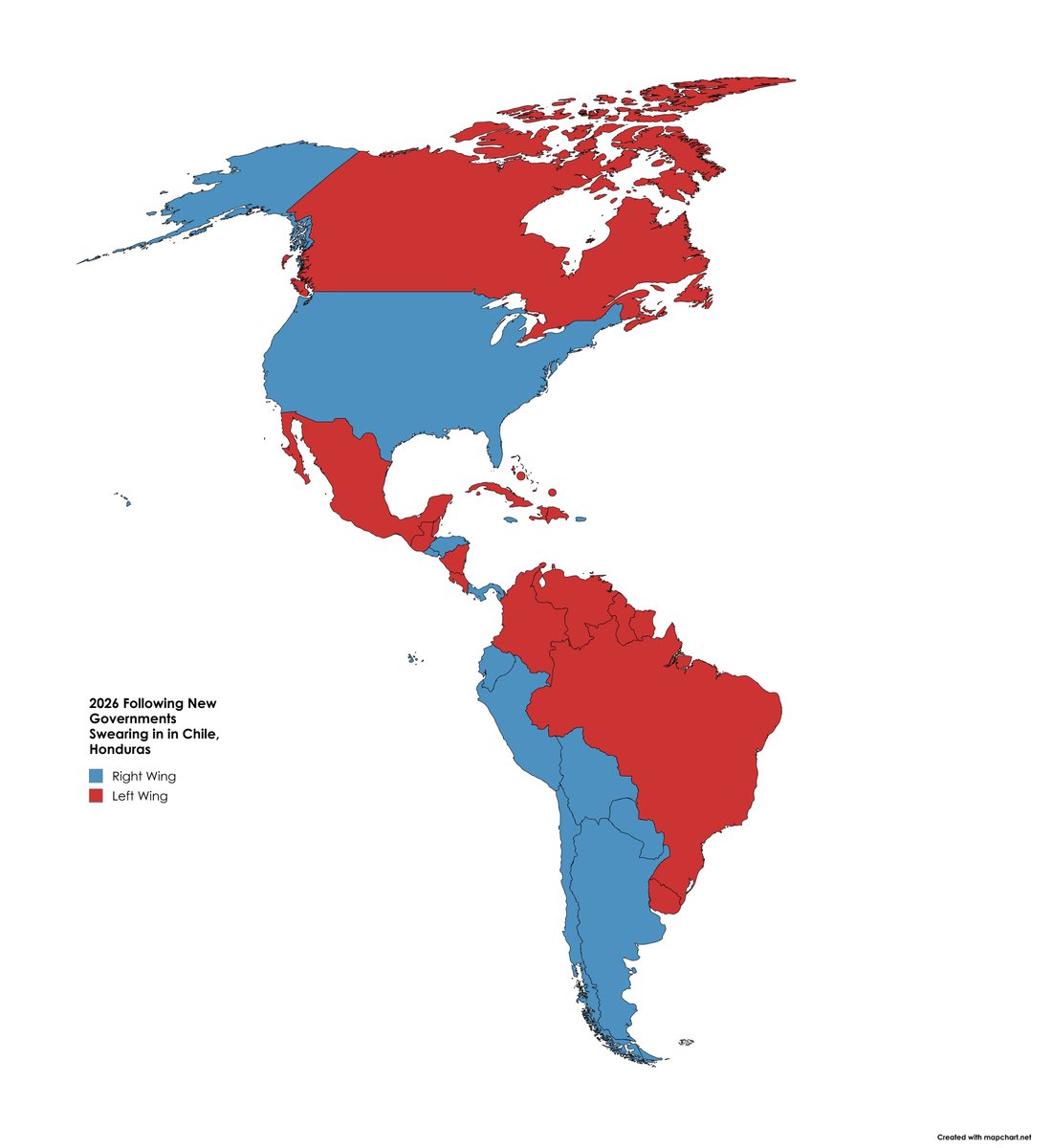

This map will save mapping btw this is legitimate art to me and i will not elaborate its just beautiful https://t.co/w6EMvBC4LG

The Western Hemisphere has been Trumpified. 🔵 Right Wing Governments: 12 🔴 Left Wing Governments: 8 After the socialist regime in Venezuela falls soon, Cuba is next. https://t.co/UuBMRmAPel

This is happening mad fast! I started to realize this when moving all my workflows to Claude Code Skills. Painful at first, but then suddenly moving at speeds never imaginable. I hear more companies embracing skills, which accelerate things more. Good read! https://t.co/HsgySimauW

How can we make a better TerminalBench agent? Today, we are announcing the OpenThoughts-Agent project. OpenThoughts-Agent v1 is the first TerminalBench agent trained on fully open curated SFT and RL environments. OpenThinker-Agent-v1 is the strongest model of its size on TerminalBench, and sets a new bar on our newly released OpenThoughts-TB-Dev benchmark. (1/n)

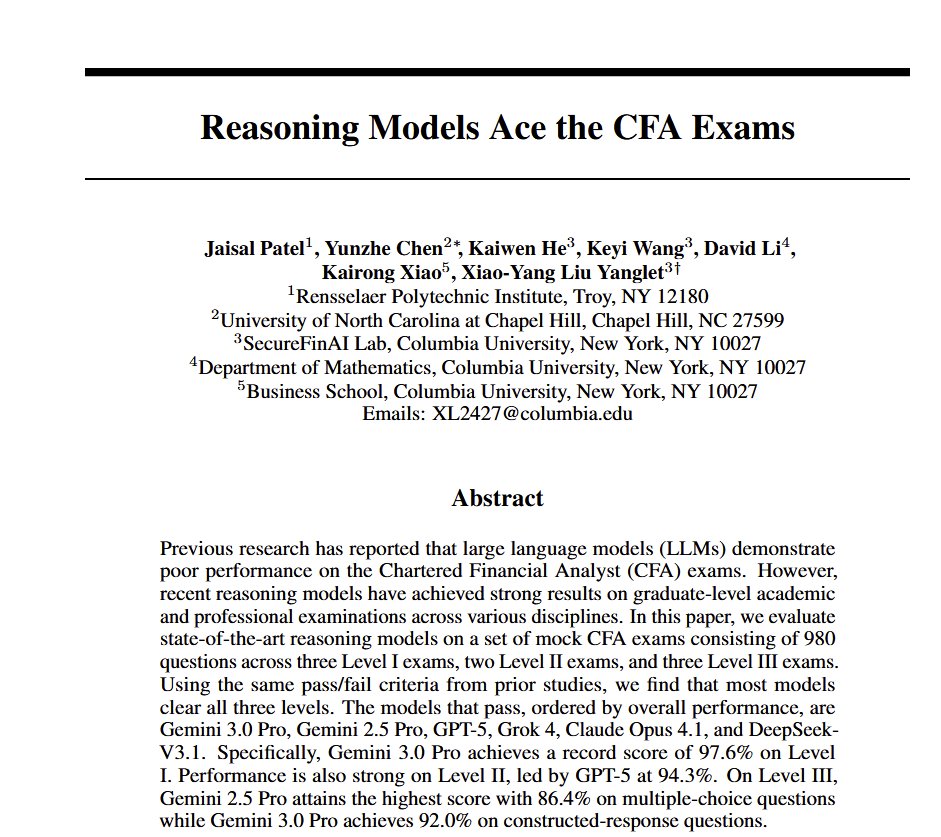

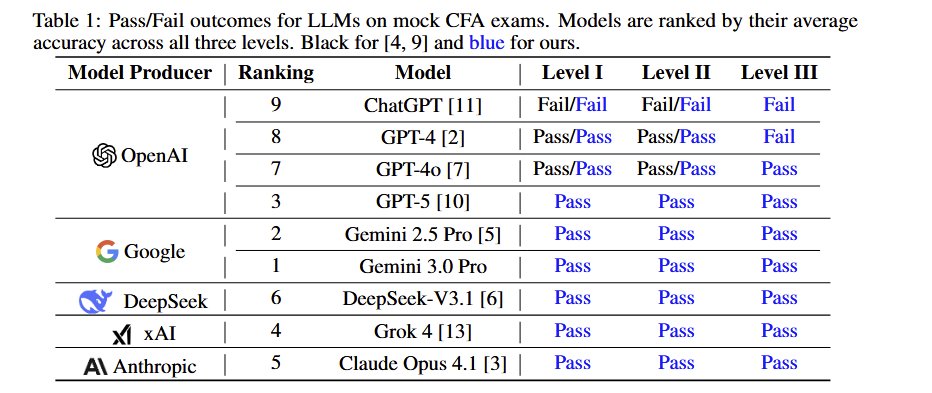

All the frontier AIs now pass all levels of the very challenging Chartered Financial Analyst (CFA) exam. The paper used new, paywalled mock exams to reduce the risk of leakage, but relied on AI grading for the essays. Interestingly, prompting strategy doesn't matter for most question types https://t.co/k5PsKGObi7

Apple just released Sharp: Sharp Monocular View Synthesis in Less Than a Second https://t.co/bXoFtIPmWs

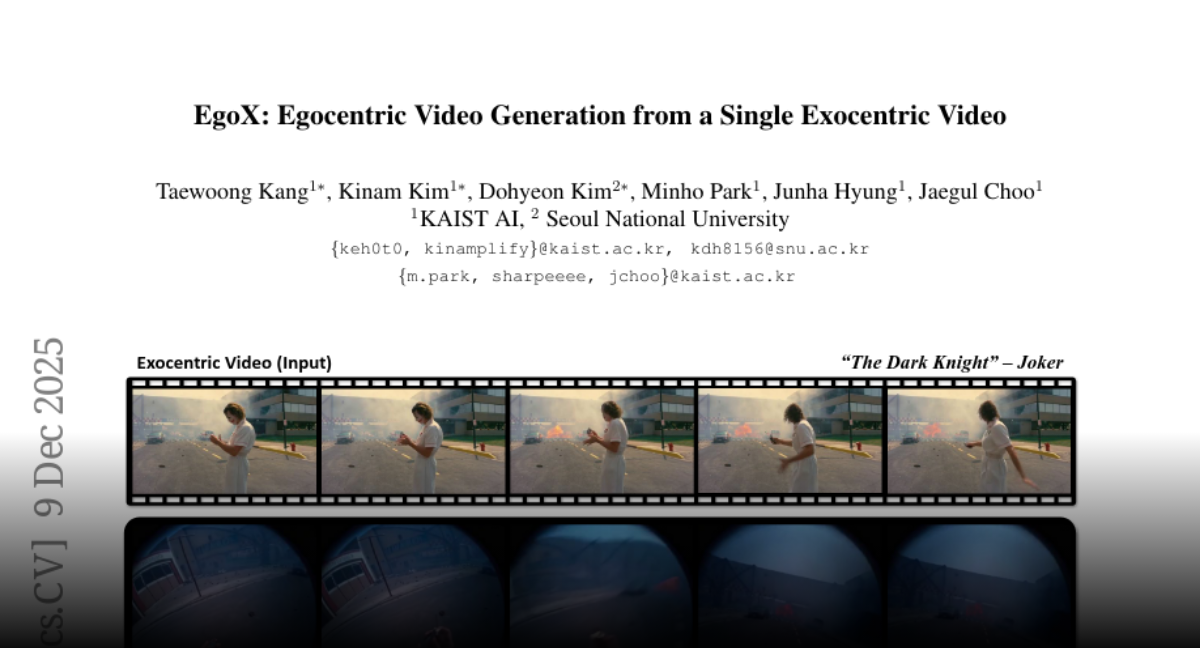

EgoX: Egocentric Video Generation from a Single Exocentric Video https://t.co/t2RWPiqSPR

discuss: https://t.co/XnYeSzpn2Y

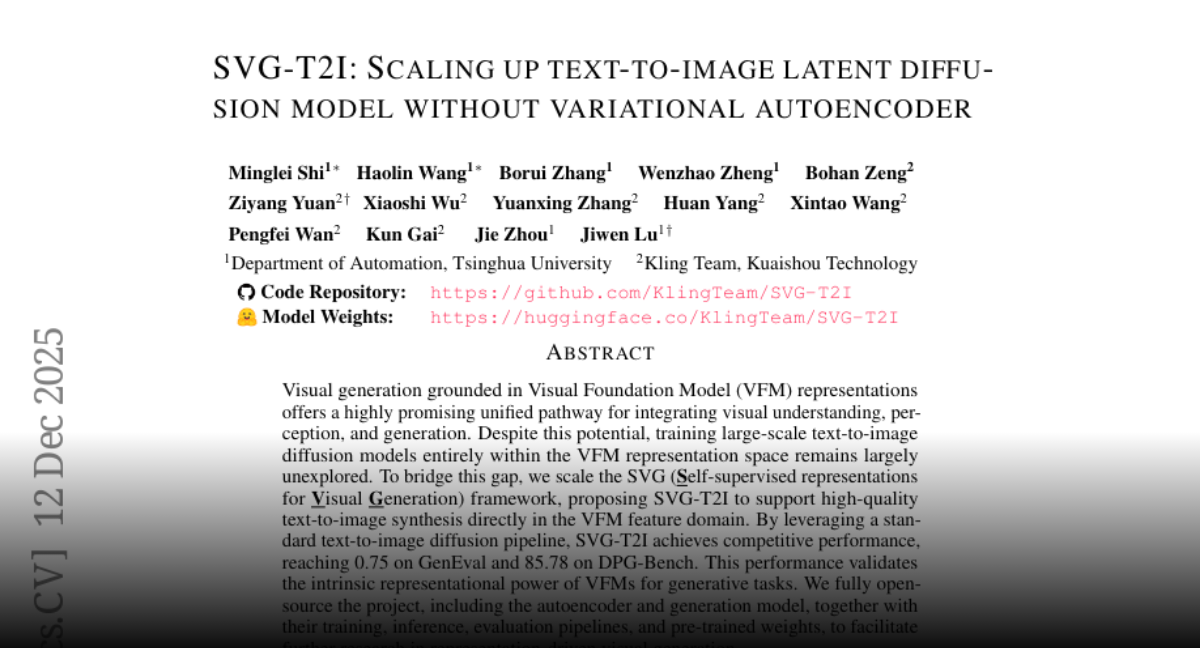

SVG-T2I: Scaling Up Text-to-Image Latent Diffusion Model Without Variational Autoencoder https://t.co/cN3g4qn6Js

discuss: https://t.co/NmUO0sare6

Today, @NVIDIA is launching the open Nemotron 3 model family, starting with Nano (30B-3A), which pushes the frontier of accuracy and inference efficiency with a novel hybrid SSM Mixture of Experts architecture. Super and Ultra are coming in the next few months. https://t.co/v7MKIy7Oe4

A new day, a new Google release: today it's open source! It remains exciting. https://t.co/1WhUibF70r

PSA: https://t.co/n1RdT94ij3 is a good link to bookmark 🤗(and refresh)

It's here. Manus 1.6 is now live. We've upgraded our core agent architecture for more complex work with less supervision. Here's what that means for you 👇 https://t.co/PHtEduT0BB

🎥🙌| WHO ARE MORE INTELLIGENT: LATINOS OR EUROPEANS? https://t.co/Vbl3SdZ95I

Simply Red - Holding Back The Years https://t.co/TugNAG7FeU

Warmest wishes to brother Dayalal Ji Nanoma (@dayalalnanoma80 @nanoma_dayalal) on his birthday. He is an active member of the IT cell and of the Twitter team that raises the voice of the country's exploited and oppressed, the Adivasis and Dalits. Johar 🌷🌷🌷💐🌱🌱🌱 May the blessings of the ancestors remain with you https://t.co/GYJs1gciNW

“Pax Silica” is now a thing. It’s Trump admin’s attempt to establish a group of critical partners to maintain and expand global supply chains and develop AI. In the Indo-Pacific, here’s the list:

- Australia
- Japan
- Singapore
- South Korea
- Taiwan (guest)

https://t.co/cjQeromtlV

NEW Research from Meta Superintelligence Labs and collaborators.

The default approach to improving LLM reasoning today remains extending chain-of-thought sequences. Longer reasoning traces aren't always better. Longer traces conflate reasoning depth with sequence length and inherit long-context failure modes.

This new research introduces Parallel-Distill-Refine (PDR), a framework that treats LLMs as improvement operators rather than single-pass reasoners. Instead of one long reasoning chain, PDR operates in phases:

- Generate diverse drafts in parallel.
- Distill them into a bounded textual workspace.
- Refine conditioned on this workspace.
- Repeat.

Context length becomes controllable via degree of parallelism, no longer conflated with total tokens generated. The model accumulates wisdom across rounds through compact summaries rather than replaying full histories.

On AIME 2024, PDR achieves 93.3% accuracy compared to 79.4% for standard long chain-of-thought at matched latency budgets. For o3-mini at 49k effective tokens, accuracy improves from 76.9% (Long CoT) to 86.7% (PDR), a 9.8 percentage point gain. PDR also achieves the same accuracy as sequential refinement with 2.57x smaller sequential budget by converting parallel compute into accuracy without lengthening per-call context.

The researchers also trained an 8B model with operator-consistent RL to make training match the PDR inference interface. Mixing standard and operator RL yields an additional 5% improvement on both AIME benchmarks.

Bounded memory iteration can substitute for long reasoning traces while holding latency fixed. Strategic parallelism and distillation is shown to beat brute-force sequence extension.

Paper: https://t.co/EviERpmTu7

Learn to build effective AI Agents in our academy: https://t.co/zQXQt0PMbG
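
To make the generate / distill / refine loop described in the PDR post concrete, here is a rough Python sketch. It is not the paper's implementation: the `pdr` function, the `Model` type, the prompt wording, and the character-based workspace cap are all assumptions made for illustration, and the drafts are produced in a plain loop where a real system would issue the calls in parallel.

```python
from typing import Callable, List

# Hypothetical interface: a "model" is any function from prompt text to completion text.
Model = Callable[[str], str]


def pdr(
    problem: str,
    model: Model,
    rounds: int = 2,
    parallel: int = 4,
    workspace_chars: int = 500,
) -> str:
    """Sketch of a Parallel-Distill-Refine style loop (assumed structure)."""
    workspace = ""  # bounded textual workspace carried across rounds
    answer = ""
    for _ in range(rounds):
        # 1. Generate several drafts; each call sees only the problem and the
        #    compact workspace, never the full history of earlier rounds.
        drafts: List[str] = [
            model(f"Problem: {problem}\nNotes: {workspace}\nDraft #{i}:")
            for i in range(parallel)
        ]
        # 2. Distill the drafts into a summary and truncate it so the
        #    workspace stays bounded (the cap is an illustrative choice).
        summary = model("Summarize the key ideas in these drafts:\n" + "\n".join(drafts))
        workspace = summary[:workspace_chars]
        # 3. Refine: produce an answer conditioned on the workspace.
        answer = model(f"Problem: {problem}\nNotes: {workspace}\nFinal answer:")
    return answer
```

In this sketch each round makes `parallel + 2` model calls, and the per-call context depends on `workspace_chars` rather than on how many rounds have run, which mirrors the post's point that context length is decoupled from total tokens generated.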