@NVIDIAAIDev

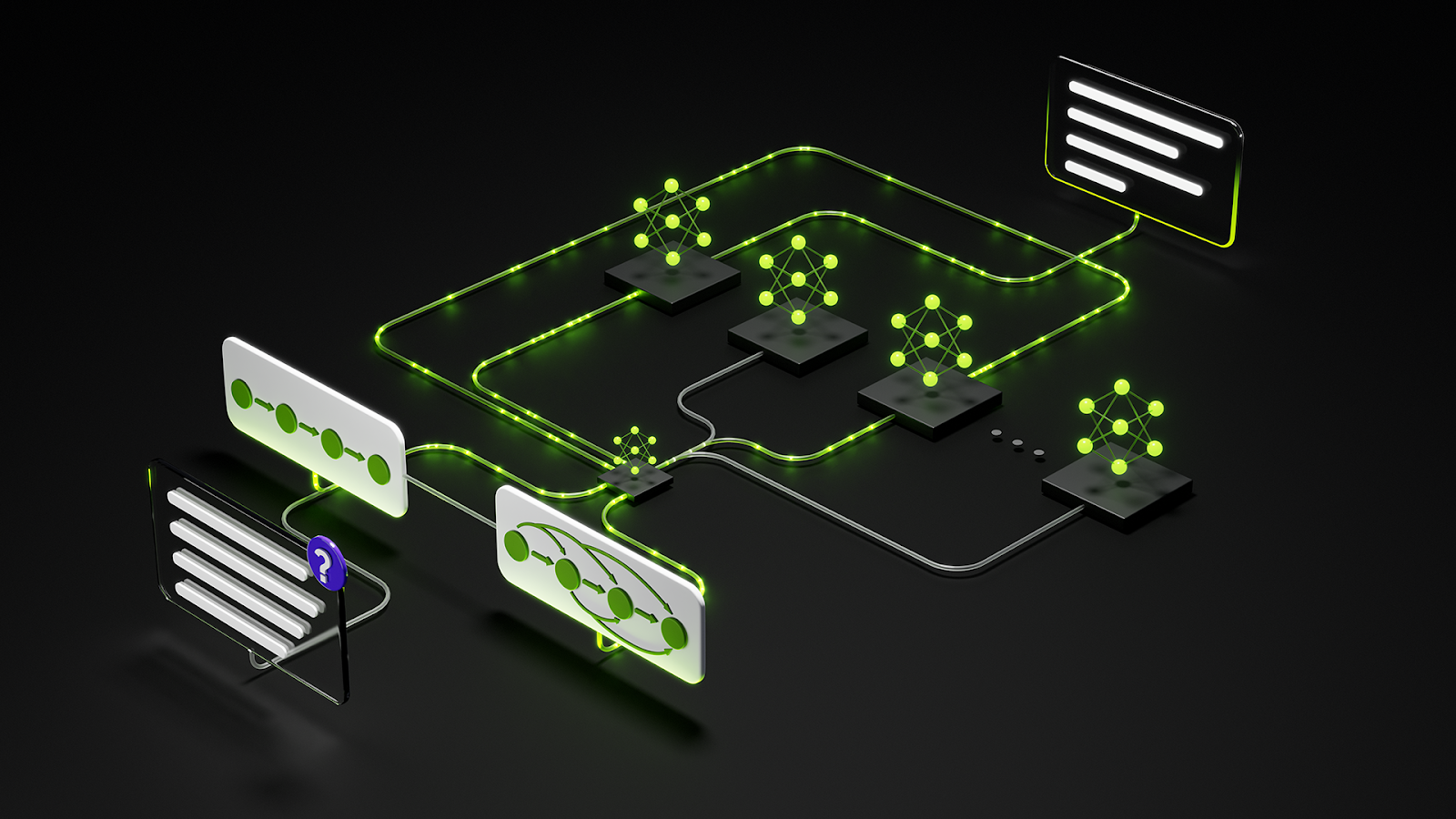

✨ Meet our new open family of models: @NVIDIA Nemotron 3.

Open in weights, data, tools, and training, Nemotron 3 is built for multi-agent apps and features:
• An efficient hybrid Mamba-Transformer MoE architecture
• 1M-token context for long-term memory and improved reasoning
• Multi-environment reinforcement learning via NeMo Gym for advanced skill adaptation

Plus NVFP4 pre-training, latent MoE, 1T tokens of data, and more.

📗 Read the details in our tech blog: https://t.co/9FD1jGp3zc
🤗 Try the model on @huggingface: https://t.co/n7an9b7hN9