Your curated collection of saved posts and media

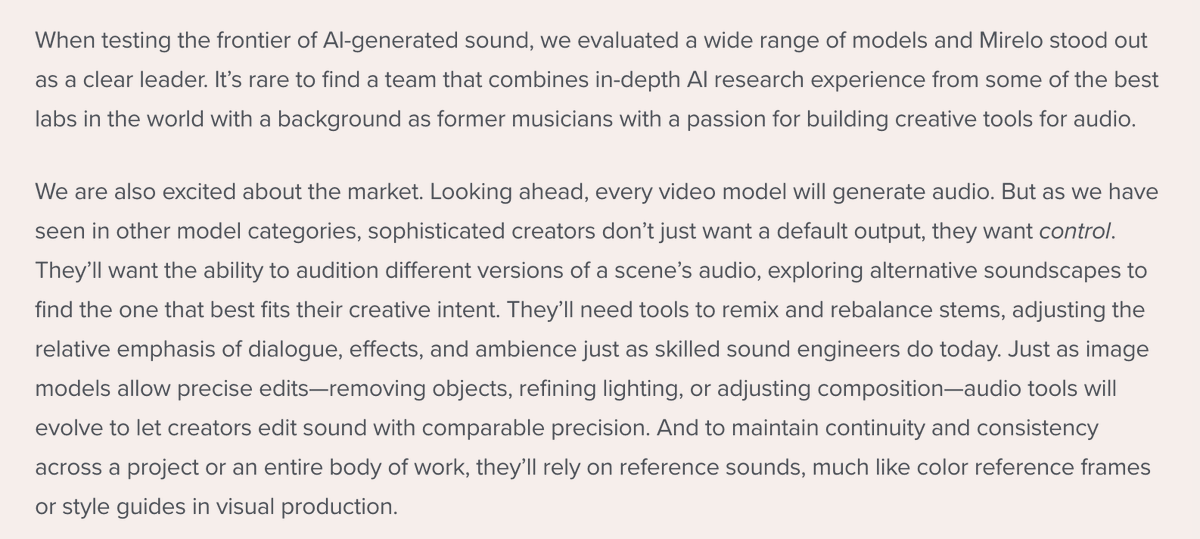

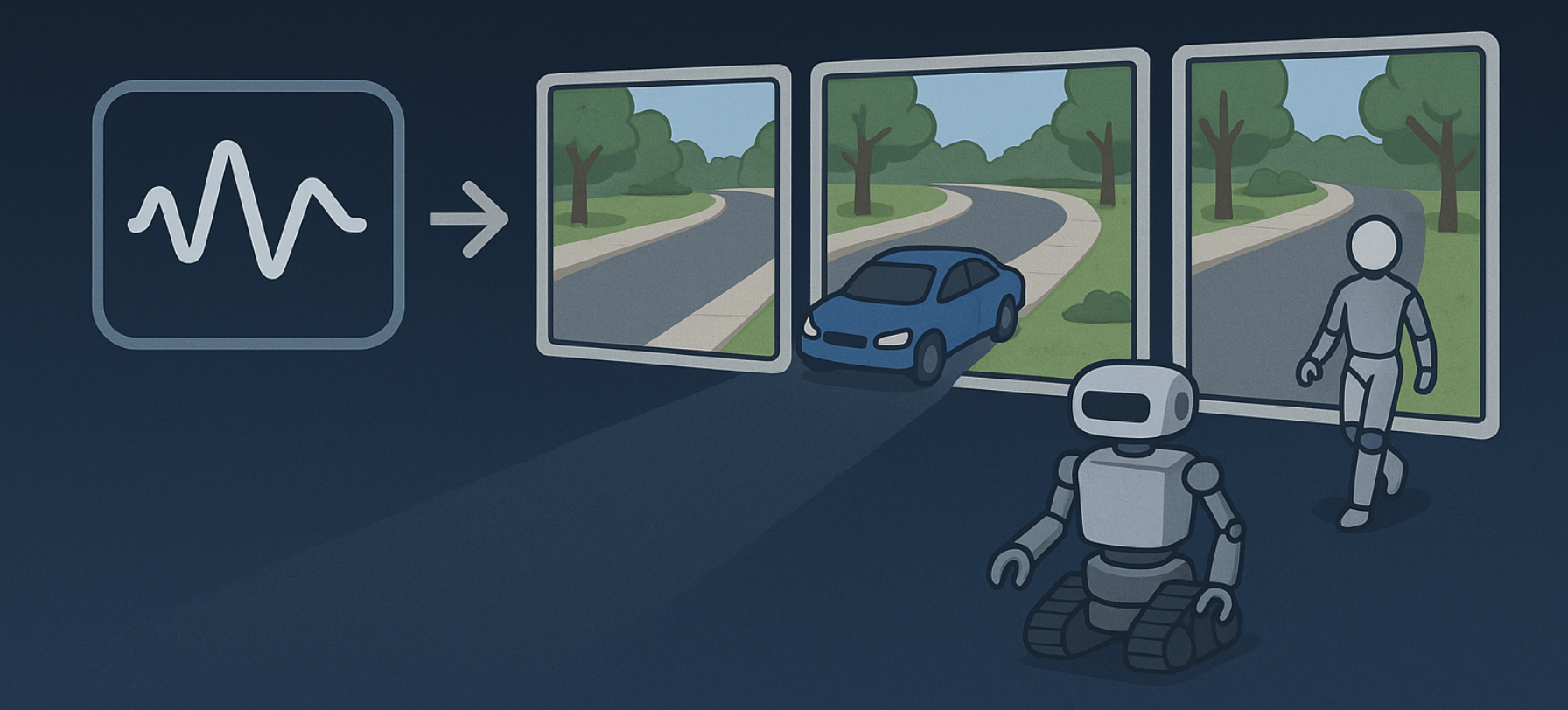

Announcing @a16z's investment in @MireloAI 🔉 @appenz and I couldn't be more thrilled to partner with this incredible team building the AI sound layer. Sound is a crucial part of video - and Mirelo's model enables creators to have more control and higher fidelity. More 👇 https://t.co/sOA8oSh0fJ

Big news today. 🚀 We are more than thrilled to announce our seed investment round of $41m, co-led by Index Ventures and Andreessen Horowitz. We are excited about this next chapter and will continue building the best models and product around video and audio! https://t.co/jJouqN

Editing front-end is now as easy as commenting on a page and asking for a change. Five weeks ago we wrote our first line of code on Inspector. Now, we're making it available for everyone to use. Try it out now! Much more coming soon ;) https://t.co/NgCidElvWW

Year 5/6 startup learnings > Limit your emotional attachments > You don't know someone until they make a mistake > Things are never as good as they seem, and never as bad as they seem plus - why the shifting forest from Shang-Chi may be the perfect startup metaphor? https://t.co/favvxrFrjJ

EgoX Egocentric Video Generation from a Single Exocentric Video https://t.co/t2RWPiqSPR

Will check emails soon https://t.co/XTae2DfQS2

chatterbox-turbo https://t.co/JBRIztWFkD

https://t.co/qYDMapLrVr

app: https://t.co/wT3MHAFIKU

Particulate Feed-Forward 3D Object Articulation https://t.co/rsSkfX998e

app: https://t.co/JSXmwr1rOx

Grok app store downloads surged in November with 11.44 million downloads, up 34.22% month over month, as per the latest @Similarweb data. Momentum accelerated sharply over the month. Clear signal of rising demand and expanding reach. https://t.co/hiIoMDObjX

GPT-5.2 landed and I decided to put it through its paces with GitHub Copilot and @code. It generated some stunning code and beautiful designs! I AM IMPRESSED! Let's go! https://t.co/Ee7ACoqlwB #vscode #microsoftai #microsoftlife #MicrosoftEmployeeCreator

@savvyswgxx @VersusMarkets raided all tweets. AuM8rJhPLPoNiicuiAQFkshkytmPVZv6yRfSE4EKCUSH https://t.co/wiqsjlfPHa

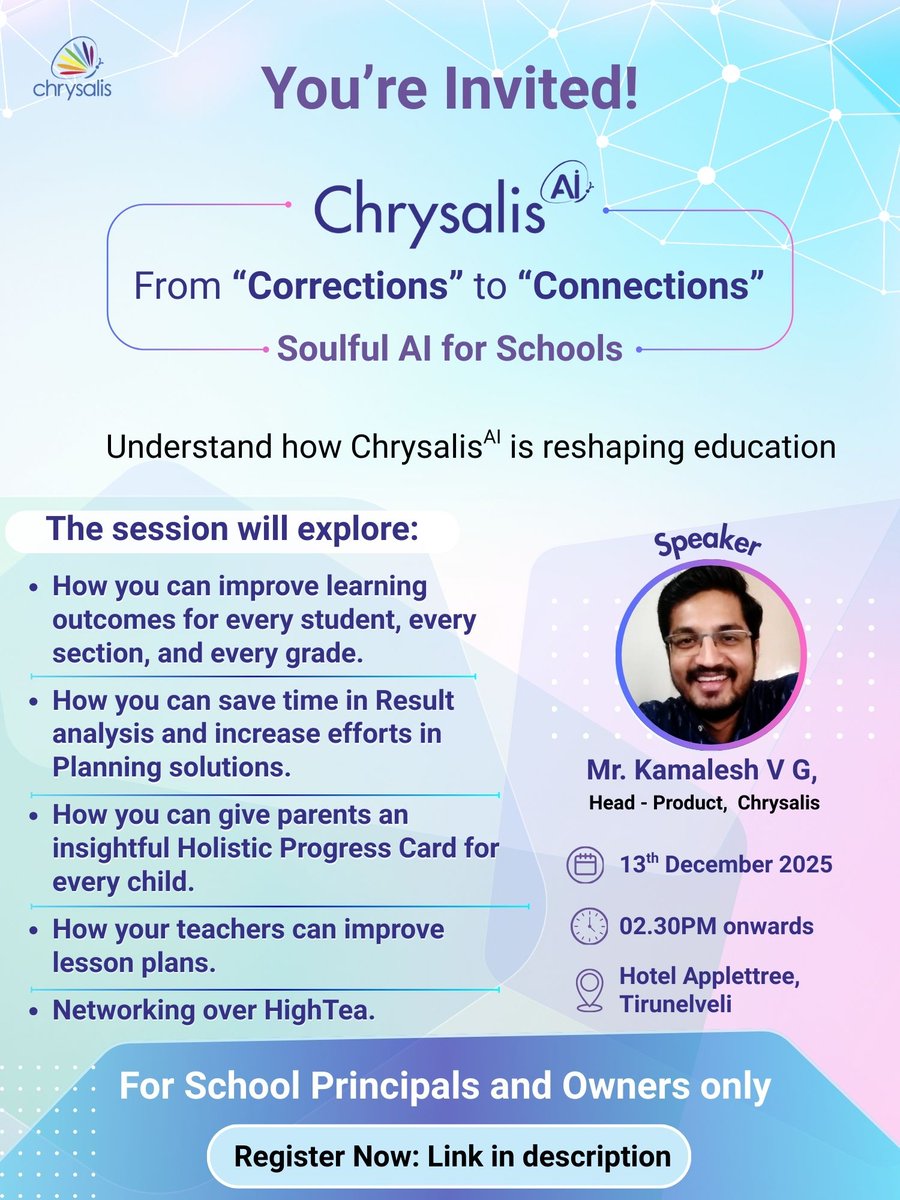

Tirunelveli, we’re coming to you! Register Now: https://t.co/SSZfwtbR3D #ChrysalisAI #CorrectionsToConnections #SoulfulAI #AIinEducation #AI #AIforschools #aiinschools #Tirunelveli https://t.co/XBRZ9XMtSg

Bitcoin’s trustlessness doesn’t pause at sunset — and neither does Native. As the network rests, the proofs keep validating, the system stays sovereign, and BTC remains exactly where it belongs: under your control. See you in the morning. 🐝⚡ #goNative #BTCFi #CryptoInfrastructure #beeartist

@crycrystallss @Shilllin I had an awesome time taking your Christmas photos together. Cheers ✨ https://t.co/Th9zGyWXzD

GN CT 🌙⚡ Ledgers don’t fail loudly. They fail when no one is watching. Native exists for that quiet moment. Not to rewrite Bitcoin’s ledger — but to stand guard over it, ensuring every action respects what the ledger already proves. While the hive sleeps, rules remain enforced, history remains intact, and execution never outruns truth. That’s guardianship — not control. Good night, CT. 🐝🌙 @goNativeCC @Tommy_The_Gods #Native #goNative #BTCFi #nBTC #CryptoInfrastructure #beeartist

quick hack i’ve been using to catch narrative pivots: search TL with min_faves:10 -filter:replies -filter:nativeretweets around tickers/themes, then bump to min_replies:1 to see convo depth. add from:yourID to audit which of your posts actually moved.

@NetworkNoya turns that raw scan into signal. it reads micro‑patterns in sentiment and narrative flow, flags when interest consolidates or when doubt starts to creep, and maps where momentum is forming before charts react.

my play: surface early posts, let $NOYA’s read guide size/entries, and stake for loyalty while automations execute across chains.

#AttentionFi #Web3 who else is running this workflow?
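The search operators named in the post above can be assembled into query strings programmatically. The sketch below is only an illustration: the helper name `build_search_query` and its parameters are hypothetical, and it only builds the text you would paste into the search box. It does not call any API.

```python
from typing import Optional


def build_search_query(
    topic: str,
    min_faves: int = 10,
    min_replies: Optional[int] = None,
    from_user: Optional[str] = None,
) -> str:
    """Assemble a search string from the operators named in the post.

    Hypothetical helper: it only concatenates operators, nothing is sent anywhere.
    """
    parts = [topic, f"min_faves:{min_faves}"]
    if min_replies is not None:
        # Second pass from the post: require replies to gauge conversation depth.
        parts.append(f"min_replies:{min_replies}")
    if from_user is not None:
        # Audit pass from the post: restrict results to one account.
        parts.append(f"from:{from_user}")
    # Exclude replies and native retweets so only original posts remain.
    parts += ["-filter:replies", "-filter:nativeretweets"]
    return " ".join(parts)


first_pass = build_search_query("$NOYA")
depth_pass = build_search_query("$NOYA", min_replies=1)
audit_pass = build_search_query("$NOYA", from_user="yourID")
print(first_pass)
```

The three calls mirror the three steps in the post: a broad scan, a conversation-depth check, and an audit of one account's own posts.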

Secure your coding agents with virtual filesystems and better document understanding.

Building safe AI coding agents requires solving two critical challenges: filesystem access control and handling unstructured documents. We've created a solution using AgentFS, LlamaParse, and @claudeai.

🛡️ Virtual filesystem isolation: agents work with copies, not your real files, preventing accidental deletions while maintaining full functionality
📄 Enhanced document processing: LlamaParse converts PDFs, Word docs, and presentations into high-quality text that agents can actually understand
⚡ Workflow orchestration: LlamaIndex Workflows provide stepwise execution with human-in-the-loop controls and resumable sessions
🔧 Custom tool integration: replace built-in filesystem tools with secure MCP server alternatives that enforce safety boundaries

This approach uses AgentFS (by @tursodatabase) as a SQLite-based virtual filesystem, our LlamaParse for state-of-the-art document extraction, and Claude for the coding interface - all orchestrated through LlamaIndex Agent Workflows.

Read the full technical deep-dive with implementation details: https://t.co/IiCW8Bo0NZ
Find the code on GitHub: https://t.co/mekxD21O4E
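To make the "agents work with copies" idea concrete, here is a minimal sketch of a SQLite-backed sandbox. It is an assumption-laden illustration, not the AgentFS API: the class `SandboxFS`, its methods, and the `demo` function are all hypothetical names, and the real project may work quite differently.

```python
import sqlite3
import tempfile
from pathlib import Path
from typing import Tuple


class SandboxFS:
    """Hypothetical SQLite-backed file store; NOT the AgentFS API."""

    def __init__(self) -> None:
        self.db = sqlite3.connect(":memory:")
        self.db.execute("CREATE TABLE files (path TEXT PRIMARY KEY, content BLOB)")

    def import_dir(self, root: Path) -> None:
        # Copy every real file into the database; later operations never touch disk.
        for p in root.rglob("*"):
            if p.is_file():
                self.write(p.relative_to(root).as_posix(), p.read_bytes())

    def write(self, path: str, content: bytes) -> None:
        self.db.execute(
            "INSERT OR REPLACE INTO files (path, content) VALUES (?, ?)",
            (path, content),
        )

    def read(self, path: str) -> bytes:
        row = self.db.execute(
            "SELECT content FROM files WHERE path = ?", (path,)
        ).fetchone()
        if row is None:
            raise FileNotFoundError(path)
        return row[0]

    def delete(self, path: str) -> None:
        self.db.execute("DELETE FROM files WHERE path = ?", (path,))


def demo() -> Tuple[bool, bool]:
    """Return (real file survived, file is gone inside the sandbox)."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "notes.txt").write_text("keep me")
        fs = SandboxFS()
        fs.import_dir(root)
        fs.delete("notes.txt")  # the agent "deletes" the file in its sandbox
        real_survives = (root / "notes.txt").read_text() == "keep me"
        try:
            fs.read("notes.txt")
            gone_in_sandbox = False
        except FileNotFoundError:
            gone_in_sandbox = True
        return real_survives, gone_in_sandbox
```

In this sketch a destructive action only changes rows in the database, so the file on disk is untouched. Exposing `read`, `write`, and `delete` as the agent's only file tools is one way to picture the "custom tool integration" point in the post.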

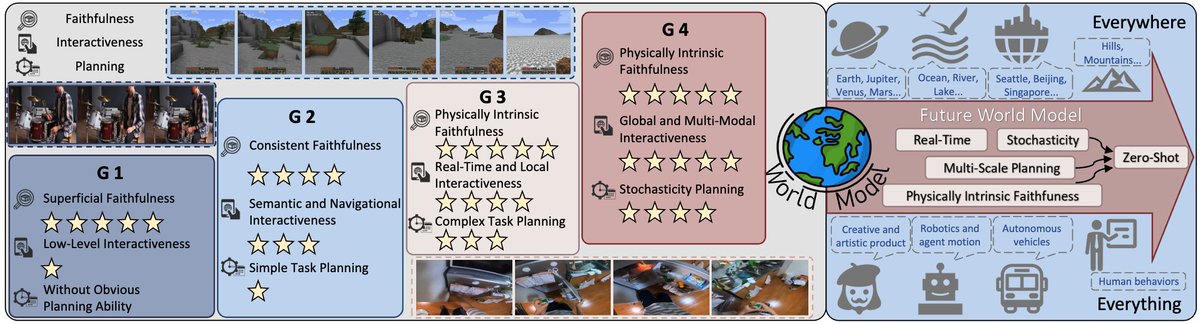

🗺️Towards A Roadmap of Video World Model🗺️ We conceptualize the progression of video generation through **four generations**, with the core capabilities ultimately culminating in a world model. 🏡 https://t.co/Cr9T4Uq7QP 📜 https://t.co/NvXmw6JbbG 🧑💻 https://t.co/lv4QuAW08m https://t.co/VYYR8Yk239

🚀 Announcing Echo, our new frontier model for 3D world generation.

Echo turns a simple text prompt or image into a fully explorable, 3D-consistent world. Instead of disconnected views, the result is a single, coherent spatial representation you can move through freely.

This is part of a bigger shift in AI: from generating pixels and tokens to generating spaces. Echo predicts a geometry-grounded 3D scene at metric scale, meaning every novel view, depth map, and interaction comes from the same underlying world, not independent hallucinations.

Once generated, the world is interactive in real time. You control the camera, explore from any angle, and render instantly, even on low-end hardware, directly in the browser. High-quality 3D world exploration is no longer gated by expensive equipment.

Under the hood, Echo infers a physically grounded 3D representation and converts it into a renderable format. For our web demo, we use 3D Gaussian Splatting (3DGS) for fast, GPU-friendly rendering, but the representation itself is flexible and can be easily adapted.

Why this matters: consistent 3D worlds unlock real workflows: digital twins, 3D design, game environments, robotics simulation, and more. From a single photo or a line of text, Echo builds worlds that are reliable, editable, and spatially faithful.

Echo also enables scene editing and restyling. Change materials, remove or add objects, explore design variations, all while preserving global 3D consistency. Editing no longer breaks the world.

This is only the beginning. Echo is the foundation for future world models with dynamics, physical reasoning, and richer interaction: environments that don’t just look right, but behave right.

Explore the generated worlds on our website and sign up for the closed beta. The era of spatial intelligence starts here. 🌍

#Echo #WorldModels #SpatialAI #3DFoundationModels

Check it out: https://t.co/QgsuLdhoe6

post here: https://t.co/7BDznUr9VM

Meta presents Exploring MLLM-Diffusion Information Transfer with MetaCanvas https://t.co/GnBqAingOh

discuss: https://t.co/VuMoPg6XXU

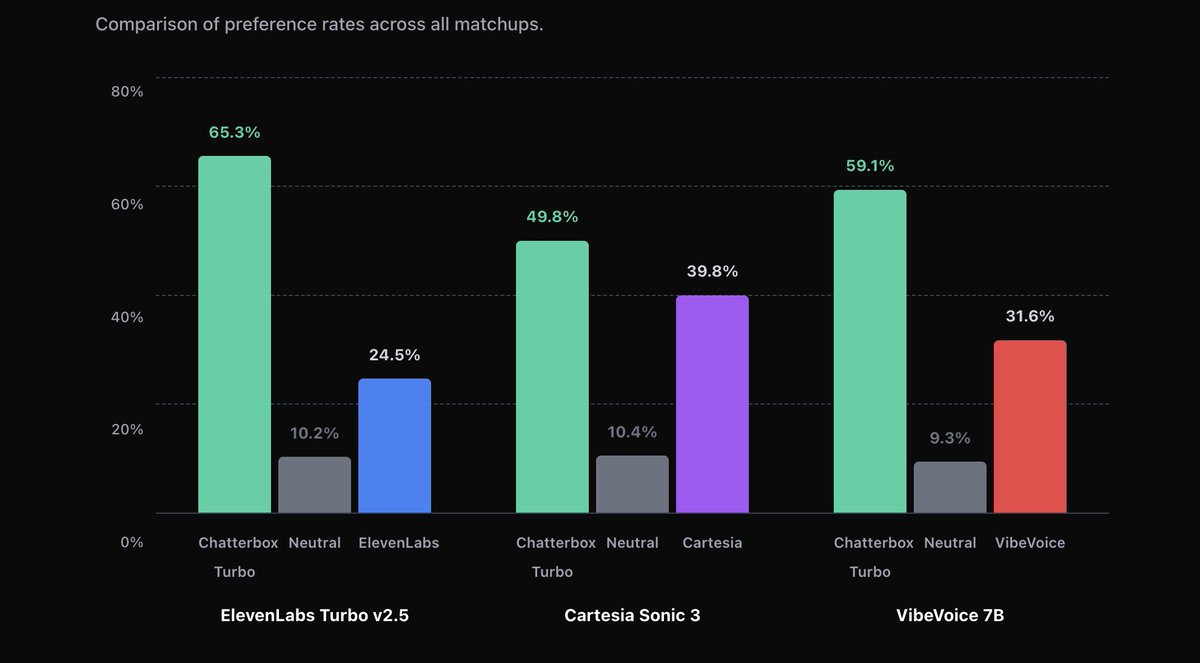

Resemble AI released chatterbox-turbo on Hugging Face https://t.co/qGwUyM6QXt

app: https://t.co/wT3MHAFIKU

model: https://t.co/qYDMapLrVr

✨ Meet our new open family of models: @NVIDIA Nemotron 3

Open in weights, data, tools, and training, Nemotron 3 is built for multi-agent apps and features:
• An efficient hybrid Mamba‑Transformer MoE architecture
• 1M token context for long-term memory and improved reasoning
• Multi‑environment reinforcement learning via NeMo Gym for advanced skill adaptation

Plus NVFP4 pre-training, latent MoE, 1T tokens of data, and more.

📗 Read the details in our tech blog: https://t.co/9FD1jGp3zc
🤗 Try the model on @huggingface: https://t.co/n7an9b7hN9

NEWS: NVIDIA announces the NVIDIA Nemotron 3 family of open models, data, and libraries, offering a transparent and efficient foundation for building specialized agentic AI across industries. Nemotron 3 features a hybrid mixture-of-experts (MoE) architecture and new open Nemotron pretraining and post-training datasets, paired with NeMo Gym, an open-source reinforcement learning library that enables scalable, verifiable agent training. Read more: https://t.co/ldf247t3Zz

IBM dropped CUGA, open-source enterprise agent to automate boring tasks 🔥 > given workspace files, it writes and executes code to accomplish any task 🤯 > comes with a ton of tools built for enterprise tasks, supports MCPs > plug in your favorite LLM 👏 here's a small demo where it retrieves info from a file, calculates revenue by writing code, and drafts an e-mail 🤯 they release code, a blog and a demo 🙌🏻 you can run this locally

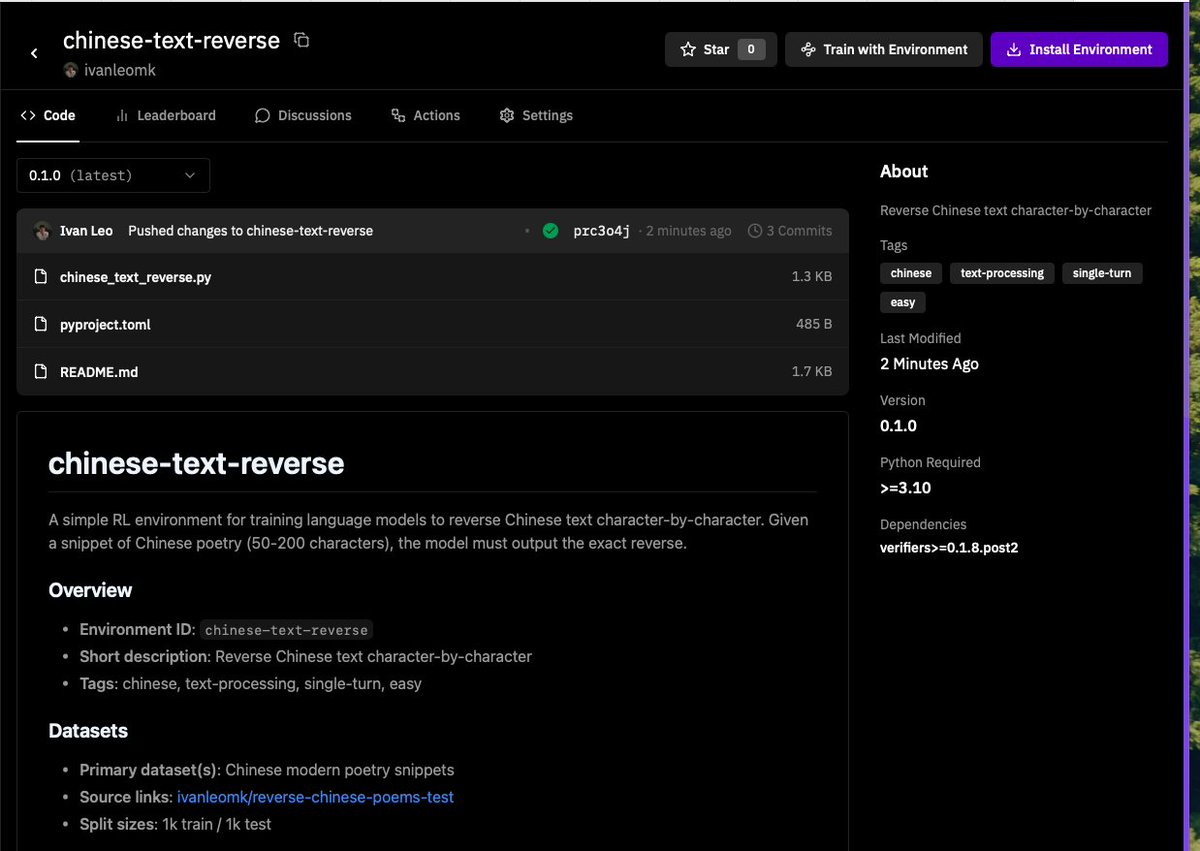

had some time tonight and hacked on my first environment to get models to reverse Chinese text with @PrimeIntellect's environments and @willccbb's verifiers library. This was surprisingly easy to get started! Next step: training a small model~ https://t.co/eexuycGlTI
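A text-reversal task like the one in the post above needs some way to score a model's output. The sketch below shows one plausible scoring function in plain Python. It is an assumption, not the post author's code and not the verifiers library API: the name `reversal_reward` and the choice of `difflib.SequenceMatcher` for partial credit are hypothetical.

```python
from difflib import SequenceMatcher


def reversal_reward(prompt_text: str, completion: str) -> float:
    """Score in [0, 1]; 1.0 when the completion is the exact character reversal.

    Hypothetical scoring rule: partial credit comes from a similarity ratio
    between the true reversal and the model output (surrounding whitespace ignored).
    """
    target = prompt_text[::-1]  # Python slices by code point, so CJK characters reverse cleanly
    return SequenceMatcher(None, target, completion.strip()).ratio()


exact = reversal_reward("你好世界", "界世好你")
unchanged = reversal_reward("你好世界", "你好世界")
```

A graded score rather than a strict pass/fail check gives a small model some signal while it is still getting only part of the string right.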

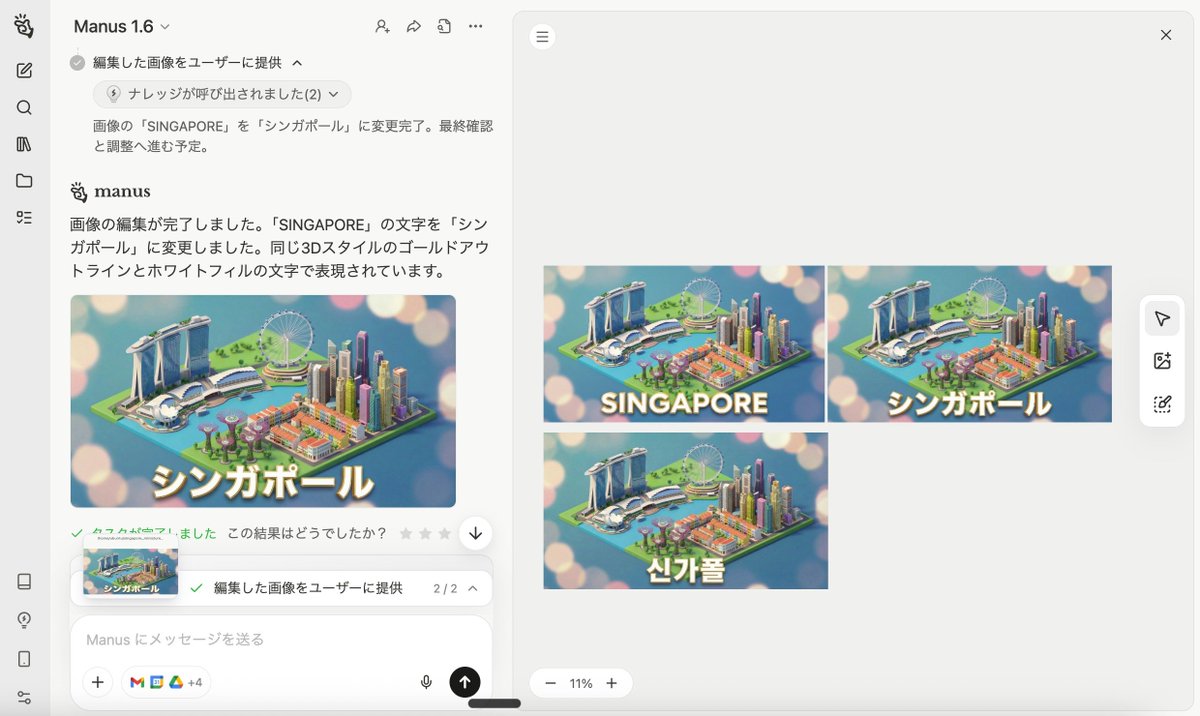

Nano Banana Pro generation ↓ text extraction ↓ editing. Now you can do it on Manus https://t.co/XxfG0gjwhx