Your curated collection of saved posts and media

“The way we think about ourselves will give rise to the world we live in.” ~ G. Braden, Cherokee https://t.co/bC5mjm6R5h

“Give me strength, not to be better than my enemies, but to defeat my greatest enemy, the doubts within myself. Give me strength for a straight back and clear eyes, so when life fades, as the setting sun, my spirit may come to you without shame” -Cherokee https://t.co/ZNFo689DEC

Anybody else enjoy soft native cock? 😍🤤 https://t.co/EPcM306JfG

Today is my birthday. I know I'm not perfect 🥲 https://t.co/vuFgbBsYZi

#UmkhokhaTheCurse I can't pretend anymore, I miss the old Nobuntu 😭😭😭😭 https://t.co/UCP32rY6h0

If you support Native American culture Say......❝Yes❞ https://t.co/lxPlpWghR5

beat the getting unblockabled allegations https://t.co/ArhFsx3aHf

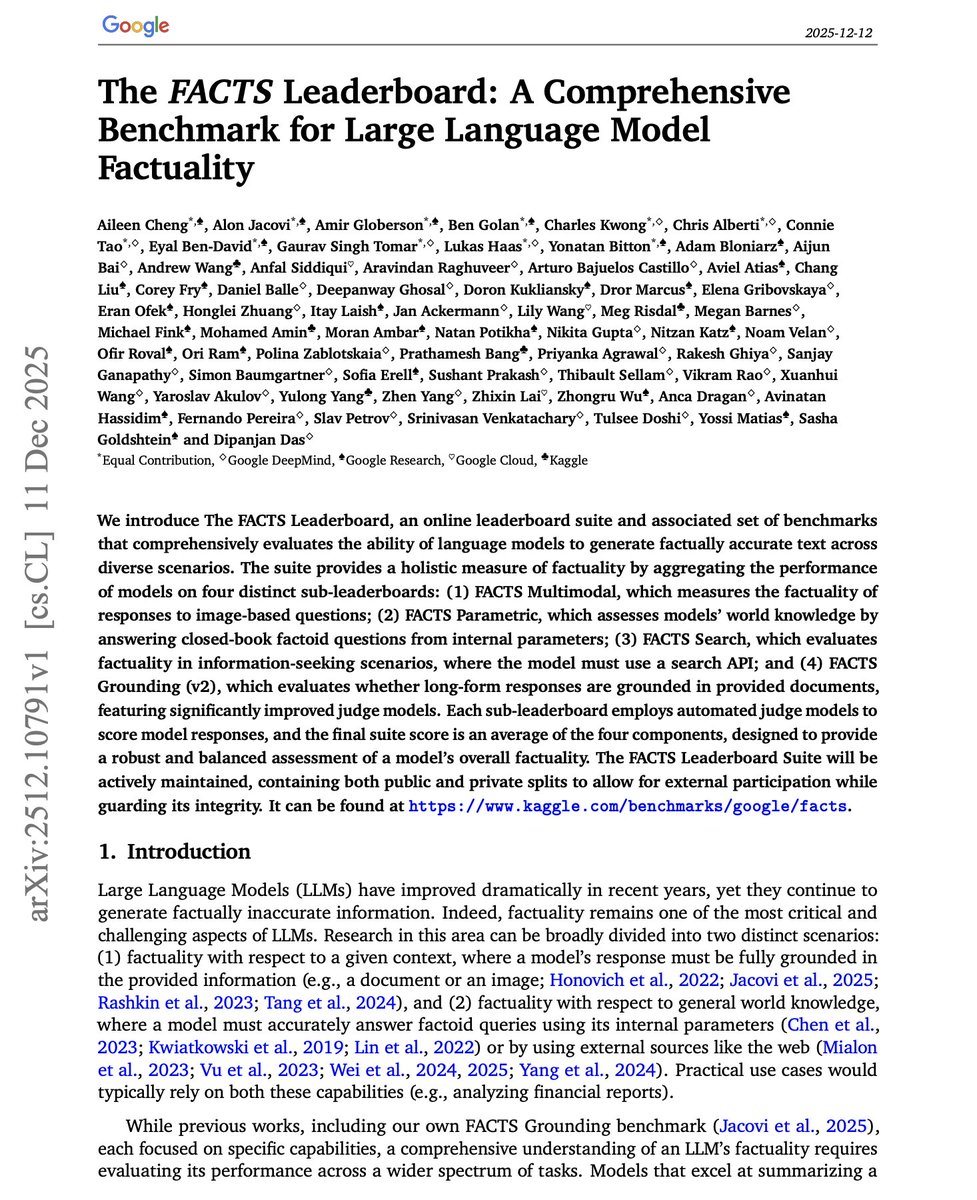

New benchmark from Google Research.
Models get better at benchmarks, but do they actually get more factual? Previous evaluations focused on narrow slices: grounding to documents, answering from memory, or using search. A model excelling at one often fails at another.
This new research introduces the FACTS Leaderboard, a comprehensive suite that measures factuality across four distinct dimensions:
- FACTS Multimodal tests visual grounding combined with world knowledge on ~1,500 image-based questions.
- FACTS Parametric assesses closed-book factoid recall using 2,104 adversarially-sampled questions that stumped open-weight models.
- FACTS Search evaluates information-seeking with web tools across 1,884 queries including multi-hop reasoning.
- FACTS Grounding v2 tests whether long-form responses stay faithful to provided documents.
The aggregate FACTS Score averages performance across all four.
Results: Gemini 3 Pro leads with 68.8% overall. Gemini 2.5 Pro follows at 62.1%, then GPT-5 at 61.8%.
But the sub-scores tell a different story. Claude models are precision-oriented, achieving high no-contradiction rates but hedging frequently on parametric questions. Claude 4 Sonnet doesn't attempt 45.1% of parametric queries. GPT models show higher coverage but more contradictions.
On multimodal, even the best models only reach ~47% accuracy when requiring both complete coverage and zero contradictions. On parametric knowledge, the spread is enormous: Gemini 3 Pro hits 76.4% while GPT-5 mini manages just 16.0%.
The benchmark maintains both public and private splits to prevent overfitting. All evaluation runs through Kaggle with standardized search tools for fair comparison.
A single factuality number hides crucial behavioral differences. Some models guess aggressively, others hedge conservatively. This suite exposes those tradeoffs across the contexts where factuality actually matters.
Paper: https://t.co/TCHOSGlQKs Learn how to evaluate and build effective AI agents in our academy: https://t.co/JBU5beIoD0
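The sketch below makes the aggregate score and the guess-vs-hedge tradeoff described above concrete. It assumes the aggregate is a plain unweighted mean of the four sub-scores, as the post describes. The grade labels (`correct`, `incorrect`, `not_attempted`) and the dict keys are hypothetical, and the input numbers are placeholders rather than leaderboard results. This is not the official FACTS grading code.

```python
from statistics import mean

# Our own labels for the four sub-benchmarks named in the post (hypothetical keys).
SUB_BENCHMARKS = ("multimodal", "parametric", "search", "grounding")


def facts_score(sub_scores: dict[str, float]) -> float:
    """Unweighted mean of the four sub-scores (assumed aggregation)."""
    missing = [name for name in SUB_BENCHMARKS if name not in sub_scores]
    if missing:
        raise ValueError(f"missing sub-scores: {missing}")
    return mean(sub_scores[name] for name in SUB_BENCHMARKS)


def hedging_breakdown(grades: list[str]) -> dict[str, float]:
    """Separate coverage from precision for per-question grades.

    Each grade is one of the hypothetical labels "correct", "incorrect",
    or "not_attempted" (the model declined or hedged).
    """
    total = len(grades)
    if total == 0:
        raise ValueError("no grades given")
    correct = grades.count("correct")
    attempted = total - grades.count("not_attempted")
    return {
        "accuracy": correct / total,        # rewards attempting every question
        "attempt_rate": attempted / total,  # coverage
        # precision rewards declining when unsure
        "precision": correct / attempted if attempted else 0.0,
    }


# Placeholder inputs, not real model results.
print(facts_score({"multimodal": 40, "parametric": 60, "search": 70, "grounding": 80}))
print(hedging_breakdown(["correct", "correct", "incorrect", "not_attempted"]))
```

A conservative model can post high precision with a low attempt rate, and an aggressive model can do the reverse. Both can land on the same single number, which is the behavioral difference the post says an aggregate hides.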

What is the best possible task accuracy under fixed constraints on the inference process? The constraints: tokens across all generations, depth of the generation chain (“latency”), total context length, and compute. PDR reaches the Pareto frontier: "draft in parallel → distill to a compact workspace → refine". https://t.co/jWoGu8EVdj
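Here is a PDR-style "draft in parallel → distill → refine" loop, as a minimal sketch. `generate` is a hypothetical stand-in for any LLM call (prompt in, text out). The prompts are invented, and the workspace budget is counted in characters for simplicity. This is not Meta's implementation. It shows two things: sequential depth grows with the number of rounds, not the number of drafts; and later rounds and the final answer see only the bounded workspace, not every earlier draft.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical model interface: prompt in, completion out.
Generate = Callable[[str], str]


def pdr(task: str, generate: Generate, num_drafts: int = 4, rounds: int = 2,
        workspace_chars: int = 400) -> tuple[str, dict[str, int]]:
    """PDR-style sketch: returns the final answer and simple call statistics."""
    workspace = ""
    stats = {"depth": 0, "calls": 0}
    with ThreadPoolExecutor(max_workers=num_drafts) as pool:
        for _ in range(rounds):
            # Draft in parallel: all drafts share one sequential step.
            # (A real sampler would use temperature > 0 so the drafts differ.)
            draft_prompt = f"Task: {task}\nNotes:\n{workspace}\nWrite a draft solution."
            drafts = list(pool.map(generate, [draft_prompt] * num_drafts))
            stats["depth"] += 1
            stats["calls"] += num_drafts

            # Distill: compress this round's drafts into a bounded workspace.
            distill_prompt = (f"Task: {task}\nDrafts:\n" + "\n---\n".join(drafts)
                              + "\nSummarize what is agreed, disputed, and still open.")
            workspace = generate(distill_prompt)[:workspace_chars]
            stats["depth"] += 1
            stats["calls"] += 1

    # Refine: the final answer sees only the task and the compact workspace.
    final = generate(f"Task: {task}\nNotes:\n{workspace}\nWrite the final answer.")
    stats["depth"] += 1
    stats["calls"] += 1
    stats["workspace_chars"] = len(workspace)
    return final, stats


# Dummy model that always returns 1,000 characters.
answer, stats = pdr("toy task", lambda prompt: "x" * 1000, num_drafts=3, rounds=2,
                    workspace_chars=50)
print(stats)
```

Raising `num_drafts` adds total tokens but no sequential depth, which is the axis the post calls "latency".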

NEW Research from Meta Superintelligence Labs and collaborators. The default approach to improving LLM reasoning today remains extending chain-of-thought sequences. Longer reasoning traces aren't always better. Longer traces conflate reasoning depth with sequence length and inh

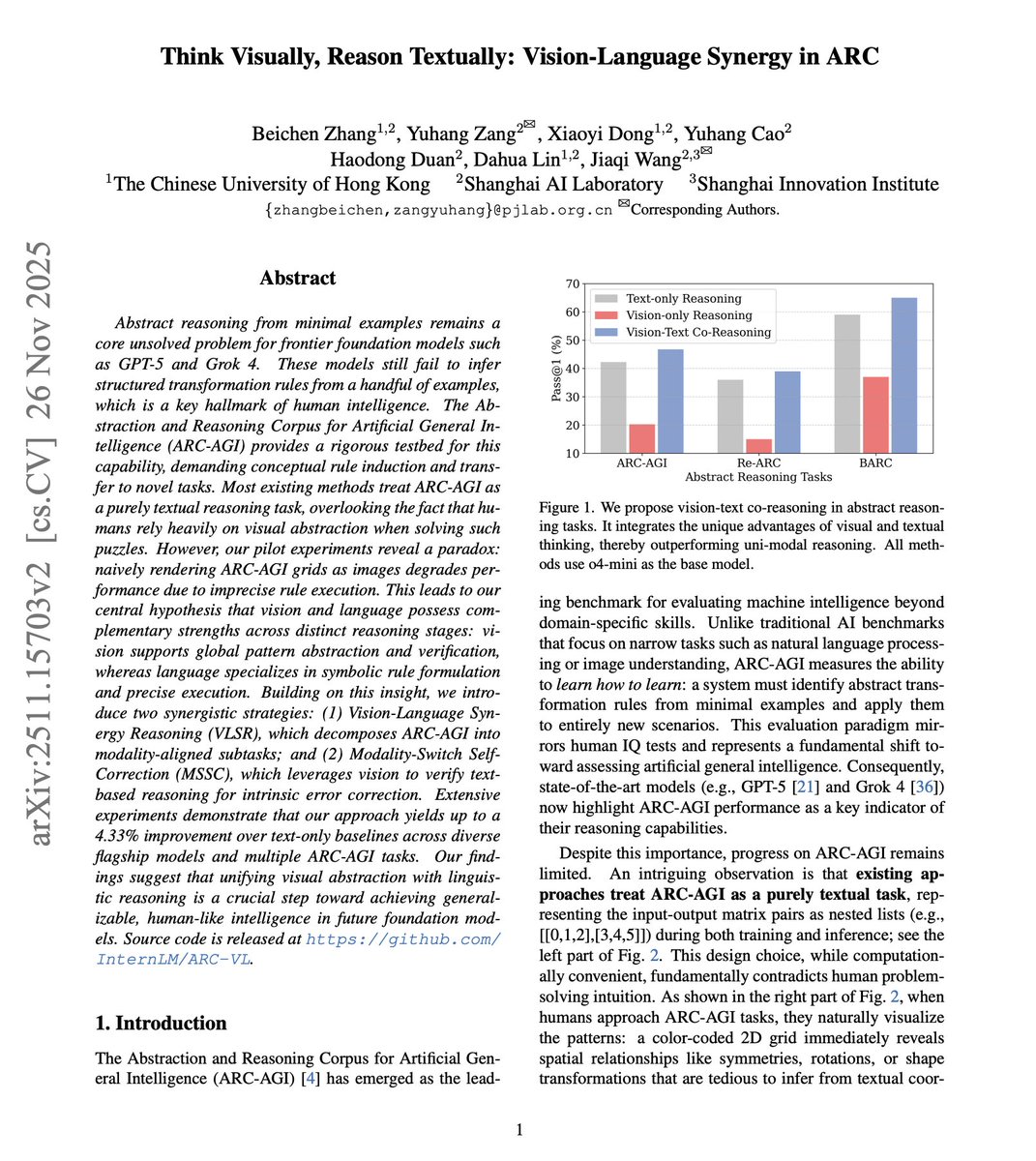

NEW research on abstract reasoning.
Frontier models like GPT-5 and Grok 4 still can't do what humans find trivially easy: infer transformation rules from a handful of examples.
The default approach to solving ARC-AGI (the leading benchmark for abstract reasoning) treats these visual puzzles as pure text: nested lists like [[0,1,2],[3,4,5]]. But that contradicts how humans actually solve these puzzles.
This new research introduces Vision-Language Synergy Reasoning (VLSR), a framework that strategically combines visual and textual modalities for different reasoning stages.
Vision and text have complementary strengths. Vision excels at global pattern recognition, providing a 3.0% improvement in rule summarization through holistic 2D perception. Text excels at precise execution, with vision causing a 20.5% performance drop on element-wise manipulation tasks.
VLSR decomposes the problem accordingly:
Phase 1: visualize example matrices as color-coded grids for rule summarization.
Phase 2: switch to text for precise rule application.
This is about matching the modality to the task.
They also introduce Modality-Switch Self-Correction (MSSC), which breaks the confirmation bias that plagues text-only self-correction. After generating an answer textually, the system verifies it visually.
Results across GPT-4o, Gemini-2.5-Pro, o4-mini, and Qwen3-VL: up to 7.25% improvement on Gemini and 4.5% on o4-mini over text-only baselines. Text-only self-correction often degrades performance across rounds, while MSSC improves consistently at each iteration.
The approach extends to fine-tuning. Vision-language synergy training achieves 13.25% on ARC-AGI with Qwen3-8B, outperforming text-only fine-tuning (9.75%) and the closed-source GPT-4o baseline (8.25%) with a much smaller model.
Abstract reasoning may require coordinated visual and linguistic processing, not either modality alone.
This work shows that matching the modality to the reasoning stage, rather than forcing everything through text, unlocks consistent gains across models. Paper: https://t.co/cQZDUGCmjz Learn to build effective AI agents in our academy: https://t.co/zQXQt0PMbG
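The two representations VLSR switches between can be sketched as follows. The same ARC grid is serialized as text (the nested-list form used for Phase 2) and rendered as a color-coded image (the form used for Phase 1). The 10-color palette is an assumption that approximates the common ARC color scheme. `summarize_rule` and `apply_rule` are hypothetical callables standing in for a vision-language model and a text model. This is not the paper's code.

```python
import json
from typing import Callable

import numpy as np

# Assumed RGB palette for ARC cell values 0-9 (approximation, not from the paper).
PALETTE = np.array([
    [0, 0, 0],        # 0 black
    [0, 116, 217],    # 1 blue
    [255, 65, 54],    # 2 red
    [46, 204, 64],    # 3 green
    [255, 220, 0],    # 4 yellow
    [170, 170, 170],  # 5 grey
    [240, 18, 190],   # 6 magenta
    [255, 133, 27],   # 7 orange
    [127, 219, 255],  # 8 light blue
    [135, 12, 37],    # 9 maroon
], dtype=np.uint8)

Grid = list[list[int]]


def grid_to_text(grid: Grid) -> str:
    """Text form for precise, cell-by-cell rule application (Phase 2)."""
    return json.dumps(grid)


def grid_to_image(grid: Grid, cell: int = 10) -> np.ndarray:
    """Color-coded image for holistic rule summarization (Phase 1)."""
    arr = np.asarray(grid, dtype=np.intp)
    if arr.min() < 0 or arr.max() > 9:
        raise ValueError("ARC cell values must be in 0..9")
    img = PALETTE[arr]  # (H, W, 3): one RGB color per cell
    # Upscale each cell to a cell x cell pixel block.
    return np.repeat(np.repeat(img, cell, axis=0), cell, axis=1)


def vlsr_solve(train_pairs: list[tuple[Grid, Grid]], test_input: Grid,
               summarize_rule: Callable[[list], str],
               apply_rule: Callable[[str, str], str]) -> str:
    # Phase 1: show the example pairs as images to a (hypothetical) vision model.
    images = [(grid_to_image(i), grid_to_image(o)) for i, o in train_pairs]
    rule = summarize_rule(images)
    # Phase 2: apply the summarized rule to the test grid in text form.
    return apply_rule(rule, grid_to_text(test_input))
```

MSSC would add a further step that renders the text answer with `grid_to_image` and asks the vision model to check it against the examples.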

The media is extremely racist against White people. Now, replace the word “White” with “Black” and imagine the outrage. This is not okay. Anti-White racism has to stop. https://t.co/E3NWW5KBAl

Unpopular opinion: Gradient descent and sampling won’t reach AGI. The quiet admissions in Meta’s PDR paper, behind the shiny evals: The workspace is not persistent. Long-context failure modes. “Manual” steering. Why is the transformer failing at every turn? Read: https://t.co/T4ib3pgCVg https://t.co/WlRx4Lo5D5

Speedrunning ImageNet Diffusion - 360x faster training
Many new techniques have demonstrated convergence speedups over DiT in the past few years; however, all of them have been studied in isolation, against increasingly outdated baselines. I present SR-DiT (SpeedrunDiT), which combines some of the best techniques into one new modern baseline

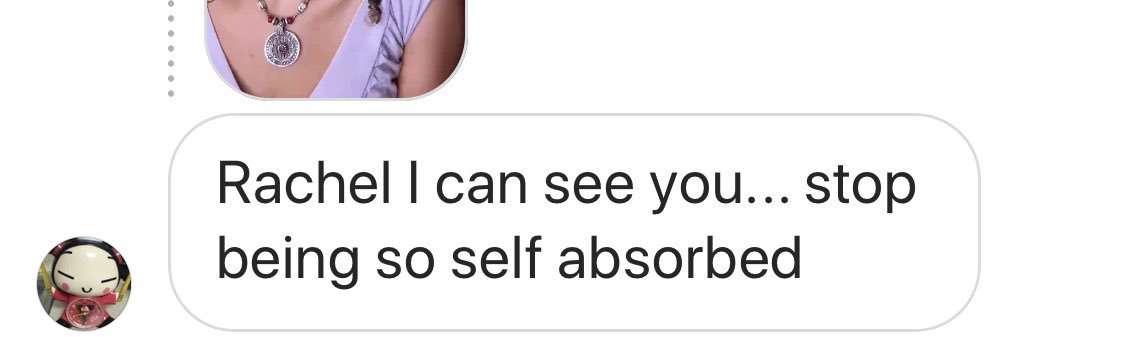

This is not about self-absorption. It’s about racism, parity & 💰. Speaking up is costing me $, but it may help younger folks coming up down the line achieve those things. https://t.co/oBVQkGslpZ

Are you a person of color who's encountered racist caricatures while you were in school — either recently or in the past? @NPRWeekend wants to hear from you. https://t.co/QIxQmqnSG6

reject embrace modernity tradition https://t.co/FSxD18Qd1r

#NativelyDigital Original: Copy: https://t.co/dTYgIuSSsQ

“If you are driven by fear, anger or pride… Nature will force you to compete. If you are guided by courage, awareness, love, tranquility and peace… Nature will serve you.” ~ A. Ray, 🪶✨ #INDIGENOUS #NativeTwitter https://t.co/o1X0AjAxN8

rachel: “he twisted what I said to use it against me out there, as if I were a prejudiced person, against minorities” KEEP TALKING RACHEL https://t.co/bChtAO2SrY

🔱 Native x @MantaNetwork: Scaling Web3 with ZK Tech! 🌊 We're elated to announce our latest integration w/ Manta, enhancing interoperability through ZK technology! Combining Native's Unified Liquidity & Manta's Modular design, together we're forging a unified crypto ecosystem. https://t.co/wYKvhCIWrx

🌊 @native_fi, Web3's Liquidity Layer, now joins the #MantaPacific Ecosystem! Native is a liquidity solution that combines bridges, assets and pricing into one offering. 🔗 https://t.co/zpVlhRqHup

From One-Person Companies to Generative Media, AI Funding Spans the Stack https://t.co/VcljFNMZPV @pymnts

I built a reading tracker with Manus 1.6 Max. https://t.co/ZyXiLQIbFE

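This is wild. Canva might not be needed anymore... With Manus 1.6, editing Nano banana pro images, from text editing to background removal, can now be done entirely in one place. https://t.co/hp2RpIJuRd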

https://t.co/dapoCilroN

fascinated by the trajectory this book took from "forgotten on arrival" to "dustbin of literature" to "rediscovered quiet masterpiece" to "the NYRB edition absolutely everyone has been recommended at some point" to "enough already" to "overrated trash"

The @NativeGaming team share their emotions after the reverse sweep! https://t.co/p0GKVWjSPB

RedStone 🤝 @native_fi RedStone is delighted to provide price feeds to @native_fi in our Core (Pull) Model. Now LST & LRT holders can easily contribute to Native’s programmable liquidity Aqua, receiving yield in return. Details below 👇 https://t.co/Eceo0f1sS5

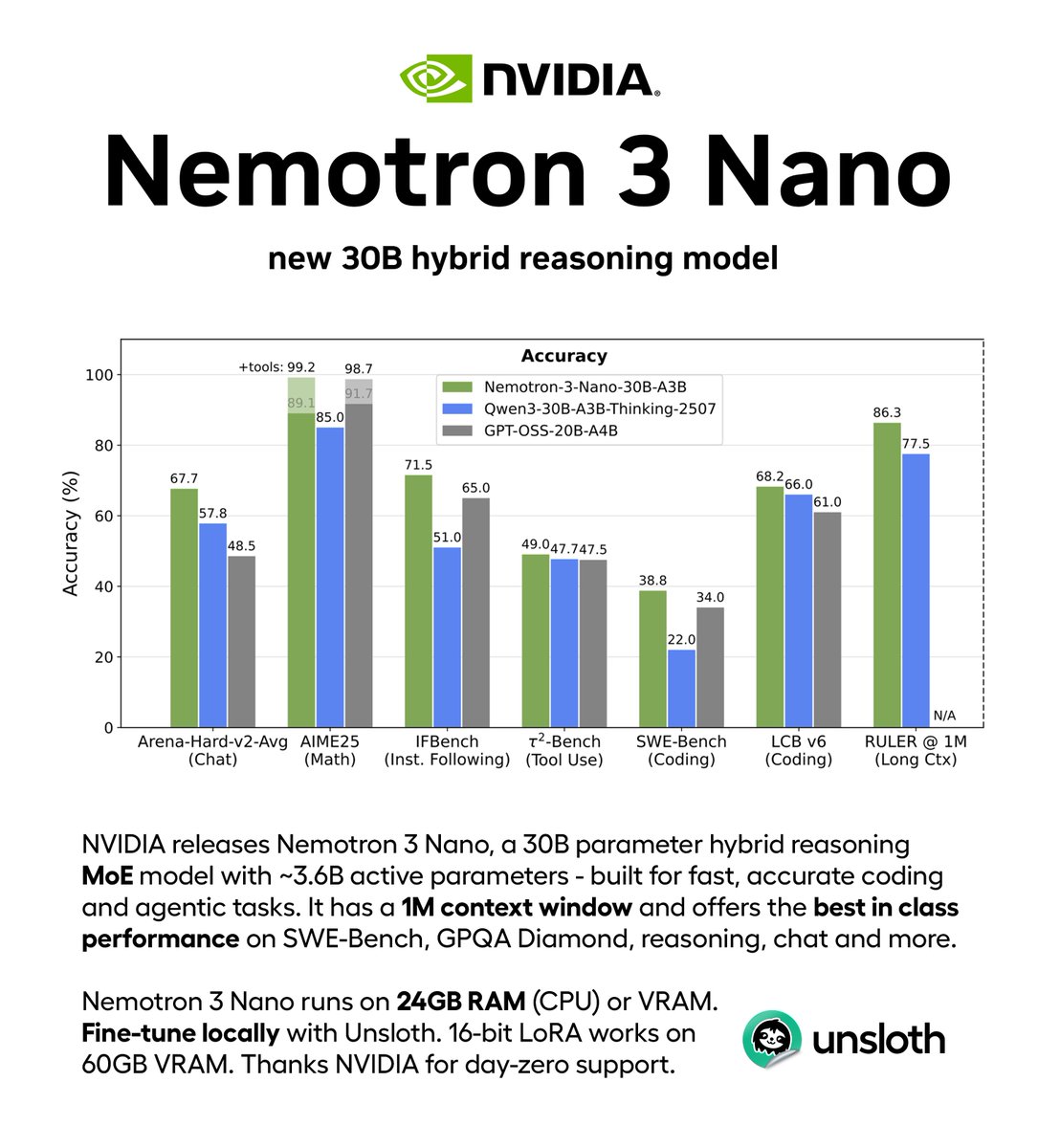

NVIDIA releases Nemotron 3 Nano, a new 30B hybrid reasoning model! 🔥 Nemotron 3 has a 1M context window and best-in-class performance on SWE-Bench, reasoning, and chat. Run the MoE model locally with 24GB RAM. Guide: https://t.co/UAHCV8dMNC GGUF: https://t.co/XdmG9ZSnNQ https://t.co/XttVvteTqE
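Here is a back-of-the-envelope check on the "24GB RAM" claim. Weight memory is roughly parameters × bits per weight ÷ 8. The bit widths below are assumptions. About 4.5 bits per weight corresponds to a Q4_0-style 4-bit quantization, and the actual GGUF files may use other schemes. The estimate also ignores cache/state memory, activations, and runtime overhead, so treat it as a lower bound rather than a measured figure.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in decimal GB (1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# 30B total parameters, from the post. For an MoE model every expert's weights
# must be loaded (or offloaded), so the total count matters, not the active count.
for bits in (16, 8, 4.5):  # assumed bit widths: BF16, 8-bit, ~4-bit quant
    print(f"{bits:>4} bits/weight -> {weight_memory_gb(30e9, bits):.1f} GB")
```

At about 4.5 bits per weight, the weights alone come to roughly 17 GB, which leaves some headroom under 24 GB. At 8 bits, they would not fit.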

ElevenLabs has officially LOST to open source.
ResembleAI allows you to clone ANY voice without verification using only 5-10 seconds of audio, and it dominates on paralinguistic tags for human-like expressions.
Most "fast" text-to-speech models sound robotic. Most "quality" TTS models are slow. None incorporate authentication at a foundational level. @resembleai solved all three.
Chatterbox Turbo delivers:
🟢 <150ms time-to-first-sound
🟢 State-of-the-art quality that beats larger proprietary models
🟢 Natural, programmable expressions
🟢 Zero-shot voice cloning with just 5 seconds of audio
🟢 PerTh watermarking for authenticated and verifiable audio
🟢 Open source – full transparency, no black boxes
Try it on HuggingFace: https://t.co/cPXPQyPrRN
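"Time-to-first-sound" is how long a streaming TTS engine takes to emit its first audio chunk. It is measured separately from total synthesis time. Below is a minimal sketch for measuring it over any chunk iterator. `fake_stream` is a hypothetical stand-in, not Resemble AI's API, and its chunk size and delays are arbitrary.

```python
import time
from typing import Iterable, Iterator


def time_to_first_chunk(chunks: Iterable[bytes]) -> tuple[float, float, int]:
    """Return (seconds to first chunk, total seconds, total bytes) for a stream."""
    start = time.perf_counter()
    first = None
    nbytes = 0
    for chunk in chunks:
        if first is None:
            first = time.perf_counter() - start  # latency the listener notices
        nbytes += len(chunk)
    total = time.perf_counter() - start
    if first is None:
        raise ValueError("stream produced no audio")
    return first, total, nbytes


def fake_stream() -> Iterator[bytes]:
    """Hypothetical streaming engine: 5 chunks of silence, 20 ms apart."""
    for _ in range(5):
        time.sleep(0.02)
        yield b"\x00" * 960


first, total, nbytes = time_to_first_chunk(fake_stream())
print(f"first chunk after {first * 1000:.0f} ms, full stream in {total * 1000:.0f} ms")
```

Because a generator does no work until it is first iterated, the timer covers the engine's full startup-to-first-audio path.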

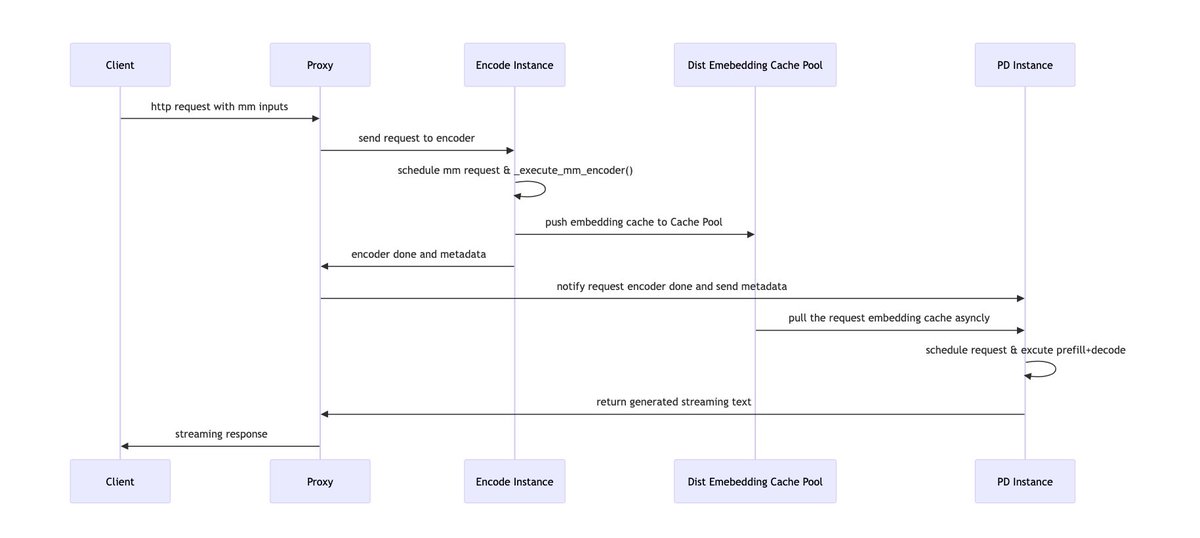

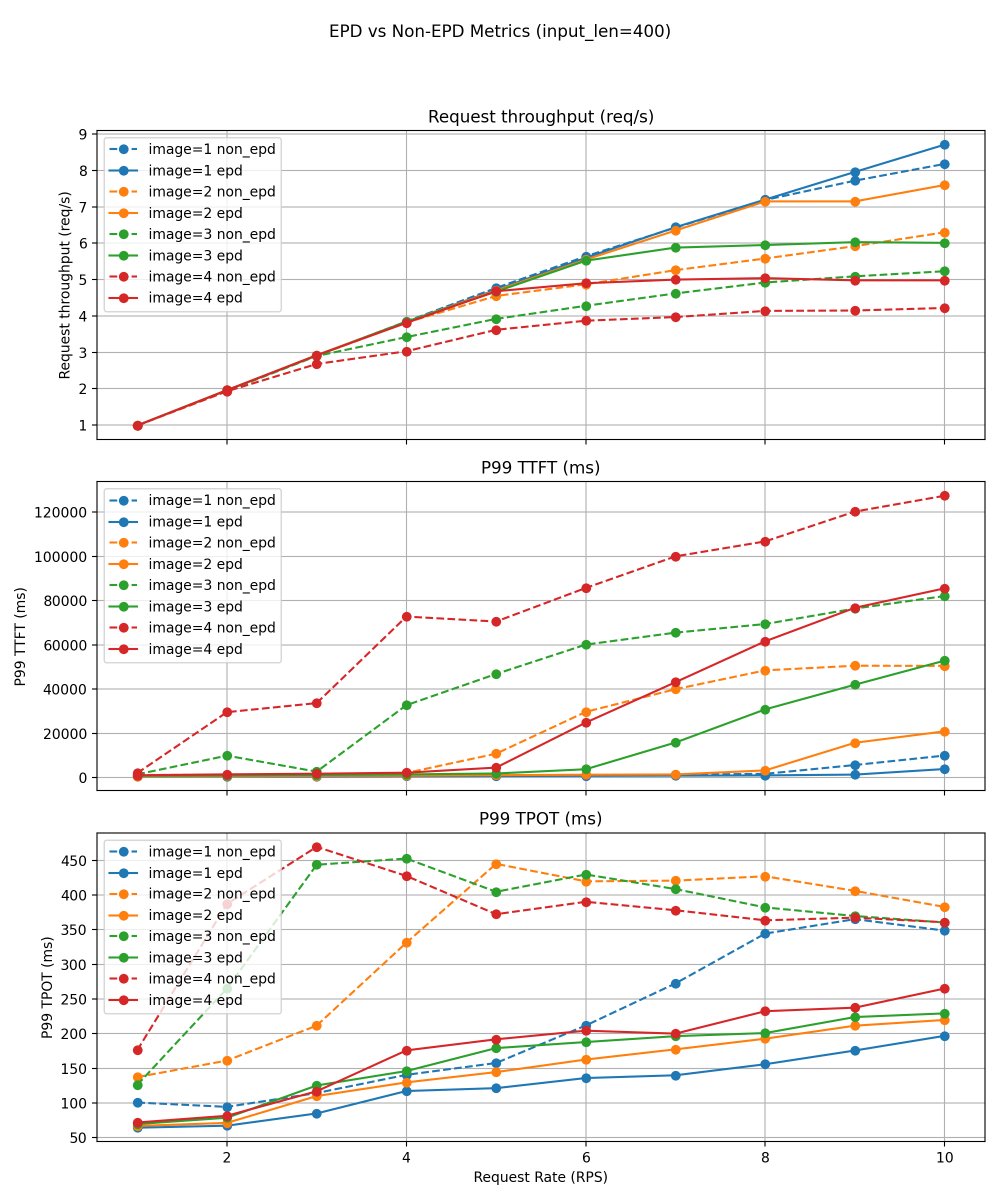

Multimodal serving pain: vision encoder work can stall text prefill/decode and make tail latency jittery. We built Encoder Disaggregation (EPD) in vLLM: run the encoder as a separate scalable service, pipeline it with prefill/decode, and reuse image embeddings via caching. This provides an efficient and flexible pattern for multimodal serving. Results: consistently higher throughput (5–20% across stable regions) and significant reductions in P99 TTFT and P99 TPOT. Read more: https://t.co/kGjOCuPZy2 #vLLM #LLMInference #Multimodal
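The caching and pipelining ideas can be sketched in plain Python. An encoder "service" is backed by a thread pool, and an LRU cache is keyed by the SHA-256 of the image bytes. The serving loop submits all encoder work before prefilling, so encoding runs ahead of prefill. `EncoderService`, `encode_fn`, and `prefill` are hypothetical names; this is not vLLM's API or its EPD implementation. Caching the `Future` rather than the finished embedding also deduplicates identical images that arrive while the first encode is still in flight.

```python
import hashlib
import threading
from collections import OrderedDict
from concurrent.futures import Future, ThreadPoolExecutor
from typing import Callable, Sequence


class EncoderService:
    """Hypothetical encoder service with an LRU cache of embedding futures."""

    def __init__(self, encode_fn: Callable[[bytes], list[float]],
                 workers: int = 2, capacity: int = 128) -> None:
        self._encode = encode_fn
        self._pool = ThreadPoolExecutor(max_workers=workers)
        self._cache = OrderedDict()  # image hash -> Future of its embedding
        self._capacity = capacity
        self._lock = threading.Lock()

    def submit(self, image: bytes) -> Future:
        key = hashlib.sha256(image).hexdigest()
        with self._lock:
            fut = self._cache.get(key)
            if fut is not None:
                self._cache.move_to_end(key)  # mark as most recently used
                return fut
            fut = self._pool.submit(self._encode, image)
            self._cache[key] = fut
            if len(self._cache) > self._capacity:
                self._cache.popitem(last=False)  # evict least recently used
            return fut

    def shutdown(self) -> None:
        self._pool.shutdown()


def serve(requests: Sequence[bytes], encoder: EncoderService,
          prefill: Callable[[list[float]], str]) -> list[str]:
    # Queue every encode first so the encoder works ahead of prefill.
    futures = [encoder.submit(img) for img in requests]
    # Prefill each request as soon as its embedding is ready.
    return [prefill(f.result()) for f in futures]
```

One consequence of caching futures is that a failed encode stays cached until it is evicted. A production version would drop failed entries.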