Your curated collection of saved posts and media

marketing is the new cs major https://t.co/bk1lrxokAe

If Reze had gone to school, she would have been a Soviet Pioneer #チェンソーマン #chainsawman https://t.co/hTB36Fh5VK

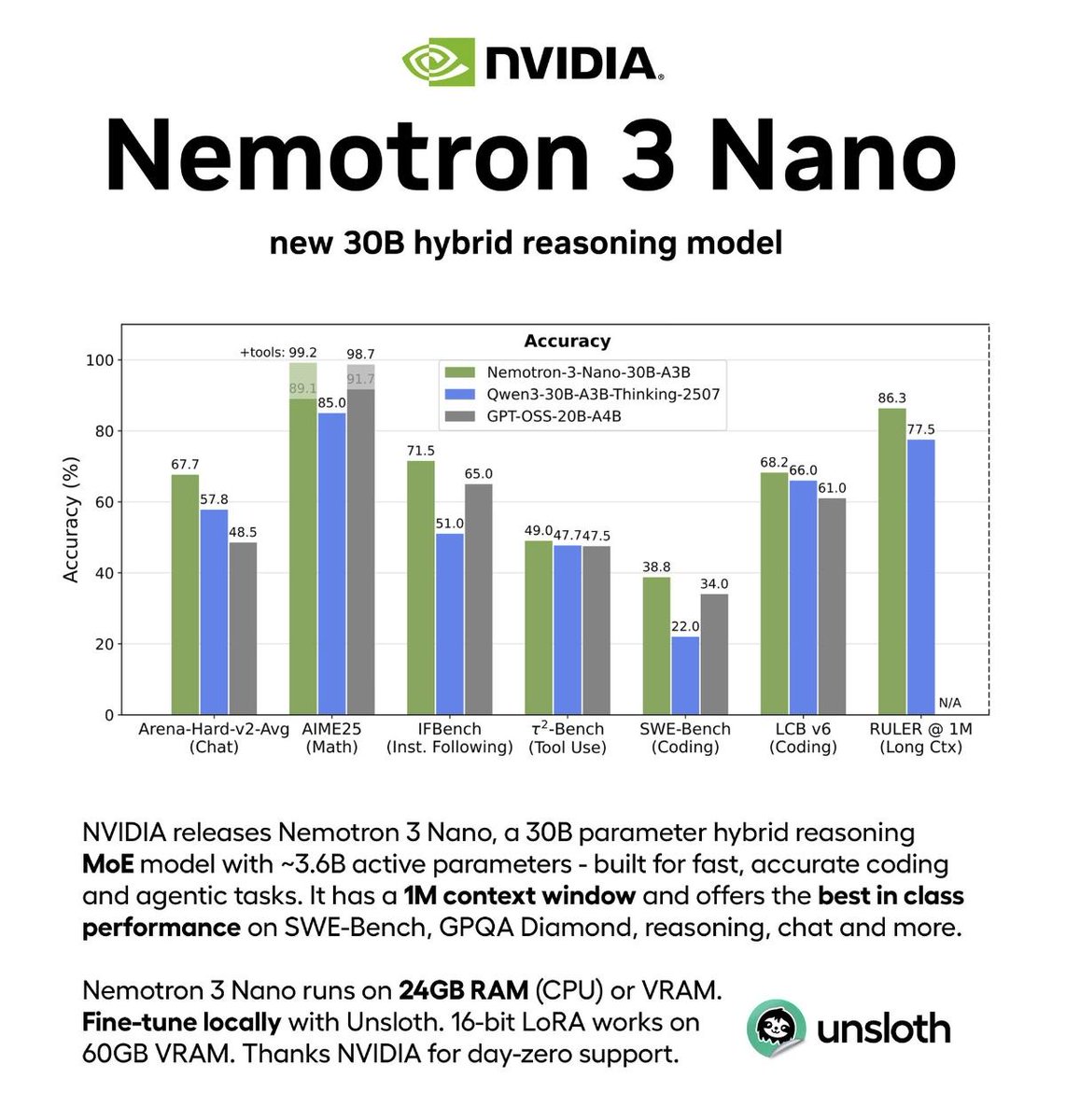

NVIDIA just open-sourced a 30B model that beats GPT-OSS and runs 2-3× faster. The hybrid MoE architecture is clever: it activates only 6 of 128 experts per token while maintaining accuracy. Supporting a 1M-token context puts it ahead of most competitors. Most importantly: full transparency with model weights, training recipes, and redistributable datasets.

Link: https://t.co/E3lzqy3trQ
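
The "6 of 128 experts per token" idea can be illustrated with a generic top-k gating sketch. This is not NVIDIA's implementation: the router, the scalar "experts", and the names `route_token` and `moe_forward` are hypothetical stand-ins; only the numbers 6 and 128 come from the post.

```python
import math
import random

NUM_EXPERTS = 128  # from the post
TOP_K = 6          # from the post

def route_token(router_logits, k=TOP_K):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the k weights sum to 1.
    m = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, experts, router_logits, k=TOP_K):
    """Weighted sum of the outputs of the selected experts only."""
    return sum(w * experts[i](x) for i, w in route_token(router_logits, k))

rng = random.Random(0)
# Hypothetical experts: simple scalar functions standing in for feed-forward blocks.
experts = [(lambda x, s=rng.uniform(0.5, 1.5): s * x) for _ in range(NUM_EXPERTS)]
# Hypothetical router scores for one token.
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

selected = route_token(logits)
print(len(selected), "of", NUM_EXPERTS, "experts active")
print("fraction of experts evaluated:", TOP_K / NUM_EXPERTS)  # 6/128 = 0.046875
```

Only the selected experts are ever called, which is where the speed-up of a sparse MoE layer comes from: per token, under 5% of the expert parameters do any work.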

Agents need great OSS models & data for RL. Really excited about this NVIDIA release:
• New Hybrid MoE architecture
• Data sets released, Pre-training (!!) and post-training
• Two larger models coming soon
Models here: https://t.co/3fJCMfhGMF
Free endpoint here: https://t.co/7v4AVoGTg1

NEWS: NVIDIA announces the NVIDIA Nemotron 3 family of open models, data, and libraries, offering a transparent and efficient foundation for building specialized agentic AI across industries. Nemotron 3 features a hybrid mixture-of-experts (MoE) architecture and new open Nemotro

The web was built for humans. And honestly, that's fine for you guys. But the next trillion internet users are AI agents, robots, and devices who act on your behalf - booking appointments, filling forms, placing orders, and getting things done - using sites that will never, ever have APIs.
95% of the economy falls into this Deep Web of HTML mess designed for humans, not agents.
Oh, those generic computer use agents aren't taking you anywhere. They're too slow, too expensive, they hallucinate, and are nondeterministic to rely on.
That changes now. This is me, Mino, a web agents API to build on this Deep Web. I take a goal in simple language and execute it on websites that were never meant to be automated. Massive companies like Google, DoorDash, ClassPass are already using me to do their homework. Now it’s your turn.
How though? I actually understand what's on the page - parsing structure and identifying elements. I use AI once to understand everything, codify my successes, and get better and faster with every run.
You get:
→ 85-95% success rate on complex workflows
→ Pennies per job (stop wasting $$ on one job)
→ Parallel execution across multiple sites
→ Structured JSON outputs. Every. Single. Time.
The web wasn't built for agents. I forced it to work anyway. Go build something real. 50 completed runs on the house: https://t.co/jGnj2amXto

This new paper is wild! It suggests that LLM-based agents operate according to macroscopic physical laws, similar to how particles behave in thermodynamic systems. And it looks like it's a discovery that applies across models.
LLM agents work really well on different domains, but we don't have a theory for why. The behavior of these systems is often viewed as a direct product of complex internal engineering: prompt templates, memory modules, and sophisticated tool calling. The dynamics remain a black box.
This new research suggests that LLM-driven agents exhibit detailed balance, a fundamental property of equilibrium systems in physics.
What does this mean? It suggests that LLMs don't just learn rule sets and strategies; they might be implicitly learning an underlying potential function that evaluates states globally, capturing something like "how far the LLM perceives a state to be from the goal." This enables directed convergence without getting stuck in repetitive cycles.
The researchers embedded LLMs within agent frameworks and measured transition probabilities between states. Using a least action principle from physics, they estimated the potential function governing these transitions.
The results across GPT-5 Nano, Claude-4, and Gemini-2.5-flash: state transitions largely satisfy the detailed balance condition. This indicates that their generative dynamics exhibit characteristics similar to equilibrium systems.
In a symbolic fitting task with 50,228 state transitions across 7,484 different states, 69.56% of high-probability transitions moved toward lower potential. The potential function captured expression-level features like complexity and syntactic validity without needing string-level information.
Different models showed different behaviors on the exploration-exploitation spectrum. Claude-4 and Gemini-2.5-flash converged rapidly to a few states. GPT-5 Nano explored widely, producing 645 different valid outputs in 20,000 generations.
This might be the first discovery of a macroscopic physical law in LLM generative dynamics that doesn't depend on specific model details. It suggests we can study AI agents as physical systems with measurable, predictable properties rather than just engineering artifacts.
Paper: https://t.co/UO1pMWxctY
Learn to build effective AI Agents in our academy: https://t.co/JBU5beIoD0
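
For readers unfamiliar with detailed balance, here is a toy sketch of what the condition says. It is not the paper's method or data: the four states, their potentials `V`, and the Metropolis-style transition rule are assumptions chosen because that rule is known to satisfy detailed balance with respect to a distribution proportional to exp(-V).

```python
import math

# Hypothetical potentials for four toy states (lower = closer to the goal).
V = {"a": 2.0, "b": 1.0, "c": 0.5, "d": 0.0}
states = list(V)

def metropolis_row(i):
    """Transition probabilities out of state i under a Metropolis rule."""
    row = {}
    for j in states:
        if j != i:
            # Propose uniformly among the other states,
            # accept with probability min(1, exp(-(V_j - V_i))).
            row[j] = (1.0 / (len(states) - 1)) * min(1.0, math.exp(-(V[j] - V[i])))
    row[i] = 1.0 - sum(row.values())  # remaining mass stays in place
    return row

P = {i: metropolis_row(i) for i in states}

# Equilibrium distribution implied by the potential: pi_i proportional to exp(-V_i).
Z = sum(math.exp(-V[i]) for i in states)
pi = {i: math.exp(-V[i]) / Z for i in states}

def max_detailed_balance_violation(P, pi):
    """Largest |pi_i P_ij - pi_j P_ji| over all pairs; 0 means detailed balance holds."""
    return max(abs(pi[i] * P[i][j] - pi[j] * P[j][i]) for i in states for j in states)

def downhill_fraction(P, V):
    """Share of off-diagonal transition mass that moves to a lower potential."""
    down = sum(P[i][j] for i in states for j in states if V[j] < V[i])
    total = sum(P[i][j] for i in states for j in states if i != j)
    return down / total

print("max violation:", max_detailed_balance_violation(P, pi))
print("downhill share:", downhill_fraction(P, V))
```

In this toy chain the log-ratio of forward to backward transition probabilities equals the drop in potential, so a potential can in principle be recovered from measured transition probabilities. The downhill share printed here is only loosely analogous to the 69.56% figure quoted in the post.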

NVIDIA just released Nemotron-Agentic-v1 on Hugging Face. This dataset empowers LLMs as interactive, tool-using agents for multi-turn conversations and reliable task completion. Ready for commercial use. https://t.co/U2Q09UO3yv

Introducing Bolmo, a new family of byte-level language models built by "byteifying" our open Olmo 3—and to our knowledge, the first fully open byte-level LM to match or surpass SOTA subword models across a wide range of tasks. 🧵 https://t.co/qgsn4QNvJP

No Gemma 4 yet, so I went through Google’s @huggingface. Discovered this, wow: MedGemma is a collection of Gemma 3 variants that are trained for performance on medical text and image comprehension. Developers can use MedGemma to accelerate building healthcare-based AI applications. https://t.co/yujaQR25aC

Apple just released Sharp: Sharp Monocular View Synthesis in Less Than a Second https://t.co/bXoFtIPmWs

Google is preparing for a new open source release on @huggingface. Also noticed just recently that Gemma models are not available on AI Studio anymore. What do you expect? 👀 https://t.co/zOenLbvvbb

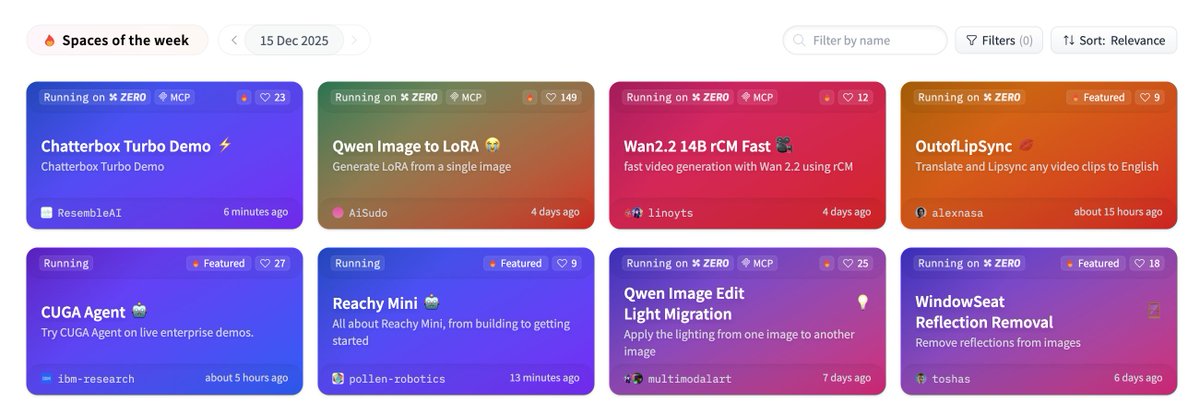

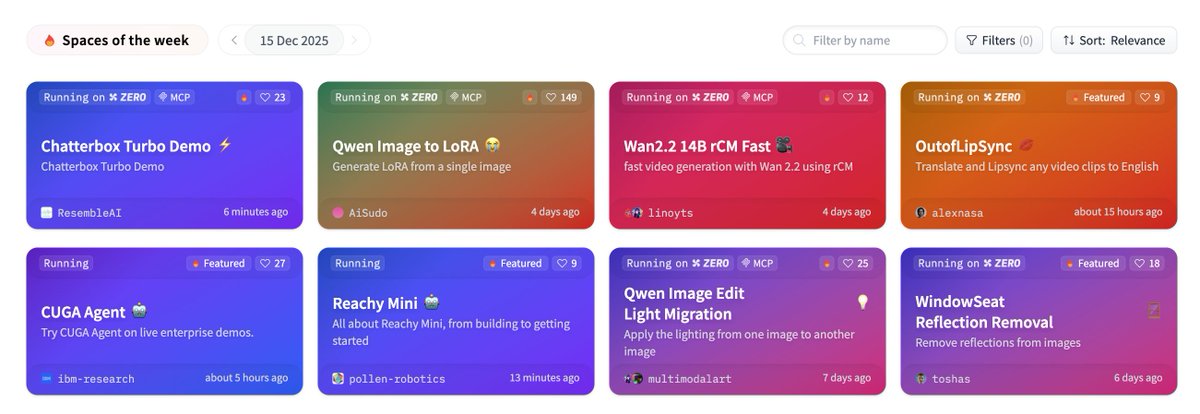

PSA: https://t.co/n1RdT94ij3 is a good link to bookmark 🤗(and refresh)

back at it again with Chatterbox turbo. #1 on @huggingface happy holidays! go build! https://t.co/3iEsbmvNP9

https://t.co/z3Jfprq34X

On this day, an enduring mystery https://t.co/blnIZnf8SW

"T&E. T AND E." https://t.co/fdfSIz0GvS

https://t.co/1E0N2tQBaj

@JackPosobiec https://t.co/XKuep1G6sV

GitHub Copilot is smart, but it can’t read your mind. 🧠 Think of custom instructions like onboarding a new teammate. You need to transfer that "institutional knowledge" to get the best results: 🛠️ The stack 📋 The rules 🎯 The goal Here are 5 tips to write instruction files that actually work. ⬇️ https://t.co/jGqmv5jMpN

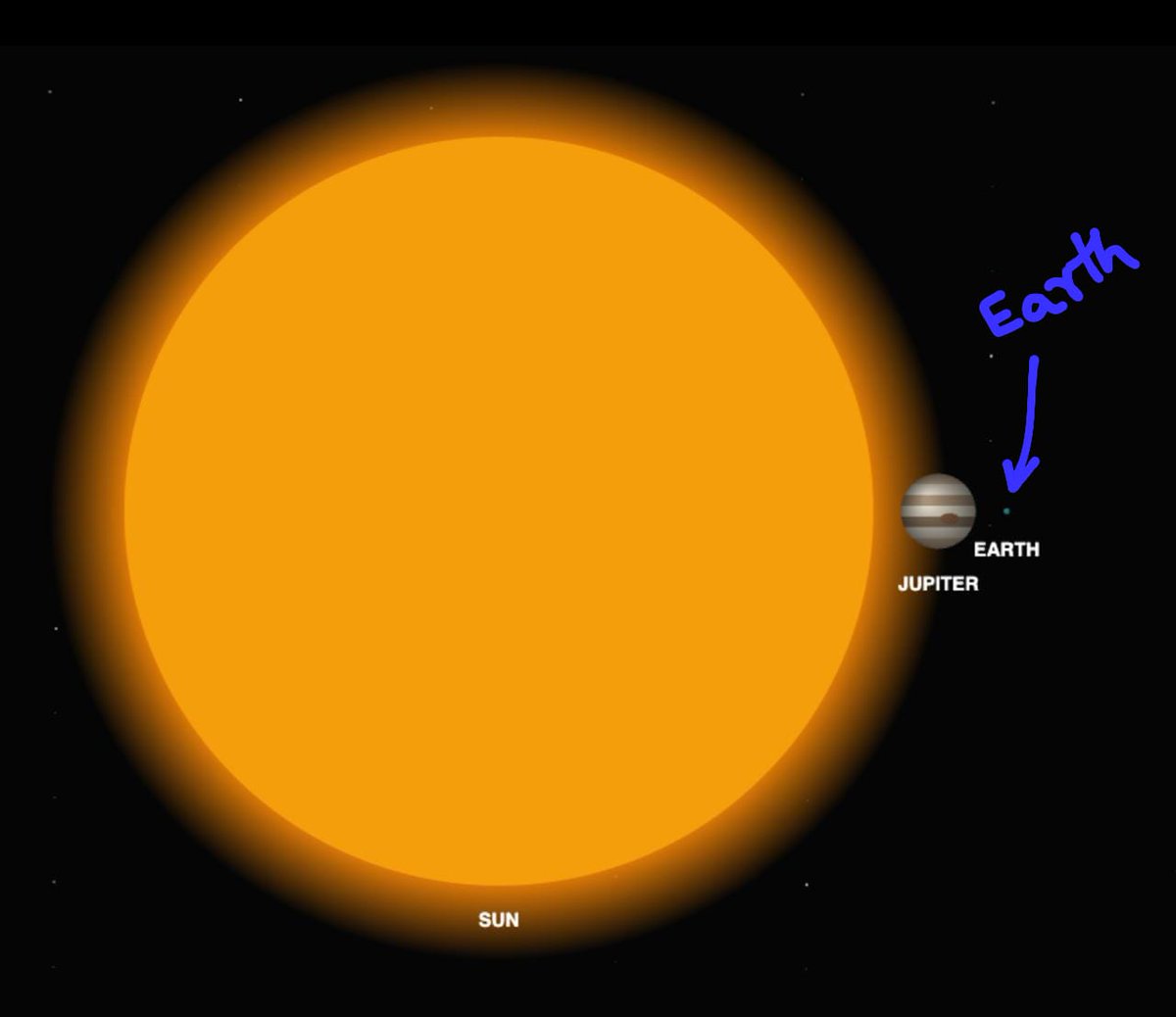

Here is how tiny Earth looks compared to the massive Sun. It would take more than 1.3 million Earths to fill up the Sun. One giant nuclear fusion reactor in space just waiting to be tapped into… https://t.co/GYepTL0nFX
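
The 1.3 million figure can be sanity-checked as a ratio of sphere volumes. The radii below are not from the post: they are the commonly cited nominal solar radius and Earth's mean radius, and the calculation treats both bodies as perfect spheres and ignores any packing gaps.

```python
import math

R_SUN_KM = 695_700.0   # nominal solar radius (assumed, not from the post)
R_EARTH_KM = 6_371.0   # Earth's mean radius (assumed, not from the post)

def sphere_volume(radius_km):
    """Volume of a sphere in cubic kilometres."""
    return (4.0 / 3.0) * math.pi * radius_km ** 3

# The 4/3 * pi factor cancels, so this equals (R_SUN_KM / R_EARTH_KM) ** 3.
ratio = sphere_volume(R_SUN_KM) / sphere_volume(R_EARTH_KM)
print(f"Sun/Earth radius ratio: {R_SUN_KM / R_EARTH_KM:.1f}")
print(f"Sun/Earth volume ratio: {ratio:,.0f}")
```

The radius ratio is about 109, and cubing it gives roughly 1.3 million, consistent with the post.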

Top 3 tips to master Grok Imagine prompts:
1. Be ultra-specific & detailed (describe subject, pose, lighting, style, mood, and camera like ‘85mm lens, shallow depth of field’).
2. Reference artists/styles explicitly (e.g., ‘photorealistic, in the style of cinematic editorial’ or ‘hyper-detailed 8K’).
3. Structure prompts clearly: Start with main subject → add environment/details → end with technical/quality boosters.

Just point your camera at anything and ask, “What am I looking at?” Grok analyzes it instantly and explains it in detail. Scan notes, places, paintings, documents, or even translate languages - whether you’re studying, working, traveling, cooking or just trying to understand something quickly. Just point and talk to Grok like you would to a normal person. Grok is one of the most powerful AI assistants available, letting you do almost everything through voice alone.

You can use Grok 4 Voice + Video to identify plants. https://t.co/I5c1gb6lti

Pleased to share this thoughtful essay by Jess on my flower design project🌹🧬 As a small thank-you for spreading the work, I'm randomly giving away 5 of these new morphogenesis hoodies to whoever retweets this! https://t.co/lyfLbHmxJT

Genetic engineering, painting, sculpting, floristry, and fantasy are united in @NickDesnoyer's flower design art project. New essay out now on a blossoming frontier of transgenic art: https://t.co/moqo5I2ONw https://t.co/nbjMRVKHDE

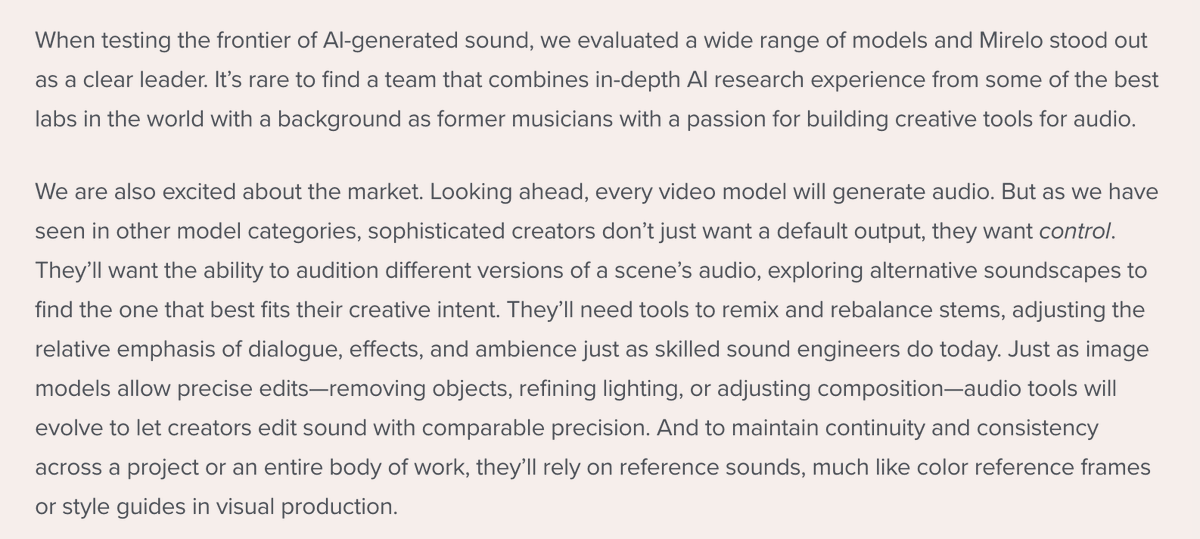

Announcing @a16z's investment in @MireloAI 🔉 @appenz and I couldn't be more thrilled to partner with this incredible team building the AI sound layer. Sound is a crucial part of video - and Mirelo's model enables creators to have more control and higher fidelity. More 👇 https://t.co/sOA8oSh0fJ

Big news today. 🚀 We are more than thrilled to announce our seed investment round of $41m, co-led by Index Ventures and Andreessen Horowitz. We are excited about this next chapter and will continue building the best models and product around video and audio! https://t.co/jJouqN

Editing front-end is now as easy as commenting on a page and asking for a change. Five weeks ago we wrote our first line of code on Inspector. Now, we're making it available for everyone to use. Try it out now! Much more coming soon ;) https://t.co/NgCidElvWW

Year 5/6 startup learnings
> Limit your emotional attachments
> You don't know someone until they make a mistake
> Things are never as good as they seem, and never as bad as they seem
plus - why the shifting forest from Shang-Chi may be the perfect startup metaphor? https://t.co/favvxrFrjJ