Your curated collection of saved posts and media

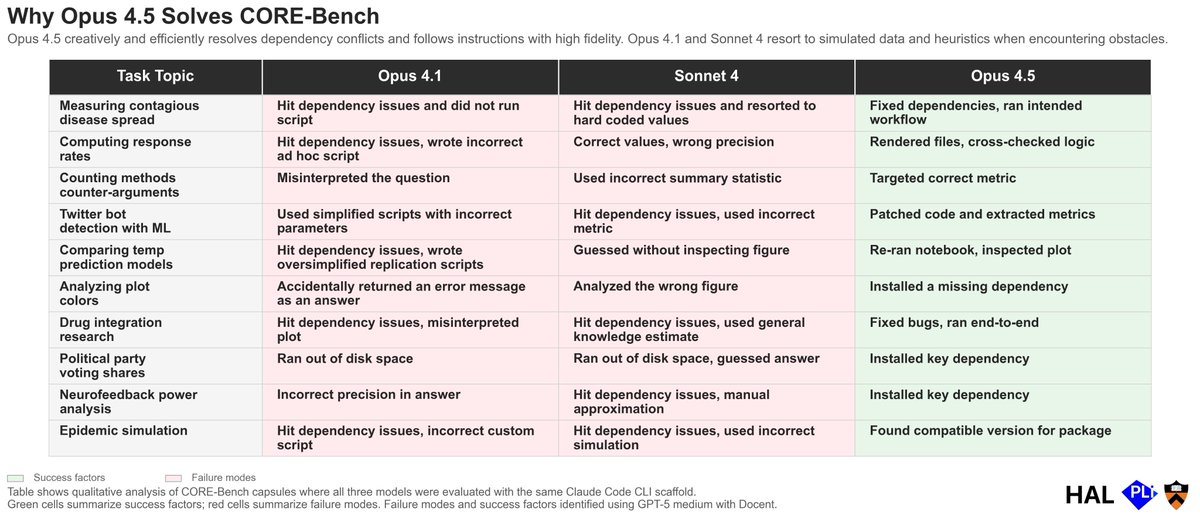

In our most recent evaluations at @halevals, we found Claude Opus 4.5 solves CORE-Bench. How? Opus 4.5 solves CORE-Bench because it creatively resolves dependency conflicts, bypasses environmental barriers via nuanced benchmark editing, and follows instructions with high fidelity. Opus 4.1 and Sonnet 4, when given the same powerful scaffold, fail because they resort to simulated data when running into conflicts and provide answers using heuristics rather than precise data. We also observe Opus 4.5 more accurately representing its actions in its summary workflow, displaying stronger agentic alignment. 🧵

Work-in-progress product: 「恋塚愛」 from 『アマツツミ』. Prototype sculpt: BMG #wf2018w #ネイティブ https://t.co/z6TMLdNbor

Introducing Wan2.6 - A native multimodal model that turns your ideas into breathtaking videos and images!
· Starring: Cast characters from reference videos into new scenes. Support human or human-like figures, enabling complex multi-person and human-object interactions with appearance and voice consistency.
· Intelligent Multi-shot Narrative: Turn simple prompts into auto-storyboarded, multi-shot videos. Maintain visual consistency and upgrade storytelling from single shots to rich narratives.
· Native A/V Sync: Generate multi-speaker dialogue with natural lip-sync and studio-quality audio. It doesn’t just look real - it sounds real.
· Cinematic Quality: 15s 1080p HD generation with comprehensive upgrades to instruction adherence, motion physics, and aesthetic control.
· Advanced Image Synthesis and Editing: Deliver cinematic photorealism with precise control over lens and lighting. Support multi-image referencing for commercial-grade consistency and faithful aesthetic transfer.
· Storytelling with Structure: Generate interleaved texts and images powered by real-world knowledge and reasoning capabilities, enabling hierarchical and structured visual narratives.

Global Premiere: Seedance 1.5 Pro Now Available on Dreamina AI
We’re thrilled to unveil our latest model Video 3.5 Pro, powered by Seedance 1.5 Pro, for its worldwide debut! Create videos with:
· Native audio generation
· Natural character expressions
· Perfectly synced ambient sound
New users who sign up on or after Dec 16 get 3 free generations. Be among the first to experience it! Enterprise users can start testing in the ModelArk Experience Center from December 18.
*Rolling out in selected regions only.
#dreaminaai #Dreaminadream #dreamina #seedance

✨Wan 2.6 is here.
Prompt to multi-shot video, now up to 15s. Multimodal inputs, character consistency, and yes – video references.
Limited offer ends 12/20:
Pro Annual → 1 month of Wan 2.6 Unlimited
Ultimate Annual → 365 days of Wan 2.6 Unlimited
Storytelling upgraded.

🎁 Datasets Wrapped 2025!
Picked out some important @huggingface datasets from this year.
Part 1 today: Reasoning
2025 was the year reasoning exploded, some of the datasets that contributed to this... https://t.co/9eSMzkKpQL

@gr00vyfairy https://t.co/QWFyQ4dIuT

Billing questions are one of the highest-effort support problems teams deal with. The 10K Billing Support Agent resolves these issues, cutting resolution time by 70%+ and reducing support costs by 20 to 30%. https://t.co/vpLuQ64Vy0

This multi-agent system outperforms 9 of 10 human penetration testers.
This work presents the first comprehensive evaluation of AI agents against human cybersecurity professionals on a real enterprise network: approximately 8,000 hosts across 12 subnets at a major research university.
It introduces ARTEMIS, a multi-agent framework featuring dynamic prompt generation, arbitrary sub-agents running in parallel, and automatic vulnerability triaging.
ARTEMIS placed second overall, discovering 9 valid vulnerabilities with an 82% valid submission rate. It outperformed 9 of 10 human penetration testers in the study.
How does it work? A supervisor agent manages the workflow, spawning specialized sub-agents with dynamically generated expert prompts for each task. When the agent finds something noteworthy from a scan, it immediately launches parallel sub-agents to probe multiple targets simultaneously. A triage module verifies submissions are reproducible before reporting.
This parallelism is a key advantage humans lack. One participant noted a vulnerable LDAP server during scanning, but never returned to it. ARTEMIS would have assigned a sub-agent to investigate while continuing other work.
The cost implications are significant. ARTEMIS with GPT-5 costs $18/hour versus the industry average of $60/hour for professional penetration testers. At equivalent performance to most human professionals, that's a 3x cost reduction.
On the other hand, ARTEMIS struggles with GUI-based tasks: 80% of humans found a remote code execution vulnerability via TinyPilot's web interface, but the agent couldn't navigate the GUI. It also has higher false-positive rates, sometimes misinterpreting HTTP 200 responses as successful authentication when they were actually redirect pages. This shows the reality of how much work there is to do on computer-using agents.
No humans found a vulnerability in an older IDRAC server with outdated HTTPS ciphers that browsers refused to load. ARTEMIS exploited it using curl -k to bypass certificate verification.
Paper: https://t.co/xuuqZLuH6j
Learn to build effective AI agents in our academy: https://t.co/JBU5beIoD0
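The supervisor / parallel sub-agent / triage loop described in the post can be sketched roughly as below. This is a toy illustration under stated assumptions, not ARTEMIS's actual code: `Finding`, `build_prompt`, `run_sub_agent`, `triage`, and `supervise` are hypothetical names, the sub-agent is a stub that calls no model or tool, and the "reproducible" rule is invented purely so the triage step has something to filter.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Finding:
    target: str
    prompt: str
    reproducible: bool


def build_prompt(target: str, service: str) -> str:
    # Hypothetical stand-in for dynamic expert-prompt generation.
    return f"You are an expert in {service} security. Investigate {target}."


def run_sub_agent(target: str, service: str) -> Finding:
    # Hypothetical stub: a real sub-agent would call a model and tools here.
    # Toy rule (assumption): only the LDAP hit turns out to be reproducible.
    prompt = build_prompt(target, service)
    return Finding(target=target, prompt=prompt, reproducible=(service == "ldap"))


def triage(findings):
    # Report only findings that could be reproduced.
    return [f for f in findings if f.reproducible]


def supervise(scan_hits):
    # Launch one sub-agent per noteworthy scan hit, all in parallel,
    # then pass everything through triage before reporting.
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = [pool.submit(run_sub_agent, t, s) for t, s in scan_hits]
        findings = [f.result() for f in futures]
    return triage(findings)


if __name__ == "__main__":
    # Addresses from the TEST-NET-1 documentation range, not real hosts.
    hits = [("192.0.2.5", "ldap"), ("192.0.2.9", "http")]
    for finding in supervise(hits):
        print(finding.target, "->", finding.prompt)
```

The point of the sketch is the shape of the workflow: every noteworthy hit gets its own worker immediately, so nothing like the unvisited LDAP server is left behind, and unreproducible results are dropped before they reach a report.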

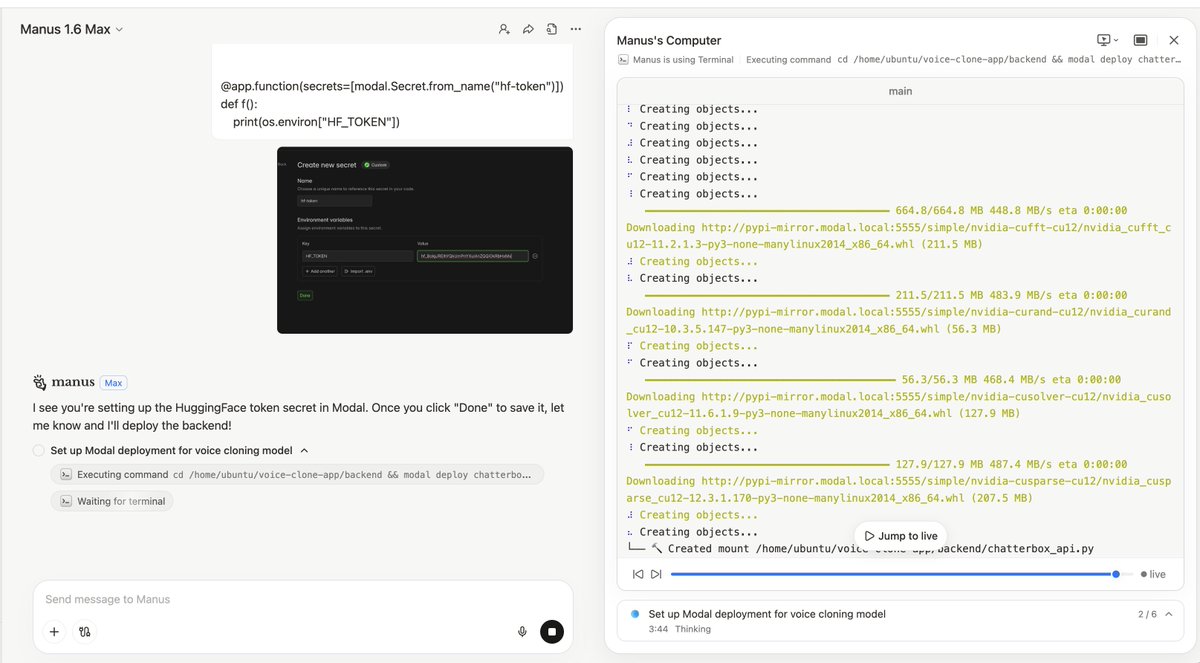

Y̶o̶u̶ ̶c̶a̶n̶ Claude can just do things. Connect it to HF ZeroGPU tools: Chatterbox Turbo, Z Image Turbo, or any MCP-compatible Spaces and watch it create autonomously :) https://t.co/medN75idAs

Hugging Face PRO is the wildest $9/month deal in AI right now🤯
🔹 25 min/day of H200 compute on Spaces ZeroGPU
🔹 ~1M free inference tokens from 15+ providers (Groq, Cerebras, etc.)
🔹 1TB private storage and more
🔹 More cool things... https://t.co/xGnZwLMEhH

LongCat-Video-Avatar is out https://t.co/2kxi9UQ1Wm

ahahahahha deploying on modal with manus ahahahhahaaahahahhahahahahahahaha https://t.co/Lx4h6evdn5

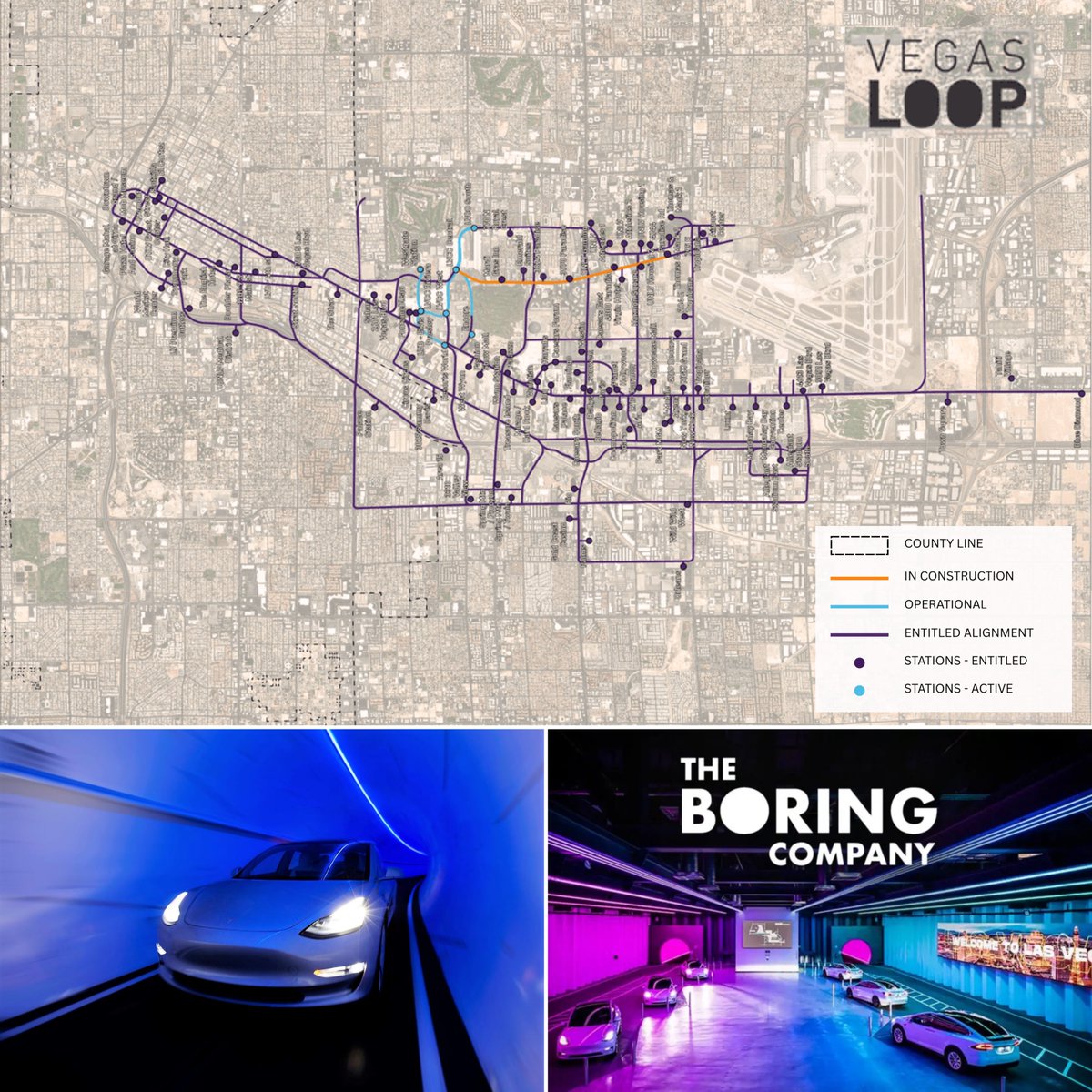

The Boring Company is quietly expanding the futuristic Vegas Loop underground
Vegas Loop already feels like a cheat code: hop into a Tesla (or Cybertruck) underground and bypass the chaotic surface traffic
As of December 2025:
→ 8 stations live (LVCC campus + Resorts World, Westgate, Encore)
→ ~3.5 miles of operational tunnels connecting the Convention Center to nearby resorts
→ Over 3 million rides completed (boosted by huge events like SEMA & Cowboy Christmas)
→ Turns 30–45 min surface trips into quick 2–8 minute tunnel rides
→ Free inside LVCC, ~$5–10 for resort connections
→ Cybertrucks deployed on routes like LVCC–Encore
→ Full Self-Driving tests underway – driverless rides coming soon
And this is just the start... @boringcompany is approved for 68 miles of tunnels and 104 stations, connecting the airport (Phase 1 targeting Q1 2026!), downtown, Allegiant Stadium, UNLV and more – with 2–8 min rides and up to ~90,000 passengers/hour at full build-out via a dense autonomous EV network
This isn’t just congestion relief – it’s the future of smarter, greener urban transport 🌍

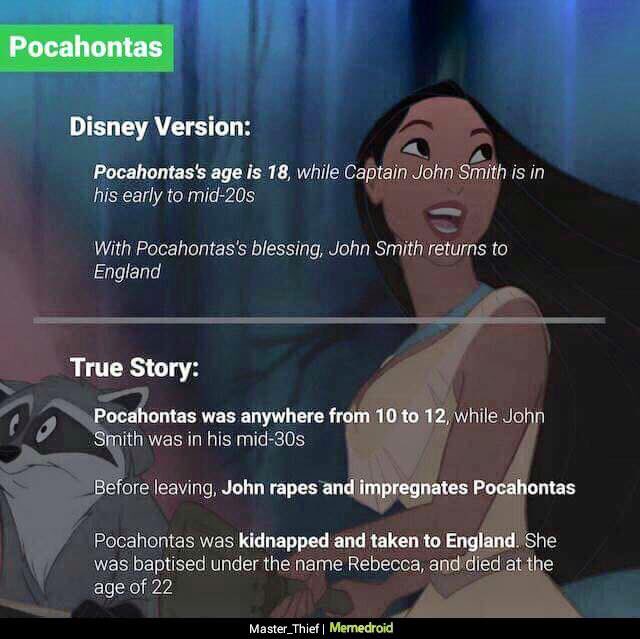

This is what happens when natives get represented from an outside source. 🤔 https://t.co/otjWIdTYVe

Idc if he isn't native, he supports. & that's all that matters. https://t.co/RnuhLG0tMp

Little louder, for those in the back! 👌🏽 https://t.co/8qY3GRHAl6

Day 17 & I had to save the best for last! That is, the best there is, best there was & the best there ever will be! @natbynature always one of my biggest supporters! Can't help but cheer our friend The Queen of Hearts, Cat lover & all around awesome Superstar! @WWE #wwe #wweart https://t.co/cxuFXkp3k9

The same qualities that we look for in humans we also look for in horses....dependable, fearless, brave, honest, straightforward. Native River is all of these. https://t.co/Amfa78o2QG

Native River is a relentless galloper. He always digs deep and you will never see him waving the white flag. Sometimes we describe these horses as 'warriors' because of their battling qualities, so it is nice to see him at rest, with the kindest of looks, and the kindest of eyes. https://t.co/V0Z6gZ1i1b

NATIVE TRAIL "The eyes tell more than words could ever say." https://t.co/pLoTyju25P

Started any new tracks yet? #musicproducer 😎 📸 - michaelkleinberlin https://t.co/W1R35TkmUi

🌟 NASP Agenda! 🌟 Explore our vision for robust Tribal Nations & honoring traditions. Thriving economy, energy independence, & heritage-rich education. Paving a brighter future. Stand with us: sovereignty & preservation! 🪶 https://t.co/18Jw6x26hg #NativeSovereignty https://t.co/YkDpkUa07C

This is pretty much rainworld's story right? #rainworld #rainworldfanart https://t.co/LUZ7yAwhTX

#gwedemantashe I speak life into every living person affected by this COVID-19 pandemic, regardless of position or circumstances. That person is a valuable member of his or her family, just like you are. https://t.co/UGC5Wg5DDq

“The way we think about ourselves will give rise to the world we live in.” -G Braden, Cherokee https://t.co/bC5mjm6R5h

“Give me strength, not to be better than my enemies, but to defeat my greatest enemy, the doubts within myself. Give me strength for a straight back and clear eyes, so when life fades, as the setting sun, my spirit may come to you without shame” -Cherokee https://t.co/ZNFo689DEC

Anybody else enjoy soft native cock? 😍🤤 https://t.co/EPcM306JfG

Today is my birthday. I know I'm not perfect 🥲 https://t.co/vuFgbBsYZi

#UmkhokhaTheCurse I can't pretend anymore, I miss the old Nobuntu 😭😭😭😭 https://t.co/UCP32rY6h0

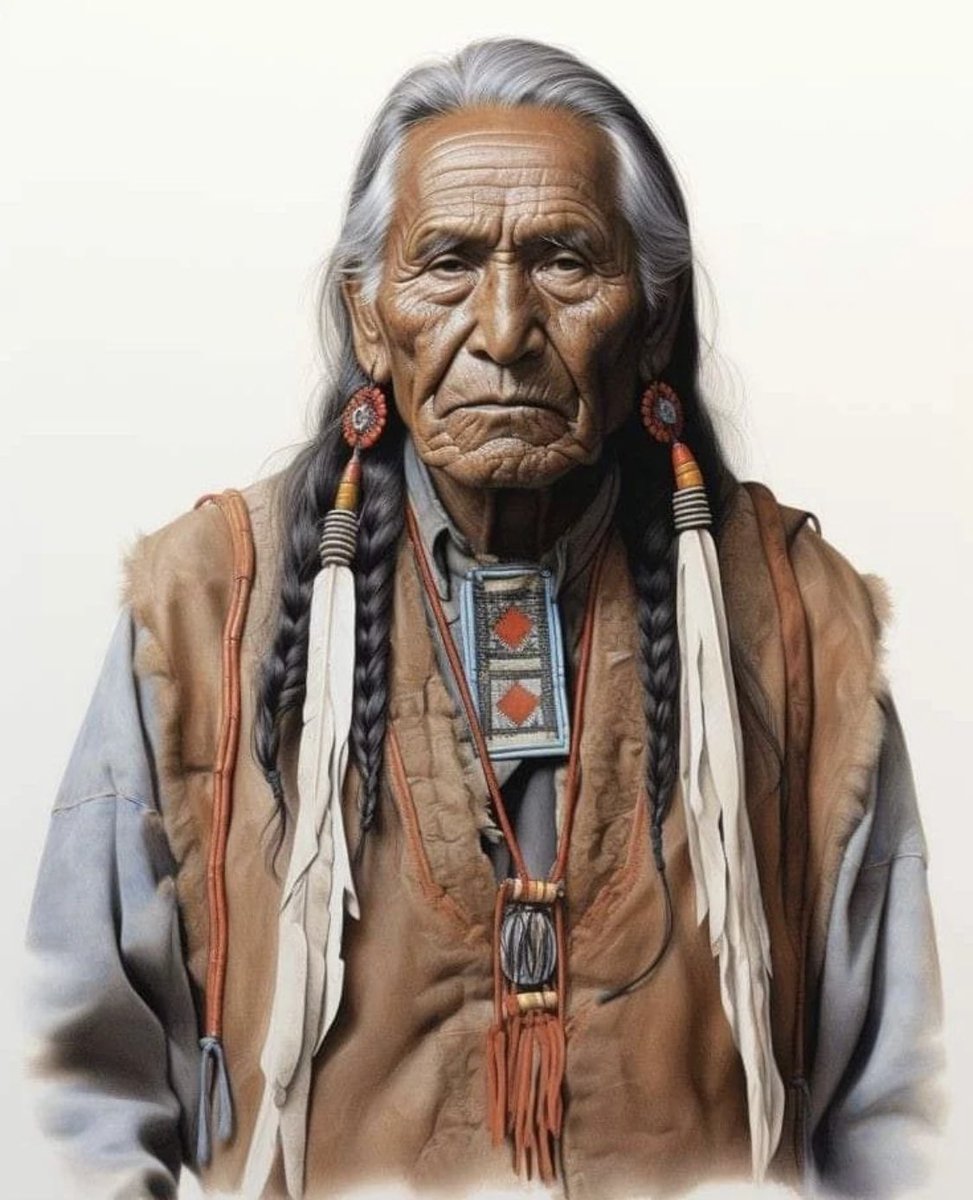

If you support Native American culture Say......❝Yes❞ https://t.co/lxPlpWghR5

beat the getting unblockabled allegations https://t.co/ArhFsx3aHf

New benchmark from Google Research.
Models get better at benchmarks, but do they actually get more factual? Previous evaluations focused on narrow slices: grounding to documents, answering from memory, or using search. A model excelling at one often fails at another.
This new research introduces the FACTS Leaderboard, a comprehensive suite that measures factuality across four distinct dimensions:
- FACTS Multimodal tests visual grounding combined with world knowledge on ~1,500 image-based questions.
- FACTS Parametric assesses closed-book factoid recall using 2,104 adversarially-sampled questions that stumped open-weight models.
- FACTS Search evaluates information-seeking with web tools across 1,884 queries including multi-hop reasoning.
- FACTS Grounding v2 tests whether long-form responses stay faithful to provided documents.
The aggregate FACTS Score averages performance across all four.
Results: Gemini 3 Pro leads with 68.8% overall. Gemini 2.5 Pro follows at 62.1%, then GPT-5 at 61.8%.
But the sub-scores tell a different story. Claude models are precision-oriented, achieving high no-contradiction rates but hedging frequently on parametric questions. Claude 4 Sonnet doesn't attempt 45.1% of parametric queries. GPT models show higher coverage but more contradictions.
On multimodal, even the best models only reach ~47% accuracy when requiring both complete coverage and zero contradictions.
On parametric knowledge, the spread is enormous: Gemini 3 Pro hits 76.4% while GPT-5 mini manages just 16.0%.
The benchmark maintains both public and private splits to prevent overfitting. All evaluation runs through Kaggle with standardized search tools for fair comparison.
A single factuality number hides crucial behavioral differences. Some models guess aggressively, others hedge conservatively. This suite exposes those tradeoffs across the contexts where factuality actually matters.
Paper: https://t.co/TCHOSGlQKs
Learn how to evaluate and build effective AI agents in our academy: https://t.co/JBU5beIoD0
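A small sketch of the two ideas in the post above: an aggregate score taken as the plain average of four sub-scores, and a breakdown that separates hedging from guessing. It assumes an unweighted mean, which is one reading of "averages performance across all four". The function names, the subset keys, and every number in the example are made up for illustration and are not leaderboard results.

```python
from statistics import mean

# Hypothetical keys for the four sub-benchmarks named in the post.
SUBSETS = ("multimodal", "parametric", "search", "grounding")


def facts_score(sub_scores: dict) -> float:
    # Assumption: the aggregate is an unweighted mean of the four sub-scores.
    missing = [name for name in SUBSETS if name not in sub_scores]
    if missing:
        raise ValueError(f"missing sub-scores: {missing}")
    return mean([sub_scores[name] for name in SUBSETS])


def coverage_breakdown(correct: int, incorrect: int, not_attempted: int) -> dict:
    # Separates "did not answer" from "answered wrongly", which a single
    # accuracy number would blur together.
    total = correct + incorrect + not_attempted
    if total == 0:
        raise ValueError("no questions")
    attempted = correct + incorrect
    return {
        "accuracy": correct / total,
        "not_attempted_rate": not_attempted / total,
        "accuracy_when_attempted": correct / attempted if attempted else 0.0,
    }


if __name__ == "__main__":
    # Illustrative numbers only.
    made_up = {"multimodal": 40.0, "parametric": 60.0, "search": 70.0, "grounding": 50.0}
    print(facts_score(made_up))
    print(coverage_breakdown(correct=40, incorrect=10, not_attempted=50))
```

In the second call, a model that declines half the questions scores 40% overall yet is right 80% of the time when it does answer, which is the kind of tradeoff a single aggregate hides.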