Your curated collection of saved posts and media

@EdwadSouth @huggingface Yes! :-) https://t.co/8Lc526sOmg

@baltostar I would rather live in my world than yours. https://t.co/Gh3ADQqnIS

Excited to welcome @Apple to @huggingface Enterprise! 😍 Just yesterday Apple released Sharp - an amazing new model to turn images into 3D splats. Wonder how your iPhone magically turns your picture into moving images when you tilt it? With Sharp you can create this type of effect and more. With HF Enterprise, Apple will be able to publish technical articles on the HF blog - I for one would love to learn more about Sharp and the 150+ models, datasets and applications shared on HF!

Learnt today that Chorus by @charlieholtz is open sourced! https://t.co/TsgCqvGKps

🚀🚀🚀Introducing HY World 1.5 (WorldPlay)! We have now open-sourced the most systemized, comprehensive real-time world model framework in the industry. In HY World 1.5, we develop WorldPlay, a streaming video diffusion model that enables real-time, interactive world modeling with long-term geometric consistency, resolving the trade-off between speed and memory that limits current methods. You can generate and explore 3D worlds simply by inputting text or images. Walk, look around, and interact like you're playing a game. Highlights: 🔹Real-Time: Generates long-horizon streaming video at 24 FPS with superior consistency. 🔹Geometric Consistency: Achieved using a Reconstituted Context Memory mechanism to dynamically rebuild context from past frames to alleviate memory attenuation 🔹Robust Control: Uses a Dual Action Representation for robust response to user keyboard and mouse inputs. 🔹Versatile Applications: Supports both first-person and third-person perspectives, enabling applications like promptable events and infinite world extension. 👉🏻Try it now: https://t.co/0awf2D5LaT 🌐Project Page: https://t.co/bbFzdTXUdU 🔗Github: https://t.co/lSsVLXxoAd 🤗Hugging Face: https://t.co/qbCBzvfNRT 📄Technical Report: https://t.co/diLCcxWwQw

First Look: The Beast's Spatial Anchor, Smooth Follow, Ultra-wide, & Multi-Window on iPhone (No Adapter Required) The first batch of The Beast is on track to ship by the end of this month! We’re excited to share a first-look video for an early glimpse of what’s coming. 😎 Please note that the in-glasses visuals may appear softer in the footage due to camera capture — in use, the experience has already become a true mobile powerhouse for our team.

Today I’m sharing Tiny🔥Torch—an educational framework for ML systems, built from scratch. You don’t just train models, you build tensors, autograd, optimizers, and data loaders, and see how design choices affect memory, performance, and efficiency. If you use @PyTorch or @TensorFlow, this helps learners see what’s really happening under the hood. Too many students learn how to use ML frameworks, but never how to build one. Tiny🔥Torch is about closing that gap. Early, open, and still evolving, looking for fellow educators and learners. Ideas and help welcome 🙏 https://t.co/rEO37tbmHO

@blevlabs The new @xAI terms of service: https://t.co/VkOKkAyq7U

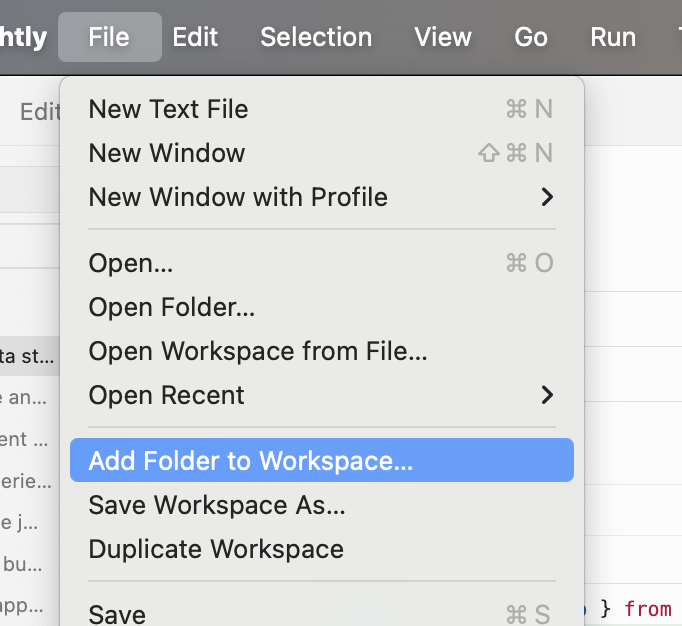

@RhysSullivan have you tried multi workspace roots? it's like a virtual monorepo https://t.co/WPaUUoKamz

Odessa A'zion at the "MARTY SUPREME" premiere in NYC. https://t.co/uVuLXV3yy5

USA!! USA!! 🇺🇸 https://t.co/xaauDHwCIz

drove two hours in a foreign country just to see these fruit bus stops in nagasaki https://t.co/aHpgxvVDqT

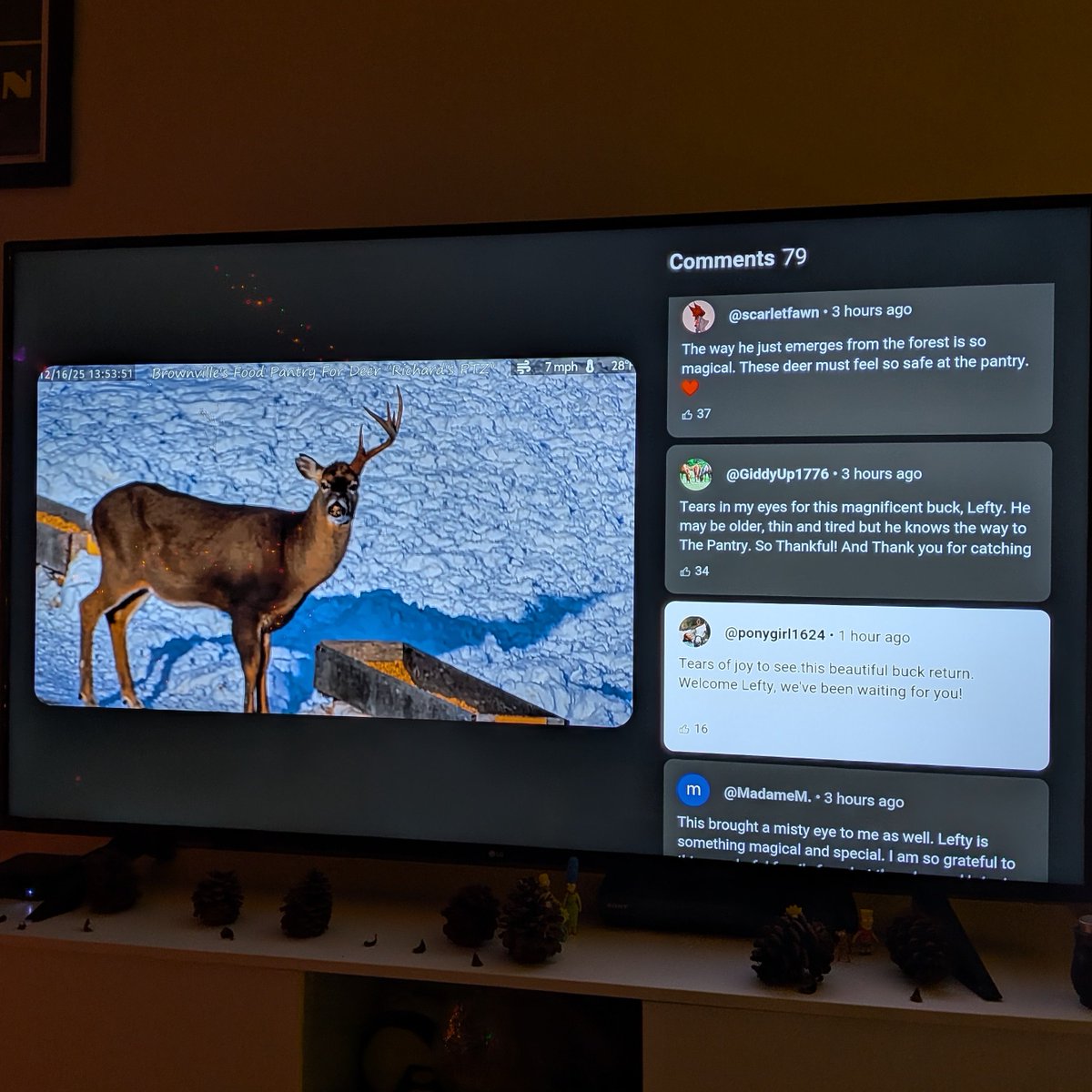

A fan favorite deer is back and the comments are beautiful https://t.co/wVNHiOxcRo

So much is horrible all of the time but I want to remind everyone that the Brownville's Food Pantry for Deer, a seasonal 24/7 livestream of deer eating oats and apples, begins feeding for the winter tomorrow morning https://t.co/WocwW3T9Ir

In partnership with Airtel Africa, Starlink Direct to Cell will connect more than 170 million people in Africa across 14 countries, powering life-saving connectivity when it’s needed most. This marks the sixth continent for the satellite-to-mobile service and expands our mission to end mobile dead zones. The service will begin by delivering data that enables voice, video and messaging, and will advance to providing high-speed broadband service to smartphones with 20x improved data speeds → https://t.co/V1Ehnv3Ybm

📁 Yann LeCun says language models do extract meaning, but only at a superficial level. Unlike humans, their intelligence is not grounded in physical reality or common sense. They answer many questions well, but break down when faced with new situations because they do not truly understand the world they describe.

Some more thoughts about Yann interview: Even if LLMs work great, that's missing the point. Everyone's doing the same thing now. More scale, more data, longer CoT, tweak RL. But the path to get there was completely stochastic. Attention, transformers, scaling laws, RLHF, none of it was obvious, it came from people trying very different things. We wouldn't be here if everyone had agreed early on which direction to go. Assuming the next leap comes from all of us optimizing the same recipe is dangerous. I agree that the situation today is indeed way more complicated. Back then you could test a wild idea on a few GPUs. Now every serious bet costs millions in compute. That makes it harder to explore, which makes the convergence problem even worse. But that's exactly why we need to be more intentional about funding diverse approaches, not less.

Yann LeCun says there is no such thing as general intelligence. Human intelligence is super-specialized for the physical world, and our feeling of generality is an illusion. We only seem general because we can't imagine the problems we're blind to. "The concept is complete BS."

Here's a simple rule I use: When someone tells you AGI is coming, ask yourself what they're selling. VCs need the hype for valuations. Researchers need it for funding. Doomers sell fear. Skeptics sell their brand. Even I'm selling you my podcast 😅 There's no single moment where we'll suddenly have it. We'll reach superhuman level on some tasks, human level on others, and stay behind on many. Some tasks have already happened, while others will take forever. The thing is that for 99% of problems, I don't care about "general intelligence." I just want something that solves my problem. Sometimes it's way better than humans. Sometimes about the same. Sometimes worse but still useful. None of this is "AGI." "AGI" is not a scientific concept. It's a story. And usually someone is selling it to you.

📁 Yann LeCun, Chief AI Scientist at Meta, says many people misunderstand what a world model really is. He explains that it is not about reproducing the world in full detail like a visual simulator. He warns that generating video may look impressive, but it does not guarantee the system has learned the underlying dynamics of reality.

Yann LeCun argues there is no such thing as “general intelligence”, human or artificial. What we call general intelligence is just our ability to solve problems we’re wired for or can imagine. Humans are great at navigating social life and the real world but terrible at tasks like chess without training. Many animals outperform us in domains we’re blind to. So instead of aiming for "general AI," we should focus on machines mastering human-relevant domains.

📁 Yann LeCun says that AGI does not exist and is a poorly defined concept built around an idealized view of human intelligence. He argues that humans are highly specialized, good at navigating the physical and social world, but weak at many other tasks. We seem general only because we can imagine the problems we are good at. For LeCun, AGI mistakes human limits for universal laws of intelligence.

🎯 Demo: counting objects & actions Show Molmo 2 a clip and ask “How many times does the ball hit the ground?” It returns a count plus space–time pointers (pixel coordinates + timestamps) for each event, so you can verify every answer. https://t.co/HghRd7BbBC

Via DM submission https://t.co/YRhOHOkyDa

ChatGPT just announced monetization in Chat apps. This is MASSIVE. You can hack distribution and make money from 800M weekly users. Here are 12 ChatGPT monetization frameworks so you can print $$ 1. Buyer Agent Post-purchase apps that keep customers hooked, getting the most out of the product, reorder reminders, cancel preventers, limit refunds/returns. 2. Data-for-Dollars Lead magnet quiz apps you license to brands - they capture zero-party data, you external-checkout the solution. 3. Influencer Middleware "Shop my [thing]" apps for creators - fans chat their vibe, get personalized affiliate recs with creator commission baked in. RIP link in bio. Chat in bio is here. 4. App Agency Build ChatGPT apps for ecommerce brands to grow revenue, ROAS, retention marketing and high-converting personalized content. 5. Discovery Tax "Free" research tools that collect pain points. Startup validator that external-checkouts aggregated market reports you compiled. 6. Expert Matcher Skill matching apps that external-checkout specialist bookings, designers, developers, coaches from your vetted marketplace. 7. Gatekeepers-as-a-Service Assessment tools that score users then external-checkout the certification/bootcamp that "fixes" their gaps. 8. Co-Founder Connector Niche matchmaking apps, free to browse, paid to unlock intros via external-checkout (accountability partners, masterminds). 9. ROI Theater B2B calculators that diagnose problems then external-checkout demo calls, commission on closed deals. 10. Demo Liaison Interactive product tours via chat that external-checkout expedited onboarding calls or premium setup. 11. Symptom-to-Consult Diagnostic apps that analyze situations then external-checkout 1-on-1 expert sessions (financial, legal, career). 12. Waitlist Agent Early access apps that take $1 deposits via instant-checkout - validate demand before building, capture waitlist. This is a game changer. It's still early. Instant checkout is coming soon.
Use one of these frameworks and go build your business. The future is bright. See ya out there.

wrist cam changes everything https://t.co/I2G3l3LW0w

We discovered an emergent property of VLAs like π0/π0.5/π0.6: as we scale up pre-training, the model learns to align human videos and robot data! This gives us a simple way to leverage human videos. Once π0.5 knows how to control robots, it can naturally learn from human video.

📢 New issue brief: Have Chinese AI models pulled ahead of their global counterparts? Our latest brief analyzes China’s diverse open-weight model ecosystem and examines the policy implications of their widespread global diffusion. https://t.co/zkdBKf7N5F https://t.co/MNugnSzaFb

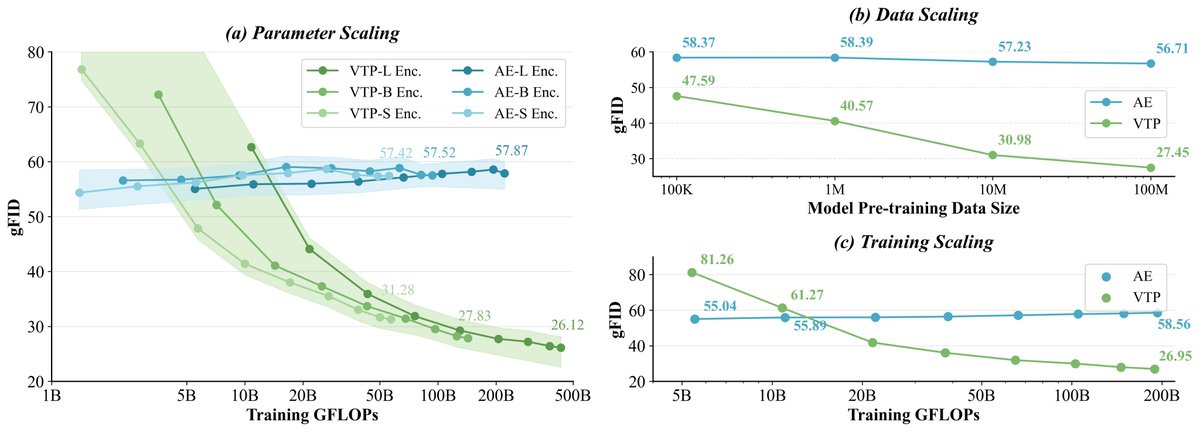

MiniMax (Hailuo) Video Team Has Open Sourced VTP (Visual Tokenizer Pre-training)! VTP is a scalable pre-training framework for visual tokenizers, built for next-gen generative models. It challenges the conventional belief in Latent Diffusion Models that scaling the stage-1 visual tokenizer (model size, compute, data) doesn’t improve stage-2 generation. By combining representation learning + compression–reconstruction, we show the first scaling curve connecting visual tokenizers with Diffusion Transformers! 🧐And the best part: no extra compute for the generator, but better generations simply by scaling up tokenizer training for better representation space! 🎄🎅Merry pre-Christmas to the community!

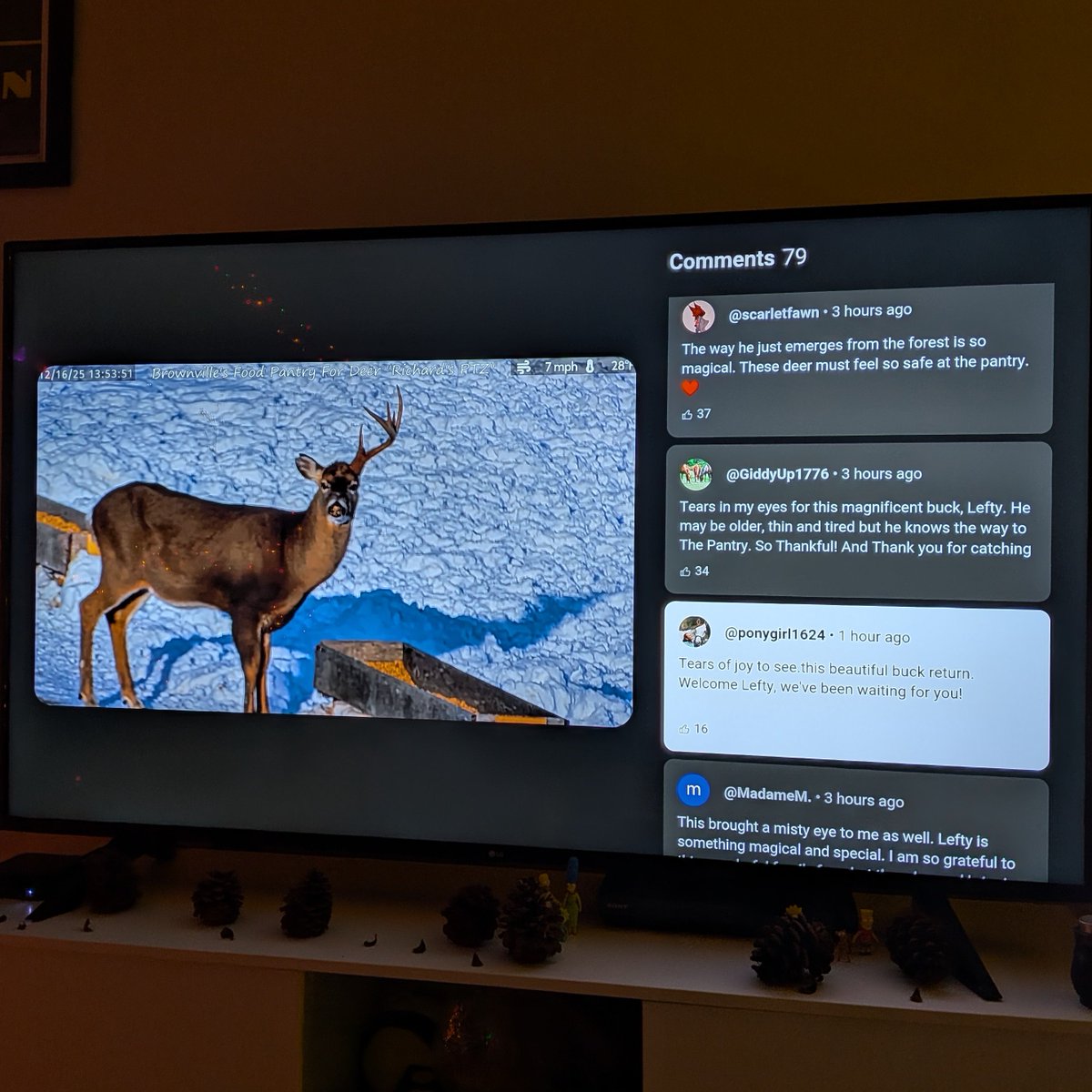

it’s nice that there are still people out there who remember what the internet is really for https://t.co/q4wcfy63yK
