@MiniMax__AI

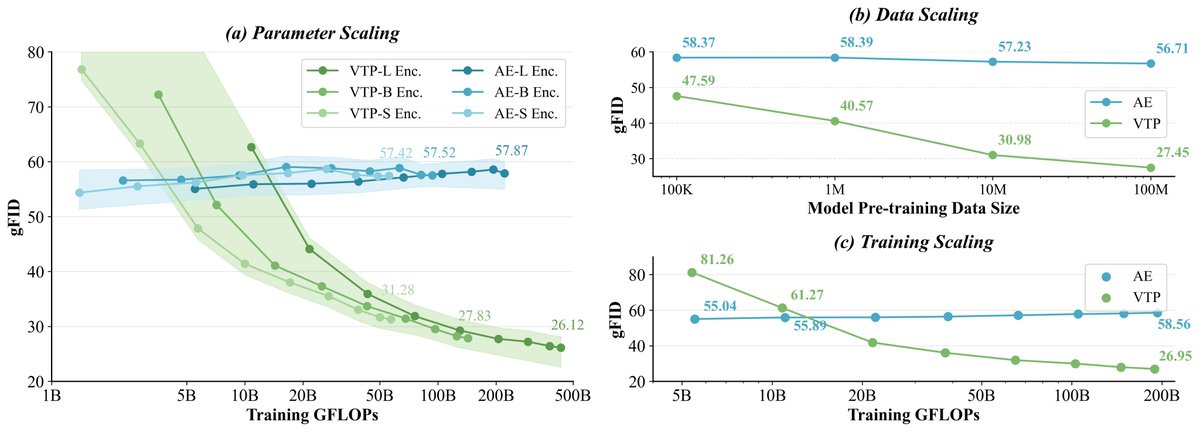

The MiniMax (Hailuo) Video Team has open-sourced VTP (Visual Tokenizer Pre-training)! VTP is a scalable pre-training framework for visual tokenizers, built for next-gen generative models. It challenges the conventional belief in Latent Diffusion Models that scaling the stage-1 visual tokenizer (model size, compute, data) doesn’t improve stage-2 generation. By combining representation learning with compression–reconstruction, we show the first scaling curve connecting visual tokenizers with Diffusion Transformers! 🧐 And the best part: no extra compute for the generator, just better generations from scaling up tokenizer training to learn a better representation space! 🎄🎅 Merry pre-Christmas to the community!