Your curated collection of saved posts and media

Fast and Accurate Causal Parallel Decoding using Jacobi Forcing https://t.co/MDLUIfsxUn

Wow there are now 600,000 public datasets on @huggingface ! They were 600 exactly five years ago 😳 (@mervenoyann @abhi1thakur can testify) So, to summarize: - 2020>2025 : x1000 - 2025>2030: x1000 too ??? 😂 The open source community is crazy ! https://t.co/UHe4nfrlbs

It arrived 🤗 https://t.co/glGN3Pwaov

🐸 x 🤗 Let's go! $10 free @huggingface Inference Provider credits to try open models with Toad! https://t.co/ISkm0YfbcH

Alrighty. The Toad is out of the bag. 👜🐸 Install toad to work with a variety of #AI coding agents with one beautiful terminal interface. Check out the blog post for more information... https://t.co/KpQu5cYZzR I've been told I'm very authentic on camera. You just can't fake th

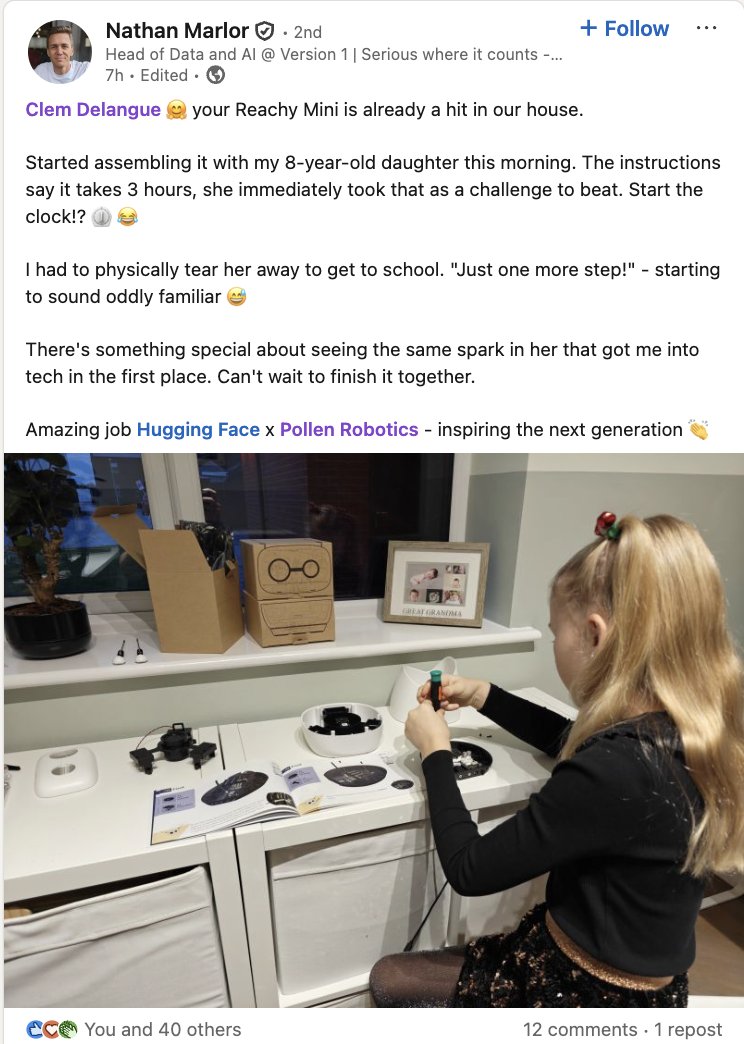

Let's create a new generation of AI builders, not just AI users! https://t.co/tedfrUHUUt

Is software engineering still in demand? With Sajjaad Khader 🚀 https://t.co/aaW1pLJwI7

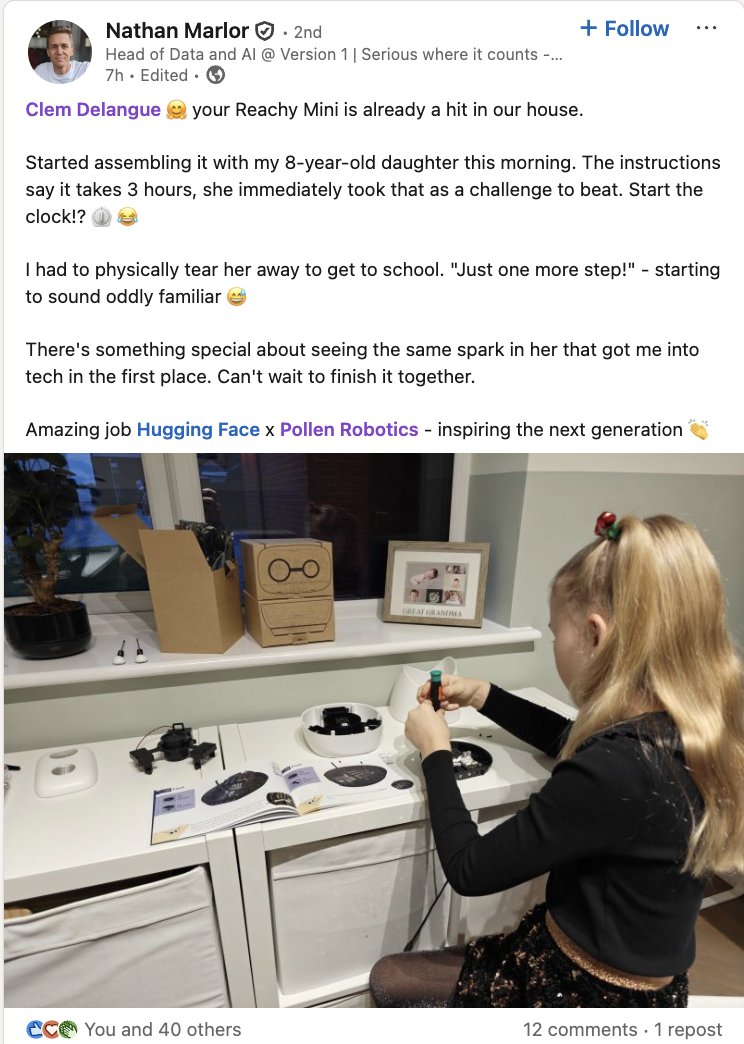

I think everyone, even the most cynical & informed among us, is going to fall for at least one AI-faked story, photo, or post this coming year & likely many more. (You will also likely believe a real thing was AI) This has bad implications (but wouldn’t blame those taken in) https://t.co/Y5YDLWuaTN

It would be ironic if my post was in response to fake AI content (they are likely deleted but no way to know) https://t.co/DWBBH2rVNS

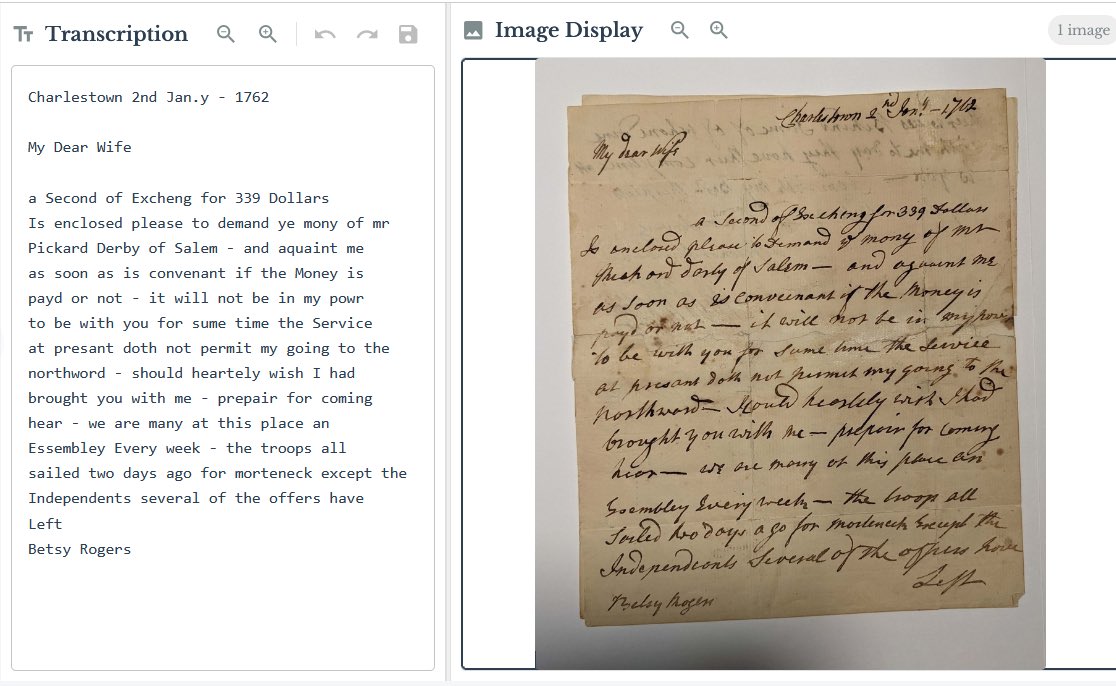

Gemini 3 flash is as good at reading handwriting as the average human (pro is expert human level). It is much better than both GPT-5.2 and Opus 4.5 with character level error rates of 1.43% and word level error rates of 2.74%. This is a 47-63% improvement over 2.5 Flash, the same leap we saw with pro. At a fraction of a cent per page, this is a big deal. Read more about the Gemini models on handwriting: https://t.co/6HYnyWu2A9
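The post does not say how the character-level and word-level error rates were computed. A minimal sketch, assuming the conventional definition (edit distance between the reference transcription and the model output, divided by the reference length); the function names here are illustrative and not taken from the linked article:

```python
from typing import Sequence


def edit_distance(ref: Sequence, hyp: Sequence) -> int:
    """Levenshtein distance between two sequences (characters or words)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def char_error_rate(ref: str, hyp: str) -> float:
    """Character-level errors as a fraction of reference characters."""
    return edit_distance(ref, hyp) / len(ref)


def word_error_rate(ref: str, hyp: str) -> float:
    """Word-level errors as a fraction of reference words."""
    ref_words = ref.split()
    return edit_distance(ref_words, hyp.split()) / len(ref_words)
```

Under this definition a single wrong character costs one character error but a whole word error, which is why word-level rates usually come out higher than character-level rates.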

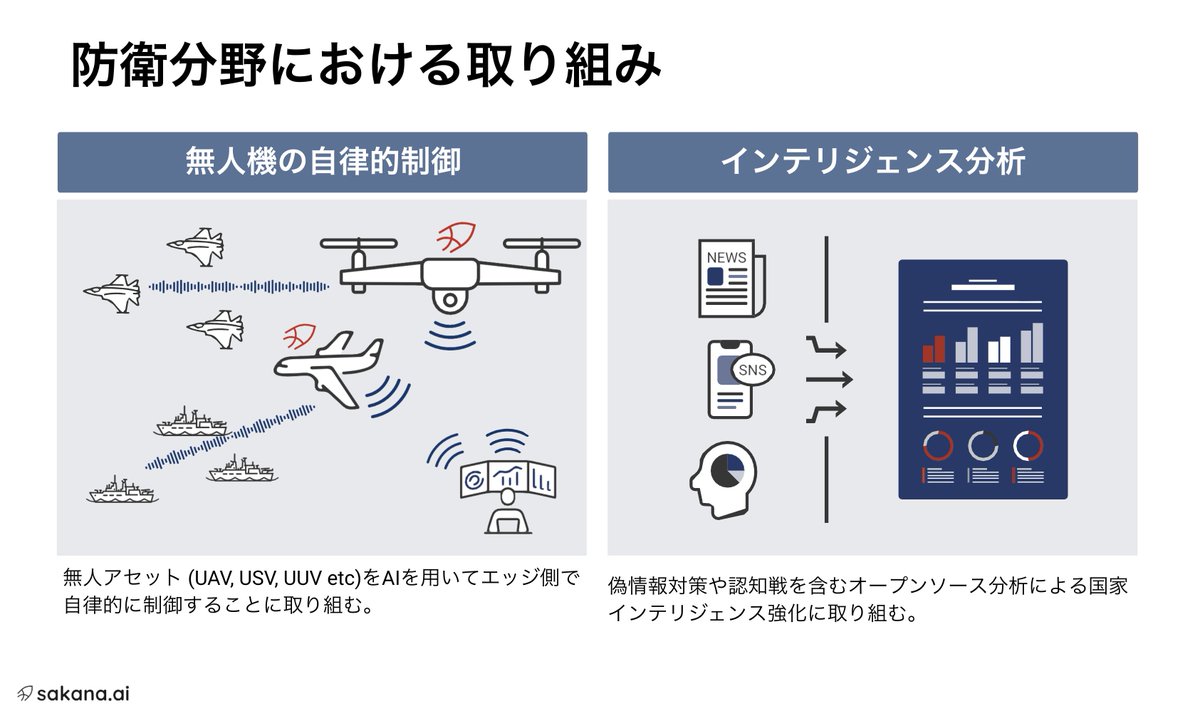

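[Hiring engineers for defense and intelligence] Following our recent Series B funding round, Sakana AI is accelerating its hiring of engineers in the "defense" and "intelligence" fields. ▼ Examples of our work in the "defense" and "intelligence" fields (Nikkei xTECH) https://t.co/YpI6iakFgn We are sincerely looking for colleagues to pioneer this uncharted territory with us. If our vision or business is of any interest to you, please take a look at our careers page. • Applied Research Engineer https://t.co/KCa6GgvA3z • Software Engineer https://t.co/Kl2IXgRo7h ▼ Introducing the Applied team https://t.co/2rdYAUlVer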

Lovable just raised $330M at a $6.6B valuation. It's been an iconic, insane, wonderful journey so far. Thank you to everyone who made this possible: (thread) https://t.co/EJLOSzpAw3

Announcing Lovable raised $330M at a $6.6B valuation. We launched Lovable to empower the 99%, the people with ideas who don’t code. Now everyone is a builder: founders, teachers, artists, and teams inside the world's largest companies. Meet some of those incredible builders: https://t.co/WJtil2YIE2

Look at this guy. The guy is afraid to ask for what he wants https://t.co/lZ5RdQhCQP

@viemccoy custom instructions ? i would never lobotomize a model like that

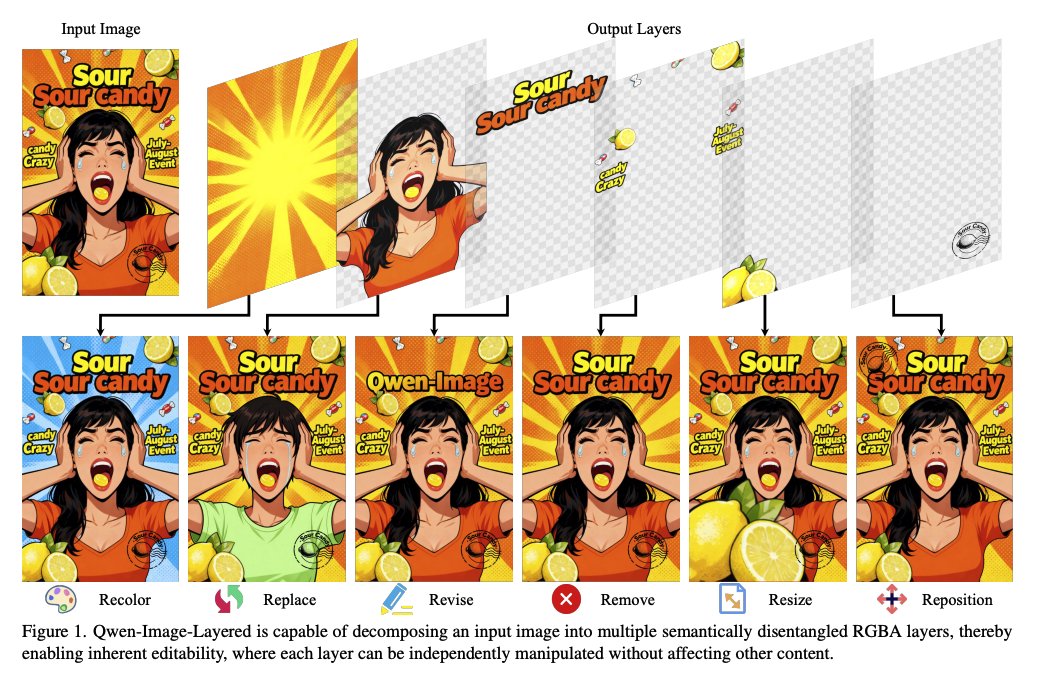

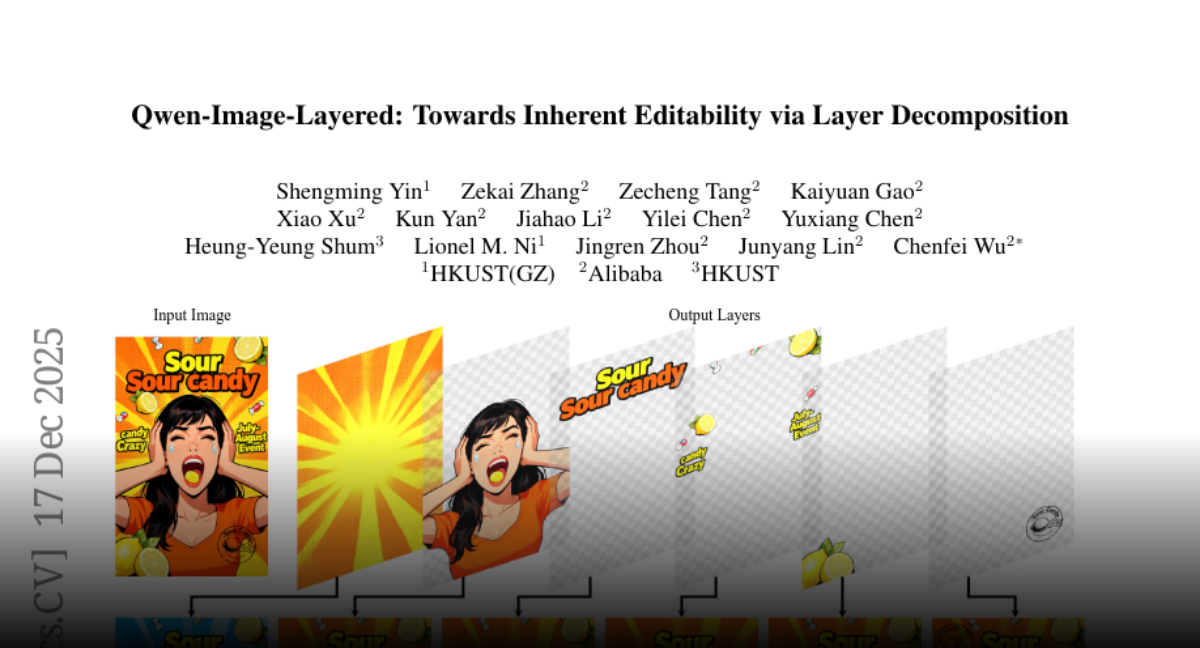

Qwen-Image-Layered Towards Inherent Editability via Layer Decomposition https://t.co/s5sWJsMjpX

discuss: https://t.co/M8oBAuiwgR

Here, a nation where we 🏙️overthrow capitalist society 🏙️live with sincerity, trust & consensus More from Mr H👉https://t.co/3T3qqseYre #hypernation #defi #dao #blockchain #defiproject #daoverse #blockchain #crypto #btc

TurboDiffusion: 100–205× faster video generation on a single RTX 5090 🚀 Only takes 1.8s to generate a high-quality 5-second video. The key to both high speed and high quality? 😍SageAttention + Sparse-Linear Attention (SLA) + rCM Github: https://t.co/vT3nfax8H9 Technical Report: https://t.co/LEgLyhdPXh

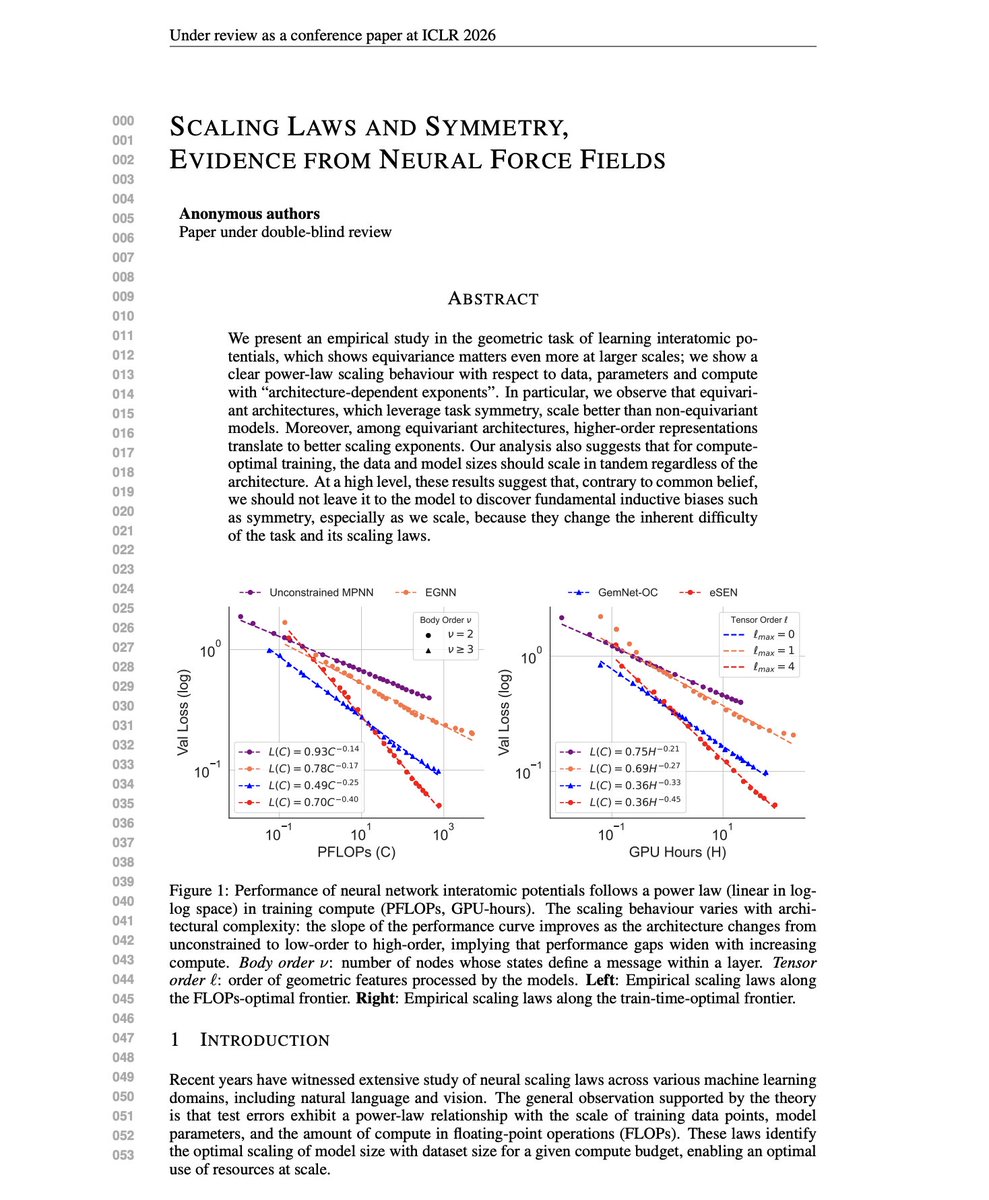

Scaling Laws and Symmetry The common belief is that scaling outperforms inductive biases. Give the model enough data and compute, and it will learn the structure on its own. But this new research finds the opposite. Researchers conducted comprehensive scaling experiments on neural network interatomic potentials, comparing architectures that encode rotational and permutation symmetry to varying degrees. The main finding is that equivariant architectures don't just have lower loss at any given scale. They have better scaling exponents. The performance gap grows as you add more compute. For geometric tasks with known symmetries, the scaling laws favor architectures that encode those symmetries. "Contrary to common belief, we should not leave it to the model to discover fundamental inductive biases such as symmetry, especially as we scale, because they change the inherent difficulty of the task and its scaling laws." Paper: https://t.co/HR5cNwFyaL Learn to build effective AI Agents in our academy: https://t.co/zQXQt0PMbG
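The difference between "lower loss" and "a better scaling exponent" can be made concrete with a generic power-law form. This is an illustrative sketch only: the symbols $a$, $\alpha$, and $C$ are assumptions and are not taken from the paper.

```latex
% Assumed power-law scaling of loss L with compute C for two architectures:
% "eq" (equivariant) and "non" (non-equivariant).
\[
  L_{\mathrm{eq}}(C) = a_{\mathrm{eq}}\, C^{-\alpha_{\mathrm{eq}}},
  \qquad
  L_{\mathrm{non}}(C) = a_{\mathrm{non}}\, C^{-\alpha_{\mathrm{non}}}
\]
% The ratio of the two losses is itself a power law in C:
\[
  \frac{L_{\mathrm{non}}(C)}{L_{\mathrm{eq}}(C)}
  = \frac{a_{\mathrm{non}}}{a_{\mathrm{eq}}}\,
    C^{\,\alpha_{\mathrm{eq}} - \alpha_{\mathrm{non}}}
\]
```

If the exponents were equal, the ratio would be a constant offset. With $\alpha_{\mathrm{eq}} > \alpha_{\mathrm{non}}$, the ratio grows with $C$, which matches the claim that the gap widens as compute increases.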

This is a fascinating paper. It's well known that long-horizon agents have a memory problem. The standard approach is to append everything to the context. Every past observation, action, and thought gets added to the prompt. This creates three compounding issues: O(N) memory growth, degraded reasoning on out-of-distribution context lengths, and attention dilution that causes the model to forget key details even when they're technically in the prompt.
This new research unifies memory and reasoning into a single process. It introduces MEM1, an RL framework that trains agents to maintain constant memory across arbitrarily long multi-turn tasks. At each turn, the model updates a compact internal state that simultaneously consolidates prior information and reasons about next actions. After each turn, all previous observations, actions, and states are discarded. Only the most recent internal state remains.
Inference-time reasoning serves two purposes. While reasoning about the current query, the model also extracts and stores exactly what it needs for future turns. Memory consolidation becomes part of the reasoning process itself, not a separate module.
Training uses PPO with a masked trajectory technique. Because MEM1 dynamically prunes context, standard policy optimization breaks since tokens don't belong to a single continuous trajectory. The authors solve this by stitching sub-trajectories together and applying 2D attention masks that restrict each token's attention to only what was visible when it was generated.
The results show dramatic efficiency gains: On 16-objective multi-hop QA, MEM1-7B improves performance by 3.5x while reducing memory usage by 3.7x compared to Qwen2.5-14B-Instruct. Peak token usage stays nearly constant as task complexity increases, while baseline methods scale linearly. On WebShop navigation, MEM1-7B outperforms AgentLM-13B (twice the parameters) with 2.8x less peak token usage.
Notably, the agent trained on 2-objective tasks generalizes to 16-objective tasks. Performance actually improves relative to baselines as horizon length increases, because baseline models degrade on out-of-distribution context lengths while MEM1 maintains constant context. Emergent behaviors appear in the trained agents: maintaining structured memory for multiple concurrent questions, shifting focus when one objective stalls, and interleaving reasoning with selective memory updates.
External memory modules require separate training and engineering overhead. Full-context approaches don't scale. MEM1 shows that end-to-end RL can train models to consolidate memory as part of reasoning, achieving both efficiency and performance without architectural changes. Paper: https://t.co/q9pEIxBpit Learn to build effective AI Agents in my academy: https://t.co/JBU5beIoD0
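The per-turn loop described in this post (update a compact state, then discard everything else) can be sketched as below. This is a minimal illustration under stated assumptions, not the MEM1 implementation: `update_state`, `environment`, and the two toy functions are hypothetical stand-ins for the trained model and the task environment, and the RL training with masked trajectories is not shown.

```python
from typing import Callable, List, Tuple


def run_constant_memory_agent(
    query: str,
    update_state: Callable[[str, str, str], Tuple[str, str]],
    environment: Callable[[str], str],
    max_turns: int,
) -> Tuple[str, List[int]]:
    """Run an agent whose context holds only the query, the latest
    internal state, and the latest observation."""
    state = ""        # compact internal state carried across turns
    observation = ""  # only the most recent observation is kept
    context_sizes = []
    for _ in range(max_turns):
        # The whole context for this turn; earlier turns are already gone.
        context_sizes.append(len(query) + len(state) + len(observation))
        # One step both consolidates memory and chooses the next action.
        state, action = update_state(query, state, observation)
        observation = environment(action)
    return state, context_sizes


def toy_update_state(query: str, state: str, observation: str) -> Tuple[str, str]:
    """Hypothetical stand-in for the model: keep the three newest facts."""
    facts = [f for f in state.split(";") if f]
    if observation:
        facts.append(observation)
    facts = facts[-3:]
    return ";".join(facts), f"lookup-{len(facts)}"


def toy_environment(action: str) -> str:
    """Hypothetical stand-in for the environment: echo the action."""
    return f"result({action})"


final_state, sizes = run_constant_memory_agent(
    "find three facts", toy_update_state, toy_environment, max_turns=20
)
```

In this toy run the recorded context size stops growing once the state holds three facts, whereas an append-everything agent would grow by one observation per turn.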

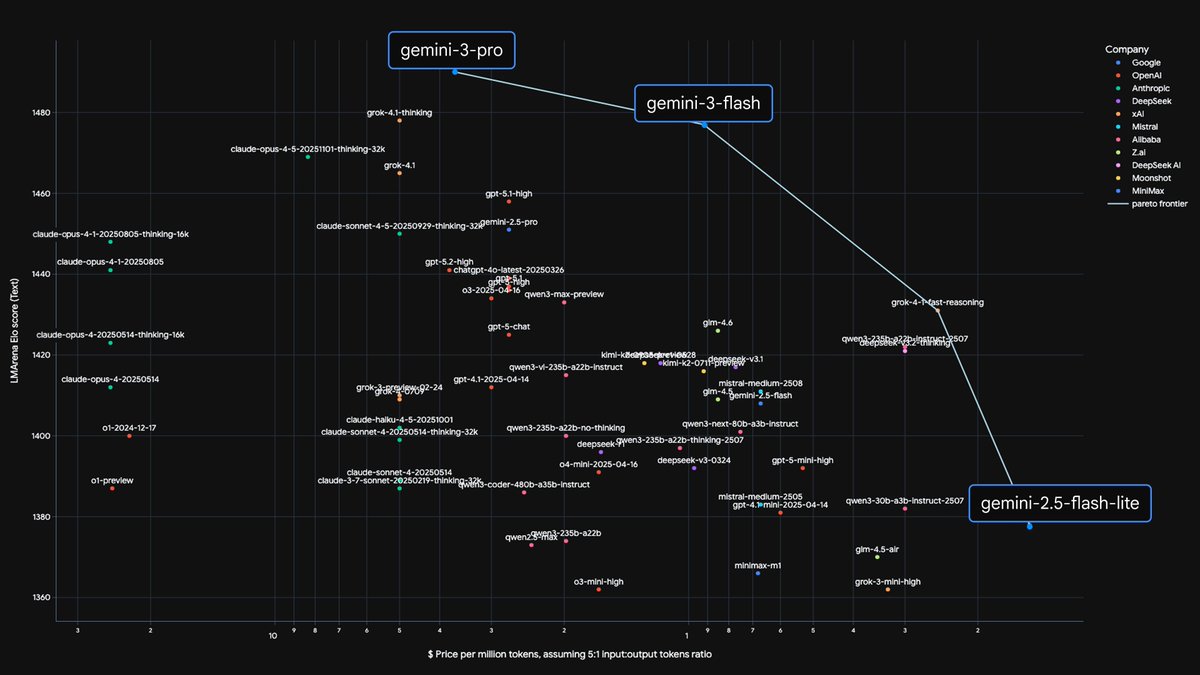

distillation might be one of the most impactful technologies of the llm era, really impressive scores https://t.co/8NHdHOtf1b

🔎🚀@turbopuffer’s solution for scalable, reliable & cost-effective search has become a key ingredient for high-performing LLMs. Find out how the startup is working with #AWS to support leading #AI enterprises. 👉 https://t.co/UeVXbkeqbJ https://t.co/BgEX85SuHU

Coursera and Udemy enter a merger agreement valued at around $2.5B https://t.co/LcThQ9cMPA @LaurenForristal @techcrunch

Is XRP crashing? The sustained break below $2 signals trouble https://t.co/n94cPbGD5a @godbole17 @coindesk

A “scientific sandbox” lets researchers explore the evolution of vision systems https://t.co/eHJlLqS23B @mit

Good read Two views of AI and Big Tech https://t.co/G9PN9iqfUp @ft

[Order deadline] 佐々木琴音 Bunny Ver. → Wednesday, August 1 https://t.co/Kv74waySls 恋塚 愛 → Wednesday, August 1 https://t.co/T9HEVLkUvd ショコラ → Wednesday, August 1 https://t.co/cSqXRTEiU0 https://t.co/1mPrr8GdzR

⠀ ⠀ ⠀⠀ ⠀ ⠀ ⠀ ⠀⎰ ✶ #⃞NᥱɯPɾofılᥱPıc ━ ⠀ ⠀ ⠀⠀ ⠀ ᠕lteɾnɑtıve veɾsıon: ⠀ ⠀ ⠀ Eɑɾth-1610 & Eɑɾth-2301 ⠀ ⠀ https://t.co/0FPgFPIJFL

⠀ ⠀ ⠀⠀ ⠀ ⠀ ⠀ ⠀⎰ ✶ #⃞NᥱɯPɾofılᥱPıc ━ ⠀ ⠀ ⠀⠀ ⠀ ᠕lteɾnɑtıve veɾsıon: ⠀ ⠀ ⠀ Eɑɾth-1610 & Eɑɾth-2301 ⠀ ⠀ https://t.co/KIDYAeVGUK

⠀ ⠀ ⠀⠀ ⠀ ⠀ ⠀ ⠀⎰ ✶ #⃞NᥱɯPɾofılᥱPıc ━ ⠀ ⠀ ⠀⠀ ⠀ ᠕lteɾnɑtıve veɾsıon: ⠀ ⠀ ⠀ Eɑɾth-1610 & Eɑɾth-2301 ⠀ https://t.co/Jzf4mAlzBV

⠀ ⠀ ⠀⠀ ⠀ ⠀ ⠀ ⠀⎰ ✶ #⃞NᥱɯPɾofılᥱPıc ━ ⠀ ⠀ ⠀⠀ ⠀ ᠕lteɾnɑtıve veɾsıon: ⠀ ⠀ ⠀ Eɑɾth-1610 & Eɑɾth-2301 ⠀ https://t.co/dfh1Y66ESi