@dair_ai

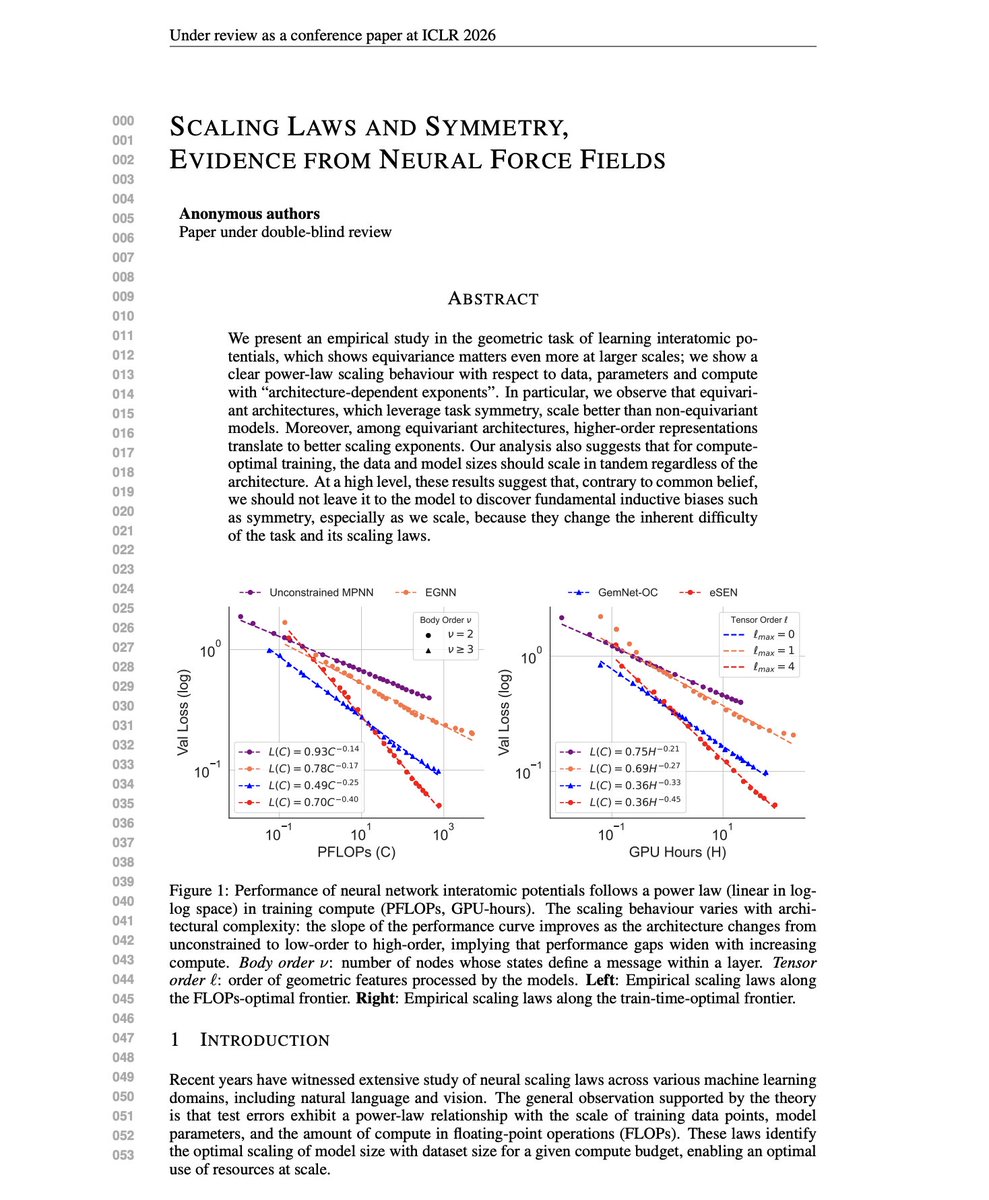

Scaling Laws and Symmetry

The common belief is that scaling outperforms inductive biases. Give the model enough data and compute, and it will learn the structure on its own.

But this new research finds the opposite.

Researchers conducted comprehensive scaling experiments on neural network interatomic potentials, comparing architectures that encode rotational and permutation symmetry to varying degrees.

The main finding is that equivariant architectures don't just have lower loss at any given scale. They have better scaling exponents. The performance gap grows as you add more compute.

For geometric tasks with known symmetries, the scaling laws favor architectures that encode those symmetries.

"Contrary to common belief, we should not leave it to the model to discover fundamental inductive biases such as symmetry, especially as we scale, because they change the inherent difficulty of the task and its scaling laws."

Paper: https://t.co/HR5cNwFyaL

Learn to build effective AI Agents in our academy: https://t.co/zQXQt0PMbG
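To make the point about scaling exponents concrete, here is a minimal sketch assuming loss follows a simple power law in compute, L(C) = a * C^(-alpha). The function names, the coefficients, and the exponents 0.30 and 0.20 are hypothetical values chosen for illustration; none of them come from the paper. The sketch shows that when one architecture has a larger exponent, the ratio between the two losses keeps widening as compute grows, rather than staying at a fixed offset.

```python
import numpy as np

def power_law_loss(compute, a, alpha):
    """Loss under an assumed power law L(C) = a * C**(-alpha)."""
    return a * np.asarray(compute, dtype=float) ** (-alpha)

def fit_exponent(compute, loss):
    """Recover (a, alpha) from a straight-line fit in log-log space."""
    slope, intercept = np.polyfit(np.log(compute), np.log(loss), 1)
    return float(np.exp(intercept)), float(-slope)

# Hypothetical numbers for illustration only, not taken from the paper.
compute = np.logspace(0, 6, 7)  # compute budgets spanning six orders of magnitude
loss_equivariant = power_law_loss(compute, a=1.0, alpha=0.30)
loss_unconstrained = power_law_loss(compute, a=1.2, alpha=0.20)

# Fitting in log-log space recovers the exponent of each curve.
_, alpha_eq = fit_exponent(compute, loss_equivariant)
_, alpha_un = fit_exponent(compute, loss_unconstrained)

# The ratio equals 1.2 * C**0.10 here, so it increases with compute.
gap_ratio = loss_unconstrained / loss_equivariant

print(f"fitted exponents: equivariant={alpha_eq:.2f}, unconstrained={alpha_un:.2f}")
print("loss ratio at each compute budget:", np.round(gap_ratio, 2))
```

If the two curves shared the same exponent, the ratio would stay constant and more compute would never close or widen the gap. A difference in exponent is what makes the advantage compound with scale.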