Your curated collection of saved posts and media

Last week @Shopify released what might be the most significant AI tool set the world has seen so far in terms of AIs that will have immediate impact on generating revenue. They released many new tools. We dig into what we think are the three most important ones: 1) Simgym, 2) Shopify Product Network, and 3) Sidekick Pulse. Thanks to @tobi @RichardSSutton @m_sendhil @suzannegildert and Niamh Gavin for a fascinating discussion. Video link below.

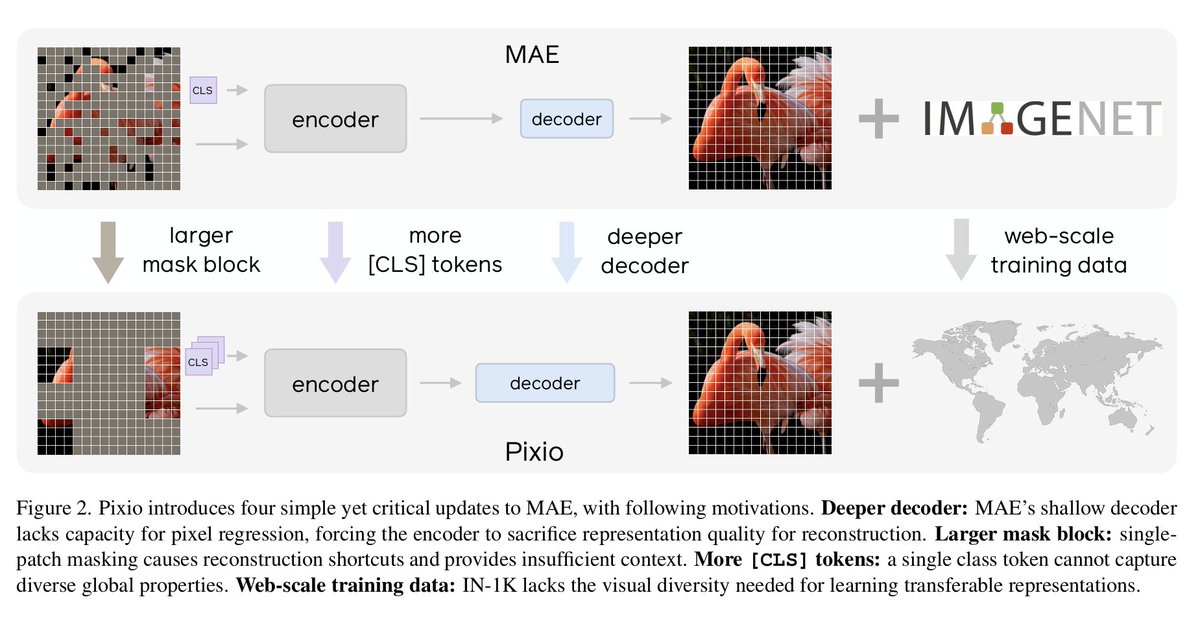

In collaboration with @AIatMeta, we added support for Pixio in the Transformers library! It proposes 4 changes to Masked AutoEncoders (MAE), including scaling it to 2B images. It outperforms/matches DINOv3 trained at similar scales. Find the models here: https://t.co/iUE8fQbmOp https://t.co/cJssb2s7FZ

https://t.co/1igzeDZGqw

People use AI for a wide variety of reasons, including emotional support. Below, we share the efforts we’ve taken to ensure that Claude handles these conversations both empathetically and honestly. https://t.co/P2BmTDEDge

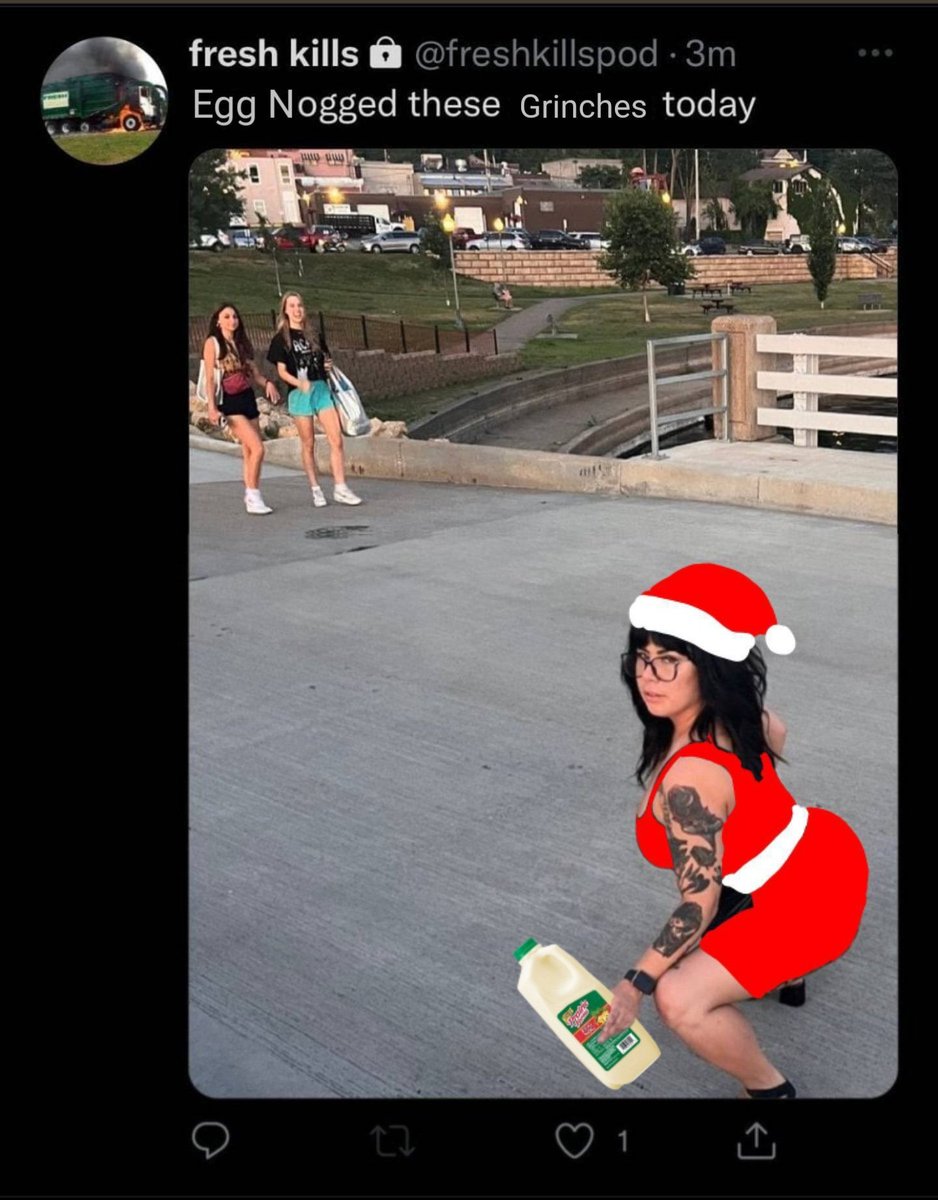

🎉 MiMo-V2-Flash FREE API is now live on ModelScope! The first major release since Fuli Luo joined Xiaomi—and it’s built for real-world agentic AI. ⚡ MiMo-V2-Flash: an open, high-performance MoE model with • 309B total / 15B active parameters • 256K context window • 150+ tokens/s generation thanks to native Multi-Token Prediction 🔥 Key wins for developers: ✅ Hybrid attention (5:1 SWA + Global) → 6× less KV cache, full long-context recall ✅ 73.4% on SWE-Bench Verified — new SOTA for open-source models ✅ Matches DeepSeek-V3.2 on reasoning, but much faster in practice ✨ API-ready—perfect for building smart, responsive agents. 🔗 Try it now: https://t.co/7hQIhBC25Y

We’re open-sourcing Perception Encoder Audiovisual (PE-AV), the technical engine that helps drive SAM Audio’s state-of-the-art audio separation. Built on our Perception Encoder model from earlier this year, PE-AV integrates audio with visual perception, achieving state-of-the-art results across a wide range of audio and video benchmarks. Its native multimodal support can assist people in everyday tasks, including sound detection and richer audio-visual scene understanding. 🔗 Read the paper: https://t.co/RLWJOgG2uz 🔗 Download the code: https://t.co/1L5ZqCZlxq

You can train the VyvoTTS model using just a 200MB audio file with Unsloth in 5 minutes to replicate someone's voice with high accuracy. Next week, we'll release the base model and many fine-tuned models trained with extensive data. Which other voices should we train? https://t.co/A6AgGbMnBW

Skills allow more people to build what's lacking in AI Agents. It's about building the capabilities/knowledge missing in AI agents. Everyone should learn to build Skills. I am hosting a 2hr workshop for our academy members in Jan: https://t.co/64C8UmZq7j Join us! https://t.co/eRJB5vxOPn

Every year, I put together a year's review of what's going on in the crazily evolving world of brain chips and #BCI's. 2025 was huge for #Neuralink, but we saw many other trends. Here are my top-10 trends of the year and what to watch for in 2026: https://t.co/VOIc4fXC94

Alrighty. The Toad is out of the bag. 👜🐸 Install toad to work with a variety of #AI coding agents with one beautiful terminal interface. Check out the blog post for more information... https://t.co/KpQu5cYZzR I've been told I'm very authentic on camera. You just can't fake that kind of awkwardness.

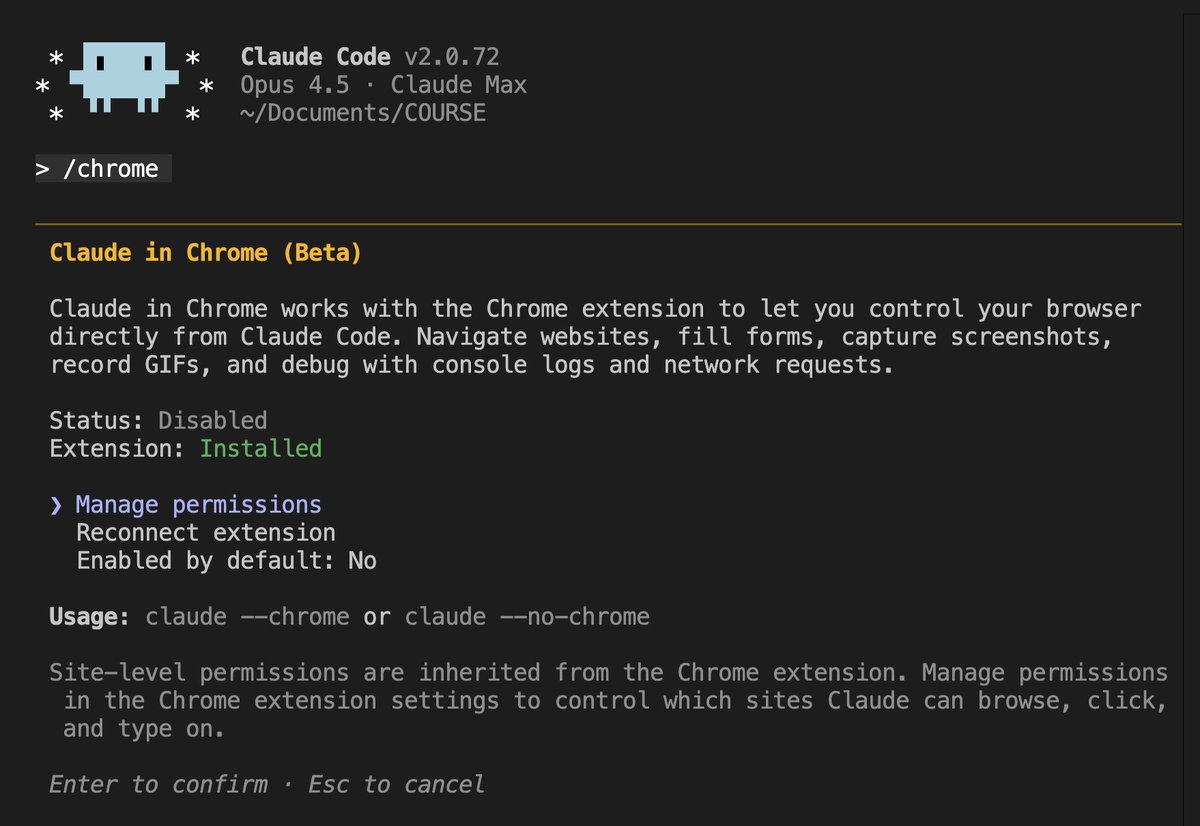

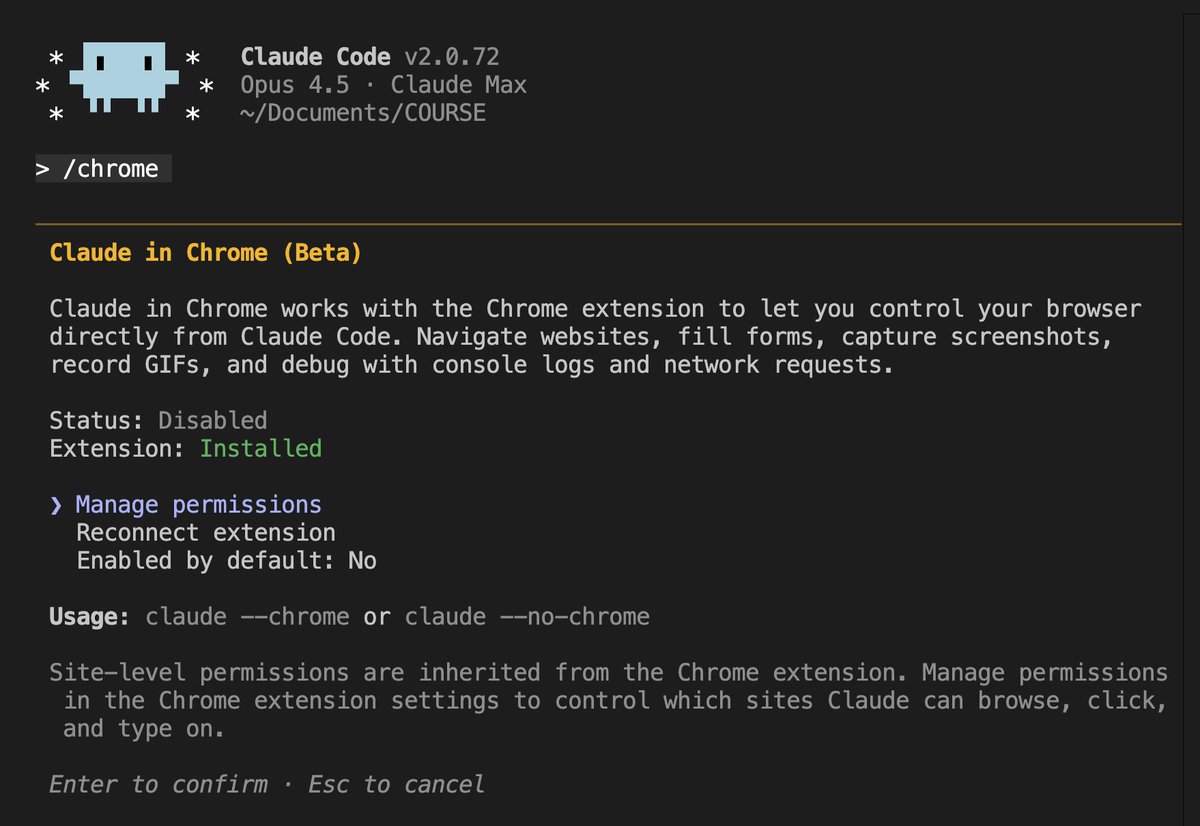

Using the extension, Claude Code can test code directly in the browser to validate its work. Claude can also see client-side errors via console logs. Try it out by running /chrome in the latest version of Claude Code. https://t.co/jcb21qr5Y5

🤩This looks extremely useful to improve designing and debugging workflows in Claude Code. Sometimes it's hard to explain to Claude Code the exact changes you want in your app. This provides better visual cues and more direct and useful context for Claude Code. https://t.co/y7nbm3CwRV

@eunifiedworld @bytebot girl. https://t.co/Q3ODvrvnxu

i swear to god if i hear a normie say «knowledge graph» one more time… https://t.co/3MUzxTMVvD

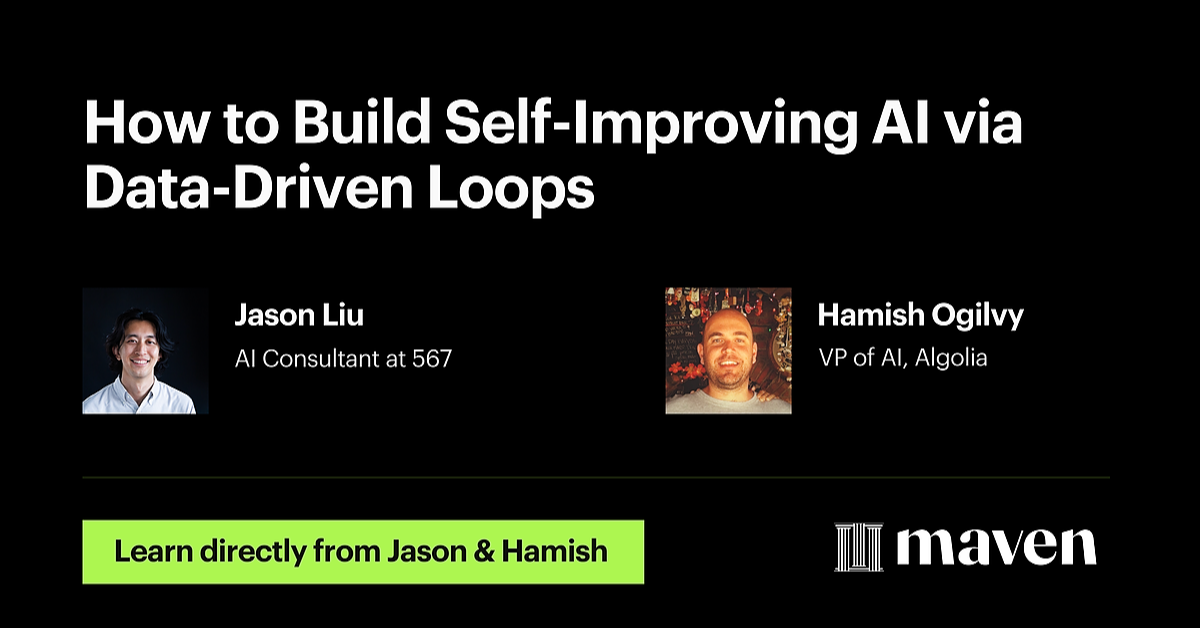

excited to have the vp of at at algolia give us a talk on how to build self-improving ai systems https://t.co/6ah81HZm7L

Google just released FunctionGemma https://t.co/PwXVblLxnr

Nice! https://t.co/IkI5tVo2fM

icymi I work at the emoji company (new feature!) https://t.co/4tYkaTg3KG

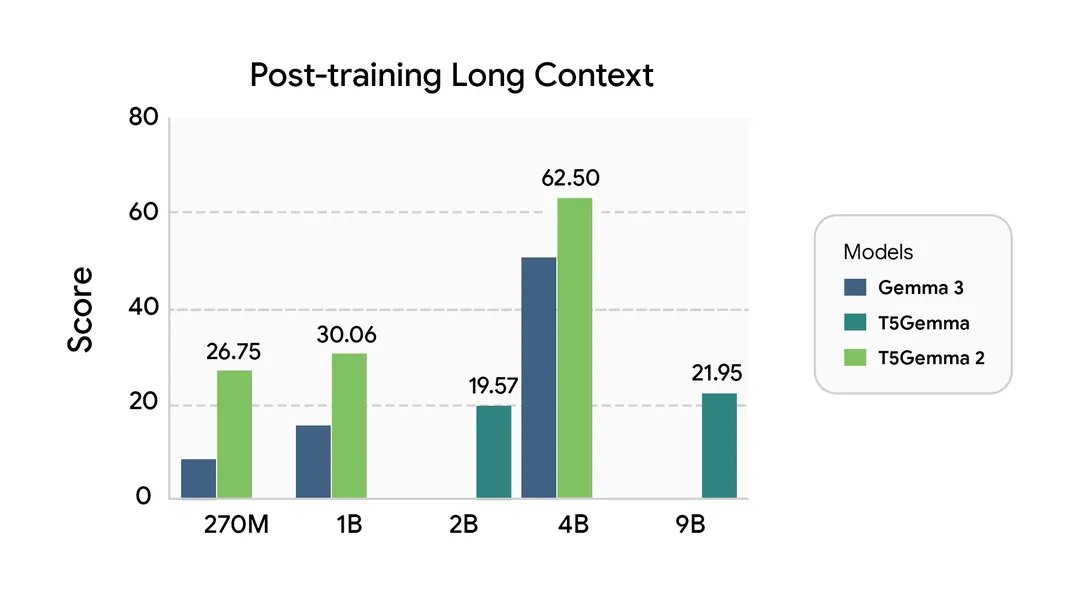

Introducing T5Gemma 2, the next generation of encoder-decoder models 🚀 Built on top of Gemma 3, we were able to build compact models at sizes of 270M-270M, 1B-1B, and 4B-4B. While most models today are decoder-only, T5Gemma 2 is the first (that I'm aware of) multimodal, long-context, and heavily multilingual (140 languages) encoder-decoder model out there. We hope this model enables the model research community as well as the community of devs ready to explore new architectures. Blog: https://t.co/12ScxYcjxa Models: https://t.co/D38wNFo5Bc Paper: https://t.co/2rypSQ7Bf6

A GGUF format model from the GLM-V series, created by the Hugging Face team. Welcome to use it🥳 https://t.co/mNAvC7NlnX

GROK JUST TURNED VOICE AI INTO A REAL PRODUCT, FAST, AND EVERYWHERE xAI just opened Grok Voice to developers, and this isn’t some early experiment dressed up as a launch. It’s the same system already running inside millions of Teslas, now exposed through an API that actually works in the real world. Speed is the first thing that jumps out. Grok responds in under a second, which means conversations flow instead of stalling. That alone separates it from most voice AI people tolerate rather than enjoy. Language is the next flex. Grok handles dozens of them, switches mid-sentence, and keeps accents and tone intact. It sounds like someone talking, not software guessing. Then there’s capability. Grok can search live data, pull from X, use tools, plan routes, and control systems. In Teslas, it already acts like a co-pilot, not a novelty. No token gymnastics. Source: @xai

https://t.co/Tub46GilMJ

A decade ago SpaceX emerged from the most turbulent and challenging period in its history: surviving a major loss and doubling down with a new rocket, super-cold propellant, and a historic landing. This is that story: https://t.co/LLb0NcEfTw

"Fraud tourists" traveled to Minnesota after a friend told them state programs were "a good opportunity to make money," prosecutors say. https://t.co/dkI1RGAVOX

"Basically the government funded NGOs are a way to do things that would be illegal if they were the government." - Elon Musk https://t.co/XvcLhmyWSE

BREAKING: Grok 4.1 Fast just claimed the #1 spot on both the GeoMetrics and Agent Zero leaderboards. 🥇 https://t.co/JWex5lh7zb

Grok Imagine https://t.co/rVyOGio6m5