@osanseviero

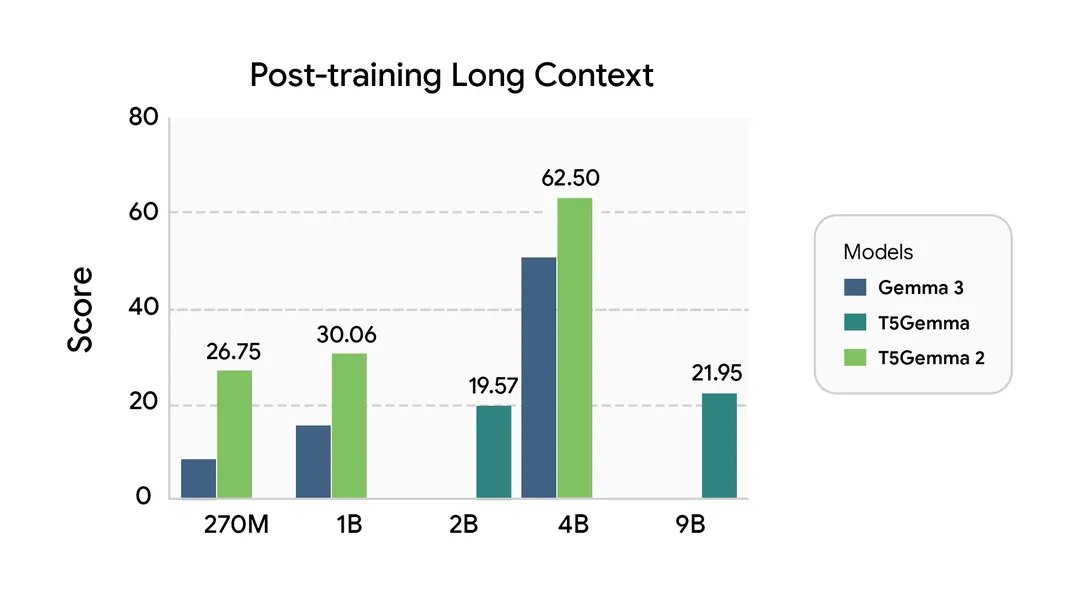

Introducing T5Gemma 2, the next generation of encoder-decoder models 🚀 Building on top of Gemma 3, we were able to build compact models at 270M-270M, 1B-1B, and 4B-4B sizes. While most models today are decoder-only, T5Gemma 2 is the first (that I'm aware of) multimodal, long-context, and heavily multilingual (140 languages) encoder-decoder model out there. We hope this model empowers the research community as well as the community of devs ready to explore new architectures. Blog: https://t.co/12ScxYcjxa Models: https://t.co/D38wNFo5Bc Paper: https://t.co/2rypSQ7Bf6