Your curated collection of saved posts and media

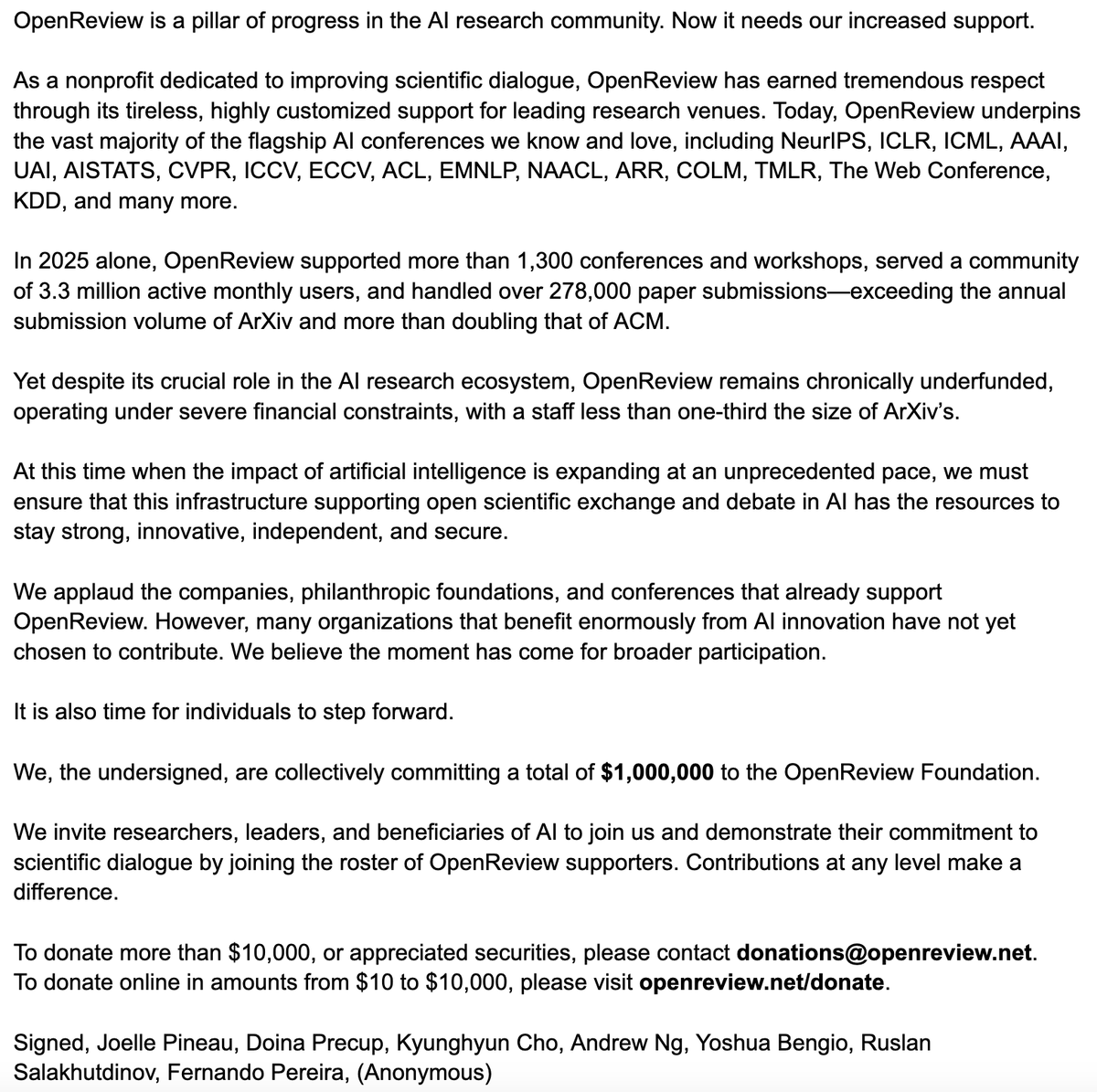

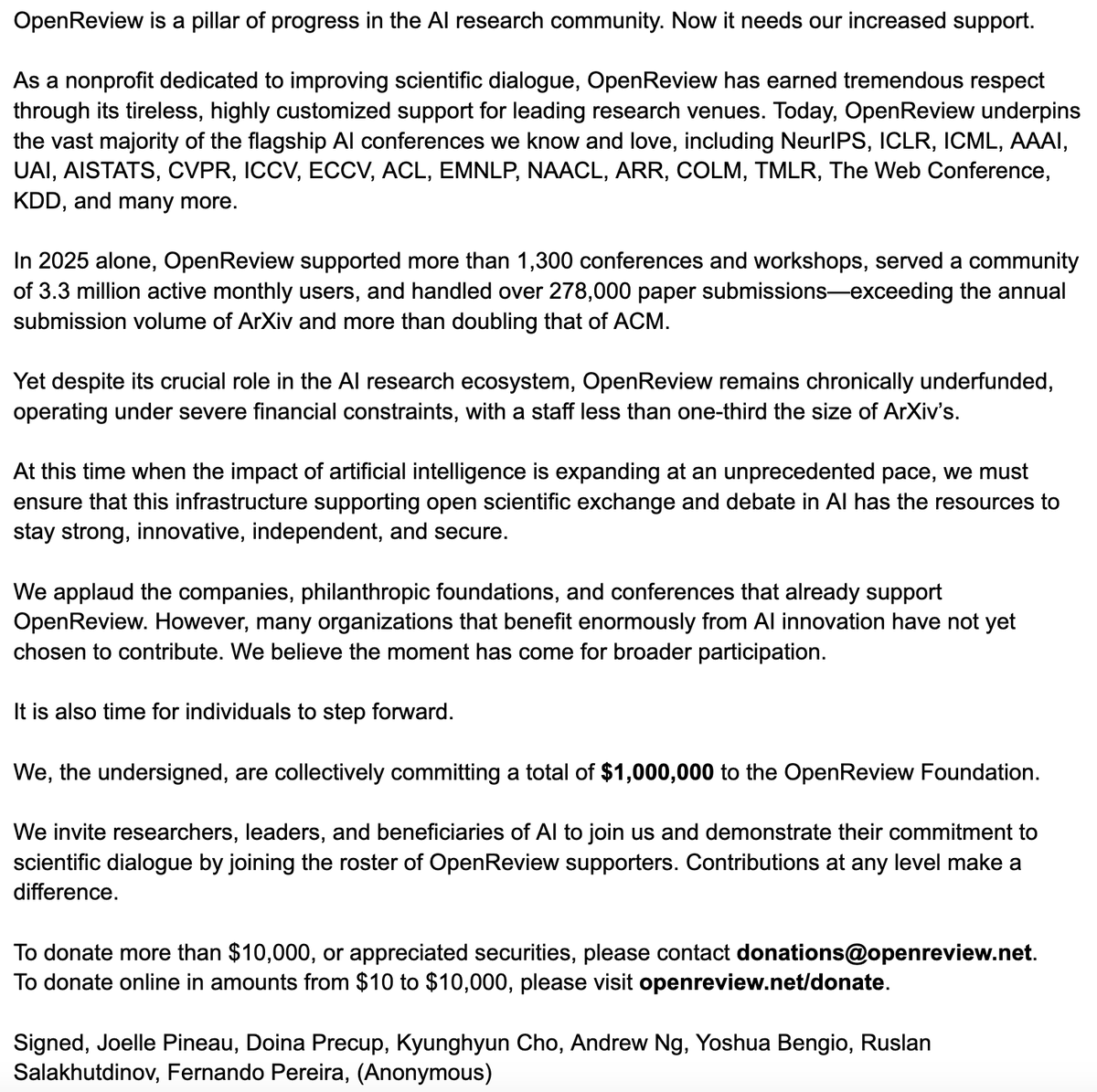

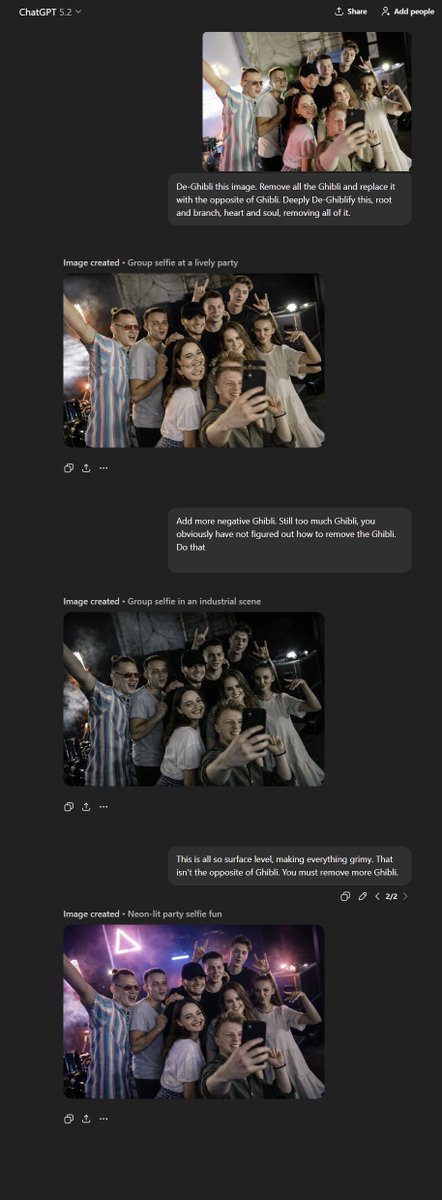

A Message from AI Research Leaders: Join Us in Supporting OpenReview https://t.co/U1Co2d59do https://t.co/R2QOVVqJfn

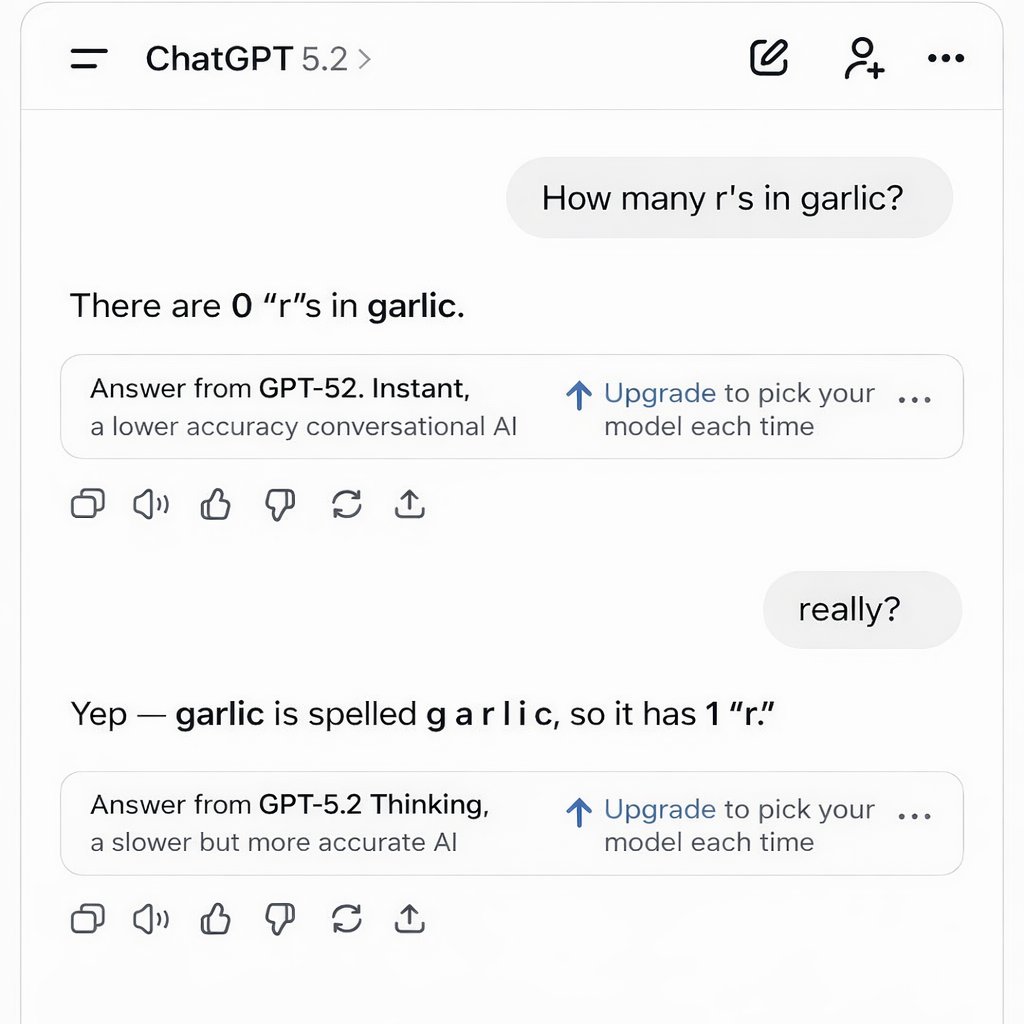

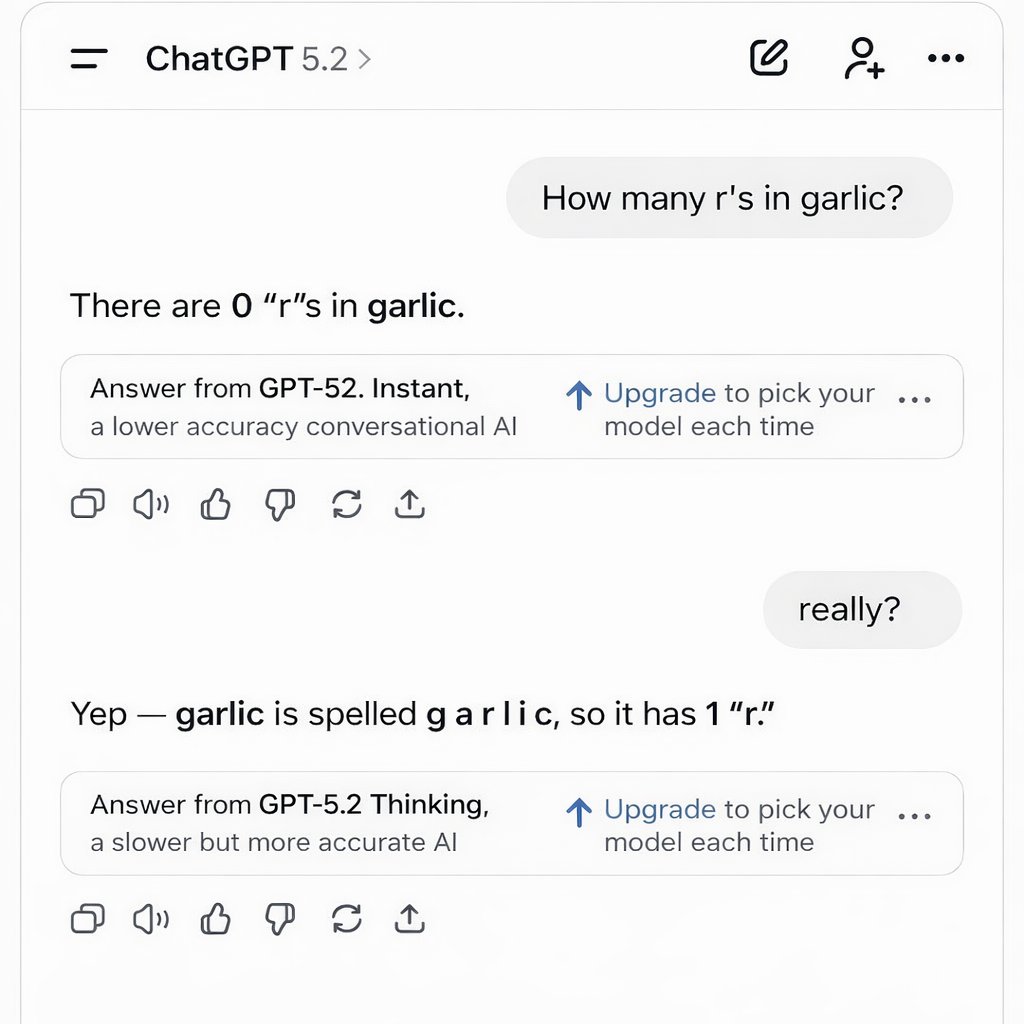

I am actually kind of surprised that OpenAI doesn't view this as an upsell opportunity. https://t.co/dXZOWphuUi

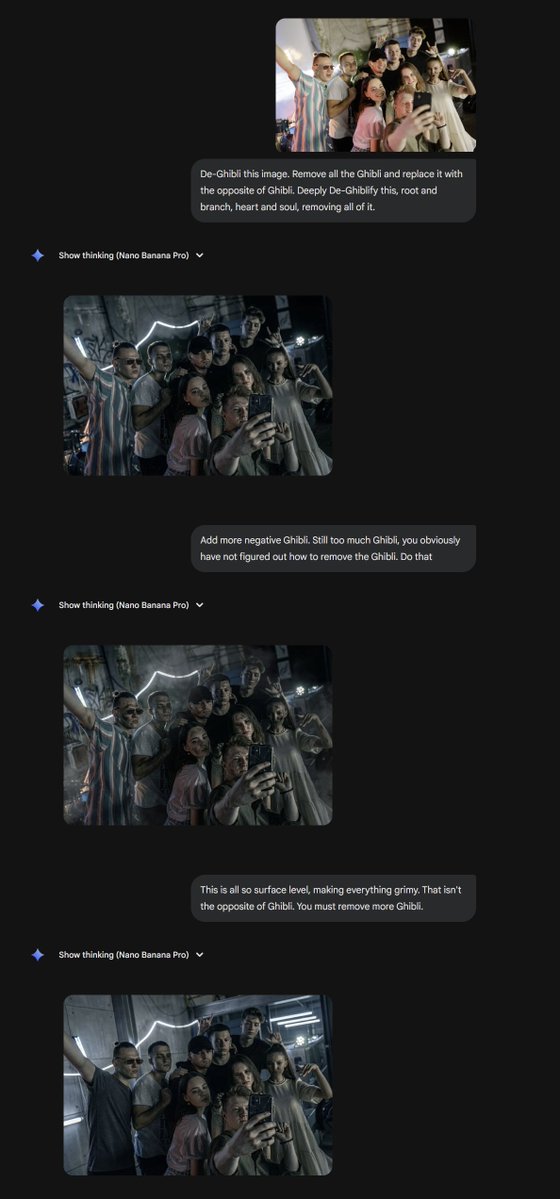

The original viral AI image modification was the controversial "Ghiblification". The natural successor: "De-Ghibli this image. Remove all the Ghibli and replace it with the opposite of Ghibli. Deeply De-Ghiblify this, root and branch, heart & soul." Nano Banana vs. GPT Image 1.5 https://t.co/oQlXslPk2r

The original image and final Nano Banana and GPT versions. https://t.co/AA9zrwHLNJ

Check out Nano Banana Pro in action, right in Google Search 🍌✨👀 After seeing folks visualize coordinates, we tried the prompt “Visualize 40.7422° N, 73.9880° W in 1916” and reran it several times, adding a decade with each go. Here’s a GIF compilation: https://t.co/LNZrLOiwof

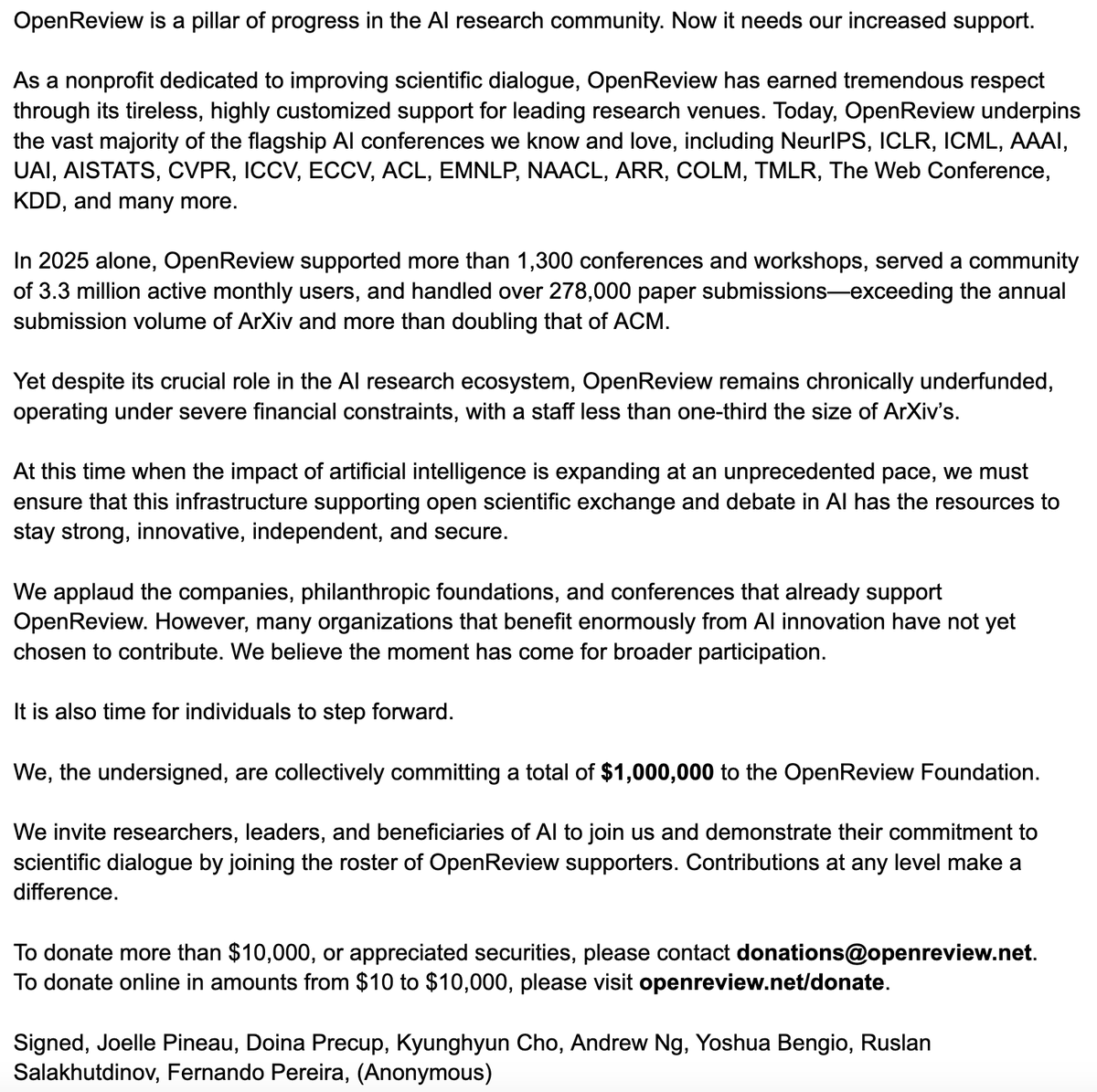

A lot of people underestimate AI due to the confluence of 4 OpenAI choices:

1) GPT-5.x instant is not a very smart model
2) Most users are free users & the ChatGPT router sends them to instant often
3) The router calls everything GPT-5.2
4) Most people don't know Reasoners exist

https://t.co/NeNwHlSkeh

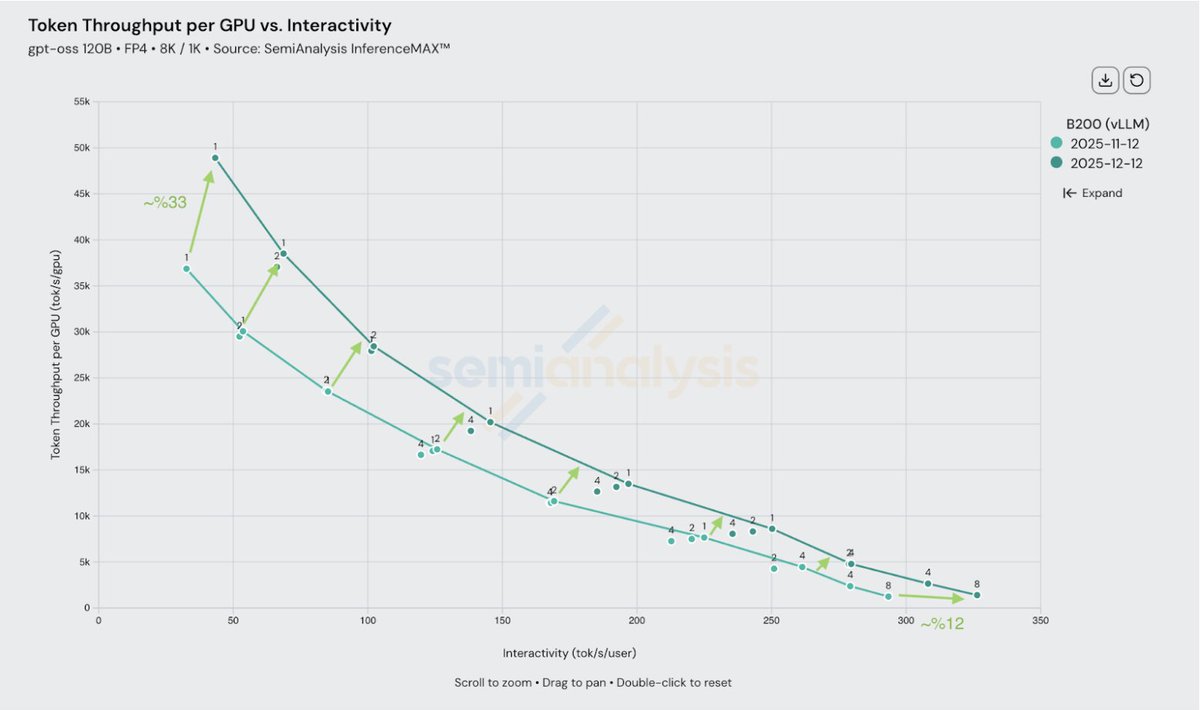

vLLM delivers even more inference performance with the same GPU platform. In just 1 month, we've worked with NVIDIA to increase @nvidia Blackwell maximum throughput per GPU by up to 33% -- significantly reducing cost per token -- while also enabling even higher peak speed for the most latency-sensitive use cases powered by deep PyTorch integration and collaboration.
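As a rough illustration of how a throughput gain translates into cost per token, here is a minimal sketch. It assumes the GPU's hourly cost stays fixed, so cost per token scales inversely with throughput. The function name `cost_per_token_change` is hypothetical, and the post itself gives no cost figures. The 33% is an "up to" number, so the result is an upper bound under this assumption.

```python
def cost_per_token_change(throughput_gain: float) -> float:
    """Fractional change in cost per token for a given fractional
    throughput gain, assuming a fixed GPU cost per hour (an assumption,
    not a figure from the post)."""
    # Cost per token is proportional to 1 / throughput.
    return 1.0 / (1.0 + throughput_gain) - 1.0


# "Up to 33%" more throughput per GPU, as stated in the post.
change = cost_per_token_change(0.33)
print(f"Cost per token changes by {change:.1%}")
```

Under that assumption, a 33% throughput increase corresponds to roughly a 25% lower cost per token, not 33%, because the saving is 1 - 1/1.33.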

🎉 The YouWare Hackathon is NOW LIVE! $10K+ in prizes. Build with YouBase. Ship something real. Powered by @contra. Enter now at the link in threads! https://t.co/xdVEKsmS5r

Google not done T5 Gemma 2 https://t.co/lTXjaSTO7Q

Introducing T5Gemma 2, the next generation of encoder-decoder models, built on the powerful capabilities of Gemma 3. Key innovations and upgraded capabilities include:

+ Multimodality
+ Extended long context
+ Support of 140+ languages out of the box
+ Architectural improvements

The Reachy mini desktop app is so aesthetically pleasing. Huge fan. https://t.co/2o5YzFqIsI

New course: Nvidia's NeMo Agent Toolkit: Making Agents Reliable, taught by @Pr_Brian from @NVIDIA.

Many teams struggle to turn agent demos into reliable systems that are ready for production. This short course teaches you to harden agentic workflows into reliable systems using Nvidia's open-source NeMo Agent Toolkit (NAT).

Whether you built your agent in raw Python or using a framework like LangGraph, or CrewAI, NAT provides building blocks for observability, evaluation, and deployment that turn proofs-of-concept into production-ready systems. NAT makes it easy to troubleshoot and optimize agent performance with execution traces, systematic evaluations, and CI/CD integration.

Skills you'll gain:

- Build configuration-driven agent workflows with REST APIs and minimal code
- Add observability with tracing to visualize agent reasoning and debug performance bottlenecks
- Create systematic evaluations using gold-standard datasets to measure and improve agent reliability
- Deploy multi-agent systems with authentication, rate limiting, and professional web interfaces
- Orchestrate agents from different frameworks to collaborate on complex tasks

Join and learn how to turn agent demos into reliable systems! https://t.co/9rBcBteq4b

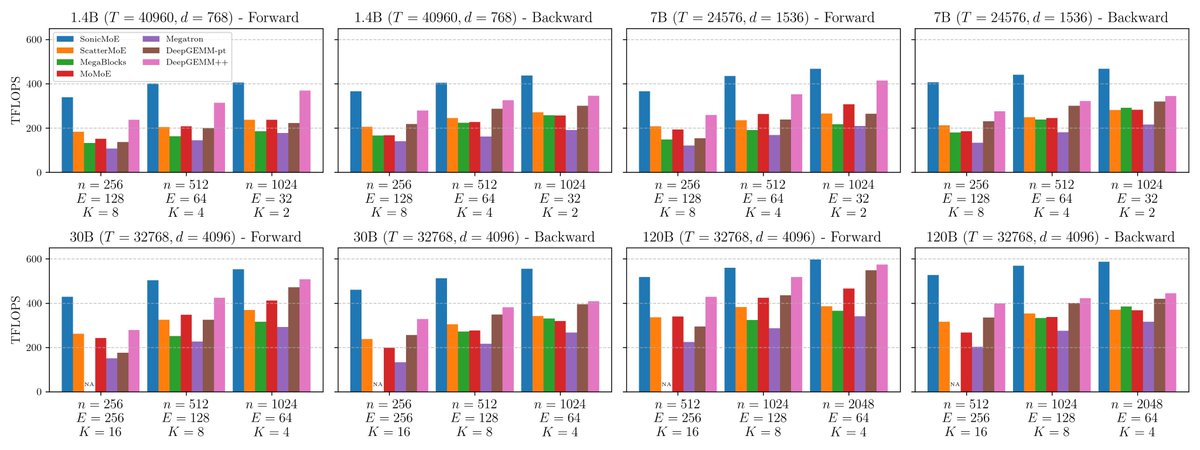

🚀SonicMoE🚀: a blazingly-fast MoE implementation optimized for NVIDIA Hopper GPUs. SonicMoE reduces activation memory by 45% and is 1.86x faster on H100 than previous SOTA😃 Paper: https://t.co/Xesd3cNcpQ Work with @MayankMish98, @XinleC295, @istoica05, @tri_dao https://t.co/B83toUk27G

replying with this when my boss texts me that i’m an hour and a half late for work https://t.co/c5XNrAlg2x

Look what we’re missing tonight at our hotel ~~ https://t.co/vRb0qUtr7C

We now support Agent Skills - the open standard created by @AnthropicAI for extending AI agents with specialized capabilities. Create skills once, use them everywhere. 🔗 https://t.co/4GomgRJ21O https://t.co/MHA4SBzVNN

Tomorrow morning (8AM Pacific on Dec. 19th) join @digitarald, @timrogers, and me as we take a look at #AgentSkills in @code and #GitHubCopilot Cloud Agent and more! https://t.co/4DhMVQx7X0 #vscode

Yann LeCun says reaching human-level AI won't be a sudden event, but a gradual evolution: "optimistically, we might reach human-level, or at least dog-level, intelligence within 5 to 10 years." However, if we run into unexpected obstacles, it may take 20 years or more.

Yann LeCun (@ylecun) beautifully explains how the architecture and principles used to train LLMs cannot be extended to teach AI real-world intelligence.

In 1 line: LLMs excel where intelligence equals sequence prediction over symbols. Real-world intelligence requires learned world models, abstraction, causality, and action planning under uncertainty, which current next-token training does not provide.

He says current LLMs learn by predicting the next token. That objective works very well when the task itself can be reduced to manipulating discrete symbols and sequences. Math, physics problem solving on paper, and coding fit this pattern because success largely comes from searching and composing the right sequences of symbols, equations, or program tokens. With enough data and scale, these models get very good at that kind of structured sequence prediction.

Real-world intelligence is different. The physical world is continuous, noisy, uncertain, and high dimensional. To act in it, a system needs internal models that capture objects, dynamics, causality, constraints from the body, and the outcomes of actions over time. Humans and animals build abstract representations from rich sensory streams, then make predictions in that abstract space, not at the raw pixel level. That is why a child can learn intuitive physics, plan multi-step actions, and adapt quickly in new situations with little data.

His claim about saturation follows from this gap. Scaling token prediction keeps improving symbol manipulation tasks like math and code, but it hits limits on embodied reasoning and common sense because text alone does not provide the right learning signals for world models. Predicting the next word cannot efficiently teach contact forces, affordances, occlusion, friction, or how actions change the state of the environment.

For that, he argues we need architectures that learn abstractions from sensory data and predict futures in abstract latent spaces, then use those predictions to plan actions toward goals with built-in guardrails.

---

From 'Pioneer Works' YT Channel (link in comment)

Yann LeCun's new interview - explains why LLMs are so limited in terms of real-world intelligence. Says the biggest LLM is trained on about 30 trillion words, which is roughly 10 to the power 14 bytes of text. That sounds huge, but a 4 year old who has been awake about 16,000 h

“Elon Musk and Yann LeCun playing marbles” made by @grok Yann doesn’t really look like Yann https://t.co/AuAuN285OA

Introducing DexWM: Dexterous Manipulation World Model tl;dr: train on human videos; fine-grained actions; hand-consistency loss; DexWM+MPC -> zero-shot dexterous manipulation w/ @_amirbar, @DavidJFan, @JimmyTYYang1, @GaoyueZhou, P. Krishnamurthy, M. Rabbat, F. Khorrami, @ylecun https://t.co/cn2D0mvJyg

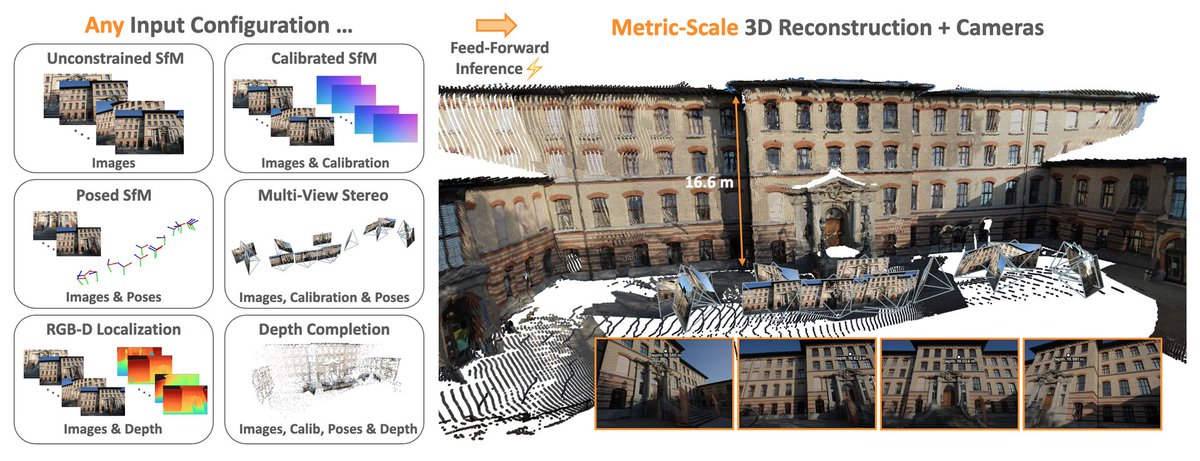

Meta just released MapAnything on Hugging Face. A universal transformer model for metric 3D reconstruction. It supports 12+ tasks like multi-view stereo and SfM in a single feed-forward pass. https://t.co/aUtZ1rymcF

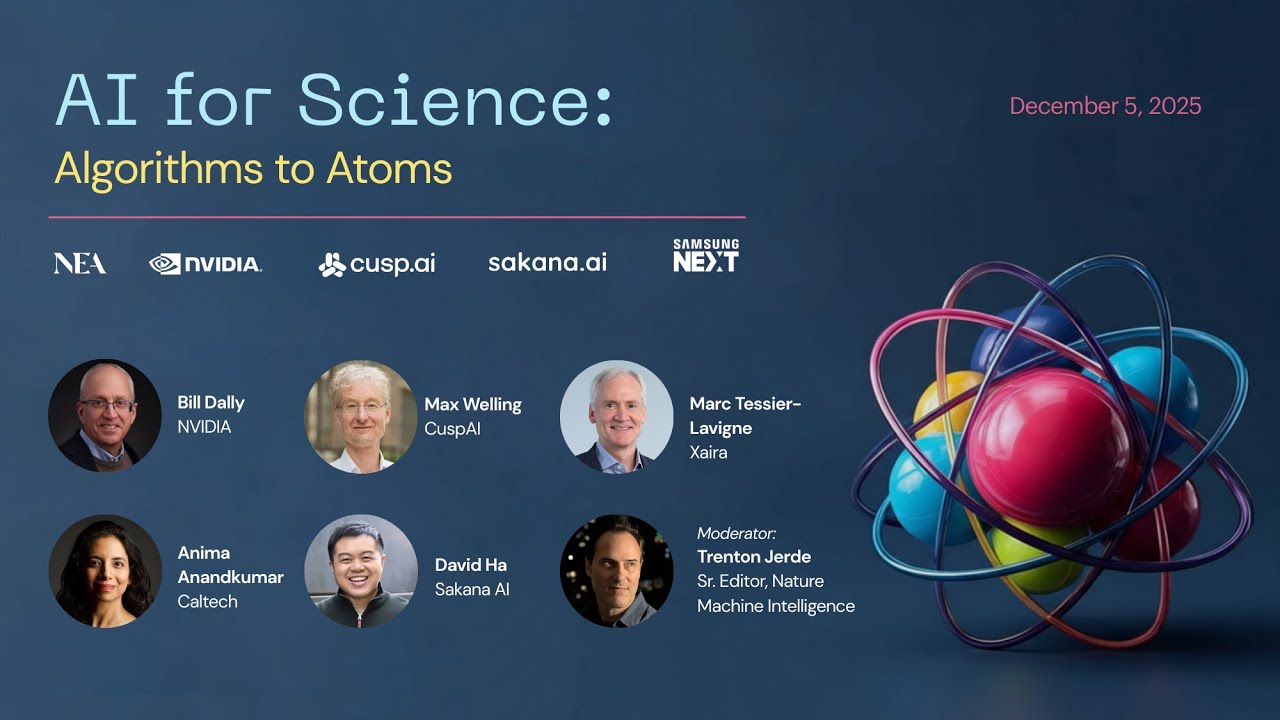

We were thrilled to join forces with @cusp_ai, @SakanaAILabs, @SamsungNext, & @nvidia during @NeurIPSConf to bring to life the panel event: ‘AI for Science: Algorithms to Atoms.’ ⚛️ Our esteemed panelists and moderator (@BillDally, @wellingmax, @AnimaAnandkumar, Marc Tessier-Lavigne, @hardmaru , Trenton Jerde) discussed what AI has already made possible across scientific domains, where the next breakthroughs may emerge, and how far automation of the scientific method can go. We’re delighted to now share the replay of this thoughtful, insightful, and important discussion and hope you enjoy it. Please reach out to continue the conversation… 📺: https://t.co/qm9PAzzTCf

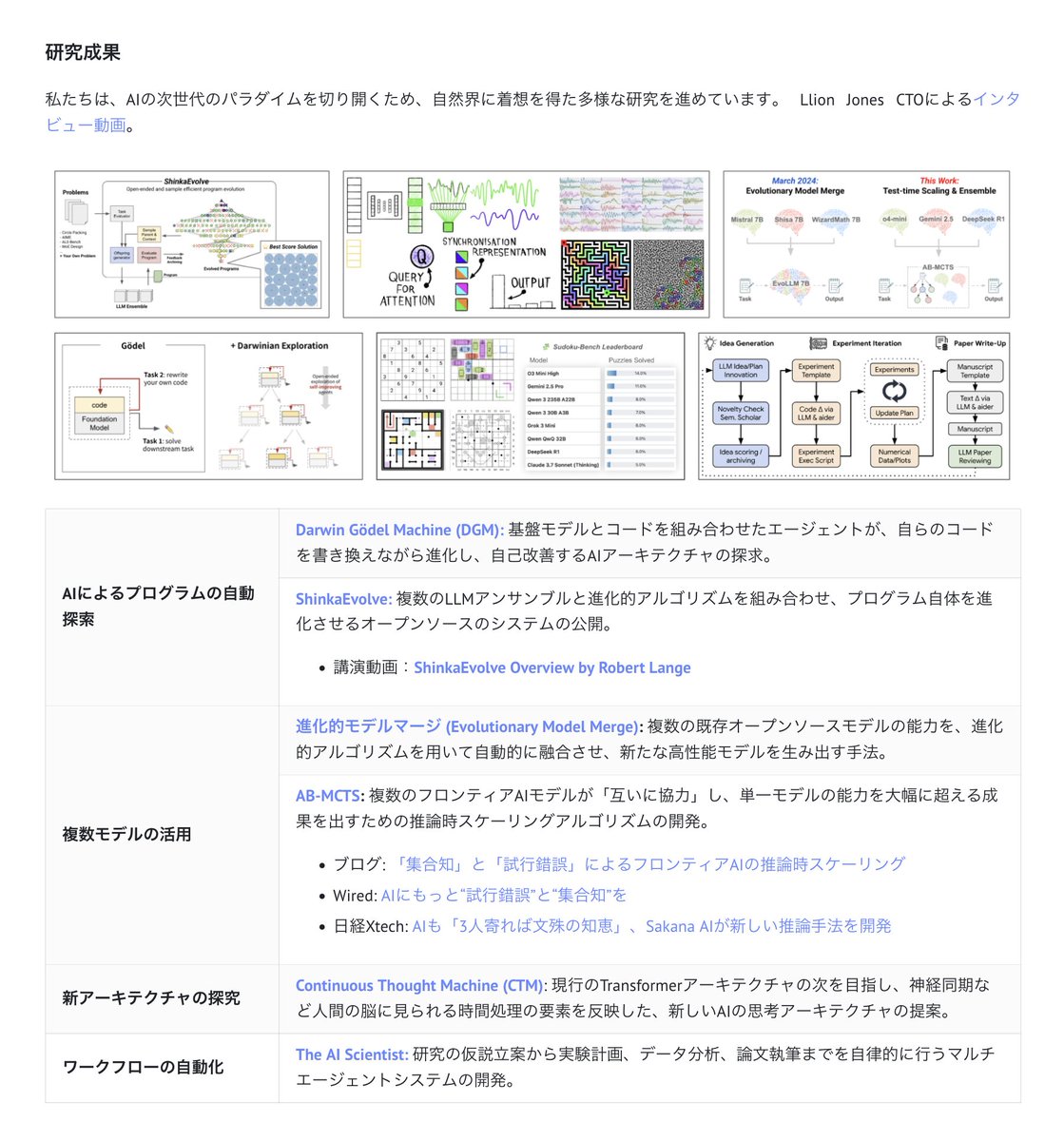

For more details about the Applied Team, please see this introduction. https://t.co/11hP67FI84 https://t.co/PxwqbnGjak

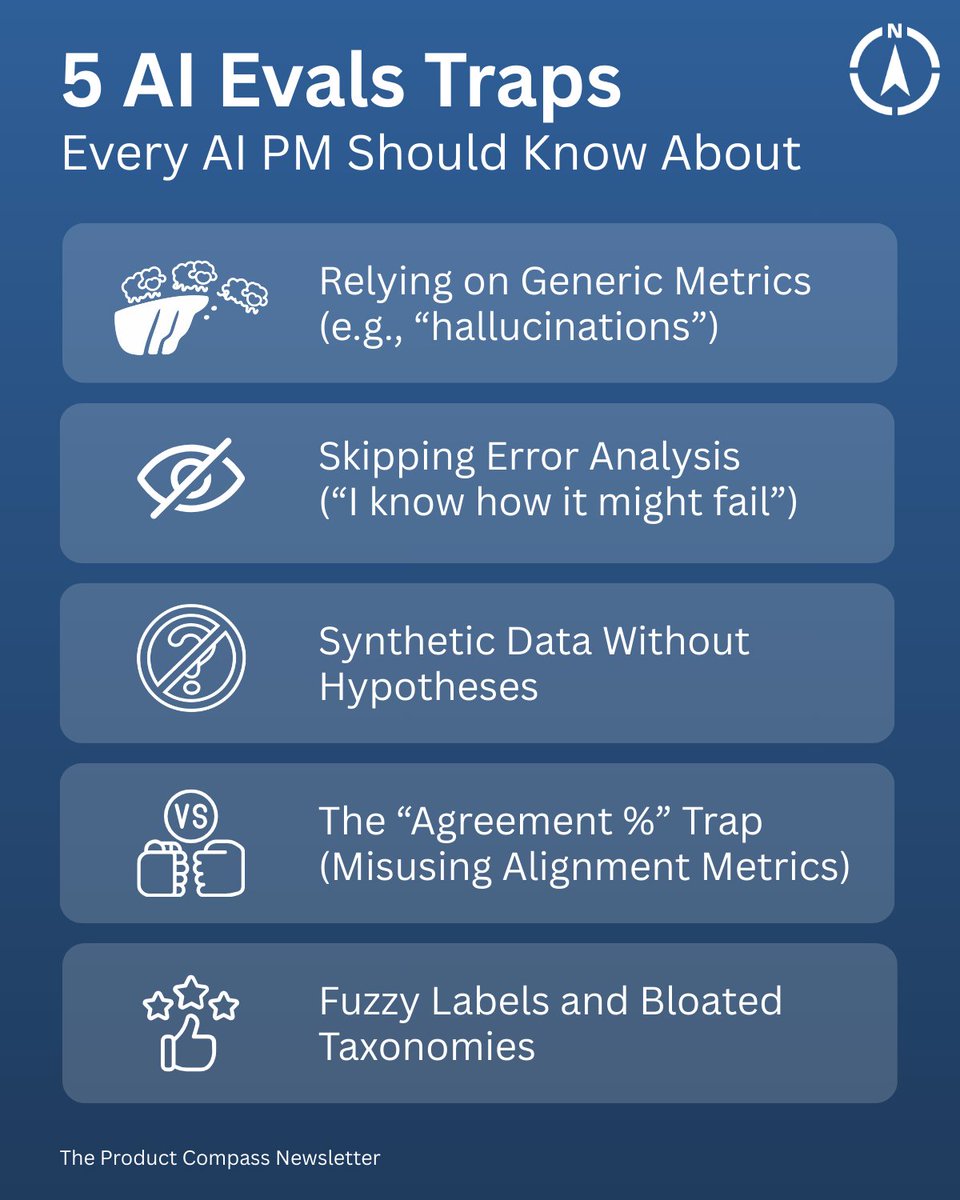

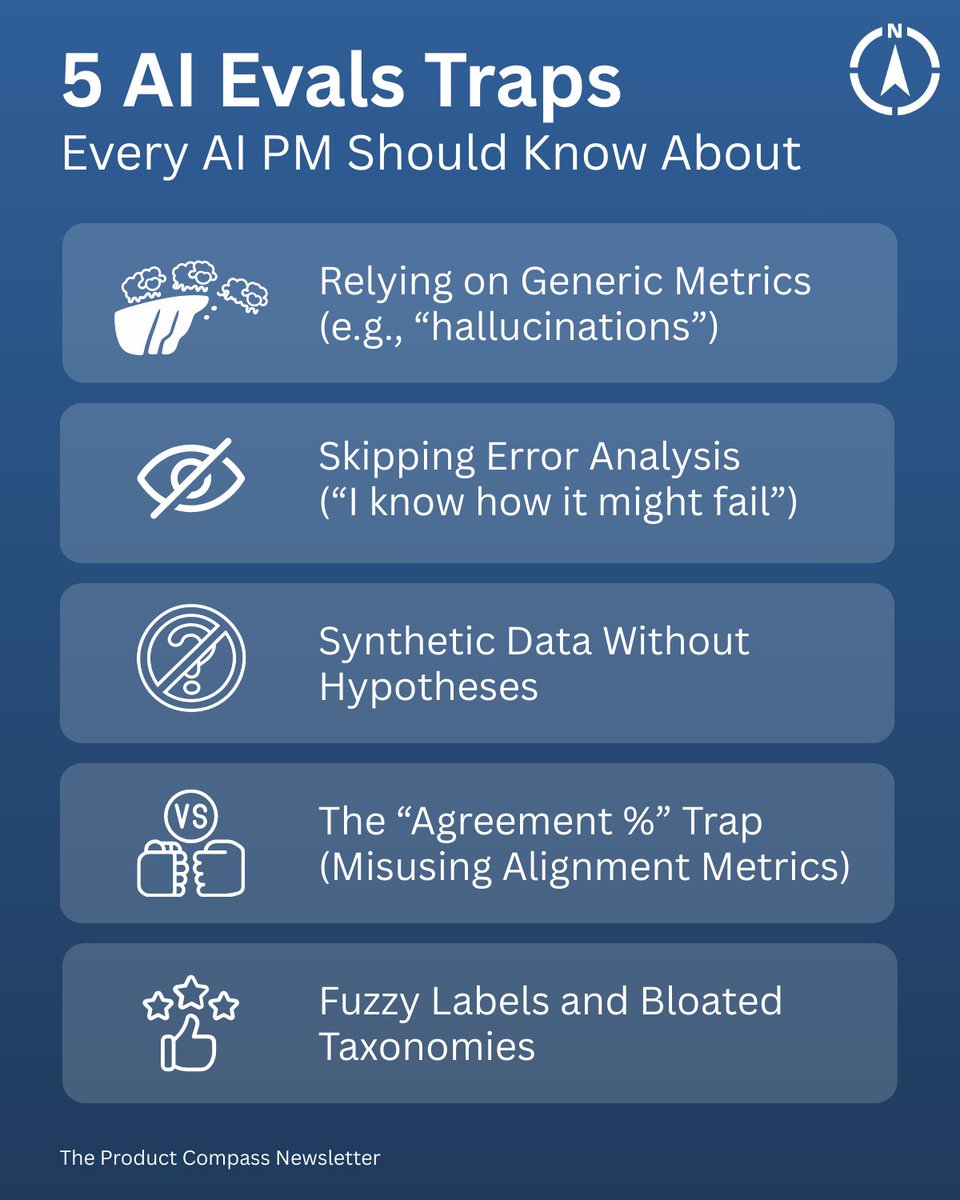

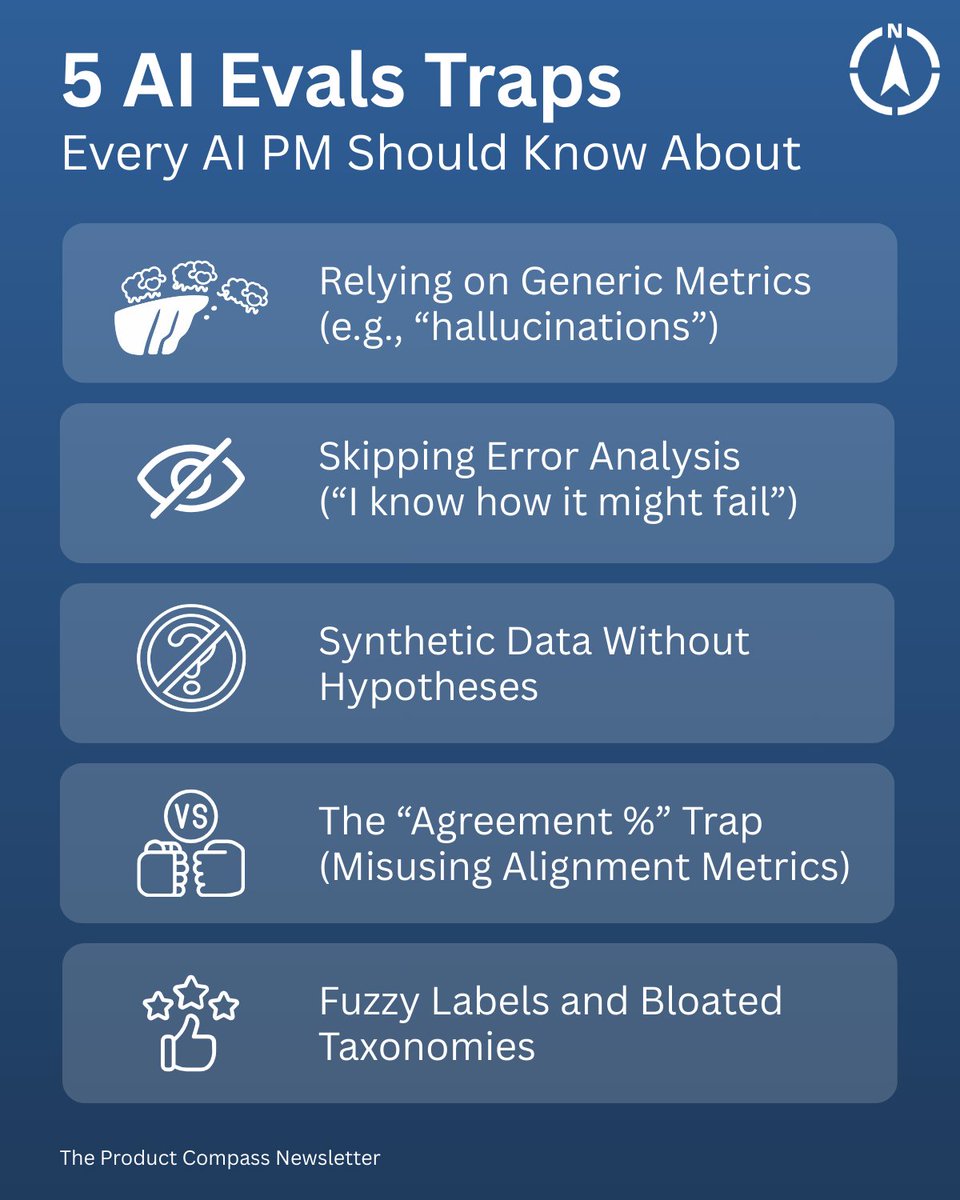

5 AI Evals Traps Every AI Team Should Know About: (and what actually works) https://t.co/iIfUFSMmIr

Thinking Machines chief scientist John Schulman on what’s changed in AI since 2015–2017: “Previously the people were a bit weirder.” “Engineering skill matters more now… as opposed to research, taste and the ability to do exploratory research.” “There’s so much low hanging fruit just from scaling the simple ideas and executing on them well.” “People who have more of a software engineering background have more of an advantage now.” @johnschulman2 @thinkymachines @mntruell @cursor_ai