Your curated collection of saved posts and media

@gr00vyfairy You can also use it to create what I think is the best OG image ever https://t.co/0D8Hr798NW

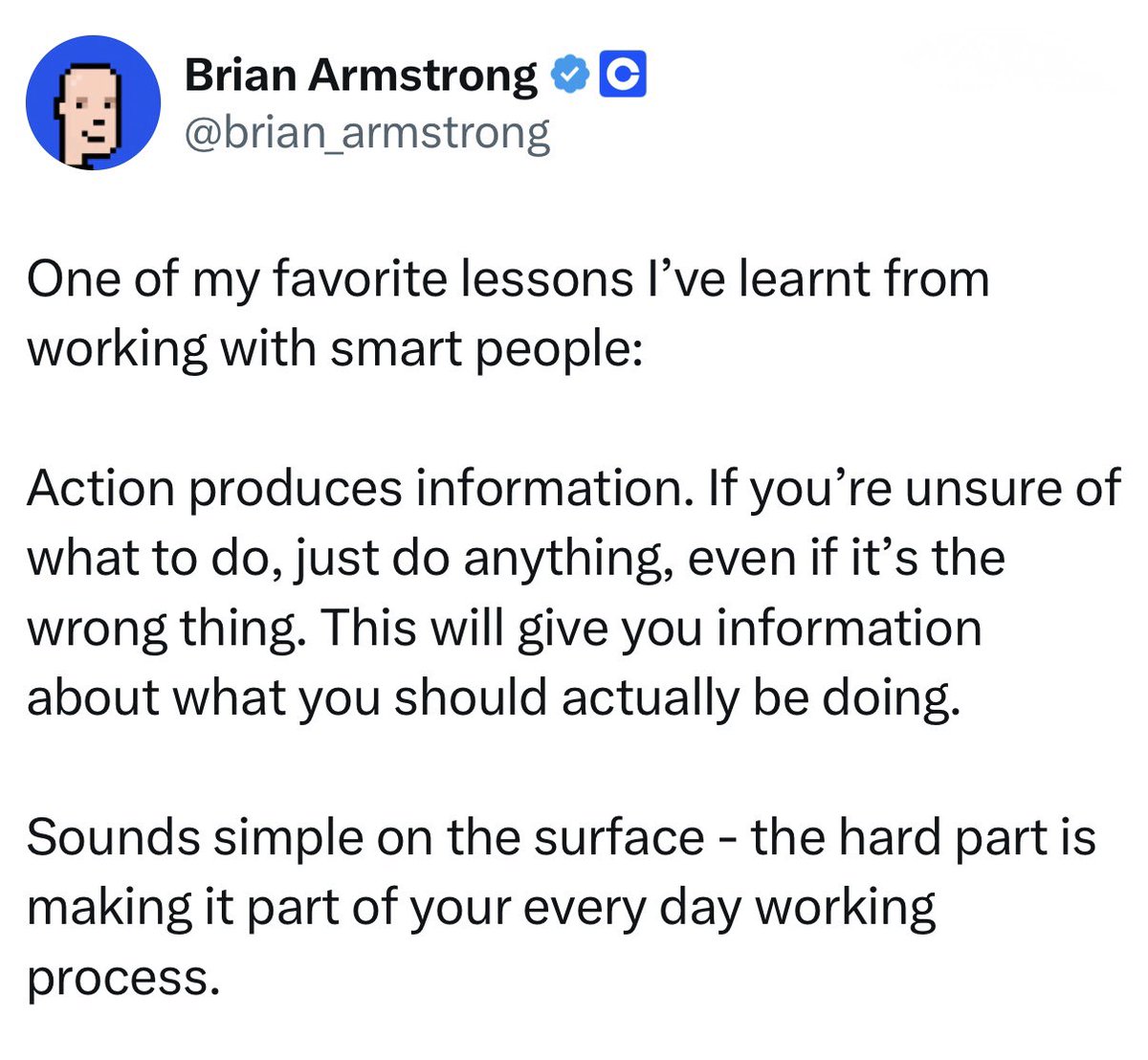

Action produces information. https://t.co/MMP7wfuWCw

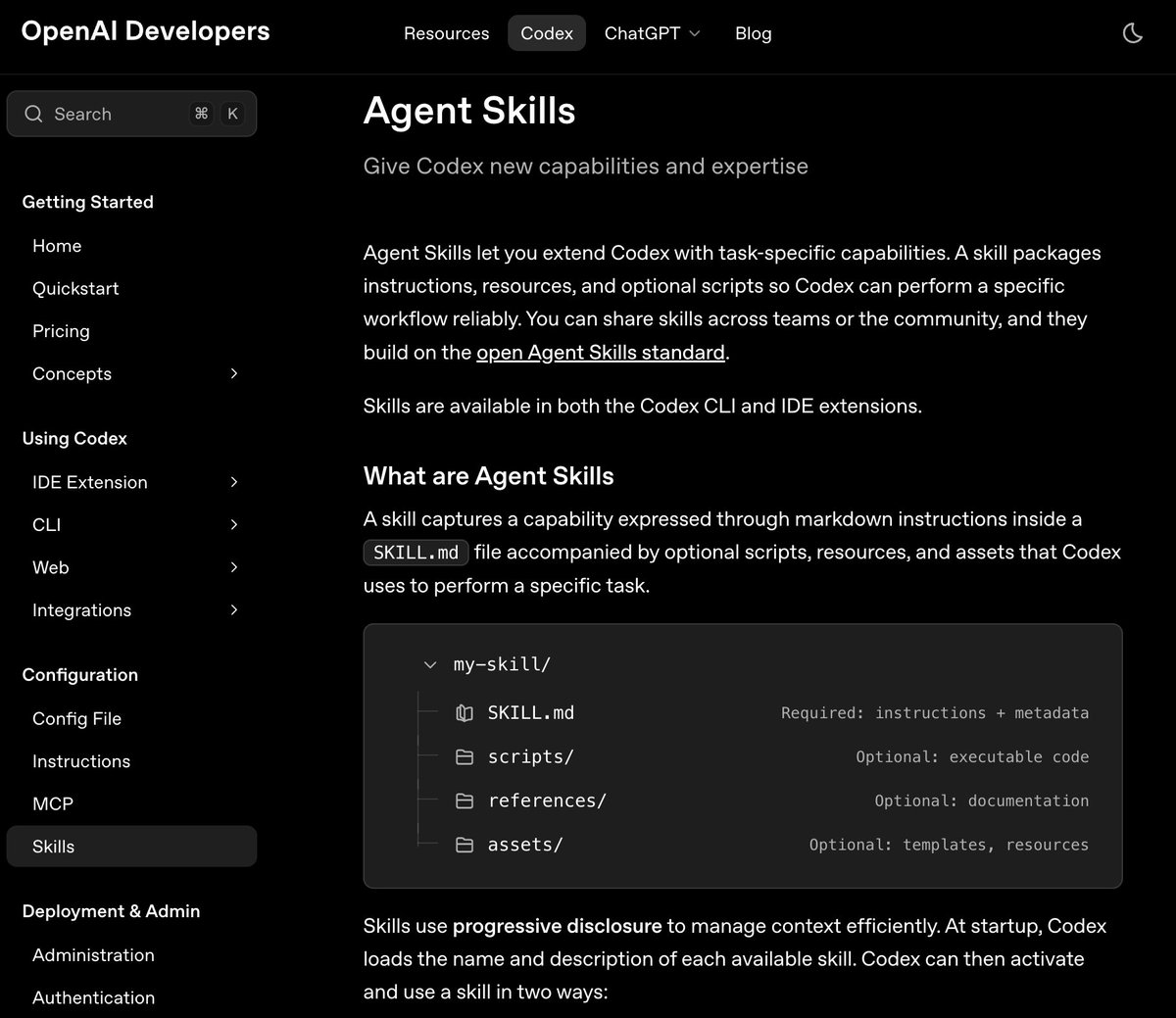

Skills is now officially supported in Codex. There is a neat built-in skill for planning. This is the best way to pull in the right context at the right time. Also, a great way to build highly specialized skills for your coding agents. https://t.co/fviSC12aci

Me and the homies. https://t.co/pU9i20ako1

What are you buying? https://t.co/UsipE27Stv

This is wild. A real-time webcam demo using SmolVLM from @huggingface and llama.cpp! 🤯 Running fully local on a MacBook M3. https://t.co/BQ1HyP7RoC

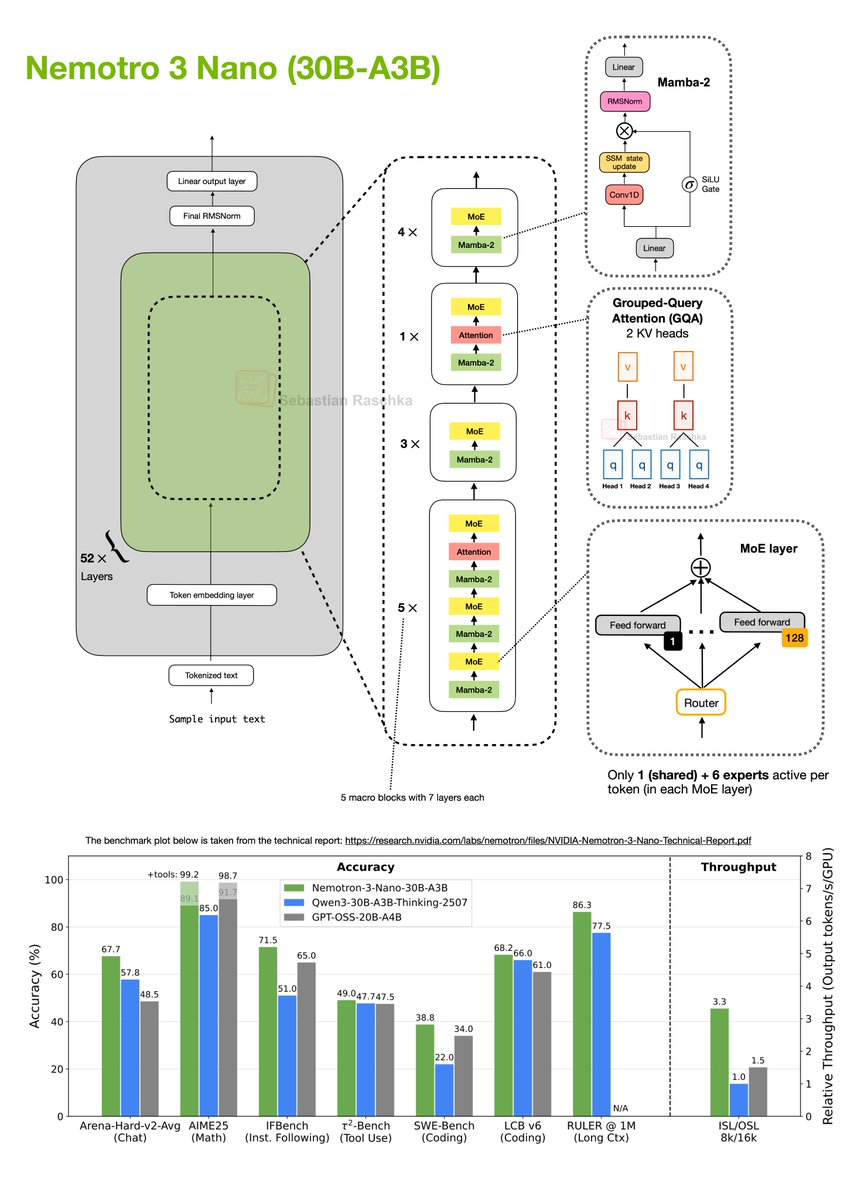

I really didn't expect another major open-weight LLM release this December, but here we go: NVIDIA released their new Nemotron 3 series this week. It comes in 3 sizes: Nano (30B-A3B), Super (100B), and Ultra (500B). Architecture-wise, the models use a Mixture-of-Experts (MoE) Mamba-Transformer hybrid architecture. As of this morning (Dec 19), only the Nano model has been released as an open-weight model, so this post will focus on that one (shown in my drawing below).
Nemotron 3 Nano (30B-A3B) is a 52-layer hybrid Mamba-Transformer model that interleaves Mamba-2 sequence-modeling blocks with sparse Mixture-of-Experts (MoE) feed-forward layers, and uses self-attention only in a small subset of layers. There's a lot going on in the figure, but in short, the architecture is organized into 13 macro blocks with repeated Mamba-2 → MoE sub-blocks, plus a few Grouped-Query Attention layers. In total, if we multiply the macro- and sub-blocks, there are 52 layers in this architecture. Regarding the MoE modules, each MoE layer contains 128 experts but activates only 1 shared and 6 routed experts per token.
The Mamba-2 layers would take a whole article by themselves to explain (perhaps a topic for another time). But for now, conceptually, you can think of them as similar to the Gated DeltaNet approach that Qwen3-Next and Kimi-Linear use, which I covered in my Beyond Standard LLMs article. The similarity between Gated DeltaNet and Mamba-2 layers is that both replace standard attention with a gated state-space update. The idea behind this state-space-style module is that it maintains a running hidden state and mixes new inputs via learned gates. In contrast to attention, it scales linearly instead of quadratically with the input sequence length.
What's actually quite exciting about this architecture is its really good performance compared to pure transformer architectures of similar size (like Qwen3-30B-A3B-Thinking-2507 and GPT-OSS-20B-A4B), while achieving much higher tokens-per-second throughput. Overall, this is an interesting direction, even more extreme than Qwen3-Next and Kimi-Linear in its use of only a few attention layers. However, one of the strengths of the transformer architecture is its performance at a (really) large scale. I am curious to see how the larger Nemotron 3 Super and especially Ultra will compare to the likes of DeepSeek V3.2.
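
To make the "128 experts, 1 shared and 6 routed per token" description more concrete, here is a minimal sketch of top-k MoE routing in plain Python. It is not NVIDIA's implementation: the softmax-over-selected-logits weighting, the reading of "128 experts" as 128 routed experts plus a separate shared one, the scalar "hidden state", and the names `route_token` and `moe_forward` are all assumptions made for illustration.

```python
import math

NUM_EXPERTS = 128  # experts per MoE layer (from the post; assumed to be routed experts)
TOP_K = 6          # routed experts activated per token (from the post)


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts for one token and normalize their weights.

    Returns a list of (expert_index, weight) pairs, highest logit first.
    Normalizing only over the selected logits is an assumption; real
    routers differ in how they score and normalize.
    """
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    chosen = order[:top_k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x, router_logits, routed_experts, shared_expert):
    """Combine the always-on shared expert with the top-k routed experts.

    x is a scalar here to keep the sketch short; in a real model it is a
    hidden-state vector and each expert is a small feed-forward network.
    """
    out = shared_expert(x)
    for idx, weight in route_token(router_logits):
        out += weight * routed_experts[idx](x)
    return out
```

Only 7 of the expert functions run per token in this sketch (1 shared plus 6 routed), which is the sense in which a sparse MoE layer keeps the active parameter count far below the total.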

Just updated the Big LLM Architecture Comparison article... ...it grew quite a bit since the initial version in July 2025, more than doubled! https://t.co/oEt8XzNxik https://t.co/RZuwp6ZUaF

https://t.co/gBgot9C1ZV

https://t.co/1VkhHOw1X6

Believe so brightly that everyone sees the beauty in believing. ~ Native American 🪶✨ https://t.co/PLFFDE0g86

Native Beauty 🌹❤️🔥❤️🔥🌹🌹 If you're a Native beauty fan of mine can I get a big….YESS !!! I love you All❤️ https://t.co/gG4VDMMcWq

“We each want nothing more than to live for the moment! Nature hardwired us perpetually to follow the call of the wild, cull all the highs in life, and rejoice in life by dancing, singing, jumping, building nests, creating beauty, and playing with our young! We each find ourselves happiest when we are engaging in conduct that makes us feel Alive!” ~ K. J. Oldster

Thank you for links @snkr_twitr union X JORDAN 📸 by me! 🤗💟💟💟 https://t.co/tV85BwEeta

Hãu mitakonabi! 💕 Macaže ne Jordy Ironstar, mitaguyabi Céga K’inna eda hambi. Hello my friends! My name is @JordenIronstar & I am a Two Spirit bead artist from Carry the Kettle Nakoda Nation. ✨ I will be your host this week. So buckle up, it’s going to be a bumpy ride 🚗 https://t.co/FnOMWq3M16

Happy National Indigenous Peoples Day! #Nunavut https://t.co/SiOtm6vhx9

wants to give flowers but doesn't want to spend any money - a jordan story https://t.co/As39P8ETTf

jordan does jastip (personal shopping runs) again - a jisung three-tweet AU https://t.co/U0j3XbiRRe

@jordanbpeterson @petersonacademy I’ll sign up if I get an in-person interview with you. I probably won’t wear this to it…. Probably https://t.co/sFkVmdosEk

https://t.co/bM1AVlWGbk

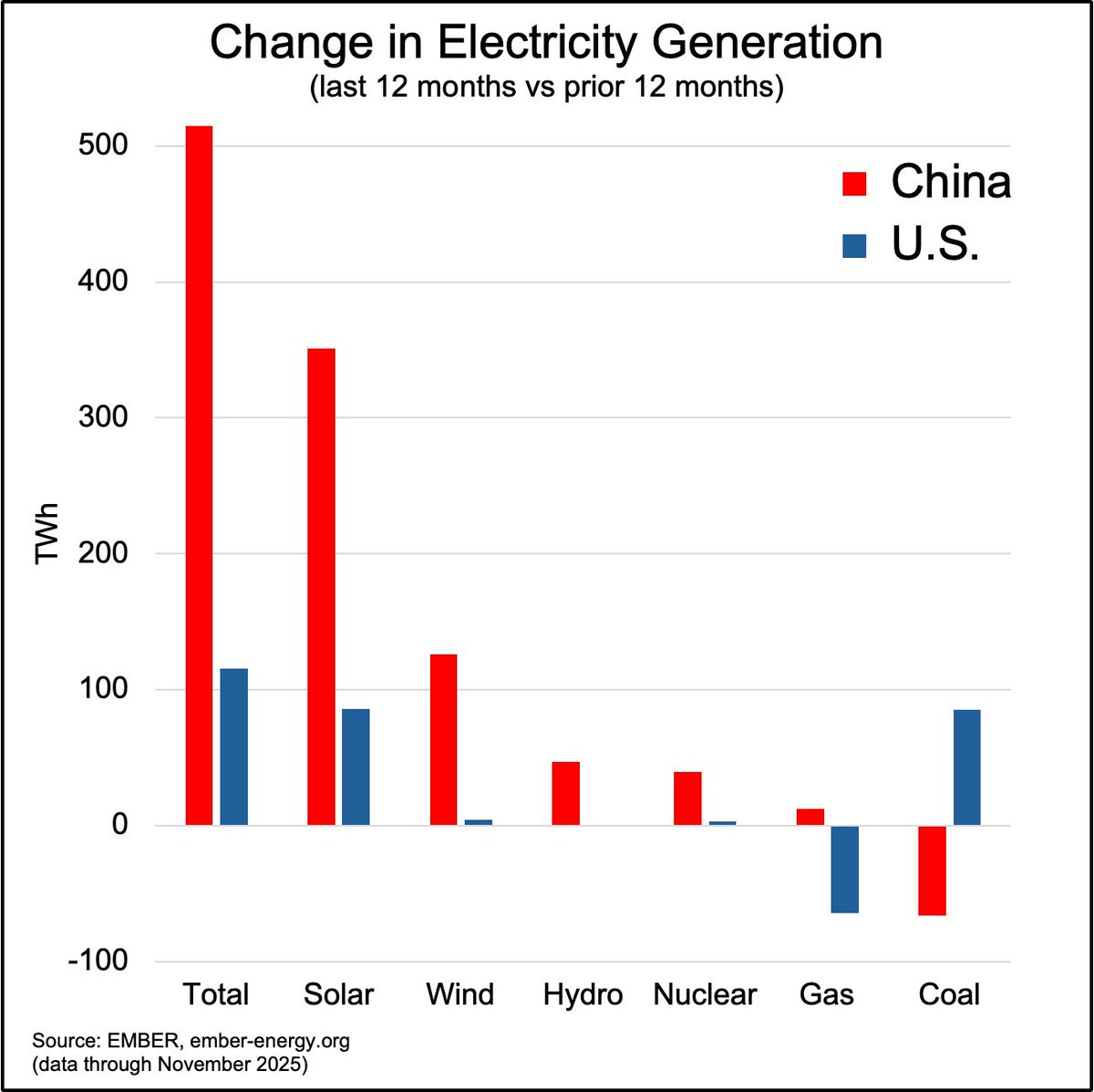

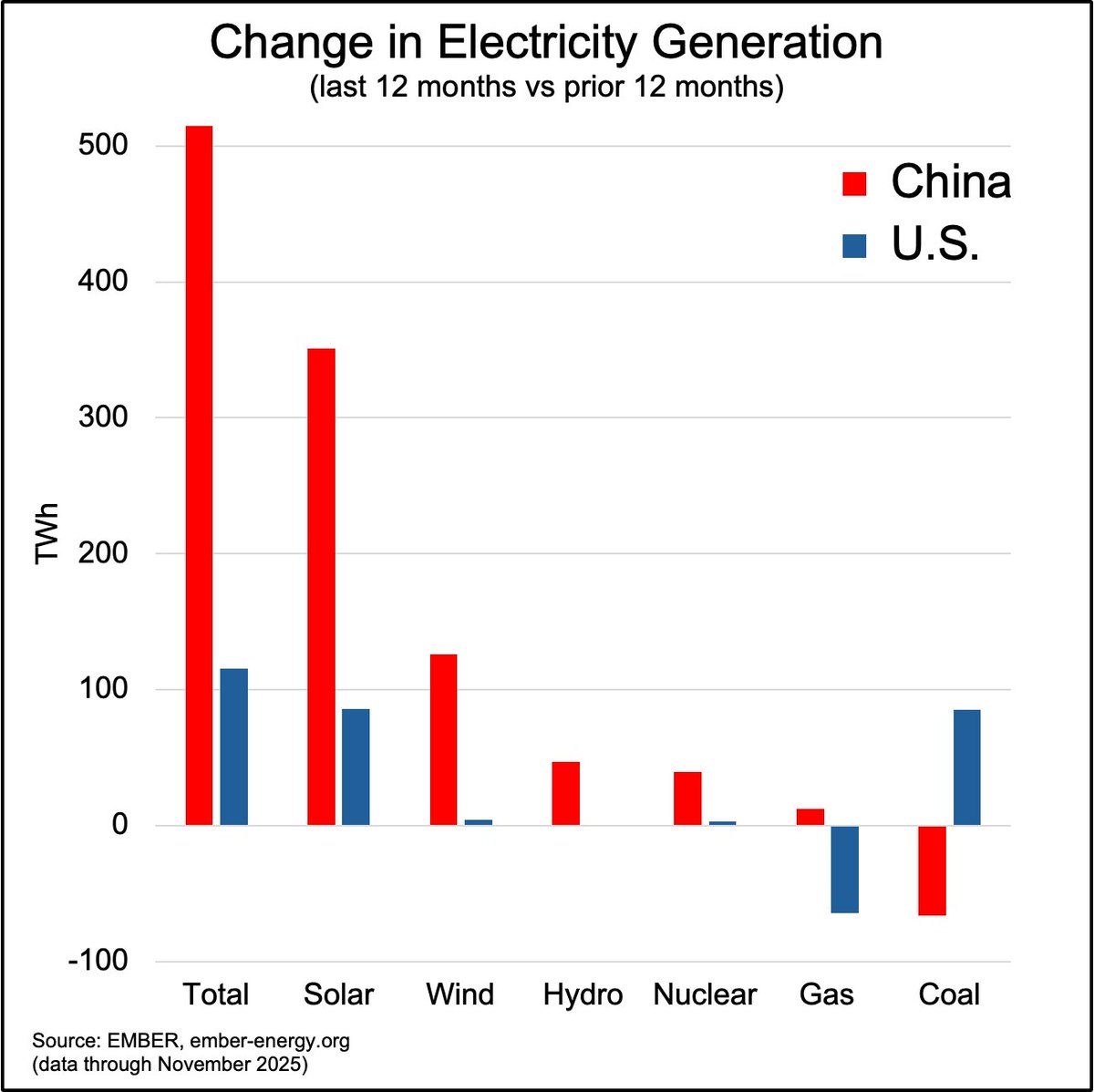

China has grown by over 500 TWh in the past 12 months. Coal is down 66 TWh. https://t.co/g3BQU4UiBg

Here’s how $NVDA is building AI that becomes the brains inside chip factories. “Vision AI agents” are systems that see through cameras and sensors then act in real time without waiting for human intervention. https://t.co/qtBWSpZgb2

[First reveal] Native product, illustration by 呉マサヒロ. Project in progress! #wf2020w #ネイティブ https://t.co/LXghL93Bm3

[First reveal] Illustration by MだSたろう: "エリミア". Sculpt: にゃばー #ロケットボーイ #エロホビ https://t.co/2XYGYzY2Rp

[First reveal] Illustration by だにまる: "蠱惑の地雷系ネコマタ配信者クロ" (Kuro, the alluring jirai-kei nekomata streamer). Project in progress! #のくちゅるぬ #エロホビ https://t.co/Zpphx2NYmr

Illustration by しおこんぶ: "星乃 リリ". Sculpt: あろえもなか #ロケットボーイ #エロホビ https://t.co/GfEqZgrSOA

[First reveal] Illustration by 米白粕: "夢現". Sculpt: げぼぼ #ロケットボーイ #エロホビ https://t.co/IUDarU4et2

[First reveal] Illustration by u介: "うしちゃん". Sculpt: マーボー豆腐(Tofu) #ホットビーナス #エロホビ https://t.co/HxU8CwrpI6

[First reveal] [First painted prototype] Illustration by 羽織イオ: "白バニー:ルビー" (White Bunny: Ruby). Sculpt: BINDing #バインディング #エロホビ https://t.co/cxKpKm8YKV

So... Postgres is now basically a search engine? pg_textsearch was just open sourced. It brings BM25 ranking to searches over your database, a massive upgrade for keyword search. Google uses BM25 in their search engine. Claude told me: "if you're already on Postgres, you can now skip the whole sync-your-data-to-Elasticsearch dance for search." (ps, how can you not love Claude). Now I've got to figure out how to implement it in my Django querysets... future course? Grab it at https://t.co/bMwRSgtOcO #sponsored
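
For readers who have not met BM25 before, here is a rough sketch of the scoring formula in plain Python. It does not show pg_textsearch's API or how it would be called from Django; it only illustrates the ranking idea. The function name `bm25_scores`, the whitespace tokenizer, the Lucene-style IDF variant, and the defaults `k1=1.2` and `b=0.75` are assumptions chosen for illustration.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Score each document against the query with Okapi BM25.

    Tokenization is a naive lowercase whitespace split (an assumption;
    real engines apply stemming, stop words, and so on).
    """
    if not docs:
        return []
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized) / n_docs

    # Document frequency: in how many documents each term appears.
    doc_freq = Counter()
    for tokens in tokenized:
        doc_freq.update(set(tokens))

    query_terms = query.lower().split()
    scores = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        score = 0.0
        for term in query_terms:
            tf = term_freq.get(term, 0)
            if tf == 0:
                continue
            n_t = doc_freq[term]
            # Rare terms get a higher weight (IDF variant that is never negative).
            idf = math.log((n_docs - n_t + 0.5) / (n_t + 0.5) + 1.0)
            # Term frequency saturates via k1; b controls length normalization.
            denom = tf + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf * (k1 + 1) / denom
        scores.append(score)
    return scores
```

Compared with plain keyword matching, the score rewards rare query terms, dampens the effect of repeating a term many times, and corrects for long documents, which is why BM25 tends to rank results more sensibly.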

Nothing can stop you from reclaiming your songs and your dances. https://t.co/jKNsXaAezD