@rasbt

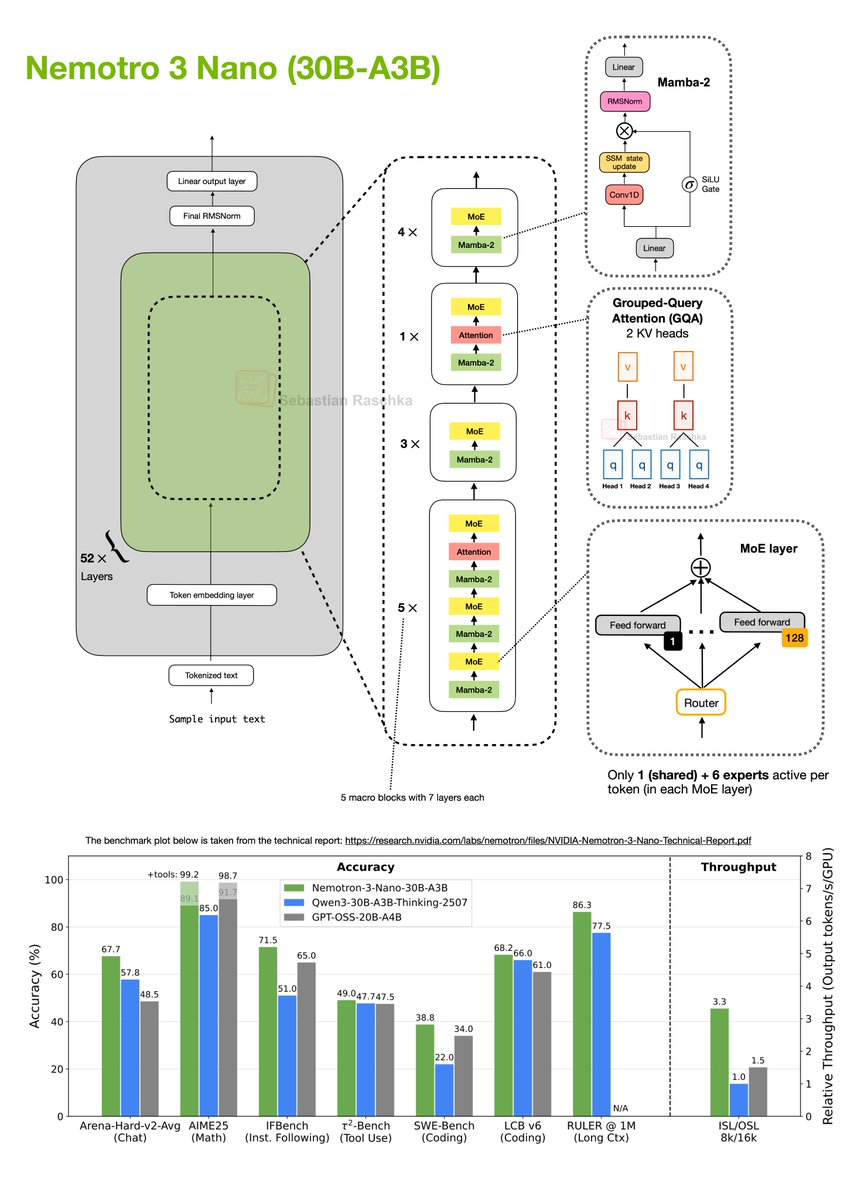

I really didn't expect another major open-weight LLM release this December, but here we go: NVIDIA released their new Nemotron 3 series this week. It comes in 3 sizes: Nano (30B-A3B), Super (100B), and Ultra (500B). Architecture-wise, the models are Mixture-of-Experts (MoE) Mamba-Transformer hybrids. As of this morning (Dec 19), only the Nano model has been released as an open-weight model, so this post will focus on that one (shown in my drawing above).

Nemotron 3 Nano (30B-A3B) is a 52-layer hybrid Mamba-Transformer model that interleaves Mamba-2 sequence-modeling blocks with sparse Mixture-of-Experts (MoE) feed-forward layers, and uses self-attention only in a small subset of layers. There’s a lot going on in the figure, but in short, the architecture is organized into 13 macro blocks with repeated Mamba-2 → MoE sub-blocks, plus a few Grouped-Query Attention layers. In total, if we multiply the macro- and sub-blocks, there are 52 layers in this architecture.

Regarding the MoE modules, each MoE layer contains 128 experts but activates only 1 shared and 6 routed experts per token.

The Mamba-2 layers would take a whole article in themselves to explain (perhaps a topic for another time). But for now, conceptually, you can think of them as similar to the Gated DeltaNet approach that Qwen3-Next and Kimi-Linear use, which I covered in my Beyond Standard LLMs article. The similarity between Gated DeltaNet and Mamba-2 layers is that both replace standard attention with a gated state-space update. The idea behind this state-space-style module is that it maintains a running hidden state and mixes in new inputs via learned gates. In contrast to attention, its compute scales linearly instead of quadratically with the input sequence length.
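To make the "running hidden state plus gates" idea a bit more concrete, here is a deliberately tiny sketch of a gated linear recurrence in plain Python. This is not Mamba-2 (or Gated DeltaNet); it is a scalar toy under my own assumptions. The names `gated_scan` and `attention_pair_count` are made up for illustration, and the gate logits are passed in by hand, whereas in a real model they would be computed from the input by learned weights.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def gated_scan(inputs: list[float], gate_logits: list[float]) -> list[float]:
    """Toy gated recurrence: h_t = a_t * h_{t-1} + (1 - a_t) * x_t.

    One constant-cost update per token, so total work grows linearly
    with the sequence length.
    """
    h = 0.0  # running hidden state (a single scalar in this toy)
    outputs = []
    for x, g in zip(inputs, gate_logits):
        a = sigmoid(g)             # gate in (0, 1): how much old state to keep
        h = a * h + (1.0 - a) * x  # mix the new input into the state
        outputs.append(h)
    return outputs


def attention_pair_count(seq_len: int) -> int:
    """Query-key pairs scored by causal attention: 1 + 2 + ... + seq_len."""
    return seq_len * (seq_len + 1) // 2


if __name__ == "__main__":
    print(gated_scan([1.0, 1.0, 1.0], [0.0, 0.0, 0.0]))
    for t in (1_000, 10_000):
        print(t, "recurrent steps vs", attention_pair_count(t), "attention pairs")
```

The loop touches each token once and only carries `h` forward, which is where the linear scaling comes from; causal attention instead scores every token against all previous ones.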
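The MoE numbers mentioned above (128 experts, with 1 shared and 6 routed experts active per token) can be illustrated with a small routing sketch. Everything except those three numbers is an assumption on my part: I treat the 128 experts as the routed pool with the shared expert kept separate, I normalize the selected router scores with a softmax over the top 6, and the "experts" are just toy scalar functions. The actual Nemotron 3 router may differ in all of these details.

```python
import math
from typing import Callable

NUM_EXPERTS = 128  # experts per MoE layer (from the post; assumed to be the routed pool)
TOP_K = 6          # routed experts activated per token (from the post)

Expert = Callable[[float], float]


def route_token(router_logits: list[float], top_k: int = TOP_K) -> dict[int, float]:
    """Select the top_k highest-scoring experts and softmax-normalize their scores."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    top = ranked[:top_k]
    max_logit = max(router_logits[i] for i in top)  # subtract max for stability
    exps = {i: math.exp(router_logits[i] - max_logit) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def moe_forward(x: float, router_logits: list[float],
                routed_experts: list[Expert], shared_expert: Expert) -> float:
    """Shared expert always runs; only the selected routed experts are evaluated."""
    weights = route_token(router_logits)
    out = shared_expert(x)
    for i, w in weights.items():
        out += w * routed_experts[i](x)
    return out


if __name__ == "__main__":
    # Toy experts: expert i scales its input by (i + 1) / NUM_EXPERTS.
    experts = [lambda x, s=(i + 1) / NUM_EXPERTS: s * x for i in range(NUM_EXPERTS)]
    logits = [math.sin(i) for i in range(NUM_EXPERTS)]  # stand-in router scores
    print(sorted(route_token(logits)))
    print(moe_forward(1.0, logits, experts, shared_expert=lambda x: x))
```

The point of the sketch is the sparsity: per token, only 6 of the 128 routed expert functions are ever called, plus the shared one.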
What’s actually quite exciting about this architecture is its strong performance compared to pure transformer architectures of similar size (like Qwen3-30B-A3B-Thinking-2507 and GPT-OSS-20B-A4B), while achieving much higher tokens-per-second throughput. Overall, this is an interesting direction, and it is even more extreme than Qwen3-Next and Kimi-Linear in that it uses only a few attention layers. However, one of the strengths of the transformer architecture is its performance at (really) large scale. I am curious to see how the larger Nemotron 3 Super and especially Ultra will compare to the likes of DeepSeek V3.2.