Your curated collection of saved posts and media

Revisiting @sarkodie’s stellar debut album, ‘Sarkology’ 🔥 https://t.co/JRBw6m5Oyo

“If you are driven by fear, anger or pride— Nature will force you to compete. If you are guided by courage, awareness, tranquility and peace… nature will serve you.” ~ A. Ray, https://t.co/5H27c6LKSr

From "Super Sonico": "Super Sonico OL Ver." Sculpted by: 八巻 #wf2016s #ネイティブ https://t.co/yQIEmir5Ph

for you 🥰 Cr. Hello_asian #เจ้าแก้มก้อน #Fluke_Natouch https://t.co/nY8YvUA2UJ

The little one came with the rain 💦 #เจ้าแก้มก้อน #Fluke_Natouch https://t.co/LaBbA8TqDT

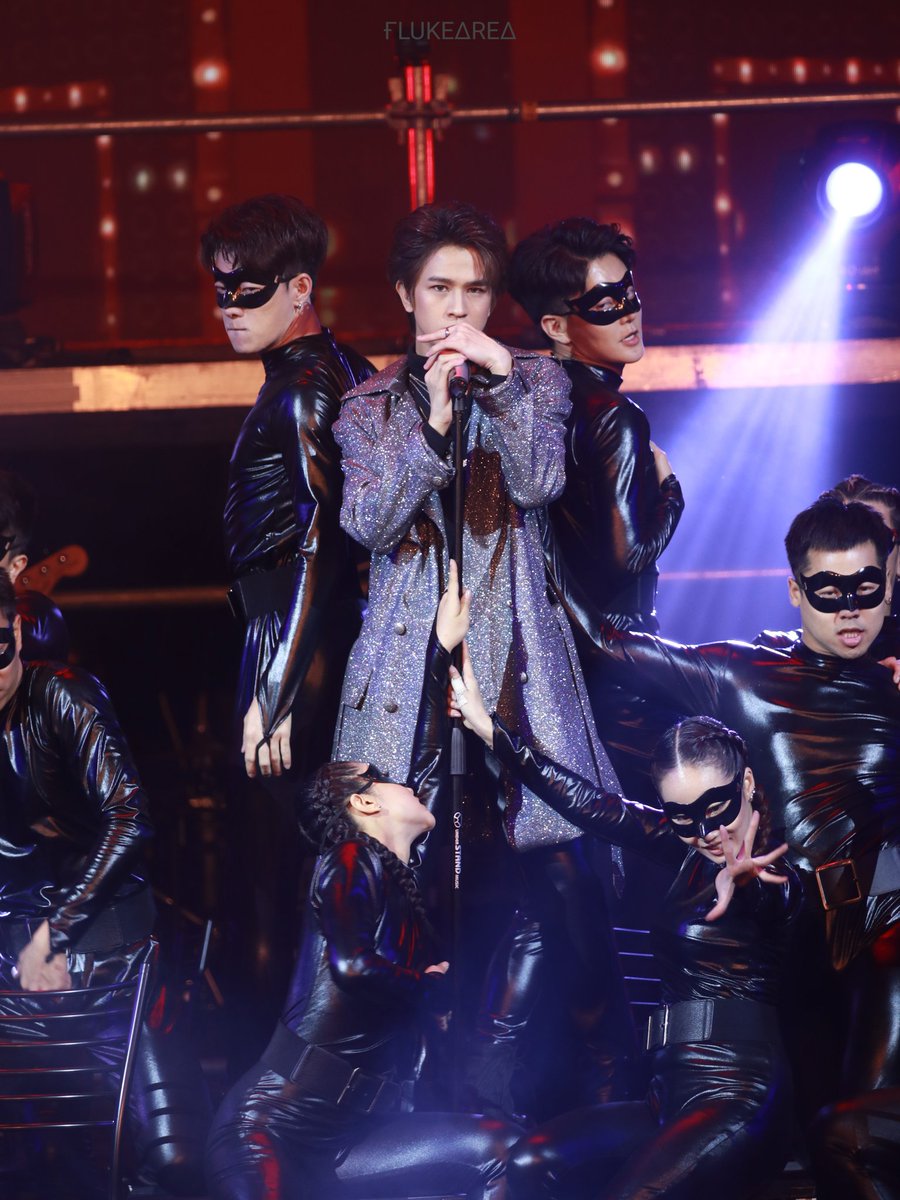

I'd gladly be your slave 😳 #เจ้าแก้มก้อน #Fluke_Natouch https://t.co/iDeKPoK1Kw

I’ve been thinking about the “fine-tuning” question in cosmology. Many physical constants (gravity, the cosmological constant, particle masses) seem to sit in extremely narrow ranges that allow stars, galaxies, and life to exist. Even small changes could make a lifeless universe. Some people interpret this as a hint of intentional design. Others argue it could be explained by the multiverse (we simply live in the universe that works), or by deeper laws of physics we haven’t discovered yet. I’m not claiming a final answer — I just find the question fascinating: What do you think fine-tuning actually tells us?

[Last 2 days] From Native: "Super Sonico Clumsy OL ver."! Orders close soon! (`・ω・´)ゞ https://t.co/oXuavWaOj7 https://t.co/Iit0vZfvUp

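[Orders close Wednesday, December 19] Rocket Boy has faithfully rendered in 3D the fresh-faced beautiful girl "七海 葵" drawn by momi! Swappable body parts make it possible to reproduce both the snug-fitting swimsuit look and the released state. https://t.co/nRqEPywzKj https://t.co/ulxa5KmFVw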

"ノーラ", illustrated by 島田フミカネ. Sculpted by: アビラ #wf2019w #ネイティブ https://t.co/EsfqPyHxxP

“Those who know how to maintain silence know how to maintain everything.” ~ N. Namdeo https://t.co/YM1tmM35dm

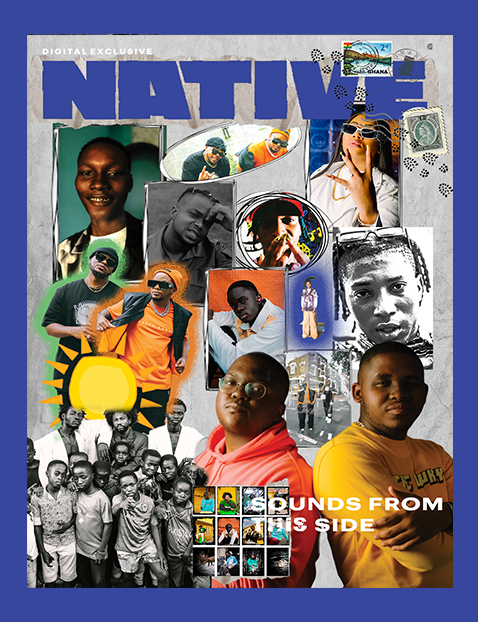

🚨 NEW DIGITAL COVER ALERT🚨 The NATIVE Presents: Sounds From 𝓣𝓱𝓲𝓼 Side featuring: Street Pop 3.0 🇳🇬 Amapiano 🇿🇦 Asakaa Drill 🇬🇭 FULL STORY: https://t.co/Ka8lhCfueu https://t.co/9ETnVgdVJd

GREAT PHOTO OF >ADAM BEACH< HAVE A BLESSED WEEKEND BROTHER https://t.co/aSGWjCReBM

Found old @modal swag. Smells good! https://t.co/Ld3VV0RzRI

Looking for a festive Yule log to brighten up your terminal? You’ll love @leereilly’s GitHub CLI extension that gives you a cozy, animated Git log. 🔥 🪵 https://t.co/2oMEsTkEMP https://t.co/bxhmd254GE

We’re releasing Bloom, an open-source tool for generating behavioral misalignment evals for frontier AI models. Bloom lets researchers specify a behavior and then quantify its frequency and severity across automatically generated scenarios. Learn more: https://t.co/TwKstpLSy3

‘Logan was terrified for Jake’ — and honestly… you can feel the tension in his face. What do you think — genuine fear, or just a bad freeze-frame/angle? 🥊👀 @LoganPaul @jakepaul https://t.co/Hku9WLy1GC

Nana conference Attacca conference https://t.co/pcGv8RkuQP

RAG systems struggle with multi-hop reasoning. In most cases, the problem isn't the LLMs. It's the retrieval system. Standard RAG treats each piece of evidence as equally reliable, ignoring how documents connect to each other.
Why is this a problem? When questions require reasoning across multiple sources, single-shot retrieval often misses "bridge" documents whose entities aren't mentioned in the original query. Iterative retrieval helps, but it introduces new issues: LLM-guided graph traversal can hallucinate or become stuck on partial reasoning from previous steps.
This new research introduces SA-RAG, a framework that applies spreading activation, a mechanism from cognitive psychology, to knowledge-graph-based retrieval.
How does it work? Instead of relying on the LLM to decide which documents to fetch next, activation propagates automatically through a knowledge graph. Starting from entities matched to the query, activation spreads outward through weighted connections, with strength diminishing over distance. Documents linked to highly activated entities get retrieved.
The system builds a hybrid structure during indexing. An LLM extracts entities and relationships from text chunks, creating a knowledge graph where documents connect to entities through "describes" links. At query time, seed entities are identified by embedding similarity, then activation flows through the graph in a breadth-first manner.
On MuSiQue, SA-RAG alone achieves 67% answer correctness with phi4, outperforming naive RAG at 45% and CoT-based iterative retrieval at 55%. When combined with chain-of-thought iterative retrieval, it reaches 74% on MuSiQue and 87% on 2WikiMultiHopQA.
This system demonstrates a 25% to 39% absolute improvement over naive RAG across benchmarks. Notably, these results come from small, open-weight models like phi4 and gemma3, which require no fine-tuning. Spreading activation captures associative relevance rather than surface-level similarity. The method works as a plug-and-play module, boosting any training-free RAG pipeline without architectural changes.
Paper: https://t.co/jLZLkacDAX
Learn to build effective RAG and AI agents in our academy: https://t.co/zQXQt0PMbG
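
A rough sketch of the spreading-activation idea described in the post above, not the paper's actual implementation. The function names, the `decay` and `threshold` parameters, the max-based update rule, and the toy graph are all assumptions made for illustration; the post only says that activation starts at seed entities, spreads breadth-first over weighted links while weakening with distance, and that documents linked to highly activated entities are retrieved.

```python
def spread_activation(graph, seeds, decay=0.5, threshold=0.1, max_hops=3):
    """Propagate activation breadth-first from seed entities.

    graph: {entity: [(neighbor, edge_weight), ...]}  (hypothetical format)
    seeds: {entity: initial_activation}, e.g. from embedding similarity
    """
    activation = dict(seeds)
    frontier = dict(seeds)
    for _ in range(max_hops):
        next_frontier = {}
        for node, act in frontier.items():
            for neighbor, weight in graph.get(node, []):
                passed = act * weight * decay  # strength diminishes per hop
                if passed < threshold:
                    continue  # too weak to keep spreading
                if passed > activation.get(neighbor, 0.0):
                    activation[neighbor] = passed
                    next_frontier[neighbor] = passed
        if not next_frontier:
            break
        frontier = next_frontier
    return activation


def retrieve(activation, describes, top_k=3):
    """Rank documents by their most activated linked entity.

    describes: {doc_id: [entity, ...]} mirrors the "describes" links.
    """
    scores = {
        doc: max((activation.get(e, 0.0) for e in entities), default=0.0)
        for doc, entities in describes.items()
    }
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [doc for doc, score in ranked[:top_k] if score > 0]


# Toy data (invented): the query only mentions "Marie Curie", but the
# bridge document about "Sorbonne" is still reached through the graph.
graph = {
    "Marie Curie": [("Pierre Curie", 0.9)],
    "Pierre Curie": [("Sorbonne", 0.8)],
}
describes = {
    "doc_a": ["Marie Curie"],
    "doc_b": ["Pierre Curie"],
    "doc_c": ["Sorbonne"],
    "doc_d": ["Eiffel Tower"],
}
activation = spread_activation(graph, {"Marie Curie": 1.0})
docs = retrieve(activation, describes)
```

Here `doc_c` is retrieved even though "Sorbonne" never appears in the query, which is the "bridge document" case the post describes, while the unconnected `doc_d` is left out.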

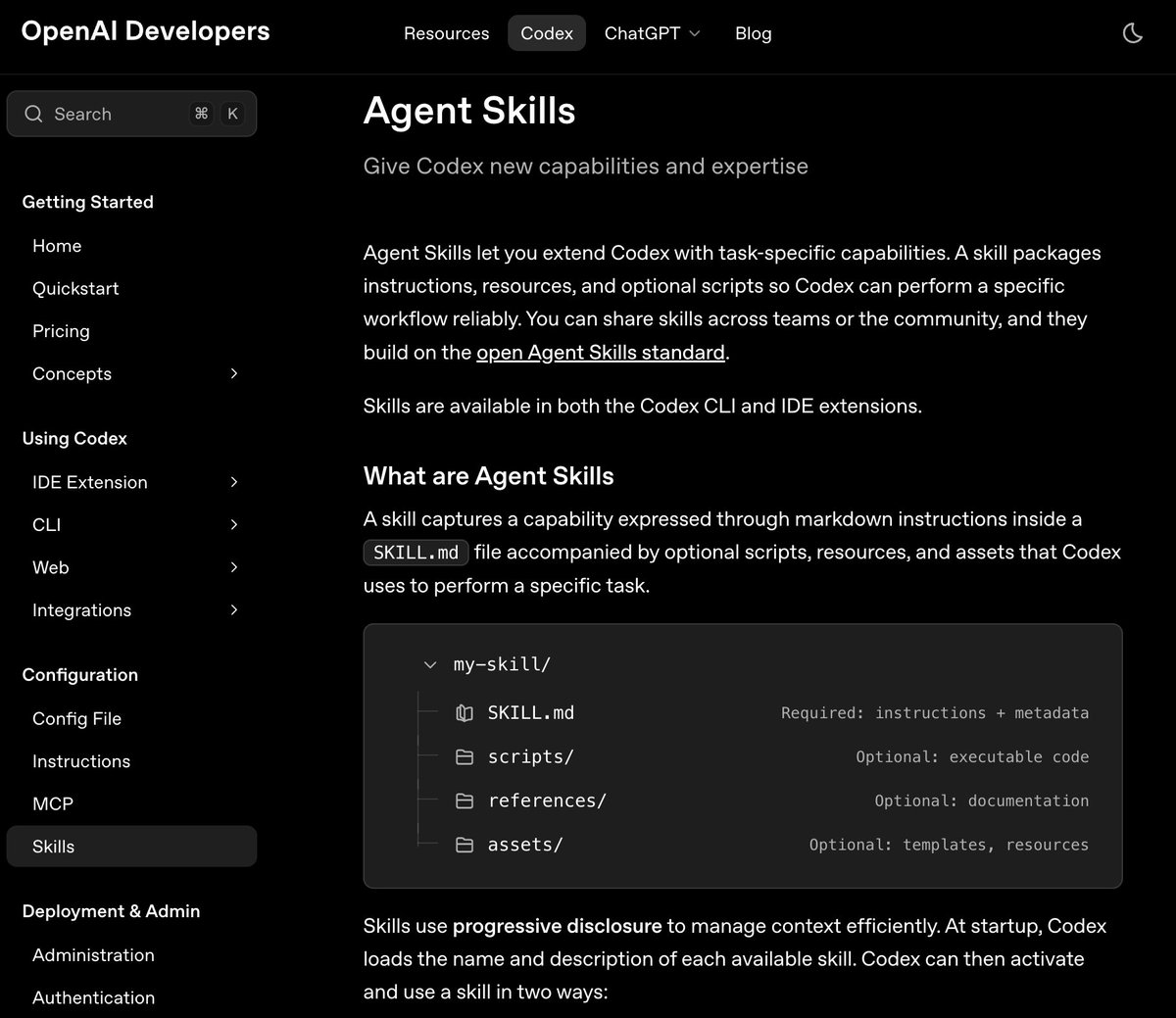

Check out the other skill examples in the repo. https://t.co/Oj3Oh5kJ9v

@gr00vyfairy You can also use it to create what I think is the best OG image ever https://t.co/0D8Hr798NW

Action produces information. https://t.co/MMP7wfuWCw

Skills is now officially supported in Codex. There is a neat built-in skill for planning. This is the best way to pull in the right context at the right time. Also, a great way to build highly specialized skills for your coding agents. https://t.co/fviSC12aci

Me and the homies. https://t.co/pU9i20ako1

What are you buying? https://t.co/UsipE27Stv

This is wild. A real-time webcam demo using SmolVLM from @huggingface and llama.cpp! 🤯 Running fully local on a MacBook M3. https://t.co/BQ1HyP7RoC

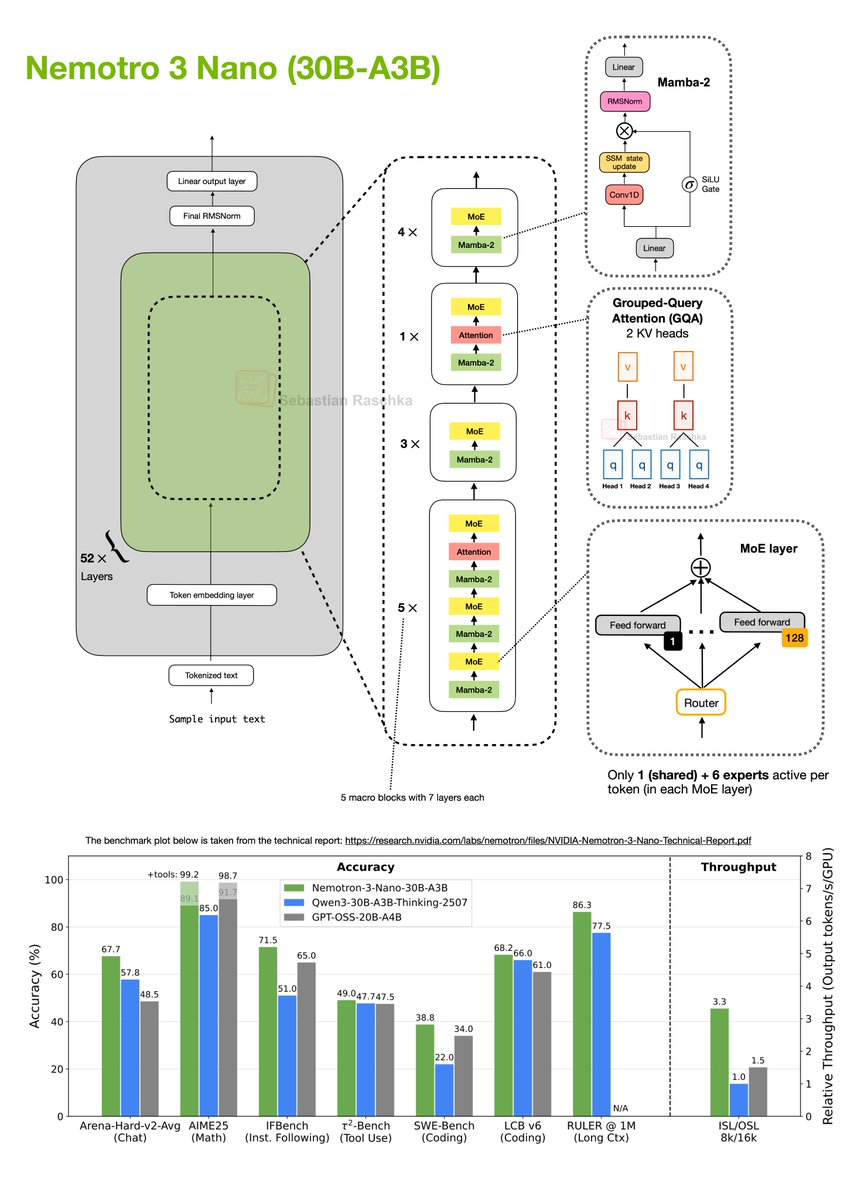

I really didn't expect another major open-weight LLM release this December, but here we go: NVIDIA released their new Nemotron 3 series this week. It comes in 3 sizes: Nano (30B-A3B), Super (100B), and Ultra (500B). Architecture-wise, the models are a Mixture-of-Experts (MoE) Mamba-Transformer hybrid architecture. As of this morning (Dec 19), only the Nano model has been released as an open-weight model, so this post will focus on that one (shown in my drawing below).
Nemotron 3 Nano (30B-A3B) is a 52-layer hybrid Mamba-Transformer model that interleaves Mamba-2 sequence-modeling blocks with sparse Mixture-of-Experts (MoE) feed-forward layers, and uses self-attention only in a small subset of layers. There’s a lot going on in the figure above, but in short, the architecture is organized into 13 macro blocks with repeated Mamba-2 → MoE sub-blocks, plus a few Grouped-Query Attention layers. In total, if we multiply the macro- and sub-blocks, there are 52 layers in this architecture.
Regarding the MoE modules, each MoE layer contains 128 experts but activates only 1 shared and 6 routed experts per token.
The Mamba-2 layers would take a whole article in themselves to explain (perhaps a topic for another time). But for now, conceptually, you can think of them as similar to the Gated DeltaNet approach that Qwen3-Next and Kimi-Linear use, which I covered in my Beyond Standard LLMs article. The similarity between Gated DeltaNet and Mamba-2 layers is that both replace standard attention with a gated-state-space update. The idea behind this state-space-style module is that it maintains a running hidden state and mixes new inputs via learned gates. In contrast to attention, it scales linearly instead of quadratically with the input sequence length.
What’s actually quite exciting about this architecture is its really good performance compared to pure transformer architectures of similar size (like Qwen3-30B-A3B-Thinking-2507 and GPT-OSS-20B-A4B), while achieving much higher tokens-per-second throughput. Overall, this is an interesting direction, even more extreme than Qwen3-Next and Kimi-Linear in its use of only a few attention layers. However, one of the strengths of the transformer architecture is its performance at a (really) large scale. I am curious to see how the larger Nemotron 3 Super and especially Ultra will compare to the likes of DeepSeek V3.2.
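
To make the "128 experts, 1 shared plus 6 routed per token" statement from the post above concrete, here is a toy routing sketch. It is not NVIDIA's implementation: the softmax over the selected logits, the scalar "experts", and all names are assumptions for illustration. Only the expert counts come from the post.

```python
import math
import random

NUM_EXPERTS = 128  # routed experts per MoE layer (from the post)
TOP_K = 6          # routed experts activated per token (from the post)


def route(router_logits, top_k=TOP_K):
    """Pick the top_k experts and normalize their scores (assumed softmax)."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    peak = max(router_logits[i] for i in chosen)  # for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_forward(x, router_logits, routed_experts, shared_expert):
    """The shared expert always runs; only top_k routed experts are evaluated."""
    out = shared_expert(x)
    for idx, weight in route(router_logits):
        out += weight * routed_experts[idx](x)
    return out


# Toy setup: each "expert" is just a scalar multiplier (hypothetical).
rng = random.Random(0)
routed_experts = [lambda x, s=rng.uniform(-1, 1): s * x
                  for _ in range(NUM_EXPERTS)]
shared_expert = lambda x: 0.5 * x
router_logits = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]

picks = route(router_logits)
y = moe_forward(2.0, router_logits, routed_experts, shared_expert)
```

Per token, only 7 of the 129 expert functions are evaluated, which is why the active parameter count (the "A3B" in 30B-A3B) is much smaller than the total.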

Just updated the Big LLM Architecture Comparison article... ...it grew quite a bit since the initial version in July 2025, more than doubled! https://t.co/oEt8XzNxik https://t.co/RZuwp6ZUaF

https://t.co/gBgot9C1ZV

https://t.co/1VkhHOw1X6

Believe so brightly that everyone sees the beauty in believing. ~ Native American 🪶✨ https://t.co/PLFFDE0g86

Native Beauty 🌹❤️🔥❤️🔥🌹🌹 If you're a Native beauty fan of mine can I get a big….YESS !!! I love you All❤️ https://t.co/gG4VDMMcWq