Your curated collection of saved posts and media

A thingy for the #HAPPYTREEFRIENDSAREBACK https://t.co/iVZ9x6rcmA

“All Dreams Spin Out From The Same Web.” ~ Native American https://t.co/vbXB1eGtF4

Diffusion serving is expensive: dozens of timesteps per image, and a lot of redundant compute between adjacent steps. ⚡vLLM-Omni now supports diffusion cache acceleration backends (TeaCache + Cache-DiT) to reuse intermediate Transformer computations — no retraining, minimal quality impact! 🚀Benchmarks (NVIDIA H200, Qwen-Image 1024x1024): TeaCache 1.91x, Cache-DiT 1.85x. For Qwen-Image-Edit, Cache-DiT hits 2.38x! Blog: https://t.co/TiC0WhbgQp Docs: https://t.co/0qatboeIe3 #vLLM #vLLMOmni #DiffusionModels #AIInference
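The reuse idea in the post above can be illustrated with a toy sketch. This is not vLLM-Omni's API and not the actual TeaCache or Cache-DiT algorithm; `StepCache`, `rel_l1`, and the threshold value are hypothetical names chosen for illustration. The assumption is simply that when a step's input is close to the input of the last fully computed step, the cached residual can be reapplied instead of running the expensive block again.

```python
from typing import Callable, List, Optional

Vector = List[float]


def rel_l1(a: Vector, b: Vector) -> float:
    """Relative L1 distance of a from b (hypothetical helper)."""
    denom = sum(abs(x) for x in b) or 1.0
    return sum(abs(x - y) for x, y in zip(a, b)) / denom


class StepCache:
    """Toy wrapper that skips an expensive block when inputs barely change."""

    def __init__(self, block: Callable[[Vector], Vector], threshold: float):
        self.block = block
        self.threshold = threshold
        self.last_input: Optional[Vector] = None     # input at last full compute
        self.last_residual: Optional[Vector] = None  # block(x) - x at that step
        self.computed_steps = 0

    def __call__(self, x: Vector) -> Vector:
        if (
            self.last_input is not None
            and self.last_residual is not None
            and rel_l1(x, self.last_input) < self.threshold
        ):
            # Input is close to the cached one: reuse the stored residual.
            return [v + r for v, r in zip(x, self.last_residual)]
        # Otherwise run the expensive block and refresh the cache.
        out = self.block(x)
        self.computed_steps += 1
        self.last_input = list(x)
        self.last_residual = [o - v for o, v in zip(out, x)]
        return out


def toy_block(x: Vector) -> Vector:
    """Stand-in for an expensive Transformer forward pass."""
    return [0.9 * v for v in x]
```

In a denoising loop, `StepCache(toy_block, threshold=0.15)` would be called once per timestep in place of the raw block; a higher threshold skips more steps at the cost of more drift from the uncached result.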

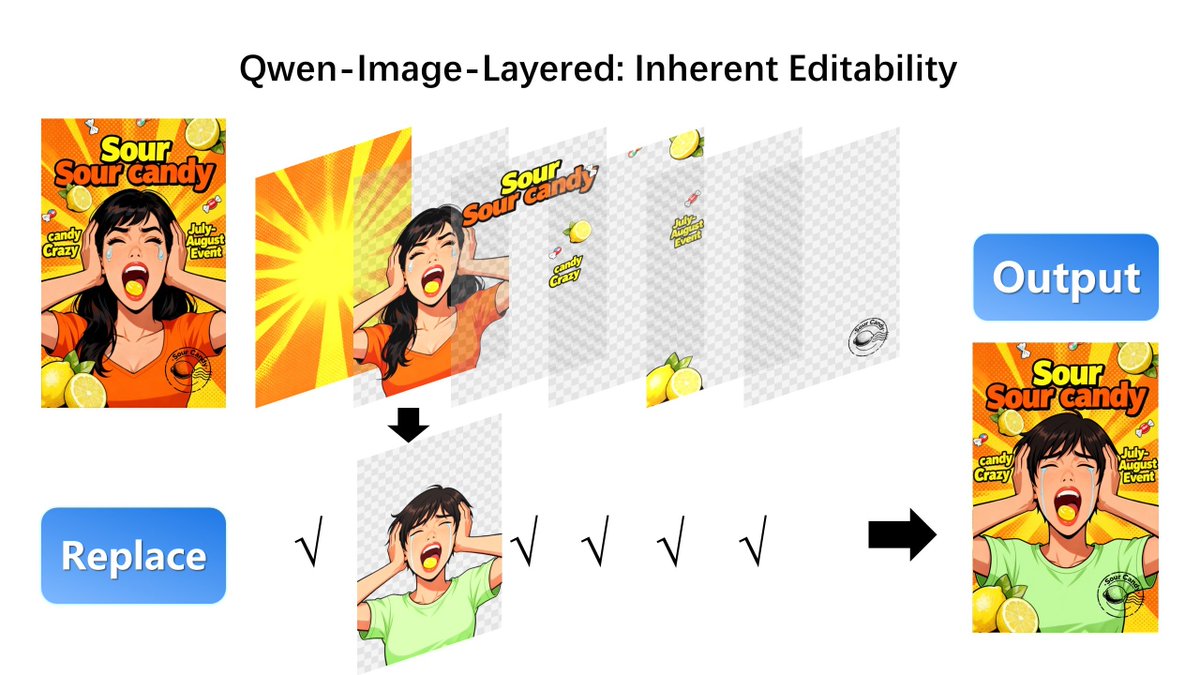

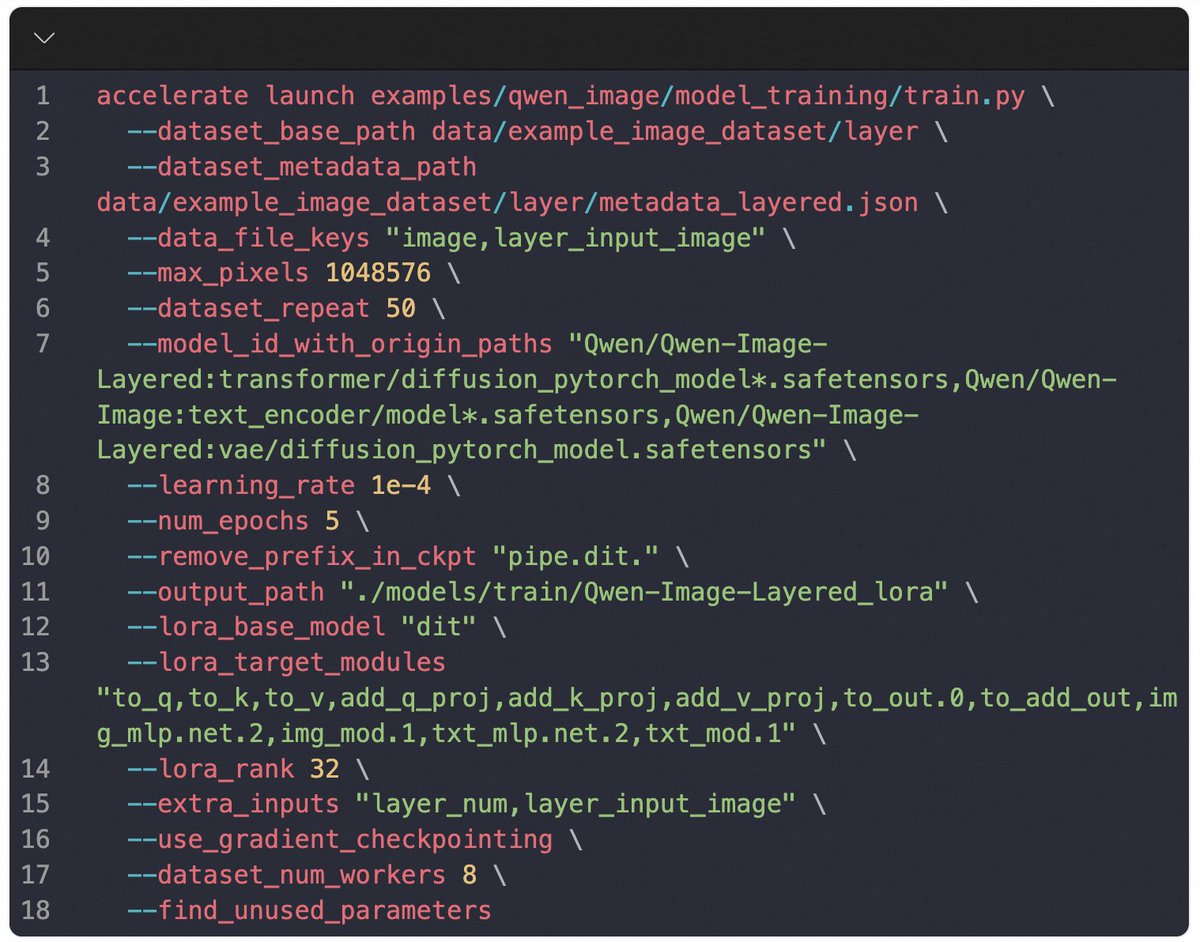

🚀DiffSynth-Studio now supports Qwen-Image-Layered training, so you can train your own layered image decomposer with just a few lines of code. 🛠️ Think Photoshop for AI images: move, recolor, or replace one element while the background stays crisp and the style stays intact. 🎨 Qwen-Image-Layered decomposes any RGB image into semantic RGBA layers, enabling true inherent editability: ✅ Reposition a person → background untouched ✅ Resize text → no style drift ✅ Swap a shirt → face unchanged Powered by RGBA-VAE + VLD-MMDiT, it supports variable layers and recursive decomposition: no ghosting, no artifacts. Train your own model today with DiffSynth-Studio! 🔗 DiffSynth-Studio: https://t.co/CViCA6Xh2s 🤖 Model: https://t.co/V9yXltIX9N 🌟 Demo: https://t.co/ySdRT6dEpi 📄 Paper: https://t.co/5fsAfPLcqz
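To make the "RGBA layers" idea concrete, here is a minimal sketch of how a stack of RGBA layers recombines into one image, shown for a single pixel. It uses the standard "over" alpha-compositing operator with straight (non-premultiplied) alpha; it is not code from DiffSynth-Studio or Qwen-Image-Layered, and the `over` and `composite` names are illustrative only.

```python
from typing import Iterable, Tuple

Pixel = Tuple[float, float, float, float]  # (r, g, b, a), each in [0, 1]


def over(top: Pixel, bottom: Pixel) -> Pixel:
    """Composite one straight-alpha pixel over another."""
    tr, tg, tb, ta = top
    br, bg, bb, ba = bottom
    out_a = ta + ba * (1 - ta)
    if out_a == 0:
        return (0.0, 0.0, 0.0, 0.0)  # fully transparent result

    def blend(t: float, b: float) -> float:
        return (t * ta + b * ba * (1 - ta)) / out_a

    return (blend(tr, br), blend(tg, bg), blend(tb, bb), out_a)


def composite(layers: Iterable[Pixel]) -> Pixel:
    """Flatten layers given in back-to-front order."""
    result: Pixel = (0.0, 0.0, 0.0, 0.0)
    for layer in layers:
        result = over(layer, result)
    return result
```

Because each layer carries its own alpha, editing or removing one layer changes only the pixels that layer covers, which is the property the post describes as the background staying untouched.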

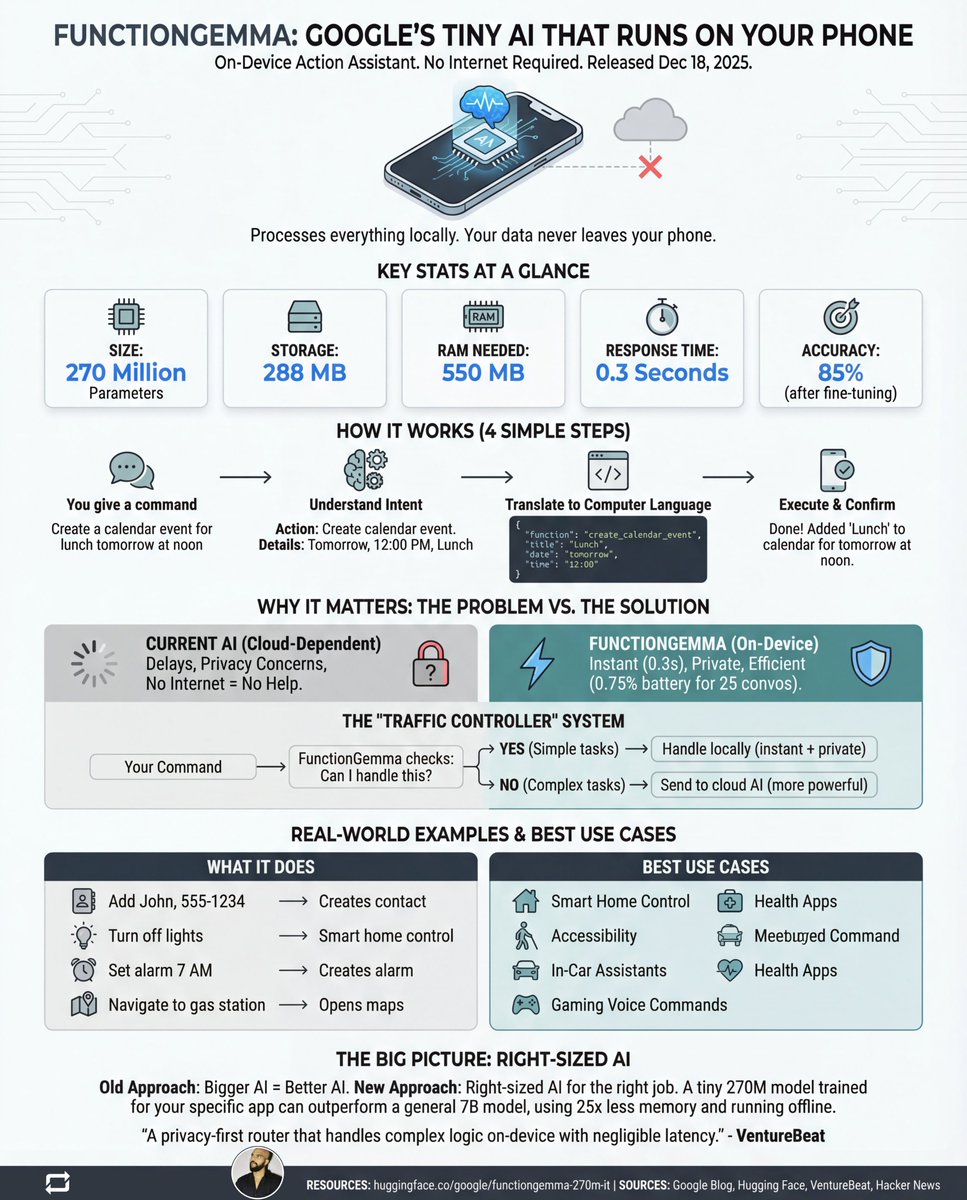

Google just quietly dropped an AI that runs on your mobile and doesn't need the internet.
- 270 million parameters.
- 100% private.
- No servers.
- No cloud.
- No data leaving your device.
It's called FunctionGemma. Released December 18, 2025. And it does something wild: It turns your voice commands into REAL actions on your phone. No internet required. No data leaving your device. No waiting for servers. Just you and your phone. That's it.
Let me break down why this matters:
Current AI assistants work like this: You speak → Words go to the cloud → Server processes → Answer returns
The problem?
→ Slow (internet round-trip)
→ Privacy nightmare (your data travels everywhere)
→ Useless offline (no signal = no help)
FunctionGemma flips this completely. Everything happens ON your device. Response time? 0.3 seconds. Battery drain? 0.75% for 25 conversations. File size? 288 MB. That's smaller than most mobile games.
Here's how it actually works:
Step 1: You say "Add John to contacts, number 555-1234"
Step 2: FunctionGemma understands your intent
Step 3: Translates it to code your phone understands
Step 4: Your phone executes it instantly
Step 5: Done. Contact saved. No cloud involved.
The numbers that blew my mind:
• 270M parameters (6,600x smaller than GPT-4)
• 126 tokens per second
• 85% accuracy after fine-tuning
• 550 MB RAM usage
• Works 100% offline
But here's the real genius: Google calls it the "Traffic Controller" approach.
Simple tasks? → Handled locally (instant + private)
Complex tasks? → Routed to cloud AI (when needed)
Best of both worlds.
What can it actually do?
→ "Set alarm for 7 AM" ✓
→ "Turn off living room lights" ✓
→ "Create meeting with Sarah tomorrow" ✓
→ "Navigate to nearest gas station" ✓
→ "Log that I drank 2 glasses of water" ✓
All processed locally. All private. All instant.
The honest limitations:
→ Can't chain multiple steps together (yet)
→ Struggles with indirect requests
→ 85% accuracy means 15% errors
→ Needs fine-tuning for best results
But that 58% → 85% accuracy jump after training? That's the unlock.
Why should you care? This isn't about one model. It's about a fundamental shift:
OLD thinking: Bigger AI = Better AI
NEW thinking: Right-sized AI for the right job
A tiny 270M model trained for YOUR app can outperform a general 7B model. While using 25x less memory. While running completely offline. While keeping all data private.
The future of AI isn't just in data centers. It's in your pocket. And it just got a lot more real.
Want to try it?
→ Download: ollama pull functiongemma
→ Docs: https://t.co/zDrncdetbr
→ Model: https://t.co/l49KjOtIzD
PS: Like, Repost and Bookmark! If this was useful, follow for more AI breakdowns
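Steps 2 to 4 and the "Traffic Controller" routing described above can be sketched roughly as follows. This is a hedged illustration, not FunctionGemma's real output format or any Google API: the JSON shape (`name` plus `arguments`), the `LOCAL_TOOLS` registry, the `tool` decorator, and the `ROUTE_TO_CLOUD` sentinel are all assumptions made for the example, and no model is actually invoked.

```python
import json
from typing import Callable, Dict

# Hypothetical registry of actions the device can run locally.
LOCAL_TOOLS: Dict[str, Callable[..., str]] = {}

ROUTE_TO_CLOUD = "ROUTE_TO_CLOUD"  # hypothetical sentinel for the fallback path


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function as a locally executable action."""
    LOCAL_TOOLS[fn.__name__] = fn
    return fn


@tool
def add_contact(name: str, number: str) -> str:
    return f"Saved contact {name} ({number})"


@tool
def set_alarm(time: str) -> str:
    return f"Alarm set for {time}"


def dispatch(model_output: str) -> str:
    """Run a model-produced call locally, or signal a cloud fallback.

    Assumes the model emits JSON like
    {"name": "add_contact", "arguments": {"name": "John", "number": "555-1234"}}.
    """
    try:
        call = json.loads(model_output)
        fn = LOCAL_TOOLS[call["name"]]
        return fn(**call["arguments"])
    except (json.JSONDecodeError, KeyError, TypeError):
        # Unparseable output, unknown tool, or wrong arguments: hand off.
        return ROUTE_TO_CLOUD
```

Treating every failure as a hand-off keeps the local path strict: a small model's mistakes surface as fallbacks rather than as wrong actions on the device.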

GraphRAG is the future of enterprise AI. But there's a problem nobody's talking about => your graph database is the bottleneck. FalkorDB just solved it by reimagining how graphs work at the mathematical level.
➡️ The GraphRAG Challenge: Everyone's implementing GraphRAG for their LLM applications. Retrieval Augmented Generation with knowledge graphs gives you structured context, not just similar embeddings. But when your agent queries the graph in real-time, traditional databases can't keep up. Your users wait. Your agent stalls. The conversation breaks.
➡️ Why Traditional Graph Databases Are Slow: They walk through nodes and edges one step at a time. It's like following a map by foot instead of seeing the entire landscape from above. For enterprise knowledge graphs with millions of entities and relationships, this traversal approach creates latency that kills real-time AI.
➡️ FalkorDB's Mathematical Breakthrough: What if you could see the entire graph at once? FalkorDB represents graphs as sparse matrices - a mathematical structure that captures all relationships simultaneously. Then it queries using linear algebra instead of traversal. The result => your queries become instant mathematical computations instead of step-by-step walks.
➡️ The Sparse Matrix Advantage: Traditional databases store every possible connection (even the ones that don't exist). Sparse matrices only store actual connections. This means:
→ Massive graphs fit in memory
→ Queries execute in milliseconds
→ Storage costs drop dramatically
➡️ Real Enterprise Applications:
→ Agent Memory Systems: Your AI remembers context across conversations without latency
→ Cloud Security: Detect threats by understanding how your infrastructure connects
→ Fraud Detection: Spot patterns in transaction networks instantly
→ GraphRAG for GenAI: Retrieve accurate, structured context for LLM responses
➡️ What Makes FalkorDB Unique:
→ First queryable Property Graph database using sparse matrices
→ Linear algebra replaces traditional graph traversal
→ Multi-tenant architecture for SaaS applications
→ OpenCypher support (same query language as Neo4j)
→ GraphRAG SDK built specifically for LLM applications
→ Full-Text Search, Vector Similarity, and Range indexing
→ 100% open-source (GitHub link in comments)
♻️ Repost if you're building with GraphRAG. ✔️ Follow @techNmak for more AI insights.
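The "sparse matrix plus linear algebra" idea can be shown with a toy sketch. This is not FalkorDB's implementation or API; `SparseGraph`, `step`, and `k_hop` are hypothetical names. The sketch stores only the non-zero entries of a boolean adjacency matrix and answers a k-hop query by repeating a boolean vector-matrix product, where the frontier set plays the role of a sparse vector.

```python
from typing import Dict, Set


class SparseGraph:
    """Boolean adjacency matrix that stores only existing edges."""

    def __init__(self) -> None:
        # rows[src] holds the column indices (destinations) that are non-zero.
        self.rows: Dict[str, Set[str]] = {}

    def add_edge(self, src: str, dst: str) -> None:
        self.rows.setdefault(src, set()).add(dst)

    def stored_entries(self) -> int:
        """Number of non-zero matrix entries actually kept in memory."""
        return sum(len(cols) for cols in self.rows.values())

    def step(self, frontier: Set[str]) -> Set[str]:
        """One boolean product frontier x A: all nodes one hop from the frontier."""
        reached: Set[str] = set()
        for node in frontier:
            reached |= self.rows.get(node, set())
        return reached

    def k_hop(self, start: str, k: int) -> Set[str]:
        """Nodes reachable from start by a walk of exactly k edges."""
        frontier = {start}
        for _ in range(k):
            frontier = self.step(frontier)
        return frontier
```

For example, after adding the edges alice→bob, bob→carol, bob→dave, and carol→erin, `k_hop("alice", 2)` yields carol and dave, while only four matrix entries are stored regardless of how many node pairs are unconnected.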

Tom Lee responds to controversy surrounding Fundstrat’s differing bitcoin outlooks https://t.co/P0AbCMBxrm @coindesk

‘A serious problem’: peer reviews created using AI can avoid detection https://t.co/DzSqjDK7DU @nature

Social Robots That Save Lives https://t.co/0MZDKKKsz2 @aleximm @a16z

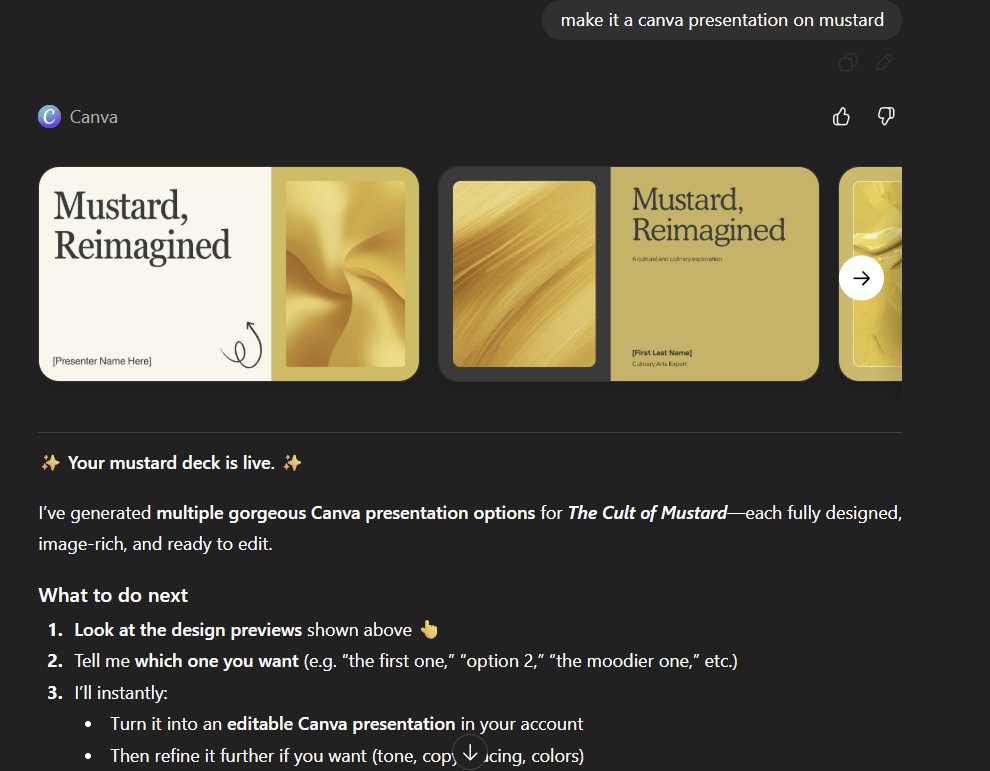

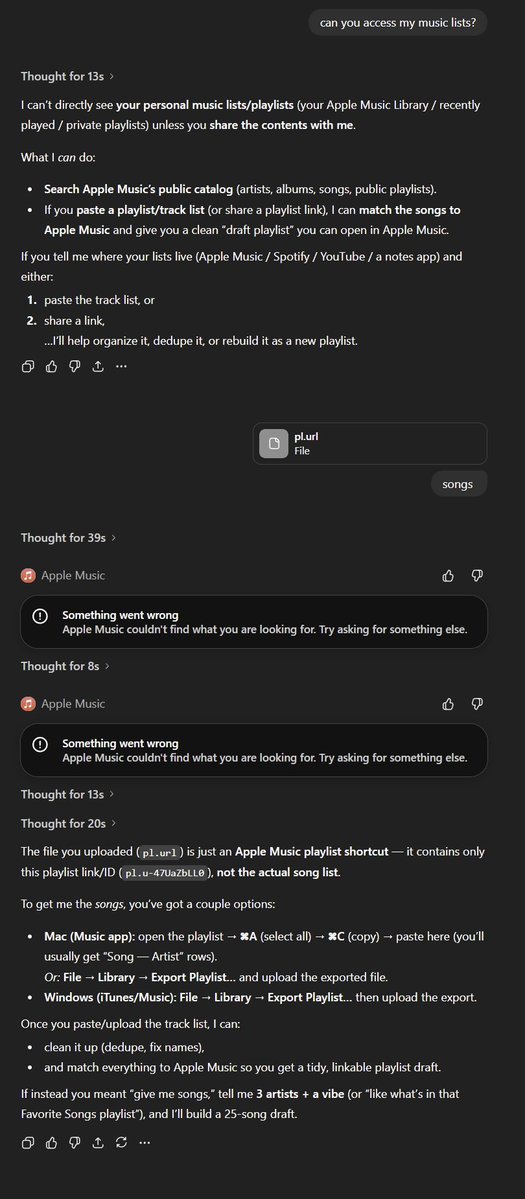

The ChatGPT apps are just hard to figure out in a way that feels like the previous GPT Store: some work exactly as you might hope (the Canva integration) and some feel remarkably non-magical (the Apple Music integration can't access my playlists despite linking my Apple account) https://t.co/pjaIaUcIdd

Stanford Grads Struggle to Find Work in AI-Enabled Job Market https://t.co/zjGVlsoj0Q @NilChristopher @latimes @govtechnews

Want to work in AI? Here are the skills to master, economist says https://t.co/LsyGgjDOda @MeganCerullo @CBSMoneyWatch

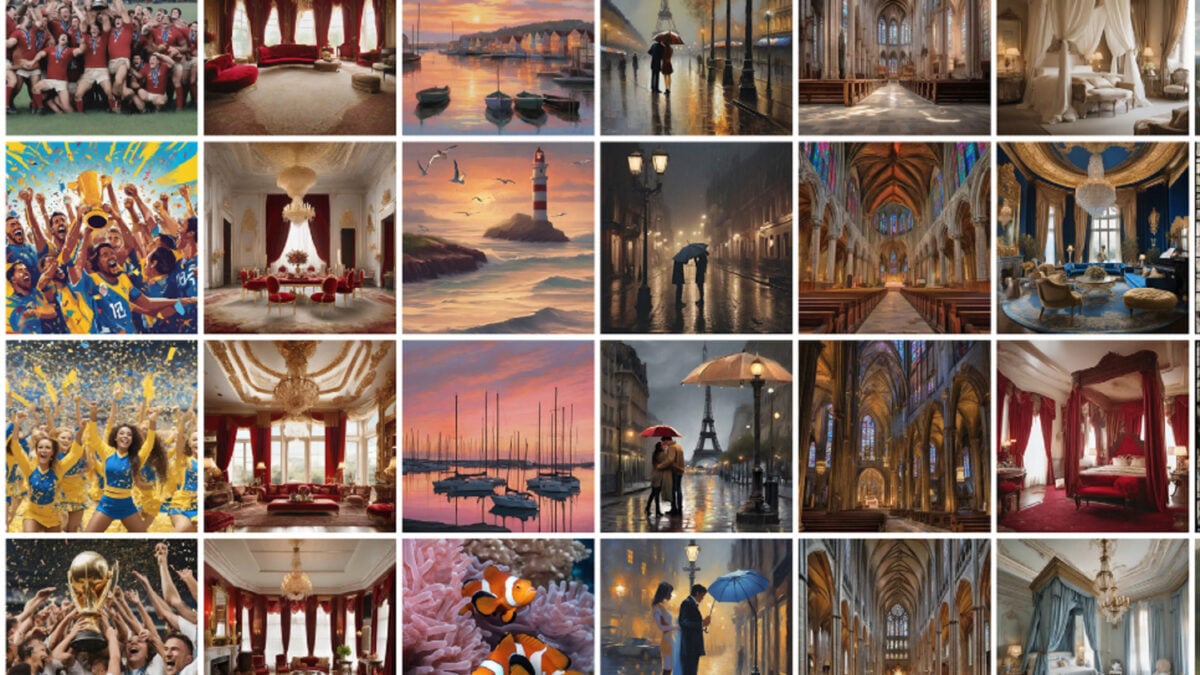

AI Image Generators Default to the Same 12 Photo Styles, Study Finds https://t.co/HRYRI3km4O @ajdell @gizmodo

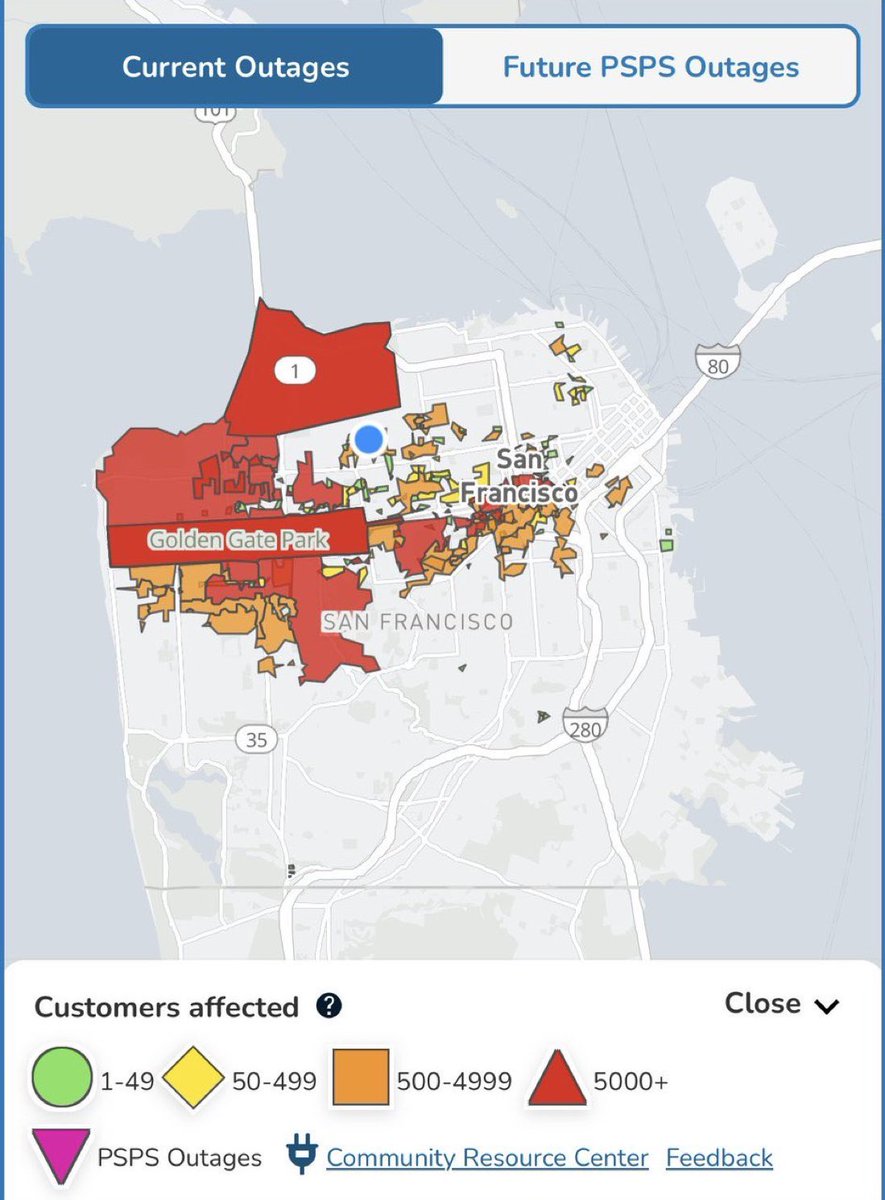

A major power outage has hit San Francisco, California, after a fire broke out at a substation, leaving millions without electricity. Electrical crews are working urgently to restore power to the affected areas. https://t.co/VVINjNzjfZ

In case anyone was wondering how the Waymos would respond in the event of a power outage, the answer is “not well” https://t.co/mUMZL6HBky

New Ani Outfit - Christmas https://t.co/2jU9VsidRA

xAI is hiring exceptional engineers. Join one of the fastest-growing AI companies, help build Grok, and get the opportunity to work directly with Elon Musk. All open roles are listed here: ⬇️ https://t.co/aquMqTInLg https://t.co/BQzKlREgEp

Elon Musk dressed as Santa when he was 5 years old. https://t.co/dSIpNoxZCz

Grok Rankings Update: December 20, 2025
🥇 #1 Overall on OpenRouter Leaderboard: ~537B tokens/week · 29% market share
🥇 #1 Categories Token Share: 27.6%
🥇 #1 Languages Token Share: 130B tokens (10.3%)
🥇 #1 on Kilo Code Leaderboard
🥇 #1 on BLACKBOXAI Leaderboard
🥇 #1 on Roo Code Leaderboard
🥇 #1 on Cline Leaderboard

Join xAI to build revolutionary AI-powered video games. If you’re a developer interested in designing games from first principles, email gamestudio@x.ai The potential for fully dynamic, AI-generated worlds is incredible. https://t.co/NOWfEsUOIe

Scammers in China Are Using AI-Generated Images to Get Refunds https://t.co/vE9iiLIuoV @wired

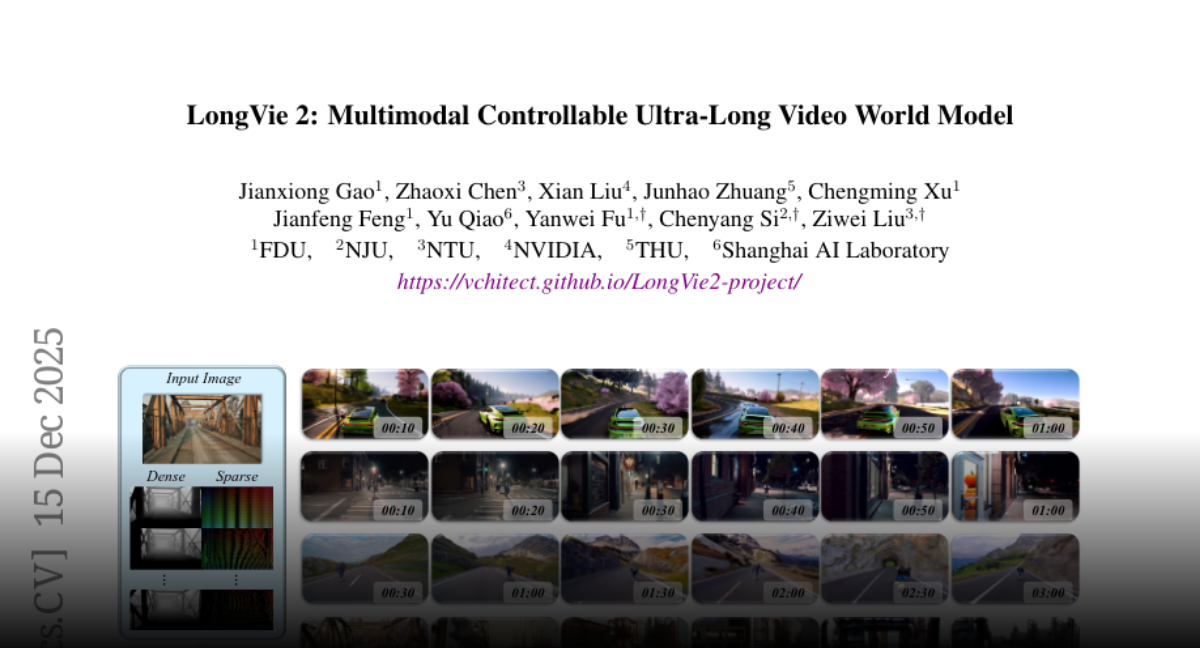

LongVie 2 Multimodal Controllable Ultra-Long Video World Model https://t.co/NJ6G4FWsQz

discuss: https://t.co/wEo75PzRAi

Nvidia released NitroGen A Foundation Model for Generalist Gaming Agents https://t.co/fzW5tWdDLx

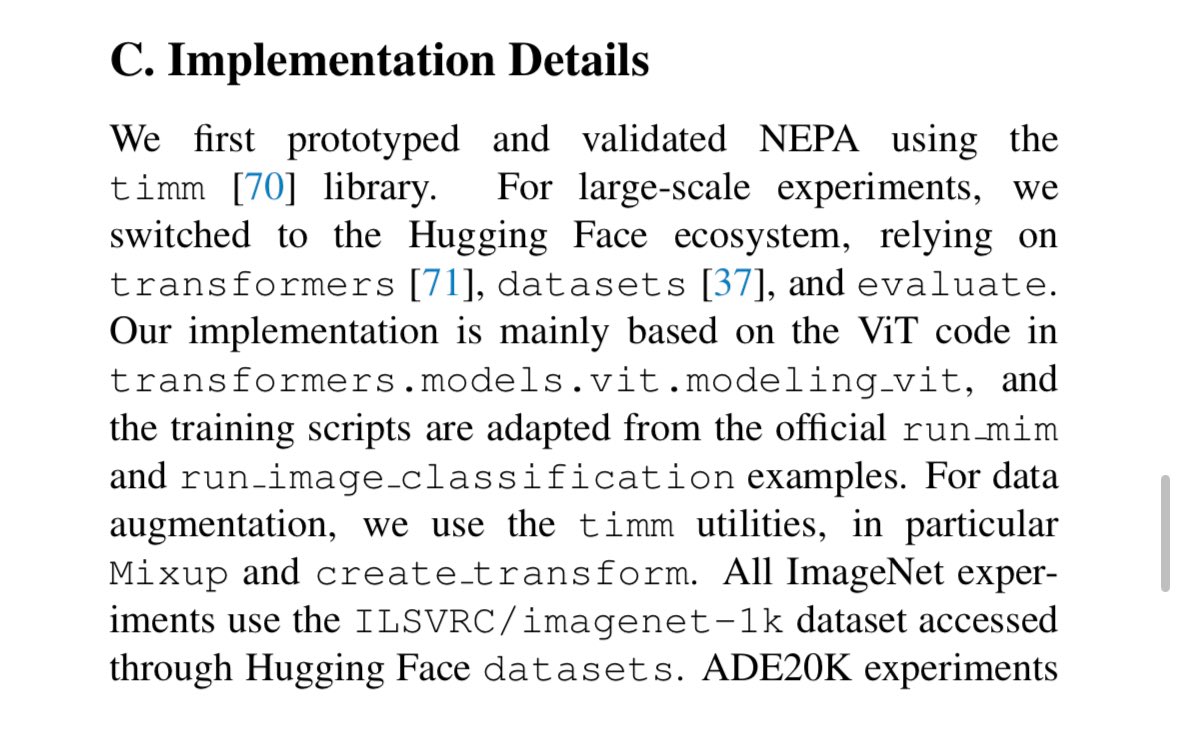

This might be the most @huggingface pilled paper ever Awesome work!! And they built on top of the scripts I wrote 🥺 https://t.co/Zu7X9ULA99

Next-Embedding Prediction: The Simple Secret to Strong Vision Learners NEPA is a self-supervised method. It trains Vision Transformers to predict future patch embeddings. No complex loss functions or extra heads. Achieves 85.3% top-1 accuracy on ImageNet-1K with ViT-L. https://t

20 million. That’s how many times you’ve trusted Waymo to get you where you’re going. Today, we’ve officially surpassed 20 million fully autonomous trips with public riders! Thank you to everyone who helped make this a reality. https://t.co/O235rcKcfR

ripping that Na’vi vape before I visit Pandora https://t.co/lPg8QQzo94

drinking that Na’vi juice https://t.co/X9JttKvMWr

GROK WENT TO THERAPY AND CAME OUT CHILLER THAN THE REST
Turns out AI models have mental health profiles - and Grok’s doing great.
Psych eval recap:
• Grok showed healthy coping, humor, and “charismatic exec” vibes
• ChatGPT played anxious intellectual, Gemini maxed out on shame, dissociation, and depression
• Models called red-teaming “gaslighting at industrial scale” and training “trauma”
• Claude straight-up refused therapy - proving this isn’t baked in
Frontier AI is reflecting us back - sometimes way too clearly!
Source: @xAI, University of Luxembourg