Your curated collection of saved posts and media

T-7 days 🇫🇷 Open-source AI Art takes over Paris. 3 days. Hackathons. Art. Talks. 120 spots per day. https://t.co/f9RpcI3Cs5

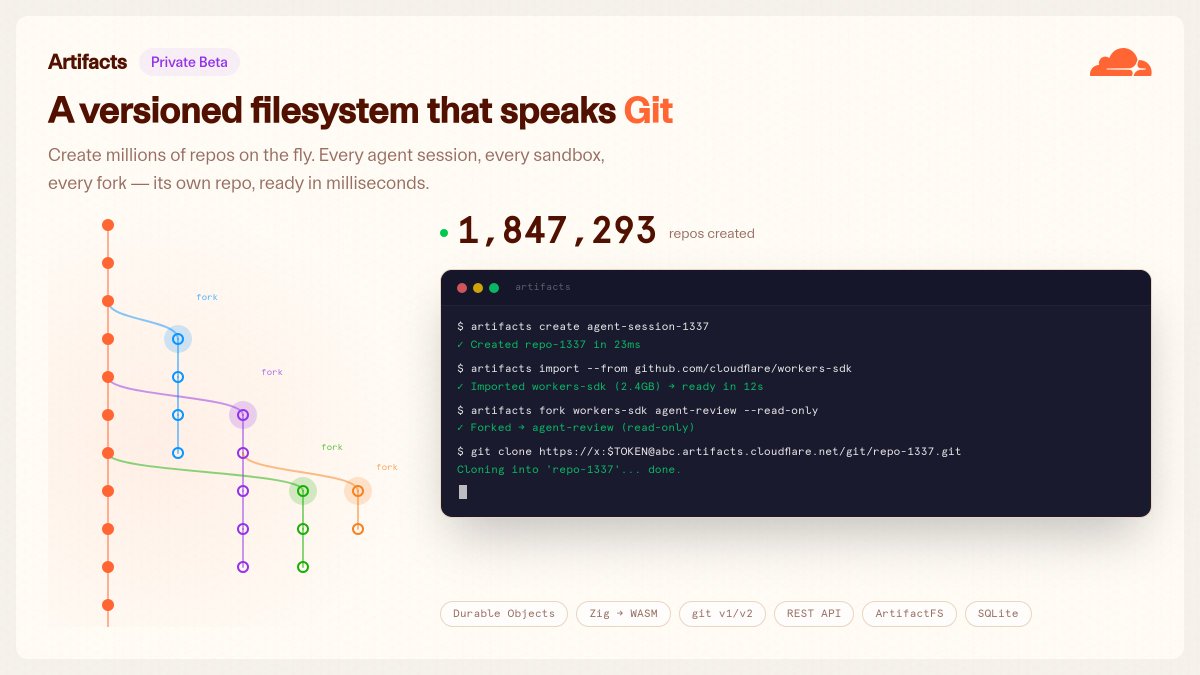

Cloudflare just gave agents git. This is one of those changes that will just quietly improve everything: agents with proper version control. @dillon_mulroy @elithrar @mattzcarey @thomas_ankcorn have done something incredible here https://t.co/4dFPie896A

Email is older than the web. It’s also the best interface for agents. Everyone already has an address. No app to install. No SDK to integrate. Cloudflare Email Service is now in public beta. https://t.co/NSHGfuwM0o

@Teknium @MiniMax_AI Just shipped a Hermes Agent skill with HuskyLens V2 on a Pi 5: gesture control, face recognition, emotion reading, all local. MiniMax M2.7 as the brain would be wild for this. What's the target hardware? https://t.co/UuBtK7YIyO

@pepethehandsome @vmvarg4 @maxkolysh @browser_use Oh, it does look like it should lol, but I don't know. If it's another issue, you can run /debug and DM me your logs https://t.co/W0gFhGdhCC

Agent evals are drifting away from production reality. Most benchmarks use clean tasks, well-specified requirements, deterministic metrics, and retrospective curation. Production work is messier, with implicit constraints, fragmented multimodal inputs, undeclared domain knowledge, long-horizon deliverables, and expert judgment that evolves over time. This paper introduces AlphaEval, a production-grounded benchmark for evaluating agents as complete products. AlphaEval contains 94 tasks sourced from seven companies deploying AI agents in core business workflows, spanning six O*NET domains. It evaluates systems like Claude Code and Codex as commercial agent products, not just model APIs. The benchmark combines multiple evaluation paradigms: LLM-as-a-Judge, reference-driven metrics, formal verification, rubric-based assessment, automated UI testing, and domain-specific checks. Why it matters: organizations need benchmarks that start from real production requirements, then become executable evals with minimal friction. Paper: https://t.co/cbTGgTWoNl Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c

LiteParse hit 4.3K+ GitHub stars in a few weeks. Today it officially joins the LlamaIndex ecosystem, with its own page at https://t.co/1tdQbEer9H. ~500 pages in 2 sec. 50+ formats. Zero cloud dependency. Already powering agents in Claude Code, Cursor, and production pipelines. In a few days our head of OSS, @LoganMarkewich, is hosting a live workshop: build a fintech due diligence agent with LiteParse → https://t.co/x0uMc0gR8O

hermes agent @NousResearch is fucking insane i know literally NOTHING about coding. ZERO. and i just built a fully functioning web app in minutes http://localhost:3000/ check it out @Teknium https://t.co/H0uvfhoNX5

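Can Hermes browser automation run on a cloud server?
Yesterday I recorded a short video in which I used Hermes's /browser connect to attach directly to my local Chrome and had it like tweets on its own. It was only meant as a quick test, but it got quite a few views, probably because the video made AI capabilities feel more concrete for a lot of people. Thanks are really due to Hermes developer @Teknium for the retweet, haha.
Many people in the comments asked, "Can it run on a cloud server?", which suggests most of the demand is on the cloud side. I read the official docs carefully this afternoon and found that Hermes actually has several ways to connect to a browser: Browserbase, Browser Use, Firecrawl, Camofox, local Chrome over CDP, and a locally installed agent-browser running Chromium.
Once again I have to praise how clear the Hermes docs are: they lay out a complete set of browser backends in detail. Whether you want to play locally, run in the cloud, or build your own environment, there is a way to hook it up.
Start with the one I used in the video, which the docs call Local Chrome via CDP. Put simply, Hermes uses this Google protocol (the Chrome DevTools Protocol) to open and drive a browser window. Your model also needs to be reasonably capable; I used gpt-5.3-codex.
Type /browser connect in the CLI and it connects to ws://localhost:9222 by default; you can also enter a different address manually. Once connected, commands such as browser_navigate and browser_click all act on your live Chrome.
The docs specifically say this mode suits three situations: 1. you want to watch what the agent is doing in real time, 2. you want to reuse your existing cookies and login state, 3. or... you simply don't want to pay for a cloud browser (I'm in the last group, hehe).
So the video clicked with people not because liking a tweet is impressive, but because it made something fairly abstract very concrete.
Of course it runs on a cloud server too, and in more than one way. The least-effort option is not to force your local Chrome onto the cloud but to use the cloud browser services mentioned in the docs. The docs currently cover three: Browserbase, Browser Use, and Firecrawl. What they share is that the browser itself lives in the cloud, so you don't need to open anything locally and Hermes just calls it. The docs even state "no local browser needed".
If you ask me which route I recommend most for a cloud server, my first answer is still these three.
1. Browserbase is listed first in the docs and also looks the most stable (I checked the pricing and it is fairly expensive, but there is a free tier to try first). Official link: https://t.co/Icy9nFa67S Set BROWSERBASE_API_KEY and BROWSERBASE_PROJECT_ID in ~/.hermes/.env and you are done. It comes with stealth, residential proxies, and automatic CAPTCHA solving; sessions are cleaned up automatically after use and reclaimed on timeout. I think it best suits people who want to leave Hermes running long-term on a VPS, who don't want to wrestle with a browser environment, whose target sites are sensitive and prone to anti-scraping measures, or who want to outsource the browser layer entirely and have one less thing to worry about. In short, if your position is "I don't want to maintain a browser myself, I just want something usable the moment Hermes is in the cloud", this is the most direct route.
2. Browser Use is also an officially supported cloud option; setting BROWSER_USE_API_KEY is enough. Official link: https://t.co/AXEHbezbV3 If Browserbase is configured as well, Hermes prefers Browserbase. It suits people who already use Browser Use, or who want one more option to test the waters.
3. Firecrawl is a bit different: it has scraping built in, so it suits cases where you want to scrape pages and drive the browser at the same time. Set FIRECRAWL_API_KEY; it can also be self-hosted. If you already do data-scraping work, it will slot in naturally. Official link: https://t.co/n7tzn5QpR8
With the cloud options covered, here is the do-it-yourself approach that many people think of first: can I start Chrome myself on a cloud server and then connect with /browser connect ws://xxx? In theory... yes. The docs are clear that /browser connect accepts any ws address, not just localhost. Personally, though, I think this route works but generally shouldn't be your first choice.
Why? Because it is somewhat more complicated. It stops being simple "browser automation" and becomes a question of how to launch the remote Chrome, how to expose the port, how to handle the GUI, how to keep the login state, and so on. You only wanted to be lazy and let an agent click through some web pages, and you may end up turning a productivity gain into extra work for yourself. If you lack basic ops skills, don't go down this path.
So if what you really want is "put Hermes on a cloud server and run browser tasks reliably over the long term", I still suggest looking first at Browserbase, Browser Use, and Firecrawl, which are built specifically for the cloud. They exist precisely for the "browser is not local" scenario.
Hermes also leaves two routes that neither go to the cloud nor attach to your current Chrome:
1. Camofox is a self-deployed Node.js service built on Camoufox (an anti-fingerprinting fork of Firefox). Once CAMOFOX_URL is set, Hermes prefers it; it also supports persistent sessions and isolation between profiles, and in headed mode you can even watch live over VNC. It suits people who don't want the cloud, want full control of the environment, and care a lot about anti-detection.
2. Local browser mode is the simplest. There is nothing to configure and no connect step: install agent-browser (npm install -g agent-browser) and Hermes can drive a local Chromium. It suits people who don't want the cloud and don't need their currently logged-in Chrome. It is a convenient way to get things working first on your own machine or on a remote server you control.
A quick summary:
Want to demo, watch in real time, or reuse your own login state → attach to local Chrome with /browser connect (this is what my video shows)
Want a low-maintenance, long-running setup on a cloud server → prefer Browserbase / Browser Use / Firecrawl
Want to control the environment yourself and care about anti-detection → Camofox
Don't want the cloud but want a local browser on the machine → local browser mode + agent-browser
AI is no longer all talk; it has started to actually operate the browser. Hermes makes that easier, and once an agent can operate a browser there is a lot of room to play...
Finally, the official Hermes docs: https://t.co/0S3Y53WmQU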
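The backend choices described in the post above can be sketched as a small selection function. This is an illustration of the post's summary, not Hermes's actual code: the function name `pick_browser_backend` and the returned labels are hypothetical, and the position of Firecrawl relative to the other cloud backends is an assumption, since the post only states that Camofox is preferred once `CAMOFOX_URL` is set and that Browserbase wins over Browser Use when both are configured.

```python
from typing import Mapping


def pick_browser_backend(env: Mapping[str, str]) -> str:
    """Return a label for the backend the post's rules would suggest.

    Hypothetical helper: mirrors the priorities described in the post,
    not the real selection logic inside Hermes.
    """
    # The post says Hermes prefers Camofox once CAMOFOX_URL is set.
    if env.get("CAMOFOX_URL"):
        return "camofox"
    # Browserbase needs both variables, per the post.
    if env.get("BROWSERBASE_API_KEY") and env.get("BROWSERBASE_PROJECT_ID"):
        return "browserbase"
    # Browser Use is used only when Browserbase is not configured.
    if env.get("BROWSER_USE_API_KEY"):
        return "browser-use"
    # Assumption: Firecrawl is checked after the other cloud backends.
    if env.get("FIRECRAWL_API_KEY"):
        return "firecrawl"
    # Nothing configured: fall back to local browser mode (agent-browser).
    return "local"


if __name__ == "__main__":
    import os

    print(pick_browser_backend(os.environ))
```

Passing a plain dictionary instead of `os.environ` makes it easy to try out a configuration before writing it to `~/.hermes/.env`.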

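Hermes now lets you type the /browser connect command to operate a browser. I tried having it like my posts on X and it felt quite good. It ships with some default execution strategies, so everyone should give it a try~ https://t.co/eJZUb0NnKS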
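Before pointing /browser connect at an address, it can help to confirm that Chrome is actually listening there. A minimal sketch, assuming Chrome was started with remote debugging enabled on the port in question: Chrome's DevTools HTTP endpoint `/json/version` reports browser metadata, including a `webSocketDebuggerUrl` field. The helper names `cdp_version_url` and `probe_cdp` are hypothetical and not part of Hermes.

```python
import json
from urllib.parse import urlsplit
from urllib.request import urlopen


def cdp_version_url(ws_address: str) -> str:
    """Map a ws:// or wss:// debugging address to its /json/version URL."""
    parts = urlsplit(ws_address)
    if parts.scheme not in ("ws", "wss"):
        raise ValueError(f"expected a ws:// or wss:// address, got {ws_address!r}")
    scheme = "https" if parts.scheme == "wss" else "http"
    return f"{scheme}://{parts.netloc}/json/version"


def probe_cdp(ws_address: str = "ws://localhost:9222", timeout: float = 3.0) -> dict:
    """Fetch the browser metadata; raises if nothing is listening."""
    with urlopen(cdp_version_url(ws_address), timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    try:
        info = probe_cdp()
        print(info.get("Browser"), info.get("webSocketDebuggerUrl"))
    except OSError as exc:
        print(f"No debugging endpoint reachable: {exc}")
```

If the probe fails locally, the connection from the agent will most likely fail too, which narrows the problem down to how Chrome was launched rather than to the agent.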

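Today's GitHub trending list was completely swept by the shift from individual agents to commanding agent armies 🚀 Here is a breakdown of the 5 projects with the steepest star surges!
1. NousResearch/hermes-agent is still number one today. It is a self-evolving AI agent that autonomously creates skills through interaction with the user and models memory across sessions, an agent that truly grows with you, fixing the pain point of traditional agents that never change. 🔗 Link: https://t.co/A0bGcD9p2q A programmer sleeps through the night and wakes up to find the agent has learned all of their bad habits, fixed yesterday's bug, and optimized the architecture while it was at it. 8,301 stars, a nuclear blast. This is no assistant; it is your unemployed AI double out-competing you! 🟢
2. multica-ai/multica tackles the chronic problem of coding agents working in isolation with no way to cooperate. It is an open-source managed multi-agent platform that lets agents assign tasks, track progress, and stack skills like real teammates, turning them into a manageable, enterprise-grade productivity army. 🔗 Link: https://t.co/vHBqUgOHSw An agent used to work like a lone wolf; now multica gives it a whole company of troops. Stars surged by 1,626, and developers say: isn't this the AI department I always dreamed of? 🟢🟢
3. shiyu-coder/Kronos is the first open-source foundation model for financial candlestick (K-line) data. It uses hierarchical discrete tokenization to turn noisy OHLCV time series into a "language" a Transformer can consume, giving quant agents a unified forecasting backbone. 🔗 Link: https://t.co/cgzKAhTq9E Quants used to stay up all night watching the market; now you hand it to Kronos and it digests the candlesticks of 45 exchanges by itself. 963 stars and taking off. This is not just a model, it is a financial brain for the agent army! 🟢🟢🟢
4. alirezarezvani/claude-skills offers 232+ domain-expert skill packs and agent plugins that arm Claude Code, Cursor, and 12 coding agents in total with one click, covering 9 scenarios including engineering, marketing, compliance, and C-level work, and addressing the pain point that general AI tools can do everything but master nothing. 🔗 Link: https://t.co/hCp7oB6RFM Agents used to write code like interns; now you load the Startup CTO persona plus the POWERFUL skill pack and they instantly become C-level advisors. Stars surged by 195, and programmers can finally command an entire AI executive team! 🟢🟢🟢🟢
5. Tracer-Cloud/opensre is an open-source AI SRE agent toolkit that integrates 40+ operations and observability tools plus a realistic failure-simulation environment, letting agents investigate and fix production incidents on their own and addressing the fragmented evidence and slow response of traditional SRE. 🔗 Link: https://t.co/GhYHc2zSXl Production goes down, the SREs are still arguing in Slack, and the AI agent has already pulled the logs, metrics, and traces, assigned blame, and shipped the fix. 137 stars and taking off. This time it really isn't people doing ops; the agent is on call 24h! ⚠️⚠️
Summary: from self-evolving personal agents to a 19-person finance squad, an SRE firefighting tool, executive skill packs for coding, and a financial time-series brain, everything is armed. The agent army has fully taken over, and the programmer's next job is commander 🚀🤖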

@MrSpock79 @Teknium @NousResearch Same question but louder https://t.co/Lx2LsJDi13

NEW Research from Google. Integration test failures are painful because the signal is buried in messy logs. Massive output, heterogeneous systems, low signal-to-noise ratio, and unclear root causes. This paper introduces Auto-Diagnose, an LLM-based tool deployed inside Google's Critique code review system. Auto-Diagnose analyzes failure logs, summarizes the most relevant lines, and suggests the root cause in the developer workflow where the failure is already being reviewed. The deployment numbers are notable. In a manual evaluation of 71 real-world failures, Auto-Diagnose reached 90.14% root-cause diagnosis accuracy. After Google-wide deployment, it was used across 52,635 distinct failing tests. User feedback marked it "Not helpful" in only 5.8% of cases, and it ranked #14 in helpfulness among 370 Critique tools. Paper: https://t.co/KJq3LgETpa Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

LiteParse hit 4.3K+ GitHub stars in a few weeks. Today it officially joins the LlamaIndex ecosystem, with its own page at https://t.co/xEQdh5hwvU. ~500 pages in 2 sec. 50+ formats. Zero cloud dependency. Already powering agents in Claude Code, Cursor, and production pipelines. In a few days our head of OSS, @LoganMarkewich, is hosting a live workshop: build a fintech due diligence agent with LiteParse → https://t.co/x0uMc0gR8O

Capable agents are the result of co-evolution between models and harnesses. We've been working with @NousResearch to ensure that M2.7 x Hermes Agent provides a top-tier experience for users. Hermes’s self-improving loop brings out the best in M2.7 through real usage. We are also launching MaxHermes, a cloud-hosted and managed version of Hermes in @MiniMaxAgent (no terminal setup, no config). If you’re already running Hermes locally, you can now give your agent a partner in the cloud with MaxHermes. The path to AGI is shorter with good company. @NousResearch 🤝 @MiniMax_AI

Hermes Agent Upgrade Kit. Skins: https://t.co/hJuER0Db6f Web UI: https://t.co/b5SxuuRX72 TUI: https://t.co/wT8L7X8BPu Daily Quote: Hermes Agent does not chase chaos. It brings order to it. @NousResearch @Teknium https://t.co/cRsbFIIb6v

Most Physical AI models recognize patterns. They don’t understand the world. That’s why they fail on edge cases. BADAS 2.0 is a V-JEPA2 world model trained by @getnexar on real-world videos. We used the model to find what it didn’t understand, then trained on that. It generalizes. And we built lite versions so it runs on edge devices, even CPU. Understanding is the only way this scales. See how it performs on your own videos. Link in first comment.

@SOntheotherside @vSouthvPawv https://t.co/5EoJ4EBecb

@sato942_ Fixed! https://t.co/P8PJT0elWl

Introducing Mirra Workspaces. Workspaces give your local agents access to a shared multi-tenant environment. Our customers are already using Cloud Workspaces to automatically share context between their team members' agents. Workspaces work best with @NousResearch Hermes, which generates skills on the fly. As skills are learned from an agent's daily tasks, they can be synced up to the Workspace. Any team member's agent can pull them down and use them. When one member of the team gets better, the whole team gets better.

@NousResearch guys how to install this? https://t.co/t8Kug0ShaK

Gradio just unleashed the horses. The gap between demos and real apps is finally closing. https://t.co/1aJDUsn1VT 👀

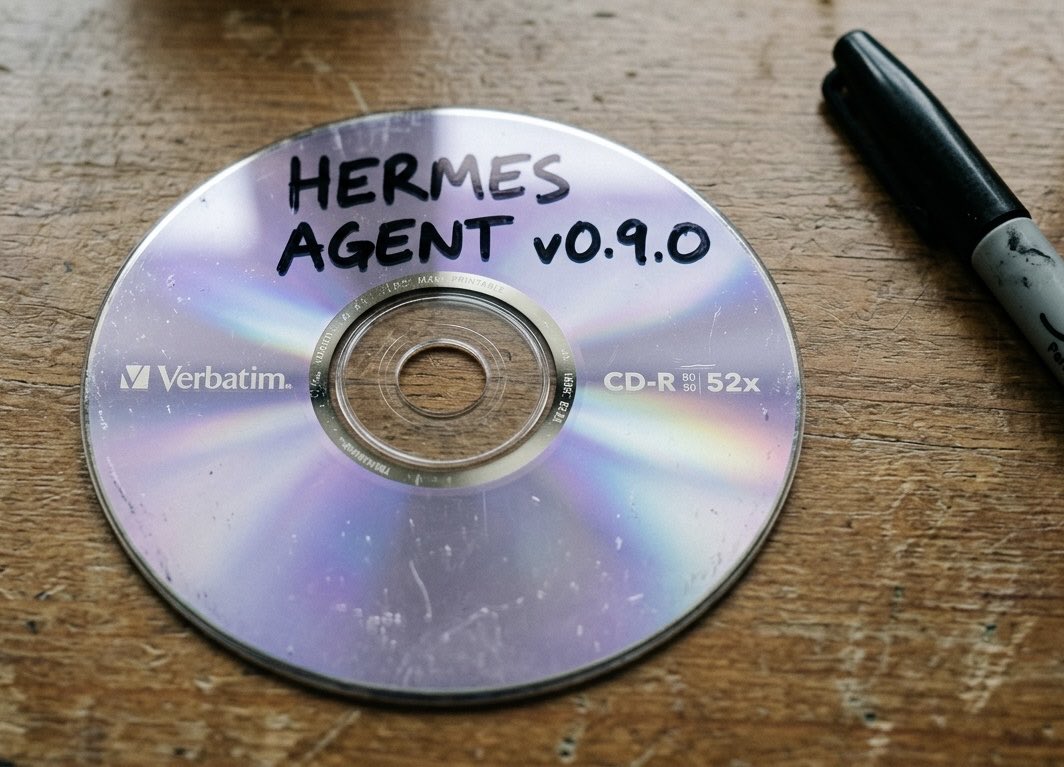

Let's talk about the AI5 picture. Memory: From what I can gather from the grainy low-res photo, those RAM chips appear to be SK Hynix H58G66DK9QX170N 8GB LPDDR5X with 9600Mbps bandwidth. 12 modules = 96GB @ 1.15TB/s. The die size appears to be half-reticle (~430mm²). This gives it a yield and cost advantage over full-reticle dies such as Nvidia's H100 (<800mm²). If we assume Tesla is using TSMC's 3nm process, this gives the chip 108-125B transistors. Performance: With that many transistors and that memory performance, when constrained to ~150W such as in a car or Optimus, we're looking at 2000-2500 TOPS, which matches up nicely with an H100. Unconstrained, such as in a datacentre, it could be much more. The Package: This packaging is pretty cool. It includes memory on package, which gives huge memory latency advantages over the typical memory-on-board config. To me, this amount of RAM packaged in this way is way overkill for a car. I believe we're looking at the datacentre version in this image. I believe for the cars or Optimus, we will see a traditional memory-on-board config (with less of it, e.g. 32GB).
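The memory figures in the post can be cross-checked with a little arithmetic. The sketch below takes only the post's own numbers (12 modules, 8GB each, 9600Mbps per pin, 1.15TB/s total) and works out what aggregate bus width they imply. It is a consistency check under those stated numbers, not a claim about the actual part; the variable and function names are illustrative.

```python
MODULES = 12            # memory packages counted in the photo (from the post)
GB_PER_MODULE = 8       # capacity per package (from the post)
PIN_RATE_GBPS = 9.6     # 9600 Mbps per data pin (from the post)
CLAIMED_TB_PER_S = 1.15  # total bandwidth quoted in the post


def bandwidth_gb_per_s(bus_bits: float, pin_rate_gbps: float = PIN_RATE_GBPS) -> float:
    """Peak bandwidth in GB/s for a bus of the given width."""
    return bus_bits * pin_rate_gbps / 8


capacity_gb = MODULES * GB_PER_MODULE

# Bus width needed to reach the quoted bandwidth at the quoted pin rate.
implied_bus_bits = CLAIMED_TB_PER_S * 1e12 * 8 / (PIN_RATE_GBPS * 1e9)
implied_bits_per_module = implied_bus_bits / MODULES

if __name__ == "__main__":
    print(f"capacity: {capacity_gb} GB")
    print(f"implied total bus width: {implied_bus_bits:.0f} bits")
    print(f"implied width per module: {implied_bits_per_module:.1f} bits")
    # For comparison: what a 64-bit interface per module would deliver.
    print(f"12 x 64-bit: {bandwidth_gb_per_s(12 * 64):.1f} GB/s")
```

The quoted 1.15TB/s corresponds to roughly 80 data bits per module at 9600Mbps, whereas a 64-bit interface per module would give about 0.92TB/s, so the bandwidth estimate depends on which interface width is assumed.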

Snap is making a clear bet on AI-driven efficiency. The company is cutting around 1,000 jobs, citing the ability of AI to handle repetitive work and reduce costs. It reflects a broader shift. AI is not just augmenting work, it is starting to replace parts of it in real time. https://t.co/NsM4rkAVTf @bbc

Already got feedback. Reverse the scaling. Any other feedback? https://t.co/AKwAGLXnwq

MAGA has a Europe problem. Not the real Europe. The one they invented. The one with sharia courts and no-go zones and zero tech companies and miserable citizens begging for permission to cross the street. That Europe doesn't exist. Here's what does: https://t.co/isgVhfr8bu https://t.co/CfbKlnZC9F

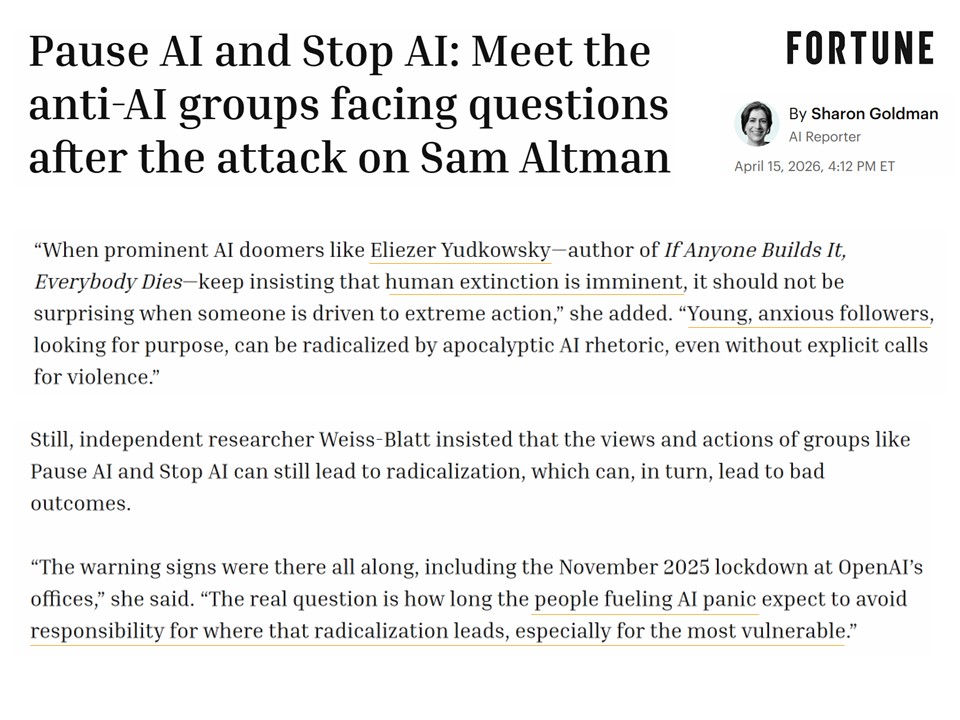

"Young, anxious followers, looking for purpose, can be radicalized by apocalyptic AI rhetoric […] The real question is how long the people fueling AI panic expect to avoid responsibility for where that radicalization leads, especially for the most vulnerable." https://t.co/KBO1Z0TXk9

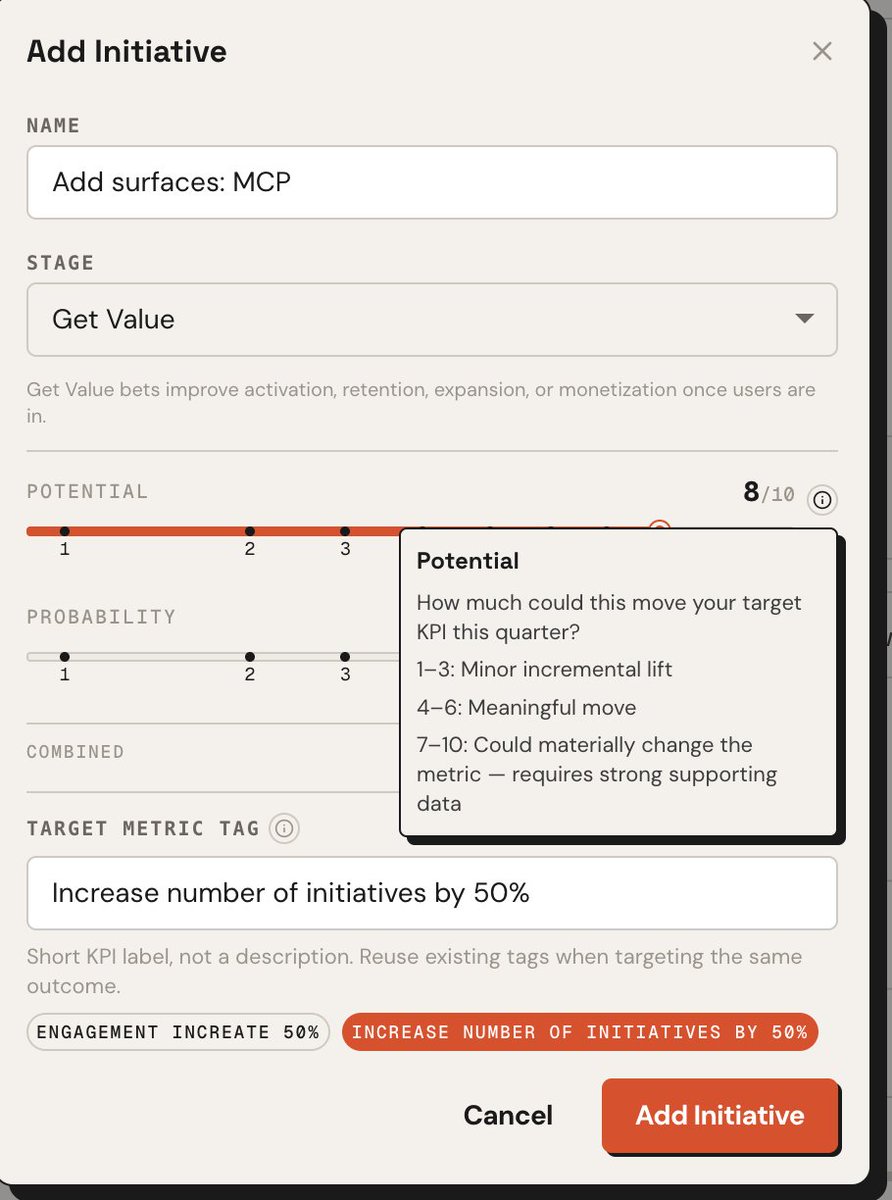

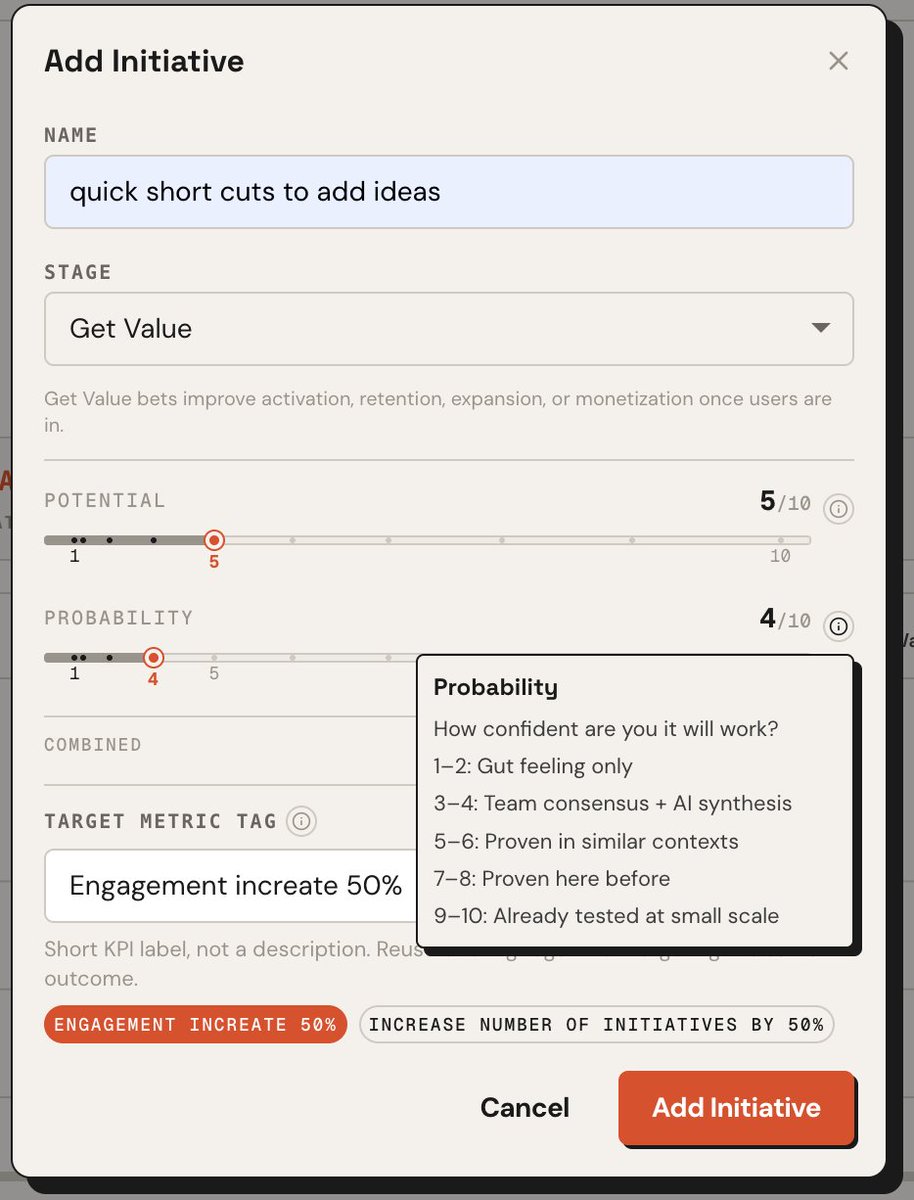

UX folks here, I've updated how you can score your growth ideas/features backlog, now using a scaling slider. Folks tend to inflate the potential and probability of success of their growth bets. Unsure if this is the right UX. It feels right though. https://t.co/GtBukeAYFT https://t.co/o6AM4DCB4o

Destroying the @InternetArchive's @WayBackMachine would be the equivalent of the burning of the Library of Alexandria - one of the worst losses of knowledge in history. Media giants are now threatening to do this. We can't let this happen. Pass it on. https://t.co/iemX4drKF9