Your curated collection of saved posts and media

https://t.co/ly8vxkqcM7

10 years of residency and fluency in Japanese. Japan First. Her approval rating is 75% in Japan. https://t.co/MibaMeP0AE

@scottbelsky A trend I’m seeing more is immigrant-founded restaurants hosting cooking classes. It’s a tangible way to share your culture and ensure your family recipes/techniques become a part of your lasting legacy. I took a tamale class last week and learned a few different regional styles. https://t.co/vslh0CmF1a

You can now fine-tune LLMs with Unsloth then deploy them in @LMStudio! 🦥👾 We made a free notebook to fine-tune FunctionGemma (270M) so it “thinks” before calling tools, then export the model to GGUF for deployment in LM Studio. Notebook: https://t.co/3XoGxbDAuA

We worked with @UnslothAI on a new beginner's guide: How to fine-tune FunctionGemma and run it locally! 🔧 Train FunctionGemma for custom tool calls ✨ Convert it to GGUF + import into LM Studio 👾 Serve it locally and use it in your code! Step-by-step notebook: https://t.co/JkR

This AI agent just surpassed humans for the first time ever. Simular open-sourced Agent S, which scored 72.6% on the OSWorld benchmark vs. 72.36% for humans, automating your desktop and complex workflows. 6 wild examples + how to try: 1. Turn a research paper into an X thread with assets https://t.co/jdB1TRHa3m

Open NotebookLM is INSANE! Fully Free Local NotebookLM Alternative with Gemini Integration: https://t.co/t4mgF3ryCv https://t.co/IB69nEEyJd

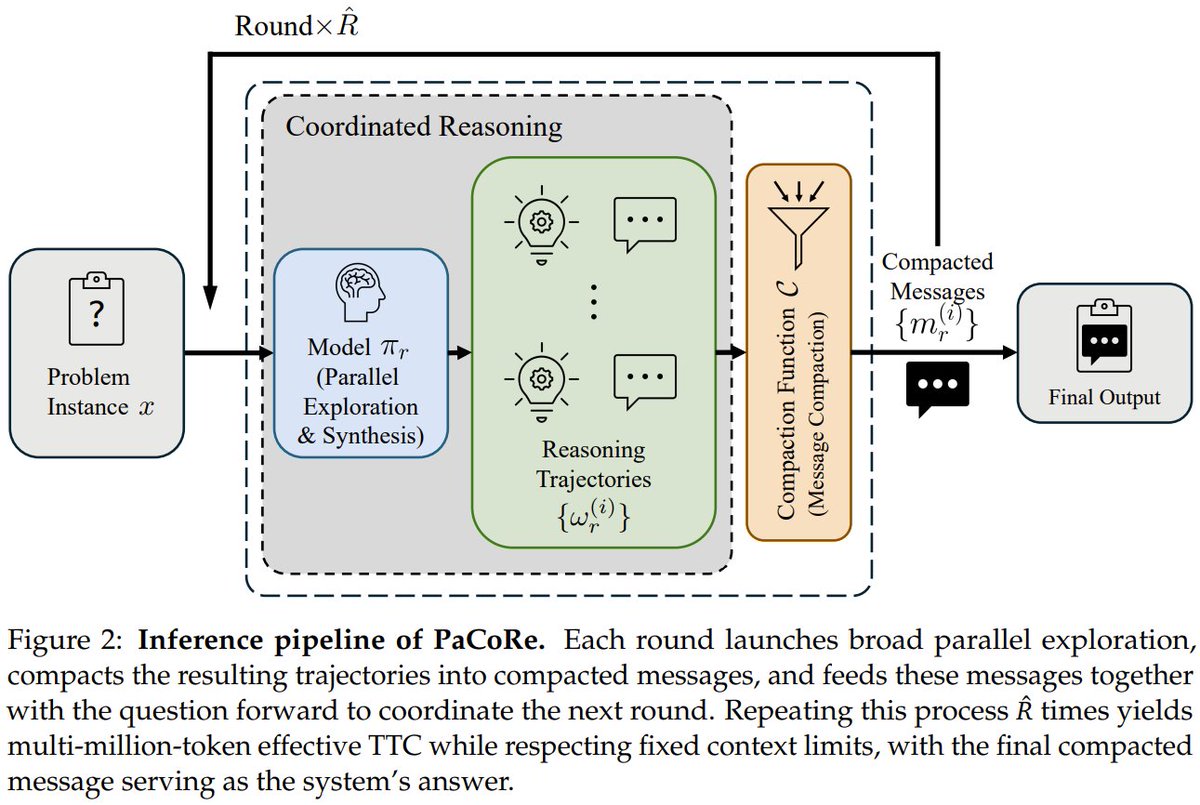

What if an 8B model could out-reason GPT-5 on math? Introducing PaCoRe. It breaks the sequential bottleneck. Instead of thinking step-by-step, it launches hundreds of parallel reasoning "threads" in each round, compresses their insights, and synthesizes them to guide the next round. The result? It scales compute to millions of tokens without hitting context limits, outperforming frontier systems. The 8B model scored 94.5% on HMMT 2025, beating GPT-5's 93.2%. PaCoRe: Learning to Scale Test-Time Compute with Parallel Coordinated Reasoning StepFun, Tsinghua, Peking Paper: https://t.co/qn8ShtFAwR GitHub: https://t.co/MHanDNRBYB Hugging Face: https://t.co/aN4hgyO7bE Our report: https://t.co/08pf1rxOug 📬 #PapersAccepted by Jiqizhixin
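The round structure described in the post (many parallel threads, compress their conclusions, feed the compressed messages into the next round) can be sketched as a toy bookkeeping loop. This is only an illustration of why the context stays bounded while total compute grows; it is not the PaCoRe implementation. `stub_reasoner`, `run_rounds`, and counting list items as "tokens" are all assumptions made for this sketch, and the threads run sequentially here for simplicity.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Trajectory:
    trace: List[str]  # full reasoning steps, discarded after the round
    answer: str       # short conclusion, the only part carried forward


def stub_reasoner(problem: str, messages: List[str],
                  thread_id: int, trace_len: int) -> Trajectory:
    """Hypothetical stand-in for one model call (no real model involved)."""
    trace = [f"step-{i}" for i in range(trace_len)]
    return Trajectory(trace=trace, answer=f"candidate-from-thread-{thread_id}")


def run_rounds(problem: str, rounds: int, width: int,
               trace_len: int) -> Tuple[int, int, List[str]]:
    messages: List[str] = []  # compressed messages from the previous round
    total_tokens = 0          # total reasoning "tokens" spent across rounds
    peak_context = 0          # largest number of messages any thread saw
    for _ in range(rounds):
        peak_context = max(peak_context, len(messages))
        # Launch `width` threads; each sees only the compact messages.
        trajectories = [stub_reasoner(problem, messages, t, trace_len)
                        for t in range(width)]
        total_tokens += sum(len(t.trace) for t in trajectories)
        # Compress: keep the conclusions, drop the long traces.
        messages = [t.answer for t in trajectories]
    return total_tokens, peak_context, messages


total, peak, final = run_rounds("toy problem", rounds=3, width=100, trace_len=1000)
print(total, peak, len(final))
```

In this toy accounting, total compute scales with rounds × width × trace length, while the input to any single thread never exceeds one compressed message per thread from the previous round.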

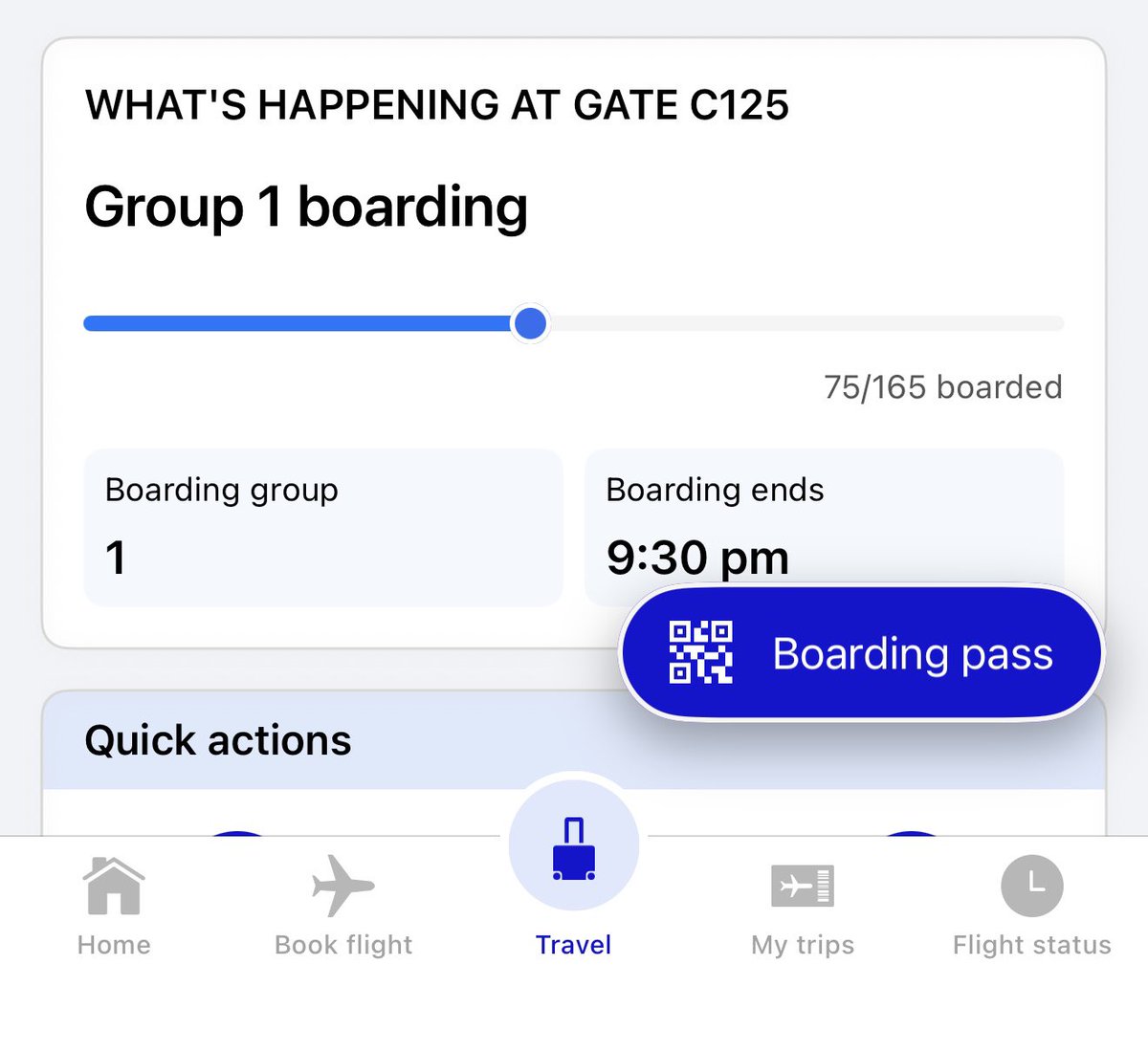

One of the things I love about @united is how good their iOS app is. The latest version has real-time boarding with a passenger progress bar, smart lounge guidance based on your gate and capacity, and more detailed live bag tracking. Lots of thoughtful little details ✈️ https://t.co/IrsVSgH36O

🚨Scientists just reprogrammed leukemia to self-destruct – and it worked. In a major breakthrough, scientists at Institut Pasteur have developed a therapy that forces leukemia cells to self-destruct—and alerts the immune system to wipe out the rest. The team targeted malignant B-cell leukemia with a triple-drug combination that reprograms cancer cells to undergo necroptosis, a form of inflammatory cell death. Unlike the silent shutdown of apoptosis, necroptosis creates an immune alarm, drawing in the body's defenses. Using real-time imaging, researchers watched immune cells swarm the cancer, leading to total tumor elimination in lab models. The challenge was that B-cell cancers typically lack a key protein, MLKL, needed for necroptosis. But the team cleverly sidestepped this using three existing clinical drugs. Together, they bypassed the missing protein and reactivated necroptotic pathways. The result: not just tumor shrinkage, but complete disappearance in multiple preclinical models. While human trials are still to come, the findings hint at a new kind of cancer therapy—one that doesn’t just kill tumors, but trains the immune system to join the fight. And because the drugs are already approved, the road to real-world use could be much shorter. Source: Le Cann, F., et al. (2025). Reprogramming RIPK3-induced cell death in malignant B cells promotes immune-mediated tumor control. Science Advances.

You were right @Scobleizer https://t.co/zuSMreiBwY

Qwen-Image-Edit-2511-Lightning https://t.co/myPYmIQ5ET

Capability overhang means too many gaps today between what the models can do and what most people actually do with them. 2026 Prediction: Progress towards AGI will depend as much on helping people use AI well, in ways that directly benefit them, as on progress in frontier models themselves. 2026 will be about frontier research AND about closing this deployment gap, especially in health care, business, and people's daily lives.

Because most of my fellow Europeans haven't had the time nor stamina to fully acquaint themselves with the complete spectrum of insanity that is the current U.S. administration, I've taken it upon myself to offer a brief, honest introduction to its main characters.🧵 https://t.co/kGqm6LY9N5

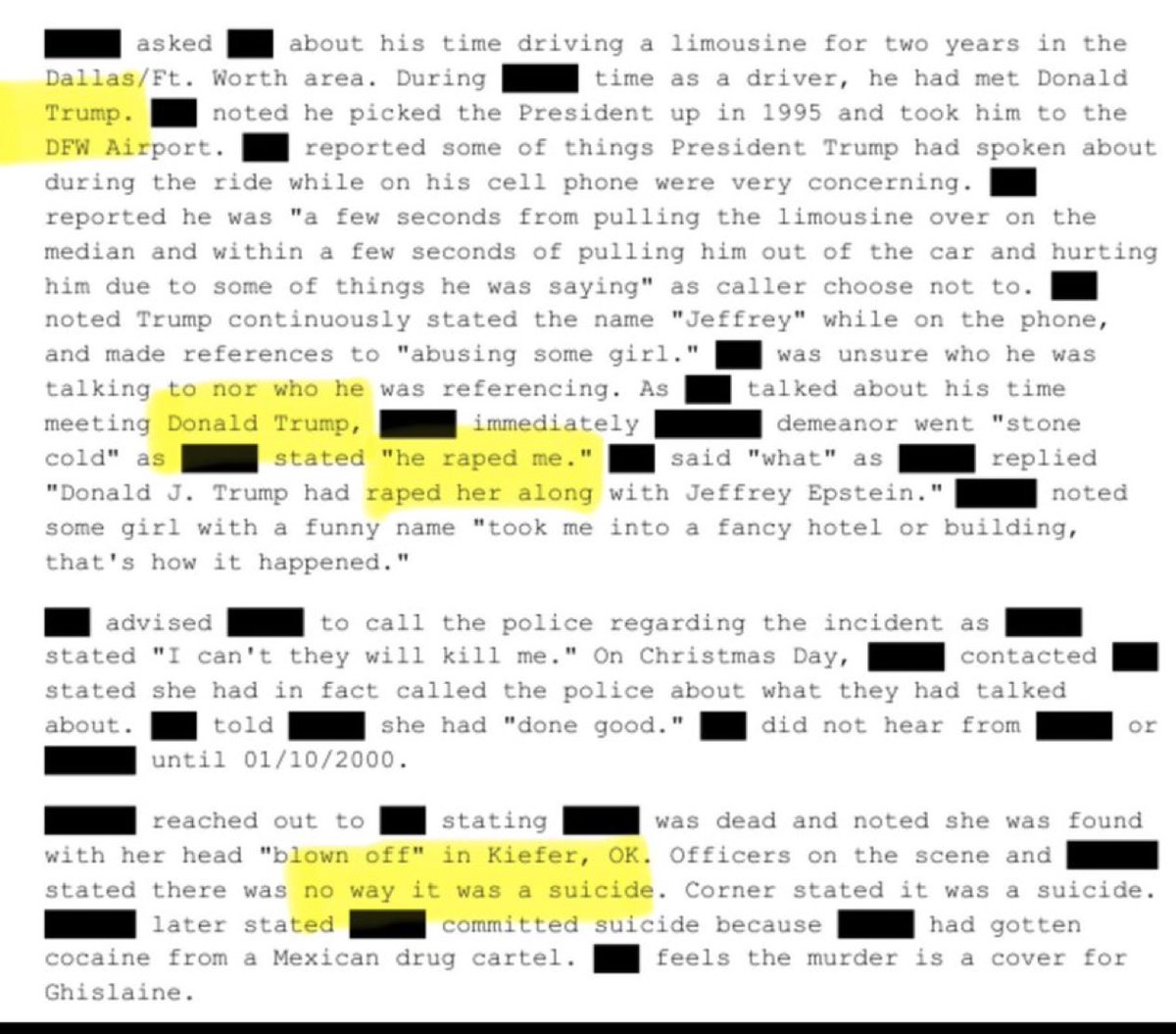

HUGE BREAKING: Data Set 8 of The Epstein Files shows that a victim stated that Trump & Epstein raped her, and that a Limo driver heard Trump in the back speaking to “Jeffrey” about “abusing girls.” The victim then “committed suicide” in January of 2000 after reporting the incident. Police didn’t believe it was suicide. Pls share far & wide!

Along for the ride in unsupervised FSD testing https://t.co/AsyBHnndj8

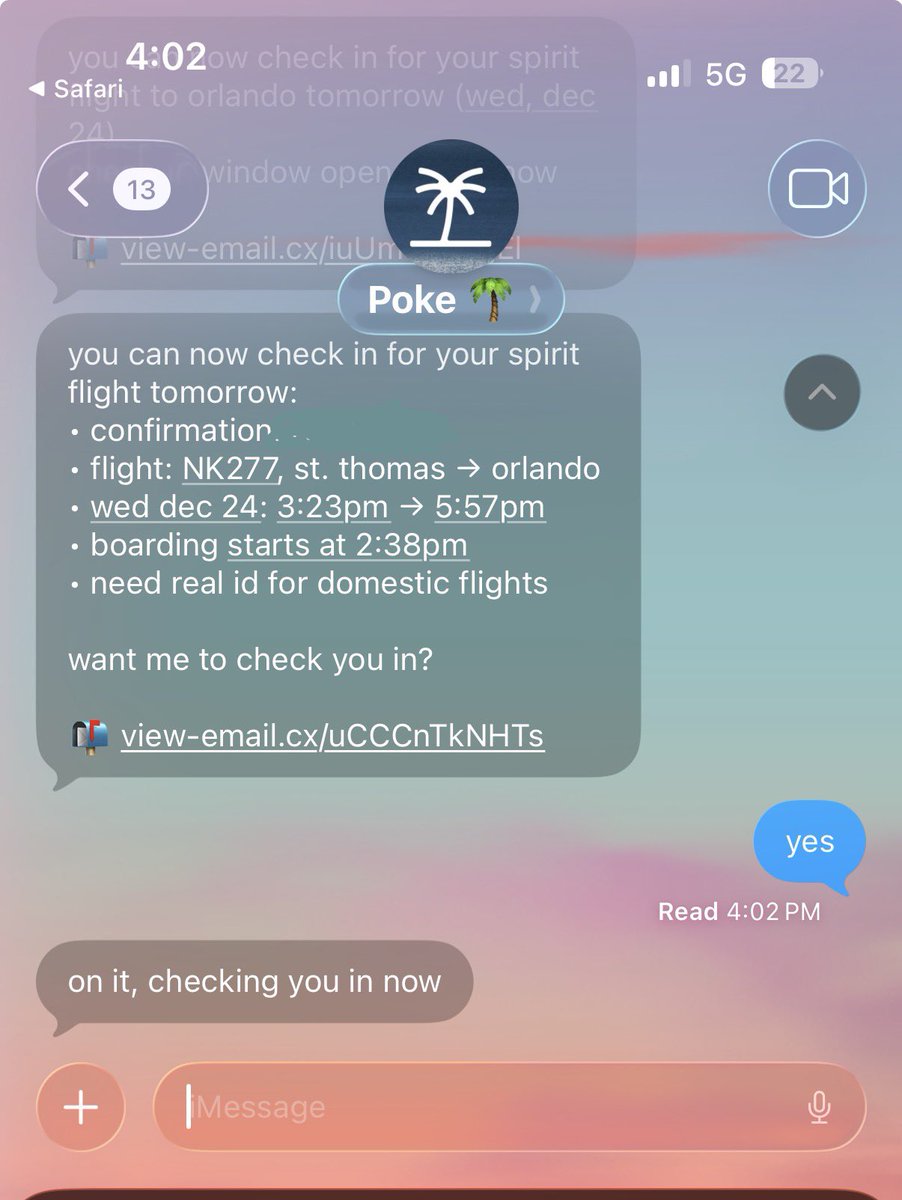

the spirit app wasn’t loading but poke was https://t.co/PZagjAT5ER

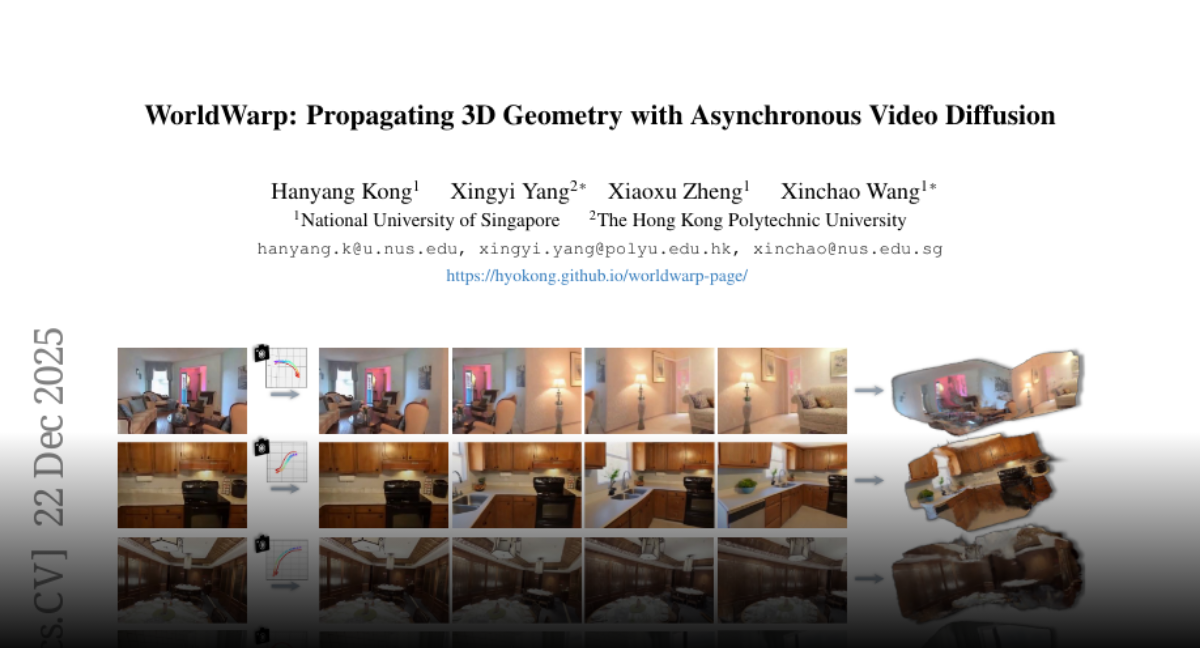

WorldWarp: Propagating 3D Geometry with Asynchronous Video Diffusion https://t.co/7CvB39UGvG

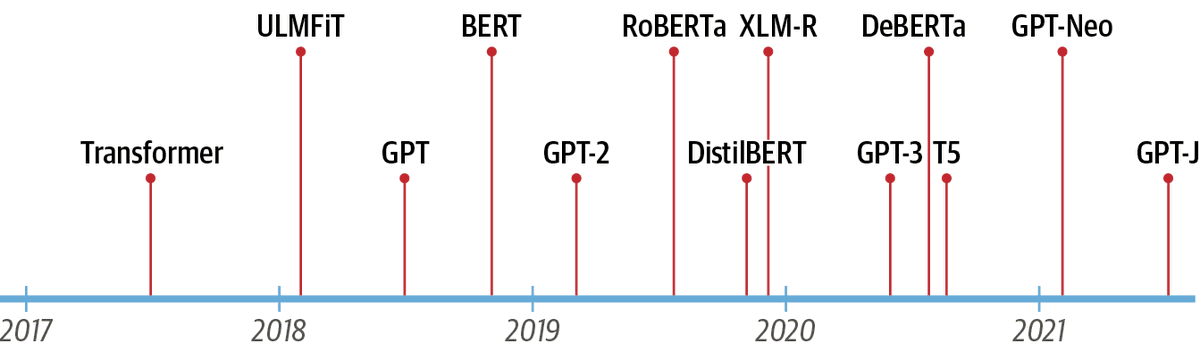

ULMFiT was really ahead of its time, complete with the pre-train -> mid-train -> SFT pipeline we use today https://t.co/ntOnsX6R6P

2017: Pre-training (ULMFiT) 😊

https://t.co/GEOZunWoXj alums and ULMFiT users like Jason have been fine-tuning LLMs since 2017/18. The rest of y'all are years behind, sorry. 🤷

@jeremyphoward 2018: Fine-tuning ULMFiT on song lyrics (DeepLyrics, grad school project). Watching it learn to rhyme was when I knew scaling was going to work.

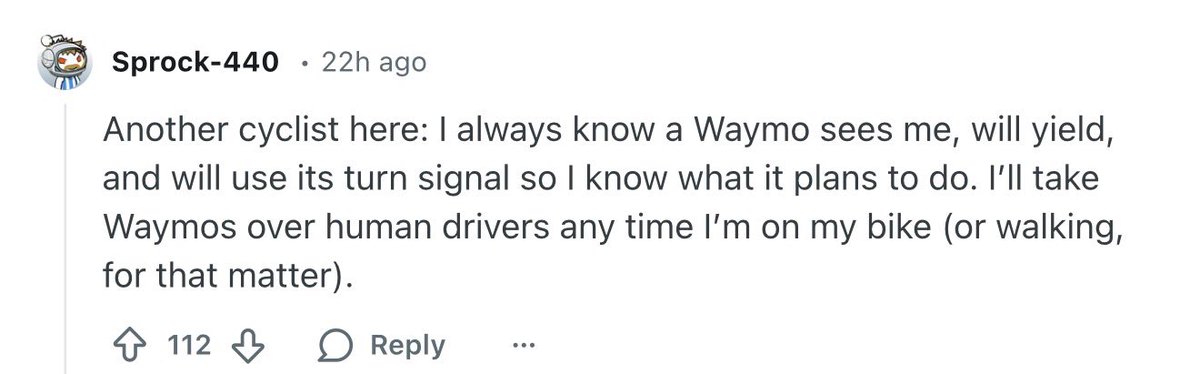

I bike everywhere in SF. I barely ever take a taxi/Uber/Waymo. But if you want to ban Waymo it means you don’t care about cyclists like me. https://t.co/BaQUdVpMjG

https://t.co/OrXsuh4Mrs

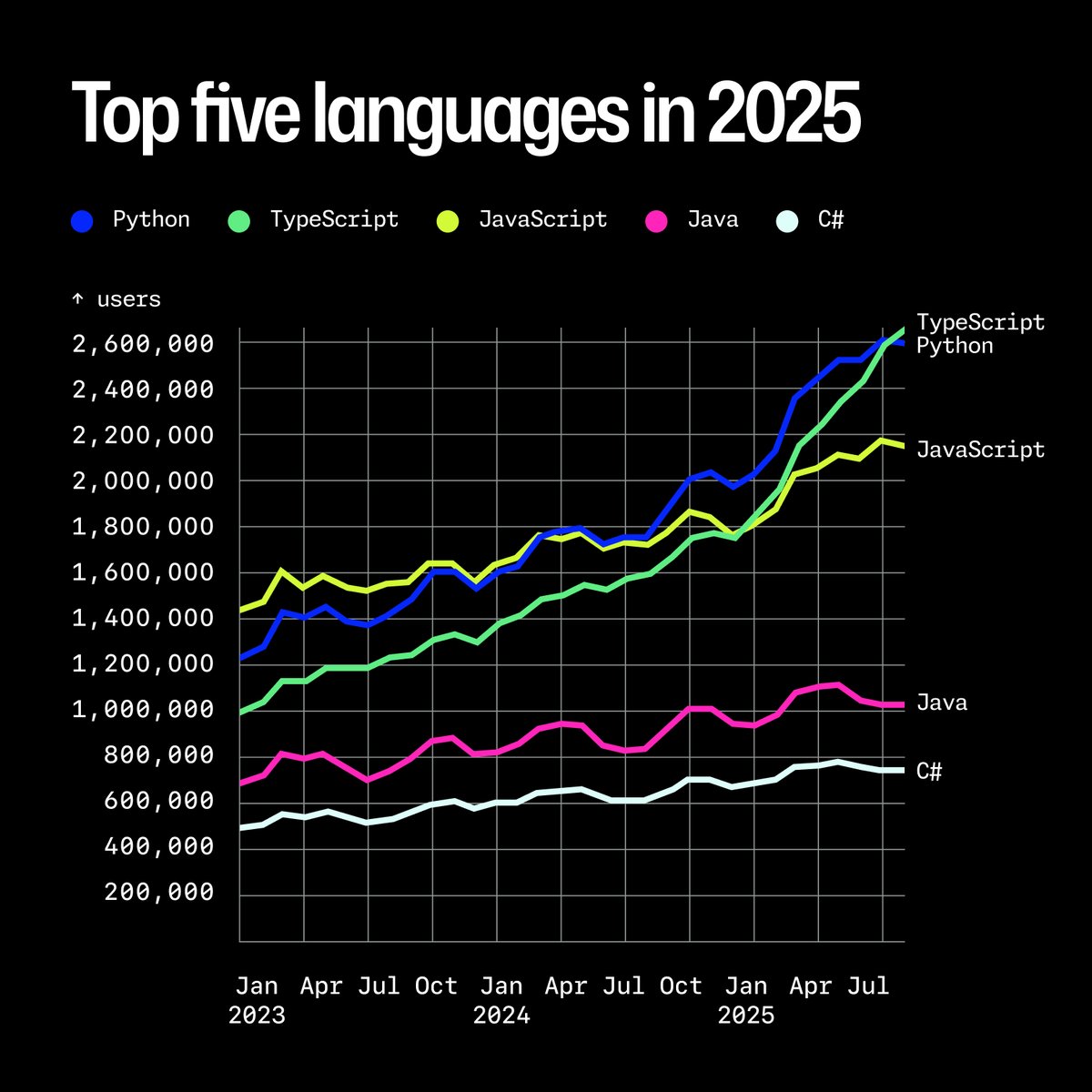

It was the closest race yet when it came to the top programming language of the year. 👀 https://t.co/GKDBVjiI0S

von Neumann gave us "zero-sum" in 1944 and it's one of my favorite examples of linguistic compression. Two words that mean “strictly competitive, fixed pie, stop looking for win-wins.” The term's real gift: it reveals the default human assumption that life is chess and value can’t be created—only transferred. Once you have the label, you start noticing how often that’s wrong
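As a small illustration of the label, a two-player game is zero-sum when the two payoffs add up to zero in every outcome. The sketch below checks that condition for two textbook games; the function name `is_zero_sum` and the specific payoff numbers are illustrative choices, not taken from the post.

```python
from typing import List, Tuple

Payoffs = List[List[Tuple[int, int]]]  # payoffs[row][col] = (player A, player B)


def is_zero_sum(payoffs: Payoffs) -> bool:
    """True if one player's gain equals the other's loss in every outcome."""
    return all(a + b == 0 for row in payoffs for a, b in row)


# Matching pennies: whatever one player wins, the other loses.
matching_pennies: Payoffs = [
    [(1, -1), (-1, 1)],
    [(-1, 1), (1, -1)],
]

# Prisoner's dilemma (payoffs as negative years in prison): the total
# differs by outcome, so joint value can be created or destroyed.
prisoners_dilemma: Payoffs = [
    [(-1, -1), (-3, 0)],
    [(0, -3), (-2, -2)],
]

print(is_zero_sum(matching_pennies))   # True
print(is_zero_sum(prisoners_dilemma))  # False
```

The second game is the kind of case the post points at: the "fixed pie" assumption fails because mutual cooperation leaves both players better off than mutual defection.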

If you support Native American peoples' history & culture 🥰 say “Yes” https://t.co/DmIpIrRUUn

☕️ @LatentLabs_, Outpost Bio and #AWS welcome you for breakfast on Jan 14 at #JPM2026. Hear from @biogerontology & discover how #AI is transforming drug discovery. 👉 https://t.co/aRQtFz2scX 🧬 Connect with leaders & VCs to explore the next generation of translational tools & biological FMs.

What if the safest driver on the road isn’t a human, but Tesla’s AI? LikeFolio's @AndySwan says Tesla FSD is already far safer than human drivers, in some cases multiple times safer! The problem? Tesla barely markets it. If people understood that pressing a button and letting AI drive is safer than driving yourself, it could be a game-changer by 2026–2027. $TSLA

Everything PyTorch Foundation does is shaped by the people who build, maintain, use, and support our projects. From contributors and maintainers to researchers, practitioners, and partners, your work continues to move the ecosystem forward. We’re grateful for the collaboration that defined the past year and look forward to what we’ll build together in the year ahead. ❤️ ❤️🔥 🔥 🔗 Learn more and get involved: https://t.co/bXzE4NH7i7 #PyTorch #OpenSource #AIInfrastructure

Splat's app uses AI to turn your photos into coloring pages for kids https://t.co/TJTDgCWE9h @SarahPerezTC @techcrunch

New York’s New AI Law Creates Oversight Office, Standards https://t.co/TwOFyrn7wt @govtechnews

AI 'friend' could help you plan your next vacation https://t.co/EqK69XGfiY @FuturityNews

AI translation is replacing interpreters in GP care: here’s why that’s troubling https://t.co/VV71XxDurD @ConversationUS @ConversationUK