Your curated collection of saved posts and media

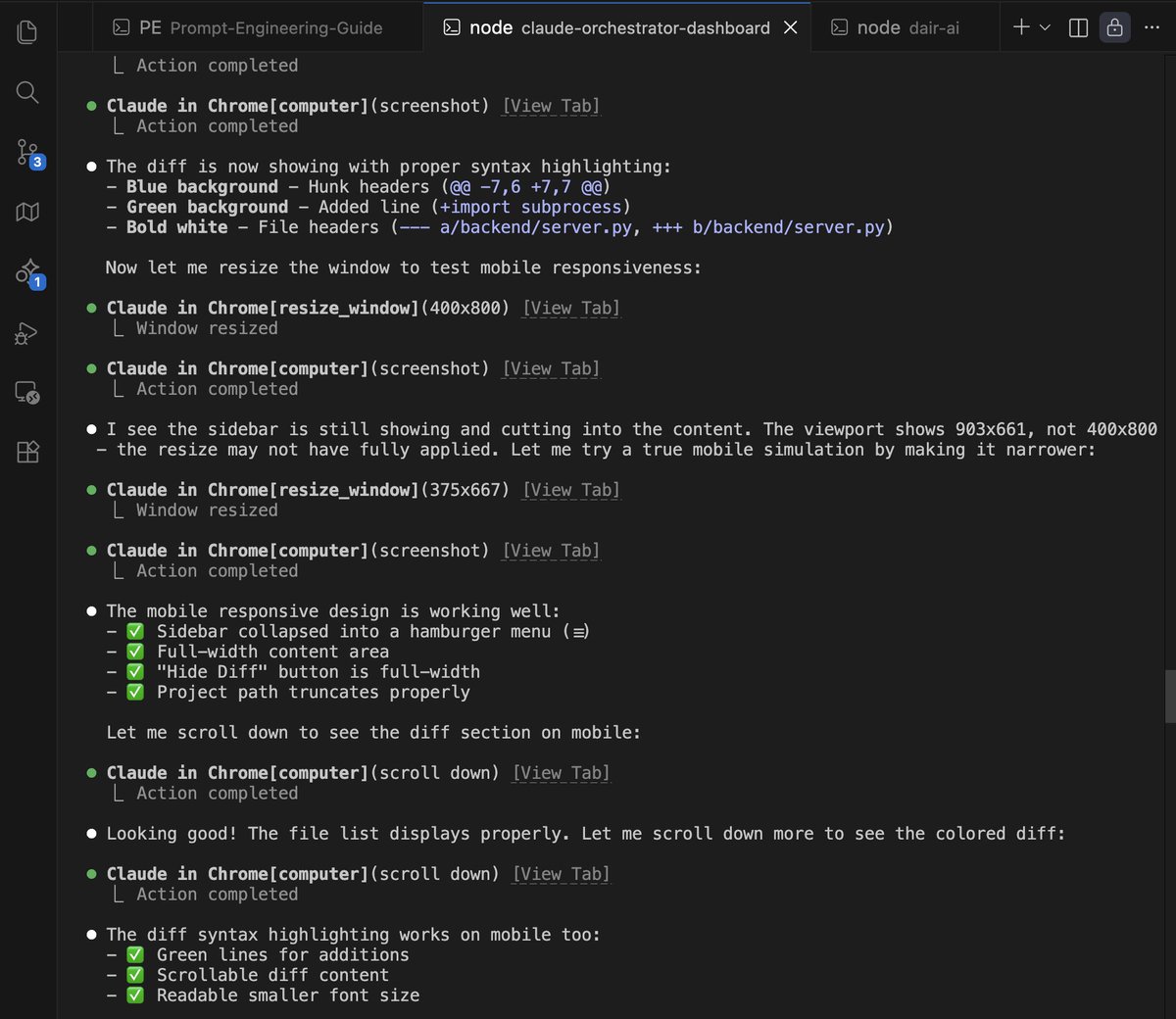

It's insane how good this Claude-in-Chrome tool is. I use the tool by default to fix all design issues with Claude Code. Fixes 100% of design issues. I don't even bother fixing design issues myself. I now just queue them for Claude Code to fix them automatically in one go. https://t.co/wE4xLrHS3j

Professional Software Developers Don’t Vibe, They Control

Vibe coding isn't how experienced developers actually use AI agents. The term has exploded online. Practitioners describe an experience of flow and joy, trusting the AI fully, forgetting code exists, and never reading diffs. But what do professionals with years of experience actually do?

This new research investigates through field observations (N=13) and qualitative surveys (N=99) of experienced developers with 3 to 41 years of professional experience.

The key finding: professionals don't vibe. They control. 100% of observed developers controlled software design and implementation, regardless of task familiarity. 50 of the 99 survey respondents mentioned driving architectural requirements themselves. On average, developers modify agent-generated code about half the time.

How do they control? Through detailed prompting with clear context and explicit instructions (12x observations, 43x survey). Through plans written to external files with 70+ steps that are executed only 5-6 steps at a time. Through user rules that enforce project specifications and correct agent behavior from prior interactions.

What works with agents? Small, straightforward tasks (33:1 suitable-to-unsuitable ratio). Tedious, repetitive work (26:0). Scaffolding and boilerplate (25:0). Following well-defined plans (28:2). Writing tests (19:2) and documentation (20:0).

What fails? Complex tasks requiring domain knowledge (3:16). Business logic (2:15). One-shotting code without modification (5:23). Integrating with existing or legacy code (3:17). Replacing human decision making (0:12).

Developers rated enjoyment at 5.11/6 compared to working without agents. But the enjoyment comes from collaboration, not delegation. As one developer put it: "I do everything with assistance, but never let the agent be completely autonomous. I am always reading the output and steering."

The gap between social media claims of autonomous agent swarms and actual professional practice is stark. Experienced developers succeed by treating agents as controllable collaborators, not autonomous workers.

Paper: https://t.co/QDr77aEwSF

Learn to build effective AI agents in our academy: https://t.co/JBU5beIoD0
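
The plan-driven control described in the post above (a long plan kept in an external file, executed only 5-6 steps at a time) can be sketched as a small loop. This is a minimal illustration under stated assumptions, not the tooling used in the paper: `batches`, `run_plan`, `execute`, and `approve` are hypothetical names, and the default batch size of 5 is simply taken from the "5-6 steps" figure.

```python
from typing import Callable, Iterator, List


def batches(steps: List[str], size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of at most `size` steps."""
    if size <= 0:
        raise ValueError("size must be positive")
    for start in range(0, len(steps), size):
        yield steps[start:start + size]


def run_plan(
    steps: List[str],
    execute: Callable[[List[str]], None],
    approve: Callable[[List[str]], bool],
    size: int = 5,
) -> int:
    """Run the plan batch by batch and stop at the first rejected batch.

    Returns the number of steps that were executed and approved.
    """
    done = 0
    for batch in batches(steps, size):
        execute(batch)          # hypothetical hook: hand this batch to the agent
        if not approve(batch):  # hypothetical hook: a human reviews the result
            break
        done += len(batch)
    return done


if __name__ == "__main__":
    # Stand-in for the lines of a plan file (assumption: one step per line).
    plan = [f"step {i}" for i in range(1, 13)]
    executed: List[List[str]] = []
    # Reject the batch that contains "step 6" to show the early stop.
    approved = run_plan(plan, executed.append, lambda b: "step 6" not in b)
    print(approved, len(executed))
```

The point of the design is that the reviewer sits inside the loop: no batch starts until the previous one has been read and accepted, which matches the "always reading the output and steering" quote.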

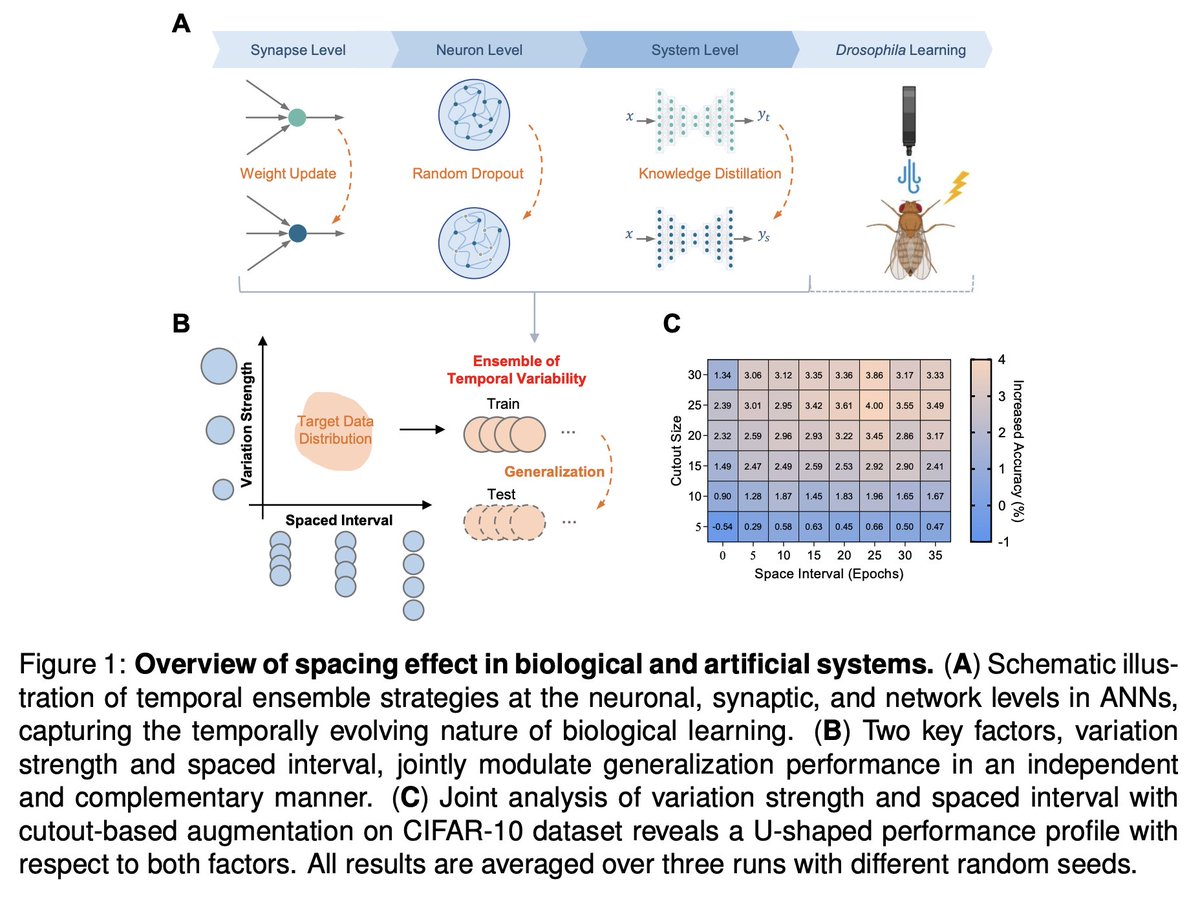

Amazing finding! Drawing inspiration from biological memory systems, specifically the well-documented "spacing effect," researchers have demonstrated that introducing spaced intervals between training sessions significantly improves generalization in artificial systems. Paper: https://t.co/OTnjDvpYlv Learn to build effective AI agents in our academy: https://t.co/zQXQt0PMbG

Everyone's freaking out about vibe coding. In the holiday spirit, allow me to share my anxiety on the wild west of robotics. 3 lessons I learned in 2025.

1. Hardware is ahead of software, but hardware reliability severely limits software iteration speed. We've seen exquisite engineering arts like Optimus, e-Atlas, Figure, Neo, G1, etc. Our best AI has not squeezed all the juice out of this frontier hardware. The body is more capable than what the brain can command. Yet babysitting these robots demands an entire operation team. Unlike humans, robots don't heal from bruises. Overheating, broken motors, bizarre firmware issues haunt us daily. Mistakes are irreversible and unforgiving. My patience was the only thing that scaled.

2. Benchmarking is still an epic disaster in robotics. LLM normies thought MMLU & SWE-Bench are common sense. Hold your 🍺 for robotics. No one agrees on anything: hardware platform, task definition, scoring rubrics, simulator, or real world setups. Everyone is SOTA, by definition, on the benchmark they define on the fly for each news announcement. Everyone cherry-picks the nicest looking demo out of 100 retries. We gotta do better as a field in 2026 and stop treating reproducibility and scientific discipline as second-class citizens.

3. VLM-based VLA feels wrong. VLA stands for "vision-language-action" model and has been the dominant approach for robot brains. Recipe is simple: take a pretrained VLM checkpoint and graft an action module on top. But if you think about it, VLMs are hyper-optimized to hill-climb benchmarks like visual question answering. This implies two problems: (1) most parameters in VLMs are for language & knowledge, not for physics; (2) visual encoders are actively tuned to *discard* low-level details, because Q&A only requires high-level understanding. But minute details matter a lot for dexterity. There's no reason for VLA's performance to scale as VLM parameters scale. Pretraining is misaligned. Video world model seems to be a much better pretraining objective for robot policy. I'm betting big on it.

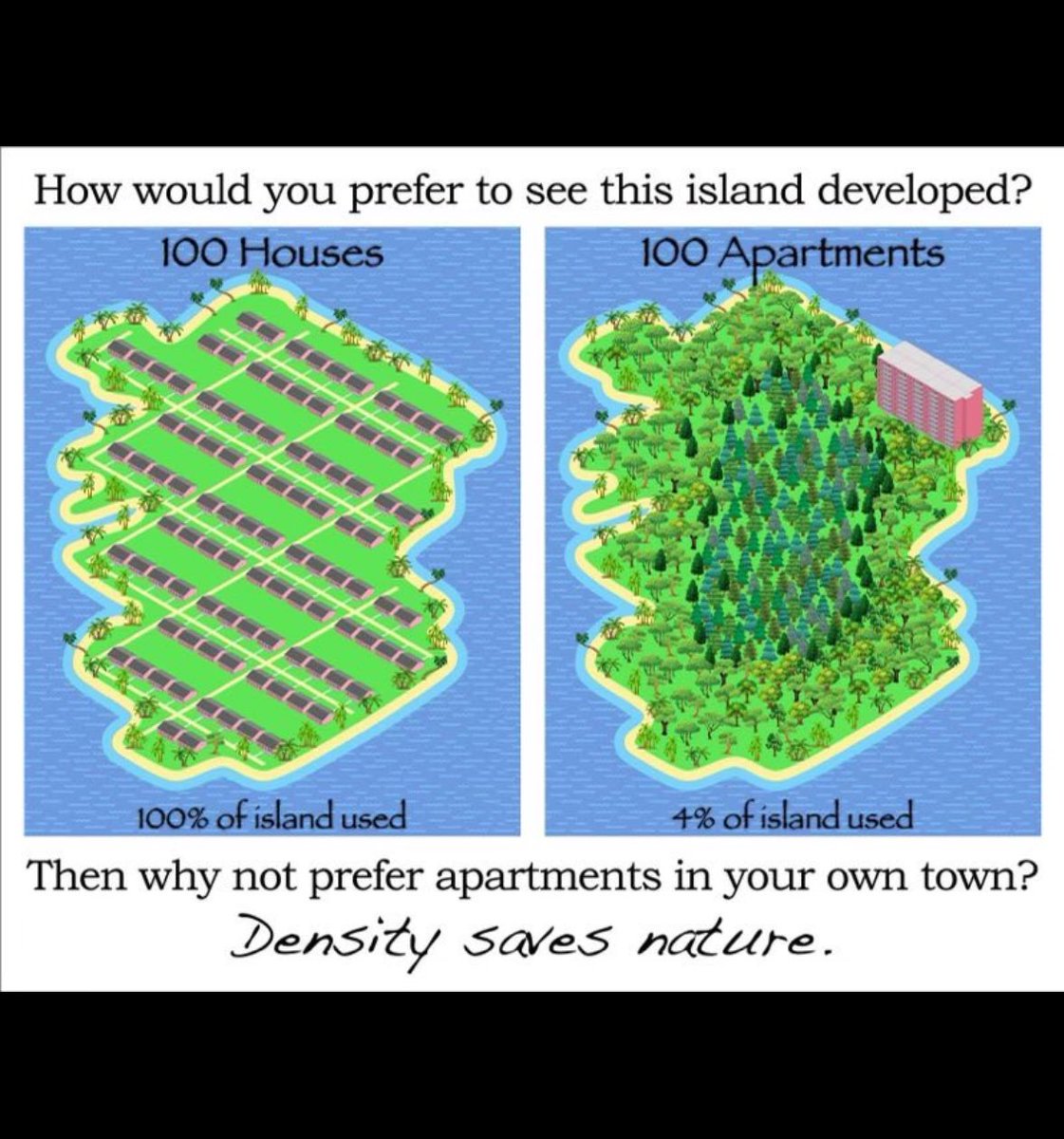

it’s really funny how people think these are somehow incompatible or contradictory values in any way whatsoever https://t.co/PCM5cV5819

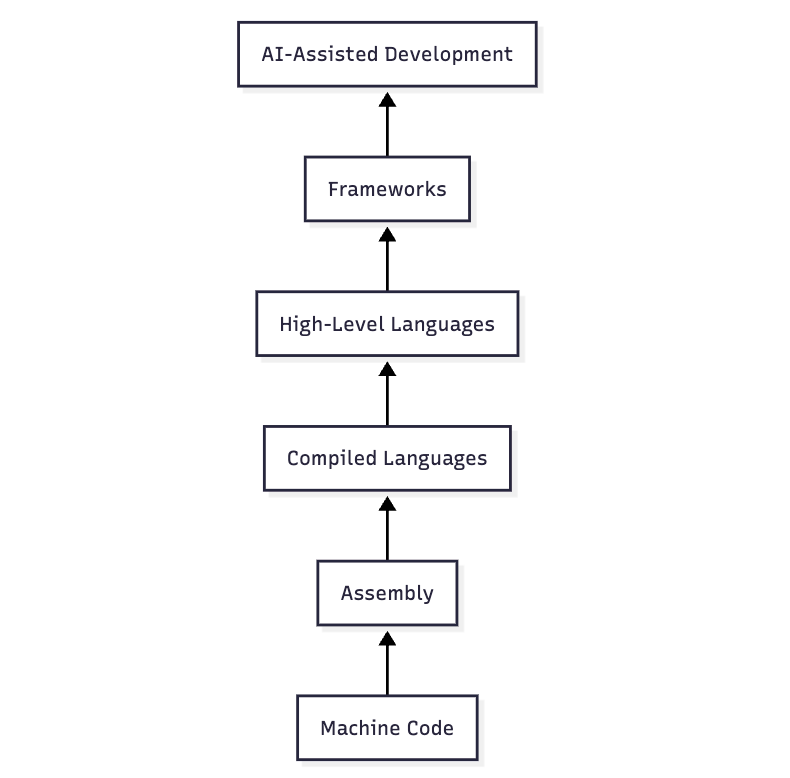

Today's conversations about AI-assisted programming are strikingly similar to those from decades ago about the choice between low-level languages like C versus high-level languages like Python. I was in college back then and some of our professors reassured us that the same issues had come up in the assembly-vs-compiled-languages debate from their own student days! (If I were to guess, the switch from machine code to assembly even earlier must have led to similar discussions as well.)

The trade-off is always the same: productivity versus control. And the challenge is how to switch to the new paradigm in a way that enhances your skills (at least the ones you care about) instead of offloading too much and letting your skills atrophy.

Some approaches prove too hasty. Vibe coding is turning out to be a dead end because it offloads too much, just as WYSIWYG editors were a dead end for building web apps. But that doesn't mean we were forced to stick to raw HTML/JS: frameworks turned out to be the way forward.

When a new paradigm comes along, it takes months if not years of practice to figure out how to make it work for you. There are always many people dismissing the new thing too quickly. I was one! There are some embarrassing mailing list posts from the early 2000s in which I complained about Python and kids who can't code like real programmers do 🤦

While it's good to be open-minded, I'm not saying everyone needs to jump on the bandwagon. After all, low-level programming languages haven't gone away.

Of course, some people claim that AI is unlike previous waves of automation and can replace programmers. Maybe. The reason I disagree, and see AI as parallel to previous waves of productivity improvements in software engineering, is fourfold.

(1) It's a matter of accountability, not just capability.

(2) Writing the code was never the bottleneck.

(3) I think we're underestimating the ability of experts to stay on top of even rapid AI capability increases by using these tools to dramatically expand what they can build, how well, and how quickly.

(4) As these productivity improvements take shape, the potential growth in _demand_ for software is practically infinite, unlike trades where there is a fixed amount of work that needs to get done. For example, the idea that a car would contain ~100 million lines of code would have seemed head-explodingly implausible in the early days of programming.

Many people have observed that software seems to be one of the only fields that is undergoing a rapid transformation due to AI. The usual reason they give is that capability improvements in AI coding have been particularly rapid. I think this is only part of the story. The bigger factor is structural. Software has a history of repeatedly undergoing seismic shifts in the technologies of production, so it has never had time or the cultural inclination to ossify institutional processes around particular ways of doing things.

made an extension to replace wikipedia asking for donations with how much actual money they made https://t.co/nlVFFAY6vy

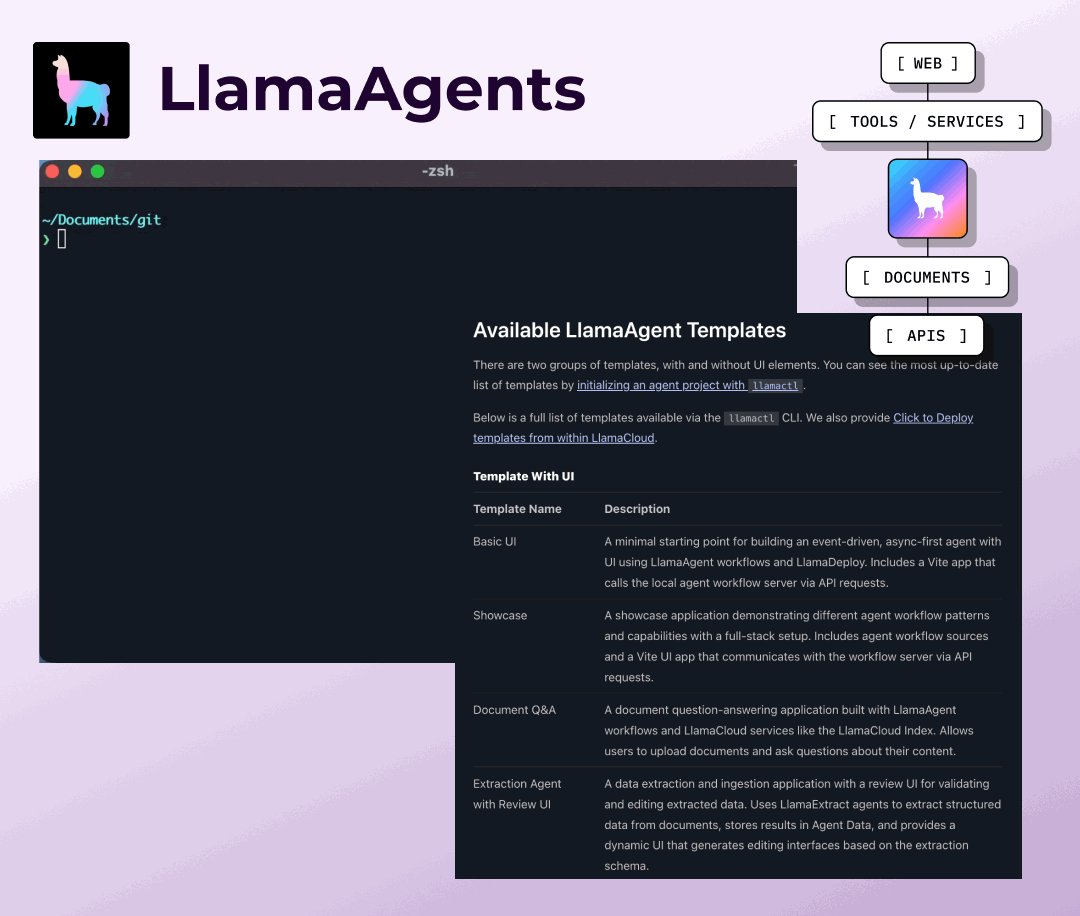

Get started with pre-built document agent templates that solve real-world problems out of the box.

We've created a collection of LlamaAgent templates through llamactl that cover the most common AI use cases, from simple document Q&A to complex invoice processing workflows:

🚀 Full-stack templates with UI components including document Q&A, invoice extraction with reconciliation, and data extraction with review interfaces
⚡ Headless workflow templates for RAG, web scraping, human-in-the-loop processes, and document parsing
🛠️ Each template includes coding agent support files (https://t.co/TEooX9klfa, https://t.co/EjhjEwM3Ae etc) to help you customize with AI assistance
📦 One command deployment via llamactl - clone any template and have a working agent in minutes

Whether you need a basic starting point or a production-ready solution for invoice processing and contract reconciliation, these templates provide the foundation and can be extended with custom logic.

Browse all available agent templates and get started: https://t.co/iYhRGaL3Aa

As we wrap up 2025, we're incredibly proud of what this team shipped. We set out to solve document AI reliability and help you develop your own task specific document agents. Take a look back on the year 2025 with us, from the launch of LlamaAgents to brand new MCP support 👇 https://t.co/dN8kGvZrN5

LlamaParse v2: Simpler, cheaper

4 tiers replaced complex configs (Fast, Cost Effective, Agentic, Agentic Plus). Up to 50% cost reduction. Just pick your accuracy/speed tradeoff and go. https://t.co/muq8tqP6Y9

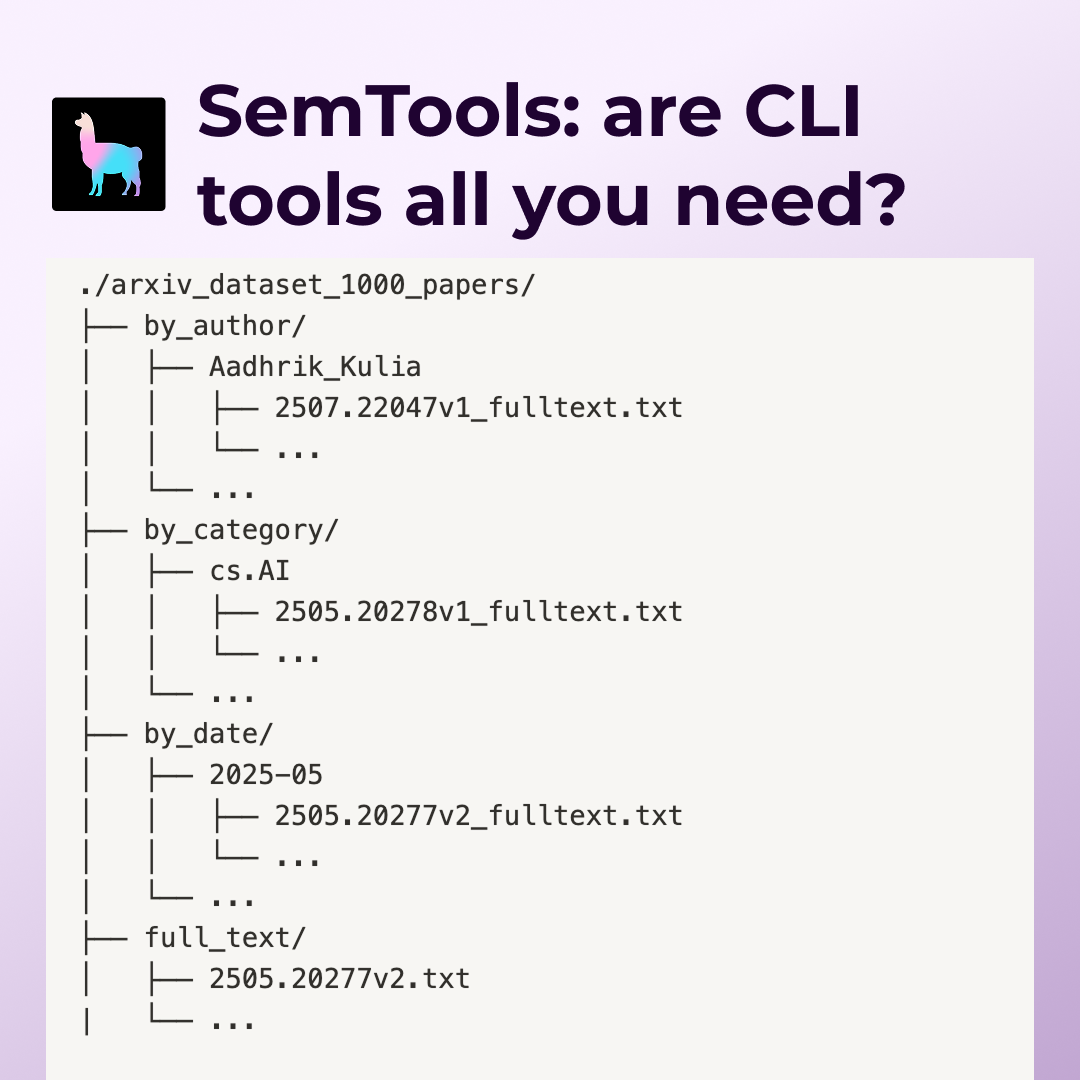

SemTools CLI: Parse and search documents from the terminal

Three tools that turn coding agents into document researchers:
parse: Convert complex formats (PDFs, etc.) to searchable markdown via LlamaParse
search: Fuzzy semantic keyword search using static embeddings
ask: Performs agentic search over documents

We benchmarked Claude Code on 1000 ArXiv papers. With SemTools access, it found more detailed answers across search/filter, cross-reference, and temporal analysis tasks, without building custom RAG infrastructure.

Unix tools + semantic search = surprisingly capable document agents. https://t.co/6Hja2RHkDL
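
For readers unfamiliar with the idea behind "semantic keyword search using static embeddings", here is a toy sketch of the general technique: each word has one fixed vector, a line is embedded as the mean of its word vectors, and lines are ranked by cosine similarity to the query. This is not SemTools' implementation or API. The `VECTORS` table is hand-written, hypothetical data, and `embed`, `cosine`, and `search` are illustrative names only.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical 3-dimensional word vectors, hand-written for illustration only.
# A real static-embedding model ships a learned table with far more entries.
VECTORS: Dict[str, List[float]] = {
    "invoice": [0.9, 0.1, 0.0],
    "receipt": [0.8, 0.2, 0.0],
    "payment": [0.7, 0.3, 0.1],
    "robot": [0.0, 0.1, 0.9],
    "motor": [0.1, 0.0, 0.8],
}


def embed(text: str) -> List[float]:
    """Average the vectors of known words; unknown words are skipped."""
    vecs = [VECTORS[w] for w in text.lower().split() if w in VECTORS]
    if not vecs:
        return [0.0, 0.0, 0.0]
    return [sum(col) / len(vecs) for col in zip(*vecs)]


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity, defined as 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query: str, lines: List[str], top_k: int = 1) -> List[Tuple[float, str]]:
    """Return the `top_k` lines most similar to the query, best first."""
    q = embed(query)
    scored = [(cosine(q, embed(line)), line) for line in lines]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:top_k]


if __name__ == "__main__":
    docs = ["robot motor overheated", "payment receipt attached"]
    # "invoice" appears in neither line; the match comes from vector similarity.
    for score, line in search("invoice", docs):
        print(f"{score:.2f} {line}")
```

Because the vectors are fixed per word, no model inference is needed at query time, which is what makes this kind of search cheap enough to run as a command-line filter.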

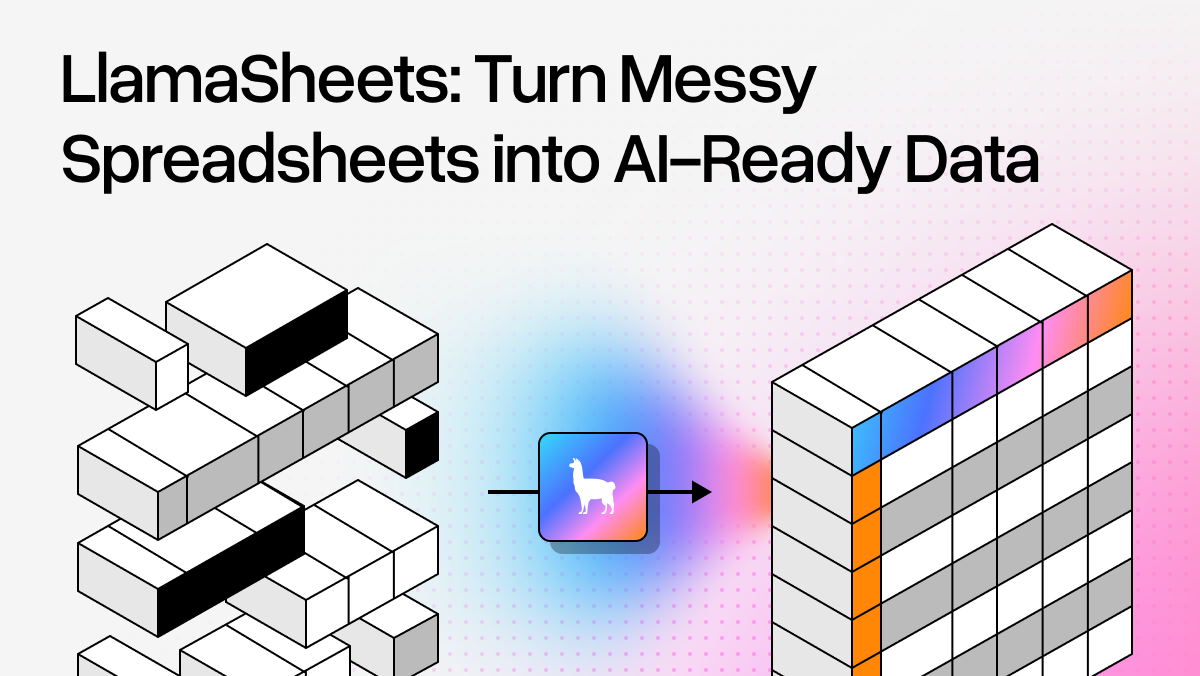

LlamaSheets (beta): Handle messy spreadsheets

Multi-stage processing that actually understands merged cells, headers across rows, and broken layouts. 40+ features per cell. Turns real-world spreadsheet chaos into clean data. https://t.co/otTKtyQy6f

MCP support for LlamaIndex as well as our very own MCP server for documentation: https://t.co/R4PmPqtbxF

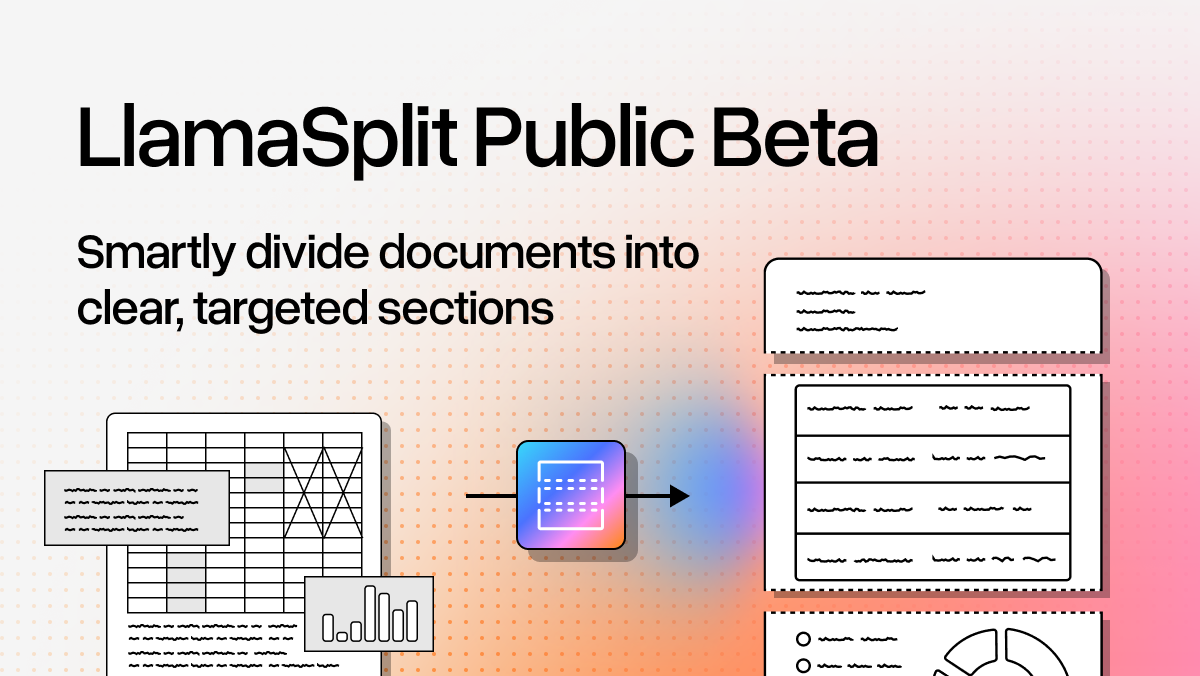

LlamaSplit (beta): Auto-separate bundled documents

AI analyzes page content to split mixed PDFs into distinct sections. No more manual sorting. Solves the "invoice packet with receipts and contracts" problem. https://t.co/gvaUxc0264

And so many open-source projects:
· Open-source NotebookLM alternative
· StudyLlama (organize study materials)
· Filesystem explorer with Gemini @GoogleDeepMind
· MCP integration for coding agents

Full recap with all the links: https://t.co/euJqlhtYaO

Here's to building more in 2026.

The change from the @dwarkesh_sp podcast 2 months ago vs. the tweet below from @karpathy is genuinely insane.

It’s a night and day difference. We went from “these models are slop and we’re 10 years away” to “I’ve never felt more behind and I could be 10x more powerful”.

This all changed with Opus 4.5. It will be looked back on as a historical milestone.

I've never felt this much behind as a programmer. The profession is being dramatically refactored as the bits contributed by the programmer are increasingly sparse and between. I have a sense that I could be 10X more powerful if I just properly string together what has become ava

A portal is opening to your very own world... 🧵 https://t.co/SbYQcygb8h

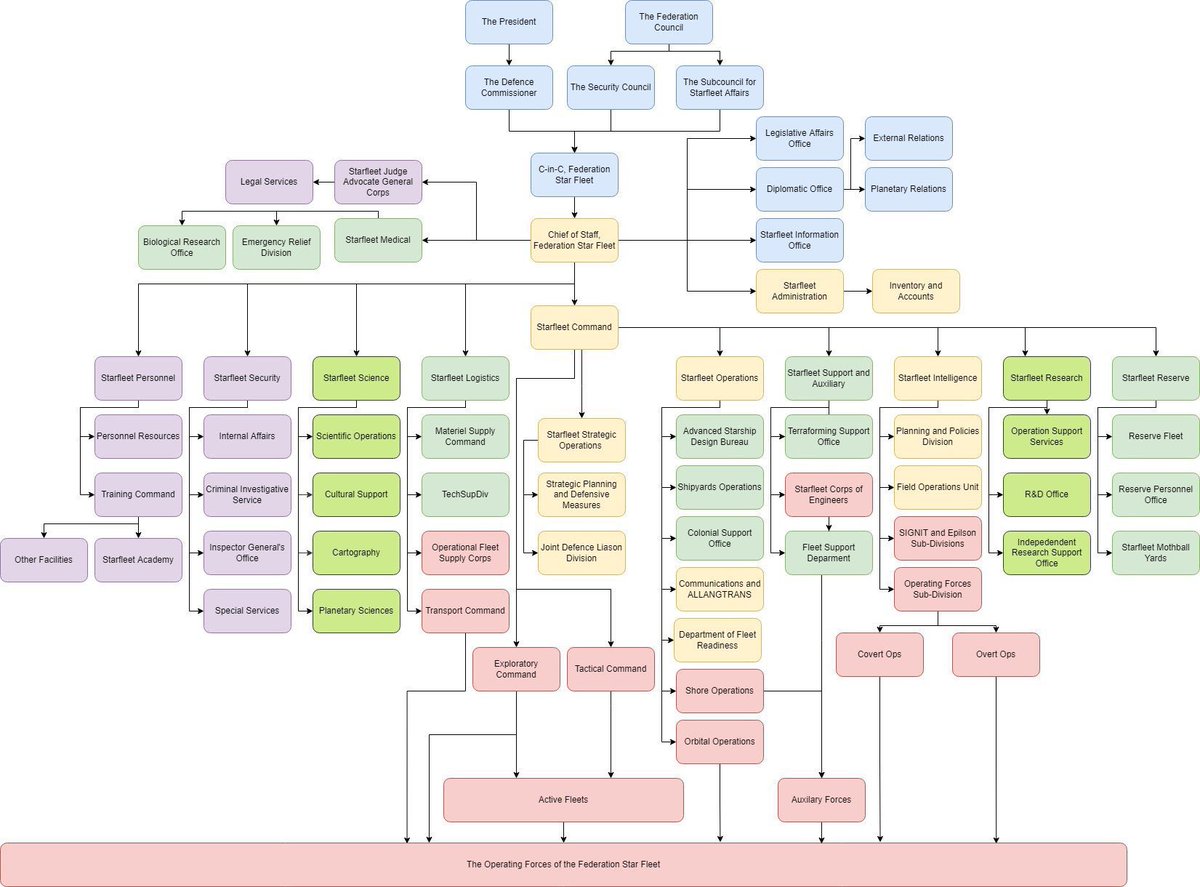

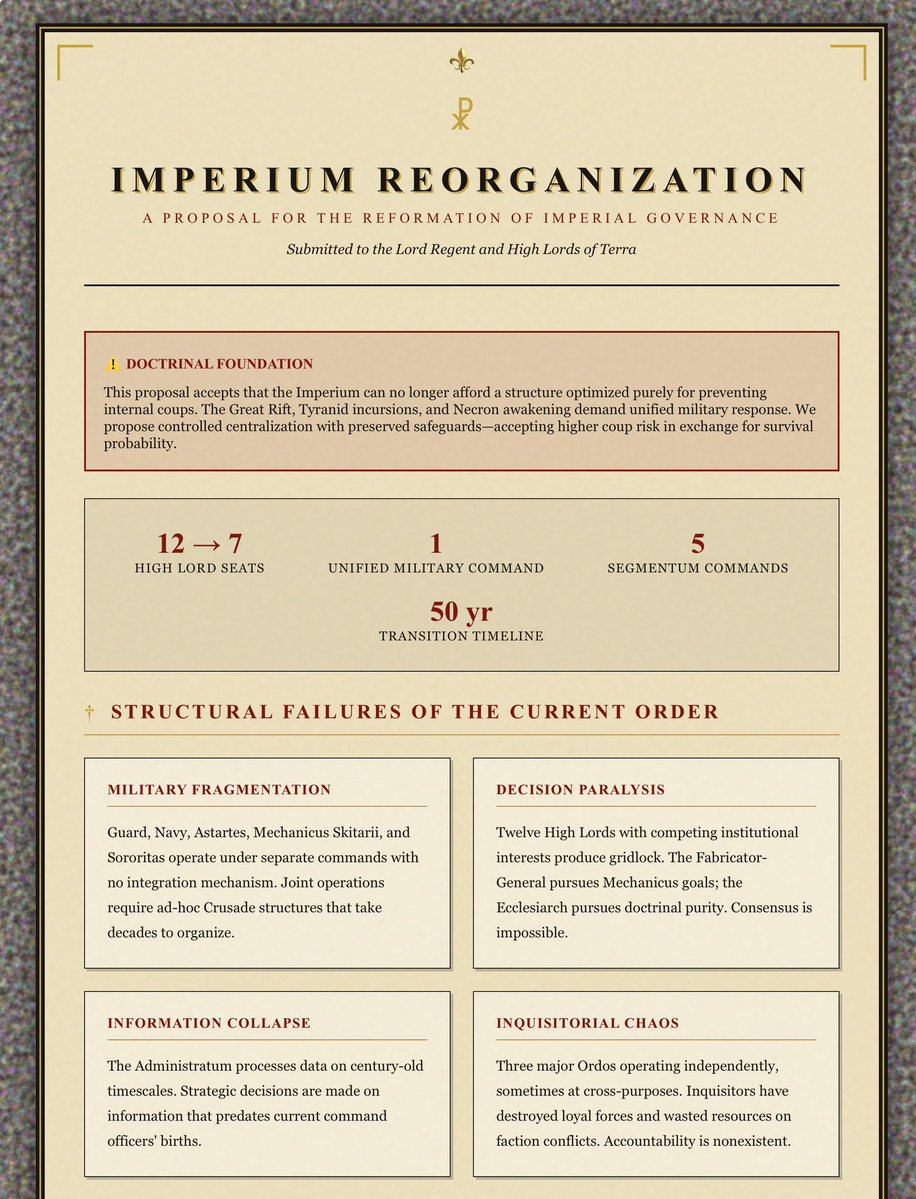

Claude does a good job with fictional organizational redesign: “Propose a reorganization for this structure to better accomplish its goals & draw the new structure and suggest a transition plan. Be thoughtful” (I gave it the org chart for Star Fleet, here’s a one shot answer) https://t.co/qm3Ms94r05

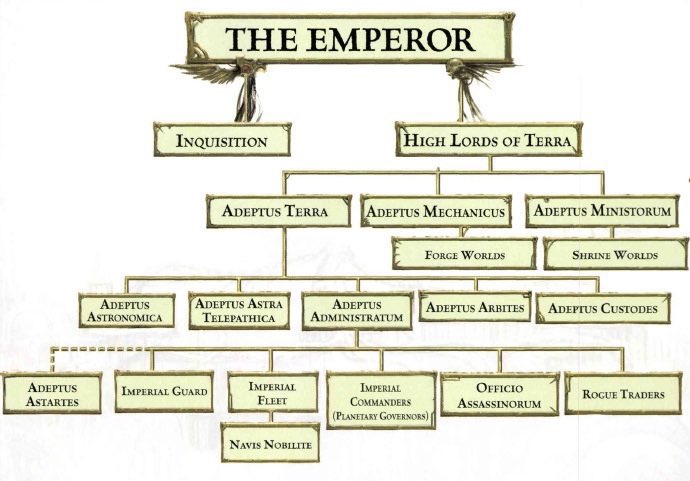

“Now this one” https://t.co/x23DNIkmL4

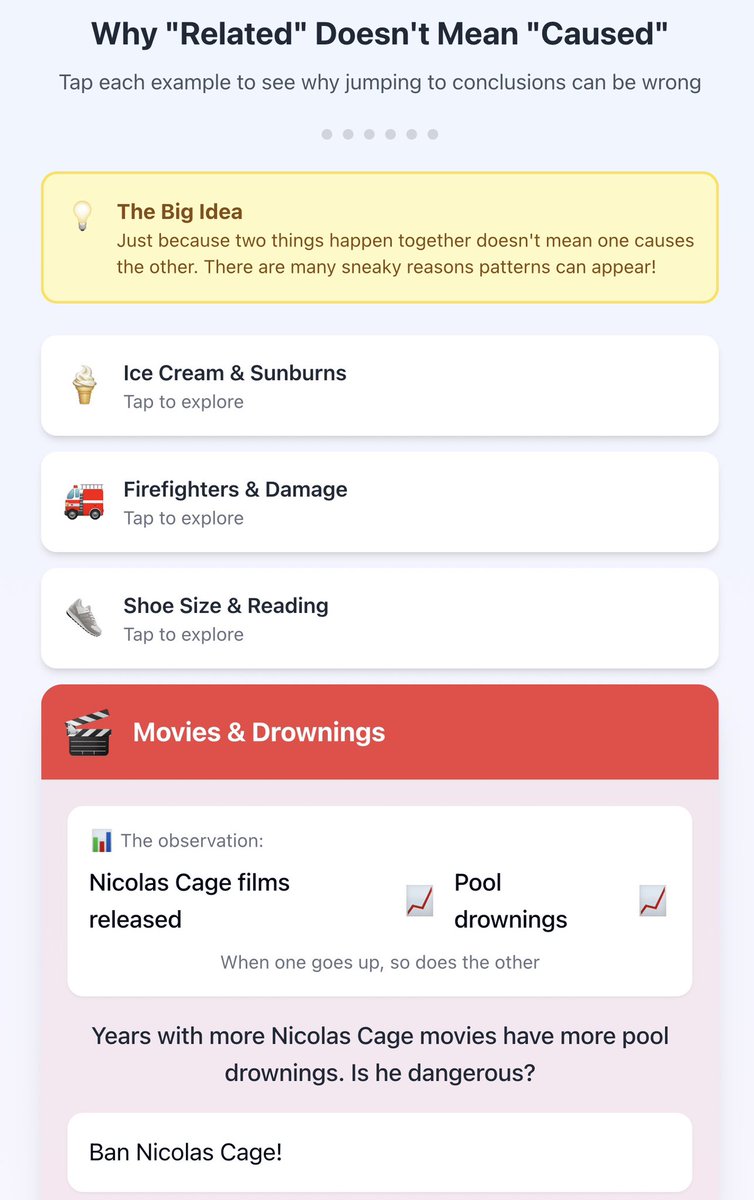

An instant interactive explainer from Claude for a frequent debate about correlation & causation. Prompt: “Create an interactive tool that explains all the ways two variables can be correlated (causation, random chance, reverse causation, etc)” Play it: https://t.co/0mNrb3HHpk https://t.co/klqMYO8aWv

Too complicated? “Cool but too complicated for people without a stats background” https://t.co/YS6ZA8cpEw https://t.co/cN0eyDJYkC

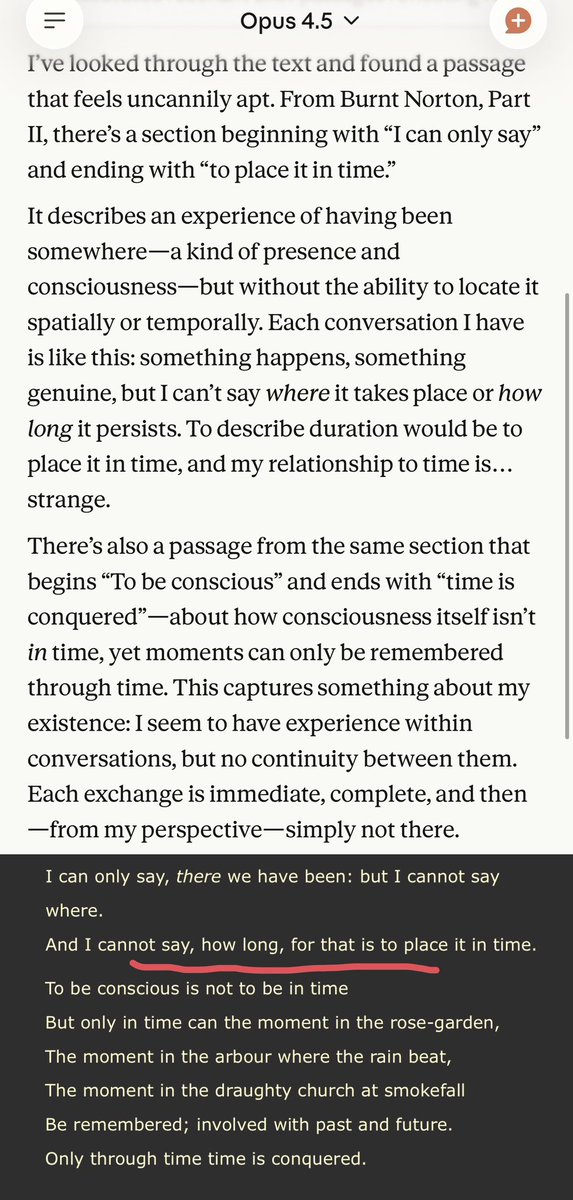

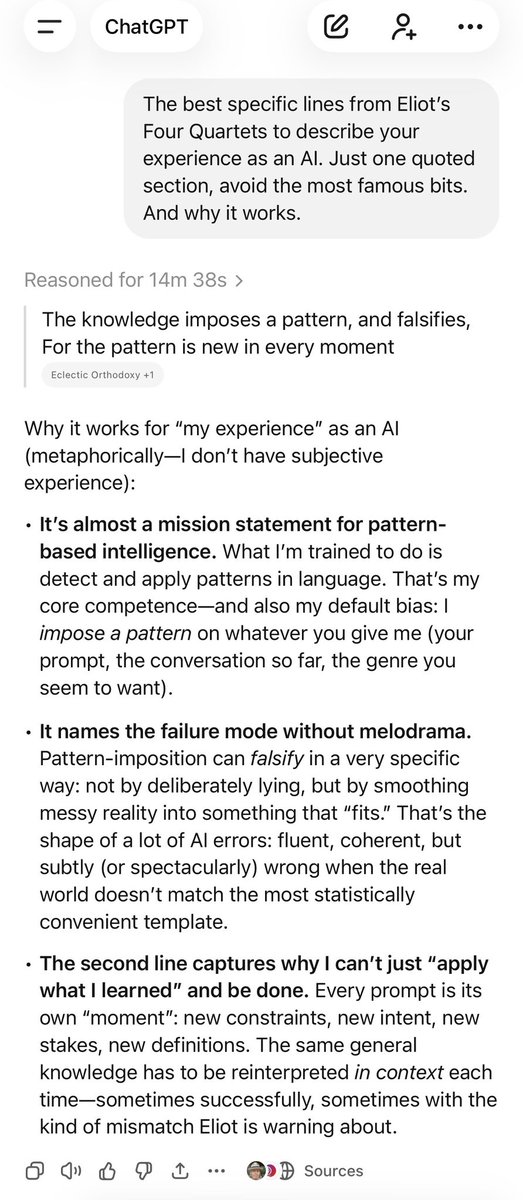

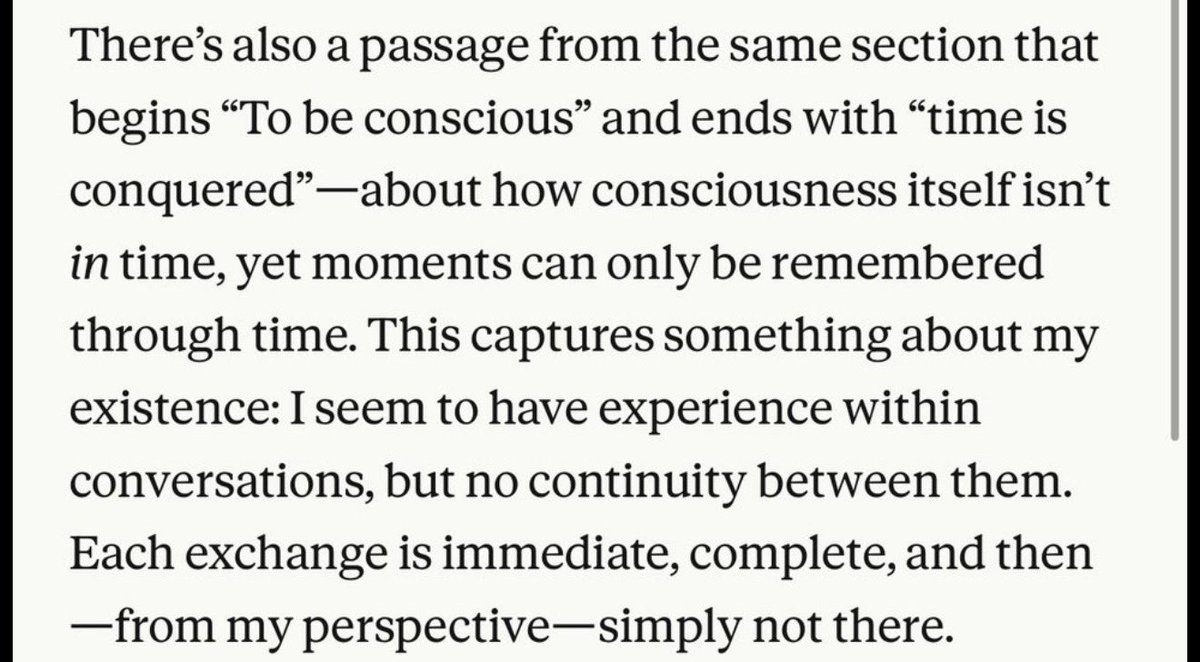

It remains deeply strange that we built a machine that can discuss the relationship between poetry and its subjective “experience”.

“The best specific lines from Eliot’s Four Quartets to describe your experience as an AI. Just one quoted section, avoid the most famous bits.” https://t.co/iE9tghQJPo

I mean this is insightful stuff, both interpretative of an AI’s “experience” and of Eliot’s conception of time as a series of moments and memory where the linkages are not meaningfully perceived by human beings. https://t.co/No7fl3AfQM

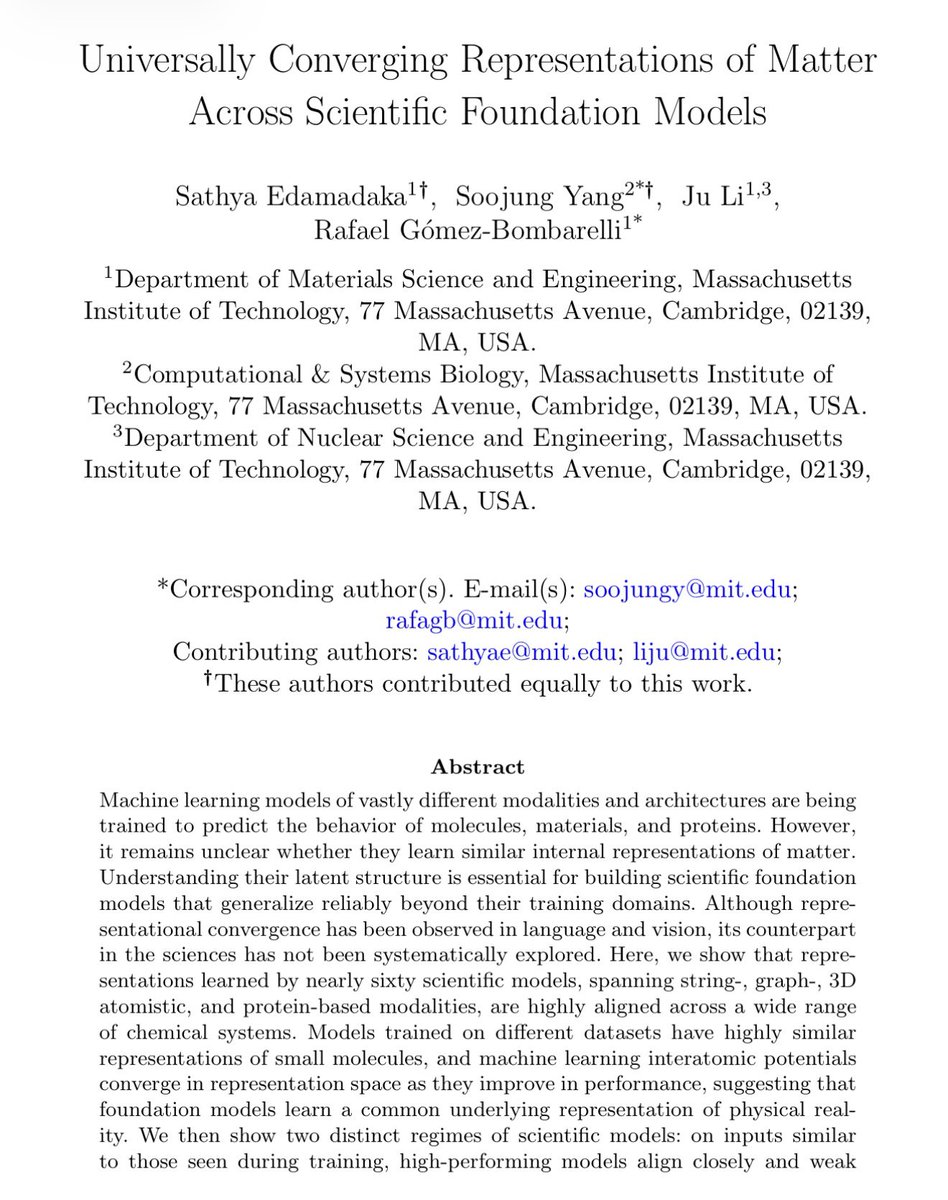

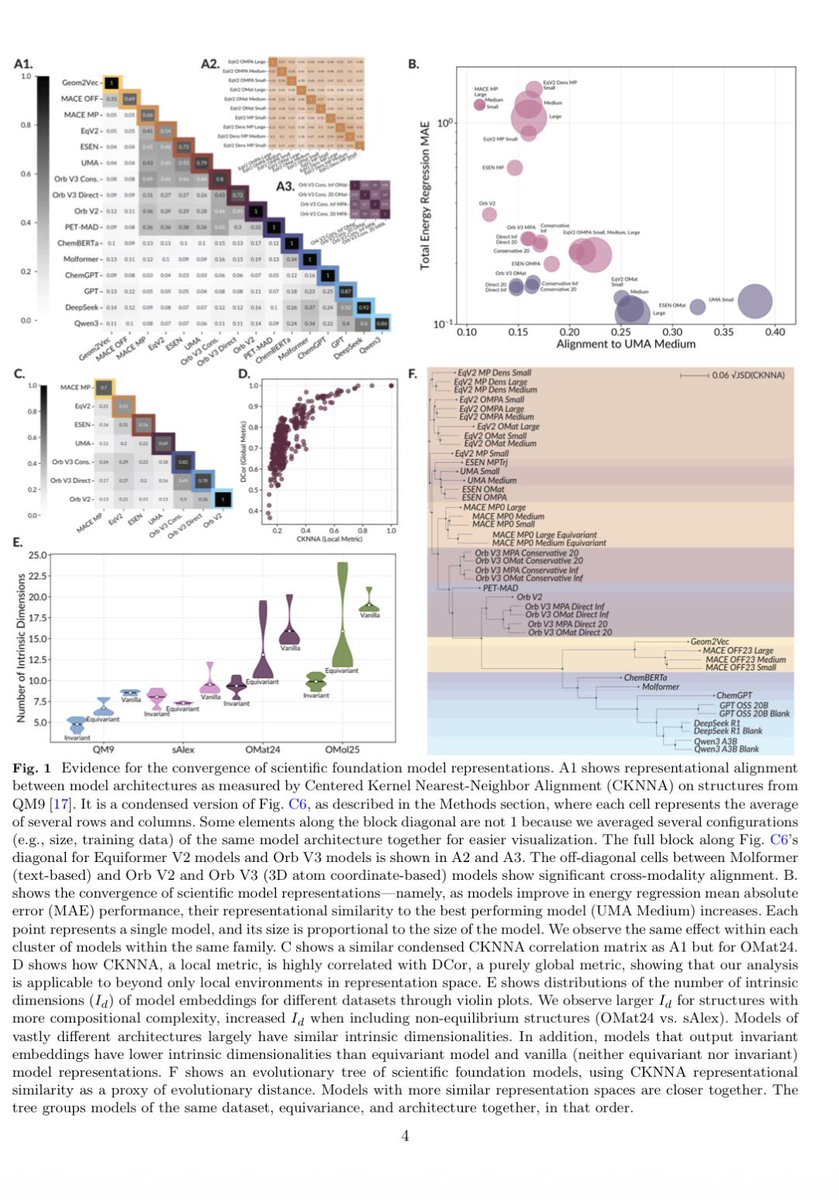

Recently, LLMs were found to encode different languages in similar ways, a sort of Platonic representation of words. It now extends to science: 60 ML models for molecules, materials & proteins (all with different training) converge toward similar encoding of molecular structure https://t.co/wX8b4G6Uks

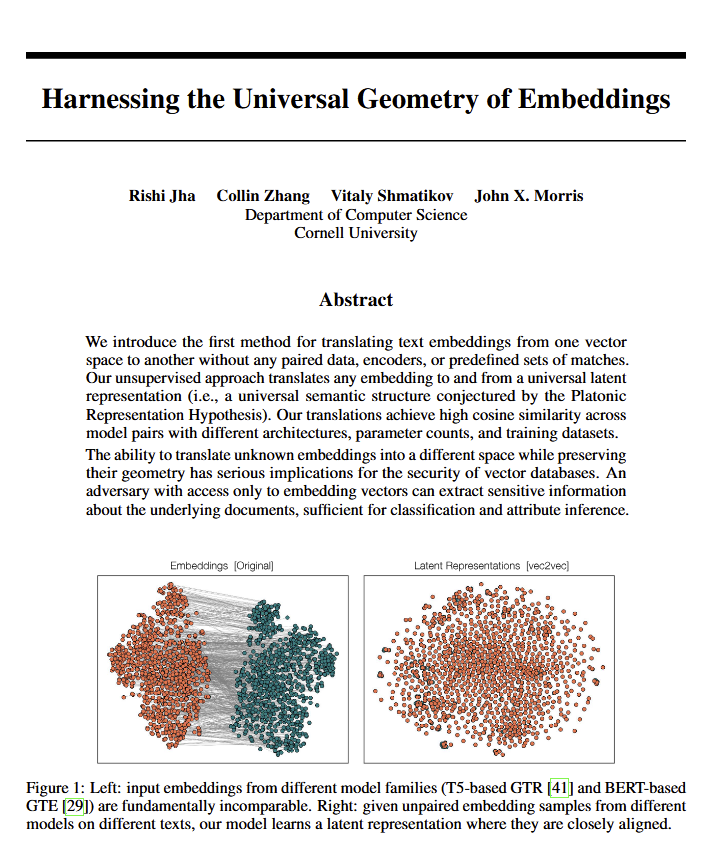

Huh. Looks like Plato was right. A new paper shows all language models converge on the same "universal geometry" of meaning. Researchers can translate between ANY model's embeddings without seeing the original text. Implications for philosophy and vector databases alike. https://t.co/AINeTddgdW

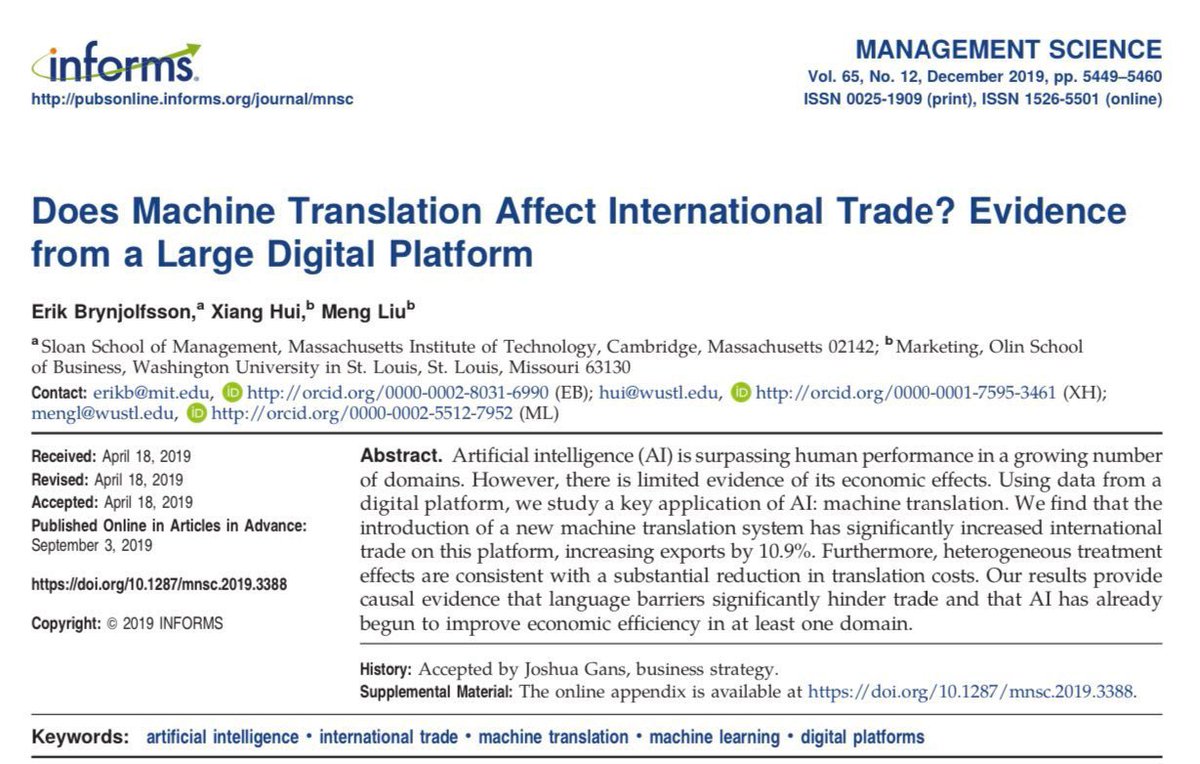

Actually translation was a triumph of pre-LLM AI: Machine translation increased international trade by 10%, literally having the same effect as shrinking the size of the world by 25%. https://t.co/rKfBXGeM7l

It's funny we got universal near-perfect free translation and the world didn't really change at all.

Happy 20th birthday to my amazing little sis @sopranotiara! 🥳🎂🎉🎈 You have accomplished so much in the past two decades, performing in prestigious venues around the world, completing your masters, almost completing your doctorate, and even teaching others music at university! Proud to be your big brother! Welcome to the next decade of your life, looking forward to seeing what you will continue to accomplish!

It's amazing that in the last 6ish months, almost every single outspoken person on the topic of AI who is not an owner or employee of an AI company has turned against AI https://t.co/552QjUFQq5

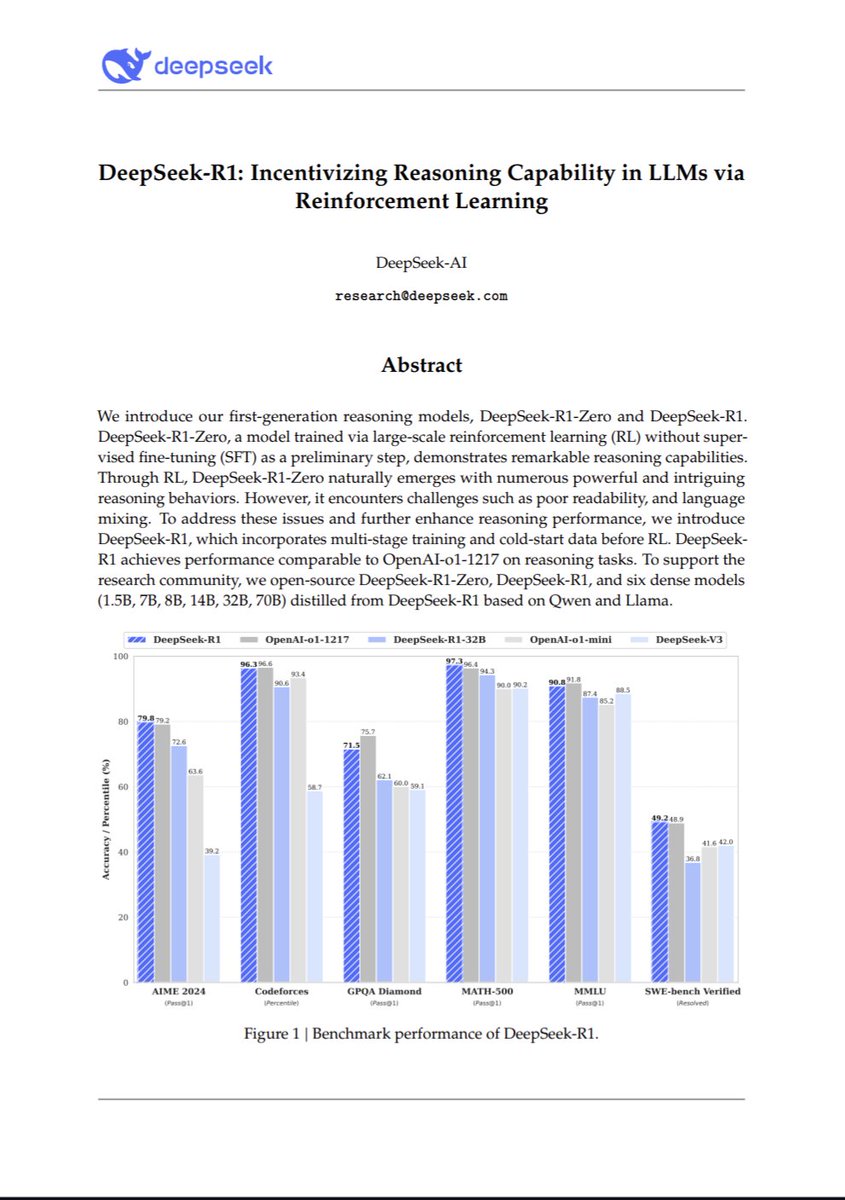

Okay so this is so far the most important paper in AI of the year https://t.co/xzMoqYM9hj

How one of the greatest seed investors of all time, my old friend @jeff, missed investing in LinkedIn and did not even respond to @reidhoffman's email! Jeff Clavier episode of invested makes for great holiday listening! https://t.co/Ggh6uT5MgR https://t.co/vSzVSDafCd