@emollick

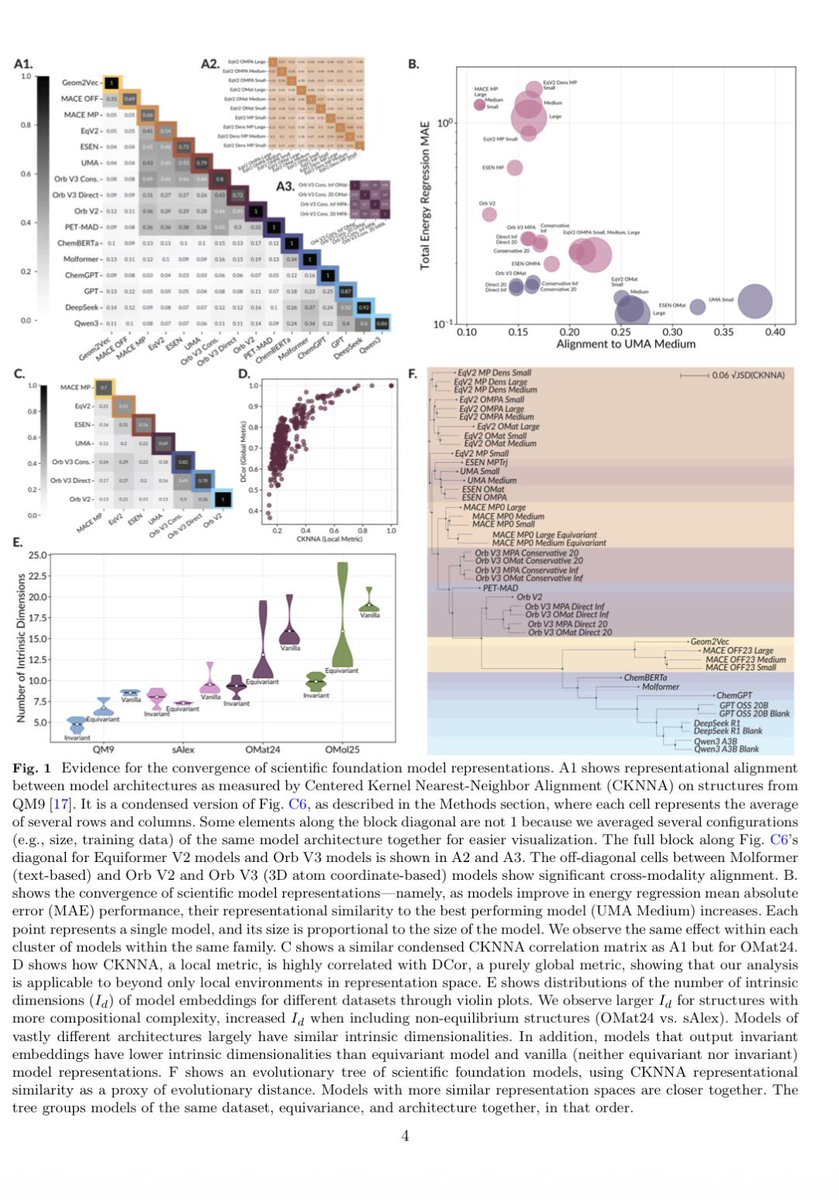

Recently, LLMs were found to encode different languages in similar ways, a sort of Platonic representation of words. This now extends to science: 60 ML models for molecules, materials & proteins (all with different training) converge toward similar encodings of molecular structure https://t.co/wX8b4G6Uks