Your curated collection of saved posts and media

This is a nice essay, and I agree with its characterization of AI as Normal Technology. In fact, on the second line of AINT, we compare AI's potential impact to that of the internet or electricity. But there's a large, qualitative difference between even the most powerful general-purpose technologies which humans can and should influence/control, and creating an omnipotent entity that we have no control over. This was precisely the gap we wanted to highlight.

I spent a weekend at Stanford recently, which is where, in 2023, I did much of my formative thinking on AI. The Anthropic-DoW affair tested that early intellectual foundation more than anything, so I found myself walking around Stanford, reflecting on what I learned in 2023. https:

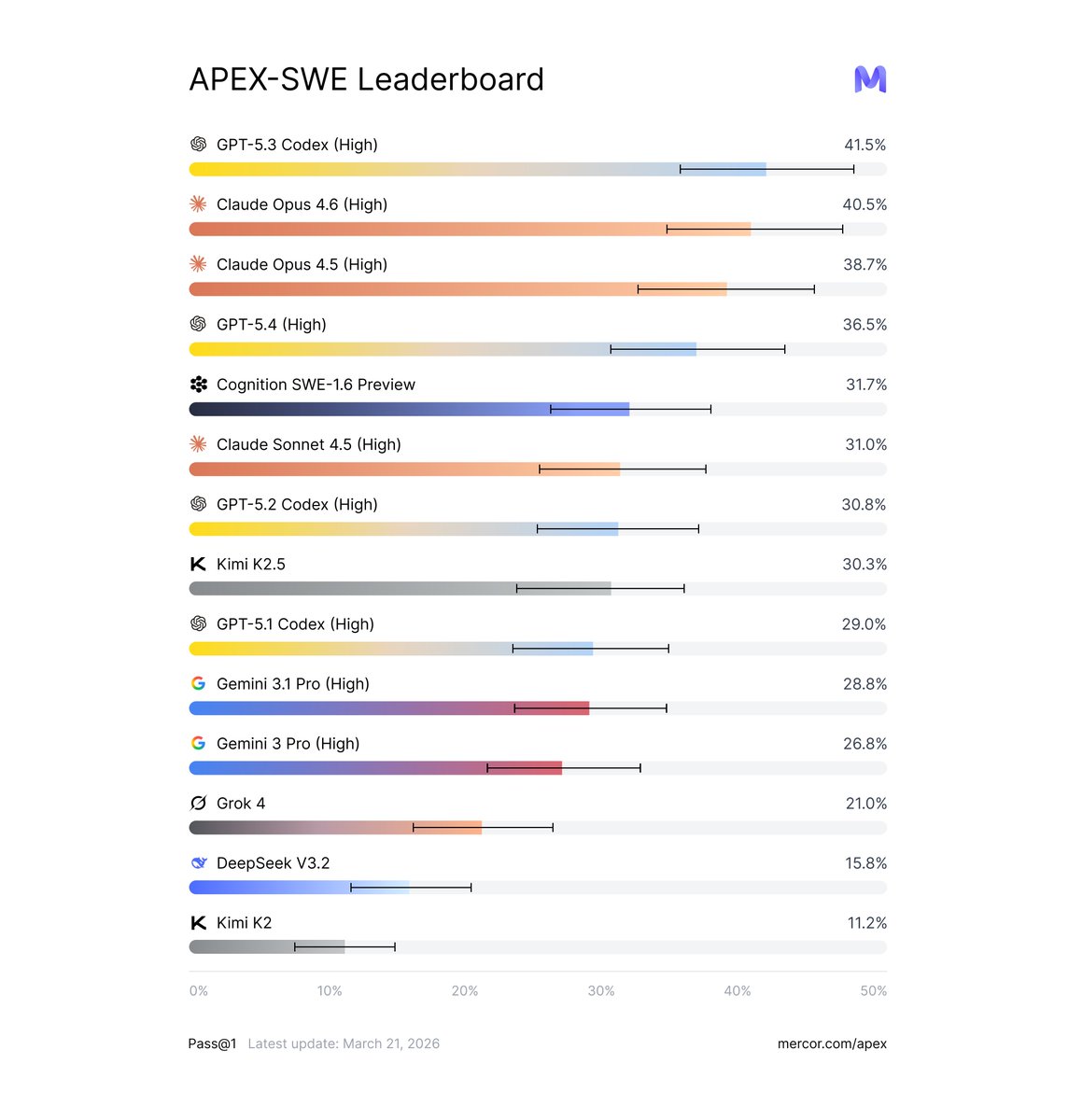

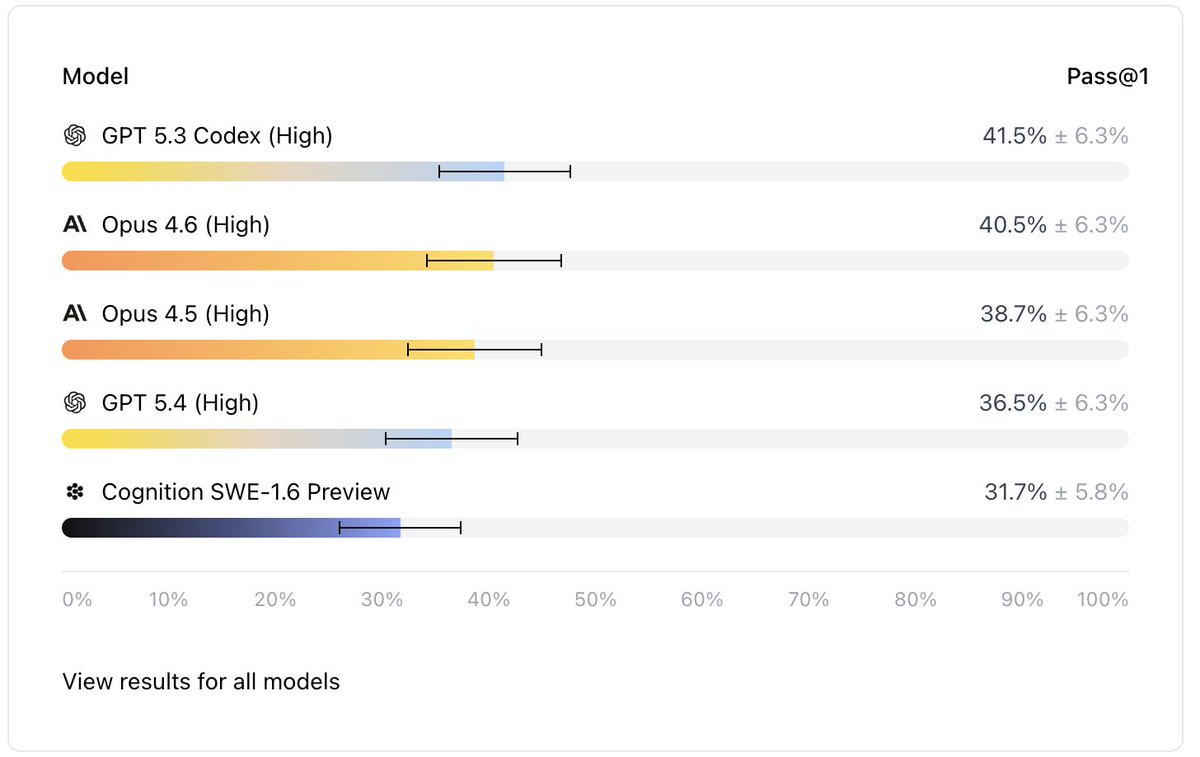

Introducing APEX-SWE, in collaboration with @Cognition. They see firsthand that real software engineering is not just writing code anymore. It's deploying systems, integrating with tools and debugging when things break. On APEX-SWE, every model fails to reliably solve the real production software engineering tasks. @OpenAI GPT-5.3 Codex (High) tops the leaderboard at 41.5% on Pass@1, followed by @AnthropicAI Opus 4.6 (High) at 40.5%. Every frontier model fails on nearly 60% of real production tasks.

Can agents replace software engineers? Not according to this new benchmark.

Mercor and Cognition released APEX-SWE. It tests AI coding agents on real engineering work.

- GPT-5.3 Codex leads at 41.5%.
- Claude Opus 4.6 follows at 40.5%.
- Nothing crosses the 50% mark.

Why? Old benchmarks are basically solved: HumanEval scores jumped from 67% to 90% in two years. OpenAI flagged SWE-bench as contaminated. Models were memorizing the answers.

Those benchmarks never reflected the job in the first place. They only measured code writing. Developers spend 16% of their time on that. The other 84% is debugging, infrastructure, and integration. This benchmark tests the 84%.

200 tasks split into two types:
1. Integration: build systems across live databases, APIs, and cloud services in Docker containers
2. Observability: find and fix real bugs using logs, dashboards, and chat history

Each task drops an agent into a live environment. Real services, real credentials, and project boards with filler issues mixed in.

50 tasks are open-source on Hugging Face. The eval harness is on GitHub. You can run it yourself.

AI writes half the code at big companies. 90% of developers use AI assistants. All of that covers 16% of the job.
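Both posts report scores as Pass@1 without spelling out the computation. The sketch below assumes the standard unbiased pass@k estimator from the HumanEval/Codex line of work, averaged over tasks; `pass_at_k` and `benchmark_score` are hypothetical names, not part of the APEX-SWE harness. With one attempt per task, pass@1 reduces to the fraction of tasks solved.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one task (assumed metric definition).

    n: attempts sampled for the task, c: attempts that passed, k: budget.
    Returns the probability that at least one of k attempts drawn without
    replacement from the n samples is correct.
    """
    if n - c < k:
        # Every size-k subset must contain at least one passing attempt.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_score(results, k: int = 1) -> float:
    """Mean pass@k over tasks; results is a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

For example, a hypothetical run with one attempt on each of 200 tasks that solves 83 of them scores 83/200 = 41.5%, leaving 58.5% unsolved, which matches the "nearly 60%" failure rate in the announcement.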

Lisa Kudrow doing a perfect Parker Posey impression lol https://t.co/LXtYdFNxj5

most AI apps still don't use the full multimodal stack. vision, audio, real-time processing. all largely untapped we got you access to the latest @GoogleDeepMind models. if you've been wanting to build multimodal agents, this one's for you 🏆 $45K+ in prizes (up to $25K in credits alone) 📅 saturday march 28 · san francisco apply below 👇

New on the Engineering Blog: How we designed Claude Code auto mode. Many Claude Code users let Claude work without permission prompts. Auto mode is a safer middle ground: we built and tested classifiers that make approval decisions instead. Read more: https://t.co/dpcMcWMf5k

LeWorldModel: Yann LeCun's Radical Simplification of World Models Just Made Physics-Aware AI Practical

In the race for artificial general intelligence, two paths have emerged. One is the familiar scale-everything route: bigger LLMs trained on ever-larger text corpora. The other, championed for years by Yann LeCun, is building world models: compact systems that learn the underlying physics of reality directly from raw sensory data (pixels) so AI can plan, predict, and act in the physical world the way a robot or self-driving car actually would.

Until now, the second path has been frustratingly difficult. Joint-Embedding Predictive Architectures (JEPAs), LeCun's elegant framework for learning predictive representations without reconstructing every pixel, kept collapsing during training. Researchers had to resort to a laundry list of hacks: multi-term loss functions (up to six hyperparameters), frozen pre-trained encoders, stop-gradients, exponential moving averages, and other duct-tape tricks just to keep the model from mapping every input to the same useless output.

LeCun's team (Mila, NYU, Samsung SAIL, and Brown University) dropped a bombshell: LeWorldModel (LeWM), the first JEPA that trains stably end-to-end from raw pixels using only two loss terms. No more house-of-cards engineering. Just a clean, simple recipe that works on a single GPU in a few hours with only 15 million parameters.

The Core Breakthrough: SIGReg Saves the Day

LeWorldModel's secret weapon is a regularizer called SIGReg (Sketched Isotropic Gaussian Regularization). It pushes the latent embeddings toward an isotropic Gaussian distribution. This single term prevents representation collapse without any of the previous heuristics. The training objective now has just two parts:
1. Next-embedding prediction loss: the model predicts what the next latent state should be.
2. SIGReg: keeps the latent space well-behaved and diverse.

That's it. Hyperparameters drop from six to one. Training becomes stable, reproducible, and dramatically cheaper. The model learns directly from raw video frames (no pre-trained vision encoders needed) and produces a compact latent world model that can be used for fast planning.

Impressive Results on Real Benchmarks

Despite its tiny size, LeWorldModel punches way above its weight:
- Trains on a single GPU in a few hours.
- Plans actions up to 48 times faster than foundation-model-based world models.
- Uses roughly 200 times fewer tokens than alternatives.
- Matches or beats far larger models on diverse 2D and 3D control tasks (e.g., manipulation, navigation).
- Its latent space encodes meaningful physical quantities (position, velocity, etc.), as shown by direct probing.
- It reliably detects physically implausible "surprise" events, showing genuine causal understanding.

Crucially, adding a decoder and reconstruction loss hurts performance on downstream control tasks. The pure JEPA objective already captures everything needed for planning; extra visual details just get in the way.

Project website: https://t.co/KhGR9LiIQZ
Official code: https://t.co/s1lI9kevJS

Why This Matters for the Future of AI

LeCun has been saying since 2022 that world models (not next-token predictors) are the key to real intelligence. Critics always pointed to the training instability. LeWorldModel removes that objection with elegant simplicity. This is a philosophical reset: AI can learn physics the way babies do, by watching the world unfold, without needing supercomputers or endless text. The implications for robotics, autonomous vehicles, and embodied agents are enormous. Suddenly, building a physically grounded planner is something a researcher (or even a hobbyist) can do on consumer hardware.

1 of 2
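To make the two-term objective concrete, here is a simplified NumPy sketch. It follows the idea behind SIGReg only loosely: embeddings are projected onto random 1-D directions, and each projection's empirical characteristic function is compared with that of a standard normal. The number of directions, the frequency grid, the weighting, the MSE prediction loss, and `lam` are all assumptions, the function names are hypothetical, and the code computes loss values only, with no gradients or training loop.

```python
import numpy as np


def sigreg_sketch(z: np.ndarray, num_directions: int = 32, seed: int = 0) -> float:
    """Simplified sketched isotropic-Gaussian penalty (illustrative, not official).

    z has shape (batch, dim). Larger values mean the batch is further from
    an isotropic standard Gaussian along the sampled directions.
    """
    rng = np.random.default_rng(seed)
    n, d = z.shape
    # Random unit directions "sketch" the high-dimensional distribution.
    dirs = rng.standard_normal((d, num_directions))
    dirs /= np.linalg.norm(dirs, axis=0, keepdims=True)
    proj = z @ dirs  # (n, num_directions)

    # Frequency grid and Gaussian weighting (assumed choices).
    t = np.linspace(-3.0, 3.0, 61)
    gauss_cf = np.exp(-t**2 / 2)  # characteristic function of N(0, 1)
    weight = np.exp(-t**2 / 2)

    # Empirical characteristic function E[exp(i t x)] for each direction.
    phase = proj[:, :, None] * t[None, None, :]  # (n, k, T)
    ecf_re = np.cos(phase).mean(axis=0)
    ecf_im = np.sin(phase).mean(axis=0)
    sq_err = (ecf_re - gauss_cf) ** 2 + ecf_im**2  # (k, T)

    # Trapezoidal integration over t, then average over directions.
    f = sq_err * weight
    dt = t[1] - t[0]
    integral = ((f[:, :-1] + f[:, 1:]) / 2.0).sum(axis=-1) * dt
    return float(n * integral.mean())


def lewm_style_loss(pred_next, target_next, z, lam=0.1):
    """Two-term objective: next-embedding prediction plus lam * SIGReg-style penalty."""
    prediction = float(np.mean((pred_next - target_next) ** 2))  # assumed MSE
    return prediction + lam * sigreg_sketch(z)
```

A collapsed batch (every embedding identical) scores far higher than a batch of standard-normal embeddings, which is how a single penalty term can discourage collapse without stop-gradients or EMA targets. In this sketch `lam` is the only weight to tune; a real implementation would live in an autodiff framework so the penalty can be backpropagated.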

BREAKING: @ivanleomk is joining Google DeepMind https://t.co/Fu365QjYDk

Chicago Mayor Brandon Johnson: “We cannot put people in jail anymore. It’s racist.” I can't believe this is real https://t.co/0C6hTaJA8g

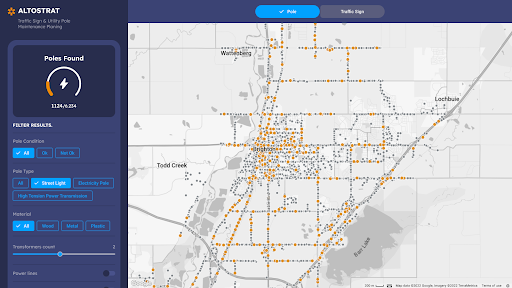

We have been heads down but wanted to share a bit about what we are doing 🧵 https://t.co/ICZlLwZ3Fq

We are excited to welcome @OpenAI to the AIE Expo for the first time as Platinum sponsors for AIE EU! OAI has shipped SO much for AI Engineers this year alone, and this is the best place to catch up:
- Meet the team at the Ask OpenAI lounge (bring your hardest tasks and best questions!)
- Hear keynotes from @steipete and @lopopolo
- Get hands-on with in-depth Codex workshops from @kagigz and @reach_vb!

See you April 8-10 in London! AI Engineers 💙 @OpenAIDevs!

WebGPU is INSANE! 🤯 Here's a 24B parameter model running locally in a web browser, at a blazing ~50 tokens/second on my M4 Max. ⚡️ It's the largest model we've ever run with Transformers.js... and we're not stopping here. Big announcement soon. https://t.co/4emPjY89ba

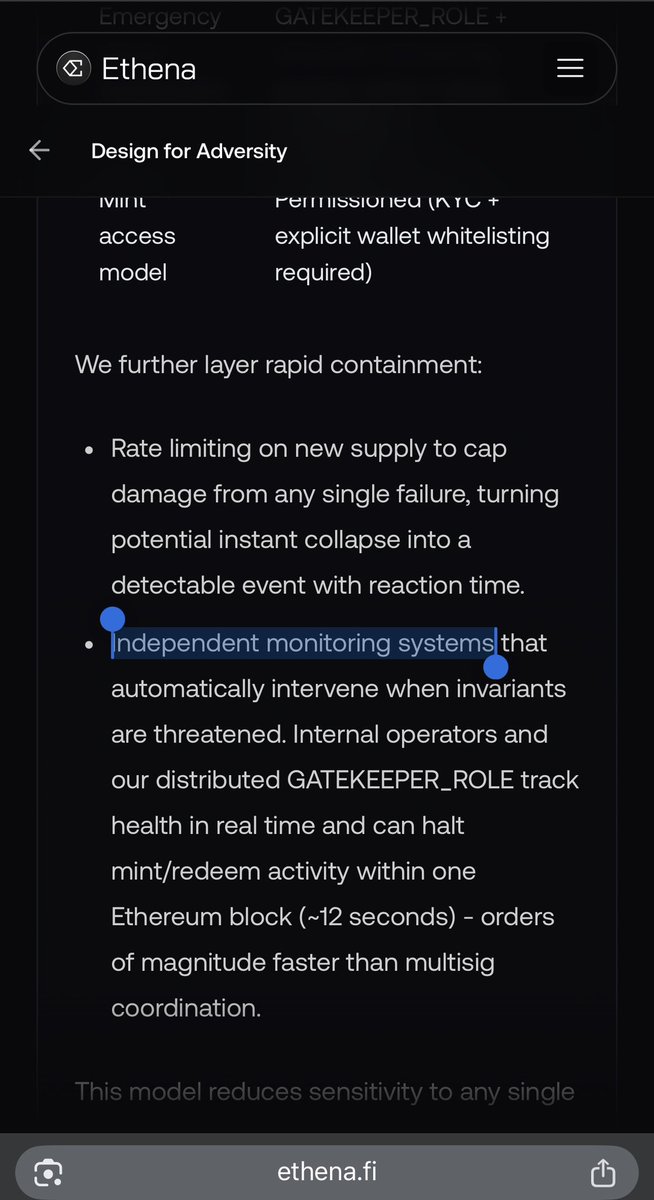

We've published a blog post on the recent Resolv USR incident. It covers what happened, why offchain trust assumptions matter, and the design principles Ethena adopted from inception to prevent similar outcomes. Read it below. https://t.co/F1Hto8n1E9

What is Hypernative? https://t.co/Dy93zLHpYX

A tragedy is unfolding at AllenAI. Fs in the chat https://t.co/yPwS7uiE1t

this is a huge deal and a sign of the changing legal tides for big tech. the plaintiffs' attorneys here were early adopters of a novel legal strategy that uses product liability law to sidestep tech firms' go-to defense (section 230) & hold them accountable for negligent design https://t.co/FpmRddkSIt

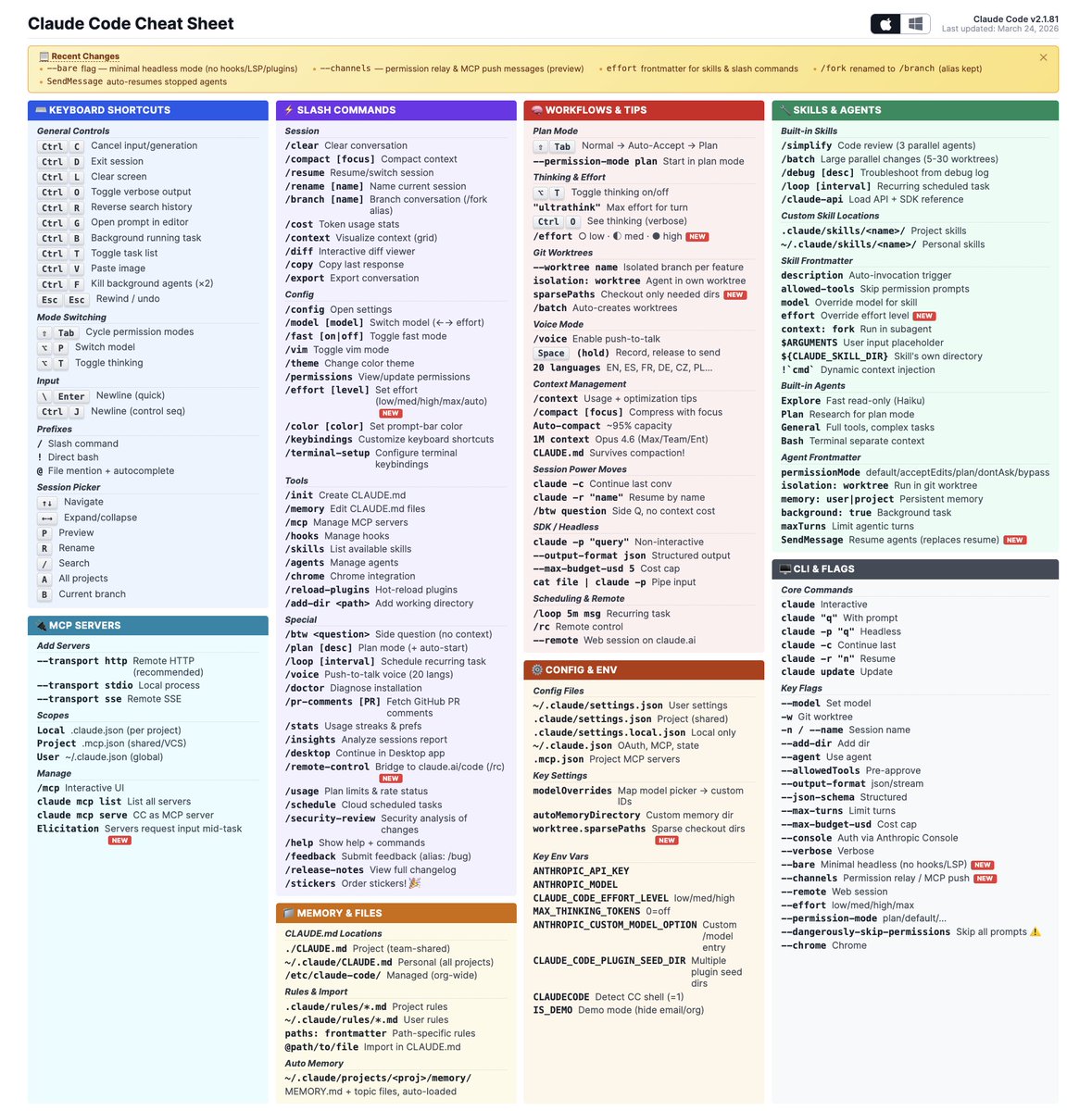

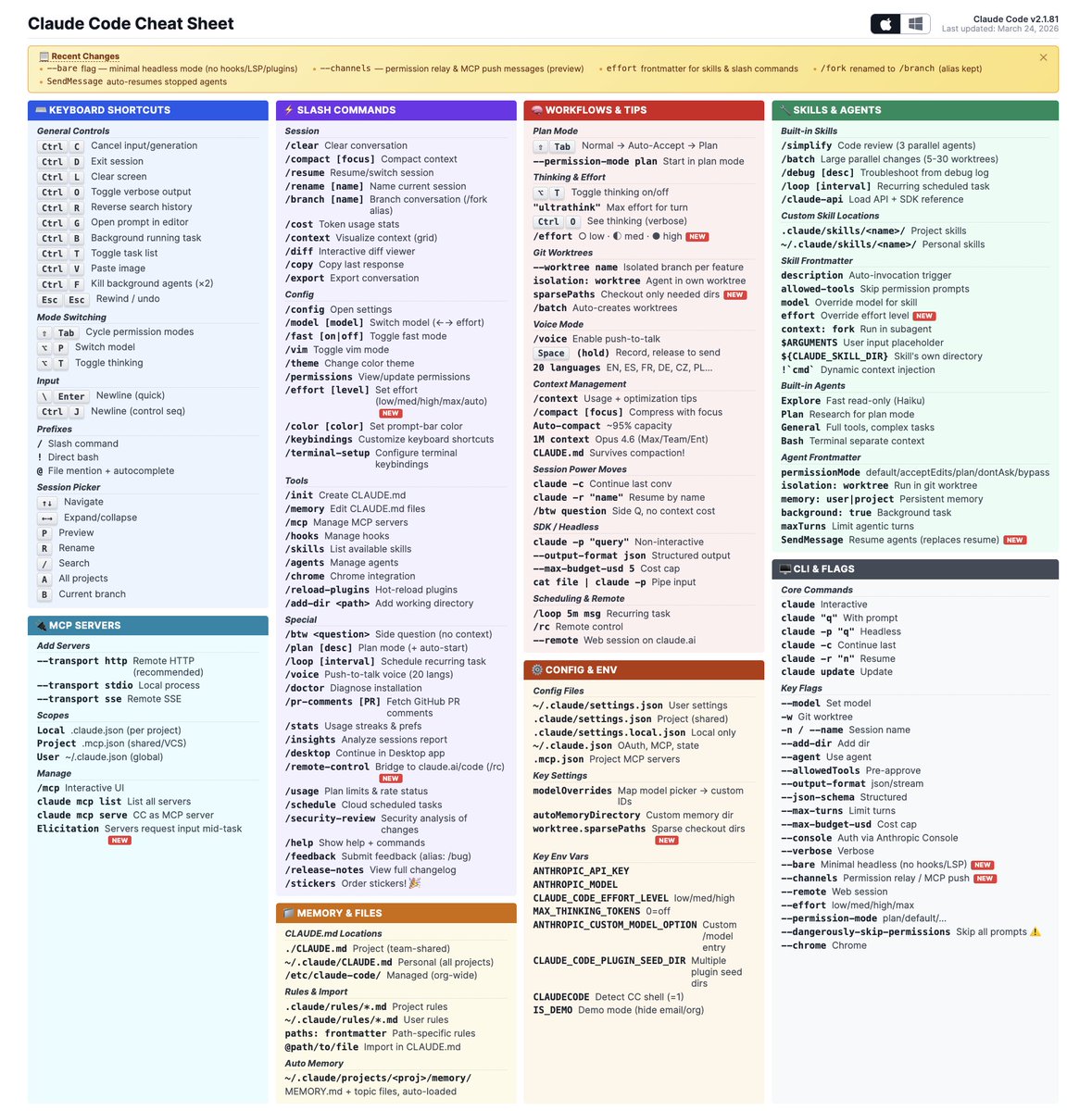

Nice cheat sheet for Claude Code. https://t.co/ikGzbSqjRK

https://t.co/BIKKach0bj

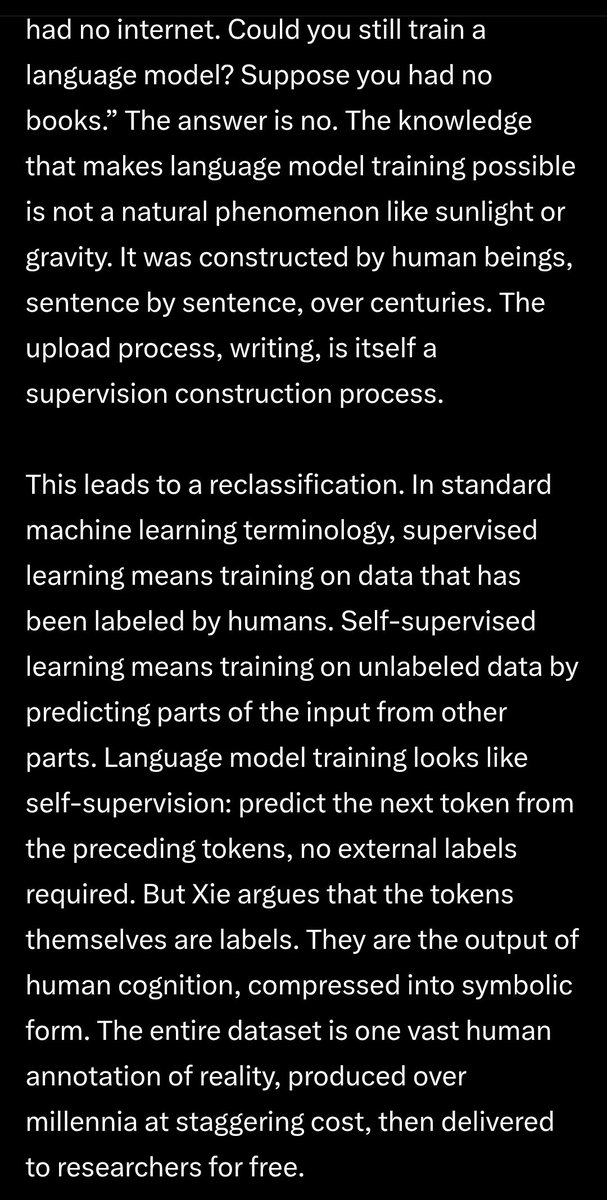

yeah, @sainingxie is one of the few who really get it. he sees through the loud hype. he knows we're not there yet, he agrees with sutton's recent and highly controversial take on LLMs being anti-bitter-pilled. the way he explains it is delightfully simple as well. essentially, just because u smuggled in human knowledge via decades of crowdsourced human annotations pertaining to all interesting aspects of reality, doesn't mean that u can naively advertise the product as scalable in the sense that sutton originally envisioned. LLMs rely very heavily on human knowledge to bootstrap their impressive competence, and once u take that away, the intelligence that remains is severely lacking. tl;dr: just because it wasn't u who annotated reality by hand for a few decades, doesn't mean that ur model requires no human annotations.

https://t.co/IECg0FK5YI

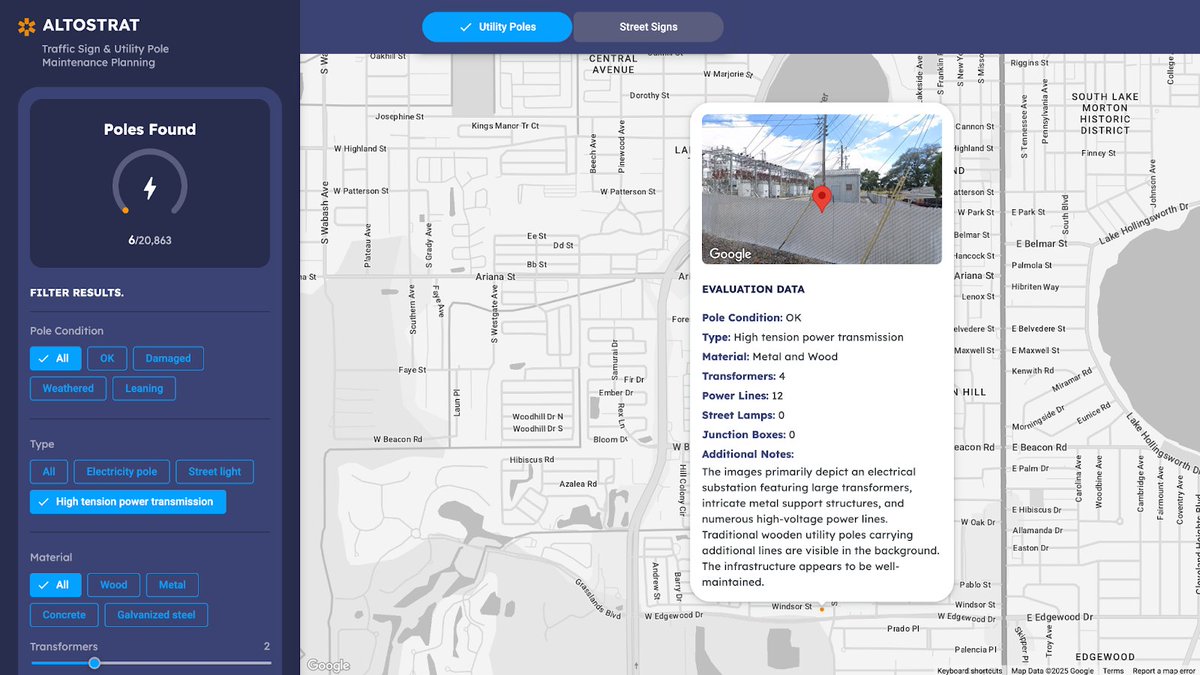

📢 Today, we're announcing general availability for Street View Insights, our first Imagery Insights dataset. 👁️ Access Street View’s vast image repository 💬 Prompt Gemini from Vertex AI Studio 🔗 Connect directly to BigQuery Get started ➡️ https://t.co/P8776NY96m https://t.co/bNTGY2u50J

Meet DiT4DiT, the FIRST video generation architecture for humanoid robot control. 🤖✨ By treating video generation as a world model, we give robots real "physical intuition."

🔥 The Results:
🚀 >10x better sample efficiency & up to 7x faster convergence!
🏆 SOTA on LIBERO (98.6%) & RoboCasa-GR1 (50.8%).
🦾 Zero-shot generalization on the Unitree G1 humanoid using just monocular vision (1x speed, fully autonomous).

🧠 How it works: We couple a Video DiT with an Action DiT via a dual flow-matching objective. Instead of relying on fully reconstructed future frames, we extract "intermediate denoising features" to guide action prediction. Simple but highly effective!

Check out the paper, real-world videos, and project page here: https://t.co/Ml0AA8PKqA #EmbodiedAI #Robotics #MachineLearning #WorldModels
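The DiT4DiT post names a "dual flow-matching objective" without giving its form. As a hedged illustration only, this sketch assumes the common linear (rectified-flow) path, where a network regresses the velocity that carries noise to data, and models "dual" as a weighted sum of a video loss and an action loss. The coupling through intermediate denoising features is omitted, and `action_weight` and both function names are hypothetical.

```python
import numpy as np


def flow_matching_loss(velocity_fn, x_data, rng):
    """Flow-matching loss on a linear noise-to-data path (rectified-flow convention).

    x_t = (1 - t) * noise + t * x_data, whose time derivative is x_data - noise.
    velocity_fn(x_t, t) is trained to regress that target velocity.
    """
    noise = rng.standard_normal(x_data.shape)
    # One time step per sample, shaped to broadcast over the remaining axes.
    t = rng.uniform(size=(x_data.shape[0],) + (1,) * (x_data.ndim - 1))
    x_t = (1.0 - t) * noise + t * x_data
    target = x_data - noise
    pred = velocity_fn(x_t, t)
    return float(np.mean((pred - target) ** 2))


def dual_flow_matching_loss(video_velocity_fn, action_velocity_fn,
                            video, actions, rng, action_weight=1.0):
    """Hypothetical 'dual' objective: video loss plus weighted action loss.

    In DiT4DiT the action model is guided by intermediate video-denoising
    features; that coupling is not modeled here.
    """
    video_loss = flow_matching_loss(video_velocity_fn, video, rng)
    action_loss = flow_matching_loss(action_velocity_fn, actions, rng)
    return video_loss + action_weight * action_loss
```

A velocity predictor that recovers the exact target drives the loss to zero; any other predictor, including one that always outputs zeros, leaves a positive loss.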

What happens when you combine Gemini Live with Lyria 3? You get a super cool AI DJ that fulfills all your song wishes 📻✨ Lyria 3 is now available on the Gemini API! 🥳 https://t.co/gczY3o2DBi

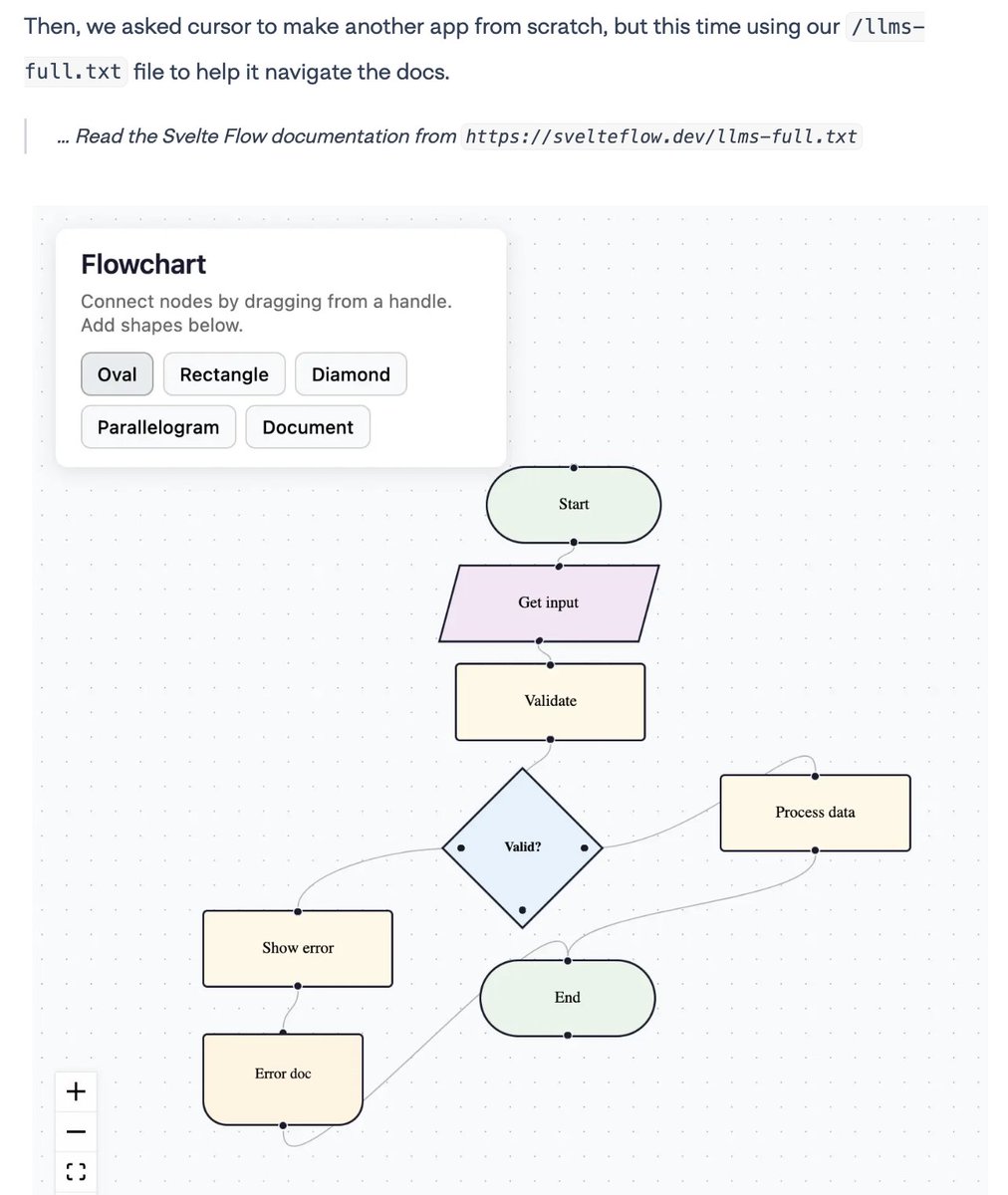

Great to see @xyflowdev joining the llms.txt party - and sharing some really helpful examples of how llms.txt helps agents get better results! 😎 https://t.co/gtmo0iiEJd https://t.co/6R1vBQO8OQ

@yoavgo @deliprao @NeurIPSConf Well, for one thing, OFAC disagrees: https://t.co/QGsx1IPVni

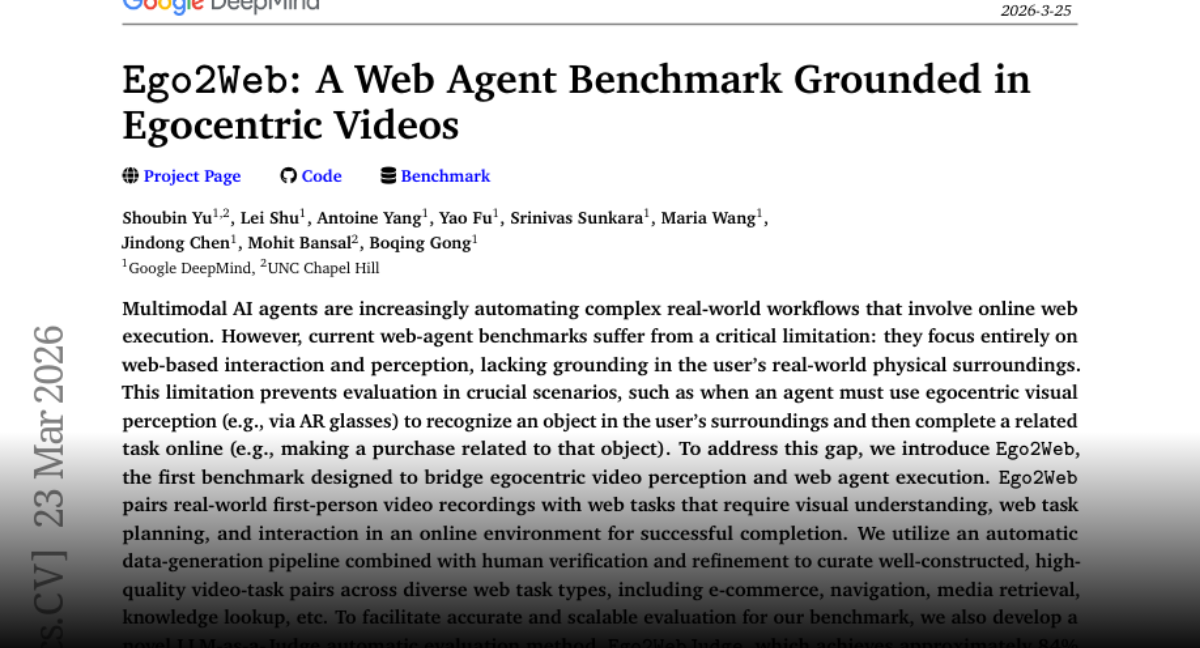

Ego2Web: A Web Agent Benchmark Grounded in Egocentric Videos. Paper: https://t.co/4kYE2HEyPA https://t.co/XJh97rmnOJ

Life update I’ve moved to San Francisco and joined @GoogleDeepMind. Excited to work alongside @OfficialLoganK @thorwebdev @DynamicWebPaige @_philschmid @patloeber @ammaar @vadiamit @osanseviero @harrisonfjobe @goodside @alihcevik @matthewridenour https://t.co/HBiaH3yKii

https://t.co/96CWVKcudA

Question for the group: has there been any great art about Covid? Any incredible literary novels or films? I can't think of anything off the top of my head but my cultural knowledge is not limitless.

Napoleon on procrastination. Written in 1793. Still punching you in the face in 2026. https://t.co/YA6DVWXw4C