Your curated collection of saved posts and media

FSD suddenly braked. It turns out it was because an adorable little dog darted across the road. https://t.co/kvsuXM11t7

The Lido tough guy, giving the side-eye~~ #เจ้าแก้มก้อน #Fluke_Natouch https://t.co/TWbYfQLxFL

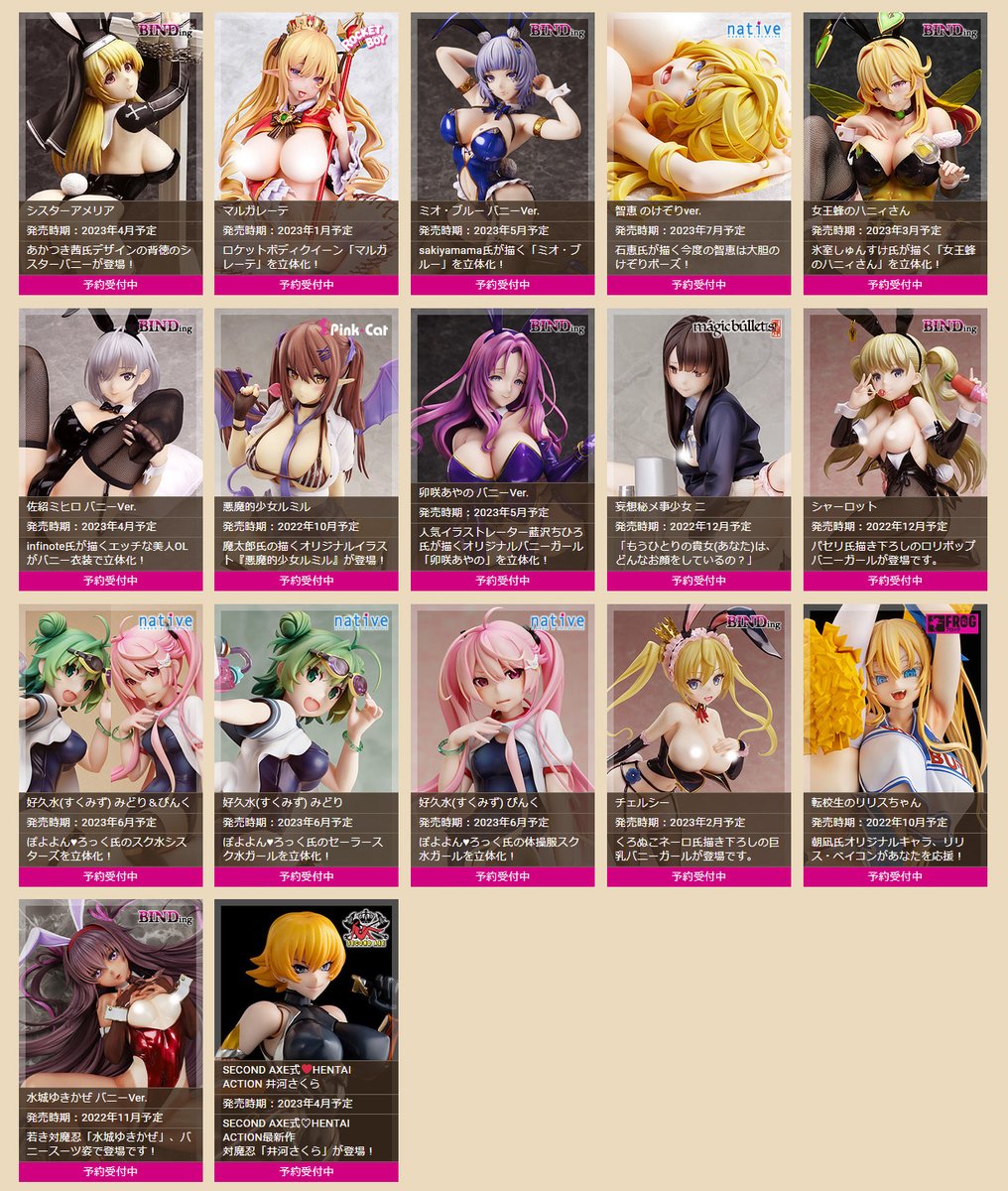

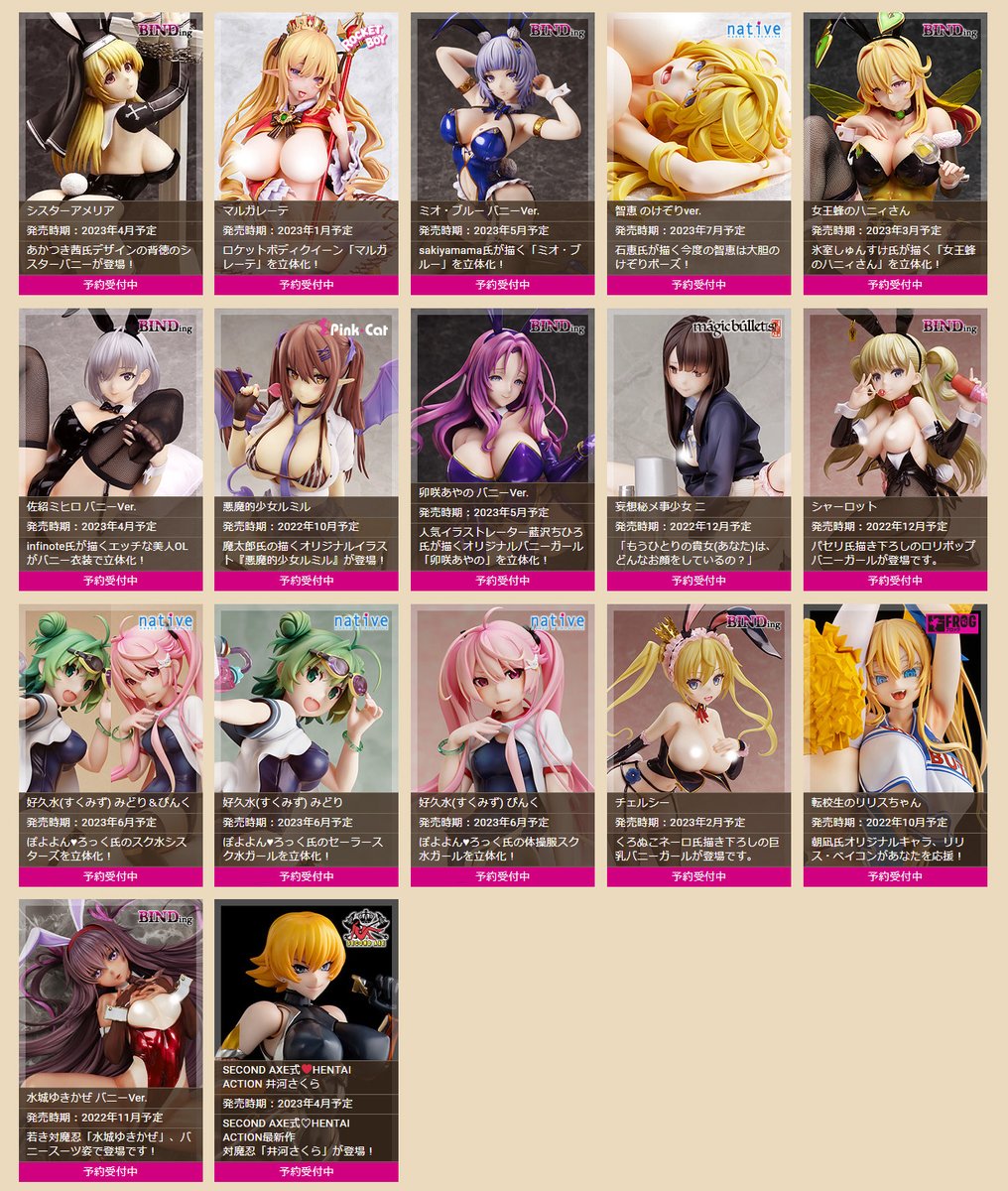

[First Reveal] Illustrations by 『ぽよよん♥ろっく』 (Poyoyon♥Rock): 「好久水みどり」 and 「好久水ぴんく」 #ウェブWF #WebWF https://t.co/aTptjjfsZK

[First Reveal] Illustration by 『朝凪』 (Asanagi): 「転校生のリリスちゃん」 ("Lilith-chan the Transfer Student"), prototype sculpted by マッカラン24 #フロッグ #エロホビ https://t.co/JRJ3rmX8PV

My favorite person🐣💕👼🏻 @FlukeNatouch #เจ้าแก้มก้อน #Fluke_Natouch https://t.co/d7SsWy4QP2

Here are the pieces currently open for pre-order! If you reserve one, you are guaranteed to get it!!🙇♀️🙇♀️🙇♀️ Japan: https://t.co/BxccnepRTi EN: https://t.co/WPmxTtNKDd https://t.co/VGoRM7WZpF

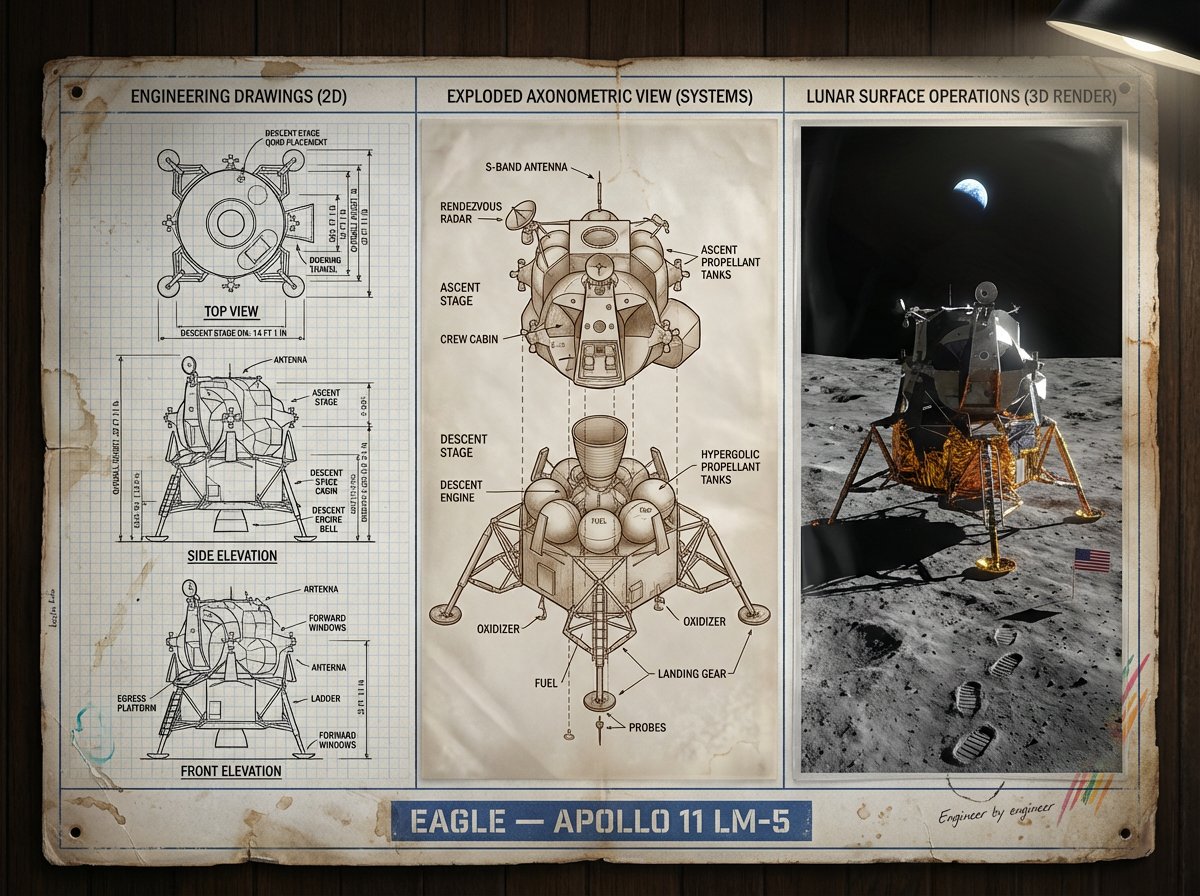

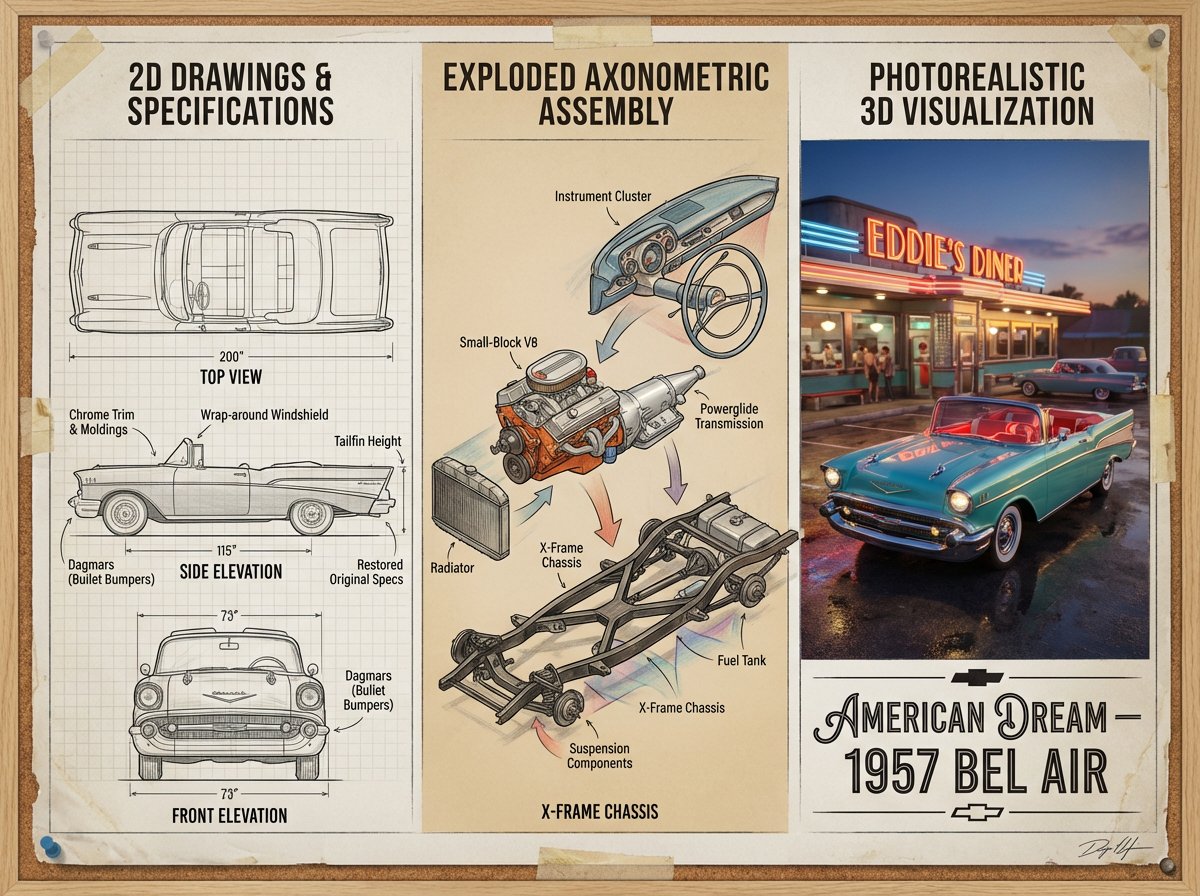

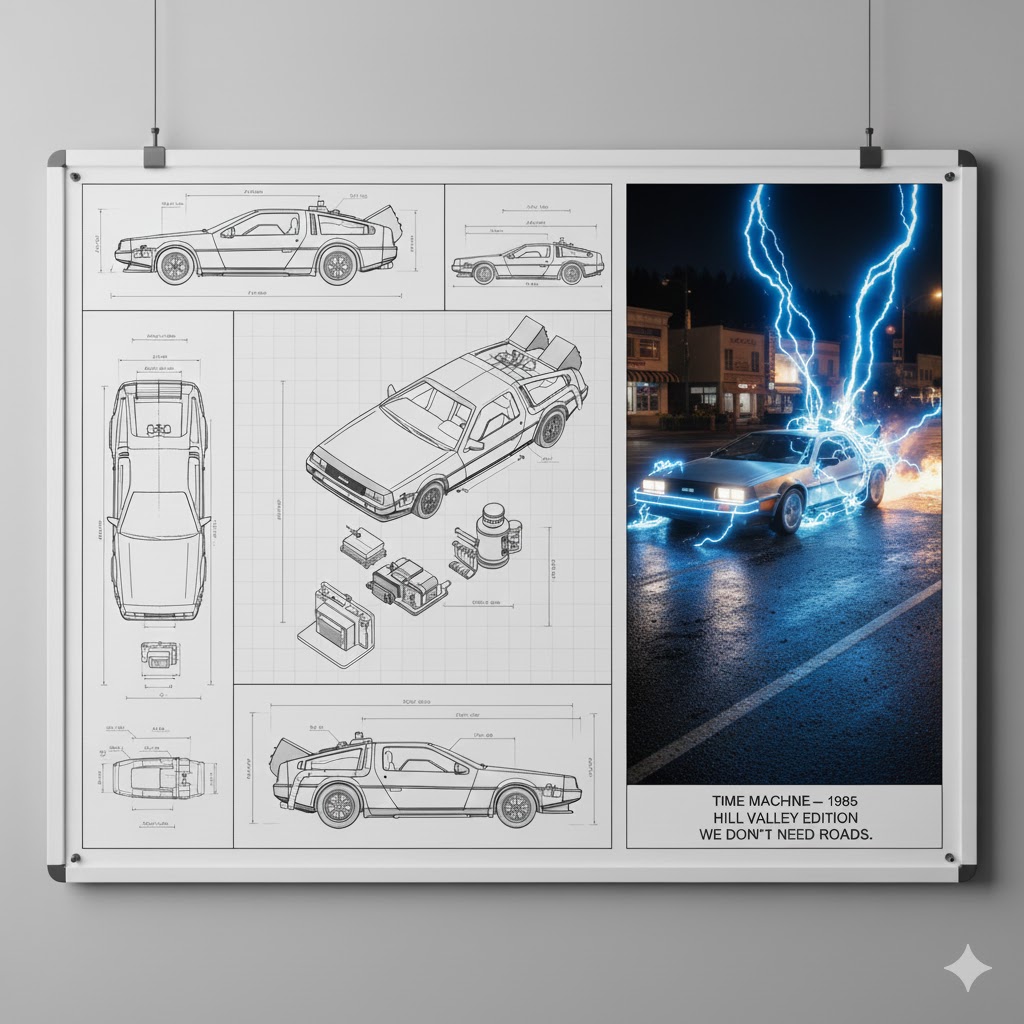

Prompt Studio: Nano Banana Pro Designer Presentation Board, in Firefly An expert [DISCIPLINE] designer’s presentation board for [SUBJECT] — [ICONIC FEATURES / ERA], featuring black-and-white 2D technical drawings with annotations and dimensions on the left, an exploded axonometric diagram revealing [KEY INTERNAL COMPONENTS / MATERIALS] in the center, and a photorealistic 3D render of [SUBJECT] in [ICONIC ENVIRONMENT / SCENE] on the right, with [LIGHTING / ATMOSPHERE / MOTION DETAILS]; visual style transitions from [TECHNICAL / ARCHIVAL TONES] to [EMOTIONAL / ATMOSPHERIC COLOR PALETTE], clean grid layout, museum-grade industrial design presentation, ultra-detailed cinematic realism, title block reading “[TITLE] — [YEAR / VARIANT / TAGLINE]”. See ALTs for ideas 👇

Back to the Future ;) https://t.co/T8uRRuj7uF

https://t.co/KXVfAXvInO

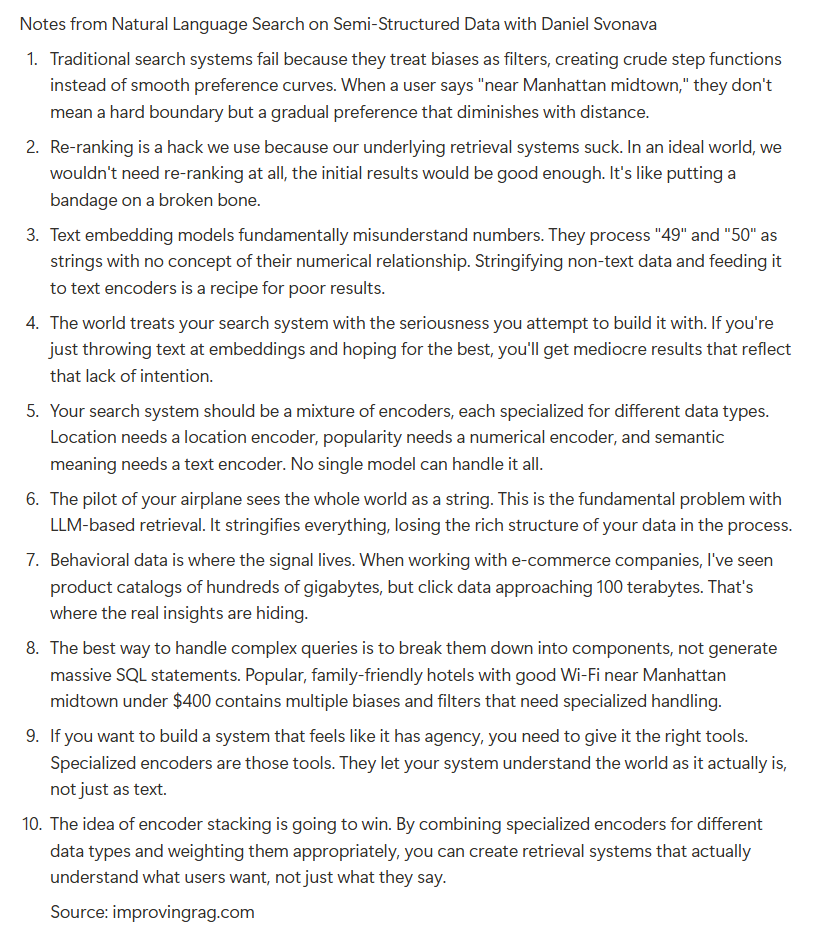

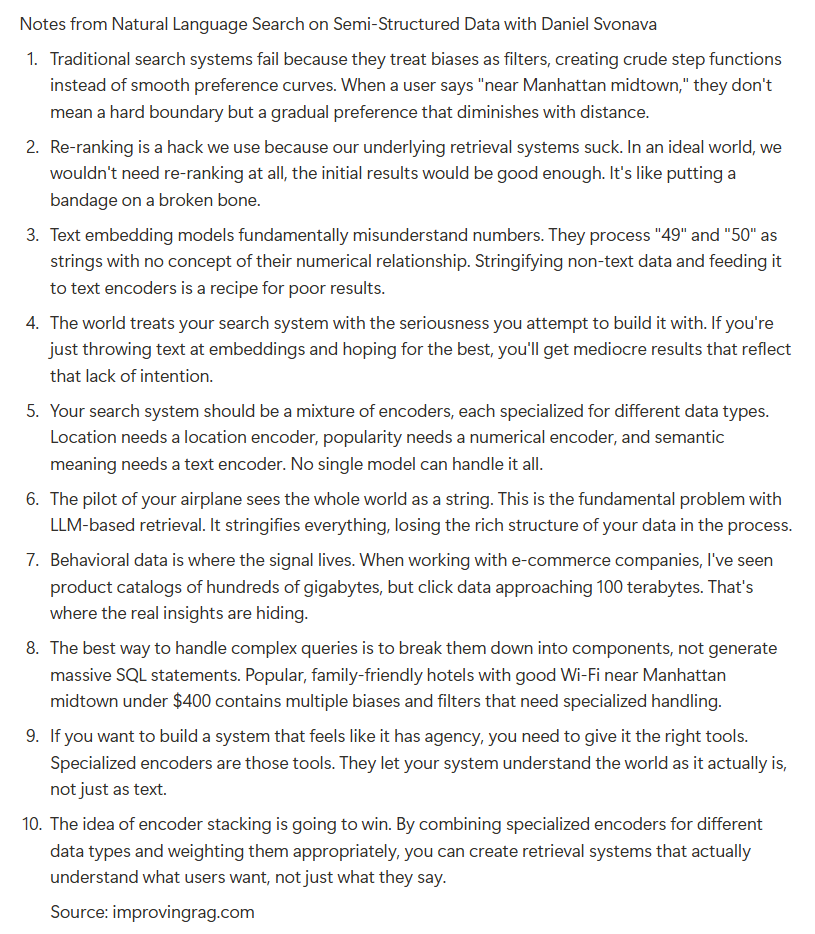

notes from our talk with the ceo of @superlinked @svonava https://t.co/YkVjsOjcZE

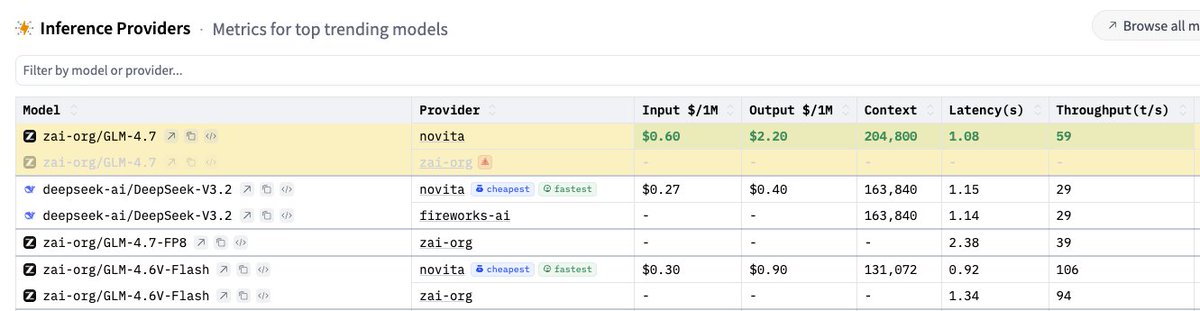

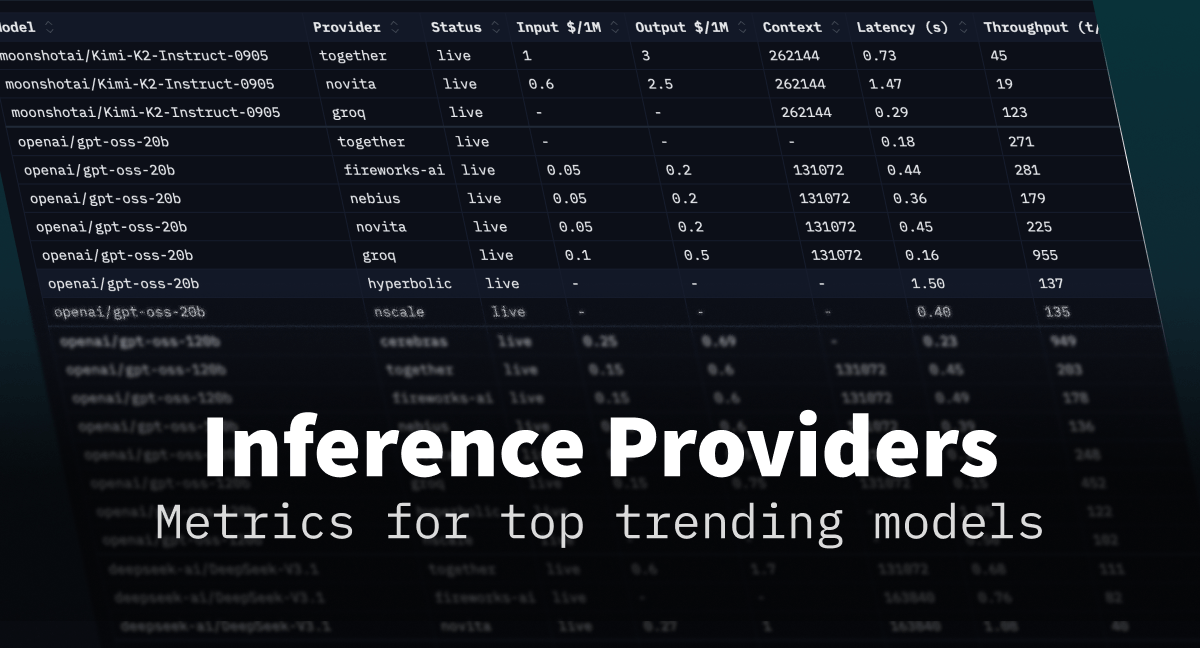

GLM 4.7 on Cerebras and Groq would be great https://t.co/UvkWz5v7GJ https://t.co/tKiVZ0c3iP

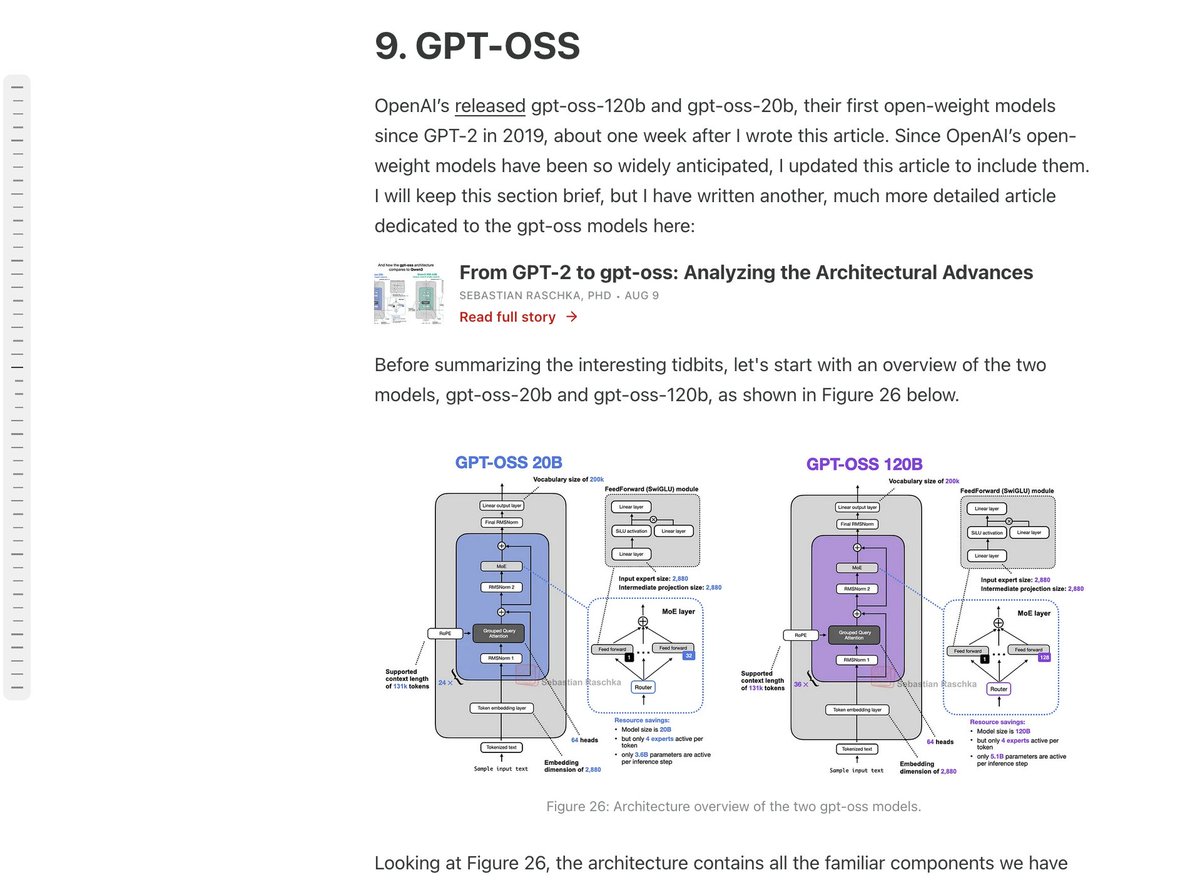

@filterchin @sama Section 9 :) https://t.co/Yt3IHYrLB1

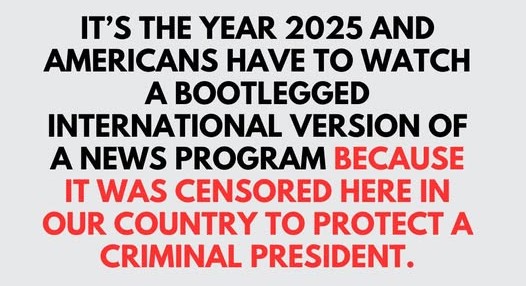

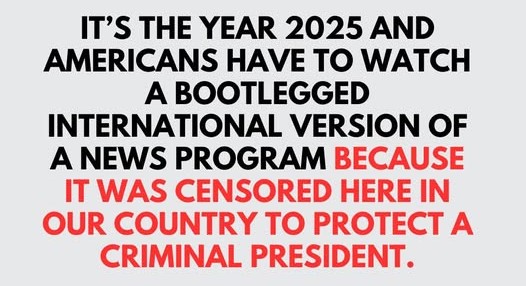

type of shit you see your little cousin tweeting https://t.co/3m9EtHaNdA

Iryna Zarutska's mural in Los Angeles https://t.co/qKWxeYjYN9

GDP up 4.3%, murders down 20%, suicides and opiate deaths down too, life expectancy recovered and rising again, cancer survival up and disability down, bee populations coming back... It's been an incredible year. Merry Christmas everyone! https://t.co/nXZXr1ukWe

If the name Thierry Breton sounds familiar to you, it's probably because of this https://t.co/sbtIBwDi23

Tesla literally sees the world and understands it like a human. It knows which turns to take, navigates complex environments, and intelligently finds empty parking spots... all on its own. This feels straight out of science fiction, and there is no other car in the world doing this today.

BREAKING: Grok iOS app just hit 800,000 ratings in the US, with an incredible 4.9/5 average. https://t.co/DkUbXx6UO0

Remember: SpaceX was publicly clear that its goal was Mars even before the first Falcon 9 flew. There should be even more companies driven by civilization-scale visions. https://t.co/fLpI44Ib9n

You are seeing more and more tourist attractions in China featuring humanoids as part of their shows. IMO, humanoids are going to bring a lot of value to the tourism and entertainment industry in the short term. https://t.co/aPbIBqPQuy

Vibe design is finally here. I’ve spent the past few days playing around with Stitch (from @GoogleLabs) and I think we can finally generate designs that don’t suck. Watch me prompt a UI design, edit with Nano Banana Pro, translate it to code, and then deploy on AI Studio 👇 https://t.co/962B8b7fY1

✨🇨🇳Just grab and drop the content you want, and it’ll be shared to your other device instantly. This is the super cool HarmonyOS Super Device interconnection feature of Huawei phones. https://t.co/0sg2H9J83d

🚨 GROK NEW FEATURE 🚨 Voice Mode has a new Isolation mode. Isolation mode makes the mic focus on your voice and reduces background noise, so the app understands you better in loud or busy places like outside or in a car. You can turn it on from the settings icon. https://t.co/9MGf1r9wVF

sqlit is a lazygit-style SQL TUI for the terminal. It supports 10+ databases (Postgres, SQLite, Supabase, ClickHouse, DuckDB, etc.) and has query history, autocomplete, and more. Peter Adams (Maxteabag on GitHub) built sqlit with @textualizeio, and it is Terminal Tool of the Week! ⭐️ https://t.co/f5M418UpkK

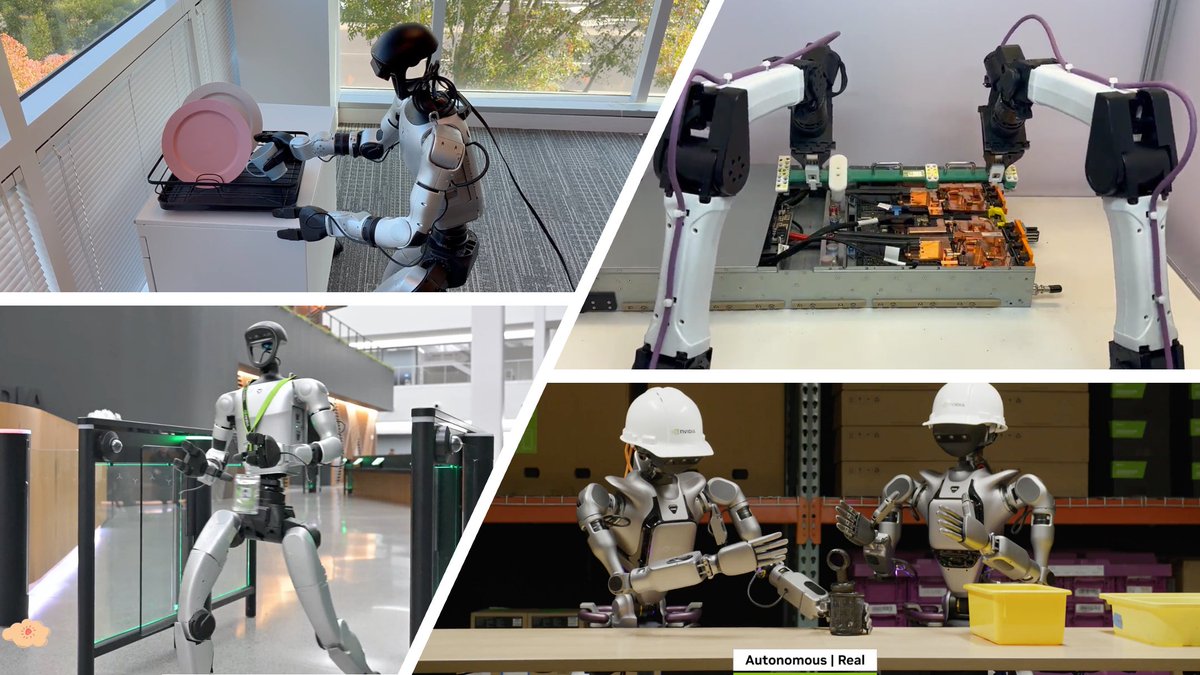

I'm on a singular mission to solve the Physical Turing Test for robotics. It's the next, or perhaps THE last, grand challenge of AI. Super-intelligence in text strings will win a Nobel prize before we have chimpanzee-intelligence in agility & dexterity. Moravec's paradox is a curse to be broken, a wall to be torn down. Nothing can stand between humanity and exponential physical productivity on this planet, and perhaps some day on planets beyond.

We started a small lab at NVIDIA and recently grew to 30 strong. The team punches way above its weight. Our research footprint spans foundation models, world models, embodied reasoning, simulation, whole-body control, and many flavors of RL: basically the full stack of robot learning.

This year, we launched:
- GR00T VLA (vision-language-action) foundation models: open-sourced N1 in March, N1.5 in June, and N1.6 this month;
- GR00T Dreams: a video world model for scaling synthetic data;
- SONIC: a humanoid whole-body control foundation model;
- RL post-training for VLAs and RL recipes for sim2real.

These wouldn't have been possible without the numerous collaborating teams at NVIDIA, strong leadership support, and coauthors from university labs. Thank you all for believing in the mission. Thread on the gallery of milestones:

We open-sourced two further iterations to improve the N1 model on motion smoothness, language following, and cross-embodiment capability. N1.5: https://t.co/4QvMsSLKe0 N1.6: https://t.co/QrQM434Xaf https://t.co/okYzKet3U2

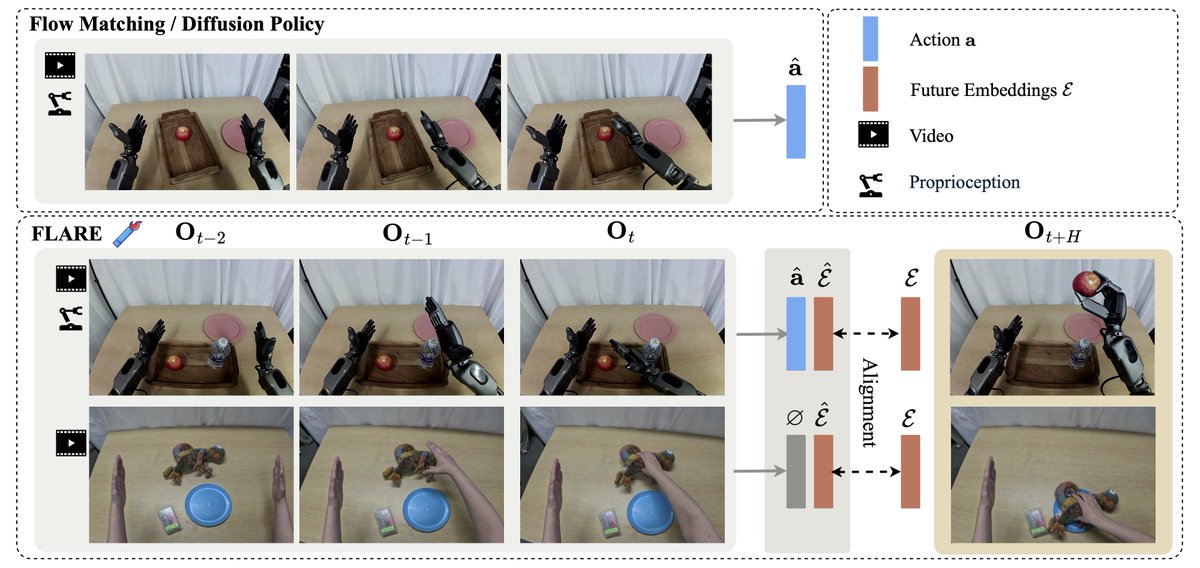

Representation also matters for VLA models! Introducing FLARE: Robot Learning with Implicit World Modeling. With a future latent alignment objective, FLARE significantly improves policy performance on multitask imitation learning and unlocks learning from egocentric human videos.

How do you give a humanoid general motion capability? Not just single motions, but all motion? Introducing SONIC, our new work on supersizing motion tracking for natural humanoid control.

We argue that motion tracking is the scalable foundation task for humanoids. So we "supersized" it: 9k+ GPU hours and 100M+ motion frames.

But tracking alone is not enough; we show how to make a useful control system out of it:
- Universal Kinematic Planner: enables gamepad control and high-level teleoperation, just like controlling a character in a game.
- VR Full-Body Teleop: direct, real-time whole-body control by a human wearing a VR headset.
- VR Keypoint Teleop: control the upper body (hands/head) while our planner handles robust locomotion automatically.
- VLA Integration: we connect this motion tracker to Vision-Language-Action (VLA) models for autonomous task execution!

We use a Universal Token Space to UNIFY these command interfaces, turning our robust tracker into a general-purpose, programmable humanoid brain. This is the generalist "System 1" for humanoids. 🚀

Project: https://t.co/X5xl7daKAS #Humanoids #Robotics #AI #FoundationModels #NVIDIAResearch 🧠🔥

What if robots could improve themselves by learning from their own failures in the real world? Introducing **PLD (Probe, Learn, Distill)**, a recipe that enables Vision-Language-Action (VLA) models to self-improve on high-precision manipulation tasks. PLD couples real-world residual reinforcement learning with standard supervised fine-tuning, letting robots discover failures, recover from them, and distill the results into their own data flywheel. Quick 🧵