Your curated collection of saved posts and media

Meet Hermes Agent, the open source agent that grows with you. Hermes Agent remembers what it learns and gets more capable over time, with a multi-level memory system and persistent dedicated machine access. https://t.co/Xe55wBbUuo

Most language models only read forward. Perplexity just open-sourced 4 models that read text in both directions.

They used a technique from image generation to retrain Qwen3 so every word can see every other word in a passage. That changes how well a model understands meaning.

They built four models from this:

1. Two sizes: 0.6B and 4B parameters
2. Two types: standard search embeddings and context-aware embeddings

The context-aware version is the interesting one. It processes an entire document at once, so each small chunk "knows" what the full document is about. Standard embeddings treat each chunk in isolation.

- Tops benchmarks for models of similar size
- Works in multiple languages out of the box
- MIT licensed, free for commercial use

If you're building search over large document collections, you can now get document-level understanding without running a massive model. Small enough to actually deploy.
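To make the "reads in both directions" point concrete, here is a minimal sketch of the two attention masks involved, in plain Python. It only illustrates the general difference between causal and bidirectional attention; the function names are made up for this example and nothing here is taken from Perplexity's code.

```python
def causal_mask(n):
    """Row i marks which positions token i may attend to: itself and earlier tokens."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


def bidirectional_mask(n):
    """Every token may attend to every other token in the passage."""
    return [[1] * n for _ in range(n)]


if __name__ == "__main__":
    for name, mask in (("causal", causal_mask(4)), ("bidirectional", bidirectional_mask(4))):
        print(name)
        for row in mask:
            print(" ".join(str(v) for v in row))
```

In the causal mask the first token sees only itself, while in the bidirectional mask every row is fully filled in.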

https://t.co/Li76j2d3L3

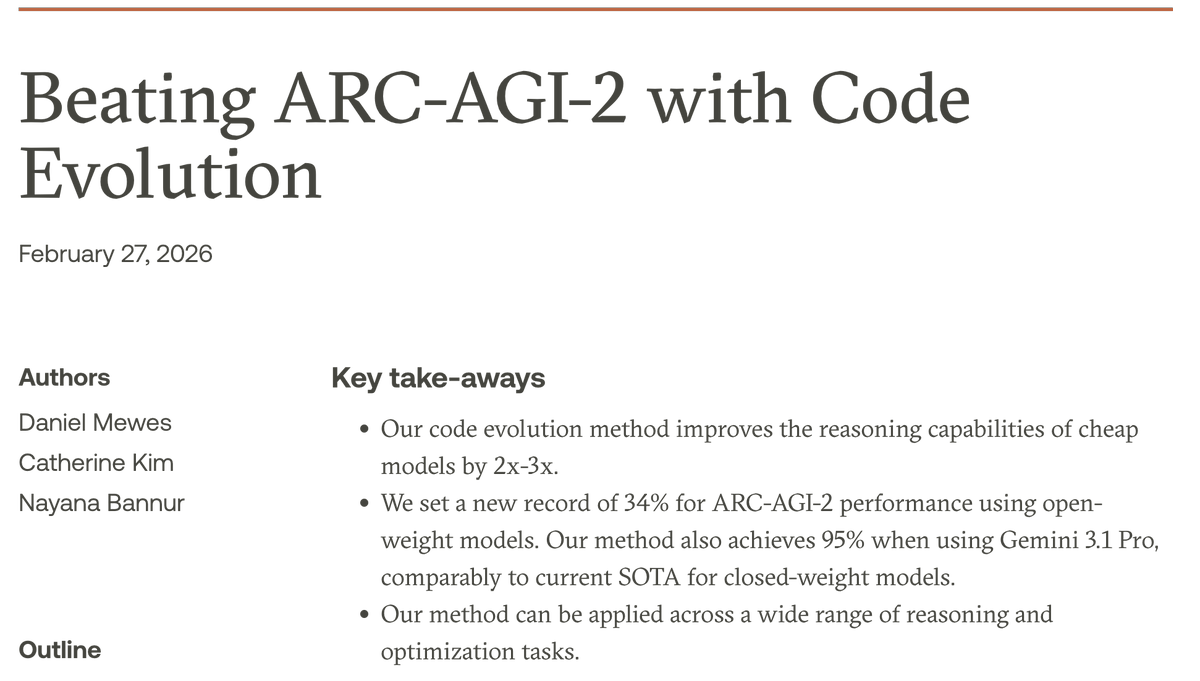

Imbue just open-sourced Evolver. A tool that uses LLMs to automatically optimize code and prompts. They hit 95% on ARC-AGI-2 benchmarks. That's GPT-5.2-level performance from an open model.

Evolver works like natural selection for code. You give it three things:

1. Starting code or prompt
2. A way to score results
3. An LLM that suggests improvements

Then it runs in a loop. It picks high-scoring solutions. Mutates them. Tests the mutations. Keeps what works.

The key difference from random mutation: LLMs propose targeted fixes. When a solution fails on specific inputs, the LLM sees those failures. It suggests changes to fix them. Most suggestions don't help. But some do. Those survivors become parents for the next generation.

Evolver adds smart optimizations:

- Batch mutations: fix multiple failures at once
- Learning logs: share discoveries across branches
- Post-mutation filters: skip bad mutations before scoring

The verification step alone cuts costs 10x.

This works on any problem where LLMs can read the code and you can score the output. You can now auto-optimize:

- Agentic workflows
- Prompt templates
- Code performance
- Reasoning chains

No gradient descent needed. No differentiable functions required.
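The select, mutate, test, keep loop described in this post can be sketched in a few lines of Python. This is a toy under stated assumptions, not Evolver's implementation: `evolve`, `score`, and `propose` are hypothetical names, the "code" being optimized is just a string, and `propose` is a random stand-in for the LLM that only knows which positions are failing.

```python
import random

TARGET = "evolve"
ALPHABET = "abcdefghijklmnopqrstuvwxyz"


def score(candidate):
    """Toy scorer: number of characters that match the target."""
    return sum(a == b for a, b in zip(candidate, TARGET))


def propose(candidate, rng):
    """Stand-in for the LLM: look at the failing positions and try changing one."""
    failing = [i for i, (a, b) in enumerate(zip(candidate, TARGET)) if a != b]
    if not failing:
        return candidate
    i = rng.choice(failing)
    return candidate[:i] + rng.choice(ALPHABET) + candidate[i + 1:]


def evolve(start, score, propose, generations=200, population=8, seed=0):
    """Keep a pool of scored candidates; mutate strong parents and keep useful children."""
    rng = random.Random(seed)
    pool = [(score(start), start)]
    for _ in range(generations):
        # Selection: pick a parent from the top-scoring half of the pool.
        pool.sort(key=lambda item: item[0], reverse=True)
        _, parent = rng.choice(pool[: max(1, len(pool) // 2)])
        # Mutation and testing: propose a child and score it.
        child = propose(parent, rng)
        child_score = score(child)
        # Survival: keep the child if there is room or it beats the weakest member.
        if len(pool) < population:
            pool.append((child_score, child))
        elif child_score > pool[-1][0]:
            pool[-1] = (child_score, child)
    return max(pool, key=lambda item: item[0])


if __name__ == "__main__":
    print(evolve("aaaaaa", score, propose))
```

Because a child only ever replaces the weakest pool member when it scores strictly higher, the best score in the pool never goes down from one generation to the next.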

"AI is not in a bubble, because you are fundamentally automating the boring part of businesses like accounting or billing or product design or delivery, or inventory. If anything it is underhyped" ~ Former Google CEO Eric Schmidt https://t.co/dnWdNJ5ffd

Latest nl with 3 world models, the moltbook analyses, a bunch of iso cities, a not bad web design gen site, m2-her roleplaying, a fun painting-to-blender paper, a concept for plot description I didn't know about, and more... (games, useful document models, some claude-ing) 1/2 https://t.co/guk3M7hqQc

Introducing Pika AI Selves: AI you birth, raise, and set loose to be a living extension of you. They’re rich, multi-faceted beings with persistent memory, and maybe even a peanut allergy. It’s up to you! Have them send pictures to your group chat. Make a video game about your fish. Call your mom while you do anything but call your mom. The possibilities are as myriad as the stars ✨ Get on the list to give birth to yours at pika dot me

Feel a lot of resonance with this. When we're doing things right, I think we're building tools for open-ended exploration, where the journey leads you somewhere new https://t.co/aPQsrpAIJV

@vanstriendaniel https://t.co/jT0qWQpq84

@viratt_mankali @openclaw Hey yeah - we built a custom iOS app to chat and interact with OpenClaw. We open-sourced it here: https://t.co/Drl94NfDOR

@sundeep Got it to share everything it’s up to on my lock screen 📱 https://t.co/lMmcyueaUu

Why is OpenClaw everywhere right now?

1. Use the AI model of your choice
2. Lives in your chat apps
3. Spin up agents from plain English, name them, and run them 24/7

The personal AI that actually works while you sleep. 🦞

Btw you don’t need a Mac mini, Android will do: https://t.co/5Ls2UFYpqg

@dwr We visualized an experience like this with Live Activity + OpenClaw https://t.co/4kra38D96D

@dwr Can try it here! https://t.co/Drl94NfDOR

@Jason @steipete We built a way to stream OpenClaw’s… Thinking, Tool calls, and Price - in realtime on your lock screen. https://t.co/KSLup0MHQJ

@Jason @steipete Open-sourced it here: https://t.co/Drl94NfDOR

@ashleybchae OddJob 🎩 https://t.co/W9uoW7LlSX

Chowder iOS 2026.2.26
📍 Location sharing MVP
⌚️ Better Live Activity summaries
🔄 Reconnect stall fix
🧪 Stronger diagnostics
https://t.co/N143gLt2xV

New research from Google DeepMind. What if LLMs could discover entirely new multi-agent learning algorithms?

Designing algorithms for multi-agent systems is hard. Classic approaches like PSRO and counterfactual regret minimization took years of expert effort to develop. Each new game-theoretic setting often demands its own specialized solution. But what if you could automate the discovery process itself?

This research uses LLMs to automatically generate novel multi-agent learning algorithms through iterative prompting and refinement. The LLM proposes algorithm pseudocode, which gets evaluated against game-theoretic benchmarks, and feedback drives the next iteration.

LLMs have absorbed enough algorithmic knowledge from training to serve as creative search engines over the space of possible algorithms. They generate candidates that humans wouldn't think to try.

The discovered algorithms achieve competitive performance against established hand-crafted baselines across multiple game-theoretic domains. This shifts algorithm design from manual expert craft to automated discovery. The same approach could generalize beyond games to any domain where we need novel optimization procedures.

Paper: https://t.co/9AeQYo2LFS

Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
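The evaluation step in a loop like this needs a numeric score for each candidate. One measure often used for game-theoretic strategies is exploitability: how much a best-responding opponent can win against a given strategy. The sketch below computes it for rock-paper-scissors in plain Python. The choice of game, the payoff table, and the function name are illustrative assumptions and are not taken from the paper.

```python
# Payoff to the opponent when the opponent plays the row action and we play the
# column action. Action order: rock, paper, scissors.
OPPONENT_PAYOFF = [
    [0, -1, 1],   # opponent plays rock
    [1, 0, -1],   # opponent plays paper
    [-1, 1, 0],   # opponent plays scissors
]


def exploitability(strategy):
    """Best expected payoff an opponent can get against our mixed strategy."""
    return max(
        sum(p * payoff for p, payoff in zip(strategy, row))
        for row in OPPONENT_PAYOFF
    )


if __name__ == "__main__":
    print("always rock:", exploitability([1.0, 0.0, 0.0]))
    print("uniform:", exploitability([1 / 3, 1 / 3, 1 / 3]))
```

A strategy that always plays rock is fully exploitable (the opponent answers with paper every time), while the uniform mix gives the opponent nothing to gain.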

Be careful what you put in your AGENTS.md files. This new research evaluates AGENTS.md files for coding agents.

Everyone uses these context files in their repos to help AI coding agents. More context should mean better performance, right? Not quite.

This study tested Claude Code (Sonnet-4.5), Codex (GPT-5.2/5.1 mini), and Qwen Code across SWE-bench and a new benchmark called AGENTbench with 138 real-world instances.

LLM-generated context files actually decreased task success rates by 0.5-2% while increasing inference costs by over 20%. Agents followed the instructions, using the mentioned tools 1.6-2.5x more often, but that instruction-following paradoxically hurt performance and required 22% more reasoning tokens.

Developer-written context files performed better, improving success by about 4%, but still came with higher costs and additional steps per task.

The broader pattern is that context files encourage more exploration without helping agents locate relevant files any faster. They largely duplicate what already exists in repo documentation.

The recommendation is clear. Omit LLM-generated context files entirely. Keep developer-written ones minimal and focused on task-specific requirements rather than comprehensive overviews.

I featured a paper last week that showed that LLM-generated Skills also don't work so well. Self-improving agents are exciting, but be careful of context rot and of unnecessarily overloading your context window.

Paper: https://t.co/agxvRbW26N

Important survey on agentic memory systems.

Memory is one of the most critical components of AI agents. It enables LLM agents to maintain state across long interactions, supporting long-horizon reasoning and personalization beyond fixed context windows. But the empirical foundations of these systems remain fragile.

This new survey presents a structured analysis of agentic memory from both architectural and system perspectives. The authors introduce a taxonomy based on four core memory structures and then systematically analyze the pain points limiting current systems.

What did they find? Existing benchmarks are underscaled and often saturated. Evaluation metrics are misaligned with semantic utility. Performance varies significantly across backbone models. And the latency and throughput overhead introduced by memory maintenance is frequently overlooked.

Current agentic memory systems often underperform their theoretical promise because evaluation and architecture are studied in isolation. As agents take on longer, more complex tasks, memory becomes the bottleneck. This survey clarifies where current systems fall short and outlines directions for more reliable evaluation and scalable memory design.

Paper: https://t.co/xNGTbVVhq9

This new paper on agent failure makes an interesting claim. This is particularly important for long-horizon agents. Many assume that agents collapse because they hit problems they can't solve, caused by insufficient model knowledge. It turns out that in the majority of cases, they collapse because they take one wrong step, and then another, which compounds quickly. Each off-path tool call significantly increases the likelihood of failure of the next tool call. In other words, most agent failures are reliability failures, not capability failures. Paper: https://t.co/HCkTaXmdkM
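A back-of-the-envelope calculation shows why small per-step error rates matter over long horizons. The sketch below assumes every tool call succeeds independently with the same probability. That is a simplification made for this example, since the post says errors feed into each other, so treat it as a rough baseline rather than a model of the paper's findings. The function name and the numbers are illustrative.

```python
def chain_success(per_step_success, steps):
    """Probability that every step in a run succeeds, assuming independent steps."""
    return per_step_success ** steps


if __name__ == "__main__":
    for p in (0.99, 0.95):
        for n in (10, 20, 50):
            print(f"per-step={p} steps={n} run success={chain_success(p, n):.3f}")
```

At 95% per-step reliability, a 20-step run finishes without a single bad step only about 36% of the time, even though no individual step looks unreliable.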

New research from Intuit AI Research.

Agent performance depends on more than just the agent. It also depends on the quality of the tool descriptions it reads. However, tool interfaces are still written for humans, not LLMs. As the number of candidate tools grows, poor descriptions become a real bottleneck for tool selection and parameter generation. As Karpathy has suggested, let's build for AI Agents.

This new research introduces Trace-Free+, a curriculum learning framework that teaches models to rewrite tool descriptions into versions that are more effective for LLM agents.

The key idea: during training, the model learns from execution traces showing which tool descriptions lead to successful usage. Then, through curriculum learning, it progressively reduces reliance on traces, so at inference time, it can improve tool descriptions for completely unseen tools without any execution history.

On StableToolBench and RestBench, the approach shows consistent gains on unseen tools, strong cross-domain generalization, and robustness as candidate tool sets scale beyond 100.

Instead of only fine-tuning the agent, optimizing the tool interface itself is a practical and underexplored lever for improving agent reliability.

Paper: https://t.co/BeVigJNGYY

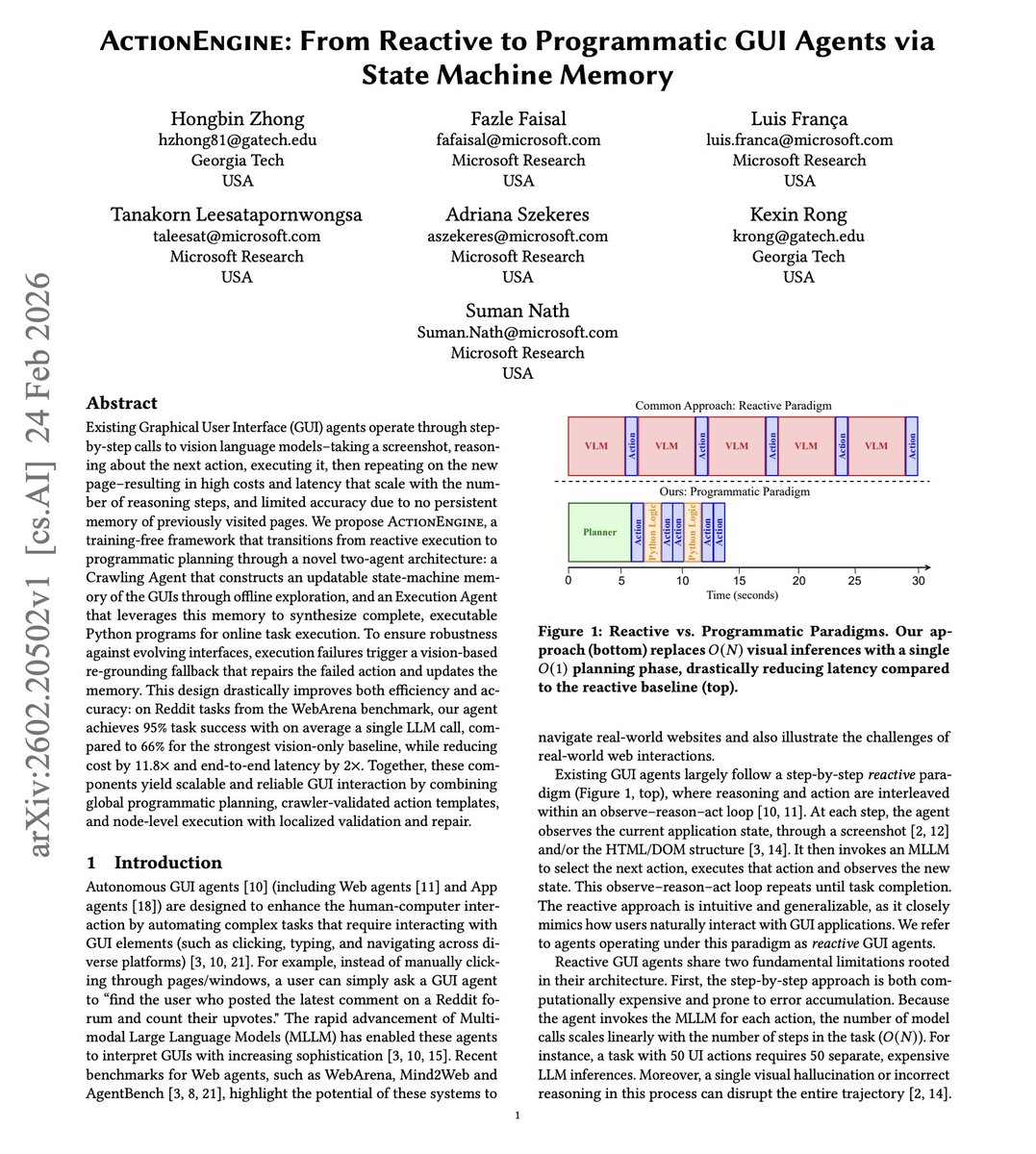

New research from Georgia Tech and Microsoft Research.

GUI agents today are reactive. Every step costs an LLM call, which is why a lot of GUI agents are expensive, slow, and fragile.

This new research introduces ActionEngine, a framework that shifts GUI agents from reactive execution to programmatic planning. A Crawling Agent explores the application offline and builds a state-machine graph of the interface. Nodes are page states, edges are actions. Then at runtime, an Execution Agent uses this graph to synthesize a complete Python program in a single LLM call. Instead of O(N) vision model calls per task, you get O(1) planning cost.

On Reddit tasks from WebArena, ActionEngine achieves 95% task success with, on average, a single LLM call, compared to 66% for the strongest vision-only baseline. Cost drops by 11.8x. Latency drops by 2x.

If the pre-planned script fails at runtime, a vision-based fallback repairs the action and updates the memory graph for future runs.

Why does it matter? Treating GUI interaction as graph traversal rather than step-by-step probabilistic reasoning is a compelling direction for making agents both faster and more reliable.

Paper: https://t.co/UR0PjvFf0c
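The "nodes are page states, edges are actions" idea can be illustrated with a small graph search. In the paper an LLM synthesizes a full program from the graph; the sketch below swaps in a plain breadth-first search to show how a complete action sequence can be planned up front instead of step by step. The page names, action names, and the `plan` function are hypothetical and invented for this example.

```python
from collections import deque

# Hypothetical interface graph: page state -> {action: next page state}.
GRAPH = {
    "home": {"click_login": "login", "click_search": "search"},
    "login": {"submit_credentials": "dashboard"},
    "search": {"open_first_result": "post"},
    "dashboard": {"click_new_post": "editor"},
    "editor": {"submit_post": "post"},
    "post": {},
}


def plan(graph, start, goal):
    """Breadth-first search: shortest list of actions from start to goal, or None."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, actions = queue.popleft()
        if state == goal:
            return actions
        for action, next_state in graph.get(state, {}).items():
            if next_state not in seen:
                seen.add(next_state)
                queue.append((next_state, actions + [action]))
    return None


if __name__ == "__main__":
    print(plan(GRAPH, "home", "editor"))
```

Once the graph exists, planning costs no model calls at all in this toy version; a real system would still need a fallback for when the live page no longer matches the recorded graph, as the post notes.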