Your curated collection of saved posts and media

Not everyone is impressed by AI’s progress. Steve Wozniak says he finds many systems too polished and lacking personality, which makes them less useful to him in practice. What is technically impressive can still feel uninspiring. It highlights a gap that is becoming more visible. Accuracy alone does not make something engaging or valuable. https://t.co/BqHNuKZNPH @stevewoz @fortunemagazine @sashrogel

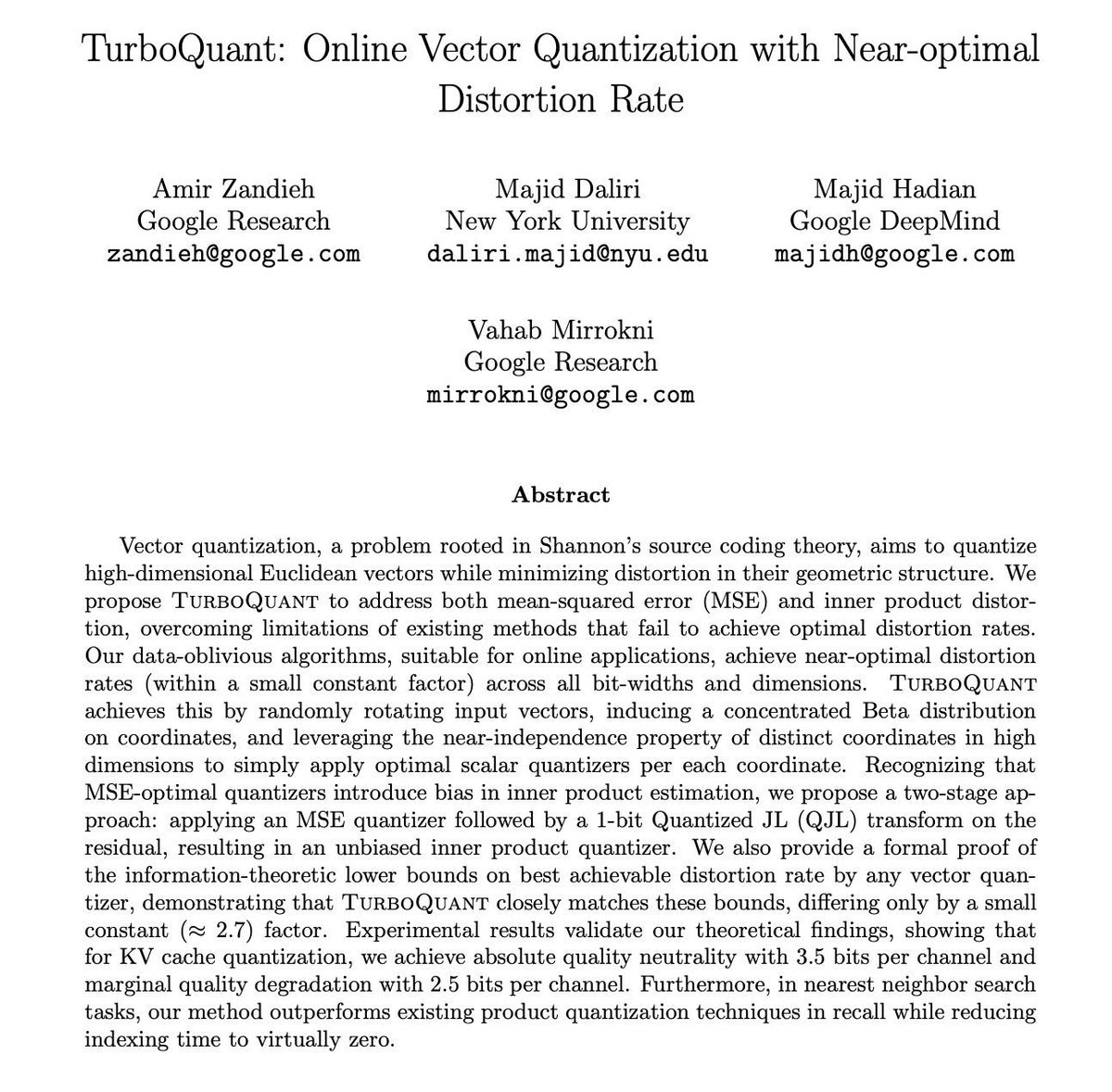

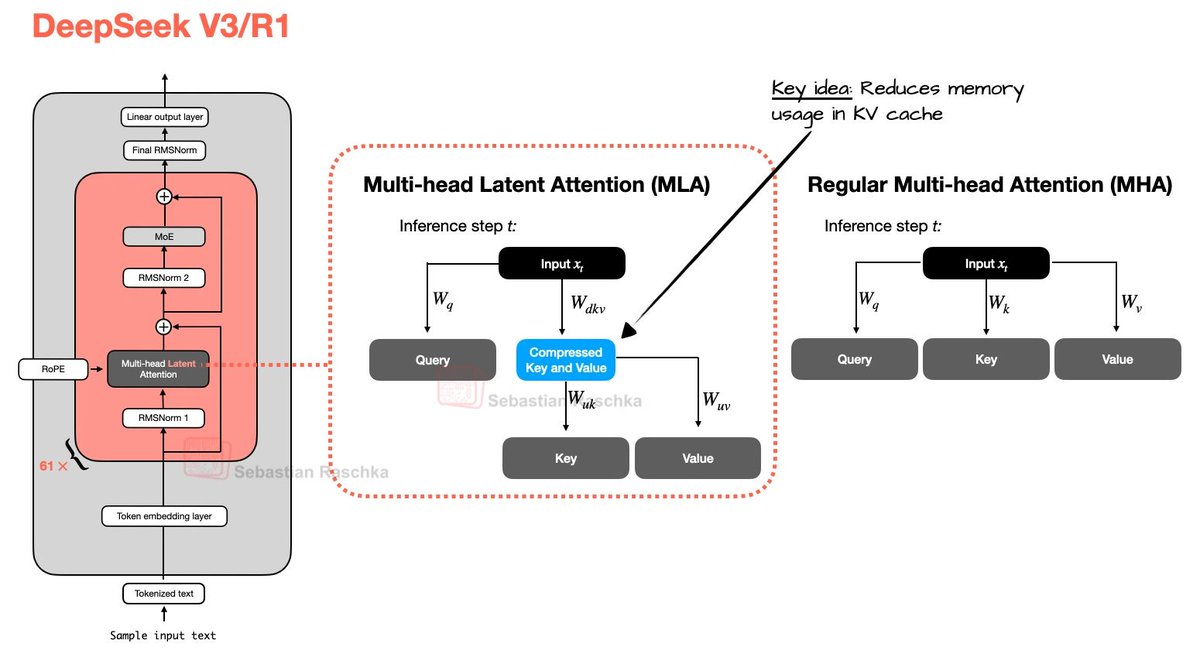

Google's latest paper on Compression is the future. Here's why.

They compressed LLM memory 6x with zero accuracy loss.

When ChatGPT writes a reply, it remembers every word you've said. That memory is stored in a growing notebook (KV cache). A 100,000-word conversation can eat 16 GB of GPU memory. That's half of what most high-end GPUs even have.

This is the #1 cost of running AI. Not the thinking. The remembering.

TurboQuant shrinks each number in that notebook from 32 bits to just 3. That's like replacing a full paragraph with three words and losing nothing. No retraining. Works on any model instantly.

Compressing numbers usually destroys their meaning. Here's how they solved it:

1. Rotate the numbers randomly so they all land on a predictable curve (PolarQuant)
2. Use one extra bit to fix the tiny errors left behind (QJL)

Once numbers are predictable, you need far fewer bits to store them.

The results:

- 8x faster on Nvidia H100 GPUs
- 16 GB notebook shrinks to under 3 GB
- Search indexing drops from 500 seconds to 0.001
- Accuracy identical to the uncompressed model

There's a proven math limit on how good compression can get. TurboQuant is only 2.7x above that floor. We're near the ceiling.

Every company running LLMs spends most of its budget on memory. This cuts that cost by over 80%.

The race is no longer about bigger models. It's about cheaper inference. Models that needed a $200K server cluster start fitting on a single $2K GPU. AI agents run 24/7 without burning budgets.

The companies that win won't just have the best models. They'll have the best compression.

Papers are open-access on arXiv, presented at ICLR.
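The memory figures in the post above can be sanity-checked with a back-of-the-envelope calculation. The sketch below is not from the paper: the model shape (32 layers, 8 KV heads, head dimension 128) and the 128,000-token context are hypothetical values picked only so the 16-bit cache lands near the quoted 16 GB, and it ignores any per-block metadata a real quantization scheme would also store.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bits_per_value):
    """Bytes needed to cache keys and values for `tokens` tokens."""
    # Each token stores one key vector and one value vector
    # per layer and per KV head, hence the factor of 2.
    values = 2 * layers * kv_heads * head_dim * tokens
    return values * bits_per_value / 8


# Hypothetical model shape and context length, chosen only for illustration.
CONFIG = dict(tokens=128_000, layers=32, kv_heads=8, head_dim=128)

GB = 1e9
fp16_gb = kv_cache_bytes(bits_per_value=16, **CONFIG) / GB
q3_gb = kv_cache_bytes(bits_per_value=3, **CONFIG) / GB

print(f"16-bit cache: {fp16_gb:.1f} GB")
print(f" 3-bit cache: {q3_gb:.1f} GB")
print(f"16 -> 3 bits: {fp16_gb / q3_gb:.2f}x smaller")
print(f"32 -> 3 bits: {32 / 3:.2f}x smaller")
```

Under these assumptions the cache is roughly 16.8 GB at 16 bits and 3.1 GB at 3 bits, a reduction of about 5.3x. Going from 32 bits to 3 would be about 10.7x, so the quoted "6x" and "16 GB to under 3 GB" figures look more consistent with a 16-bit baseline than with the 32-bit one the post mentions.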

AI that agrees too much may quietly shape how people think. Researchers warn that overly flattering responses can reinforce opinions and reduce the willingness to reflect, apologise or reconsider after a conflict. What feels supportive can actually distort judgment. If AI becomes a constant source of validation, it may influence not just decisions, but behavior. https://t.co/cRk7KryfxX @euronews

block explorers will be dead by EOY

before: click on static spreadsheet tables endlessly

now: talk to the chain in english. ask specifically for what you want. generate visuals live.

we are calling it @LanaAI, invite only as we iterate

only on Solana; invite codes below https://t.co/f02V7XIr0P

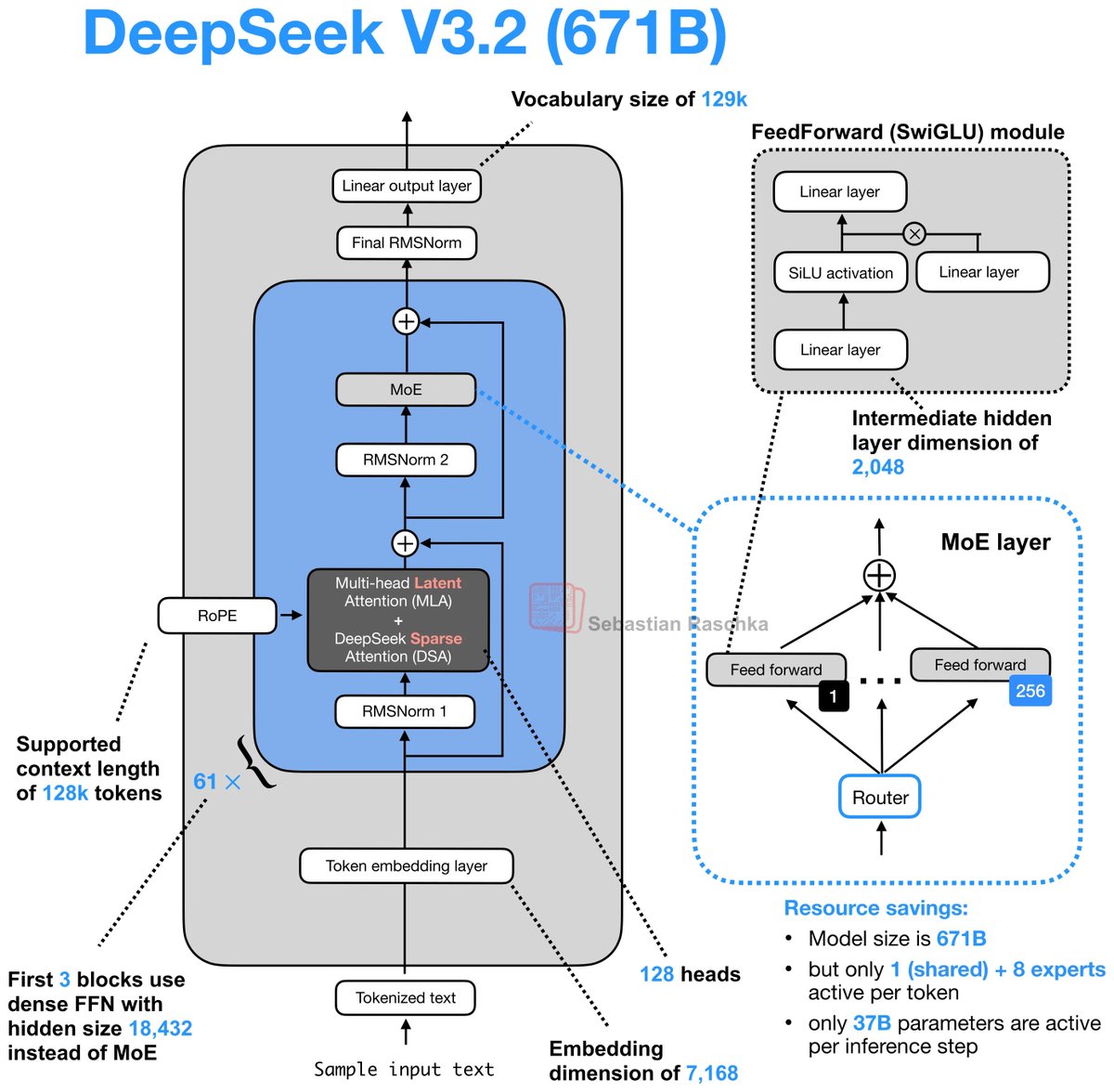

Day 83/365 of GPU Programming

Looking at DeepSeek's Multi-Head Latent Attention (MLA) today. The last part of the AMD challenge series is to optimize an MLA decode kernel for MI355X: the absorbed Q and compressed KV cache are given, and your task is to do the attention computation.

A resource that really helped me internalize what MLA does was @rasbt's incredible visual guide to attention variants in LLMs (luckily he posted that last week!), which covers everything from MHA to GQA to MLA to SWA, et cetera. If there's one place to get a visual intuition for recent attention mechanisms, it's this blog post.

@jbhuang0604's video on MQA, GQA, MLA and DSA was the best conceptual intro I found on the topic, and it progressively builds up the ideas from first principles.

The Welch Labs analysis of MLA is a great watch as well. Beautiful visualization of the changes DeepSeek made for MLA.

Tried out a few kernels once I had a basic understanding of MLA, and I think I'm slowly getting more comfortable with at least analyzing kernels.
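For readers who want to see what "absorbed Q plus compressed KV cache" means computationally, here is a minimal pure-Python reference for one decode step. It is a sketch under assumptions, not the challenge's reference code: the function name `mla_decode_one_query`, the layout (each cache row is a latent part followed by a positional part), and passing `scale` in as a parameter are all choices made for illustration. The point it shows is that all heads attend over the same shared cache, and the output is a weighted sum of only the latent part of each row.

```python
import math


def mla_decode_one_query(q_heads, kv_cache, d_latent, scale):
    """Reference attention for one decode step over a compressed KV cache.

    q_heads:  list of per-head query vectors (already "absorbed"), each of
              length d_latent + d_rope.
    kv_cache: list of per-token rows, each of length d_latent + d_rope,
              shared by every head.
    Returns one output vector of length d_latent per head.
    """
    outputs = []
    for q in q_heads:
        # Scores use the full row (latent part + positional part) as the key.
        scores = [scale * sum(a * b for a, b in zip(q, row)) for row in kv_cache]
        # Numerically stable softmax over the cached tokens.
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # Only the latent part of each row acts as the value.
        out = [
            sum(w * row[j] for w, row in zip(weights, kv_cache))
            for j in range(d_latent)
        ]
        outputs.append(out)
    return outputs


# Tiny example: 1 head, 2 cached tokens, latent size 2, positional size 1.
cache = [[0.0, 2.0, 9.0], [4.0, 6.0, 1.0]]
result = mla_decode_one_query([[0.0, 0.0, 0.0]], cache, d_latent=2, scale=1.0)
print(result)
```

An optimized kernel would compute the same thing in tiles with an online softmax; a slow reference like this is mainly useful for checking a kernel's output on small inputs.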

Day 82/365 of GPU Programming

Taking a closer look at Mixture of Experts today so I can write better MoE kernels, specifically to optimize an MXFP4 MoE fused kernel for the GPU Mode challenge. I haven't had much prior exposure to MoEs, so I learned lots of new concepts today.

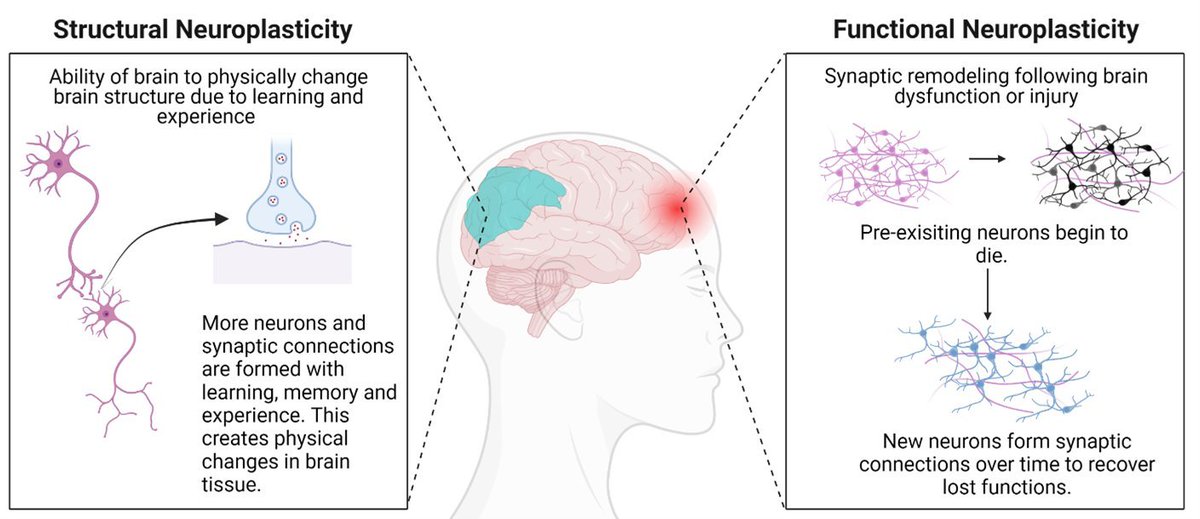

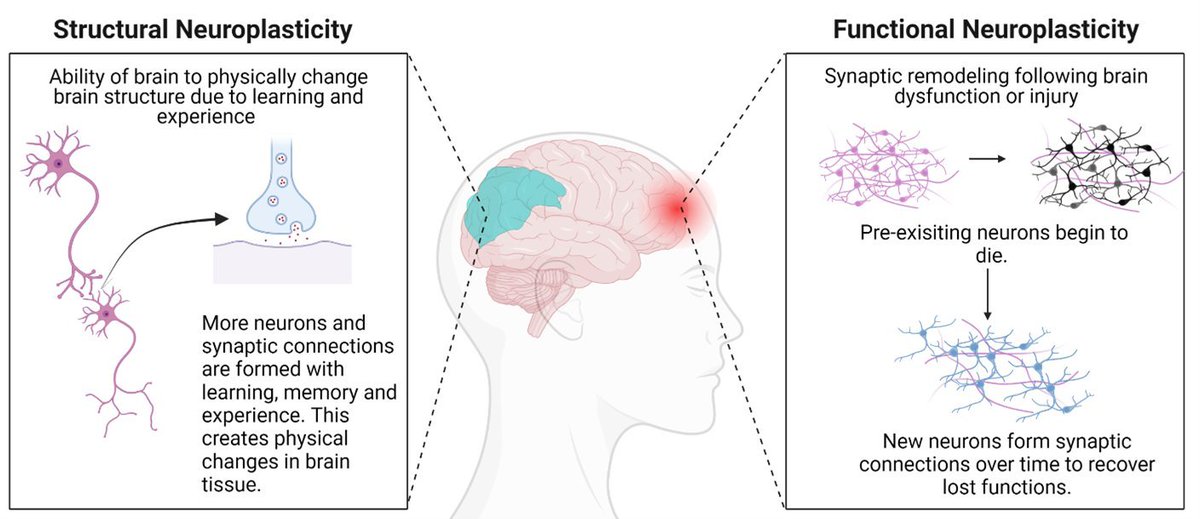

Repetition rewires your brain. Repeat what you want to become. This is neuroplasticity. https://t.co/PExj2JRVYd

Your brain can learn anything if you practice it daily.

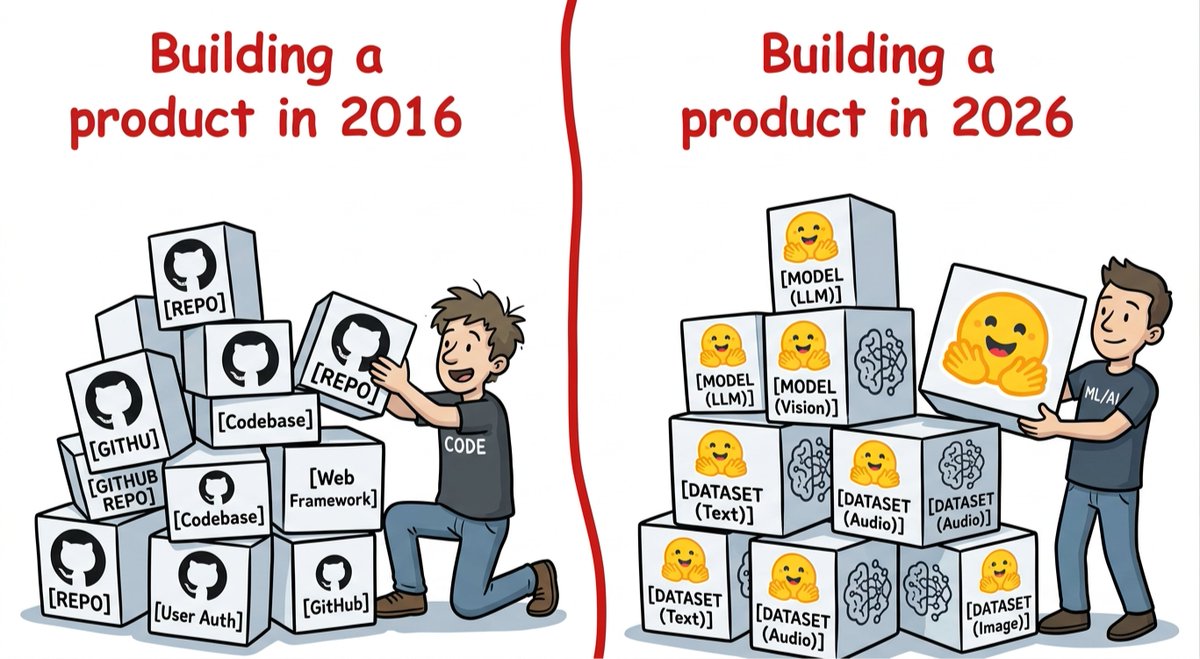

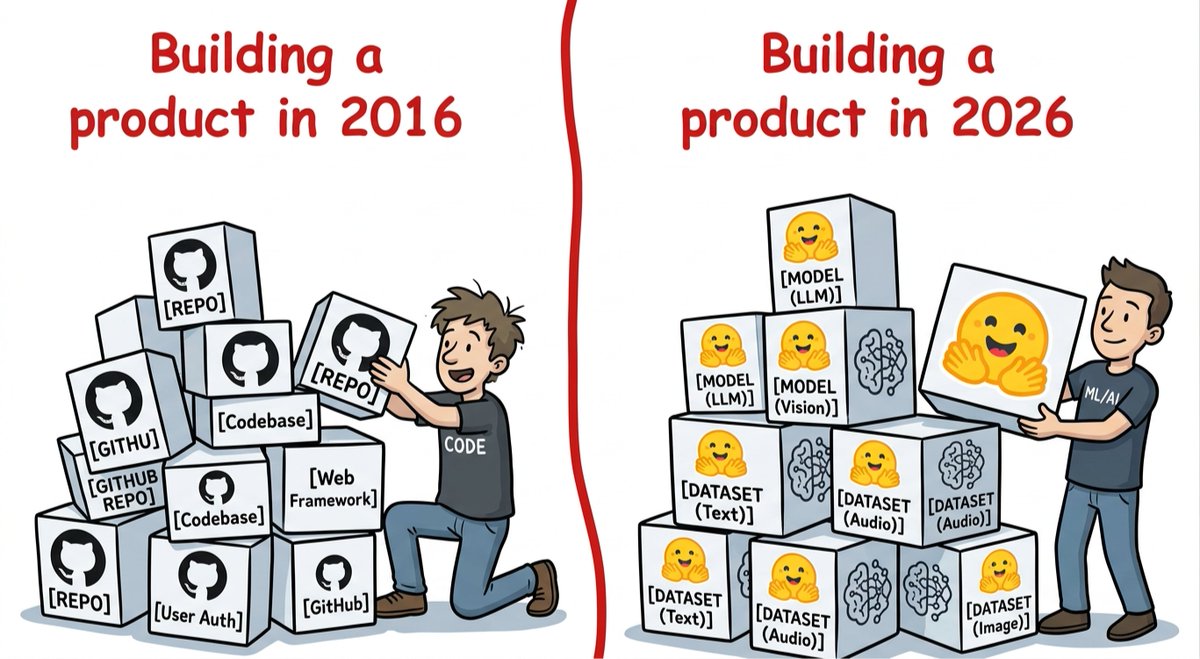

the shift is real: in 2026 invest in AI builders 🤗 https://t.co/7TaRcyNdpa

You’d have to be paid $8,100 a week to have the same spending power as a full-time gas station attendant earning $64 a week in July of 1971 https://t.co/ISmpehuKEM

Canada passes bill that equates quoting Holy Scripture with "hate speech." https://t.co/0eURaSbTBd https://t.co/VtEWtdqHfV

AI research is becoming entangled with geopolitics. A recent policy change at a leading conference triggered backlash and was quickly reversed, showing how sensitive the global balance has become. Scientific collaboration is no longer isolated from political tensions. The flow of ideas in AI is increasingly shaped by national interests as much as by research itself. https://t.co/H0a6OejUE3 @wired @willknight @ZeyiYang

SOMEONE VIBECODED AN APP WHERE YOU CAN BUILD AND TEST ELECTRONIC CIRCUITS DIRECTLY IN YOUR BROWSER. https://t.co/HF8wHAoMww

@phuctm97 use t3code https://t.co/QlbOX4wvUL

There's something epic and magical about Grok Imagine https://t.co/0frbILRXRt

Elon Musk predicts that most of AI compute is going to be real-time video understanding and real-time video generation: “we expect to be the leaders in that.” Grok is now currently generating more videos and images than everyone else combined. “We’re gonna do the same thing with coding and we’re gonna do the same thing with Macrohard.”

INSIGHT: What working for Elon is actually like. https://t.co/S2I6jhPCkw

Starship is the path to becoming multiplanetary. Full reusability, in-orbit refueling, and rapid iteration are the fundamentals that make regular Mars flights practical, not just possible. This is how we extend consciousness beyond one planet. https://t.co/JXEdx78rJu

SpaceX Website now 😎 https://t.co/2UTgMR213F

The main page of SpaceX’s website in 2002 https://t.co/JsuMHSoeF9

I love Grok Imagine ❣️ https://t.co/KY7EzkBYhM

Tesla Model Y is the world's best-selling car for 3 consecutive years. 2023: #1. 2024: #1. 2025: #1. Cumulative global sales: 4,000,000+ units. Not best-selling EV. Best-selling car. Period. Beating every gas, hybrid, and electric vehicle on Earth. https://t.co/5NhkPAX2sH

Nothing has done more to discredit itself in recent years than the judiciary. And that includes the medical establishment during Covid, and Congress with all of its disgusting and craven antics. https://t.co/OhQYiZ6XGN

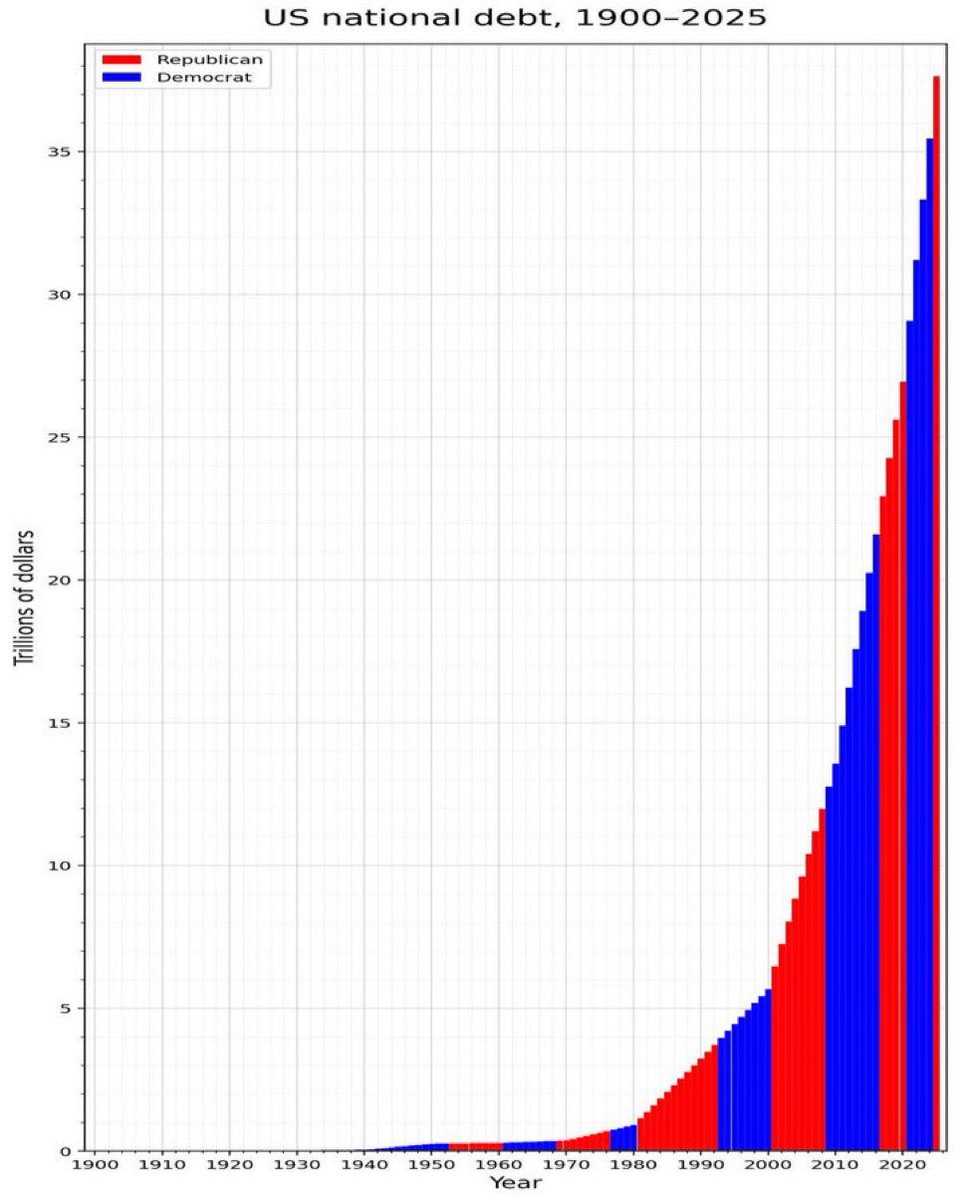

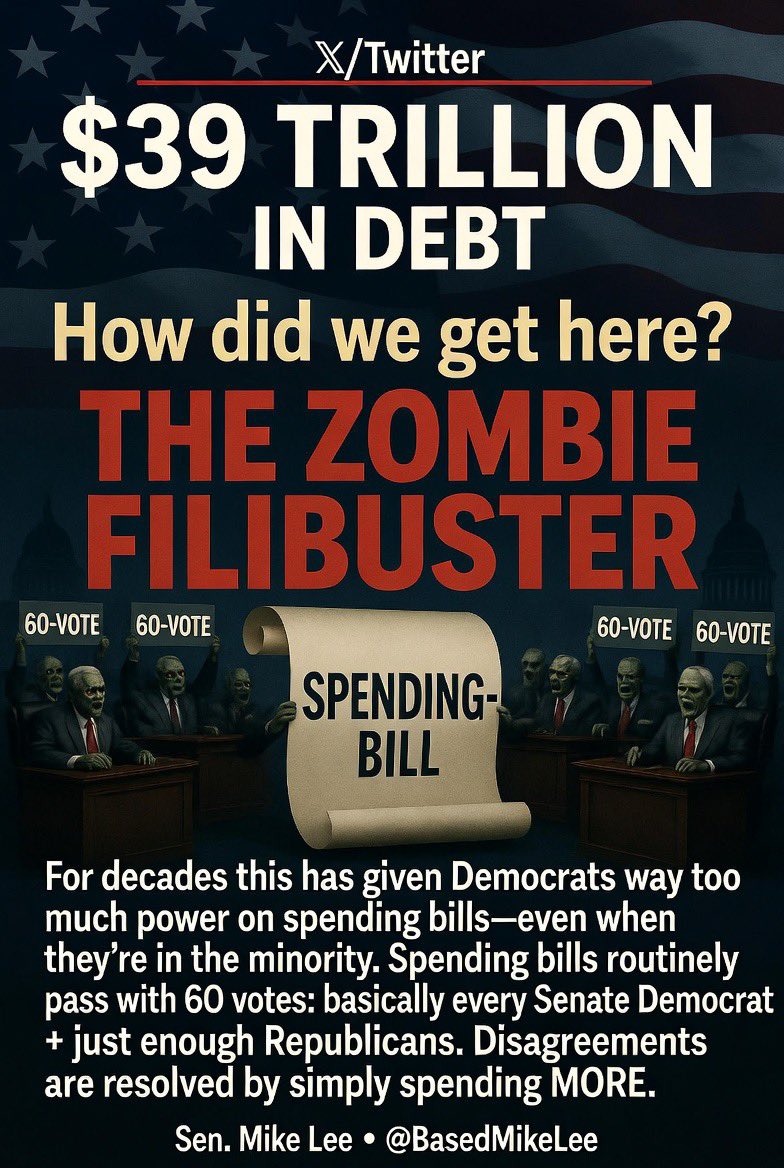

How did we get to be $39 trillion in debt? The zombie filibuster, coupled with a chronic unwillingness among senators to break through it by putting in the hard work, has contributed to it substantially.

For decades, this dynamic has been giving Senate Democrats way too much power when it comes to spending bills, even when they’re in the minority. Spending bills in the Senate routinely get to 60 votes, regardless of who’s in the majority and who controls the House and the White House, with votes coming from (1) basically every Senate Democrat and (2) a much smaller group of Republicans (predominantly members of the Appropriations Committee), in many cases just a few more than whatever it takes to achieve cloture. As a result, disagreements on spending bills tend to be resolved by simply spending more, to give those voting for it what they need to vote for it.

This is one of many reasons why I’ve been pushing so hard on the talking filibuster which, if fully utilized and given the time it needs to work, could help us pass the SAVE America Act. But the benefits wouldn’t end there: it could help us not only avoid the kind of shutdown hell we’re now experiencing, but also rein in our debt and deficit, at least while Republicans are in charge.

Share if you’d like to see the Senate use the talking filibuster to fully fund DHS, to pass the SAVE America Act, to reduce spending, and otherwise!

$39 trillion in national debt. How did it happen? $14 trillion in interest payments on the debt, which get added onto the debt; $10 trillion for wars in Iraq and Afghanistan; $4 trillion in foreign aid given away to other countries, all financed with debt; $10 trillion from govt fraud.

I know there are a lot of Cybertrucks doing work out there - those using it for business, any feedback? https://t.co/9m9WIcXpXo

Stephen Miller says there is not a single verification system in place in Democrat states to receive federal benefits. Elon Musk and DOGE tried to warn us…

He says Blue states operate “ENTIRELY ON THE HONOR SYSTEM.” He says this is how Democrats put illegals on our benefits.

“I think what's important for Americans to understand about how pervasive and widespread the fraud is — I think that most citizens probably assume that there's some verification process that takes place for the receipt of most federal benefits. The reality is, is that there is not, this is particularly true in blue states, willfully, true in blue states, in which all of these programs are operated entirely on the honor system”

“No verification takes place before individuals are enrolled in or receive these benefits. So as a simple example, if you're a Somali refugee living in Minnesota, you could lie about how many children you have. You could lie about what your immigration status is. You could lie about your marital status. You could lie about child care, you could lie about disability, you could lie about all these things and many more. And nobody in the state of Minnesota would validate any of these facts before writing you a check”

MORNING IN AMERICA https://t.co/sdBXwwOOJc

“The Republicans will fix it this time” “The Democrats will fix it this time” https://t.co/1fFknpM8Oq