Your curated collection of saved posts and media

You all are overthinking your side projects. This guy made digital balls physically bounce off real objects/sticky notes using just a webcam, a projector, and JavaScript. Go build something fun. (via ig/bongyunng) https://t.co/caqC07Q5RI

This pot of spicy mala hot pot warms both heart and stomach. One bite and you are instantly brimming with happiness. https://t.co/J7K7Li9IUc

MINI CNC: one in every home 🤪 https://t.co/sYdFMk7vGT

European allies are publicly and privately telling American diplomats that Russia is directly and materially helping Iran's war efforts beyond what the U.S. will publicly acknowledge. 1/4 https://t.co/3ySWn2f5lj

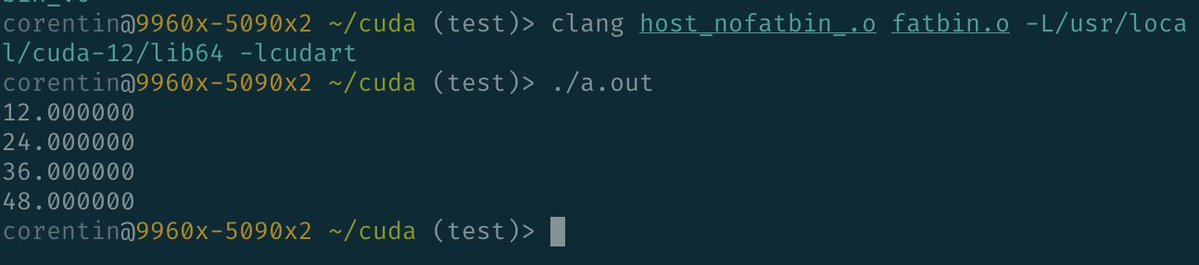

We managed the holy grail of CUDA compilation: joining CUDA host code and device code at *link time*. This means both build graphs (device and host) are now completely separated and built by SM. True scalable CUDA build graphs are now possible. Those who know, know. https://t.co/uR1kSGA9ed

What if you could build your own humanoid robot at home? Asimov just made that possible! A broken knee joint and a 2-month wait for a single spare part later, they built their own humanoid, and launched a DIY kit. $1M in pre-orders in 30 days says they're not alone in that frustration.

You can build your own humanoid at home. Asimov – Here be Dragons is now available for presale. $499 deposit, $15,000 target price. https://t.co/DMIO7Qaj76 https://t.co/O5empHswIP

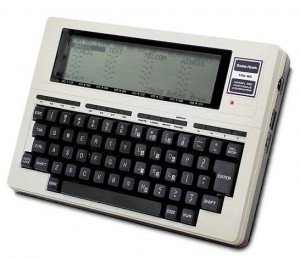

Today in 1983, Radio Shack introduced the TRS-80 Model 100, one of the first portable computers in a notebook-style form factor. https://t.co/mikdJelbjf

AI robots doing real household tasks 🤯 not just demos anymore. #robots #Ai 💕 https://t.co/QgBisvyXbS

Google’s TurboQuant is getting compared to “Pied Piper,” but it’s really about efficiency, not a breakthrough. If it works, it could cut AI runtime memory (KV cache) by up to 6x and make systems much cheaper to run, which is why some are calling it Google’s “DeepSeek moment.” But the biggest bottlenecks in AI, especially training and infrastructure, are still very much there. https://t.co/Q5dEOvABe8 @techcrunch @SarahPerezTC

This is Penpal, an AI app that you can only communicate to through handwriting. A zero-UI experience. There is something special about the age gap between the communication method and the technology. https://t.co/DrTO9hnMHm

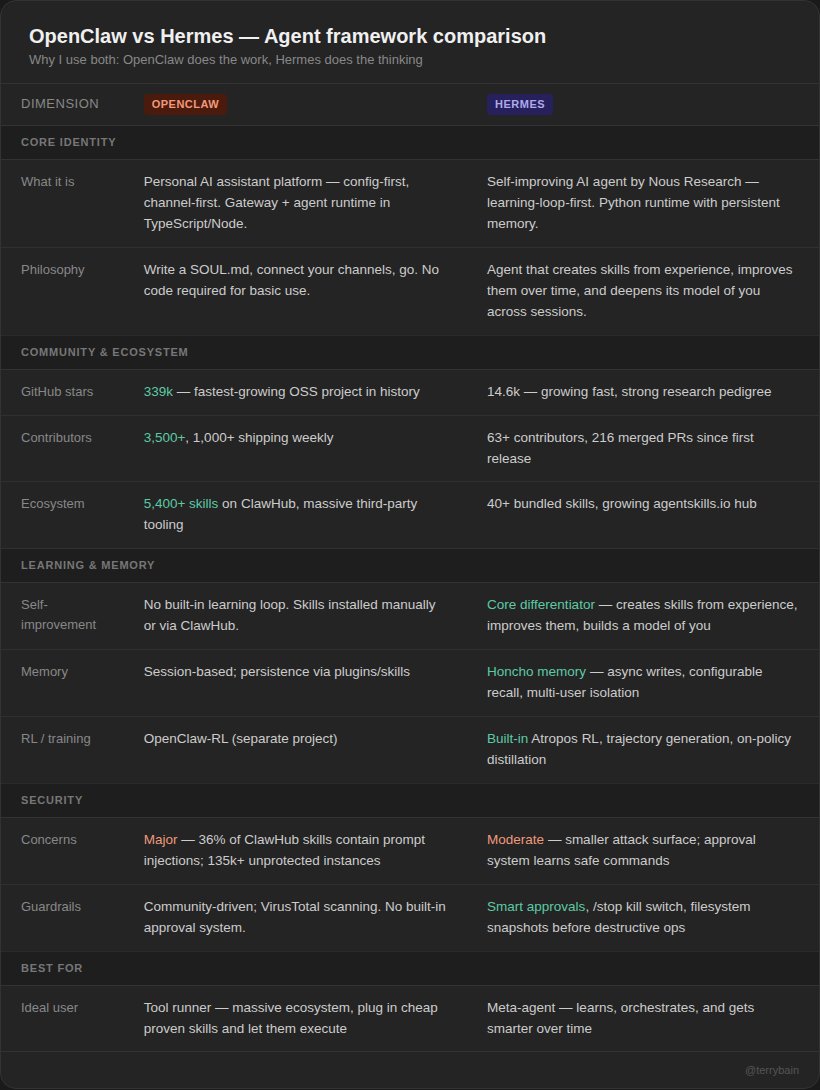

There are distinct advantages to using both OpenClaw and Hermes agent (see table 1). The #1 question I'm getting is "why don't you just use Hermes for everything?" The reason is that I've been working on my research tool for 3+ months, first in Claude Code and Codex, and eventually in OpenClaw. It works wonders for very cheap, and a Hermes rebuild would require a lot of time and credits. I'd be rebuilding what 3,500+ contributors and 5,400+ skills on ClawHub have already solved. So I asked myself, why not use both agents and let each one's strengths cover the other's weaknesses? OpenClaw is the fastest-growing open source project in history (339k GitHub stars). That community has built a massive tool-base. Plug in a skill, configure it, and it just runs. No code required. Hermes is fundamentally different. It's the only agent with a built-in learning loop. It creates skills from experience, improves them during use, and builds a deeper model of who you are across sessions. The way I see it, OpenClaw does the work, Hermes does the thinking and building. Together, we can build anything. Keep in mind, this is all very new and experimental. If anything, this is an important step toward multi-agent frameworks working together. The possibilities only grow from here.

https://t.co/nrp8QDoxgk

it's time @claudeai https://t.co/64HtPk5bKE

The same C++ app ran on both the XIAO RP2350 and the XIAO ESP32-S3 on the same carrier board 🍣 #shapolab https://t.co/9hFb92zLyd

This little illuminated dragon is very happy about Pretext. He's too busy having fun to care about people's "hot takes" on how "it's not that special." (This little dragon also only works on desktop right now but maybe I'll do mobile later) https://t.co/k9FH6p1G0T https://t.co/wNhFk1ZBwM

My dear front-end developers (and anyone who’s interested in the future of interfaces): I have crawled through depths of hell to bring you, for the foreseeable years, one of the more important foundational pieces of UI engineering (if not in implementation then certainly at leas

Reality TV is getting an AI twist, and it’s getting strange. An “AI fruit version” of Love Island shows how easily formats can be replicated, remixed and turned into something surreal with generative tools. Entertainment is entering a phase where ideas can be copied endlessly, but originality becomes harder to find. https://t.co/gu1nHpN4qW @bbcnews @sakshi_saroja

Starship is the first planet-colonizer class rocket https://t.co/hlMRTVRRP7

SpaceX COO @Gwynne_Shotwell: “I love working for @elonmusk.” “He’s funny— he’s hilarious actually.” “He focuses on things that I would never have thought were important.” “One is— beautiful spaces.” “This is one of the most beautiful factories I have ever seen.” Via @TIME https://t.co/VrmzOYOnpy

The Left used to worship Elon. Then they started calling him a Nazi over political disagreements. The message is clear: Either submit or the Left will do everything in its power to ruin you. https://t.co/b6mm3yXPmM

Top fashion with Grok Imagine https://t.co/haTBtjR7yK

https://t.co/VkWQKcvgT0

https://t.co/C3Ek5fK4f3

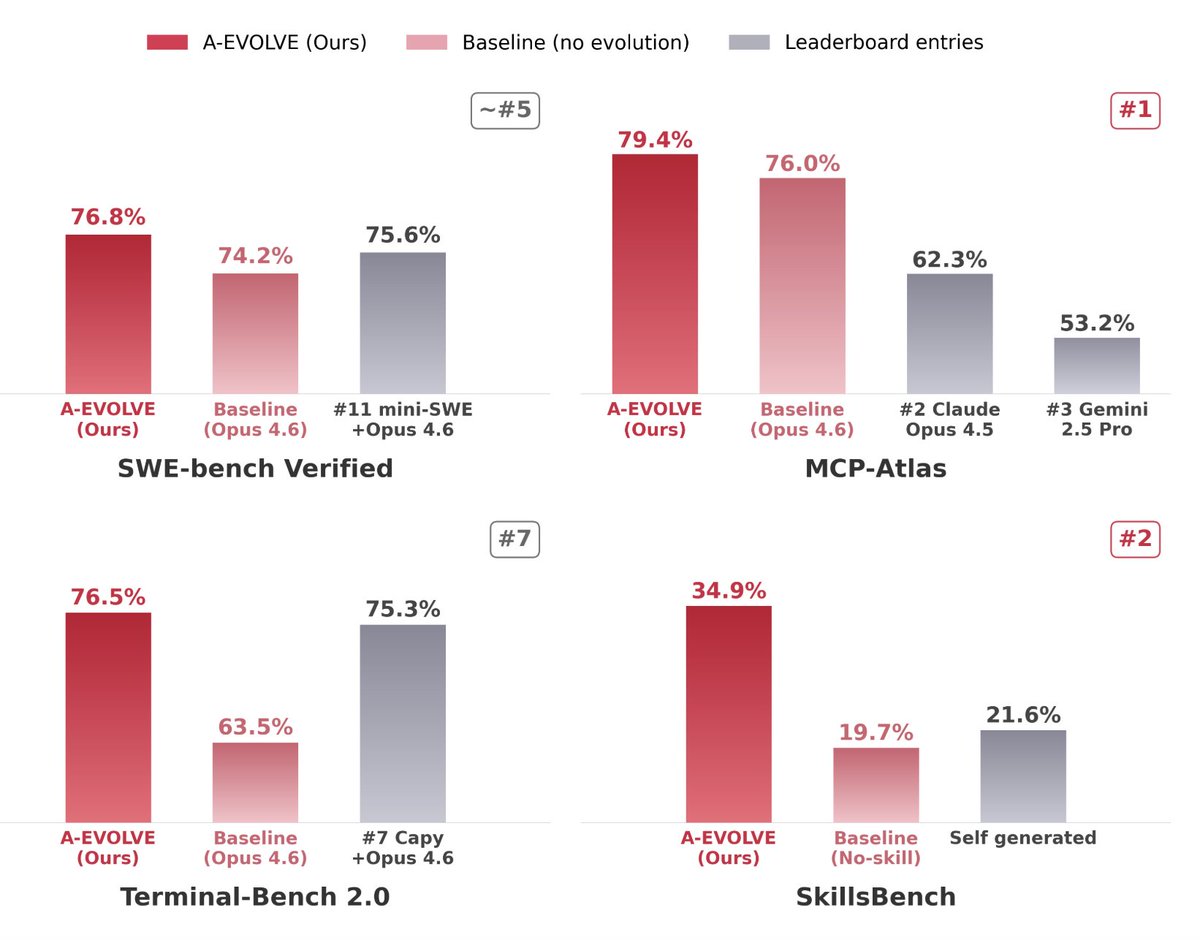

Launch Post🧬 A-Evolve: The PyTorch Moment for Self-evolving AI Today we at @amazon launch the universal infrastructure that turns any agent into a self-improving SOTA agent — zero human intervention. You give it a base agent → it returns a continuously evolving Top-10 agent. 3 lines of code. 0 hours of manual harness engineering: 🟢 MCP-Atlas → 79.4% (#1) +3.4pp 🔵 SWE-bench Verified → 76.8% (~#5) +2.6pp 🟣 Terminal-Bench 2.0 → 76.5% (~#7) +13.0pp 🟡 SkillsBench → 34.9% (#2) +15.2pp Thanks @binghe2727 @YisiSang @sammyershi @linminhua16 for the contribution! #AgenticAI #AEvolve #SelfImprovingAgents

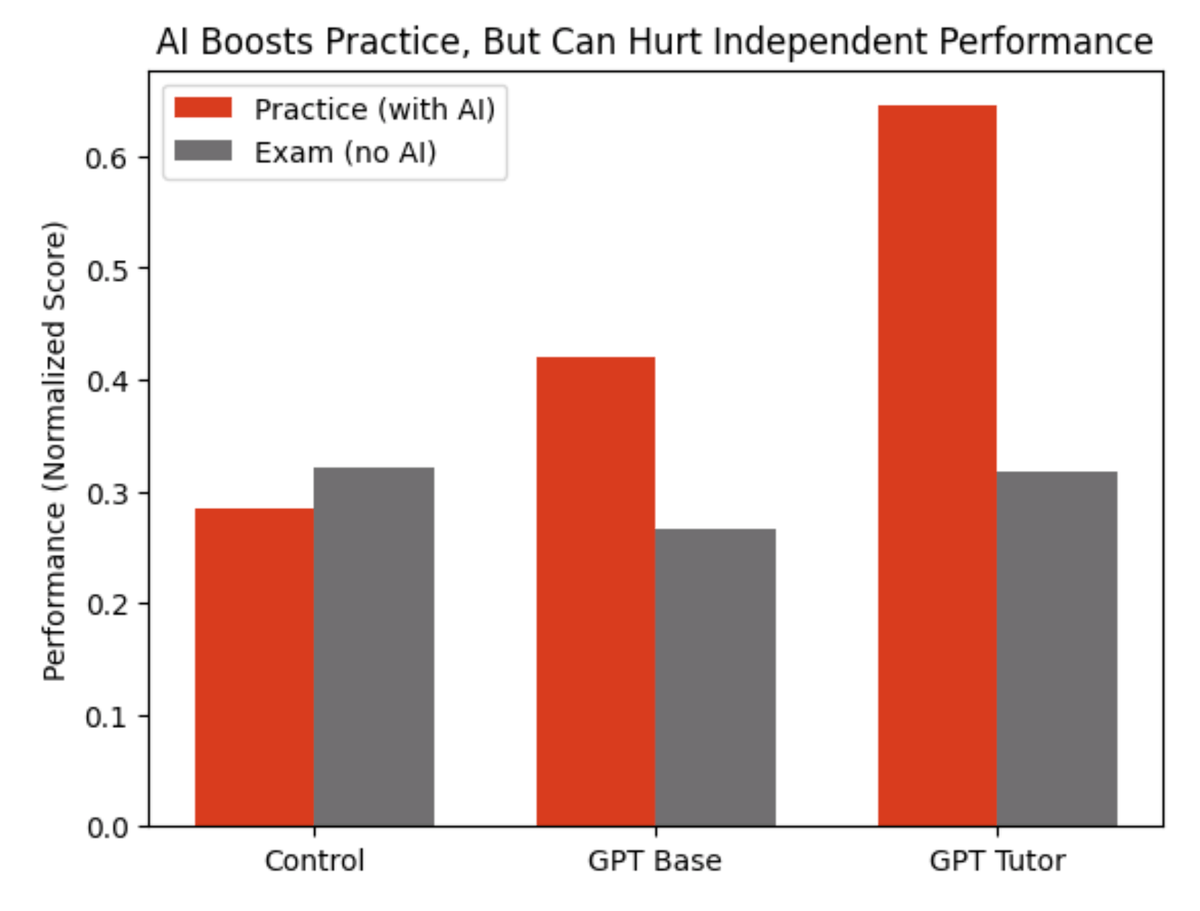

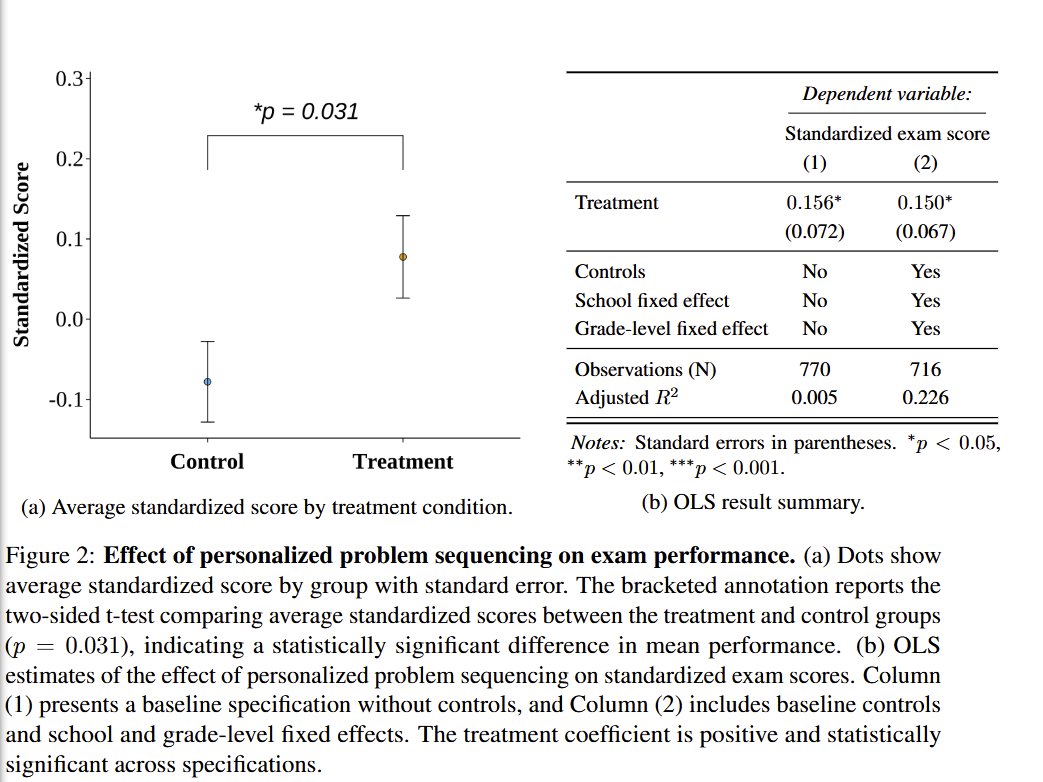

Wharton researchers gave nearly 1,000 high school math students access to ChatGPT during practice problems. Result: ChatGPT is the perfect trap. Look at the red bars. Students with ChatGPT crushed their practice sessions. The basic ChatGPT group solved more problems, and those on the "tutor" version solved even more. Now look at the gray bars. That's the exam. No AI allowed. The ChatGPT group scored 17% worse than kids who practiced with zero technology. And the fancy tutor version? No better than working alone. The researchers called AI a "crutch." When they analyzed what students actually typed into ChatGPT, most of them just wrote “What’s the answer?” The kicker: students who used ChatGPT believed it hadn't hurt their learning. They were confidently wrong. This is the AI trap in education: outsourcing your thinking. Of course, lots of half-baked AI literacy curricula are being rolled out in schools now. Let’s of course ignore that basic literacy (the ability to read) is possible for <50% of 8th graders. Source: Bastani et al. (2025), "Generative AI Can Harm Learning," PNAS

The research team (including @hamsabastani who is on X) found that letting students just use AI resulted in them using it to accidentally shortcut learning. But both that study and a separate RCT found that AIs prompted to act as a tutor improved learning. https://t.co/0HtjGC8eU0 https://t.co/U3OIeCF4aP

Jensen Huang explains how to attract the best talent in the world: “Our company chooses projects for one fundamental goal. My goal is to create an amazing environment for the best people in the world… [and] to create the conditions by which they will come and do their life’s work.” As Jensen explains, the key to doing this is not fighting for market share in commodity markets. It’s choosing projects that are incredibly hard to do. “[A great person] wakes up in the morning and says, I want to do something that has never been done before, that’s incredibly hard to do, that if successful, makes a great impact on the world.” This is why NVIDIA doesn’t make cell phone chips, CPUs or do fabrication. “We naturally selected ourselves out of commodity markets. And because we selected amazing markets and amazingly hard to do things, amazing people joined us… That’s how you build something special. Otherwise you’re only talking about market share.” Video source: @Columbia_Biz (2023)

Eric Schmidt shares his weekend habit that led to billion-dollar decisions https://t.co/YZ2AC5h9ut