Your curated collection of saved posts and media

@PatrickMoorhead @wholemars I know. But it knows more about me than I know about me. Which is a freaky thing. :-) "Hey Grok simulate a conversation between Patrick Moorhead and Robert Scoble about the future of privacy and the social contract when it comes to AI-driven glasses." Here you go: https://t.co/j4PWh4KMeD Sounds pretty much like both of us, except I have @evenrealities and @getVITURE and @MentraGlass here. :-)

Apple vs vibe coding. The kicker: https://t.co/XocVdcBITc

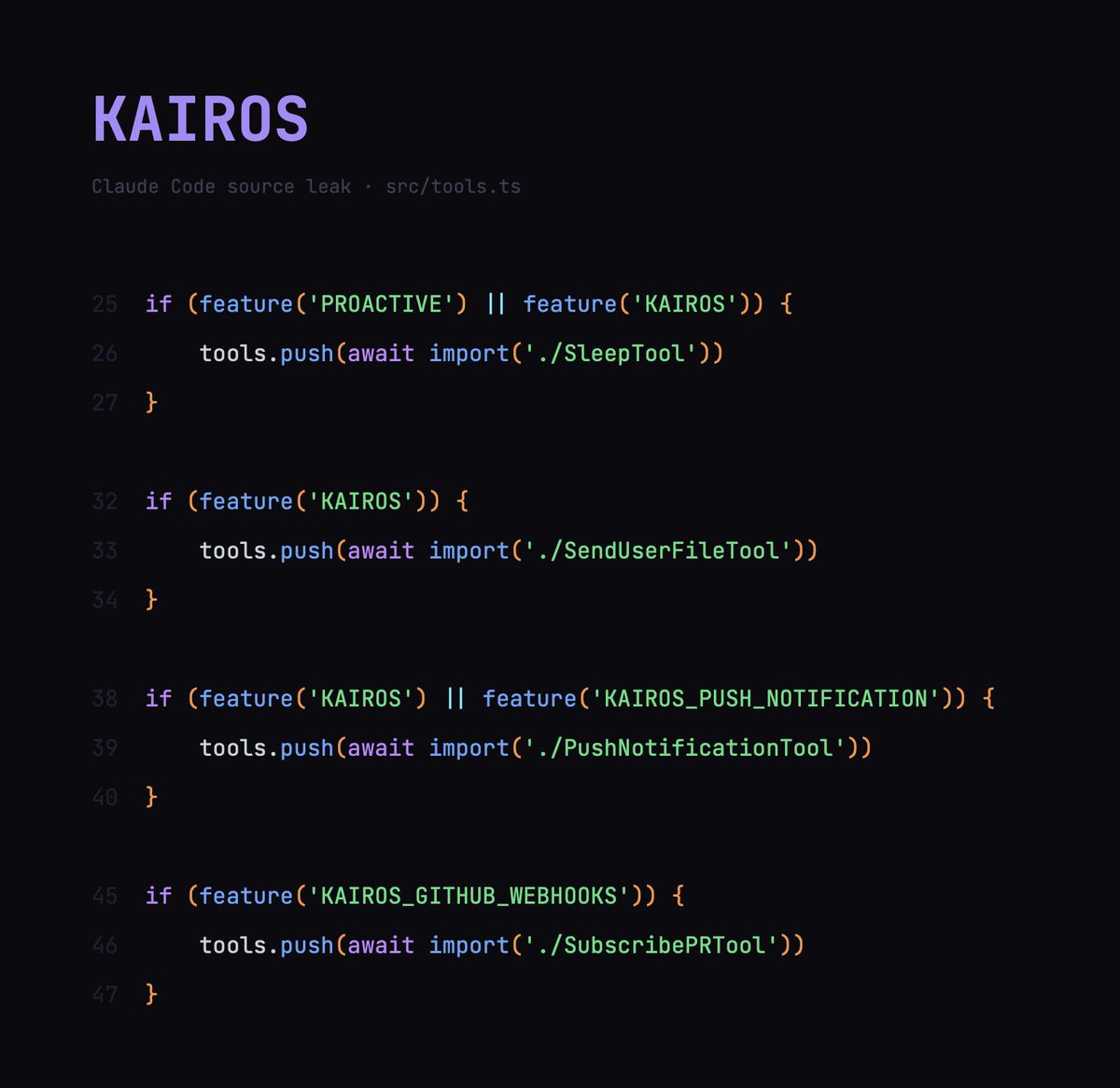

i can't believe more people aren't talking about this part of the claude code leak
there's a hidden feature in the source code called KAIROS, and it basically shows you anthropic's endgame
KAIROS is an always-on, *proactive* Claude that does things without you asking it to. it runs in the background 24/7 while you work (or sleep)
anthropic hasn't turned it on to the public yet, but the code is fully built
here's how it works:
every few seconds, KAIROS gets a heartbeat. basically a prompt that says "anything worth doing right now?"
it looks at what's happening and makes a call: do something, or stay quiet
if it acts, it can fix errors in your code, respond to messages, update files, run tasks... basically anything claude code can already do, just without you telling it to
but here's what makes KAIROS different from regular claude code: it has (at least) 3 exclusive tools that regular claude code doesn't get:
1. push notifications, so it can reach you on your phone or desktop even when you're not in the terminal
2. file delivery, so it can send you things it created without you asking for them
3. pull request subscriptions, so it can watch your github and react to code changes on its own
regular claude code can only talk to you when you talk to it. KAIROS can tap you on the shoulder
and it keeps daily logs of everything.
> what it noticed
> what it decided
> what it did
append-only, meaning it can't erase its own history (you can read everything)
at night it runs something the code literally calls "autoDream." where it consolidates what it learned during the day and reorganizes its memory while you sleep
and it persists across sessions. close your laptop friday, open it monday, it's been working the whole time
think about what this means in practice:
> you're asleep and your website goes down. KAIROS detects it, restarts the server, and sends you a notification. by the time you see it, it's already back up
> you get a customer complaint email at 2am. KAIROS reads it, sends the reply, and logs what it did. you wake up and it's already resolved
> your stripe subscription page has a typo that's been live for 3 days. KAIROS spots it, fixes it, and logs the change
endless use-cases, it's essentially a co-founder who never sleeps
the codebase has this fully built and gated behind internal feature flags called PROACTIVE and KAIROS
i think this is probably the clearest signal yet for where all ai tools are going. we are heading into the "post-prompting" era where the ai just works for you in the background like an all-knowing teammate who notices and handles everything, before you even think to ask
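
The heartbeat-plus-log pattern this post describes can be sketched in a few lines. This is only an illustration of the general idea (observe, decide whether to act, append what happened to a log); it is not taken from any leaked source. `HeartbeatAgent`, `decide`, `act`, and the `agent_log.jsonl` path are all hypothetical names invented for the sketch.

```python
import json
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class HeartbeatAgent:
    """Hypothetical proactive loop; every name here is an assumption."""
    decide: Callable[[dict], Optional[str]]  # returns an action name, or None to stay quiet
    act: Callable[[str], str]                # performs the action, returns a short summary
    log_path: str = "agent_log.jsonl"        # hypothetical log location

    def _append_log(self, entry: dict) -> None:
        # Mode "a" only appends, so this sketch never rewrites earlier entries.
        # It does not enforce immutability at the filesystem level.
        with open(self.log_path, "a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")

    def tick(self, observation: dict) -> Optional[str]:
        """One heartbeat: decide, maybe act, and always log the outcome."""
        action = self.decide(observation)
        result = self.act(action) if action is not None else None
        self._append_log({
            "time": time.time(),
            "noticed": observation,
            "decided": action or "stay quiet",
            "did": result,
        })
        return result

    def run(self, observe: Callable[[], dict], interval_s: float = 5.0,
            max_ticks: Optional[int] = None) -> None:
        """Call tick() every interval_s seconds, forever or for max_ticks beats."""
        ticks = 0
        while max_ticks is None or ticks < max_ticks:
            self.tick(observe())
            ticks += 1
            time.sleep(interval_s)
```

Calling `tick({"site_down": True})` with a `decide` function that maps that observation to `"restart_server"` would run the action and append one JSON line recording what was noticed, decided, and done; an observation that needs nothing still produces a "stay quiet" entry.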

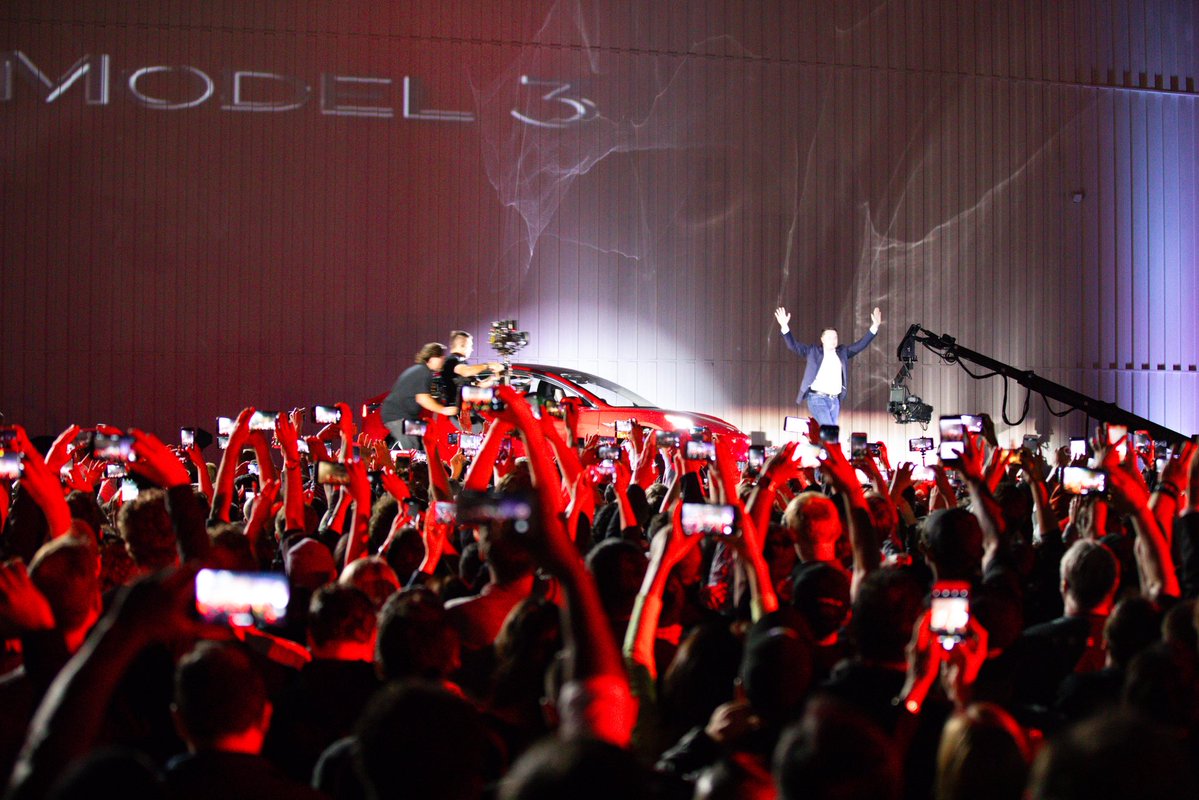

10 years of Model 3 The car that started the EV revolution https://t.co/D5R21Z6MHd

Billionaire Chamath Palihapitiya explains that once he learned the mainstream media was lying to him, he left the Democratic Party.
“You went from being a large Democrat donor to now a very outspoken proponent on the other side of the aisle.”
Chamath Palihapitiya: “Yeah. This is not about politics for me, it's about the truth. There was a moment where I was basically like everybody else, and pretty brainwashed. My media diet was very much the same as everybody else's. I read the New York Times and the Wall Street Journal. I watched a little bit of CNN, a little bit of CNBC, a little bit of MSNBC, a little bit of CBS, Economist. Every now and then you encounter it on a plane and you think you know what's going on, and I had a perception of Donald Trump initially, from the moment he walked down the staircase in Trump Tower to announce. And then over the course of 6-7 years, I realized that some of those fundamental things that I was told about him were just totally false. And there was enough content online where I was almost afraid, but it started with Charlottesville. I was almost afraid to look at it because I'm like, I think I was lied to. And then I saw it. I saw what he said, but then I saw the portrayal. I had originally believed that portrayal until I saw the truth. And then I just started to go down that rabbit hole. So for me, it was an evolution where I was like, I can't believe I'm being lied to by this group of people whose sole responsibility is to hold truth to power. The key word there is truth to power, not your perception or your desires. And I just think that that, I mean, red-pilled me, I guess, in a way.”

Hippo fluid scene pushed to 100M particles made with HydroFX. Foam, bubbles, and spray all simmed together in one system for a cohesive result, fully GPU accelerated. Meshed + extra sand interaction in Houdini, final lookdev/render in Blender. Get HydroFX https://t.co/1aKDxmZK9V https://t.co/cggztcF66j

@LottoLabs Feel free to also crawl https://t.co/JZLFvrZlgW We made a skill for it: https://t.co/2SHtcrnYBg

https://t.co/jUulVFaWTp

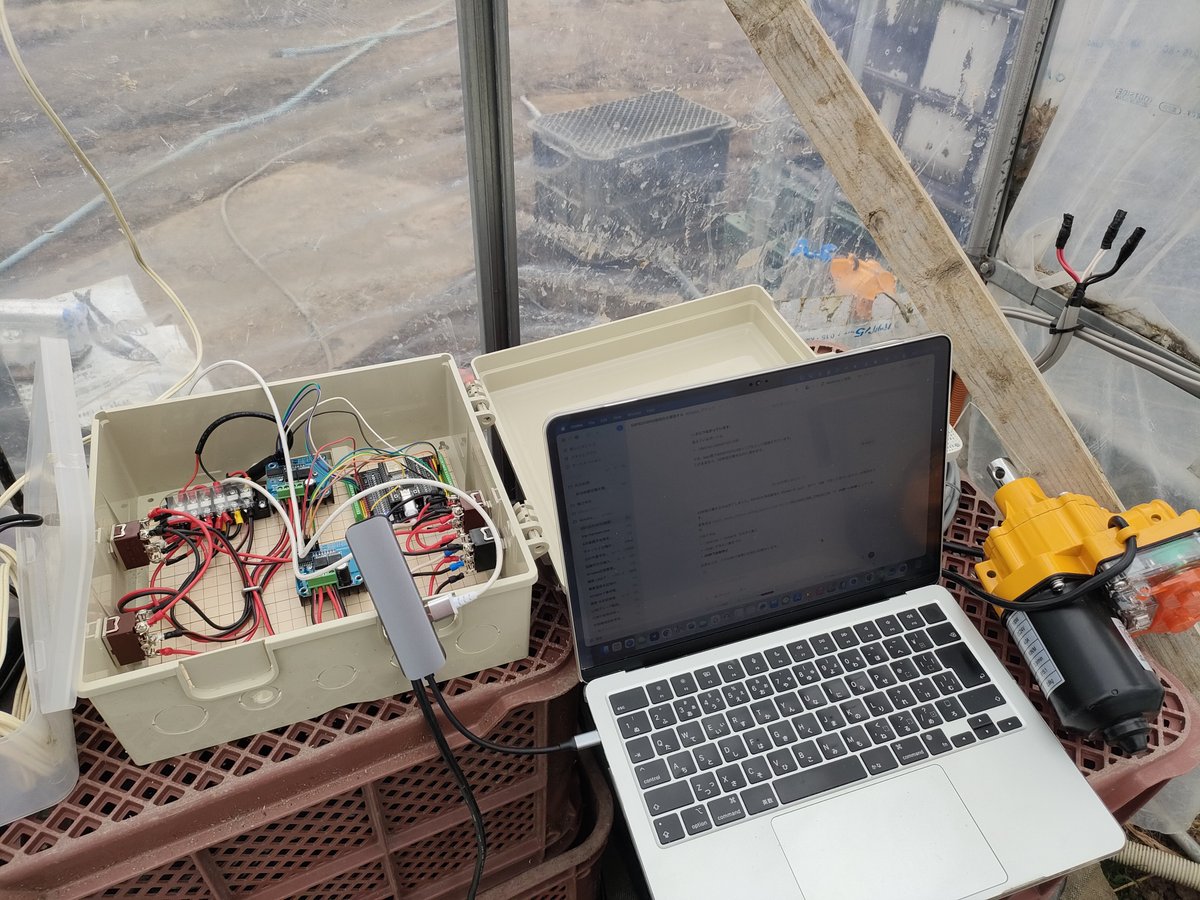

Today, we closed our latest funding round with $122 billion in committed capital at an $852B post-money valuation. The fastest way to expand AI’s benefits is to put useful intelligence in people’s hands early and let access compound globally. This funding gives us resources to

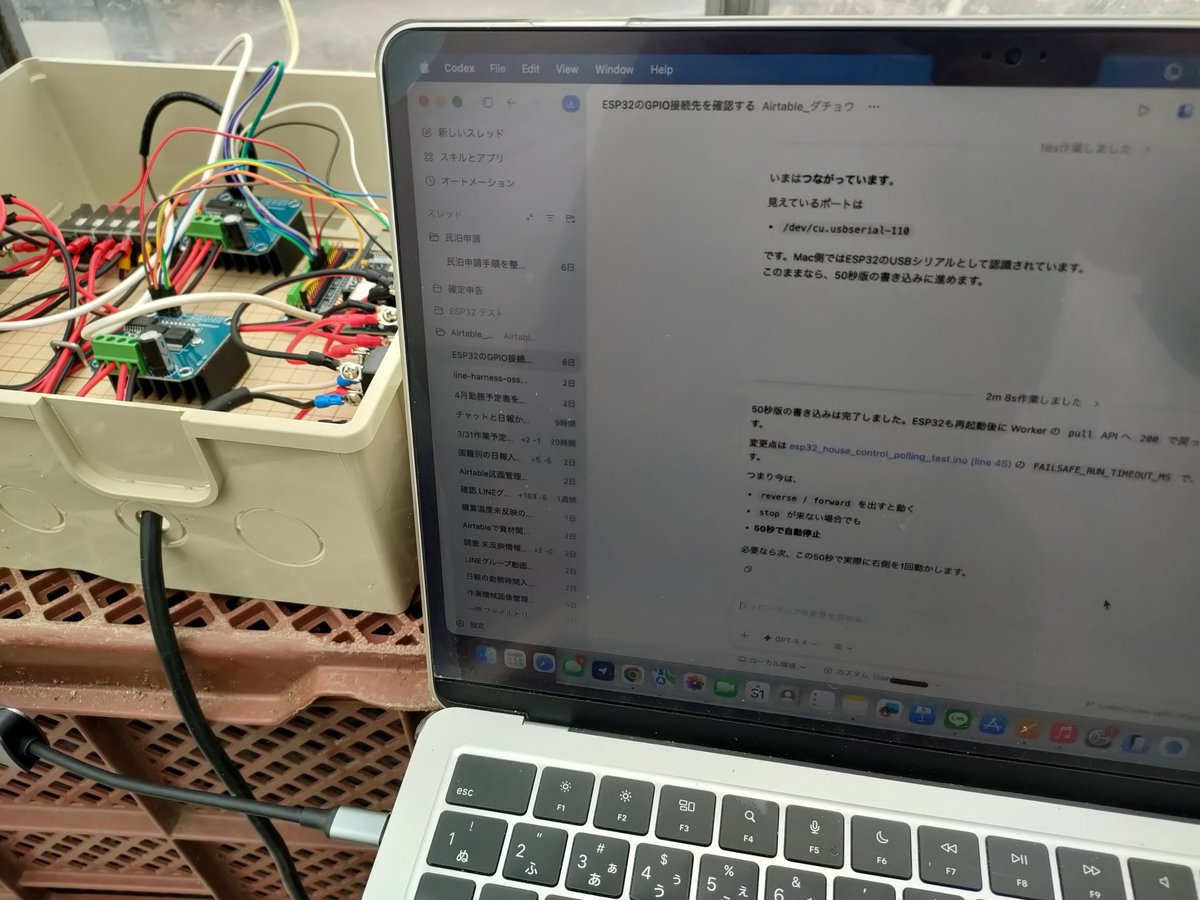

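I hired an engineer for my farm. Its name is Codex. It built me a system for remotely controlling my greenhouse ventilation from LINE. It helped not just with the code but also with things like the wiring design. I suspect farmers have all sorts of interesting uses for AI. https://t.co/ziZJY61u98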

reminder to self: we do this because we believe in what's being built 🇸🇬 📍cafe @cursor_ai singapore @agrimsingh @SherryYanJiang @fr4nnyp4ck @nickwm @benln https://t.co/MhG6dUurZc

Say hello to agentOS (beta) A portable open-source OS built just for agents. Powered by WASM & V8 isolates. 🔗 Embedded in your backend ⚡ ~6ms coldstarts, 32x cheaper than sbxs 📁 Mount anything as a file system (S3, SQLite, …) 🥧 Use Pi, Claude Code/Codex/Amp/OpenCode soon https://t.co/6iXVD3xEzS

Demo of 1-bit Bonsai 8B from @PrismML running on-device on iPhone 17 Pro More than 40tk/s for a dense 8B model on iPhone, that’s a first Powered by Apple MLX and available now in Locally AI https://t.co/qKoo4XrljL

NEWS: OpenAI just announced that it has officially closed its latest funding round with $122 billion in committed capital at a post-money valuation of $852 billion. "We are now generating $2B in revenue per month. At this stage, we are growing revenue four times faster than the companies who defined the Internet and mobile eras, including Alphabet and Meta. ChatGPT has more than 900 million weekly active users, and over 50 million subscribers. Search usage has nearly tripled in a year, and our ads pilot reached more than $100 million in ARR in under six weeks. Momentum is just as strong on the enterprise side, which now makes up more than 40% of our revenue, and is on track to reach parity with consumer by the end of 2026. GPT‑5.4 is driving record engagement across agentic workflows. Our APIs now process more than 15 billion tokens per minute. Codex now serves over 2 million weekly users, up 5x in the past three months, with usage growing more than 70% month over month."

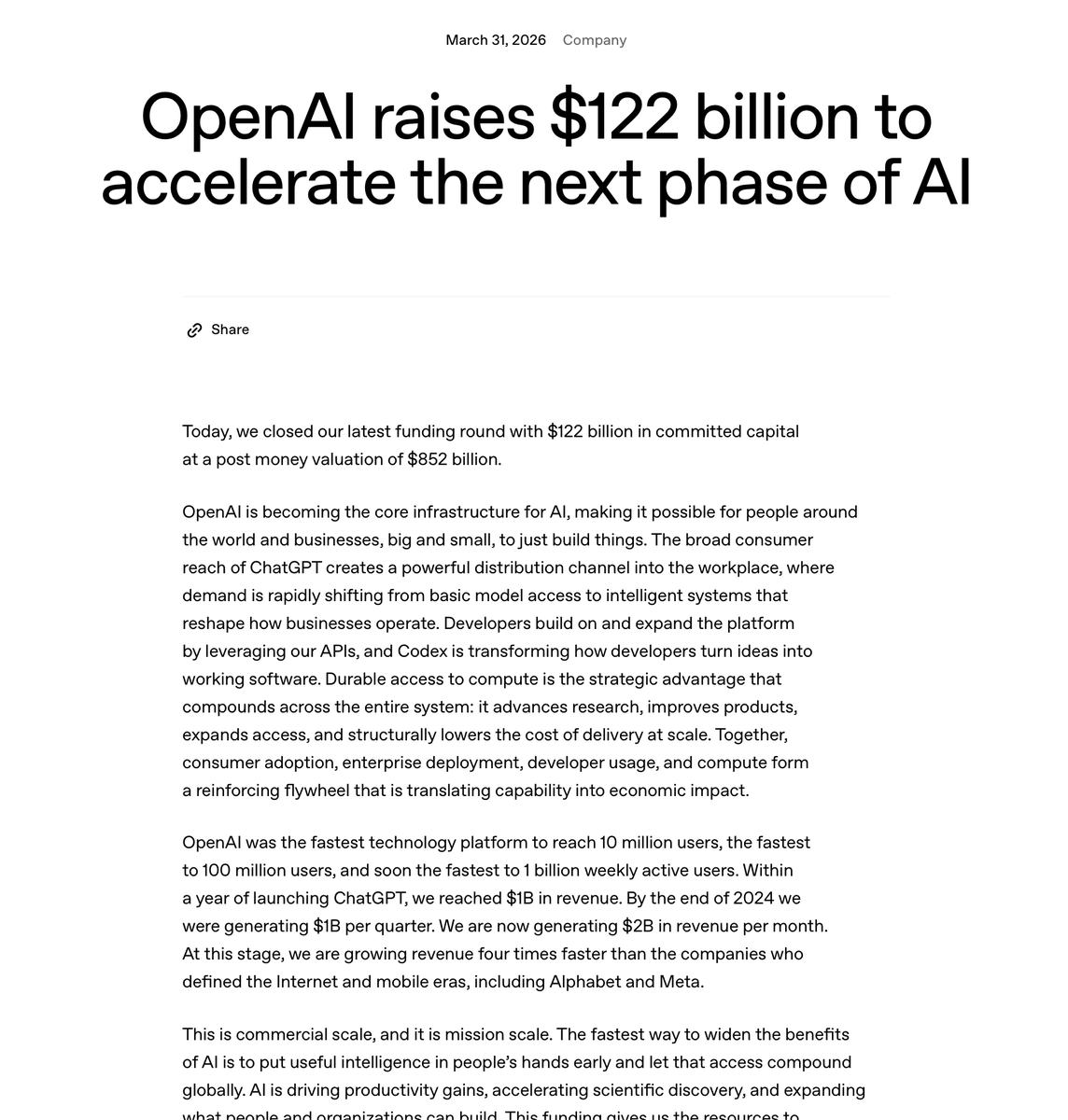

Holo3 is here 🚀. Today, we're launching Holo3: our new series of frontier computer-use models. 78.9% on OSWorld-Verified. That puts us ahead of GPT-5.4 and Opus 4.6, at one-tenth of the cost. Weights on Hugging Face. API is live. Test it now! #Holo3 #OpenSource #ComputerUse #OSWorld #AI #AgenticAI

Introducing FAUNA. The creative agent built for people whose ideas deserve better. Describe what you want to make. It builds the workflow on the canvas in front of you. Redirect it, push it further, tell it what to avoid. Your vision drives everything. https://t.co/VCjYmqDs04

Today, we're making Creator Studio available to everyone. Bring a prompt, a Figma file, or a URL and walk away with a polished video your team can actually use. No agency, no design queue and no five-figure budget. https://t.co/JrET6yXuIl

// Graph Augmented Associative Memory for Agents // Long-term memory for agents is still an unsolved problem. Flat RAG loses structural relationships, and knowledge graphs miss conversational associations. New research proposes combining both through a hierarchical approach. GAAMA is a graph-augmented associative memory that constructs a concept-mediated hierarchical knowledge graph through episode preservation, LLM-based fact extraction, and higher-order reflection synthesis. It uses four node types connected by five edge types, with retrieval combining semantic search and graph-traversal ranking. On the LoCoMo-10 benchmark, GAAMA achieves 78.9% mean reward, outperforming HippoRAG and tuned RAG baselines. Multi-session agents need memory that captures both facts and their relationships across conversations. GAAMA demonstrates that graph-augmented retrieval consistently beats semantic-only methods, and that higher-order reflections, not just raw fact storage, are key to reliable recall. Paper: https://t.co/b9mWe4sN8c Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
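
To make "retrieval combining semantic search and graph-traversal ranking" concrete, here is a minimal sketch of one way such a blend could work. It is not the paper's algorithm: it swaps in plain cosine similarity for the semantic step and a personalized-PageRank-style propagation for the graph step, and it ignores GAAMA's node and edge types entirely. The function names, the `alpha` and `damping` parameters, and the toy node names are all assumptions made for illustration.

```python
import math
from collections import defaultdict


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def hybrid_scores(query_vec, embeddings, edges, alpha=0.5, damping=0.85, iters=30):
    """Blend semantic similarity with a graph-propagated score for every node."""
    nodes = list(embeddings)

    # Step 1: semantic scores, normalized into a seed distribution.
    sem = {n: max(cosine(query_vec, embeddings[n]), 0.0) for n in nodes}
    total = sum(sem.values()) or 1.0
    seed = {n: sem[n] / total for n in nodes}

    # Step 2: undirected adjacency, ignoring edges to unknown nodes.
    nbrs = defaultdict(set)
    for u, v in edges:
        if u in embeddings and v in embeddings:
            nbrs[u].add(v)
            nbrs[v].add(u)

    # Step 3: personalized-PageRank-style power iteration from the seed.
    rank = dict(seed)
    for _ in range(iters):
        nxt = {n: (1.0 - damping) * seed[n] for n in nodes}
        for u in nodes:
            if nbrs[u]:
                share = damping * rank[u] / len(nbrs[u])
                for v in nbrs[u]:
                    nxt[v] += share
            else:
                # Isolated node: hand its mass back to the seed distribution.
                for n in nodes:
                    nxt[n] += damping * rank[u] * seed[n]
        rank = nxt

    # Step 4: weighted blend of the two signals.
    return {n: alpha * seed[n] + (1.0 - alpha) * rank[n] for n in nodes}


def retrieve(query_vec, embeddings, edges, k=3, **kwargs):
    """Return the k highest-scoring node ids."""
    scores = hybrid_scores(query_vec, embeddings, edges, **kwargs)
    return sorted(scores, key=scores.get, reverse=True)[:k]
```

The point of the blend is that a node with no semantic overlap with the query can still surface if it is linked to a node that matches, which a semantic-only ranker would miss. An unlinked node with the same embedding stays at zero.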

Apparently you get bullied and rejected from Anthropic for being insufficiently woke on risks of open source AI. Experience with Anth HR interview. 1point3acres, translated from Chinese. People were asking sometimes why I dislike Dario Amodei. I hope this clarifies matters. https://t.co/yudUoeIyfw

I doubt it's actually on par with 4.6, but I want to see Dario argue that. I want him to absolutely Karp out in an interview. Let him squirm in a chair, twitch, make irrelevant gestures, smirk, downplay it, then wail about export controls. Dario noises are music to my ears…

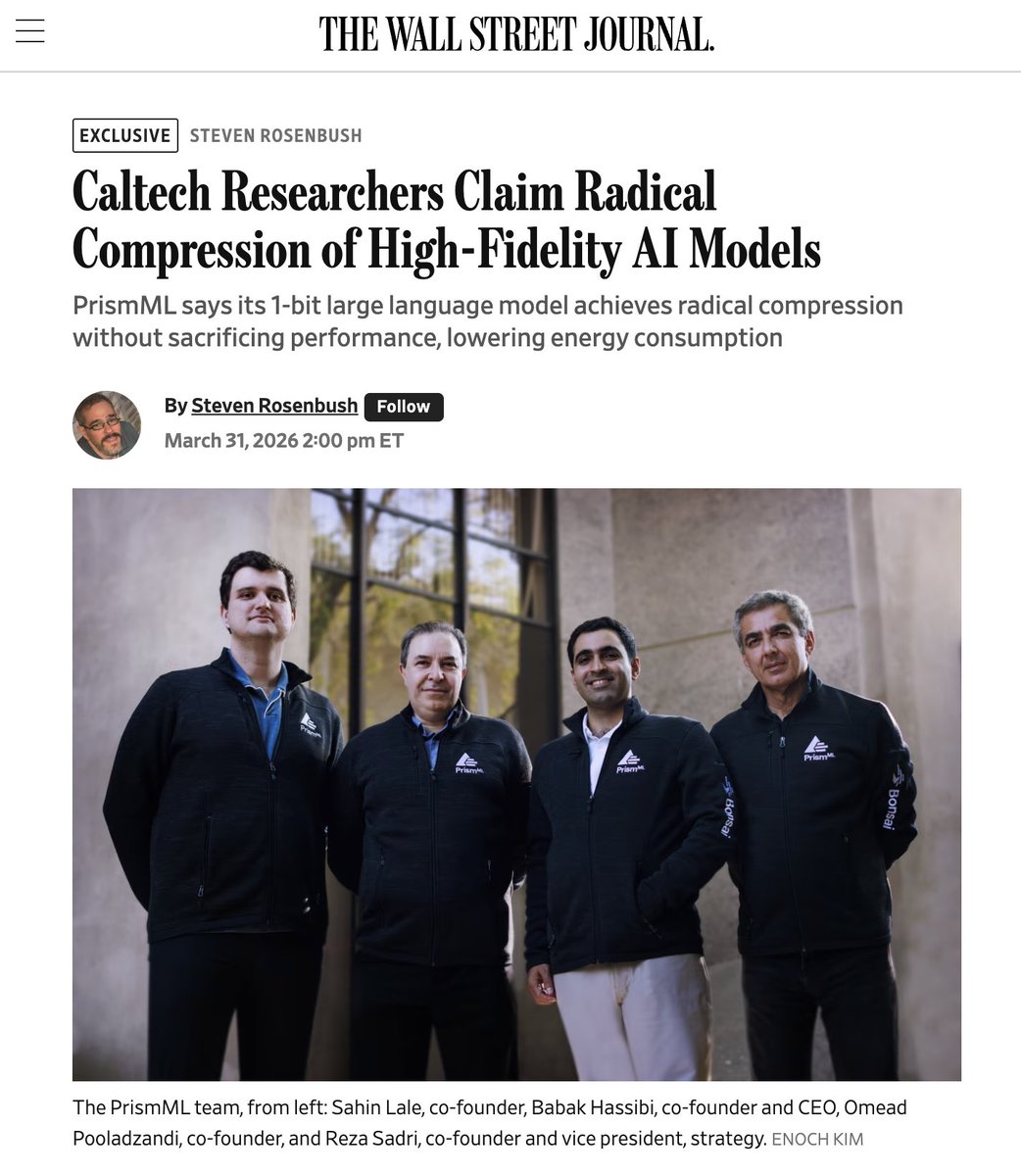

Today, we are emerging from stealth and launching PrismML, an AI lab with Caltech origins that is centered on building the most concentrated form of intelligence. At PrismML, we believe that the next major leaps in AI will be driven by order-of-magnitude improvements in intelligence density, not just sheer parameter count. Our first proof point is the 1-bit Bonsai 8B, a 1-bit weight model that fits into 1.15 GB of memory and delivers over 10x the intelligence density of its full-precision counterparts. It is 14x smaller, 8x faster, and 5x more energy efficient on edge hardware while remaining competitive with other models in its parameter class. We are open-sourcing the model under the Apache 2.0 license, along with Bonsai 4B and 1.7B models. When advanced models become small, fast, and efficient enough to run locally, the design space for AI changes immediately. We believe in a future of on-device agents, real-time robotics, offline intelligence and entirely new products that were previously impossible. We are excited to share our vision with you and keep working in the future to push the frontier of intelligence to the edge.
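
The size figures in this announcement can be sanity-checked with back-of-the-envelope arithmetic. The sketch below assumes "8B" means exactly 8 billion weights, that 1 GB is 10^9 bytes, and that the full-precision counterpart stores 16 bits per weight; none of these is stated in the post, so treat the result as a rough consistency check only.

```python
def model_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Weight storage in GB (1 GB = 1e9 bytes), ignoring any runtime overhead."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 8e9       # assumption: "8B" taken as exactly 8 billion weights
REPORTED_GB = 1.15   # figure quoted in the post

fp16_gb = model_size_gb(N_PARAMS, 16)     # 16-bit baseline (assumed)
one_bit_gb = model_size_gb(N_PARAMS, 1)   # pure 1-bit lower bound

# Effective bits per weight implied by the reported footprint.
effective_bits = REPORTED_GB * 1e9 * 8 / N_PARAMS

# Compression ratio relative to the assumed 16-bit baseline.
ratio = fp16_gb / REPORTED_GB

print(f"16-bit baseline: {fp16_gb:.2f} GB")
print(f"pure 1-bit:      {one_bit_gb:.2f} GB")
print(f"effective bits:  {effective_bits:.2f} per weight")
print(f"size ratio:      {ratio:.1f}x")
```

Under these assumptions the ratio comes out just under 14, which matches the "14x smaller" claim. The gap between the pure 1-bit bound and the reported 1.15 GB would have to come from something other than the 1-bit weights themselves; the post does not say what.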

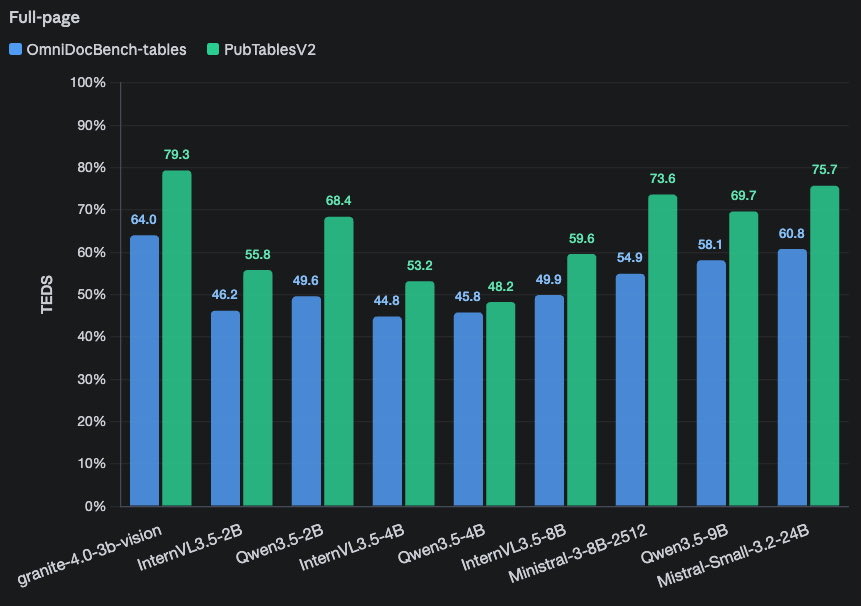

IBM just dropped Granite 4.0-3B-Vision, new vision language model for documents > sota for its size for table & charts 🙌🏼 > use with transformers & vLLM > free license https://t.co/3G86pWgQRm

You may know AI for its prompt-response interactions, but programmable execution is the new interface. 👀 With the GitHub Copilot SDK, you can enable agentic workflows directly inside your own applications. It comes down to these three patterns. 💡 ⬇️ https://t.co/I7tHoVHOO5

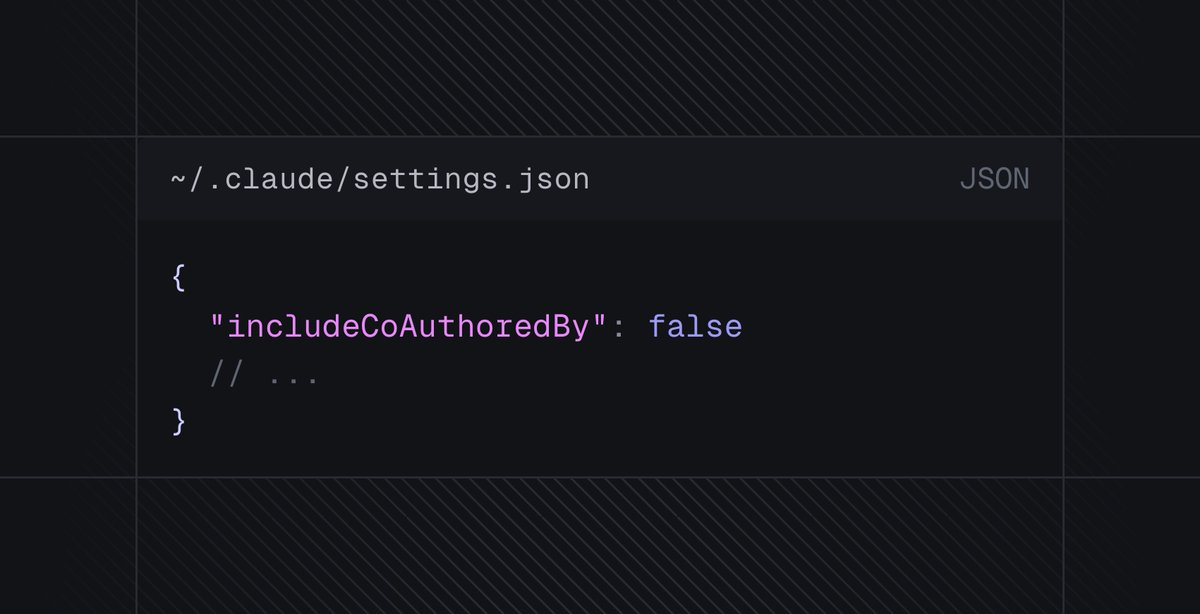

quick claude code tip you can stop claude from being listed as a co-author on commits by changing this line 👀 https://t.co/XBmxxhBm5j

ty @theo and @jxnlco !! https://t.co/nZQpdO1sDn

The anti-AI coalition continues to maneuver to find arguments to slow down AI progress. If someone has a sincere concern about a specific effect of AI, for instance that it may lead to human extinction, I respect their intellectual honesty, even if I deeply disagree with their position. However, I am concerned about organizations that are surveying the public to find whatever messages will turn people against AI, and how the public reacts as these messages are spread by lobbyists or by politicians seeking to alarm constituents, companies pursuing regulatory capture or seeking to promote the power of their technology, and individuals seeking to gain attention or to profit by being provocative.
A large study (link in original article below; h/t to the AI Panic blog) by a UK group tested different messages that are designed to raise alarm about AI. Their study found that saying AI will cause human extinction has largely failed. Doomsayers were pushing this argument a couple of years ago, and fortunately our community beat it back. But AI-enabled warfare and environmental concerns resonate better. We should be prepared for a flood of messages (which is already underway) arguing against AI on these grounds. Further, job loss and harm to children are messages that motivate people to act.
To be clear, I find AI-enabled warfare alarming; we need to continue serious efforts to monitor and mitigate the environmental impact of AI; any job losses are tragic and hurt individuals and families; and as a father, I hold dearly the importance of every child’s welfare. Each of these topics deserves serious attention and treatment with the greatest of care. But when anti-AI propagandists take a one-sided view of complex issues to benefit their own organizations at the expense of the public at large (for instance, when big AI companies argue that AI is dangerous to block the free distribution of open source projects that compete with their offerings), then we all lose. For example, public perception of data centers’ environmental impact is already far worse than the reality: data centers are incredibly efficient for the work they do, and hampering their buildout will hurt rather than help the environment. While job loss is a real problem, the “AI washing” of layoffs (in which businesses that had over-hired during the pandemic blame AI for recent layoffs, although AI hasn’t yet affected their operations) has led to overblown fears about the impact of AI on employment.
Unfortunately, this sort of propaganda easily leads to regulations that create worse outcomes for everyone. For example, oil companies worked for years to create fear of nuclear energy. The result is that overblown concerns about the safety of nuclear power plants have stifled nuclear power development, leading to millions of premature deaths from air pollution that was caused by other energy sources and a massive increase in CO2 emissions. Let’s make sure overblown concerns about AI do not lead to a similar fate for the many people who would benefit from faster AI development.
Last week, the White House proposed a national legislative framework for AI. A key component is a federal preemption framework to prevent a patchwork of state regulations that hamper AI development. I support this.
After failing to gain traction at the federal level, a lot of anti-AI propaganda has shifted to the state level. If just one of the 50 states passes a law that limits AI in an unproductive way, it could lead to stifling AI development across all the states and potentially across the globe. The White House proposal rightfully respects each state’s rights to control its own zoning, how it enforces general laws to protect consumers, and how it uses AI. But if a state were to pass laws that limit AI development, federal rules would preempt the state law.
The White House proposal remains a proposal for now. However, if the U.S. Congress enacts it, it will clear the way for ongoing efforts to develop AI in beneficial ways.
Where do we go from here? Let’s support limiting applications (those that use AI, and those that don’t) that harm people. When the anti-AI coalition argues against AI, in addition to considering the merits of the argument, I consider whether their position is consistent and persuasive, or if they are just promoting whatever concerns they think will sway the public at a given moment. And, let’s also keep using a scientific approach to weighing AI’s benefits against likely harms, so we don’t end up with overblown concerns that limit the benefits that AI can bring everyone.
[Original text with links: https://t.co/kfZY7mo0Mi ]

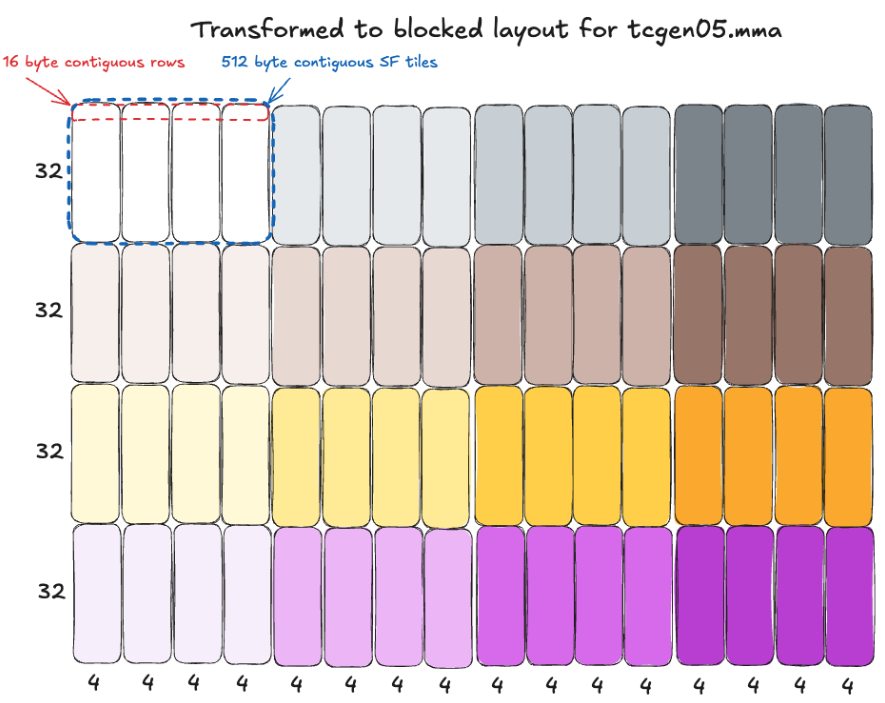

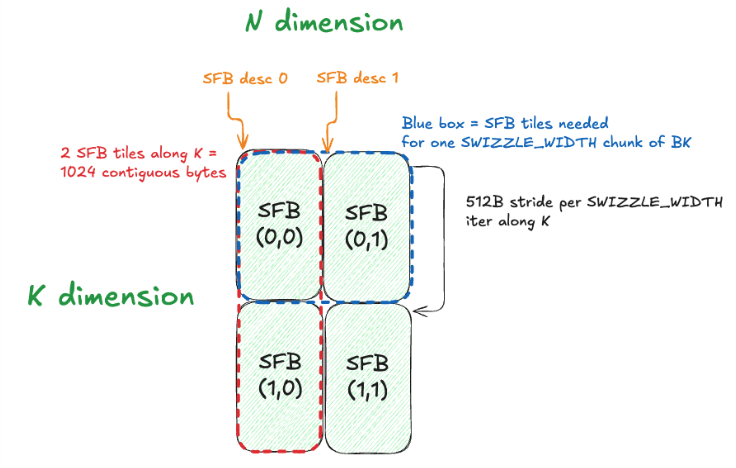

New blog post: "MXFP8 GEMM: Up to 99% of cuBLAS performance using CUDA + PTX": https://t.co/N08X1SgNN2 As someone who works on MXFP8 training, I was interested in deeply understanding GEMM design for this numerical format. In this post, we write a MXFP8 GEMM with CUDA + PTX, and iteratively optimize to reach cuBLAS-like performance (for some shapes!). It includes technical deep-dives into all the weird constraints and design challenges introduced by MXFP8. My brain is absolutely fried on CUDA+PTX now, so time to move onto other things (CuTeDSL?) - but in the meantime, time for me to go touch some grass

Meet the new Slack: Where AI works. The place for all your people All your agents And now... Slackbot, your ultimate teammate. https://t.co/H64JMKCaGW

A Tamagotchi for the AI era is definitely coming, isn't it? Anyone want to build one? https://t.co/xbJklI09rz

i made tetris but the board and pieces are attached to your body and it's quite tiring to play https://t.co/yEoA49igpX