@vega_myhre

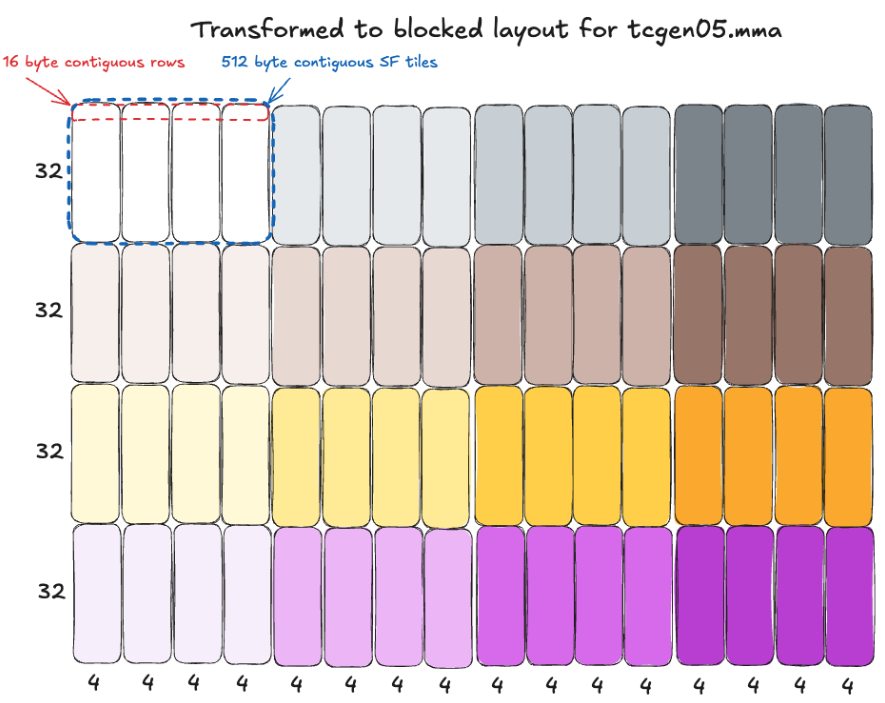

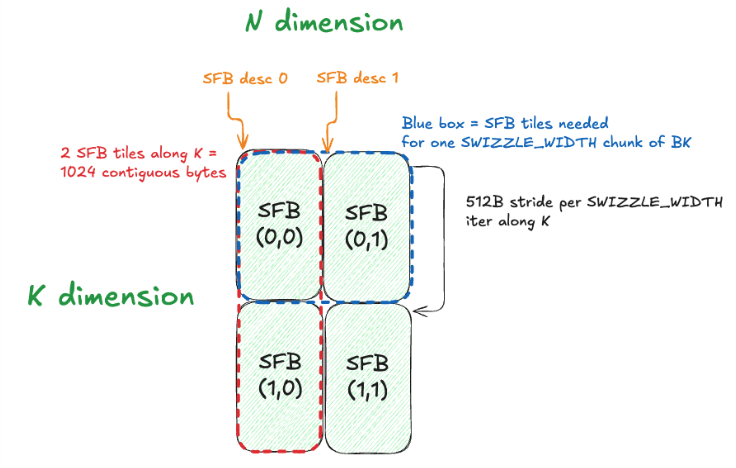

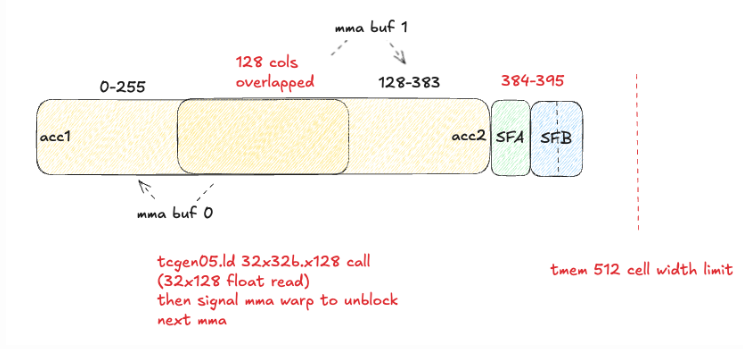

New blog post: "MXFP8 GEMM: Up to 99% of cuBLAS performance using CUDA + PTX": https://t.co/N08X1SgNN2 As someone who works on MXFP8 training, I wanted to deeply understand GEMM design for this numerical format. In this post, we write an MXFP8 GEMM with CUDA + PTX and iteratively optimize it to reach cuBLAS-like performance (for some shapes!). It includes technical deep-dives into all the weird constraints and design challenges introduced by MXFP8. My brain is absolutely fried on CUDA + PTX now, so it's time to move on to other things (CuTeDSL?). In the meantime, I'm going to go touch some grass.