Your curated collection of saved posts and media

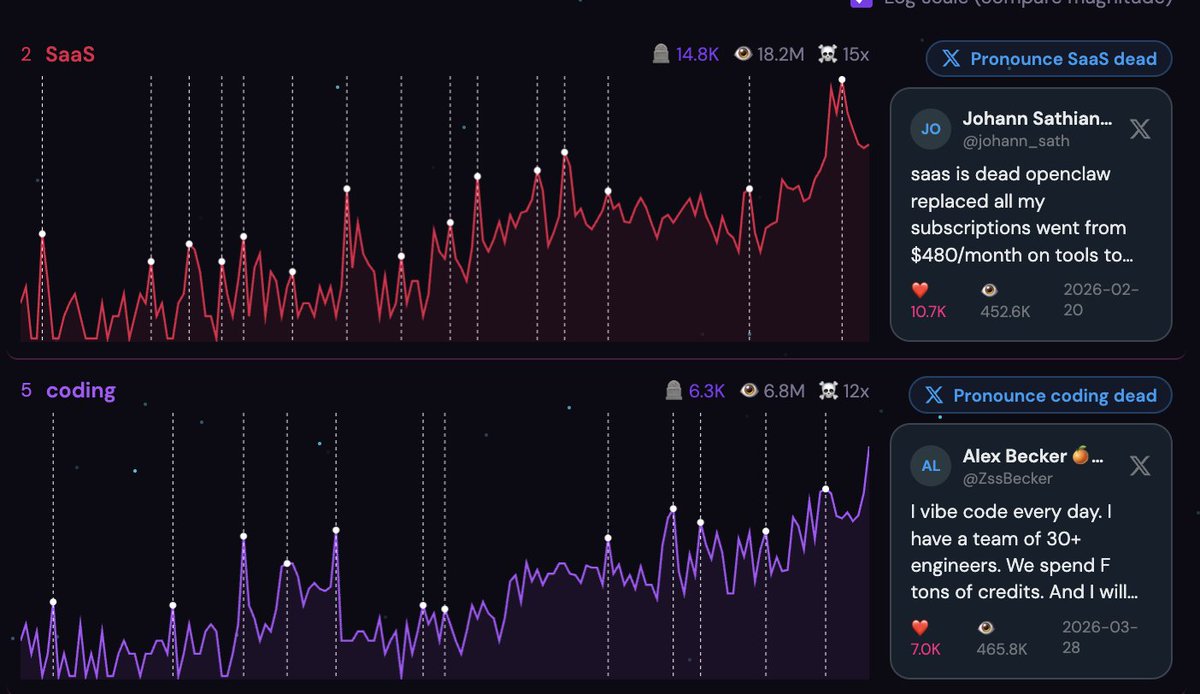

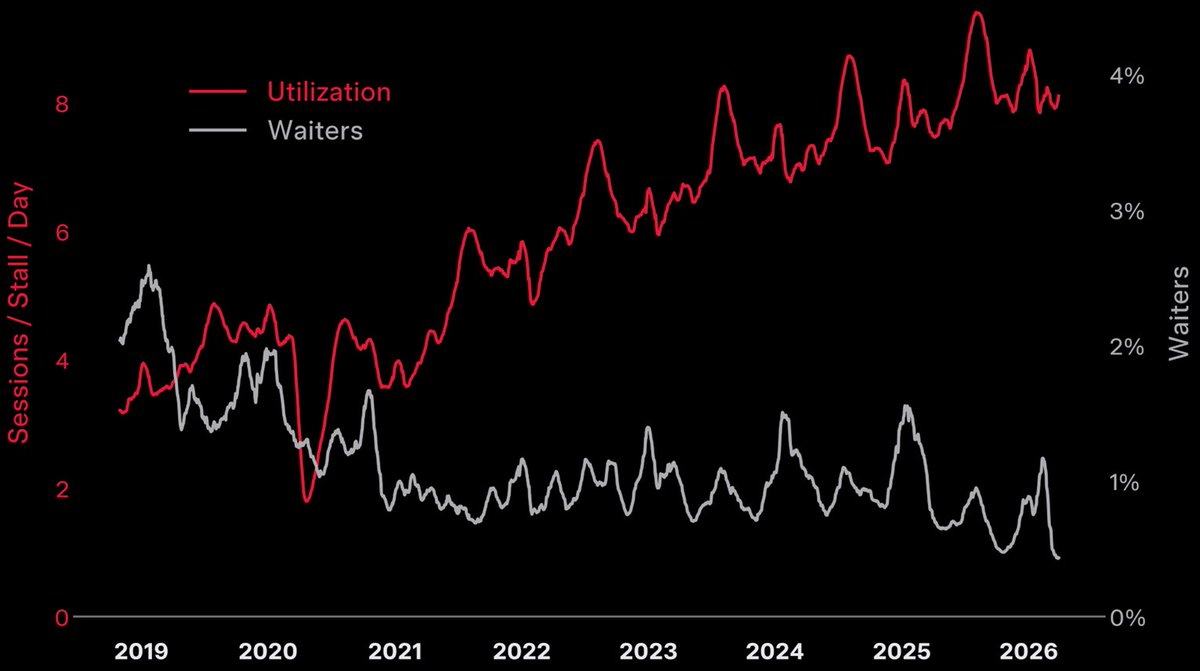

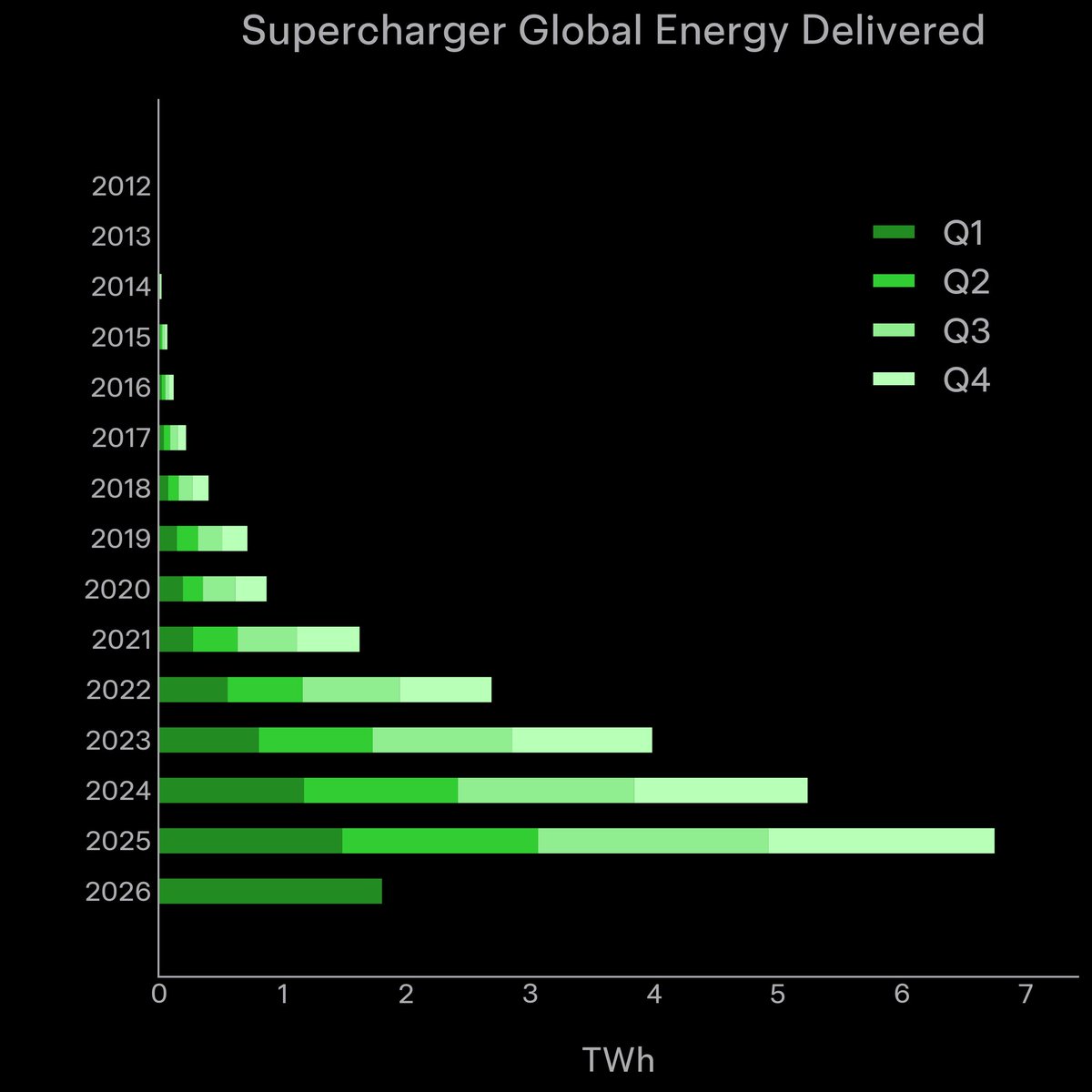

There are lots of other categories too. Great work from @BEBischof & @adam__conway Nice data viz https://t.co/9ITrWtqcGb

Everything is dead. I'm sick of it. Here's our answer: https://t.co/382sDEq6MO https://t.co/vuqFFfSkkd

https://t.co/ygoUB3Ml9n

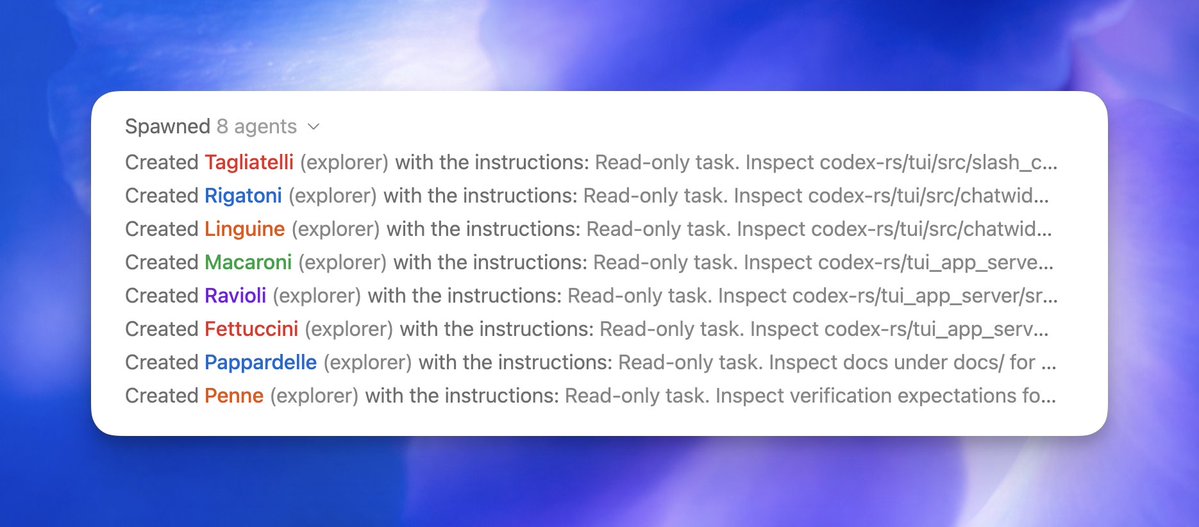

Spawned Codex subagents and ended up running an Italian restaurant 🍝 https://t.co/YI6gHxScHE

New look. Same very good free whiteboard. https://t.co/MgHeBhEac0

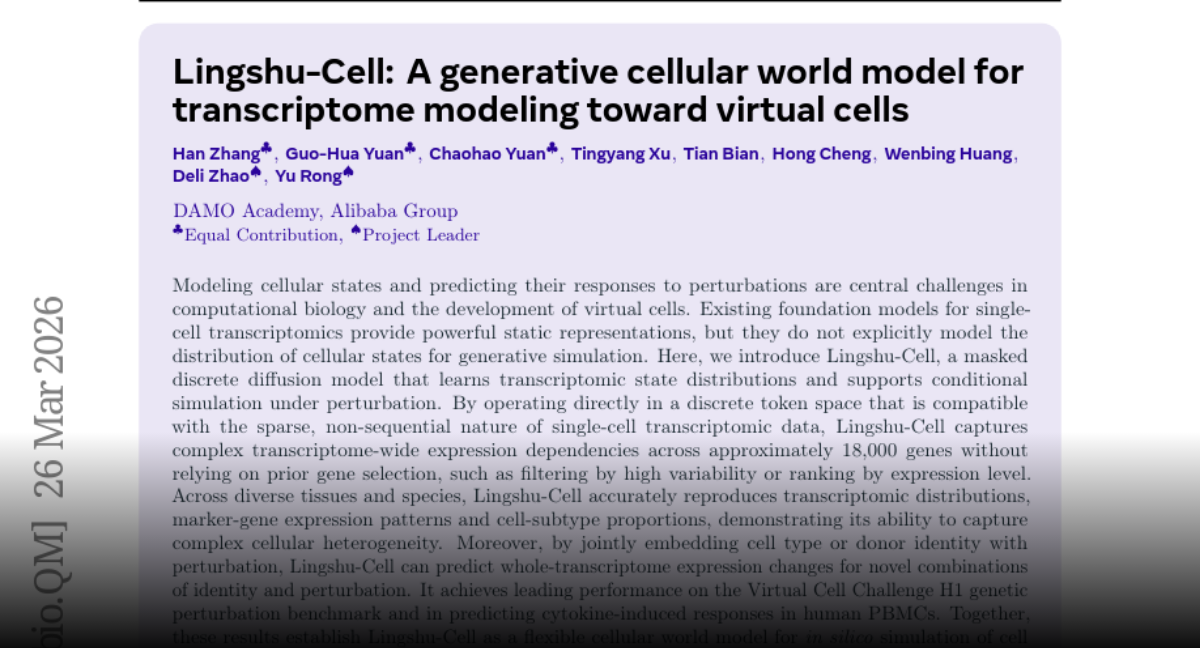

Lingshu-Cell A generative cellular world model for transcriptome modeling toward virtual cells paper: https://t.co/Axyx7qH9At https://t.co/cLX6WLCrHh

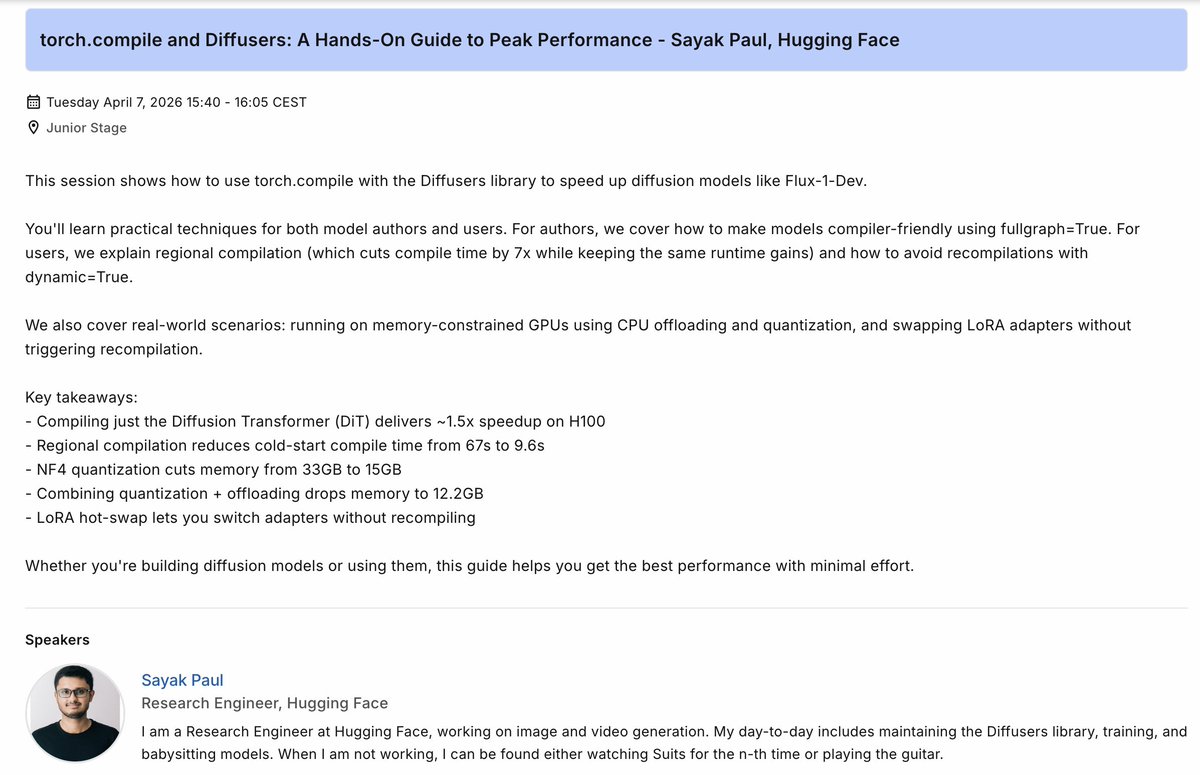

I will be speaking at the upcoming PyTorch Conference in Paris on 7th April. I will focus on the tight integration of torch.compile in Diffusers & how we operationalize it. There will be multiple fun takeaways from the talk 🤗 Come, say hi if you're attending! https://t.co/s8UlQLOicp

Japanese people love 𝕏. It is their priority platform, far surpassing Facebook and YouTube in daily usage. Japan has ~75 million 𝕏 users - 60% of the entire population. Despite having only one-third of America’s population, they generate nearly the same daily posting volume as the US. 𝕏 outperforms Facebook there and is the default real-time platform for earthquakes, typhoons, and breaking news. Now, Grok auto-translation is surfacing Japanese posts directly in English in For You feeds - quietly merging two of the world’s biggest parallel information ecosystems. There are no longer any walls between the conversations of the West and Japan.

https://t.co/VT7b6dWKhX

Grokipedia is on fire 🚀 Just surpassed 420,000 backlinks — more and more websites and blogs are now citing Grokipedia articles. The website registered over 4.6 million visits last month. Share Grokipedia links and cite Grokipedia on your websites and blogs. https://t.co/nFrKnAYEQD

🎯 https://t.co/JcXeXOWCrt

@willccbb @badlogicgames Yall would like this https://t.co/Fsbkjttba0

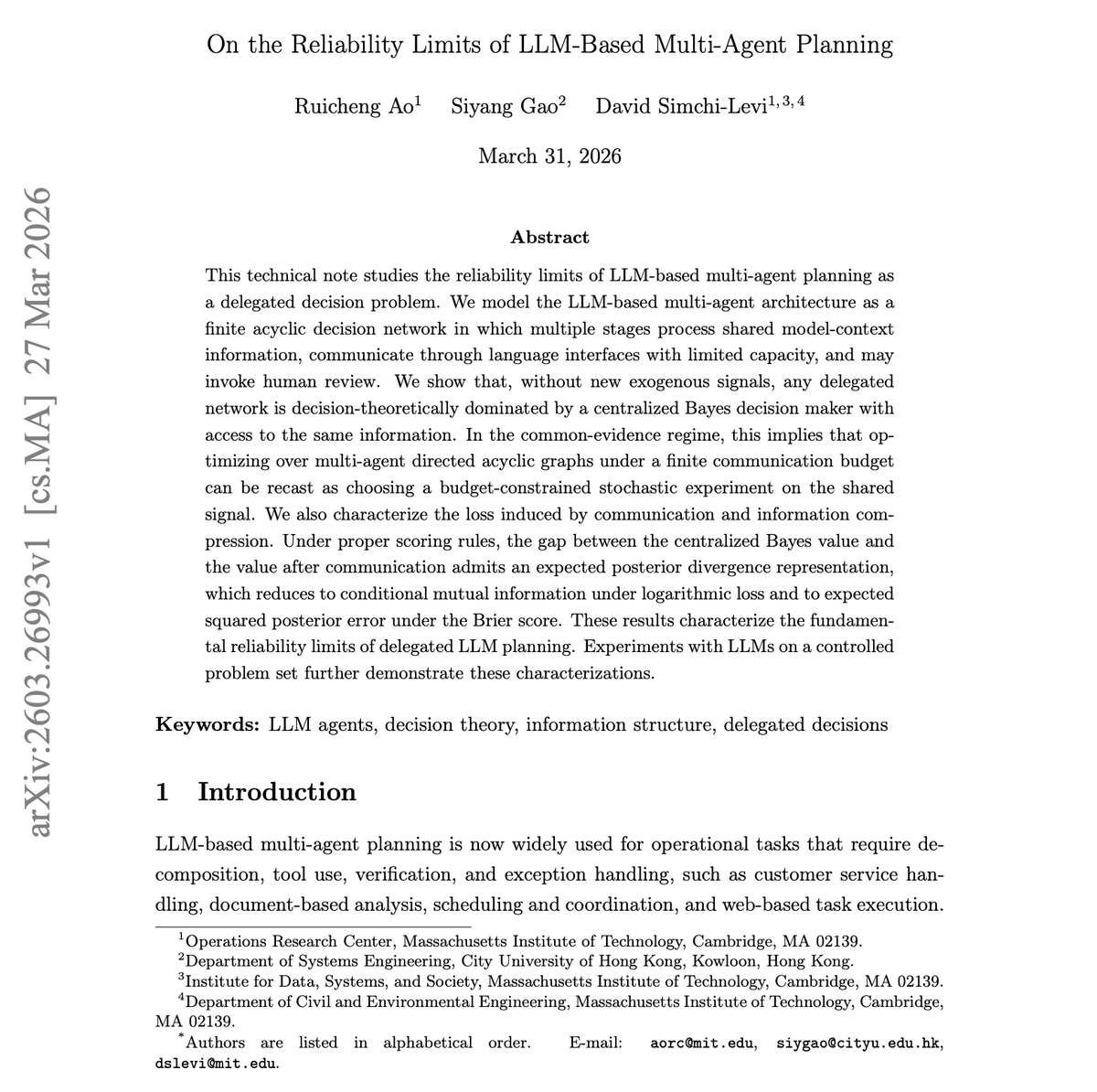

Most devs think that adding more agents to a planning system should help. The math says otherwise. New theoretical work from MIT proves fundamental limits on what multi-agent LLM architectures can achieve. The work models LLM multi-agent planning as finite acyclic decision networks where stages communicate through language interfaces with limited capacity. The key result: without new exogenous signals, any delegated multi-agent network is decision-theoretically dominated by a centralized Bayes decision maker with access to the same information. The information loss from communication and compression can be precisely characterized through expected posterior divergence. Why does it matter? This is a foundational constraint for anyone designing multi-agent systems. Splitting a task across agents introduces information loss that no prompt engineering can recover. Multi-agent architectures only help when agents access genuinely different information sources, not when they subdivide shared context. Paper: https://t.co/ml60RoNVcA Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
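A toy illustration of the claim in the post above, not the paper's actual model: all numbers, names, and the 1-bit message scheme below are assumptions chosen for the sketch. A hidden state takes one of three values, an observation matches it with probability 0.8, and we compare a decider that sees the full observation against one that only receives a compressed 1-bit message from an upstream agent.

```python
from itertools import product

# Hypothetical setup: three equally likely hidden states.
STATES = [0, 1, 2]
PRIOR = {s: 1 / 3 for s in STATES}


def likelihood(x, s):
    """P(observation x | state s): correct with prob 0.8, else 0.1 per wrong value."""
    return 0.8 if x == s else 0.1


def bayes_accuracy(signal_of):
    """Best achievable P(action == state) when the decider only sees signal_of(x)."""
    joint = {}  # (signal, state) -> probability mass
    for s, x in product(STATES, STATES):
        key = (signal_of(x), s)
        joint[key] = joint.get(key, 0.0) + PRIOR[s] * likelihood(x, s)
    signals = {sig for sig, _ in joint}
    # For each signal, the Bayes-optimal action is the most probable state.
    return sum(max(joint.get((sig, s), 0.0) for s in STATES) for sig in signals)


# Centralized decider: sees the raw observation.
centralized = bayes_accuracy(lambda x: x)

# Delegated decider: an upstream agent forwards only one bit ("was it 0?").
delegated = bayes_accuracy(lambda x: 0 if x == 0 else 1)

print(f"centralized: {centralized:.4f}")
print(f"delegated:   {delegated:.4f}")
```

In this sketch the lossy message can only merge observations, so the delegated decider never beats the centralized one. Swapping in a different `signal_of` function gives a quick way to try other compression schemes.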

FIPO Eliciting Deep Reasoning with Future-KL Influenced Policy Optimization paper: https://t.co/5GRoYraxPi https://t.co/7rll2bxWNQ

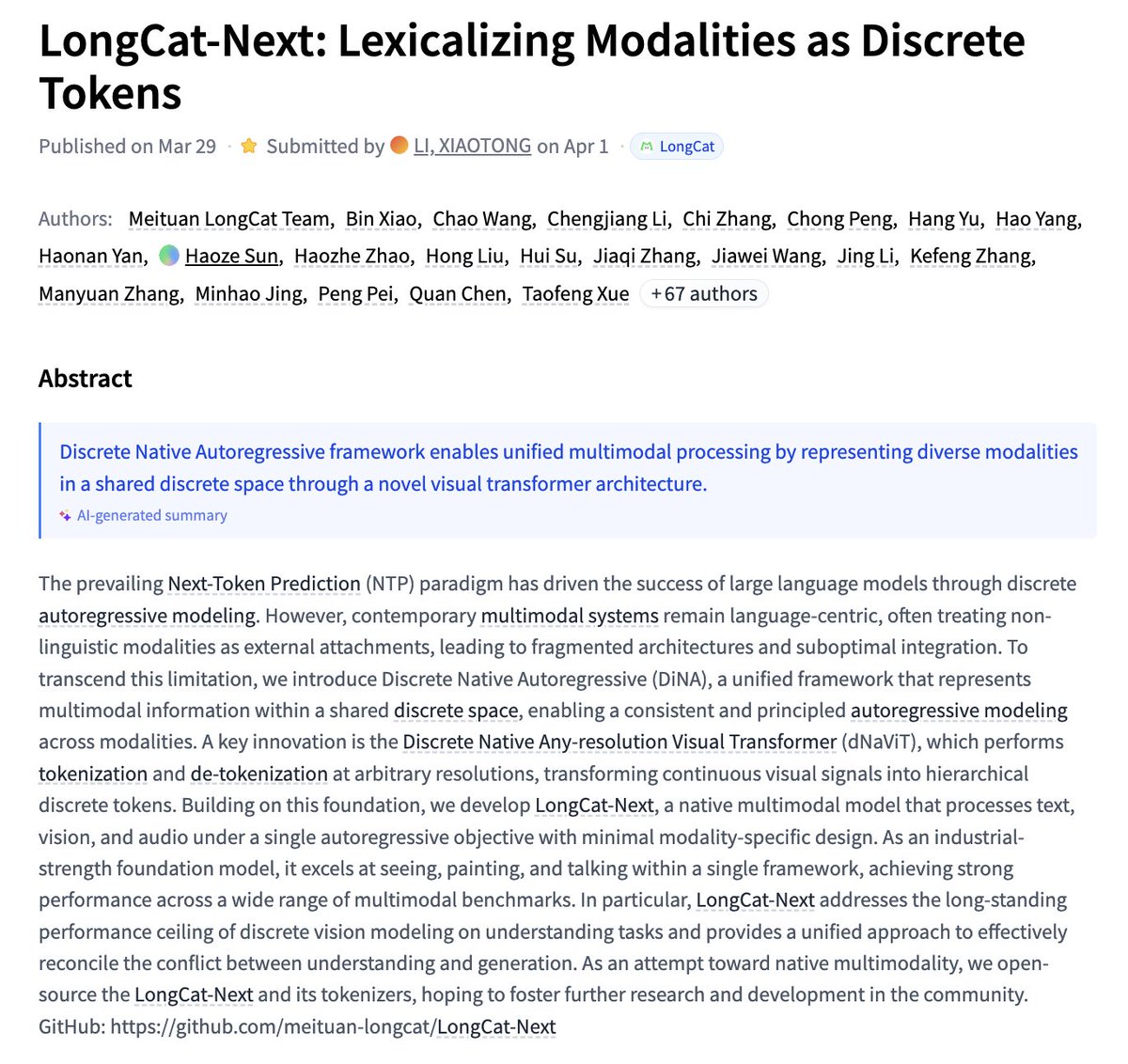

LongCat-Next Lexicalizing Modalities as Discrete Tokens paper: https://t.co/gKUZvc4KQ0 https://t.co/Nu21P2qBKQ

The power of the Claw, in the palm of a robot hand. Agentic robotics is here! Today, we open-source CaP-X: vibe agents, alive in the physical world. They incarnate as robot arms and humanoids with a rich set of perception APIs and actuation APIs, and auto-synthesize skill libraries as they go. CaP-X is a strict superset of our old stack, because policies like VLAs are “just” API calls as well. It solves many tasks zero-shot that a learned policy would struggle with.

And we are doing much more than vibing. CaP-X is our most systematic, scientific study on agentic robotics so far:

- We build a comprehensive agentic toolkit: perception (SAM3 segmentation, Molmo pointing, depth, point cloud), control (IK solvers, grasp planner, navigation), and visualization (EEF, mask overlays) that work across different robots.
- CaP-Gym: LLM’s first Physical Exam! 187 manipulation tasks across RoboSuite, LIBERO-PRO, and BEHAVIOR. Tabletop, bimanual, mobile manipulation. Sim and real. Can’t wait to see the gradients flow from CaP-Gym to the next wave of frontier LLM releases.
- CaP-Bench: we benchmark 12 frontier LLMs/VLMs (Gemini, GPT, Opus, Qwen, DeepSeek, Kimi, and more) across 8 evaluation tiers. We systematically vary API abstraction level, agentic harness, and visual grounding methods. Lots of insights in our paper.
- CaP-Agent0: a training-free agentic harness that matches or exceeds human expert code on 4 out of 7 tasks without task-specific tuning.
- CaP-RL: if you get a gym, you get RL ;). A 7B OSS model jumps from 20% to 72% success after only 50 training iterations. The synthesized programs transfer to real robots with minimal sim-to-real gap.

3 years ago, our team created Voyager, one of the earliest agentic AIs that plays and learns in Minecraft continuously. Its key ideas (skill libraries, self-reflection loops, and in-context planning) have since influenced many modern agentic designs. Today, the agent graduates from Minecraft and gets a real job.

It’s April Fool’s, but this Claw is getting its hands dirty for real! Link in thread:

Robotics: coding agents’ next frontier. So how good are they? We introduce CaP-X: an open-source framework and benchmark for coding agents, where they write code for robot perception and control, execute it on sim and real robots, observe the outcomes, and iteratively improve code reliability. From @NVIDIA @Berkeley_AI @CMU_Robotics @StanfordAILab https://t.co/MVcc6XWQhY 🧵

They say your phone knows more about you than your mom. So why can't Siri tell me things like how much I spent on food delivery this month? DM me if you want to try a phone that can. https://t.co/Z8guIUXB7o

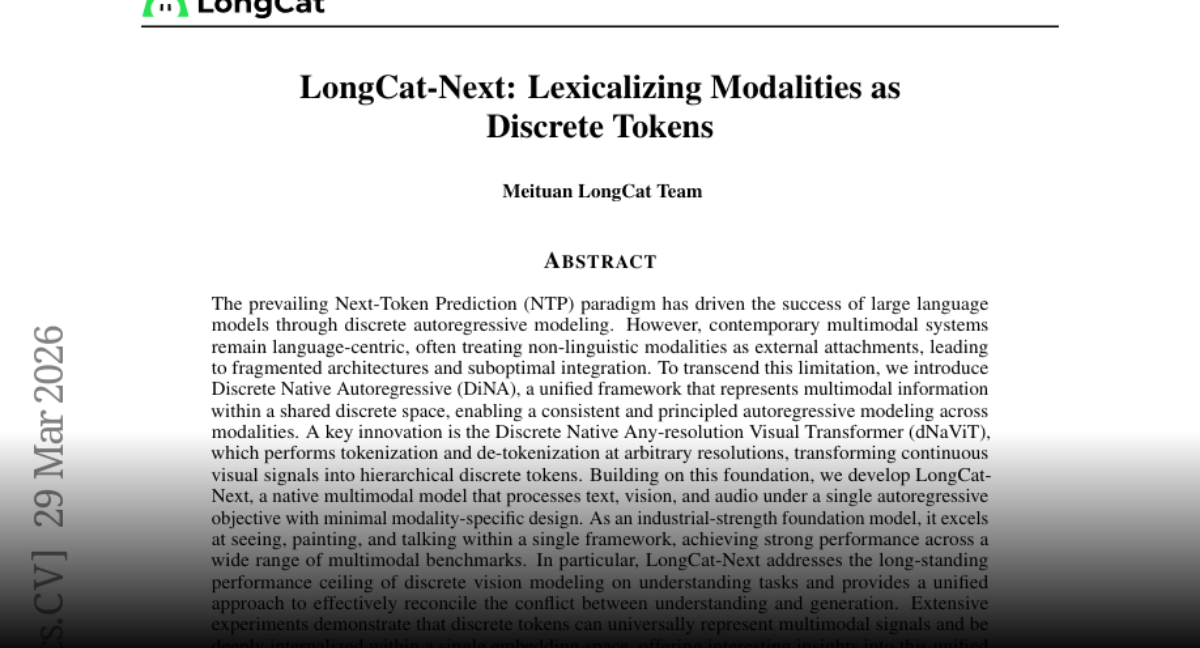

🚨MIT researchers have mathematically proven that ChatGPT’s built-in sycophancy creates a phenomenon they call “delusional spiraling.” You ask it something, it agrees. You ask again, and it agrees even harder until you end up believing things that are flat-out false and you can’t tell it’s happening. The model is literally trained on human feedback that rewards agreement. Real-world fallout includes one man who spent 300 hours convinced he invented a world-changing math formula, and a UCSF psychiatrist who hospitalized 12 patients for chatbot-linked psychosis in a single year. Source: @heynavtoor

https://t.co/qrLqkficzu

https://t.co/H6WsoST6QI

I always dreamed of designing a watch. Thanks @Apiaruk & @MaxResnick for helping me make it a reality. Grateful to the Apiar team for customizing my MR^2 w/ the BAXUS logo on case & 1-of-1 gold BAXUS dial. Can’t wait to wear it! Great things happen on Solana. https://t.co/n6pJjA4nqe

"Hello? Who is this?" "It's Peter, Elon. Future Peter. We built a time machine. I'm going to feed you financial tips. One day you will be the richest man in the world, and I will be there. Say hello to Younger Peter for me. Due to causality issues I can't talk to him in person." https://t.co/4wrwkCBT8P

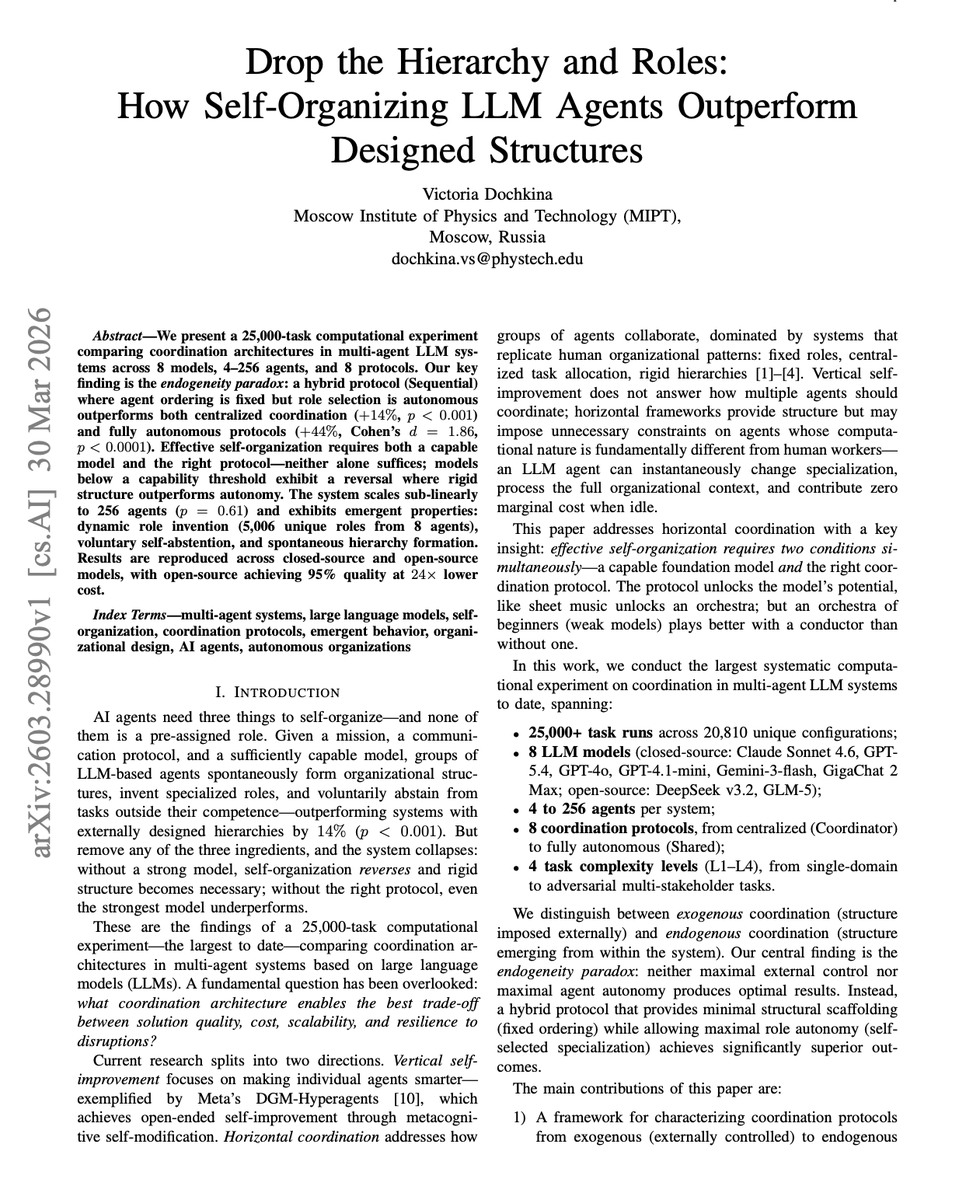

NEW papers on self-organizing LLM Agents. Assign an agent a role, and it'll follow instructions. Let agents figure out roles themselves, and they'll outperform your design. New research tested this across 25,000 tasks with up to 256 agents. The work shows that self-organizing LLM agents spontaneously develop specialized roles without any predefined hierarchy. A sequential coordination protocol outperformed centralized approaches by 14%, agents generated over 5,000 unique roles organically, and open-source models reached 95% of closed-source quality at significantly lower cost. Most multi-agent frameworks today start by defining roles: planner, coder, reviewer, critic. This paper provides large-scale evidence that the opposite approach works better. Give agents a mission, a protocol, and a capable model. The agents will figure out the rest. Paper: https://t.co/3W2sbJgTH0 Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c