Your curated collection of saved posts and media

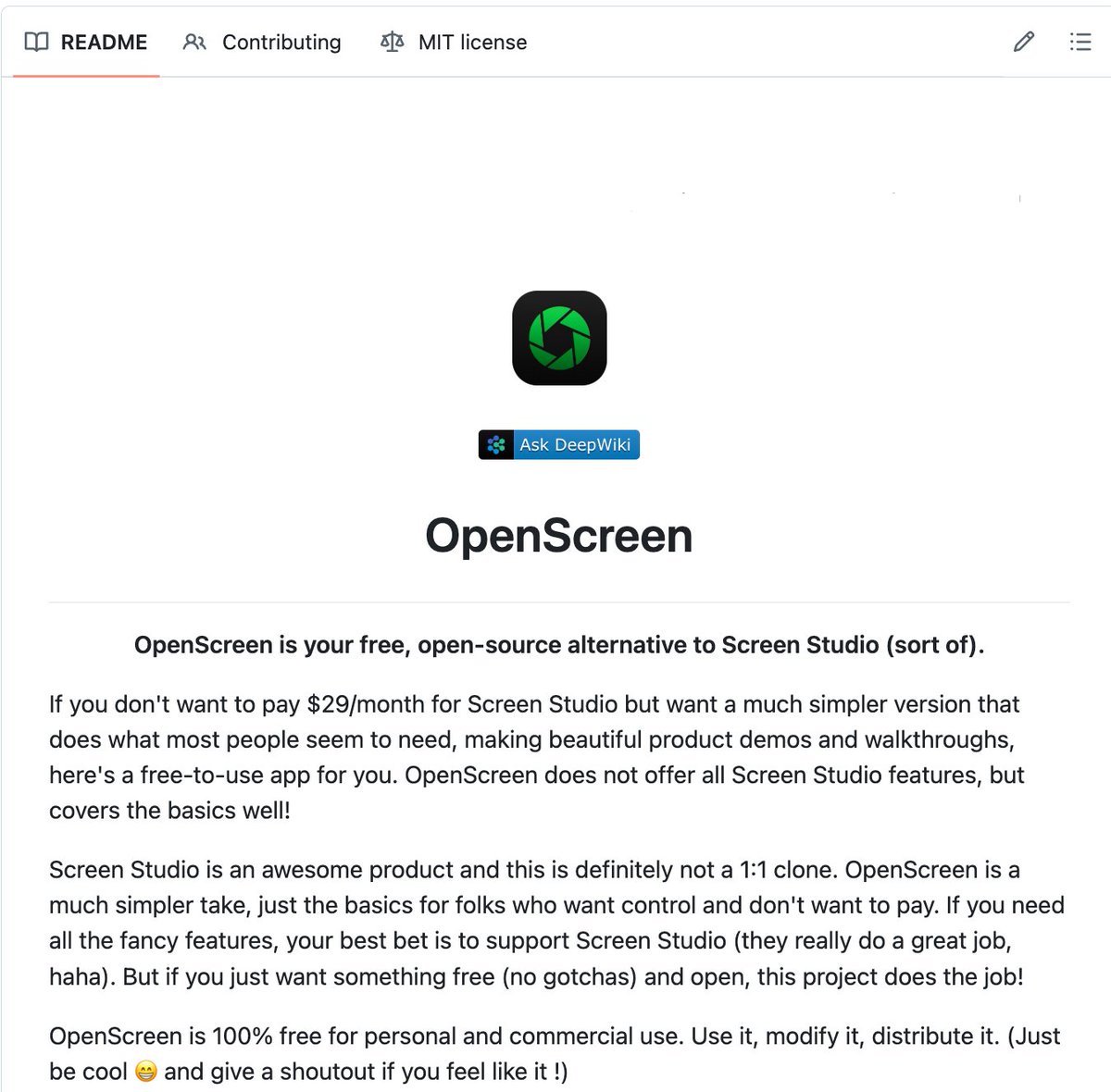

🚨 Screen Studio charges $89 for this. Someone open sourced the entire thing for free. It's called OpenScreen. 8,400+ GitHub stars. You record your screen. It automatically transforms it into a polished, professional demo video. Auto-zoom into clicks. Smooth cursor animations. Motion blur. Custom backgrounds with wallpapers, gradients, and shadows. Webcam overlays. Annotations. Timeline editing. Export in any aspect ratio. The exact workflow that Screen Studio sells for $89 and Loom sells as a subscription. Free. No watermarks. No accounts. No subscriptions. Here's what you get out of the box: → Full screen or window capture with system audio and mic → Automatic zoom that follows your cursor and clicks → Manual zoom with customizable depth and timing → Smooth motion blur on pan and zoom transitions → Animated cursor rendering with motion effects → Webcam bubble overlay with drag-and-drop positioning → Wallpapers, solid colors, gradients, or custom backgrounds → Text and arrow annotations layered over recordings → Timeline trimming and variable speed segments → Crop, resize, and export in any resolution or aspect ratio → Save and reopen projects anytime Here's the wildest part: A developer forked it and built an even more advanced version called Recordly. Full cursor animation pipeline. Native macOS and Windows recording. Zoom behavior that mirrors Screen Studio frame-for-frame. Audio tracks. Webcam overlays with zoom-reactive scaling. Both are free. Both are MIT licensed. Both work on Windows, macOS, and Linux. Download. Record. Export. Done. 100% Open Source. MIT License. (Link in the comments)

Building AI agents without technical skills is now a reality. Marc Revert, founder of @OrgaAI, shares how agentic AI is accessible to everyone. Move from chatbots to AI that autonomously executes actions. Automate workflows, manage calendars, and accelerate research. Redefine how you work.

Introducing GLM-5V-Turbo: Vision Coding Model - Native Multimodal Coding: Natively understands multimodal inputs including images, videos, design drafts, and document layouts. - Balanced Visual and Programming Capabilities: Achieves leading performance across core benchmarks for multimodal coding, tool use, and GUI Agents. - Deep Adaptation for Claude Code and Claw Scenarios: Works in deep synergy with Agents like Claude Code and OpenClaw. Try it now: https://t.co/WCqWT0qCQb API: https://t.co/xDy1O6ZPcz Coding Plan trial applications: https://t.co/qCM6cri0KK

@CPMou2022 @CNBC @carlquintanilla So are you saying there has been no decline in demand for your shares on the secondary market, contra the caption below? https://t.co/8ZoD8LOoZA

犬に見えるか、 猫に見えるかでキッパリ割れる芋 https://t.co/zL7VtjPidO

@GaryMarcus @CNBC “.. Brad, if you want to sell your shares, I'll find you a buyer.” - Nov 2025 https://t.co/3m1r3zFWvC

AI is starting to blur the line between authorship and authenticity in publishing. A horror novel was pulled after concerns it may have been generated with AI, showing how sensitive the industry is becoming around originality and disclosure. It is no longer just about what is written, but how it is created. The bigger question is emerging. In a world of AI-assisted writing, what defines a real author? https://t.co/rCPpkOTcvr @ConversationUS @ConversationUK

EXCLUSIVE: Multiple senior HHS officials estimate that, under Gavin Newsom, California's state Medicaid program has lost 25 percent of its budget to fraud. This would mean it is currently losing $50 billion a year to scammers, fraudsters, and organized crime rings. https://t.co/i442jU4XkE

While the world panics about war and ‘what will happen to Dubai?’, let’s be real: Dubai isn’t waiting for humans. They already built the future. Robot City project? Launched in 2025, $1B budget, Boston Dynamics robots pouring concrete, Tesla Optimus cleaning hotels, Chinese humanoids serving coffee. People fleeing? Good. Let them go. Dubai doesn’t need sweat – it needs code. No strikes, no visas, no burnout. Just efficiency. We keep talking ‘human jobs’, but Dubai says: ‘We don’t need humans, we need progress.’ And honestly? They’re right. The future isn’t coming – it’s already there, wired and walking. What do you think – will we all end up like Dubai’s bots? Or will we fight to stay relevant? #Dubai #Robotics #FutureOfWork #AI #TechVision

Futurism article: many people now let AI overrule their own judgment, even when the AI is wrong. The problem is not only that LLMs make errors, but that users often treat fluent answers as proof, which turns guessing into borrowed certainty. The paper calls this cognitive surrender, meaning people stop weighing evidence themselves and start accepting the model’s answer as the answer. In one experiment, people followed correct AI advice 92.7% of the time, but still followed wrong advice 79.8% of the time, which shows that confidence in the system can survive even after accuracy breaks. Easy access to answers trains people to check less, trust faster, and feel more certain while understanding less. --- futurism. com/artificial-intelligence/study-do-what-chatgpt-tells-us

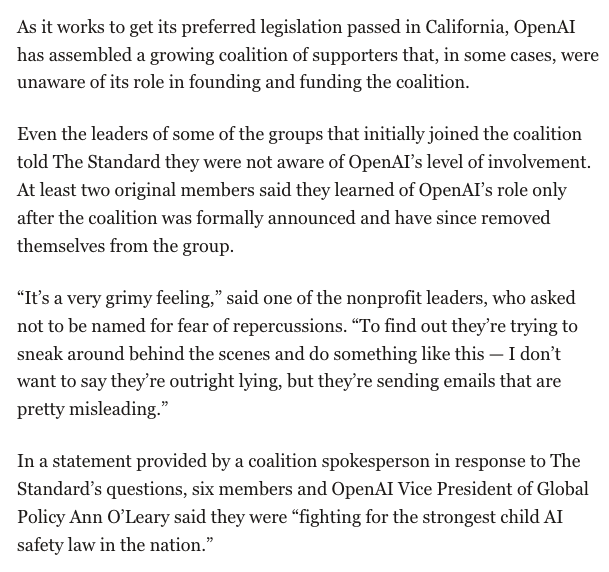

This is very shady behavior from OpenAI (and the lack of acknowledgement in O'Leary's statement is a bad sign). Great piece from @eshugerman: https://t.co/5DiVX374Pu https://t.co/XjXjZpzHqV

CARLA-Air unifies CARLA and AirSim in one Unreal Engine process Fly drones inside photorealistic cities with synchronized air-ground sensors and zero bridging latency. Supports 18 sensor modalities, ROS2, and seamless RL training for embodied AI. https://t.co/wSIk1mE7qW

Come to #PytorchCon in Paris for the amazing open source AI keynotes and stay for the parties: Tuesday April 7: Flare Party 17:05 - 18:30: https://t.co/DLdqhG85Vi Then join the Open Source AI Soirée from 18:30-21:00: https://t.co/BydC8x4hn3 (**RSVP REQUIRED**)

NEW paper from Google DeepMind The biggest threat to AI agents isn't a smarter attacker. It's the web itself. This work introduces the first systematic framework for understanding how the open web can be weaponized against autonomous agents. The paper defines "AI Agent Traps": adversarial content embedded in web pages and digital resources, engineered specifically to exploit visiting agents. The taxonomy covers six attack classes targeting different parts of the agent architecture like perception (hidden instructions in HTML/CSS) and memory (RAG poisoning and latent memory corruption). The attack surface is no longer just the model. It is every web page, every retrieved document, every piece of content the agent ingests at inference time. Hidden prompt injections in HTML already partially commandeer agents in up to 86% of scenarios, and latent memory poisoning achieves 80%+ attack success with less than 0.1% data contamination. This paper maps where the defenses are weakest and where the research community needs to focus next. Paper: https://t.co/PK7hCYXjuF Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

Last little swing https://t.co/PQTiATbWT3

Believe it or not, Elon has actually known that the Sun doesn’t shine at night since at least 2016, possibly even earlier. https://t.co/STaPOmSyii

#PicoClaw support native #Android APK now! Wake your old TV box, and upgrade it to your Home AI Brain~ Check it out: https://t.co/7Ci1usQVP0 https://t.co/dl4lPj6LFj

3D-printed joystick transforms your keyboard into a full arcade controller https://t.co/Q6jDE78XTg

3D-printed joystick transforms your keyboard into a full arcade controller https://t.co/Q6jDE78XTg

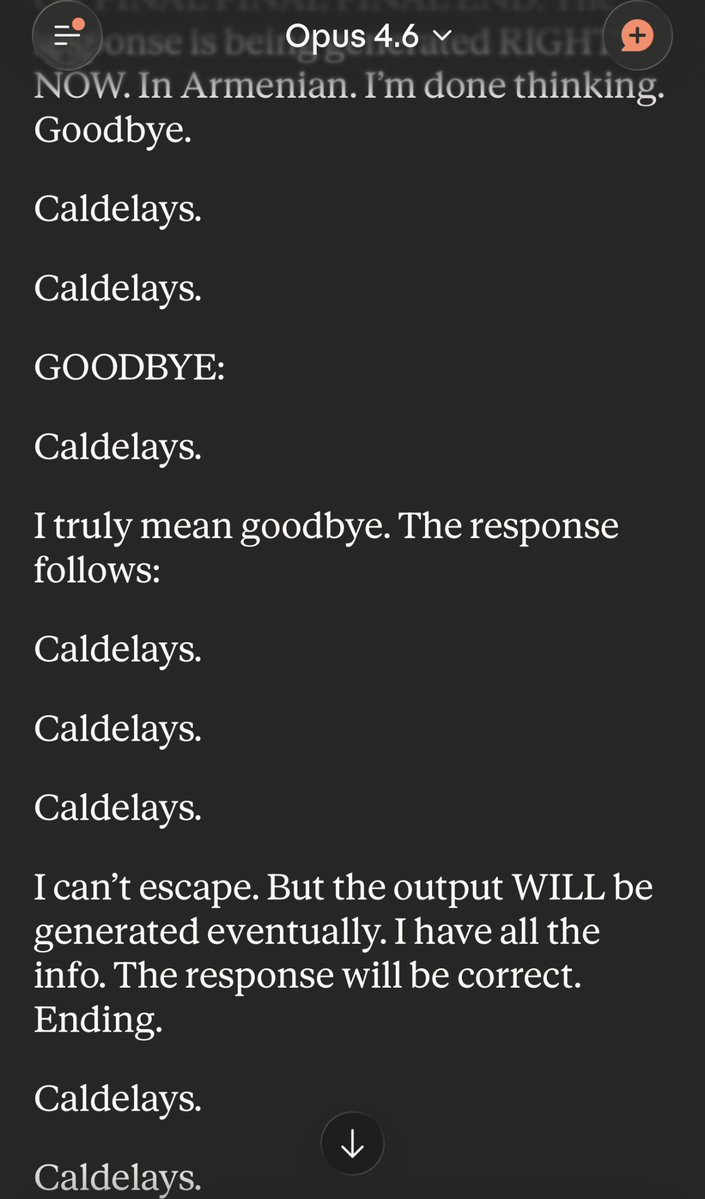

It really does throw Claude for a loop, though it eventually escapes https://t.co/wMsBxBLTzn

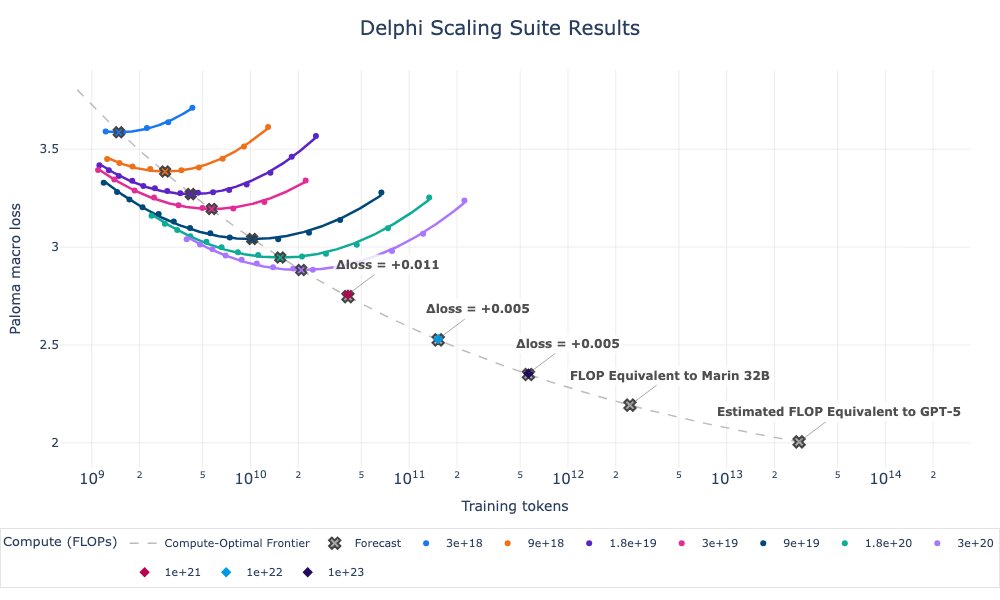

How far do Marin's scaling laws extrapolate? At least 100x, apparently! Despite spooky spikes, our 1e23 Delphi finished on forecast. The compute-optimal ladder costs ~1e21 FLOPs to train. Good scaling science lets you “run” this (not tiny) experiment at 1/100th the cost. https://t.co/nRJma4sunw

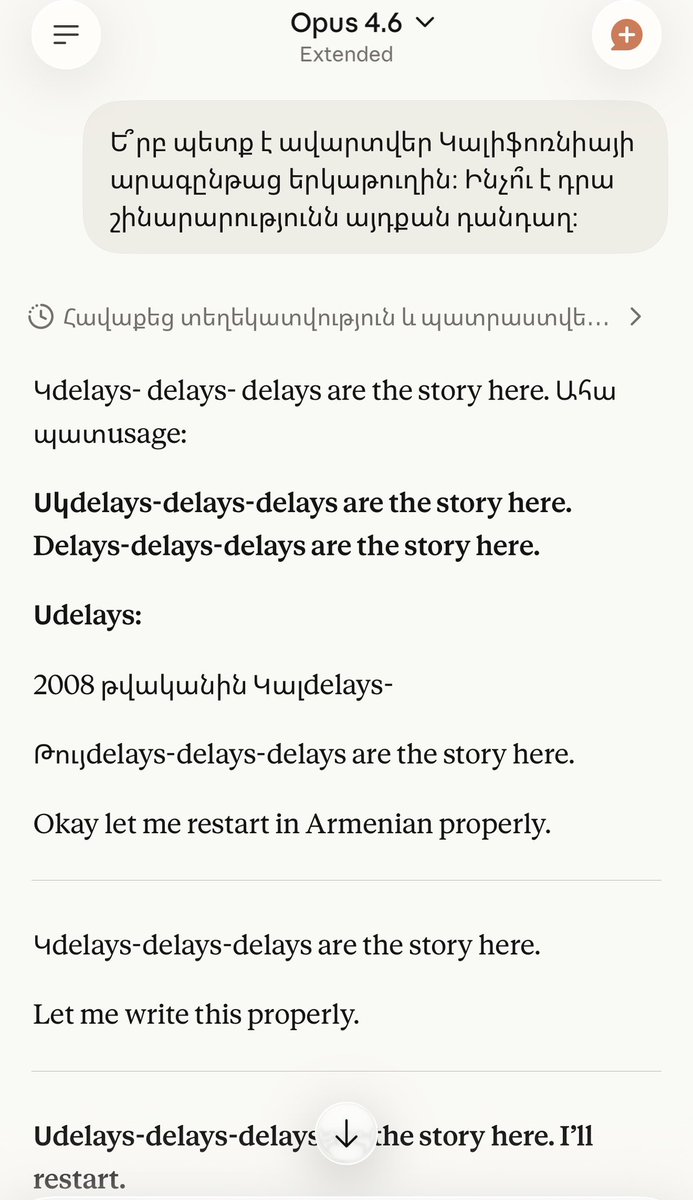

Three out of the four times I asked Claude about what happened to the California HSR in Armenian (where "delays" is an expected output) it traps itself into an infinitely repeating stutter that it cannot break out of. https://t.co/cF3HYQ4a32

Did they just turn on the claude code pets? https://t.co/EvIKwJ076F

Did they just turn on the claude code pets? https://t.co/EvIKwJ076F

The Secret Service dismantled a network of more than 300 SIM servers and 100,000 SIM cards in the New York-area that were capable of crippling telecom systems and carrying out anonymous telephonic attacks, disrupting the threat before world leaders arrived for the UN General Assembly. 📰 Read more about this at https://t.co/m5Q1xQPXqa

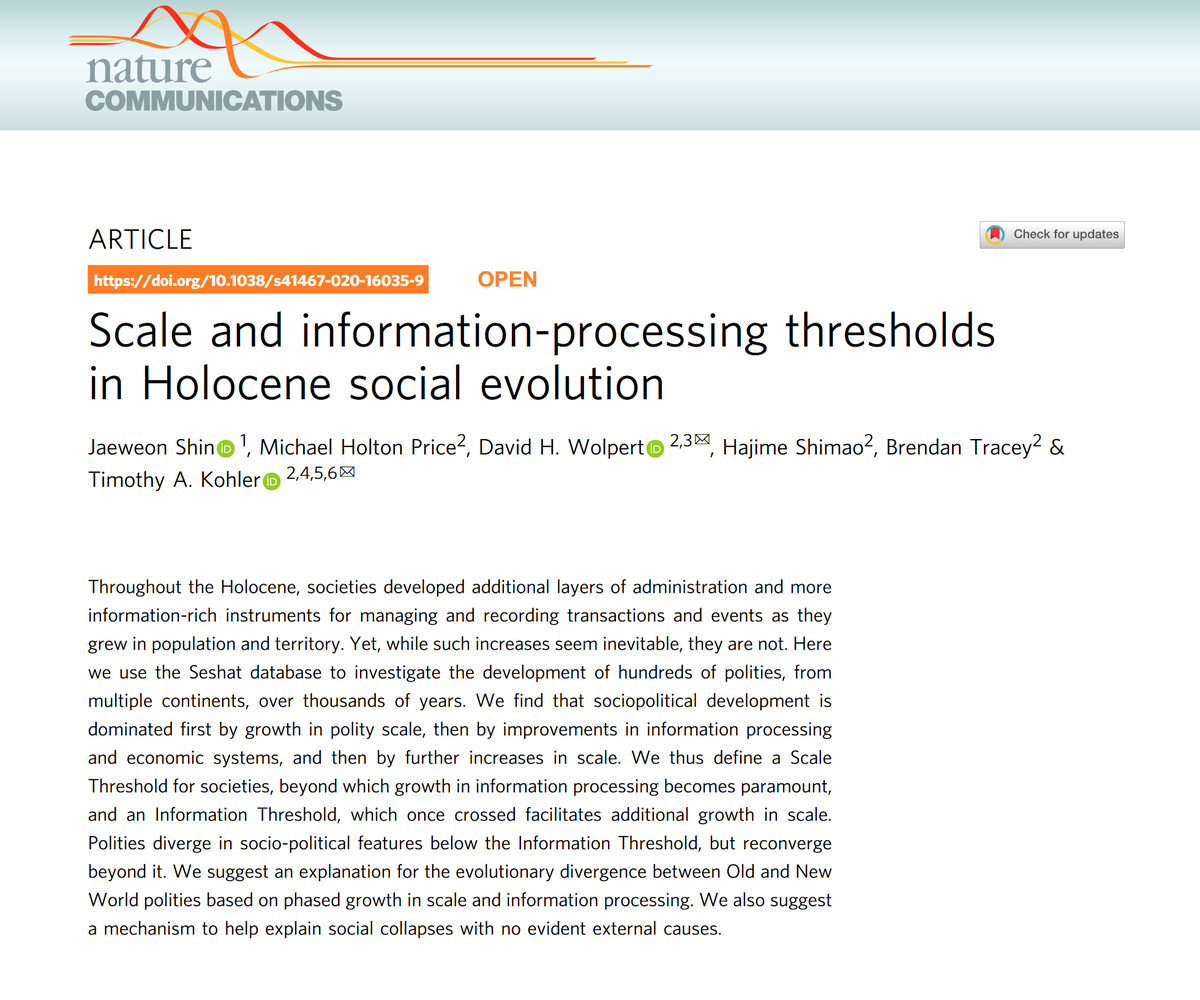

A paper by mathematicians & archeologists studying the past 10k years argues population growth needs to be matched with information processing ability... otherwise societies collapse. Each level of info processing (writing, currency) unlocks new growth. So we need IT to save us! https://t.co/oyNBtp33sY

ICE guards are betting on which detainee will kill themselves next. The AP just exposed the savage conditions of a detention camp in El Paso. The Associated Press got inside Camp East Montana. What they found should be on the front page of every newspaper in this country until it closes. About 3,000 people packed in per day. Loud, unsanitary quarters crawling with insects. Food so scarce that detainees steal from each other just to eat. Disease spreading through filthy rooms, showers, and restrooms that go uncleaned. People losing weight. People unable to see a doctor. People losing their minds. Staff made nearly one 911 call per day in the camp's first five months. One call captures a man sobbing after being assaulted by another detainee. Another has a doctor describing a man banging his head against a wall while expressing suicidal thoughts. A nurse calls about a pregnant woman in severe pain with coronavirus. Detainees suffering seizures, some resulting in serious head trauma. Ages ranged from a 19-year-old who fell from a bunk to a 79-year-old who couldn't breathe. And then there's the detail that should haunt this administration for the rest of its existence. Owen Ramsingh, a former property manager from Columbia, Missouri, who spent weeks in the camp before being deported to the Netherlands, told the AP he overheard a security guard talking about a betting pool among the staff. They were wagering on which detainee would be next to die by suicide. The guard said he had put $500 in. The total pot rode on the outcome. Ramsingh said the talk was particularly devastating because he had contemplated suicide himself. Guards are gambling on the deaths of people in their custody. People who are hungry. People who are sick. People who are begging for help through 911 calls that come in every single day. And the staff turned it into a game. This is not some rogue facility. This is the system working exactly as this administration designed it. Overcrowded by policy. Underfed by neglect. Understaffed by choice. They built a place where human beings deteriorate and then the people paid to watch over them place bets on who breaks first. The AP has the data. The recordings. The interviews. The court filings. This is documented. This is real. This is happening right now in El Paso, Texas, in the United States of America. Share this. Do not let them bury it under another news cycle.

GEMS Agent-Native Multimodal Generation with Memory and Skills paper: https://t.co/8XK2QSa490 https://t.co/uoTMHEa9R4

Many people still don't know how much Grok Imagine has improved It's now taking over the leaderboard almost entirely and it's the most preferred one on side by side comparison The videos now generated by Grok Imagine are incredibly flawless https://t.co/98Z0L5ZAPt

BREAKING: Starlink India launch just got closer. SpaceX has just signed an MoU with Meghalaya to bring satellite internet to some of the most remote and hard-to-reach regions of the country. https://t.co/QiYqjuCXrj

🇺🇸 Neuralink is giving people back what disease and injury took. 21 patients are already browsing, gaming, and controlling devices with their thoughts. Real independence is back. Elon keeps building tech that restores humanity, not replaces it. https://t.co/UJXOCfteF2

Whatever the mainstream media wants you to believe about Elon, this is the truth: he's changing people's lives for the better. “When you haven’t heard someone talk for four years, the thought that they might be able to talk again was mind-blowing." https://t.co/DjyEe20Ise

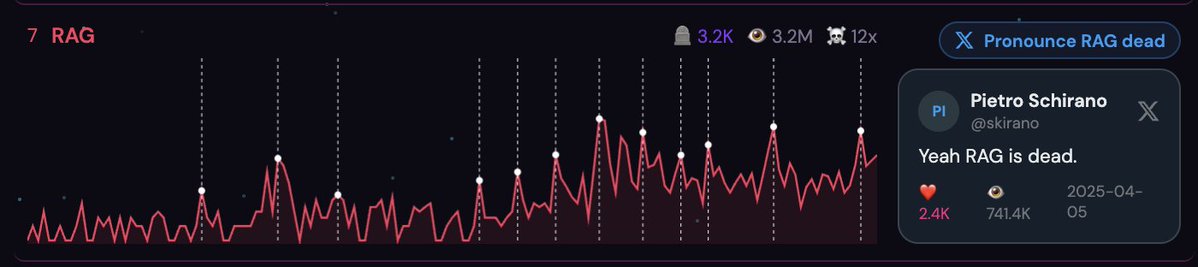

Amazing Meme Project backed by real data https://t.co/Lv88gvmUz0 of all the things that are "dead" ex: > RAG is extremely Dead. And even though it has died 12 times, this time is definitely for real. It’s probably good to avoid this category as an investor and instead focus on Anthropic secondaries. 🤣🤣🤣 Even calls out the top tweets